Spark 2.x Cluster Run Modes

Startup

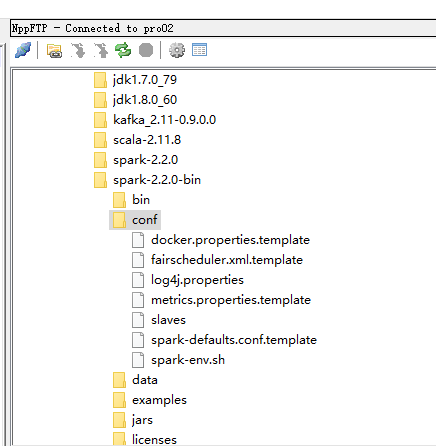

First, rename these three files.
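As a rough sketch of this step: Spark ships its sample configuration files with a `.template` suffix, and a file only takes effect once it exists under the name without that suffix. The text does not say which three files the screenshot shows, so the names in the example call (`slaves`, `spark-env.sh`, `spark-defaults.conf`) are an assumption, and `activate_templates` is a hypothetical helper. It copies instead of renaming so the templates remain available for reference.

```shell
# Copy each <name>.template in a conf directory to <name>, keeping the template.
# Skips names whose template is missing or whose target already exists.
activate_templates() {
  local conf_dir="$1"; shift
  local name
  for name in "$@"; do
    if [ -f "$conf_dir/$name.template" ] && [ ! -e "$conf_dir/$name" ]; then
      cp "$conf_dir/$name.template" "$conf_dir/$name"
      echo "created $conf_dir/$name"
    fi
  done
}

# Example, run from the Spark home directory (file names are assumptions):
# activate_templates conf slaves spark-env.sh spark-defaults.conf
```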

Configure slaves

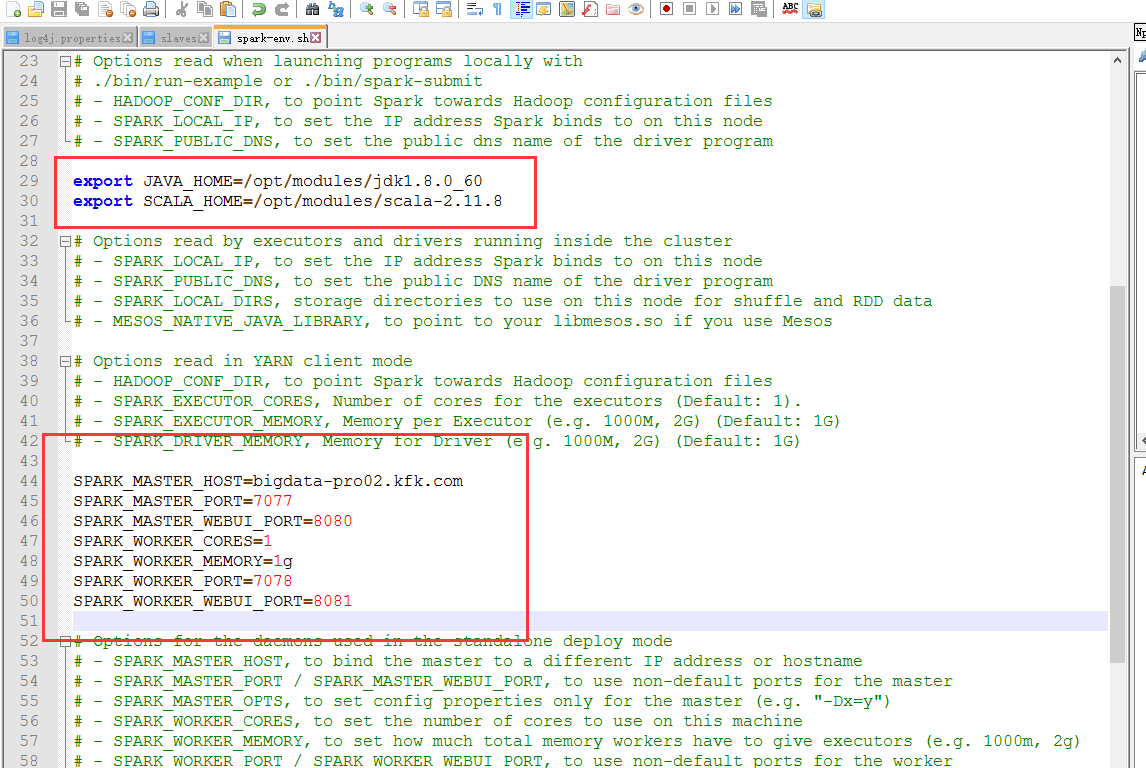

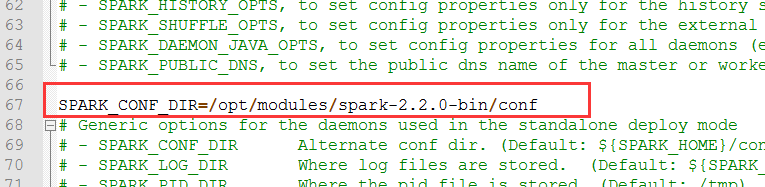

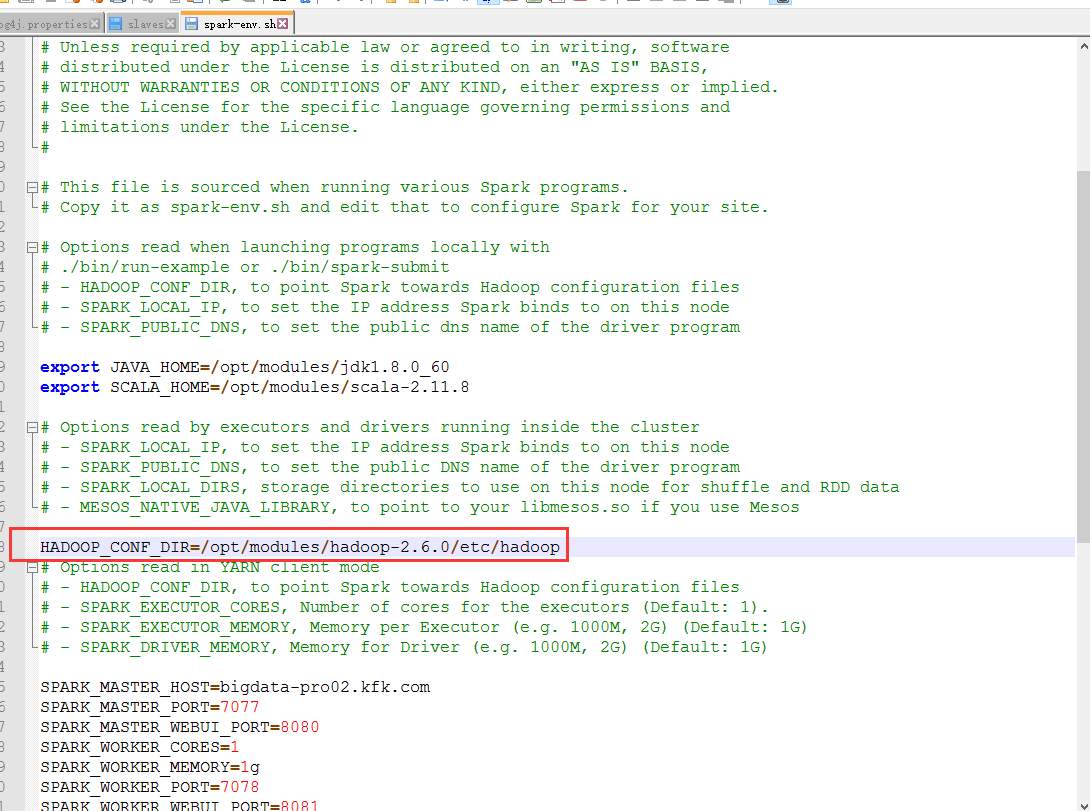

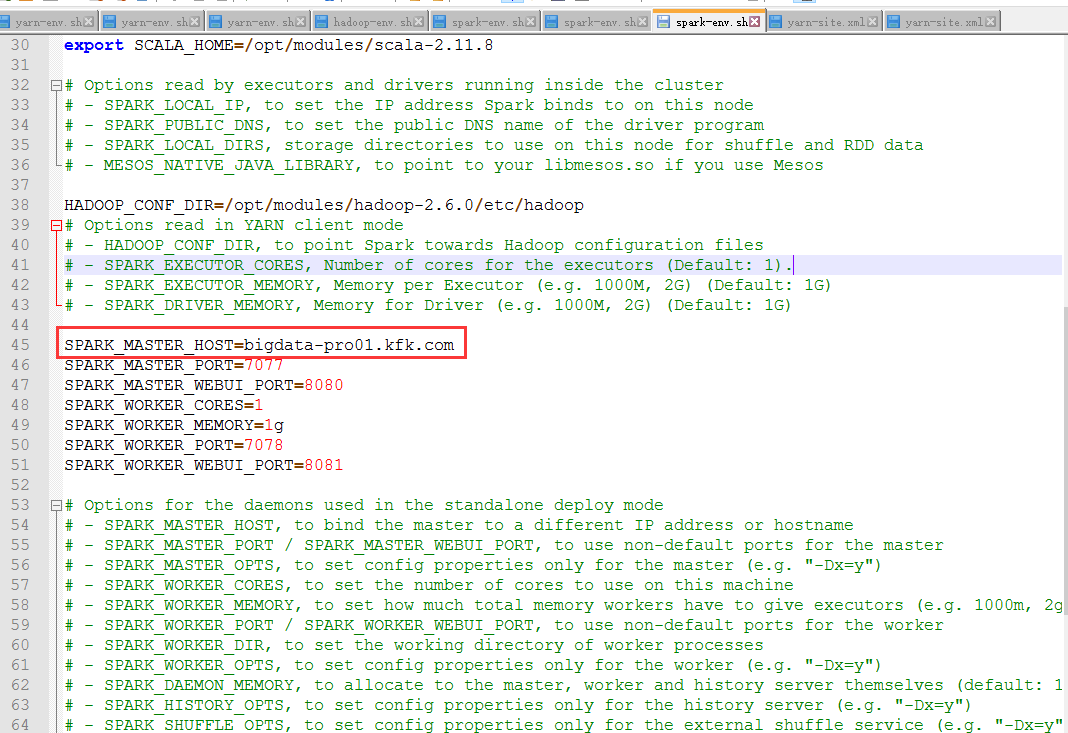
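The `slaves` file lists one worker hostname per line. A minimal sketch of generating it, assuming all three nodes of this cluster run a worker (the actual list is only visible in the screenshots); `write_slaves` is a hypothetical helper:

```shell
# Write one worker hostname per line into the given slaves file,
# replacing whatever the file contained before.
write_slaves() {
  local slaves_file="$1"; shift
  printf '%s\n' "$@" > "$slaves_file"
}

# Example, run from the Spark home directory (assumes every node is a worker):
# write_slaves conf/slaves bigdata-pro01.kfk.com bigdata-pro02.kfk.com bigdata-pro03.kfk.com
```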

Configure spark-env.sh

export JAVA_HOME=/opt/modules/jdk1.8.0_60

export SCALA_HOME=/opt/modules/scala-2.11.8

SPARK_MASTER_HOST=bigdata-pro02.kfk.com

SPARK_MASTER_PORT=7077

SPARK_MASTER_WEBUI_PORT=8080

SPARK_WORKER_CORES=1

SPARK_WORKER_MEMORY=1g

SPARK_WORKER_PORT=7078

SPARK_WORKER_WEBUI_PORT=8081

SPARK_CONF_DIR=/opt/modules/spark-2.2.0-bin/conf
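The master host and port set here determine the `spark://` URL that clients pass to `--master` later on. The sketch below derives that URL from a `spark-env.sh` file; `master_url` is a hypothetical helper, and it falls back to 7077 (the standalone default) when no port is set.

```shell
# Print the spark:// URL implied by SPARK_MASTER_HOST and SPARK_MASTER_PORT
# in a spark-env.sh file. The file is sourced in a subshell so the caller's
# environment is left untouched. Pass a path containing a slash.
master_url() {
  (
    . "$1"
    echo "spark://${SPARK_MASTER_HOST}:${SPARK_MASTER_PORT:-7077}"
  )
}

# Example:
# master_url /opt/modules/spark-2.2.0-bin/conf/spark-env.sh
```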

Distribute the Spark installation to the other nodes, then adjust any node-specific settings on each node.

scp -r spark-2.2.0-bin bigdata-pro01.kfk.com:/opt/modules/

scp -r spark-2.2.0-bin bigdata-pro03.kfk.com:/opt/modules/
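With more than a couple of nodes, the same copy can be written as a loop. This is a sketch only: `distribute` and the `DRY_RUN` switch are hypothetical, and by default the function just prints the `scp` commands so they can be reviewed first.

```shell
# Copy a directory to the same destination path on each host.
# With DRY_RUN=1 (the default here) the scp commands are only printed;
# set DRY_RUN=0 to actually run them.
distribute() {
  local src="$1" dest="$2"; shift 2
  local host
  for host in "$@"; do
    if [ "${DRY_RUN:-1}" = "1" ]; then
      echo "scp -r $src $host:$dest"
    else
      scp -r "$src" "$host:$dest"
    fi
  done
}

# Prints the two scp commands shown above.
distribute spark-2.2.0-bin /opt/modules/ bigdata-pro01.kfk.com bigdata-pro03.kfk.com
```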

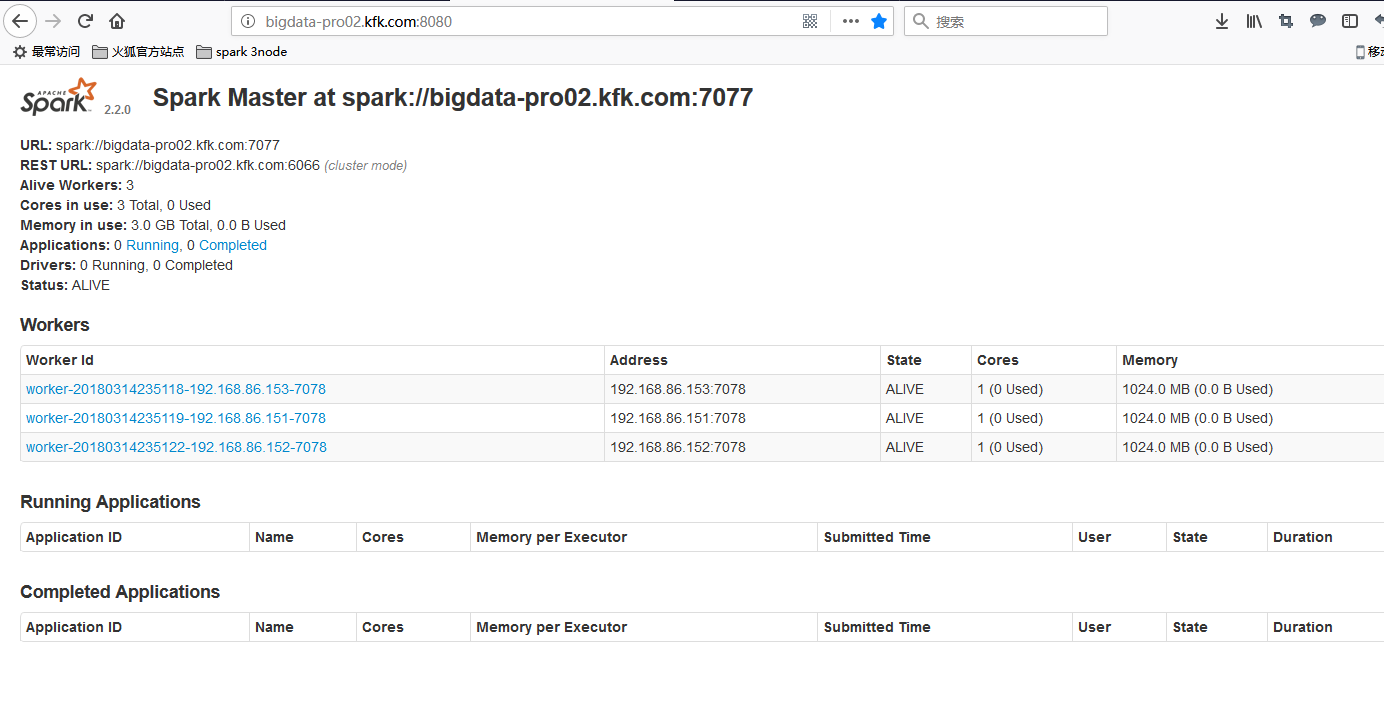

http://bigdata-pro02.kfk.com:8080/

Open the page above in a browser; it is the Spark master web UI.

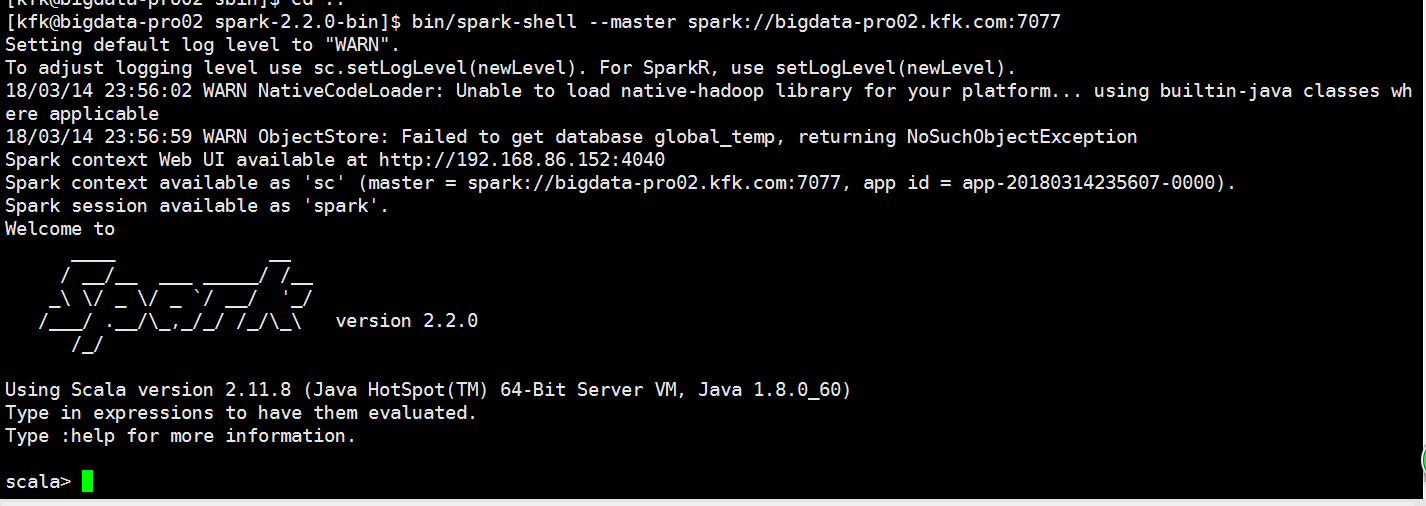

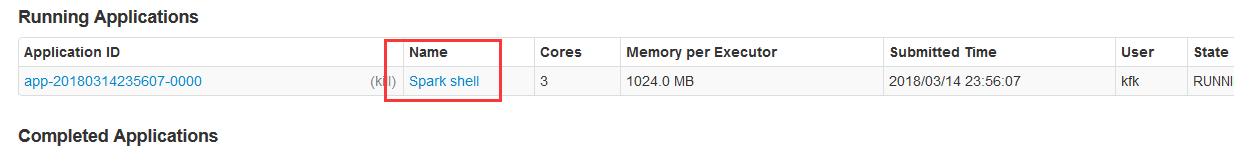

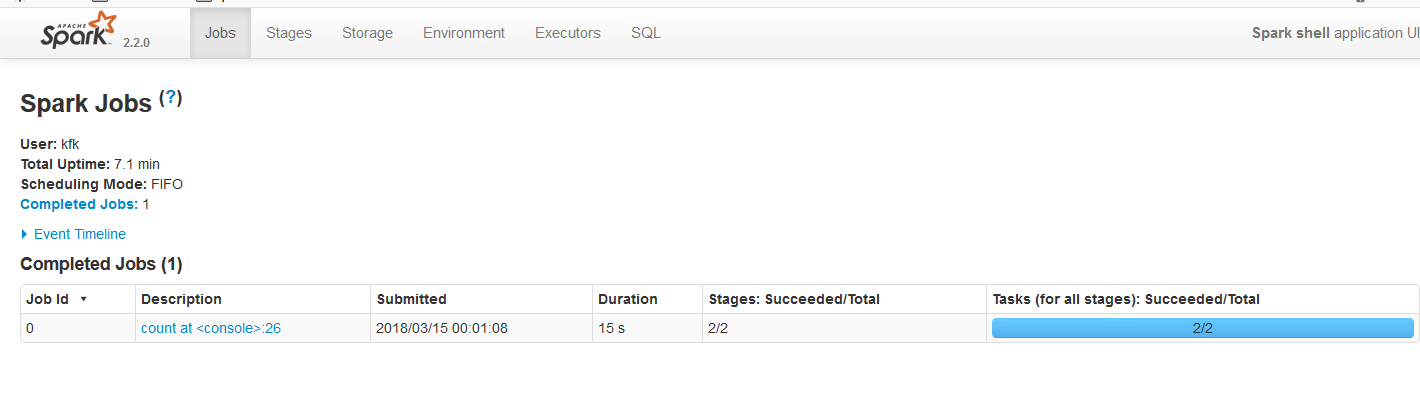

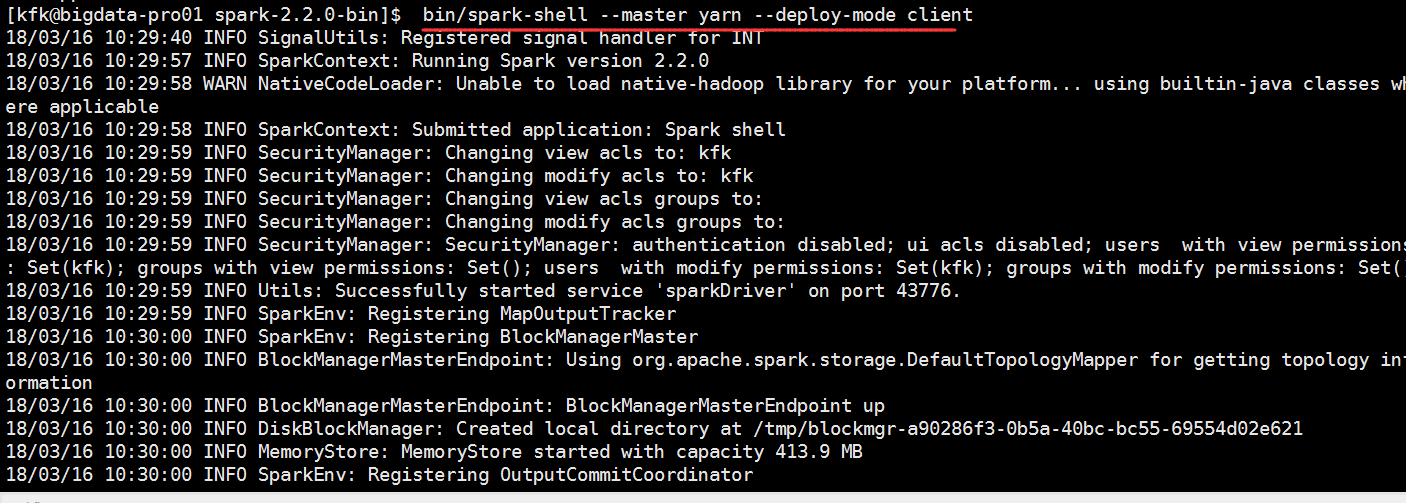

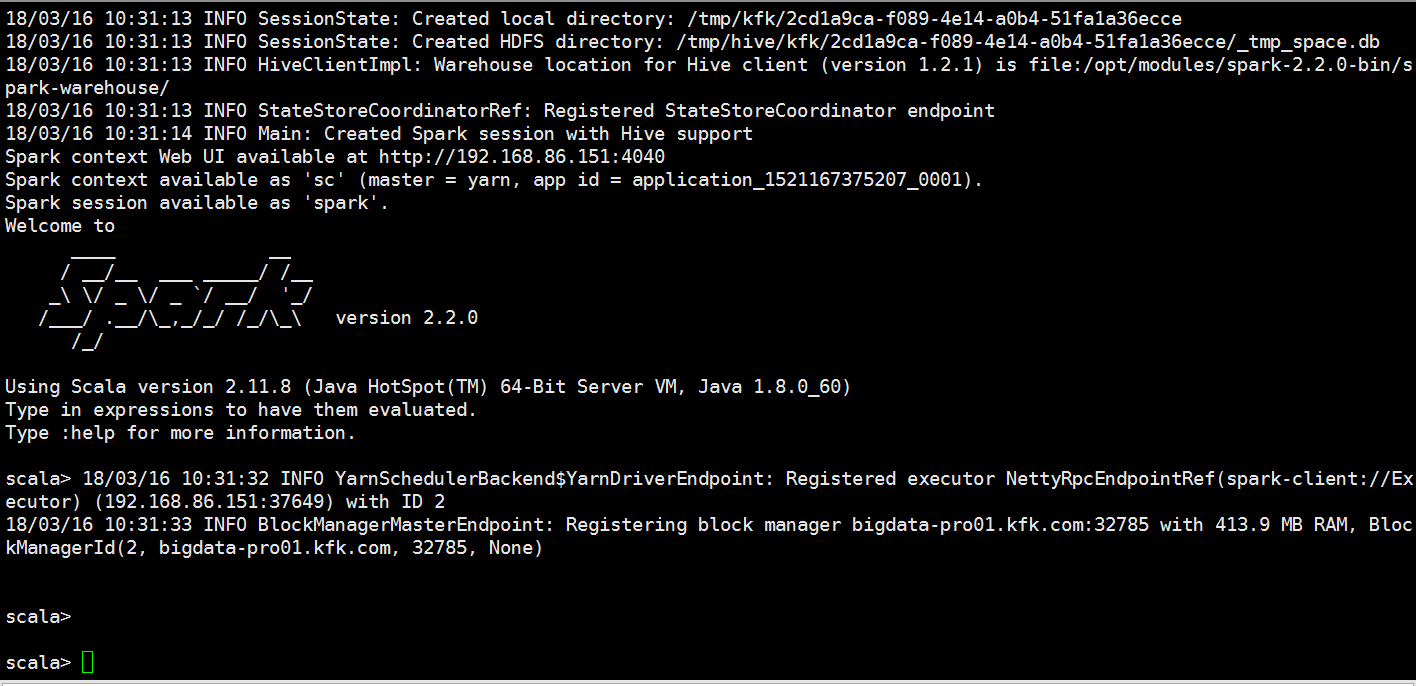

Client test

bin/spark-shell --master spark://bigdata-pro02.kfk.com:7077

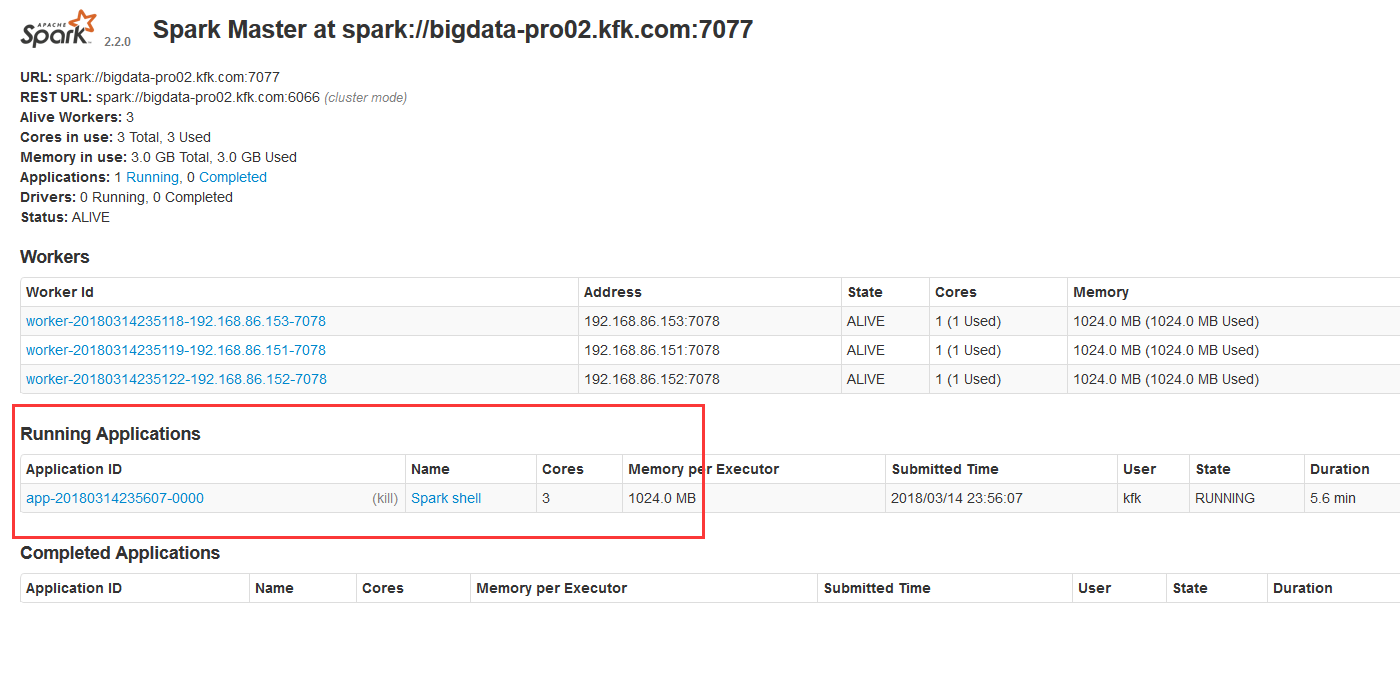

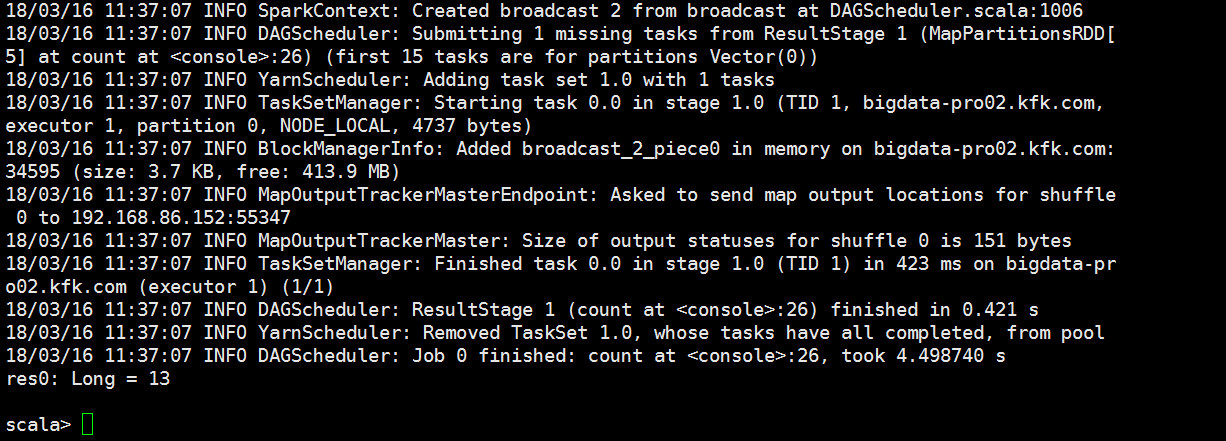

Run a job.

Click into it to see the details.

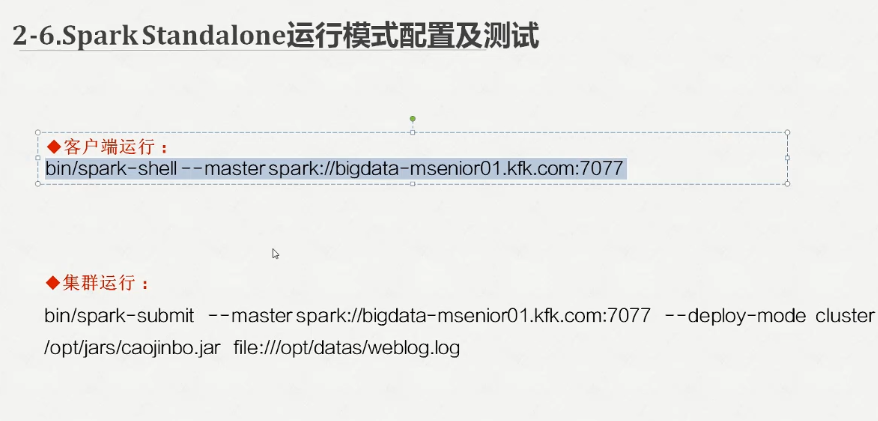

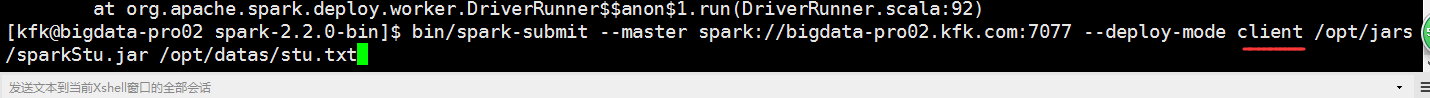

bin/spark-submit --master spark://bigdata-pro02.kfk.com:7077 --deploy-mode cluster /opt/jars/sparkStu.jar file:///opt/datas/stu.txt

As you can see, it fails with an error.

We should use this mode instead:

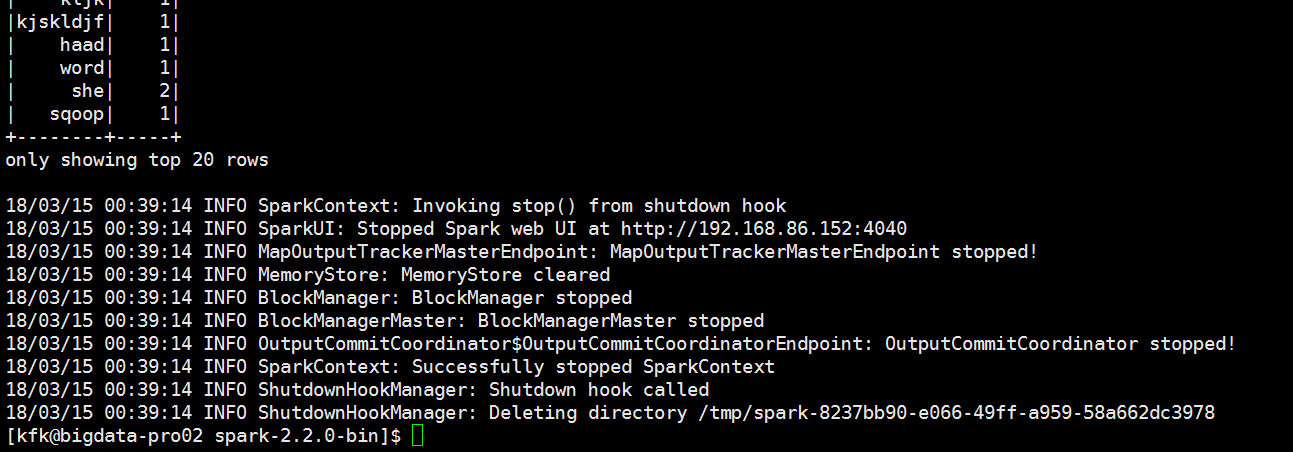

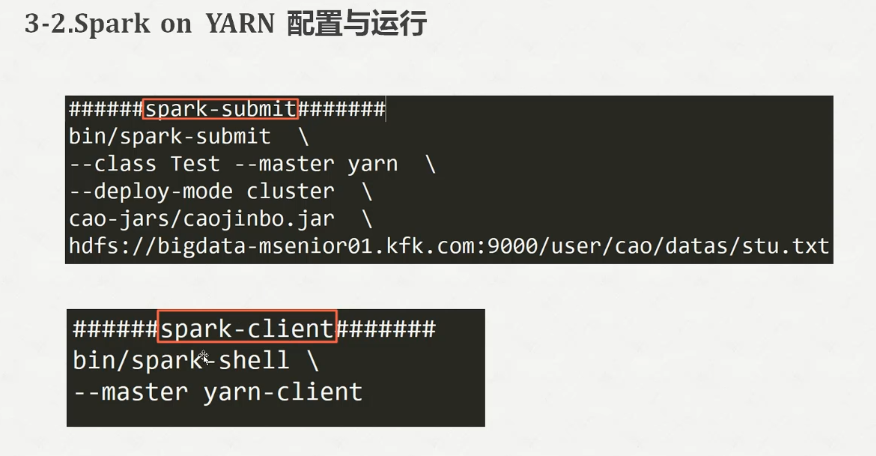

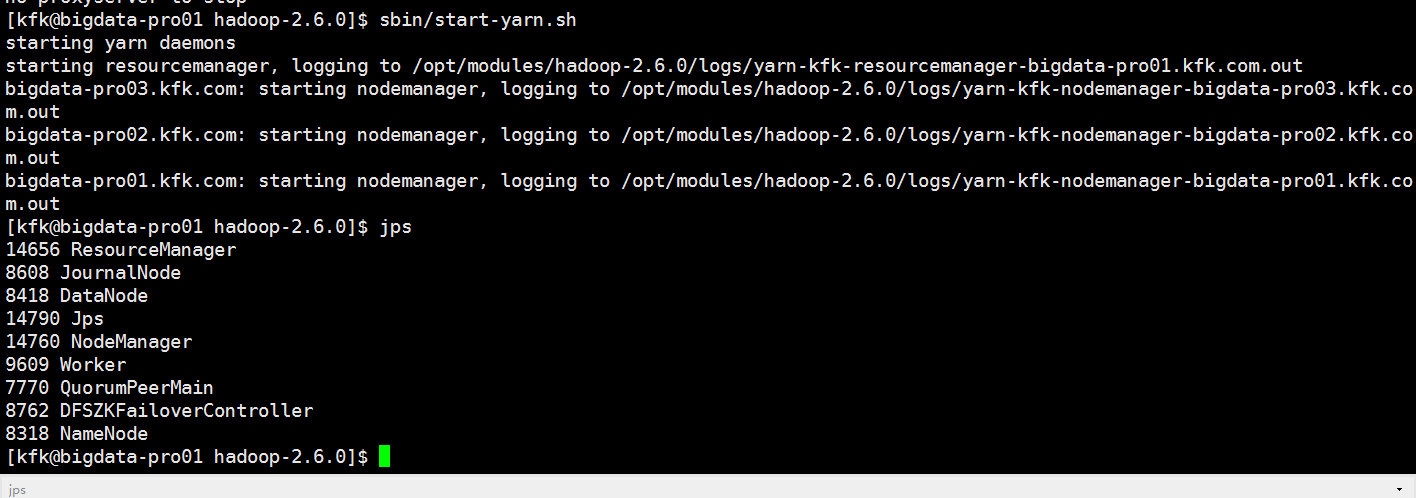

Start YARN.

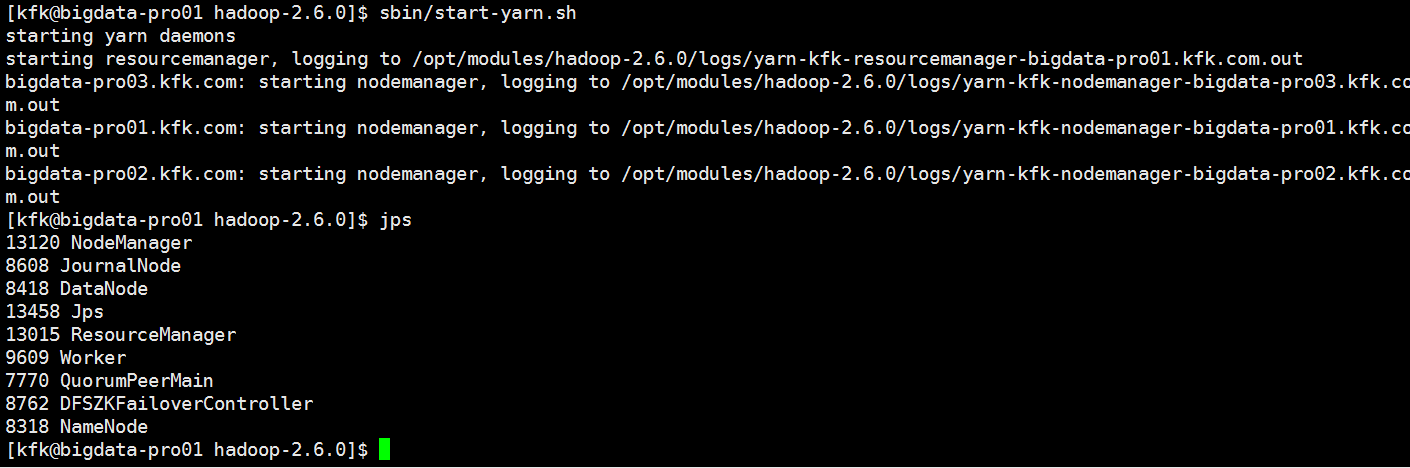

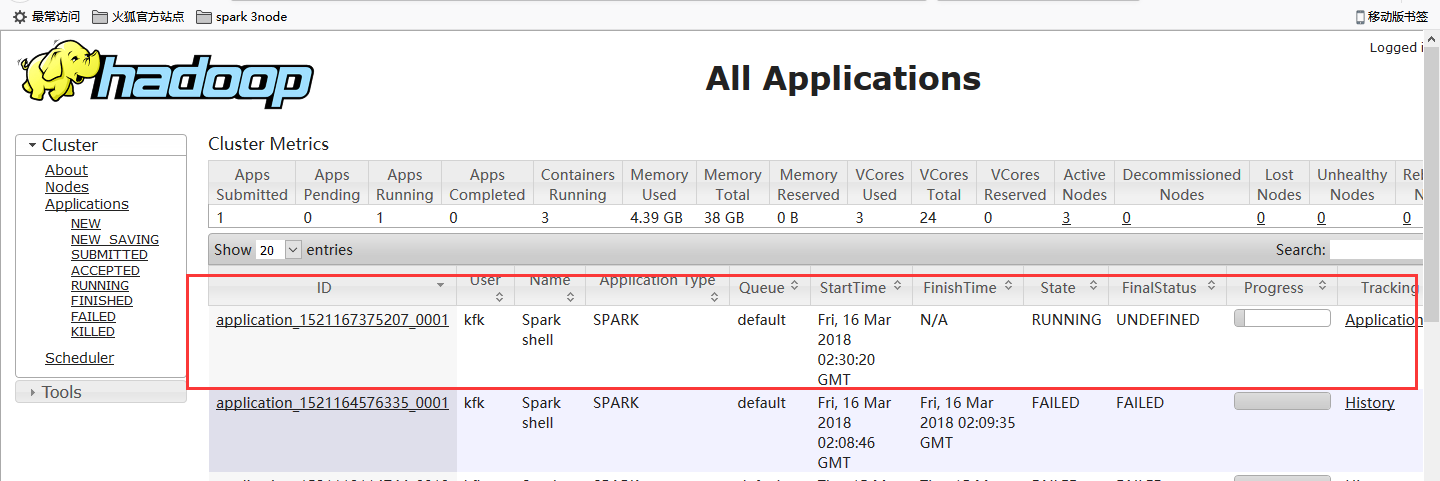

http://bigdata-pro01.kfk.com:8088/cluster

Next, add HADOOP_CONF_DIR to the configuration.

Do the same on the other two nodes.

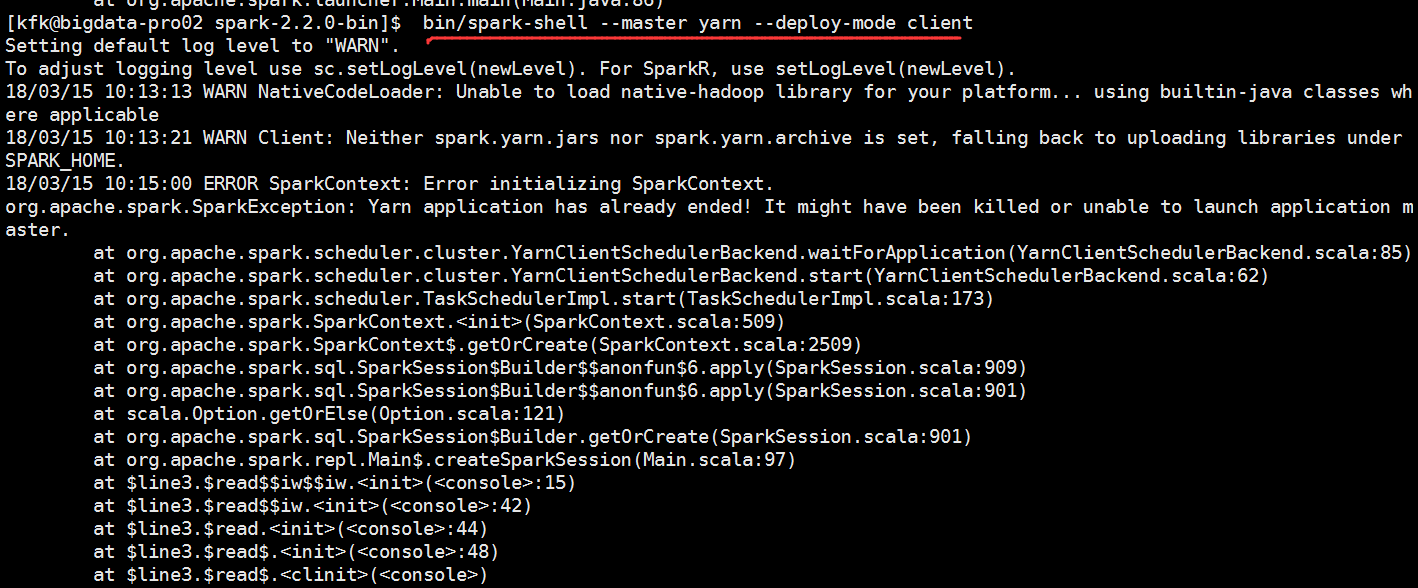
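Spark on YARN locates the cluster through `HADOOP_CONF_DIR` (or `YARN_CONF_DIR`), which must point at the directory holding the Hadoop client configuration files. Below is a sketch of setting it idempotently, assuming the variable goes into `spark-env.sh`; `set_env_var` is a hypothetical helper, and `/path/to/hadoop/etc/hadoop` is a placeholder because the post does not give the Hadoop path in text.

```shell
# Set KEY=VALUE in an env file: drop any earlier definition of KEY
# (with or without "export"), then append the new one.
set_env_var() {
  local file="$1" key="$2" value="$3" tmp
  tmp=$(mktemp)
  grep -Ev "^(export[[:space:]]+)?$key=" "$file" > "$tmp" || true
  echo "$key=$value" >> "$tmp"
  # Write back through redirection so the file keeps its permissions.
  cat "$tmp" > "$file"
  rm -f "$tmp"
}

# Example (the Hadoop path is a placeholder, not taken from the post):
# set_env_var conf/spark-env.sh HADOOP_CONF_DIR /path/to/hadoop/etc/hadoop
```

Running it twice leaves a single `HADOOP_CONF_DIR=` line, so it is safe to repeat on each node.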

Run it again; it still fails.

[kfk@bigdata-pro02 spark-2.2.0-bin]$ bin/spark-shell --master yarn --deploy-mode client Setting default log level to "WARN". To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel). 18/03/15 10:13:13 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable 18/03/15 10:13:21 WARN Client: Neither spark.yarn.jars nor spark.yarn.archive is set, falling back to uploading libraries under SPARK_HOME. 18/03/15 10:15:00 ERROR SparkContext: Error initializing SparkContext. org.apache.spark.SparkException: Yarn application has already ended! It might have been killed or unable to launch application master. at org.apache.spark.scheduler.cluster.YarnClientSchedulerBackend.waitForApplication(YarnClientSchedulerBackend.scala:85) at org.apache.spark.scheduler.cluster.YarnClientSchedulerBackend.start(YarnClientSchedulerBackend.scala:62) at org.apache.spark.scheduler.TaskSchedulerImpl.start(TaskSchedulerImpl.scala:173) at org.apache.spark.SparkContext.<init>(SparkContext.scala:509) at org.apache.spark.SparkContext$.getOrCreate(SparkContext.scala:2509) at org.apache.spark.sql.SparkSession$Builder$$anonfun$6.apply(SparkSession.scala:909) at org.apache.spark.sql.SparkSession$Builder$$anonfun$6.apply(SparkSession.scala:901) at scala.Option.getOrElse(Option.scala:121) at org.apache.spark.sql.SparkSession$Builder.getOrCreate(SparkSession.scala:901) at org.apache.spark.repl.Main$.createSparkSession(Main.scala:97) at $line3.$read$$iw$$iw.<init>(<console>:15) at $line3.$read$$iw.<init>(<console>:42) at $line3.$read.<init>(<console>:44) at $line3.$read$.<init>(<console>:48) at $line3.$read$.<clinit>(<console>) at $line3.$eval$.$print$lzycompute(<console>:7) at $line3.$eval$.$print(<console>:6) at $line3.$eval.$print(<console>) at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62) at 
sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) at java.lang.reflect.Method.invoke(Method.java:497) at scala.tools.nsc.interpreter.IMain$ReadEvalPrint.call(IMain.scala:786) at scala.tools.nsc.interpreter.IMain$Request.loadAndRun(IMain.scala:1047) at scala.tools.nsc.interpreter.IMain$WrappedRequest$$anonfun$loadAndRunReq$1.apply(IMain.scala:638) at scala.tools.nsc.interpreter.IMain$WrappedRequest$$anonfun$loadAndRunReq$1.apply(IMain.scala:637) at scala.reflect.internal.util.ScalaClassLoader$class.asContext(ScalaClassLoader.scala:31) at scala.reflect.internal.util.AbstractFileClassLoader.asContext(AbstractFileClassLoader.scala:19) at scala.tools.nsc.interpreter.IMain$WrappedRequest.loadAndRunReq(IMain.scala:637) at scala.tools.nsc.interpreter.IMain.interpret(IMain.scala:569) at scala.tools.nsc.interpreter.IMain.interpret(IMain.scala:565) at scala.tools.nsc.interpreter.ILoop.interpretStartingWith(ILoop.scala:807) at scala.tools.nsc.interpreter.ILoop.command(ILoop.scala:681) at scala.tools.nsc.interpreter.ILoop.processLine(ILoop.scala:395) at org.apache.spark.repl.SparkILoop$$anonfun$initializeSpark$1.apply$mcV$sp(SparkILoop.scala:38) at org.apache.spark.repl.SparkILoop$$anonfun$initializeSpark$1.apply(SparkILoop.scala:37) at org.apache.spark.repl.SparkILoop$$anonfun$initializeSpark$1.apply(SparkILoop.scala:37) at scala.tools.nsc.interpreter.IMain.beQuietDuring(IMain.scala:214) at org.apache.spark.repl.SparkILoop.initializeSpark(SparkILoop.scala:37) at org.apache.spark.repl.SparkILoop.loadFiles(SparkILoop.scala:98) at scala.tools.nsc.interpreter.ILoop$$anonfun$process$1.apply$mcZ$sp(ILoop.scala:920) at scala.tools.nsc.interpreter.ILoop$$anonfun$process$1.apply(ILoop.scala:909) at scala.tools.nsc.interpreter.ILoop$$anonfun$process$1.apply(ILoop.scala:909) at scala.reflect.internal.util.ScalaClassLoader$.savingContextLoader(ScalaClassLoader.scala:97) at scala.tools.nsc.interpreter.ILoop.process(ILoop.scala:909) at 
org.apache.spark.repl.Main$.doMain(Main.scala:70) at org.apache.spark.repl.Main$.main(Main.scala:53) at org.apache.spark.repl.Main.main(Main.scala) at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62) at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) at java.lang.reflect.Method.invoke(Method.java:497) at org.apache.spark.deploy.SparkSubmit$.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:755) at org.apache.spark.deploy.SparkSubmit$.doRunMain$1(SparkSubmit.scala:180) at org.apache.spark.deploy.SparkSubmit$.submit(SparkSubmit.scala:205) at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:119) at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala) 18/03/15 10:15:00 WARN YarnSchedulerBackend$YarnSchedulerEndpoint: Attempted to request executors before the AM has registered! 18/03/15 10:15:00 WARN MetricsSystem: Stopping a MetricsSystem that is not running org.apache.spark.SparkException: Yarn application has already ended! It might have been killed or unable to launch application master. at org.apache.spark.scheduler.cluster.YarnClientSchedulerBackend.waitForApplication(YarnClientSchedulerBackend.scala:85) at org.apache.spark.scheduler.cluster.YarnClientSchedulerBackend.start(YarnClientSchedulerBackend.scala:62) at org.apache.spark.scheduler.TaskSchedulerImpl.start(TaskSchedulerImpl.scala:173) at org.apache.spark.SparkContext.<init>(SparkContext.scala:509) at org.apache.spark.SparkContext$.getOrCreate(SparkContext.scala:2509) at org.apache.spark.sql.SparkSession$Builder$$anonfun$6.apply(SparkSession.scala:909) at org.apache.spark.sql.SparkSession$Builder$$anonfun$6.apply(SparkSession.scala:901) at scala.Option.getOrElse(Option.scala:121) at org.apache.spark.sql.SparkSession$Builder.getOrCreate(SparkSession.scala:901) at org.apache.spark.repl.Main$.createSparkSession(Main.scala:97) ... 
47 elided <console>:14: error: not found: value spark import spark.implicits._ ^ <console>:14: error: not found: value spark import spark.sql ^ Welcome to ____ __ / __/__ ___ _____/ /__ _\ \/ _ \/ _ `/ __/ '_/ /___/ .__/\_,_/_/ /_/\_\ version 2.2.0 /_/ Using Scala version 2.11.8 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_60) Type in expressions to have them evaluated. Type :help for more information.

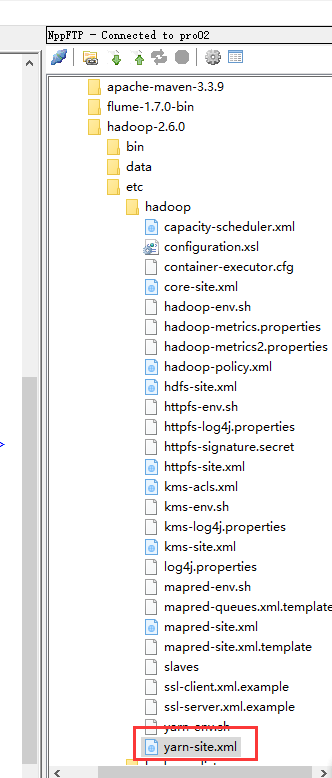

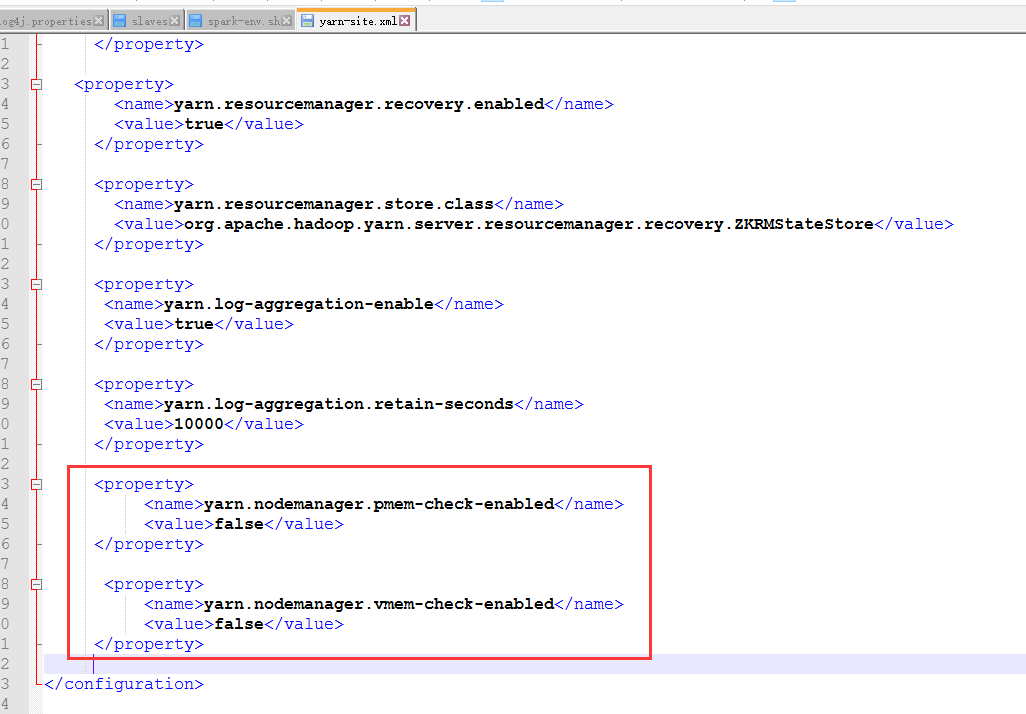

Let's edit the yarn-site.xml configuration file.

Add these two properties:

<property>
  <name>yarn.nodemanager.pmem-check-enabled</name>
  <value>false</value>
</property>
<property>
  <name>yarn.nodemanager.vmem-check-enabled</name>
  <value>false</value>
</property>
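These two properties turn off the NodeManager's physical and virtual memory checks, so containers are no longer killed for exceeding those limits. A quick way to confirm that a given `yarn-site.xml` really contains both settings is sketched below; the helper names are hypothetical, and the check is a plain text match, not a full XML parse.

```shell
# Succeed if the given yarn-site.xml sets the named property to "false".
# Newlines are removed first so <name> and <value> may sit on separate lines.
property_is_false() {
  local file="$1" name="$2"
  tr -d '\n' < "$file" |
    grep -Eq "<name>$name</name>[[:space:]]*<value>false</value>"
}

# Report on both memory-check properties; fail if either is not "false".
check_mem_checks_disabled() {
  local file="$1" prop
  for prop in yarn.nodemanager.pmem-check-enabled yarn.nodemanager.vmem-check-enabled; do
    if property_is_false "$file" "$prop"; then
      echo "$prop=false"
    else
      echo "$prop is not set to false"
      return 1
    fi
  done
}

# Example (path is a placeholder):
# check_mem_checks_disabled /path/to/hadoop/etc/hadoop/yarn-site.xml
```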

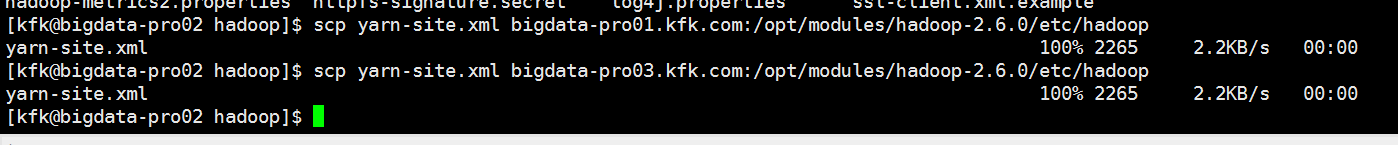

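The yarn-site.xml on the other two nodes needs the same change, so I won't repeat it here. Alternatively, you can distribute node 2's copy of the file to the other two nodes.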

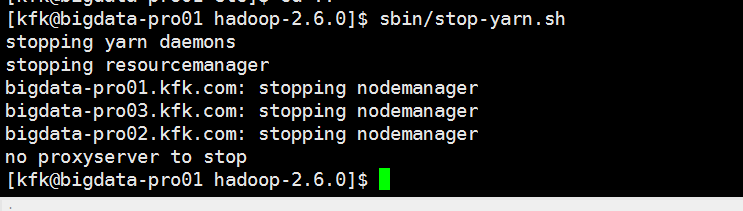

Stop YARN before distributing the file, though.
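Put together, the order is: stop YARN, copy the file, start YARN again. The sketch below prints that sequence. `sync_yarn_site` and `DRY_RUN` are hypothetical, the Hadoop home is a placeholder, and `stop-yarn.sh` / `start-yarn.sh` are the standard Hadoop 2.x scripts under `sbin/`, normally run on the ResourceManager node.

```shell
# Print (DRY_RUN=1, the default) or run the stop / copy / start sequence
# for yarn-site.xml. $1 is the Hadoop home, the rest are target hosts.
sync_yarn_site() {
  local hadoop_home="$1"; shift
  local run="echo"
  [ "${DRY_RUN:-1}" = "1" ] || run=""
  $run "$hadoop_home/sbin/stop-yarn.sh"
  local host
  for host in "$@"; do
    $run scp "$hadoop_home/etc/hadoop/yarn-site.xml" "$host:$hadoop_home/etc/hadoop/"
  done
  $run "$hadoop_home/sbin/start-yarn.sh"
}

# Example (Hadoop home and target hosts are assumptions):
# sync_yarn_site /path/to/hadoop bigdata-pro01.kfk.com bigdata-pro03.kfk.com
```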

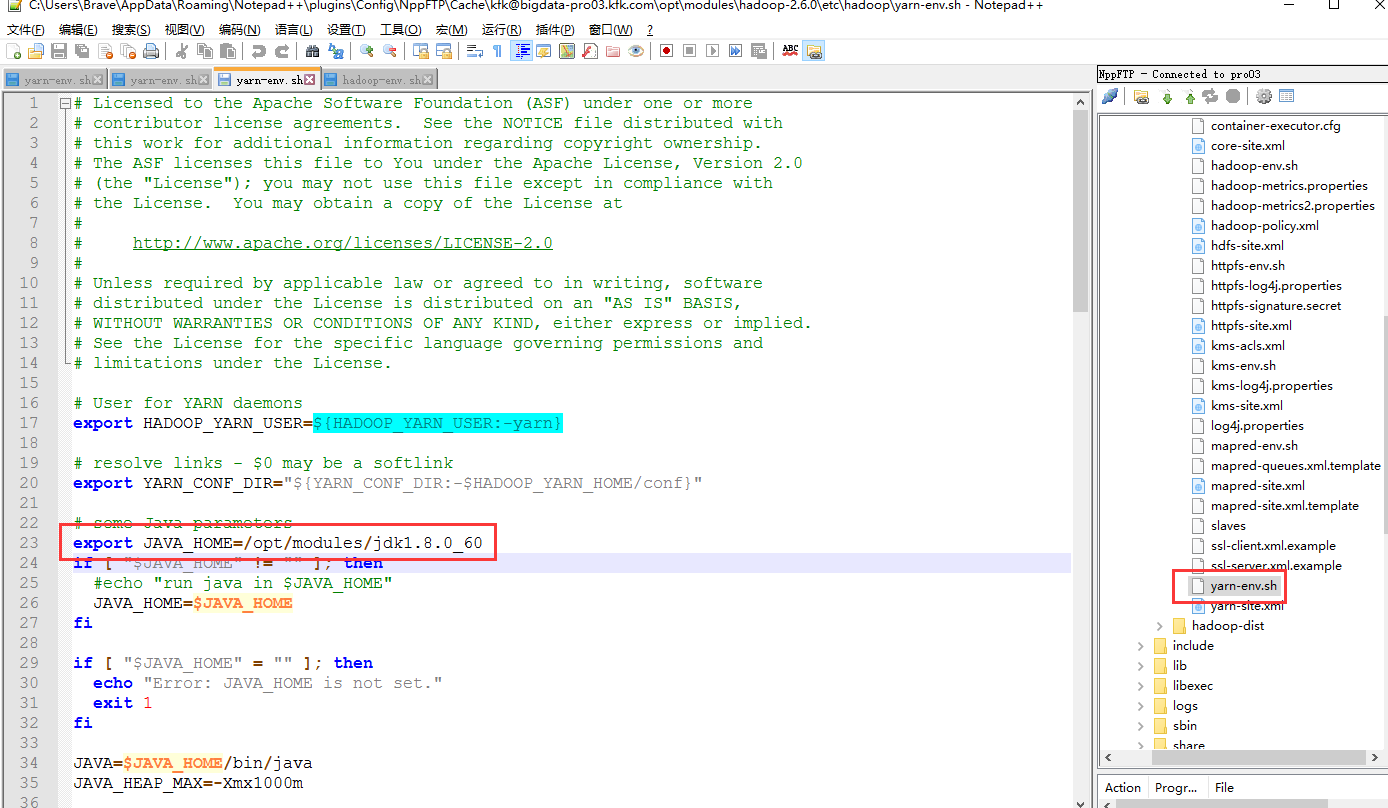

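One more detail deserves attention: this error can have many causes, and insufficient memory is only one of them. Another detail that is easy to miss is that the JDK version Hadoop uses must match the one set in spark-env.sh.

Pay particular attention to these two files in Hadoop.

I use one node as the example here; the Hadoop configuration files on the other two nodes need the same change. Our Hadoop installation originally ran on JDK 1.7, while Spark now uses JDK 1.8, so every JDK setting in the Hadoop configuration files must be changed to 1.8.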
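A mismatch like this is easy to spot by listing the distinct `JAVA_HOME` values assigned across the relevant files. The sketch below does that; `java_homes` is a hypothetical helper, and the Hadoop file names in the example (`hadoop-env.sh`, `yarn-env.sh`) are an assumption, since the post shows the two files only in screenshots.

```shell
# Print the distinct JAVA_HOME values assigned in the given files.
# More than one line of output means the files disagree.
java_homes() {
  grep -hE '^[[:space:]]*(export[[:space:]]+)?JAVA_HOME=' "$@" |
    sed -E 's/^[[:space:]]*(export[[:space:]]+)?JAVA_HOME=//' |
    sort -u
}

# Example (Hadoop paths and file names are assumptions):
# java_homes /opt/modules/spark-2.2.0-bin/conf/spark-env.sh \
#            /path/to/hadoop/etc/hadoop/hadoop-env.sh \
#            /path/to/hadoop/etc/hadoop/yarn-env.sh
```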

Start YARN again.

Start Spark. (Because Spark is fairly memory-hungry, I moved the Spark master to node 1, which I gave 4 GB of memory.)

Remember to update spark-env.sh accordingly (on all three nodes).

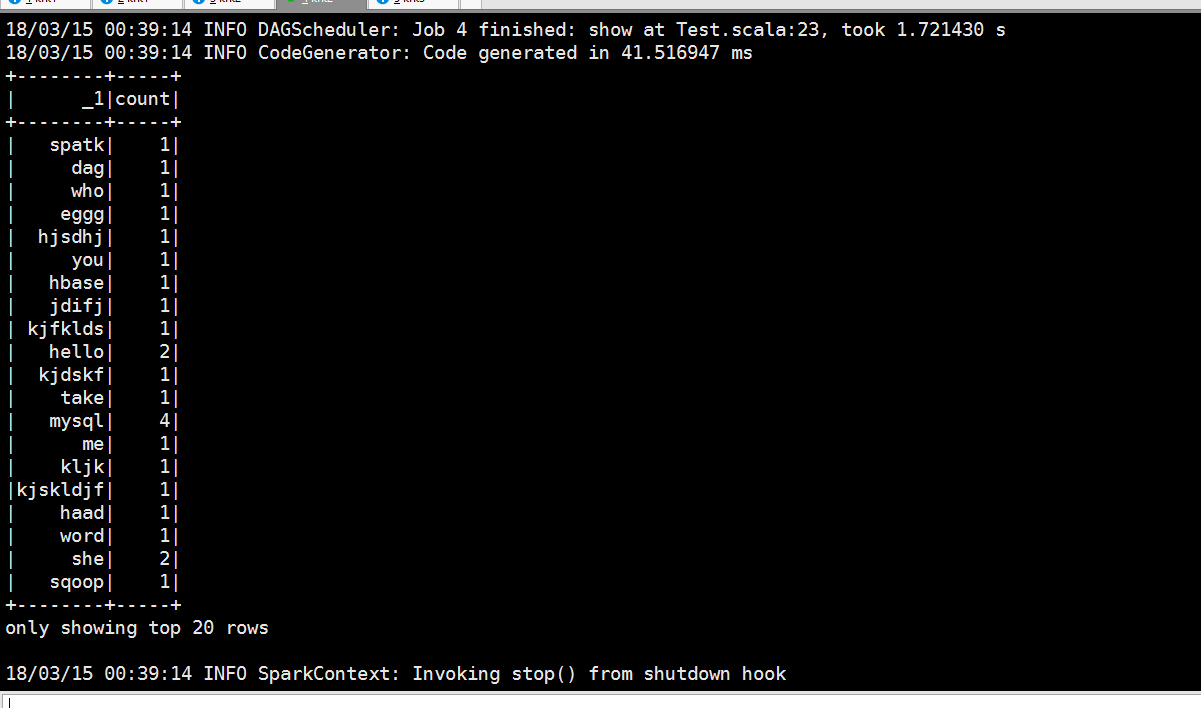

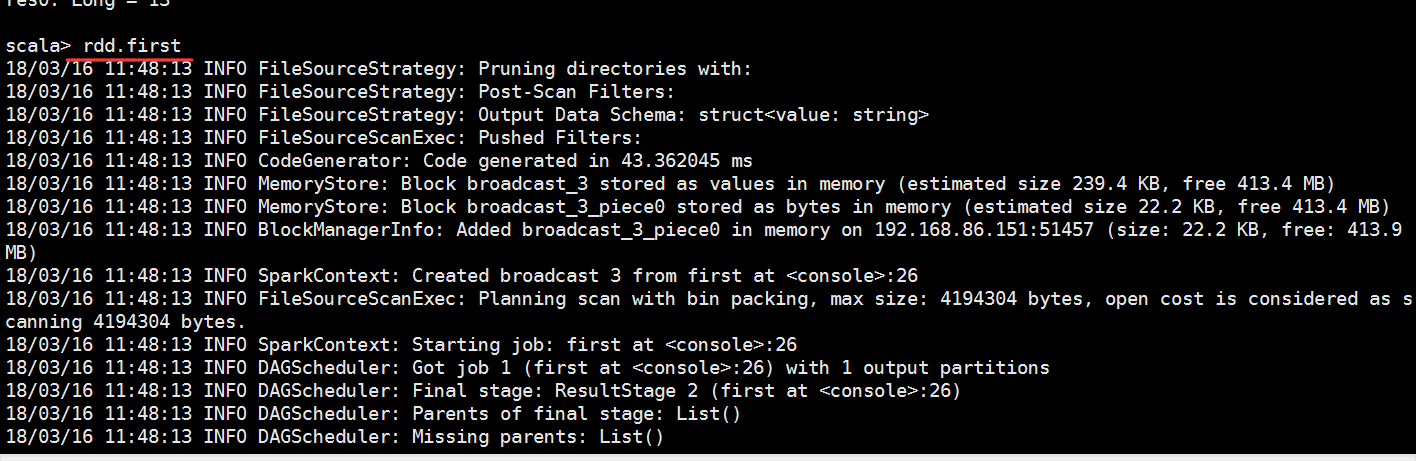

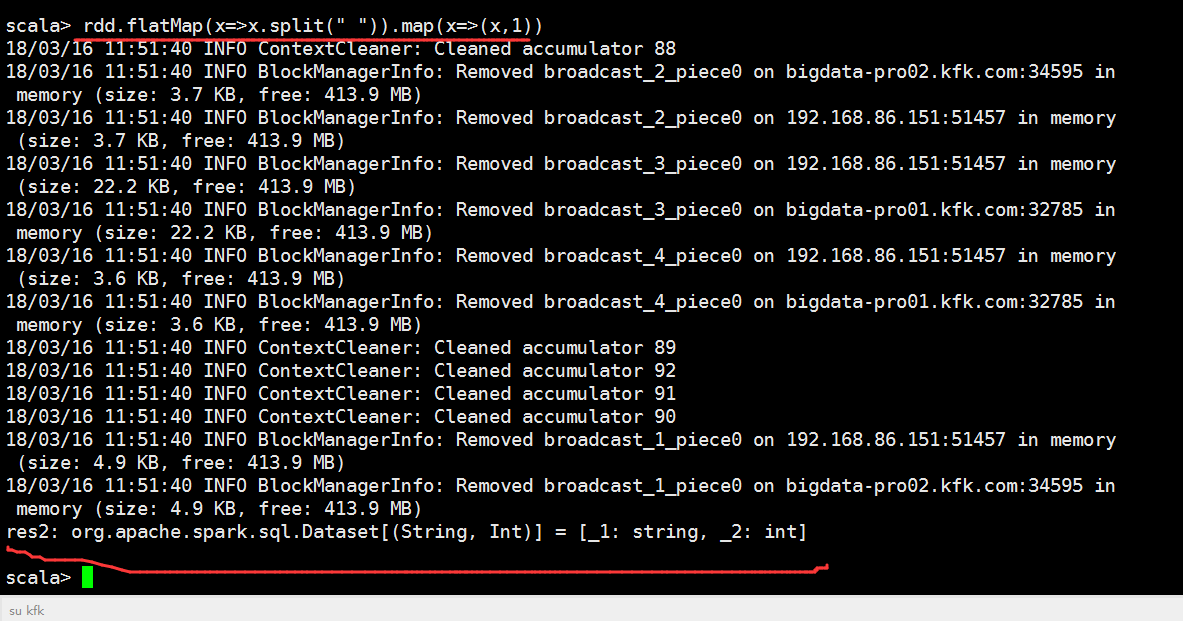

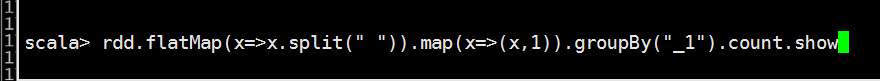

Run a group-by sum.

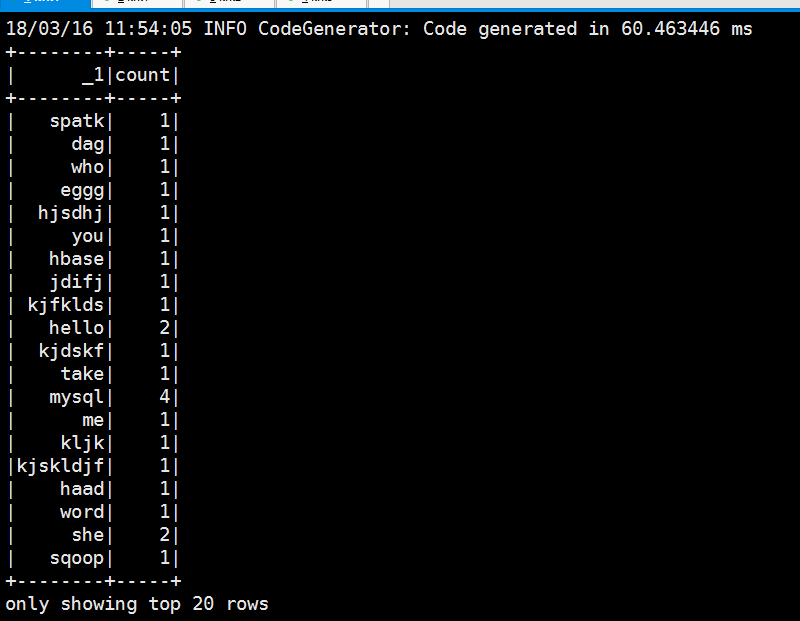
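To sanity-check the numbers outside Spark, the same group-and-sum can be computed locally with awk. This is not the Spark code from the screenshot, only an independent cross-check, and it assumes the input has whitespace-separated `key value` lines, which is a guess about the data layout; `group_sum` is a hypothetical helper.

```shell
# Sum the second column per distinct first column and print "key total",
# sorted by key. Reads the named files, or stdin when none are given.
group_sum() {
  awk '{ total[$1] += $2 } END { for (k in total) print k, total[k] }' "$@" | sort
}

# Example (assumes the file has "key value" lines):
# group_sum /opt/datas/stu.txt
```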

Exit.

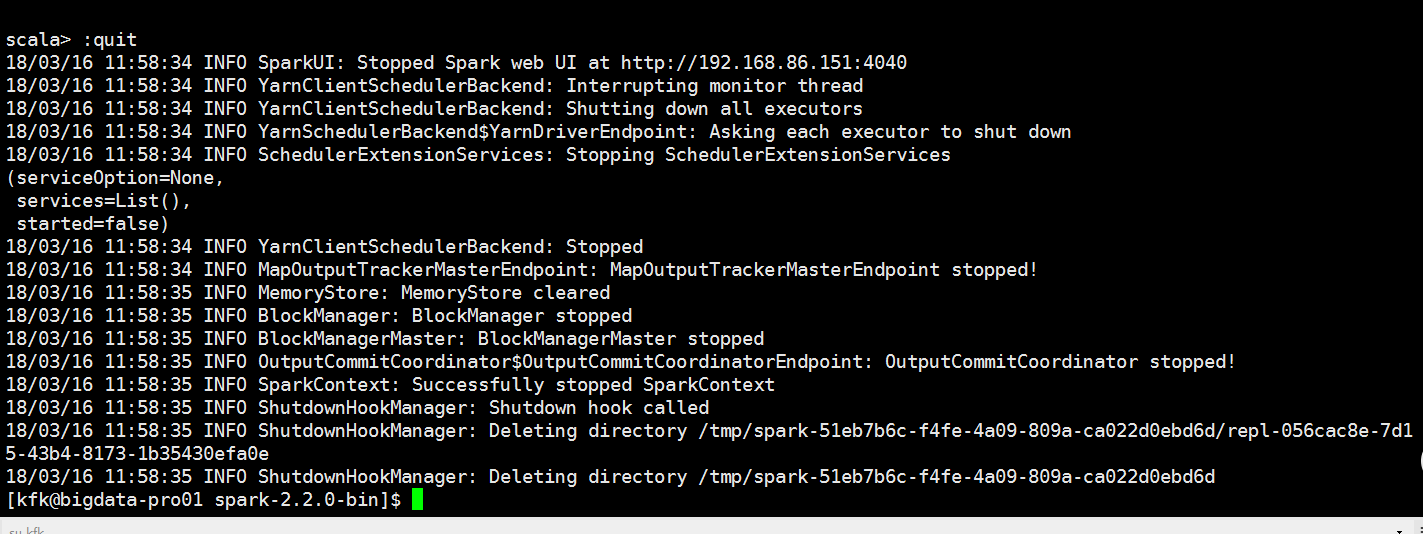

Now run it with spark-submit.

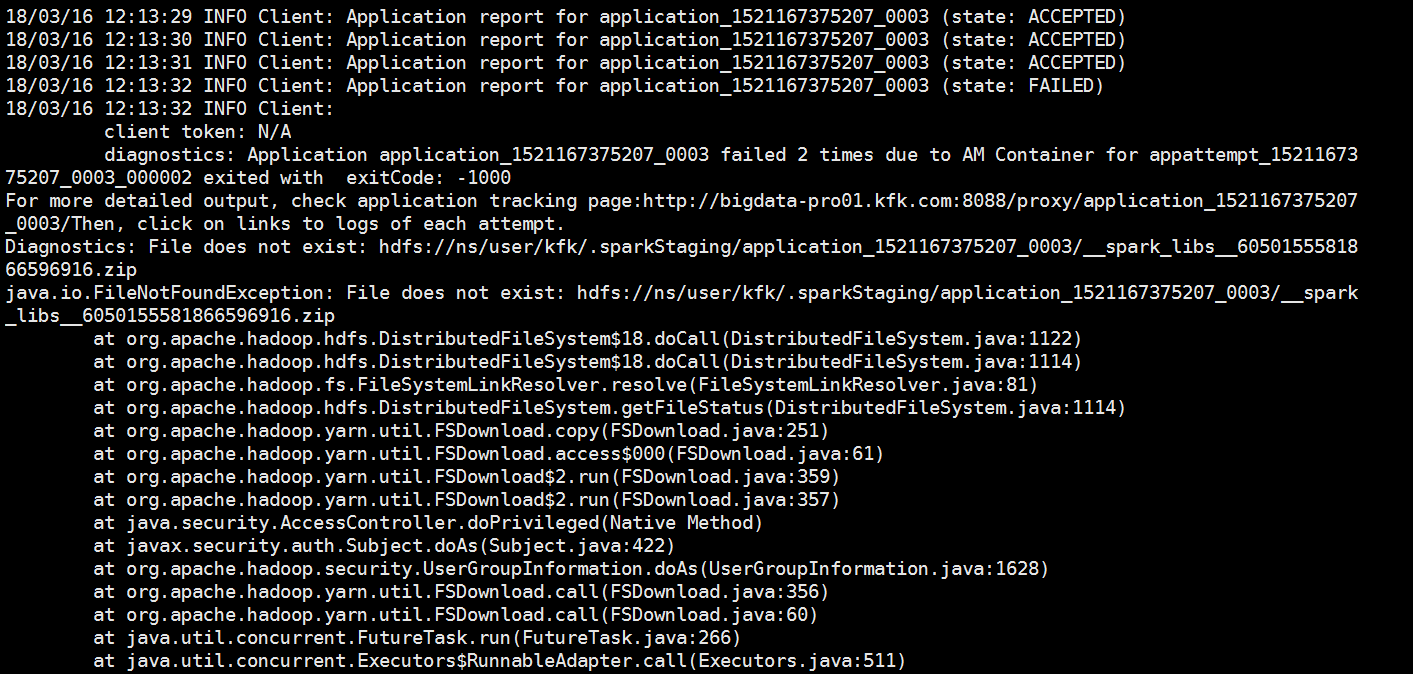

As you can see, it fails.

[kfk@bigdata-pro01 spark-2.2.0-bin]$ bin/spark-submit --class com.spark.test.Test --master yarn --deploy-mode cluster /opt/jars/sparkStu.jar file:///opt/datas/stu.txt 18/03/16 12:12:37 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable 18/03/16 12:12:40 INFO Client: Requesting a new application from cluster with 3 NodeManagers 18/03/16 12:12:40 INFO Client: Verifying our application has not requested more than the maximum memory capability of the cluster (16384 MB per container) 18/03/16 12:12:40 INFO Client: Will allocate AM container, with 1408 MB memory including 384 MB overhead 18/03/16 12:12:40 INFO Client: Setting up container launch context for our AM 18/03/16 12:12:40 INFO Client: Setting up the launch environment for our AM container 18/03/16 12:12:40 INFO Client: Preparing resources for our AM container 18/03/16 12:12:45 WARN Client: Neither spark.yarn.jars nor spark.yarn.archive is set, falling back to uploading libraries under SPARK_HOME. 
18/03/16 12:12:47 INFO Client: Uploading resource file:/tmp/spark-edc616a1-10bf-4175-9d7c-91a2430844f8/__spark_libs__6050155581866596916.zip -> hdfs://ns/user/kfk/.sparkStaging/application_1521167375207_0003/__spark_libs__6050155581866596916.zip 18/03/16 12:12:54 INFO Client: Uploading resource file:/opt/jars/sparkStu.jar -> hdfs://ns/user/kfk/.sparkStaging/application_1521167375207_0003/sparkStu.jar 18/03/16 12:12:54 INFO Client: Uploading resource file:/tmp/spark-edc616a1-10bf-4175-9d7c-91a2430844f8/__spark_conf__6419799297331143395.zip -> hdfs://ns/user/kfk/.sparkStaging/application_1521167375207_0003/__spark_conf__.zip 18/03/16 12:12:54 INFO SecurityManager: Changing view acls to: kfk 18/03/16 12:12:54 INFO SecurityManager: Changing modify acls to: kfk 18/03/16 12:12:54 INFO SecurityManager: Changing view acls groups to: 18/03/16 12:12:54 INFO SecurityManager: Changing modify acls groups to: 18/03/16 12:12:54 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(kfk); groups with view permissions: Set(); users with modify permissions: Set(kfk); groups with modify permissions: Set() 18/03/16 12:12:54 INFO Client: Submitting application application_1521167375207_0003 to ResourceManager 18/03/16 12:12:55 INFO YarnClientImpl: Submitted application application_1521167375207_0003 18/03/16 12:12:56 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:12:56 INFO Client: client token: N/A diagnostics: N/A ApplicationMaster host: N/A ApplicationMaster RPC port: -1 queue: default start time: 1521173574957 final status: UNDEFINED tracking URL: http://bigdata-pro01.kfk.com:8088/proxy/application_1521167375207_0003/ user: kfk 18/03/16 12:12:57 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:12:58 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:12:59 INFO Client: Application 
report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:00 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:01 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:02 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:03 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:04 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:05 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:06 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:07 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:08 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:09 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:10 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:11 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:12 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:13 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:14 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:15 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:16 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:17 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:18 INFO Client: Application report for application_1521167375207_0003 
(state: ACCEPTED) 18/03/16 12:13:19 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:20 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:21 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:22 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:23 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:24 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:25 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:26 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:27 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:28 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:29 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:30 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:31 INFO Client: Application report for application_1521167375207_0003 (state: ACCEPTED) 18/03/16 12:13:32 INFO Client: Application report for application_1521167375207_0003 (state: FAILED) 18/03/16 12:13:32 INFO Client: client token: N/A diagnostics: Application application_1521167375207_0003 failed 2 times due to AM Container for appattempt_1521167375207_0003_000002 exited with exitCode: -1000 For more detailed output, check application tracking page:http://bigdata-pro01.kfk.com:8088/proxy/application_1521167375207_0003/Then, click on links to logs of each attempt. 
Diagnostics: File does not exist: hdfs://ns/user/kfk/.sparkStaging/application_1521167375207_0003/__spark_libs__6050155581866596916.zip java.io.FileNotFoundException: File does not exist: hdfs://ns/user/kfk/.sparkStaging/application_1521167375207_0003/__spark_libs__6050155581866596916.zip at org.apache.hadoop.hdfs.DistributedFileSystem$18.doCall(DistributedFileSystem.java:1122) at org.apache.hadoop.hdfs.DistributedFileSystem$18.doCall(DistributedFileSystem.java:1114) at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81) at org.apache.hadoop.hdfs.DistributedFileSystem.getFileStatus(DistributedFileSystem.java:1114) at org.apache.hadoop.yarn.util.FSDownload.copy(FSDownload.java:251) at org.apache.hadoop.yarn.util.FSDownload.access$000(FSDownload.java:61) at org.apache.hadoop.yarn.util.FSDownload$2.run(FSDownload.java:359) at org.apache.hadoop.yarn.util.FSDownload$2.run(FSDownload.java:357) at java.security.AccessController.doPrivileged(Native Method) at javax.security.auth.Subject.doAs(Subject.java:422) at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1628) at org.apache.hadoop.yarn.util.FSDownload.call(FSDownload.java:356) at org.apache.hadoop.yarn.util.FSDownload.call(FSDownload.java:60) at java.util.concurrent.FutureTask.run(FutureTask.java:266) at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511) at java.util.concurrent.FutureTask.run(FutureTask.java:266) at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142) at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617) at java.lang.Thread.run(Thread.java:745) Failing this attempt. Failing the application. 
ApplicationMaster host: N/A ApplicationMaster RPC port: -1 queue: default start time: 1521173574957 final status: FAILED tracking URL: http://bigdata-pro01.kfk.com:8088/cluster/app/application_1521167375207_0003 user: kfk Exception in thread "main" org.apache.spark.SparkException: Application application_1521167375207_0003 finished with failed status at org.apache.spark.deploy.yarn.Client.run(Client.scala:1104) at org.apache.spark.deploy.yarn.Client$.main(Client.scala:1150) at org.apache.spark.deploy.yarn.Client.main(Client.scala) at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62) at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) at java.lang.reflect.Method.invoke(Method.java:497) at org.apache.spark.deploy.SparkSubmit$.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:755) at org.apache.spark.deploy.SparkSubmit$.doRunMain$1(SparkSubmit.scala:180) at org.apache.spark.deploy.SparkSubmit$.submit(SparkSubmit.scala:205) at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:119) at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala) 18/03/16 12:13:32 INFO ShutdownHookManager: Shutdown hook called 18/03/16 12:13:32 INFO ShutdownHookManager: Deleting directory /tmp/spark-edc616a1-10bf-4175-9d7c-91a2430844f8 [kfk@bigdata-pro01 spark-2.2.0-bin]$

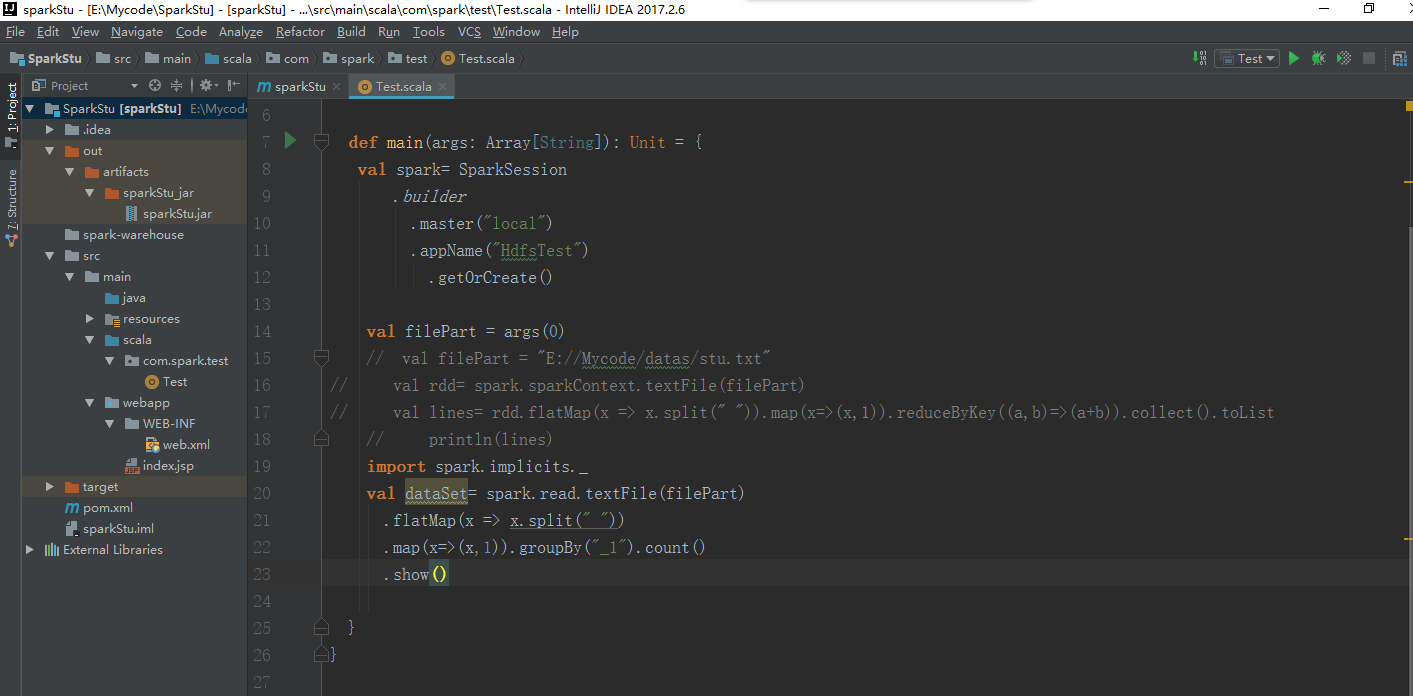

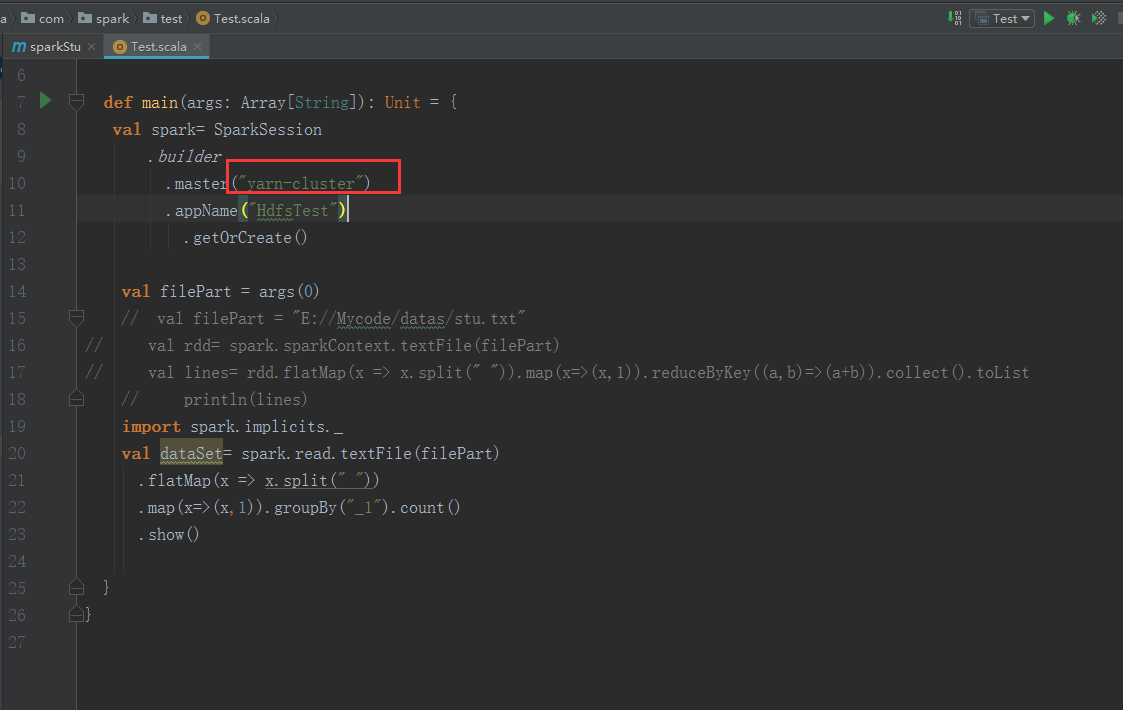

Open the sparkStu source code in IDEA.

Change this part:

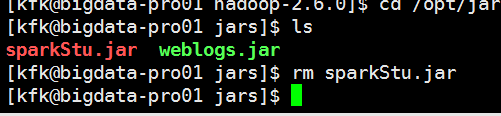

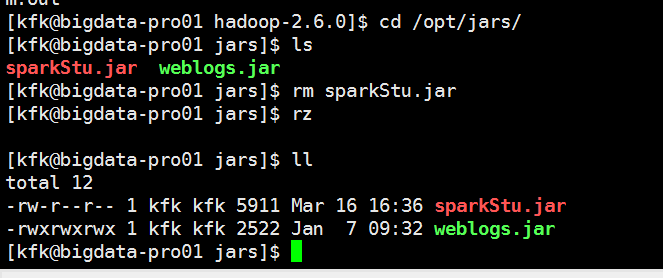

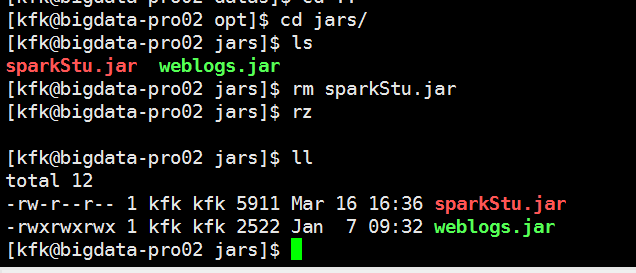

After rebuilding the jar, upload it again. (To be safe I uploaded it to all three nodes, which is probably overkill.)

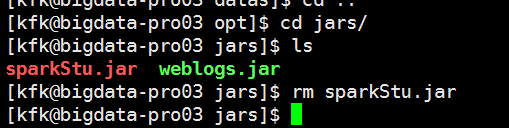

First, delete the old jar.

Now upload the new one.

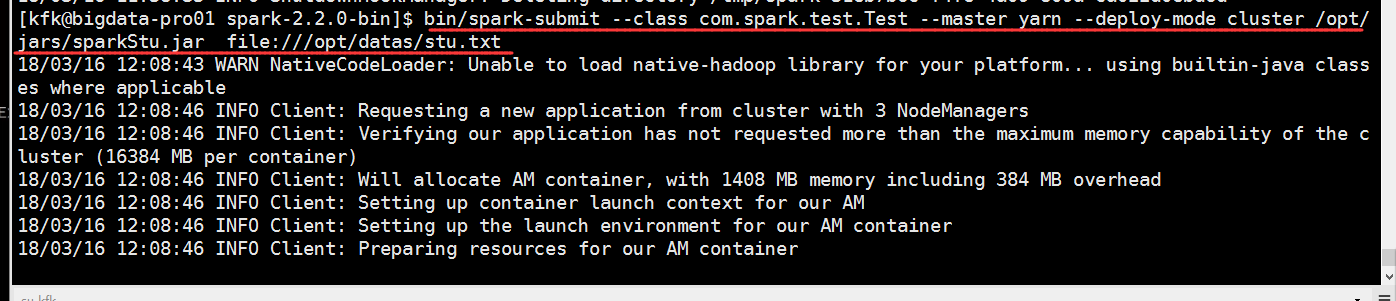

Run it again.

This time it succeeds.

[kfk@bigdata-pro01 spark-2.2.0-bin]$ bin/spark-submit --class com.spark.test.Test --master yarn --deploy-mode cluster /opt/jars/sparkStu.jar file:///opt/datas/stu.txt 18/03/16 16:46:06 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable 18/03/16 16:46:10 INFO Client: Requesting a new application from cluster with 3 NodeManagers 18/03/16 16:46:10 INFO Client: Verifying our application has not requested more than the maximum memory capability of the cluster (16384 MB per container) 18/03/16 16:46:10 INFO Client: Will allocate AM container, with 1408 MB memory including 384 MB overhead 18/03/16 16:46:10 INFO Client: Setting up container launch context for our AM 18/03/16 16:46:10 INFO Client: Setting up the launch environment for our AM container 18/03/16 16:46:10 INFO Client: Preparing resources for our AM container 18/03/16 16:46:14 WARN Client: Neither spark.yarn.jars nor spark.yarn.archive is set, falling back to uploading libraries under SPARK_HOME. 
18/03/16 16:46:19 INFO Client: Uploading resource file:/tmp/spark-43f281a9-034a-424b-8032-d6d00addfff6/__spark_libs__8012713420631475441.zip -> hdfs://ns/user/kfk/.sparkStaging/application_1521167375207_0004/__spark_libs__8012713420631475441.zip 18/03/16 16:46:29 INFO Client: Uploading resource file:/opt/jars/sparkStu.jar -> hdfs://ns/user/kfk/.sparkStaging/application_1521167375207_0004/sparkStu.jar 18/03/16 16:46:29 INFO Client: Uploading resource file:/tmp/spark-43f281a9-034a-424b-8032-d6d00addfff6/__spark_conf__8776342149712582279.zip -> hdfs://ns/user/kfk/.sparkStaging/application_1521167375207_0004/__spark_conf__.zip 18/03/16 16:46:29 INFO SecurityManager: Changing view acls to: kfk 18/03/16 16:46:29 INFO SecurityManager: Changing modify acls to: kfk 18/03/16 16:46:29 INFO SecurityManager: Changing view acls groups to: 18/03/16 16:46:29 INFO SecurityManager: Changing modify acls groups to: 18/03/16 16:46:29 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(kfk); groups with view permissions: Set(); users with modify permissions: Set(kfk); groups with modify permissions: Set() 18/03/16 16:46:29 INFO Client: Submitting application application_1521167375207_0004 to ResourceManager 18/03/16 16:46:30 INFO YarnClientImpl: Submitted application application_1521167375207_0004 18/03/16 16:46:31 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:31 INFO Client: client token: N/A diagnostics: N/A ApplicationMaster host: N/A ApplicationMaster RPC port: -1 queue: default start time: 1521189989993 final status: UNDEFINED tracking URL: http://bigdata-pro01.kfk.com:8088/proxy/application_1521167375207_0004/ user: kfk 18/03/16 16:46:32 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:33 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:35 INFO Client: Application 
report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:36 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:37 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:38 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:39 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:40 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:41 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:42 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:43 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:44 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:45 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:46 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:47 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:48 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:49 INFO Client: Application report for application_1521167375207_0004 (state: ACCEPTED) 18/03/16 16:46:50 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:46:50 INFO Client: client token: N/A diagnostics: N/A ApplicationMaster host: 192.168.86.152 ApplicationMaster RPC port: 0 queue: default start time: 1521189989993 final status: UNDEFINED tracking URL: http://bigdata-pro01.kfk.com:8088/proxy/application_1521167375207_0004/ user: kfk 18/03/16 16:46:51 INFO Client: Application report for application_1521167375207_0004 (state: 
RUNNING) 18/03/16 16:46:52 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:46:53 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:46:55 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:46:56 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:46:57 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:46:58 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:46:59 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:00 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:01 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:02 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:03 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:04 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:05 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:06 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:07 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:08 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:09 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:10 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:11 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:12 INFO Client: Application report 
for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:13 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:14 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:15 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:16 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:17 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:18 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:19 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:20 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:21 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:22 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:23 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:24 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:25 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:26 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:27 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:28 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:29 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:30 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:31 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 
16:47:32 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:33 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:34 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:35 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:36 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:37 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:38 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:39 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:40 INFO Client: Application report for application_1521167375207_0004 (state: RUNNING) 18/03/16 16:47:41 INFO Client: Application report for application_1521167375207_0004 (state: FINISHED) 18/03/16 16:47:41 INFO Client: client token: N/A diagnostics: N/A ApplicationMaster host: 192.168.86.152 ApplicationMaster RPC port: 0 queue: default start time: 1521189989993 final status: SUCCEEDED tracking URL: http://bigdata-pro01.kfk.com:8088/proxy/application_1521167375207_0004/A user: kfk 18/03/16 16:47:41 INFO ShutdownHookManager: Shutdown hook called 18/03/16 16:47:41 INFO ShutdownHookManager: Deleting directory /tmp/spark-43f281a9-034a-424b-8032-d6d00addfff6 [kfk@bigdata-pro01 spark-2.2.0-bin]$
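In a log this long, the line that matters is the last `final status:` report (FAILED in the earlier run, SUCCEEDED here). A small sketch for pulling it out of captured spark-submit output; `final_status` is a hypothetical helper:

```shell
# Read spark-submit client output on stdin and print the last reported
# final status (for example UNDEFINED, FAILED, or SUCCEEDED).
final_status() {
  grep -o 'final status: [A-Z]*' | tail -n 1 | sed 's/final status: //'
}

# Example: Spark's client log normally goes to stderr, hence 2>&1.
# bin/spark-submit --class com.spark.test.Test --master yarn --deploy-mode cluster \
#   /opt/jars/sparkStu.jar file:///opt/datas/stu.txt 2>&1 | tee submit.log | final_status
```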

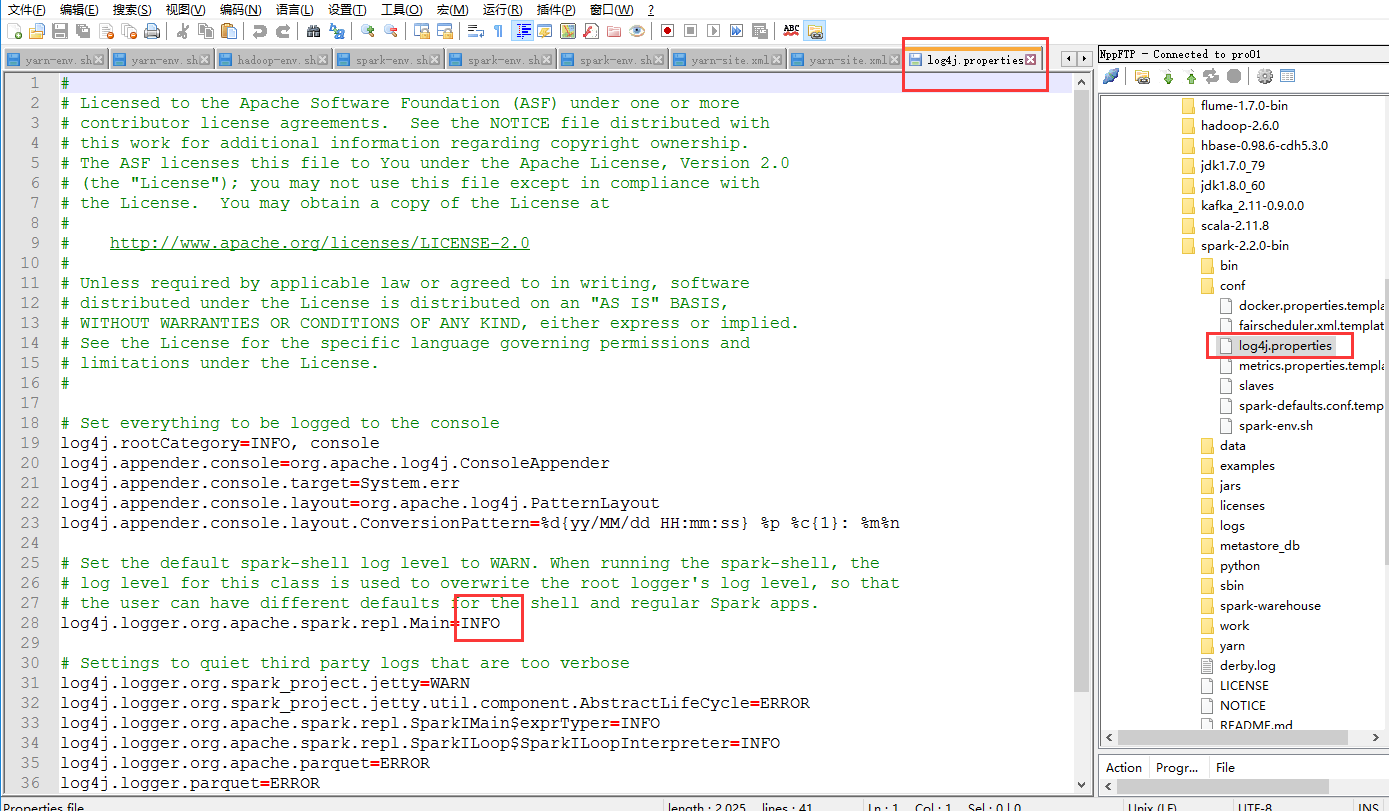

One additional note: the terminal prints this much log output because this file was modified.
