Using Dinky: hbase2mysql

Goal: use Dinky to copy the data of an HBase table into MySQL. This setup is typically used to simulate synchronizing semi-structured data.

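HBase stores data by column family. A row does not need a value in every column, and columns are grouped and managed through column families. For more on HBase table structure, see: https://www.cnblogs.com/braveym/p/7708332.html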

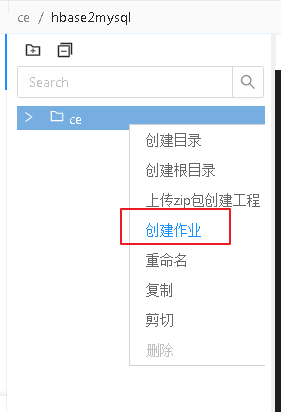

1. Create the job

2. Add the dependency jar

Add flink-sql-connector-hbase-2.2_2.11-1.13.6.jar to both Dinky's plugins directory and Flink's flink/lib directory, then restart Dinky and Flink.

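Download the jar that matches your own HBase and Flink versions. In this file name, hbase-2.2 is the HBase connector version, 2.11 is the Scala version, and 1.13.6 is the Flink version.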

For download links, see: https://www.bookstack.cn/read/ApacheFlink-1.13-zh/eb86468f5753f091.md

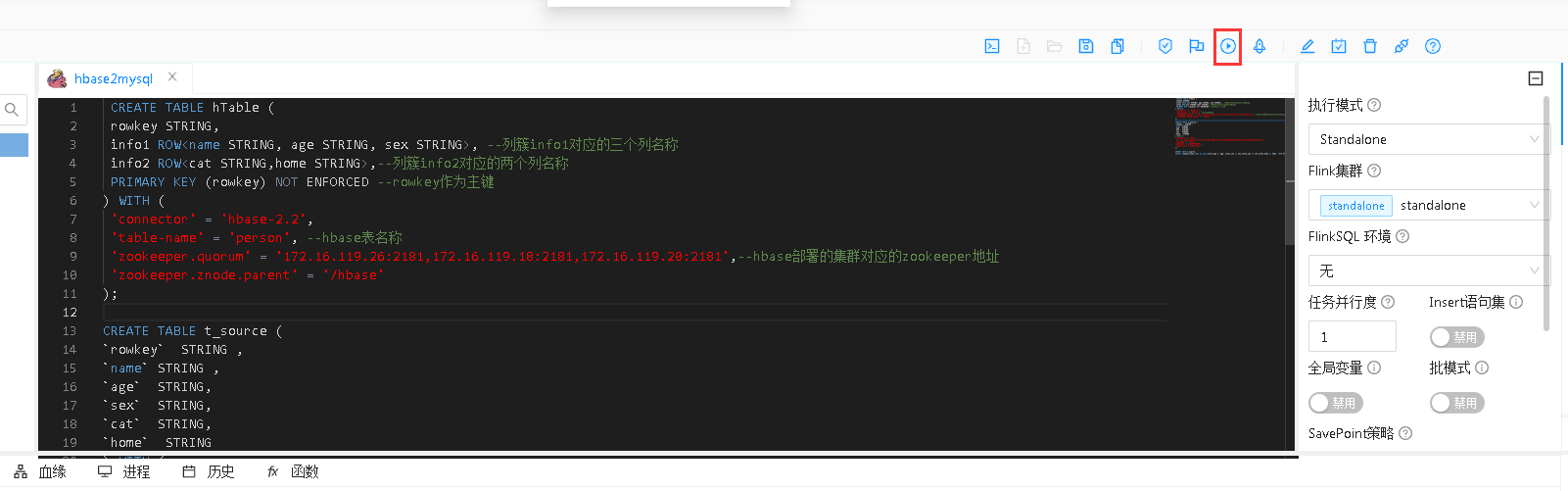

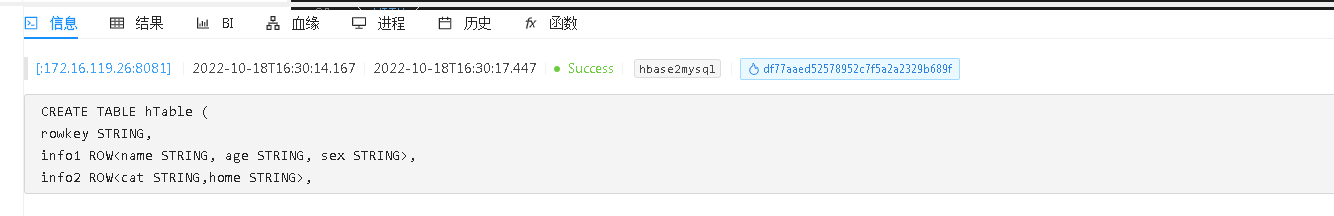

3. Write the Flink SQL

```sql
-- HBase source table
CREATE TABLE hTable (
  rowkey STRING,
  info1 ROW<name STRING, age STRING, sex STRING>,  -- the three columns in column family info1
  info2 ROW<cat STRING, home STRING>,              -- the two columns in column family info2
  PRIMARY KEY (rowkey) NOT ENFORCED                -- the rowkey serves as the primary key
) WITH (
  'connector' = 'hbase-2.2',
  'table-name' = 'person',                         -- HBase table name
  -- ZooKeeper quorum of the cluster where HBase is deployed
  'zookeeper.quorum' = '172.16.119.26:2181,172.16.119.18:2181,172.16.119.20:2181',
  'zookeeper.znode.parent' = '/hbase'
);

-- Despite its name, t_source is the MySQL sink (table hbase_out)
CREATE TABLE t_source (
  `rowkey` STRING,
  `name` STRING,
  `age` STRING,
  `sex` STRING,
  `cat` STRING,
  `home` STRING
) WITH (
  'connector' = 'jdbc',
  'url' = 'jdbc:mysql://172.16.119.50:3306/test?createDatabaseIfNotExist=true&useSSL=false',
  'username' = 'root',
  'password' = 'Tj@20220710',
  'table-name' = 'hbase_out'
);

-- Flatten the two column families into plain columns
INSERT INTO t_source
SELECT rowkey,
       info1.name AS name,
       info1.age  AS age,
       info1.sex  AS sex,
       info2.cat  AS cat,
       info2.home AS home
FROM hTable;
```

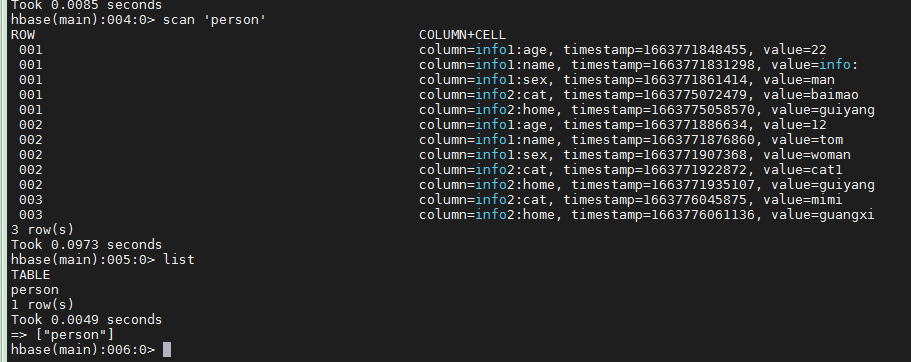
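The JDBC sink writes into an existing table. The `createDatabaseIfNotExist=true` URL parameter only lets the MySQL driver create the `test` database, and the Flink JDBC connector does not create `hbase_out` on its own. Below is a minimal sketch of the target table, assuming string columns that mirror the Flink DDL. The column lengths, engine, and charset are my own assumptions, so adjust them to your data:

```sql
-- Run in MySQL before submitting the Flink job.
-- Column types mirror the STRING columns of t_source; the lengths are assumptions.
CREATE TABLE IF NOT EXISTS test.hbase_out (
  `rowkey` VARCHAR(64)  NOT NULL,  -- HBase rowkey
  `name`   VARCHAR(255) NULL,      -- info1:name
  `age`    VARCHAR(255) NULL,      -- info1:age, kept as a string as in the Flink DDL
  `sex`    VARCHAR(255) NULL,      -- info1:sex
  `cat`    VARCHAR(255) NULL,      -- info2:cat
  `home`   VARCHAR(255) NULL,      -- info2:home
  PRIMARY KEY (`rowkey`)
) ENGINE = InnoDB DEFAULT CHARSET = utf8mb4;
```

Because `t_source` declares no primary key, Flink writes to it in append mode with plain INSERTs. Re-running the job against this table would therefore fail on duplicate `rowkey` values. If reruns need to be idempotent, add `PRIMARY KEY (rowkey) NOT ENFORCED` to the `t_source` DDL. This should switch the JDBC connector to upsert writes, which on MySQL means `INSERT ... ON DUPLICATE KEY UPDATE`.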

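Create the table and load the data in HBase beforehand. The table needs the column families info1 and info2 used in the DDL above. For the steps involved, see: https://www.cnblogs.com/braveym/p/13113950.html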

This is the table I created in HBase beforehand and the data I loaded into it.
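Before adding the sink, it can help to check that Flink reads the same rows that the HBase shell shows. The sketch below assumes the `hTable` DDL from section 3 precedes it in the same Dinky job. Submitting only a SELECT should let Dinky preview the result instead of running the INSERT into MySQL:

```sql
-- Assumes the hTable DDL from section 3 precedes this statement in the same job.
-- Flattens both column families so each HBase qualifier becomes one column;
-- qualifiers missing from a row come back as NULL.
SELECT
  rowkey,
  info1.name AS name,
  info1.age  AS age,
  info1.sex  AS sex,
  info2.cat  AS cat,
  info2.home AS home
FROM hTable;
```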

4. Run the job

Select the Flink cluster configured in Dinky and run the job.

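The job is reported as successful. However, a success status in the Dinky UI does not necessarily mean the data was actually synchronized.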

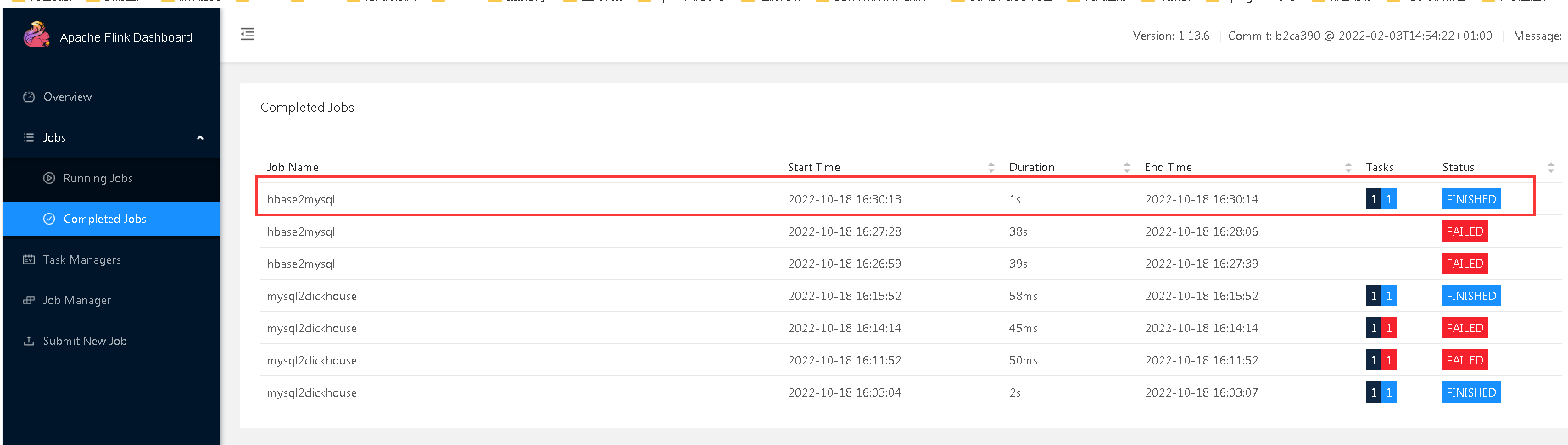

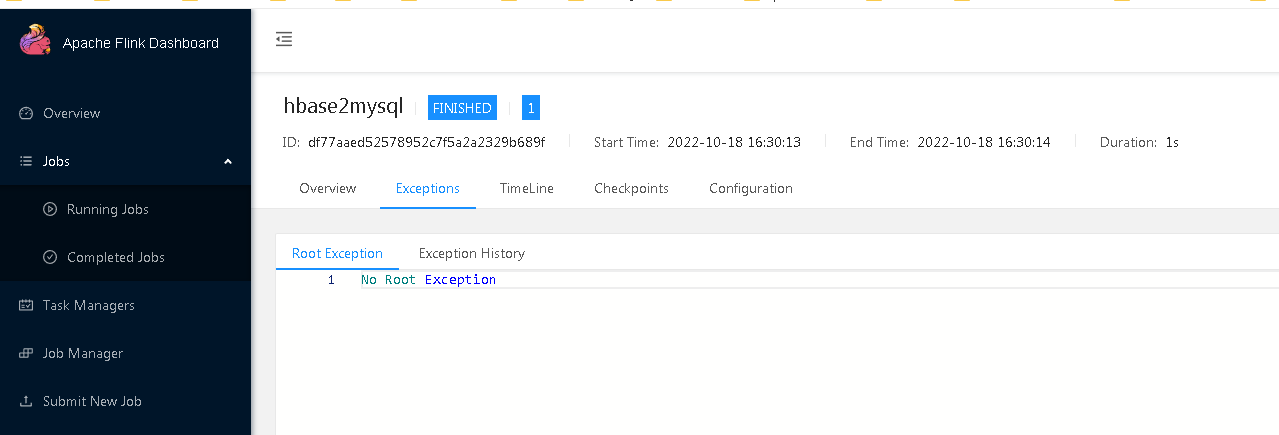

We also need to check the Flink logs and confirm that data has arrived in the database table.

In Flink we can see the final state of the most recently run job, and it shows no exception.

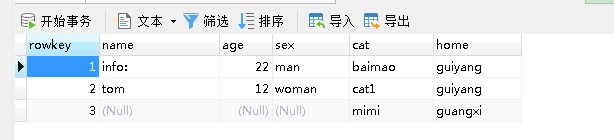

The corresponding MySQL table has also received the data.
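A row count makes this check more concrete than scanning the table by eye. Run the queries below against the `test` database in MySQL, and compare the count with what `count 'person'` reports in the HBase shell for your data. The `synced_rows` alias is just an illustrative name:

```sql
-- Run in MySQL, database `test`.
-- Total rows written by the job; compare with `count 'person'` in the HBase shell.
SELECT COUNT(*) AS synced_rows FROM hbase_out;

-- Spot-check a few rows, including which qualifiers arrived empty (NULL).
SELECT rowkey, name, age, sex, cat, home
FROM hbase_out
ORDER BY rowkey
LIMIT 10;
```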

Debugging notes

Of course, real debugging is rarely this smooth. It took me quite a while to get the job above working.

For example, the Flink exception log may contain an error like this:

(The nested exceptions repeat the same list of 11 retry failures. Those repeats and most stack frames are trimmed below.)

```
Job failed during initialization of JobManager
org.apache.flink.runtime.client.JobInitializationException: Could not start the JobMaster.
	at org.apache.flink.runtime.jobmaster.DefaultJobMasterServiceProcess.lambda$new$0(DefaultJobMasterServiceProcess.java:97)
	...
	at java.lang.Thread.run(Thread.java:750)
Caused by: java.util.concurrent.CompletionException: java.lang.RuntimeException: org.apache.flink.runtime.JobException: Creating the input splits caused an error: Failed after attempts=11, exceptions:
2022-10-18T08:27:28.422Z, RpcRetryingCaller{globalStartTime=1666081648417, pause=100, maxAttempts=11}, java.net.ConnectException: Call to gx-dsjjq-xuyue-3/172.16.119.20:16020 failed on connection exception: org.apache.hbase.thirdparty.io.netty.channel.AbstractChannel$AnnotatedConnectException: Connection refused: gx-dsjjq-xuyue-3/172.16.119.20:16020
2022-10-18T08:27:28.523Z, RpcRetryingCaller{globalStartTime=1666081648417, pause=100, maxAttempts=11}, org.apache.flink.hbase.shaded.org.apache.hadoop.hbase.ipc.FailedServerException: Call to gx-dsjjq-xuyue-3/172.16.119.20:16020 failed on local exception: org.apache.flink.hbase.shaded.org.apache.hadoop.hbase.ipc.FailedServerException: This server is in the failed servers list: gx-dsjjq-xuyue-3/172.16.119.20:16020
2022-10-18T08:27:28.725Z, RpcRetryingCaller{globalStartTime=1666081648417, pause=100, maxAttempts=11}, org.apache.flink.hbase.shaded.org.apache.hadoop.hbase.ipc.FailedServerException: Call to gx-dsjjq-xuyue-3/172.16.119.20:16020 failed on local exception: org.apache.flink.hbase.shaded.org.apache.hadoop.hbase.ipc.FailedServerException: This server is in the failed servers list: gx-dsjjq-xuyue-3/172.16.119.20:16020
2022-10-18T08:27:29.029Z, RpcRetryingCaller{globalStartTime=1666081648417, pause=100, maxAttempts=11}, org.apache.flink.hbase.shaded.org.apache.hadoop.hbase.ipc.FailedServerException: Call to gx-dsjjq-xuyue-3/172.16.119.20:16020 failed on local exception: org.apache.flink.hbase.shaded.org.apache.hadoop.hbase.ipc.FailedServerException: This server is in the failed servers list: gx-dsjjq-xuyue-3/172.16.119.20:16020
2022-10-18T08:27:29.533Z, RpcRetryingCaller{globalStartTime=1666081648417, pause=100, maxAttempts=11}, org.apache.flink.hbase.shaded.org.apache.hadoop.hbase.ipc.FailedServerException: Call to gx-dsjjq-xuyue-3/172.16.119.20:16020 failed on local exception: org.apache.flink.hbase.shaded.org.apache.hadoop.hbase.ipc.FailedServerException: This server is in the failed servers list: gx-dsjjq-xuyue-3/172.16.119.20:16020
2022-10-18T08:27:30.547Z, RpcRetryingCaller{globalStartTime=1666081648417, pause=100, maxAttempts=11}, java.net.ConnectException: Call to gx-dsjjq-xuyue-3/172.16.119.20:16020 failed on connection exception: org.apache.hbase.thirdparty.io.netty.channel.AbstractChannel$AnnotatedConnectException: Connection refused: gx-dsjjq-xuyue-3/172.16.119.20:16020
2022-10-18T08:27:32.563Z, RpcRetryingCaller{globalStartTime=1666081648417, pause=100, maxAttempts=11}, java.net.ConnectException: Call to gx-dsjjq-xuyue-3/172.16.119.20:16020 failed on connection exception: org.apache.hbase.thirdparty.io.netty.channel.AbstractChannel$AnnotatedConnectException: Connection refused: gx-dsjjq-xuyue-3/172.16.119.20:16020
2022-10-18T08:27:36.570Z, RpcRetryingCaller{globalStartTime=1666081648417, pause=100, maxAttempts=11}, java.net.ConnectException: Call to gx-dsjjq-xuyue-3/172.16.119.20:16020 failed on connection exception: org.apache.hbase.thirdparty.io.netty.channel.AbstractChannel$AnnotatedConnectException: Connection refused: gx-dsjjq-xuyue-3/172.16.119.20:16020
2022-10-18T08:27:46.668Z, RpcRetryingCaller{globalStartTime=1666081648417, pause=100, maxAttempts=11}, java.net.ConnectException: Call to gx-dsjjq-xuyue-3/172.16.119.20:16020 failed on connection exception: org.apache.hbase.thirdparty.io.netty.channel.AbstractChannel$AnnotatedConnectException: Connection refused: gx-dsjjq-xuyue-3/172.16.119.20:16020
2022-10-18T08:27:56.690Z, RpcRetryingCaller{globalStartTime=1666081648417, pause=100, maxAttempts=11}, java.net.ConnectException: Call to gx-dsjjq-xuyue-3/172.16.119.20:16020 failed on connection exception: org.apache.hbase.thirdparty.io.netty.channel.AbstractChannel$AnnotatedConnectException: Connection refused: gx-dsjjq-xuyue-3/172.16.119.20:16020
2022-10-18T08:28:06.703Z, RpcRetryingCaller{globalStartTime=1666081648417, pause=100, maxAttempts=11}, java.net.ConnectException: Call to gx-dsjjq-xuyue-3/172.16.119.20:16020 failed on connection exception: org.apache.hbase.thirdparty.io.netty.channel.AbstractChannel$AnnotatedConnectException: Connection refused: gx-dsjjq-xuyue-3/172.16.119.20:16020
	at java.util.concurrent.CompletableFuture.encodeThrowable(CompletableFuture.java:273)
	at java.util.concurrent.CompletableFuture.completeThrowable(CompletableFuture.java:280)
	at java.util.concurrent.CompletableFuture$AsyncSupply.run(CompletableFuture.java:1606)
	... 7 more
Caused by: java.lang.RuntimeException: org.apache.flink.runtime.JobException: Creating the input splits caused an error: Failed after attempts=11, exceptions: ...
	at org.apache.flink.util.ExceptionUtils.rethrow(ExceptionUtils.java:316)
	at org.apache.flink.util.function.FunctionUtils.lambda$uncheckedSupplier$4(FunctionUtils.java:114)
	at java.util.concurrent.CompletableFuture$AsyncSupply.run(CompletableFuture.java:1604)
	... 7 more
Caused by: org.apache.flink.runtime.JobException: Creating the input splits caused an error: Failed after attempts=11, exceptions: ...
	at org.apache.flink.runtime.executiongraph.ExecutionJobVertex.<init>(ExecutionJobVertex.java:247)
	at org.apache.flink.runtime.executiongraph.DefaultExecutionGraph.attachJobGraph(DefaultExecutionGraph.java:792)
	...
	... 8 more
Caused by: org.apache.flink.hbase.shaded.org.apache.hadoop.hbase.client.RetriesExhaustedException: Failed after attempts=11, exceptions: ...
	at org.apache.flink.hbase.shaded.org.apache.hadoop.hbase.client.RpcRetryingCallerImpl.callWithRetries(RpcRetryingCallerImpl.java:145)
	at org.apache.flink.hbase.shaded.org.apache.hadoop.hbase.client.ResultBoundedCompletionService$QueueingFuture.run(ResultBoundedCompletionService.java:80)
	... 3 more
Caused by: java.net.ConnectException: Call to gx-dsjjq-xuyue-3/172.16.119.20:16020 failed on connection exception: org.apache.hbase.thirdparty.io.netty.channel.AbstractChannel$AnnotatedConnectException: Connection refused: gx-dsjjq-xuyue-3/172.16.119.20:16020
	at org.apache.flink.hbase.shaded.org.apache.hadoop.hbase.ipc.IPCUtil.wrapException(IPCUtil.java:177)
	...
	... 1 more
Caused by: org.apache.hbase.thirdparty.io.netty.channel.AbstractChannel$AnnotatedConnectException: Connection refused: gx-dsjjq-xuyue-3/172.16.119.20:16020
	at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)
	at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:715)
	at org.apache.hbase.thirdparty.io.netty.channel.socket.nio.NioSocketChannel.doFinishConnect(NioSocketChannel.java:327)
	at org.apache.hbase.thirdparty.io.netty.channel.nio.AbstractNioChannel$AbstractNioUnsafe.finishConnect(AbstractNioChannel.java:340)
	... 7 more
Caused by: java.net.ConnectException: Connection refused
	... 11 more
```

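In this case, check whether the HBase cluster is healthy. The innermost cause is "Connection refused" on gx-dsjjq-xuyue-3/172.16.119.20:16020, which is the default HBase RegionServer port. The JobManager could not reach that RegionServer while creating the input splits. I am running the HBase that ships with CDH, so I could simply restart it from the web console, which is convenient.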

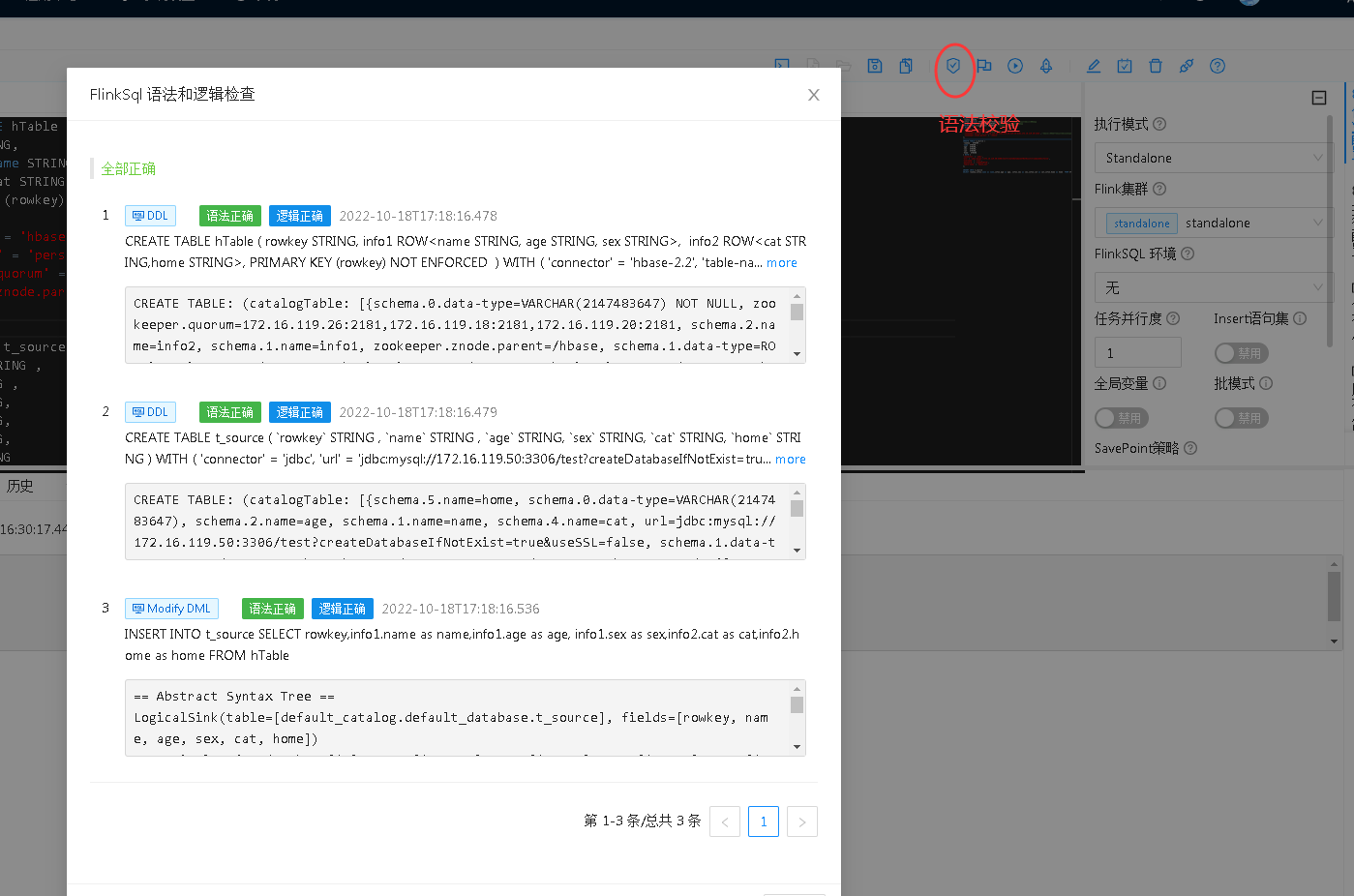

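Other errors come from a mismatch between the HBase and Flink versions, so check whether your dependency jars are the right ones. Clues for this kind of error can be found in the log of the syntax check that you run before starting the job.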

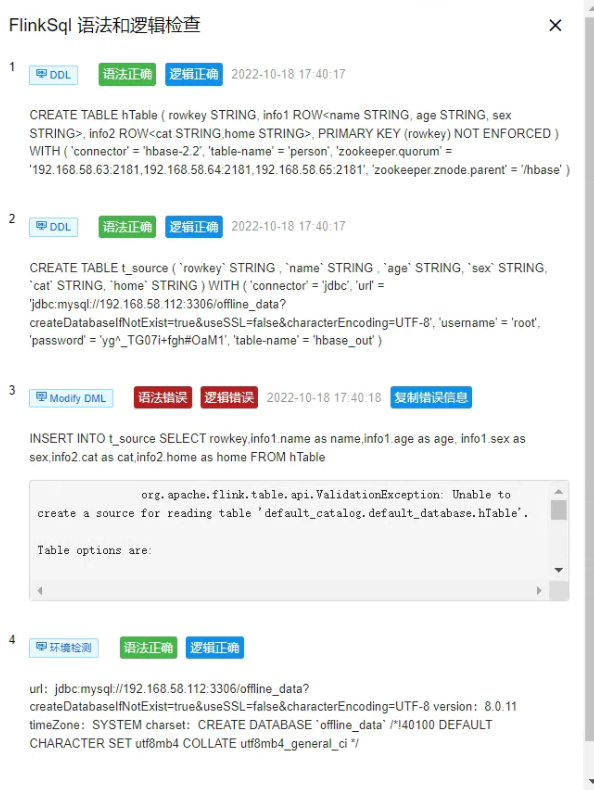

For example, a failed syntax check looks like this.

At this point, many people's first reaction is that their SQL is wrong. They keep re-checking the SQL and never find the cause.
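A faster way to separate SQL mistakes from connector problems is to plan the HBase source on its own. Dinky's syntax check runs the statements through Flink's planner (`explainSql`) without submitting them. The sketch below reuses the `hTable` DDL from section 3 and explains a trivial query over it. If this alone fails with the same `ValidationException`, the problem most likely lies with the HBase connector jar, for example a missing jar or one built for a different Flink or Scala version, rather than with the INSERT or the MySQL sink:

```sql
-- Only the HBase source, copied from section 3; adjust zookeeper.quorum to your cluster.
CREATE TABLE hTable (
  rowkey STRING,
  info1 ROW<name STRING, age STRING, sex STRING>,
  info2 ROW<cat STRING, home STRING>,
  PRIMARY KEY (rowkey) NOT ENFORCED
) WITH (
  'connector' = 'hbase-2.2',
  'table-name' = 'person',
  'zookeeper.quorum' = '172.16.119.26:2181,172.16.119.18:2181,172.16.119.20:2181',
  'zookeeper.znode.parent' = '/hbase'
);

-- Planning this query makes Flink look up the 'hbase-2.2' connector factory and
-- create the table source, without submitting a job or touching the MySQL sink.
EXPLAIN PLAN FOR
SELECT rowkey, info1.name FROM hTable;
```

As far as I can tell, this check does not need a live connection to ZooKeeper or the RegionServers. It can therefore pass while HBase is down, and a "Connection refused" like the one in the previous trace only appears once the job actually runs.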

Let's pull out the error log and look at it on its own:

org.apache.flink.table.api.ValidationException: Unable to create a source for reading table 'default_catalog.default_database.hTable'. Table options are: 'connector'='hbase-2.2' 'table-name'='person' 'zookeeper.quorum'='192.168.58.63:2181,192.168.58.64:2181,192.168.58.65:2181' 'zookeeper.znode.parent'='/hbase' at org.apache.flink.table.factories.FactoryUtil.createTableSource(FactoryUtil.java:131) at org.apache.flink.table.planner.plan.schema.CatalogSourceTable.createDynamicTableSource(CatalogSourceTable.java:116) at org.apache.flink.table.planner.plan.schema.CatalogSourceTable.toRel(CatalogSourceTable.java:82) at org.apache.calcite.sql2rel.SqlToRelConverter.toRel(SqlToRelConverter.java:3585) at org.apache.calcite.sql2rel.SqlToRelConverter.convertIdentifier(SqlToRelConverter.java:2507) at org.apache.calcite.sql2rel.SqlToRelConverter.convertFrom(SqlToRelConverter.java:2144) at org.apache.calcite.sql2rel.SqlToRelConverter.convertFrom(SqlToRelConverter.java:2093) at org.apache.calcite.sql2rel.SqlToRelConverter.convertFrom(SqlToRelConverter.java:2050) at org.apache.calcite.sql2rel.SqlToRelConverter.convertSelectImpl(SqlToRelConverter.java:663) at org.apache.calcite.sql2rel.SqlToRelConverter.convertSelect(SqlToRelConverter.java:644) at org.apache.calcite.sql2rel.SqlToRelConverter.convertQueryRecursive(SqlToRelConverter.java:3438) at org.apache.calcite.sql2rel.SqlToRelConverter.convertQuery(SqlToRelConverter.java:570) at org.apache.flink.table.planner.calcite.FlinkPlannerImpl.org$apache$flink$table$planner$calcite$FlinkPlannerImpl$$rel(FlinkPlannerImpl.scala:168) at org.apache.flink.table.planner.calcite.FlinkPlannerImpl.rel(FlinkPlannerImpl.scala:160) at org.apache.flink.table.planner.operations.SqlToOperationConverter.toQueryOperation(SqlToOperationConverter.java:967) at org.apache.flink.table.planner.operations.SqlToOperationConverter.convertSqlQuery(SqlToOperationConverter.java:936) at 
org.apache.flink.table.planner.operations.SqlToOperationConverter.convert(SqlToOperationConverter.java:275) at org.apache.flink.table.planner.operations.SqlToOperationConverter.convertSqlInsert(SqlToOperationConverter.java:595) at org.apache.flink.table.planner.operations.SqlToOperationConverter.convert(SqlToOperationConverter.java:268) at org.apache.flink.table.planner.delegation.ParserImpl.parse(ParserImpl.java:99) at org.apache.flink.table.api.internal.TableEnvironmentImpl.explainSql(TableEnvironmentImpl.java:670) at com.dlink.executor.Executor.explainSql(Executor.java:230) at com.dlink.explainer.Explainer.explainSql(Explainer.java:215) at com.dlink.job.JobManager.explainSql(JobManager.java:464) at com.dlink.service.impl.StudioServiceImpl.explainFlinkSql(StudioServiceImpl.java:167) at com.dlink.service.impl.StudioServiceImpl.explainSql(StudioServiceImpl.java:154) at cn.com.taiji.datalake.processing.flink.task.service.impl.TaskManagerServicelmpl.checkSql(TaskManagerServicelmpl.java:115) at cn.com.taiji.datalake.processing.flink.task.service.impl.TaskManagerServicelmpl$$FastClassBySpringCGLIB$$5b6a72ed.invoke(<generated>) at org.springframework.cglib.proxy.MethodProxy.invoke(MethodProxy.java:218) at org.springframework.aop.framework.CglibAopProxy$CglibMethodInvocation.invokeJoinpoint(CglibAopProxy.java:783) at org.springframework.aop.framework.ReflectiveMethodInvocation.proceed(ReflectiveMethodInvocation.java:163) at org.springframework.aop.framework.CglibAopProxy$CglibMethodInvocation.proceed(CglibAopProxy.java:753) at org.springframework.transaction.interceptor.TransactionInterceptor$1.proceedWithInvocation(TransactionInterceptor.java:123) at org.springframework.transaction.interceptor.TransactionAspectSupport.invokeWithinTransaction(TransactionAspectSupport.java:388) at org.springframework.transaction.interceptor.TransactionInterceptor.invoke(TransactionInterceptor.java:119) at 
org.springframework.aop.framework.ReflectiveMethodInvocation.proceed(ReflectiveMethodInvocation.java:186) at org.springframework.aop.framework.CglibAopProxy$CglibMethodInvocation.proceed(CglibAopProxy.java:753) at org.springframework.aop.interceptor.ExposeInvocationInterceptor.invoke(ExposeInvocationInterceptor.java:97) at org.springframework.aop.framework.ReflectiveMethodInvocation.proceed(ReflectiveMethodInvocation.java:186) at org.springframework.aop.framework.CglibAopProxy$CglibMethodInvocation.proceed(CglibAopProxy.java:753) at org.springframework.aop.framework.CglibAopProxy$DynamicAdvisedInterceptor.intercept(CglibAopProxy.java:698) at cn.com.taiji.datalake.processing.flink.task.service.impl.TaskManagerServicelmpl$$EnhancerBySpringCGLIB$$514c1507.checkSql(<generated>) at cn.com.taiji.datalake.processing.flink.task.api.TaskManagerController.checkSql(TaskManagerController.java:52) at cn.com.taiji.datalake.processing.flink.task.api.TaskManagerController$$FastClassBySpringCGLIB$$17847fbe.invoke(<generated>) at org.springframework.cglib.proxy.MethodProxy.invoke(MethodProxy.java:218) at org.springframework.aop.framework.CglibAopProxy$CglibMethodInvocation.invokeJoinpoint(CglibAopProxy.java:783) at org.springframework.aop.framework.ReflectiveMethodInvocation.proceed(ReflectiveMethodInvocation.java:163) at org.springframework.aop.framework.CglibAopProxy$CglibMethodInvocation.proceed(CglibAopProxy.java:753) at org.springframework.aop.aspectj.MethodInvocationProceedingJoinPoint.proceed(MethodInvocationProceedingJoinPoint.java:89) at cn.com.taiji.tdp.system.logger.config.LoggingAspect.executionWithPointCut(LoggingAspect.java:83) at sun.reflect.GeneratedMethodAccessor283.invoke(Unknown Source) at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) at java.lang.reflect.Method.invoke(Method.java:498) at org.springframework.aop.aspectj.AbstractAspectJAdvice.invokeAdviceMethodWithGivenArgs(AbstractAspectJAdvice.java:634) at 
org.springframework.aop.aspectj.AbstractAspectJAdvice.invokeAdviceMethod(AbstractAspectJAdvice.java:624) at org.springframework.aop.aspectj.AspectJAroundAdvice.invoke(AspectJAroundAdvice.java:72) at org.springframework.aop.framework.ReflectiveMethodInvocation.proceed(ReflectiveMethodInvocation.java:175) at org.springframework.aop.framework.CglibAopProxy$CglibMethodInvocation.proceed(CglibAopProxy.java:753) at org.springframework.aop.interceptor.ExposeInvocationInterceptor.invoke(ExposeInvocationInterceptor.java:97) at org.springframework.aop.framework.ReflectiveMethodInvocation.proceed(ReflectiveMethodInvocation.java:186) at org.springframework.aop.framework.CglibAopProxy$CglibMethodInvocation.proceed(CglibAopProxy.java:753) at org.springframework.aop.framework.CglibAopProxy$DynamicAdvisedInterceptor.intercept(CglibAopProxy.java:698) at cn.com.taiji.datalake.processing.flink.task.api.TaskManagerController$$EnhancerBySpringCGLIB$$88b288af.checkSql(<generated>) at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62) at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) at java.lang.reflect.Method.invoke(Method.java:498) at org.springframework.web.method.support.InvocableHandlerMethod.doInvoke(InvocableHandlerMethod.java:205) at org.springframework.web.method.support.InvocableHandlerMethod.invokeForRequest(InvocableHandlerMethod.java:150) at org.springframework.web.servlet.mvc.method.annotation.ServletInvocableHandlerMethod.invokeAndHandle(ServletInvocableHandlerMethod.java:117) at org.springframework.web.servlet.mvc.method.annotation.RequestMappingHandlerAdapter.invokeHandlerMethod(RequestMappingHandlerAdapter.java:895) at org.springframework.web.servlet.mvc.method.annotation.RequestMappingHandlerAdapter.handleInternal(RequestMappingHandlerAdapter.java:808) at 
org.springframework.web.servlet.mvc.method.AbstractHandlerMethodAdapter.handle(AbstractHandlerMethodAdapter.java:87) at org.springframework.web.servlet.DispatcherServlet.doDispatch(DispatcherServlet.java:1067) at org.springframework.web.servlet.DispatcherServlet.doService(DispatcherServlet.java:963) at org.springframework.web.servlet.FrameworkServlet.processRequest(FrameworkServlet.java:1006) at org.springframework.web.servlet.FrameworkServlet.doPost(FrameworkServlet.java:909) at javax.servlet.http.HttpServlet.service(HttpServlet.java:681) at org.springframework.web.servlet.FrameworkServlet.service(FrameworkServlet.java:883) at javax.servlet.http.HttpServlet.service(HttpServlet.java:764) at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:227) at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:162) at org.apache.tomcat.websocket.server.WsFilter.doFilter(WsFilter.java:53) at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:189) at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:162) at cn.com.taiji.tdp.system.security.core.authorize.HeTuFilterSecurityInterceptor.doFilter(HeTuFilterSecurityInterceptor.java:59) at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:189) at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:162) at org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:113) at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:189) at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:162) at org.springframework.security.web.authentication.AbstractAuthenticationProcessingFilter.doFilter(AbstractAuthenticationProcessingFilter.java:219) at 
org.springframework.security.web.authentication.AbstractAuthenticationProcessingFilter.doFilter(AbstractAuthenticationProcessingFilter.java:213) at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:189) at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:162) at cn.com.taiji.datalake.admin.common.db.filter.LicenseFilter.doFilter(LicenseFilter.java:65) at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:189) at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:162) at org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:327) at org.springframework.security.web.access.intercept.FilterSecurityInterceptor.invoke(FilterSecurityInterceptor.java:115) at org.springframework.security.web.access.intercept.FilterSecurityInterceptor.doFilter(FilterSecurityInterceptor.java:81) at org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:336) at cn.com.taiji.tdp.system.security.core.authorize.HeTuFilterSecurityInterceptor.doFilter(HeTuFilterSecurityInterceptor.java:59) at org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:336) at org.springframework.security.web.access.ExceptionTranslationFilter.doFilter(ExceptionTranslationFilter.java:122) at org.springframework.security.web.access.ExceptionTranslationFilter.doFilter(ExceptionTranslationFilter.java:116) at org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:336) at org.springframework.security.web.session.SessionManagementFilter.doFilter(SessionManagementFilter.java:126) at org.springframework.security.web.session.SessionManagementFilter.doFilter(SessionManagementFilter.java:81) at org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:336) at 
org.springframework.security.web.authentication.AnonymousAuthenticationFilter.doFilter(AnonymousAuthenticationFilter.java:109) at org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:336) at org.springframework.security.web.servletapi.SecurityContextHolderAwareRequestFilter.doFilter(SecurityContextHolderAwareRequestFilter.java:149) at org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:336) at org.springframework.security.web.savedrequest.RequestCacheAwareFilter.doFilter(RequestCacheAwareFilter.java:63) at org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:336) at cn.com.taiji.tdp.system.security.core.filter.JwtAuthenticationTokenFilter.doFilterInternal(JwtAuthenticationTokenFilter.java:83) at org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:119) at org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:336) at org.springframework.security.web.authentication.AbstractAuthenticationProcessingFilter.doFilter(AbstractAuthenticationProcessingFilter.java:219) at org.springframework.security.web.authentication.AbstractAuthenticationProcessingFilter.doFilter(AbstractAuthenticationProcessingFilter.java:213) at org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:336) at org.springframework.security.web.authentication.logout.LogoutFilter.doFilter(LogoutFilter.java:103) at org.springframework.security.web.authentication.logout.LogoutFilter.doFilter(LogoutFilter.java:89) at org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:336) at org.springframework.web.filter.CorsFilter.doFilterInternal(CorsFilter.java:91) at org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:119) at 
org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:336) at org.springframework.security.web.header.HeaderWriterFilter.doHeadersAfter(HeaderWriterFilter.java:90) at org.springframework.security.web.header.HeaderWriterFilter.doFilterInternal(HeaderWriterFilter.java:75) at org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:119) at org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:336) at org.springframework.security.web.context.SecurityContextPersistenceFilter.doFilter(SecurityContextPersistenceFilter.java:110) at org.springframework.security.web.context.SecurityContextPersistenceFilter.doFilter(SecurityContextPersistenceFilter.java:80) at org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:336) at org.springframework.security.web.context.request.async.WebAsyncManagerIntegrationFilter.doFilterInternal(WebAsyncManagerIntegrationFilter.java:55) at org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:119) at org.springframework.security.web.FilterChainProxy$VirtualFilterChain.doFilter(FilterChainProxy.java:336) at org.springframework.security.web.FilterChainProxy.doFilterInternal(FilterChainProxy.java:211) at org.springframework.security.web.FilterChainProxy.doFilter(FilterChainProxy.java:183) at org.springframework.web.filter.DelegatingFilterProxy.invokeDelegate(DelegatingFilterProxy.java:358) at org.springframework.web.filter.DelegatingFilterProxy.doFilter(DelegatingFilterProxy.java:271) at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:189) at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:162) at org.springframework.web.filter.RequestContextFilter.doFilterInternal(RequestContextFilter.java:100) at 
org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:119) at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:189) at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:162) at org.springframework.web.filter.FormContentFilter.doFilterInternal(FormContentFilter.java:93) at org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:119) at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:189) at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:162) at org.springframework.boot.actuate.metrics.web.servlet.WebMvcMetricsFilter.doFilterInternal(WebMvcMetricsFilter.java:97) at org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:119) at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:189) at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:162) at org.springframework.web.filter.CharacterEncodingFilter.doFilterInternal(CharacterEncodingFilter.java:201) at org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:119) at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:189) at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:162) at org.apache.catalina.core.StandardWrapperValve.invoke(StandardWrapperValve.java:197) at org.apache.catalina.core.StandardContextValve.invoke(StandardContextValve.java:97) at org.apache.catalina.authenticator.AuthenticatorBase.invoke(AuthenticatorBase.java:540) at org.apache.catalina.core.StandardHostValve.invoke(StandardHostValve.java:135) at org.apache.catalina.valves.ErrorReportValve.invoke(ErrorReportValve.java:92) at org.apache.catalina.core.StandardEngineValve.invoke(StandardEngineValve.java:78) at 
org.apache.catalina.connector.CoyoteAdapter.service(CoyoteAdapter.java:357) at org.apache.coyote.http11.Http11Processor.service(Http11Processor.java:382) at org.apache.coyote.AbstractProcessorLight.process(AbstractProcessorLight.java:65) at org.apache.coyote.AbstractProtocol$ConnectionHandler.process(AbstractProtocol.java:895) at org.apache.tomcat.util.net.NioEndpoint$SocketProcessor.doRun(NioEndpoint.java:1722) at org.apache.tomcat.util.net.SocketProcessorBase.run(SocketProcessorBase.java:49) at org.apache.tomcat.util.threads.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1191) at org.apache.tomcat.util.threads.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:659) at org.apache.tomcat.util.threads.TaskThread$WrappingRunnable.run(TaskThread.java:61) at java.lang.Thread.run(Thread.java:748)

Caused by: java.lang.RuntimeException: hbase-default.xml file seems to be for an older version of HBase (2.1.0-cdh6.3.2), this version is 2.2.3 at org.apache.flink.hbase.shaded.org.apache.hadoop.hbase.HBaseConfiguration.checkDefaultsVersion(HBaseConfiguration.java:74) at org.apache.flink.hbase.shaded.org.apache.hadoop.hbase.HBaseConfiguration.addHbaseResources(HBaseConfiguration.java:84) at org.apache.flink.hbase.shaded.org.apache.hadoop.hbase.HBaseConfiguration.create(HBaseConfiguration.java:98) at org.apache.flink.connector.hbase.util.HBaseConfigurationUtil.getHBaseConfiguration(HBaseConfigurationUtil.java:49) at org.apache.flink.connector.hbase.options.HBaseOptions.getHBaseConfiguration(HBaseOptions.java:192) at org.apache.flink.connector.hbase2.HBase2DynamicTableFactory.createDynamicTableSource(HBase2DynamicTableFactory.java:79) at org.apache.flink.table.factories.FactoryUtil.createTableSource(FactoryUtil.java:128) ... 175 more

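This error is not caused by a mistake in the SQL but by a wrong dependency version: the `hbase-default.xml` that got loaded declares HBase 2.1.0-cdh6.3.2, while the HBase classes bundled in the Flink connector are 2.2.3. The trace also shows that it is thrown inside the platform's backend while it checks the SQL (via `TaskManagerController.checkSql` and Dinky's `explainSql`), so the failure happens in the backend process rather than on the Flink cluster. I can share some experience with this one: the platform here is a data governance platform developed by company xx, and one of its modules is built on top of Dinky.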
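To see which jar actually supplies the stale `hbase-default.xml` reported in the last `Caused by` line, a minimal shell sketch like the one below can help. As far as I know, the check comes from HBase's `HBaseConfiguration.checkDefaultsVersion`, which compares the `hbase.defaults.for.version` property of the `hbase-default.xml` found on the classpath with the version of the HBase classes that are running. The sketch assumes `unzip` is installed and that the `<value>` line directly follows the `<name>` line, as in the stock file; the function names and the directory you pass in are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: report which jars in a directory ship an hbase-default.xml and
# which hbase.defaults.for.version each one declares.
# Assumptions: `unzip` is available; function names are hypothetical.

# Read hbase-default.xml content on stdin and print the <value> on the line
# right after <name>hbase.defaults.for.version</name>.
hbase_defaults_version() {
  sed -n '/<name>hbase\.defaults\.for\.version<\/name>/{n;s/.*<value>\(.*\)<\/value>.*/\1/p;}'
}

# Scan every *.jar in the given directory (one level, no recursion).
scan_hbase_defaults() {
  local dir="$1" jar ver
  for jar in "$dir"/*.jar; do
    [ -e "$jar" ] || continue   # no jars at all: the glob stays literal
    # `unzip -l` lists the entries; hbase-common keeps the file at the jar root.
    if unzip -l "$jar" 2>/dev/null | grep -q ' hbase-default\.xml$'; then
      ver="$(unzip -p "$jar" hbase-default.xml | hbase_defaults_version)"
      printf '%s\t%s\n' "$(basename "$jar")" "${ver:-unknown}"
    fi
  done
}
```

Usage would be something like `scan_hbase_defaults /path/to/backend/lib` (placeholder path). If the same directory also holds the Flink HBase connector jar, it may be listed too with its own version, which makes the mismatch easy to spot.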

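The platform's backend ships with the HBase dependency versions hard-coded. When you run into a situation like this, you need to talk to the project manager; at this point it takes not only technical skill but also the soft skill of communication.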

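Of course, I only modified the platform backend's dependency jars after getting permission: I uploaded jars of the correct version and then restarted the backend. Remember: never do this without approval from the project manager or whoever is in charge!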

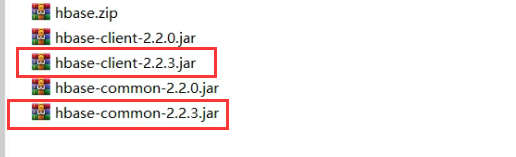

First, I backed up the original jars:

mv hbase-client-2.1.0-cdh6.3.2.jar hbase-client-2.1.0-cdh6.3.2.jar.bak
mv hbase-common-2.1.0-cdh6.3.2.jar hbase-common-2.1.0-cdh6.3.2.jar.bak
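If several jars need to be set aside, the same backup step can be wrapped in a small helper. This is a hypothetical sketch, not part of the platform: for each artifact name given, it renames `<artifact>-<version>.jar` to `<artifact>-<version>.jar.bak` in the given directory, the same way the two `mv` commands do, and refuses to overwrite an existing backup.

```shell
#!/usr/bin/env bash
# Sketch: back up <dir>/<artifact>-<version>.jar as .jar.bak for each artifact.
# Hypothetical helper; the directory and version are whatever you pass in.
backup_jars() {
  local dir="$1" version="$2" artifact jar
  shift 2
  for artifact in "$@"; do
    jar="$dir/$artifact-$version.jar"
    if [ ! -e "$jar" ]; then
      echo "missing: $jar" >&2
    elif [ -e "$jar.bak" ]; then
      # Keep the first backup intact instead of overwriting it.
      echo "skip: $jar.bak already exists" >&2
    else
      mv -- "$jar" "$jar.bak" && echo "backed up: $artifact-$version.jar"
    fi
  done
}
```

For the case above this would be `backup_jars /path/to/backend/lib 2.1.0-cdh6.3.2 hbase-client hbase-common`, where the path is a placeholder for the backend's actual lib directory.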

Then upload the jars of the matching version and restart the data governance platform's backend.
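Before restarting, a quick check can catch a half-finished swap. This is a minimal sketch, assuming the jars follow the `hbase-<module>-<version>.jar` naming and that the target version is 2.2.3, the HBase version the error message reports for the connector; the function name is hypothetical. It fails if any active (non-`.bak`) jar of the old version remains, or if no jar of the new version has been uploaded.

```shell
#!/usr/bin/env bash
# Sketch: sanity-check a lib directory before restarting the backend.
# Usage: check_hbase_jars <dir> <old_version> <new_version>
# Returns non-zero and explains why on stderr if the swap looks incomplete.
check_hbase_jars() {
  local dir="$1" old="$2" new="$3" jar status=0 found=0
  # Old-version jars still ending in .jar would stay on the classpath.
  for jar in "$dir"/hbase-*-"$old".jar; do
    [ -e "$jar" ] || continue
    echo "still active: $(basename "$jar")" >&2
    status=1
  done
  # At least one new-version hbase jar should be present.
  for jar in "$dir"/hbase-*-"$new".jar; do
    if [ -e "$jar" ]; then found=1; fi
  done
  if [ "$found" -eq 0 ]; then
    echo "no hbase-*-$new.jar found in $dir" >&2
    status=1
  fi
  return "$status"
}
```

Something like `check_hbase_jars /path/to/backend/lib 2.1.0-cdh6.3.2 2.2.3 && echo "ok to restart"` (placeholder path) would then gate the restart.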