openEuler: Building a Highly Available Kubernetes 1.31 Cluster from Binaries with the Docker Container Runtime


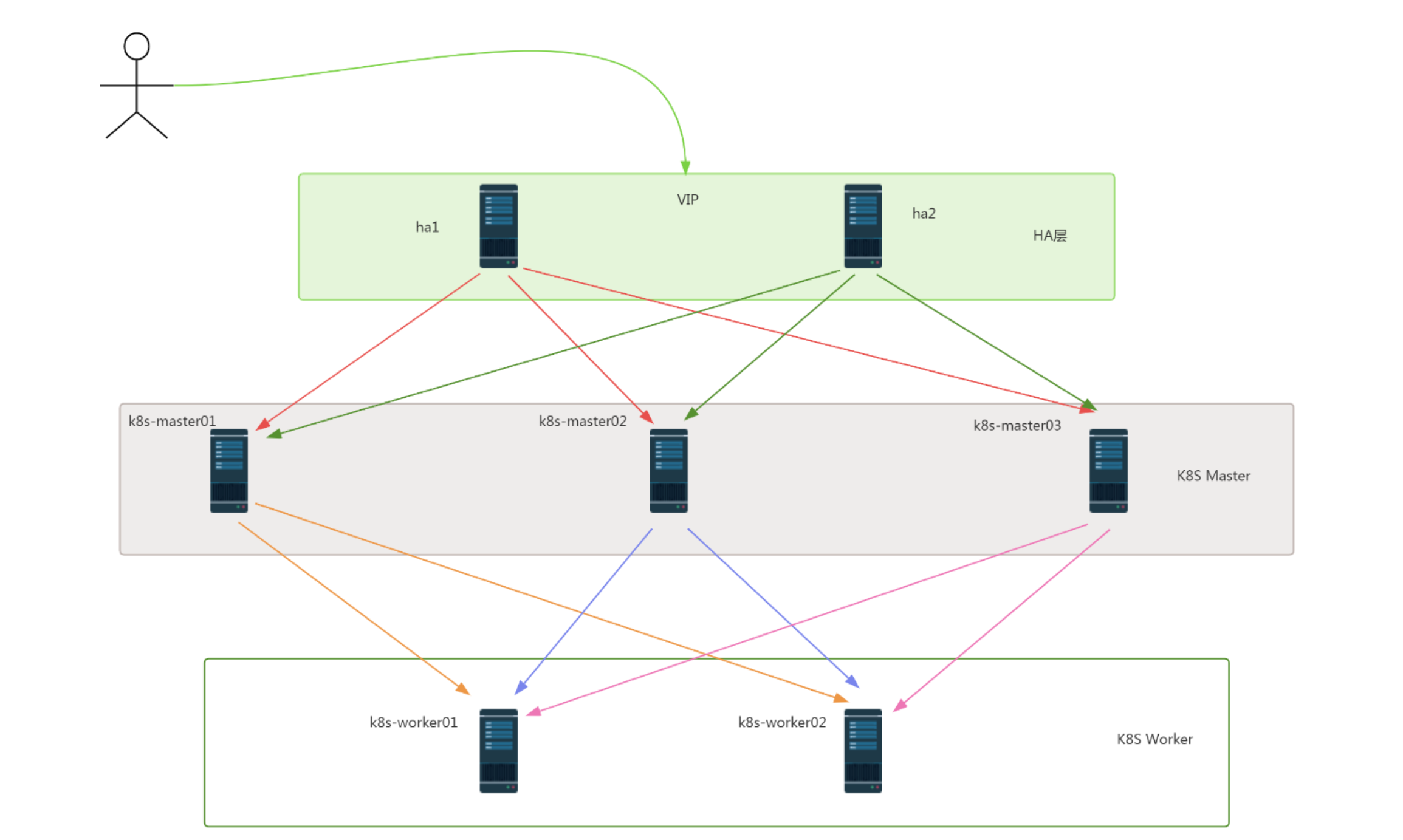

1. Kubernetes Cluster Architecture

2. Kubernetes Cluster Host Configuration

2.1 Host Operating System

| No. | Operating system and version | Notes |
|---|---|---|
| 1 | openEuler 22.03 (LTS-SP1) | |

2.2 Host Software and Hardware Configuration

| Item | CPU | Memory | Disk | Role | Hostname | Software |
|---|---|---|---|---|---|---|
| Value | 8C | 8G | 1024GB | master | k8s-master01 | kube-apiserver, kubectl, kube-controller-manager, kube-scheduler, haproxy, keepalived |
| Value | 8C | 8G | 1024GB | master | k8s-master02 | kube-apiserver, kubectl, kube-controller-manager, kube-scheduler, haproxy, keepalived |
| Value | 8C | 8G | 1024GB | master | k8s-master03 | kube-apiserver, kubectl, kube-controller-manager, kube-scheduler, haproxy |
| Value | 8C | 8G | 1024GB | worker(node) | k8s-worker01 | kubelet, kube-proxy, Docker-ce, cri-dockerd |
| Value | 8C | 8G | 1024GB | worker(node) | k8s-worker02 | kubelet, kube-proxy, Docker-ce, cri-dockerd |

2.3 Host IP Address Allocation

| No. | Hostname | IP Address |
|---|---|---|
| 1 | k8s-master01 | 10.0.0.40/24 |
| 2 | k8s-master02 | 10.0.0.41/24 |
| 3 | k8s-master03 | 10.0.0.42/24 |
| 4 | k8s-worker01 | 10.0.0.43/24 |
| 5 | k8s-worker02 | 10.0.0.44/24 |
| 6 | lb (VIP) | 10.0.0.47/24 |

3. Kubernetes Cluster Host Preparation

3.1 Hostname Configuration

Required on all hosts.

# hostnamectl set-hostname XXX

3.3 Hostname and IP Address Resolution

Required on all hosts.

cat <<EOF>> /etc/hosts

10.0.0.40 k8s-master01

10.0.0.41 k8s-master02

10.0.0.42 k8s-master03

10.0.0.43 k8s-worker01

10.0.0.44 k8s-worker02

EOF
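Because `cat <<EOF >> /etc/hosts` appends on every run, repeating this step duplicates the entries. The following is a minimal sketch of an idempotent alternative. The function name `add_host_entry` and the `HOSTS_FILE` variable are illustrative assumptions and not part of the original procedure.

```shell
#!/bin/bash
# Hypothetical helper: append "IP NAME" to a hosts file only if NAME is not mapped yet.
# HOSTS_FILE defaults to /etc/hosts; point it at a scratch file to try the function safely.
HOSTS_FILE="${HOSTS_FILE:-/etc/hosts}"

add_host_entry() {
    local ip="$1" name="$2"
    # Look for NAME as a whole whitespace-delimited field on a non-comment line.
    if ! grep -Eq "^[^#]*[[:space:]]${name}([[:space:]]|\$)" "$HOSTS_FILE" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$HOSTS_FILE"
    fi
}
```

Calling `add_host_entry 10.0.0.40 k8s-master01` once per host would then give the same result no matter how often the step is repeated.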

3.4 Host Security Configuration

Required on all hosts.

3.4.1 Firewall Settings

# systemctl disable --now firewalld

# systemctl status firewalld

# Check the firewall state

firewall-cmd --state

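3.4.2 SELinux Settings

After changing the SELinux configuration, the host must be rebooted for the change to take effect.

- Disable temporarily

# setenforce 0

- Disable permanently

# sed -ri 's/SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

- Check the status

# sestatus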

3.5 System Time Synchronization

Required on all hosts.

# yum -y install ntpdate

# crontab -e

0 */1 * * * ntpdate time1.aliyun.com

# ntpdate time1.aliyun.com

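3.6 Install the IPVS Management Tools and Load Kernel Modules

Install on the Kubernetes cluster nodes only; other nodes do not need this.

# yum -y install ipvsadm ipset sysstat conntrack libseccomp

Configure how the IPVS modules are loaded

Add the modules to load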

# cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack

EOF

# Set permissions, run the script, and check that the modules are loaded

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack

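3.7 Linux Kernel Upgrade (optional; openEuler already ships a 5.x kernel)

This step may be applied on any host. The ELRepo package below is built for EL7 systems, so skip this section if the stock openEuler kernel is sufficient.

# Import the ELRepo GPG key

rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org

Install the ELRepo YUM repository

# yum -y install https://www.elrepo.org/elrepo-release-7.0-4.el7.elrepo.noarch.rpm

Install the kernel-lt package (ml is the latest stable mainline kernel, lt is the long-term support kernel)

# yum --enablerepo="elrepo-kernel" -y install kernel-lt.x86_64

Set the default grub2 boot entry to 0

# grub2-set-default 0

Regenerate the grub2 boot configuration

# grub2-mkconfig -o /boot/grub2/grub.cfg

Reboot after the update so that the upgraded kernel takes effect.

# reboot

After the reboot, verify that the running kernel is the upgraded version

# uname -r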

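3.8 Enable Kernel IP Forwarding and Bridge Filtering

Required on all hosts.

Configure the kernel parameters

With br_netfilter loaded, bridged IPv4 and IPv6 traffic is passed through iptables, which ensures that containers in the cluster can communicate properly.

Add the bridge filtering and IP forwarding configuration file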

# cat > /etc/sysctl.d/k8s.conf << EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

vm.swappiness = 0

EOF

Load the br_netfilter module, then apply the sysctl settings

# modprobe br_netfilter

# sysctl --system

# Check that the module is loaded

[root@node44 ~ ]# lsmod | grep br_netfilter

br_netfilter 32768 0

bridge 270336 1 br_netfilter
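To confirm that the values in /etc/sysctl.d/k8s.conf are actually active, each key can be compared with the live value under /proc/sys. The sketch below is one way to do that. The function name `check_sysctl_conf` and the `PROC_SYS_ROOT` override are illustrative assumptions, and the parser only handles simple single-value `key = value` lines like the ones in this file.

```shell
#!/bin/bash
# Hypothetical helper: compare each "key = value" line of a sysctl file
# with the live value under /proc/sys and report any mismatch.
# Assumes single-token values (as in k8s.conf above).
PROC_SYS_ROOT="${PROC_SYS_ROOT:-/proc/sys}"

check_sysctl_conf() {
    local conf="$1" key value path actual rc=0
    while IFS='=' read -r key value; do
        # Strip whitespace around the key and value.
        key="$(echo "$key" | tr -d '[:space:]')"
        value="$(echo "$value" | tr -d '[:space:]')"
        # Skip blank lines and comments.
        [ -z "$key" ] && continue
        case "$key" in \#*) continue ;; esac
        # net.ipv4.ip_forward maps to net/ipv4/ip_forward under /proc/sys.
        path="$PROC_SYS_ROOT/$(echo "$key" | tr '.' '/')"
        if [ ! -r "$path" ]; then
            echo "MISSING $key"
            rc=1
        else
            actual="$(tr -d '[:space:]' < "$path")"
            if [ "$actual" = "$value" ]; then
                echo "OK $key=$actual"
            else
                echo "MISMATCH $key expected=$value actual=$actual"
                rc=1
            fi
        fi
    done < "$conf"
    return "$rc"
}
```

Running `check_sysctl_conf /etc/sysctl.d/k8s.conf` on a node would print one line per key. A `MISSING` line for the net.bridge keys usually means br_netfilter is not loaded.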

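3.9 Disable Swap

The permanent change takes effect after a reboot. If you do not reboot, turn swap off temporarily with swapoff -a.

# Check current swap usage

free -h

# Disable swap temporarily

swapoff -a

# Disable swap permanently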

sed -i.bak '/swap/s/^/#/' /etc/fstab
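The sed expression above prefixes every line containing "swap" with `#`, including lines that are already commented, so running it twice yields `##`. A minimal sketch of a re-runnable variant follows. The function name `disable_swap_entries` is an illustrative assumption, and the pattern assumes the usual whitespace-separated fstab layout with `swap` as the filesystem type.

```shell
#!/bin/bash
# Hypothetical helper: comment out active swap entries in an fstab-style file.
# Lines that are already comments are left untouched, so re-running is harmless.
disable_swap_entries() {
    local fstab="${1:-/etc/fstab}"
    # Match non-comment lines that contain "swap" as a whole whitespace-delimited field.
    sed -E -i.bak '/^[[:space:]]*[^#[:space:]].*[[:space:]]swap[[:space:]]/ s/^/#/' "$fstab"
}
```

As with the original command, a `.bak` copy of the file is kept next to it.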

3.10 Passwordless SSH Between Cluster Nodes

Generate the key pair on k8s-master01 only, then copy the public key to the other nodes.

[root@k8s-master01 ~]# ssh-keygen -t rsa -b 4096 -N "" -f ~/.ssh/id_rsa <<< y

[root@k8s-master01 ~]# ssh-copy-id root@k8s-master02

[root@k8s-master01 ~]# ssh-copy-id root@k8s-master03

[root@k8s-master01 ~]# ssh-copy-id root@k8s-worker01

[root@k8s-master01 ~]# ssh-copy-id root@k8s-worker02

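4. Load Balancer Deployment: HAProxy + Keepalived

4.1 Install HAProxy and Keepalived

Run on the ha1 and ha2 nodes (k8s-master01 and k8s-master02 in this layout).

# yum -y install haproxy keepalived

4.2 Prepare the HAProxy Configuration File

The file is identical on every master node, so it can simply be copied.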

cat > /etc/haproxy/haproxy.cfg << "EOF"

global

maxconn 2000

ulimit-n 16384

log 127.0.0.1 local0 err

stats timeout 30s

defaults

log global

mode http

option httplog

timeout connect 5000

timeout client 50000

timeout server 50000

timeout http-request 15s

timeout http-keep-alive 15s

frontend monitor-in

bind *:33305

mode http

option httplog

monitor-uri /monitor

frontend k8s-master

bind 0.0.0.0:8443

bind 127.0.0.1:8443

mode tcp

option tcplog

tcp-request inspect-delay 5s

default_backend k8s-master

backend k8s-master

mode tcp

option tcplog

option tcp-check

balance roundrobin

default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

server k8s-master01 10.0.0.40:6443 check

server k8s-master02 10.0.0.41:6443 check

server k8s-master03 10.0.0.42:6443 check

EOF

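4.3 Prepare the Keepalived Configuration File and Health Check Script

Note: the MASTER and BACKUP nodes use different configurations. Set interface to the actual NIC name on each host; the configurations here use ens32, while the sample ip a s output in 4.4 shows ens33.

4.3.1 ha1 Node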

cat > /etc/keepalived/keepalived.conf << "EOF"

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

script_user root

enable_script_security

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 5

weight -5

fall 2

rise 1

}

vrrp_instance VI_1 {

state MASTER

interface ens32

mcast_src_ip 10.0.0.40

virtual_router_id 51

priority 100

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

10.0.0.47

}

track_script {

chk_apiserver

}

}

EOF

# cat > /etc/keepalived/check_apiserver.sh <<"EOF"

#!/bin/bash

err=0

for k in $(seq 1 3)

do

check_code=$(pgrep haproxy)

if [[ $check_code == "" ]]; then

err=$(expr $err + 1)

sleep 1

continue

else

err=0

break

fi

done

if [[ $err != "0" ]]; then

echo "systemctl stop keepalived"

/usr/bin/systemctl stop keepalived

exit 1

else

exit 0

fi

EOF

# chmod +x /etc/keepalived/check_apiserver.sh

4.3.2 ha2 Node

cat > /etc/keepalived/keepalived.conf << "EOF"

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

script_user root

enable_script_security

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 5

weight -5

fall 2

rise 1

}

vrrp_instance VI_1 {

state BACKUP

interface ens32

mcast_src_ip 10.0.0.41

virtual_router_id 51

priority 99

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

10.0.0.47

}

track_script {

chk_apiserver

}

}

EOF

cat > /etc/keepalived/check_apiserver.sh <<"EOF"

#!/bin/bash

err=0

for k in $(seq 1 3)

do

check_code=$(pgrep haproxy)

if [[ $check_code == "" ]]; then

err=$(expr $err + 1)

sleep 1

continue

else

err=0

break

fi

done

if [[ $err != "0" ]]; then

echo "systemctl stop keepalived"

/usr/bin/systemctl stop keepalived

exit 1

else

exit 0

fi

EOF

chmod +x /etc/keepalived/check_apiserver.sh

4.4 Start and Verify the HAProxy and Keepalived Services

[root@ha1 ~]# systemctl enable --now haproxy keepalived

[root@ha1 ~]# systemctl is-active haproxy.service keepalived.service

[root@ha1 ~]# ip a s

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:aa:5c:b4 brd ff:ff:ff:ff:ff:ff

inet 10.0.0.40/24 brd 10.0.0.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 10.0.0.47/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::6305:76c5:ac2c:894e/64 scope link noprefixroute

valid_lft forever preferred_lft forever

5. etcd Database Deployment

Unless noted otherwise, run the following steps on k8s-master01.

5.1 Create the Working Directory

[root@k8s-master01 ~]# mkdir -p /data/k8s-work && cd /data/k8s-work

5.2 Get the cfssl Tools

Notes:

cfssl is a PKI/TLS toolkit written in Go and open-sourced by CloudFlare. Its main programs are:

- cfssl: the CFSSL command-line tool

- cfssljson: takes the JSON output of cfssl and writes certificates, keys, CSRs, and bundles to files

# Download cfssl

[root@k8s-master01 k8s-work]# wget https://github.com/cloudflare/cfssl/releases/download/v1.6.4/cfssl_1.6.4_linux_amd64

[root@k8s-master01 k8s-work]# chmod +x cfssl_1.6.4_linux_amd64

[root@k8s-master01 k8s-work]# mv cfssl_1.6.4_linux_amd64 /usr/local/bin/cfssl

# Download cfssljson

[root@k8s-master01 k8s-work]# wget https://github.com/cloudflare/cfssl/releases/download/v1.6.4/cfssljson_1.6.4_linux_amd64

[root@k8s-master01 k8s-work]# chmod +x cfssljson_1.6.4_linux_amd64

[root@k8s-master01 k8s-work]# mv cfssljson_1.6.4_linux_amd64 /usr/local/bin/cfssljson

5.3 Create the CA Certificate

5.3.1 Configure the CA Certificate Request File

[root@k8s-master01 k8s-work]# cat > ca-csr.json <<"EOF"

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "kubemsb",

"OU": "CN"

}

],

"ca": {

"expiry": "87600h"

}

}

EOF

5.3.2 Create the CA Certificate

[root@k8s-master01 k8s-work]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca

2024/09/11 15:11:42 [INFO] generating a new CA key and certificate from CSR

2024/09/11 15:11:42 [INFO] generate received request

2024/09/11 15:11:42 [INFO] received CSR

2024/09/11 15:11:42 [INFO] generating key: rsa-2048

2024/09/11 15:11:42 [INFO] encoded CSR

2024/09/11 15:11:42 [INFO] signed certificate with serial number 331363762924896784010536703605709958960618863677

[root@k8s-master01 k8s-work]# ls

ca.csr ca-csr.json ca-key.pem ca.pem

5.3.3 CA Signing Policy

[root@k8s-master01 k8s-work]# cat > ca-config.json <<"EOF"

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

EOF

[root@k8s-master01 k8s-work]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem

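server auth: clients can use this CA to verify certificates presented by servers

client auth: servers can use this CA to verify certificates presented by clients

5.4 Create the etcd Certificate

5.4.1 Configure the etcd Certificate Request File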

[root@k8s-master01 k8s-work]# cat > etcd-csr.json <<"EOF"

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"10.0.0.40",

"10.0.0.41",

"10.0.0.42"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "kubemsb",

"OU": "CN"

}]

}

EOF

[root@k8s-master01 k8s-work]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem etcd-csr.json

5.4.2 Generate the etcd Certificate

[root@k8s-master01 k8s-work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

2024/09/11 15:12:49 [INFO] generate received request

2024/09/11 15:12:49 [INFO] received CSR

2024/09/11 15:12:49 [INFO] generating key: rsa-2048

2024/09/11 15:12:49 [INFO] encoded CSR

2024/09/11 15:12:49 [INFO] signed certificate with serial number 28537756914902920037328158920319473953425853689

[root@k8s-master01 k8s-work]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem etcd.csr etcd-csr.json etcd-key.pem etcd.pem

5.5 etcd Cluster Deployment

5.5.1 Download the etcd Package

[root@k8s-master01 k8s-work]# wget https://github.com/etcd-io/etcd/releases/download/v3.5.16/etcd-v3.5.16-linux-amd64.tar.gz

[root@k8s-master01 k8s-work]# tar xf etcd-v3.5.16-linux-amd64.tar.gz

[root@k8s-master01 k8s-work]# cp -p etcd-v3.5.16-linux-amd64/etcd* /usr/local/bin/

5.5.2 Distribute the etcd Binaries

[root@k8s-master01 k8s-work]# scp -p etcd-v3.5.16-linux-amd64/etcd* k8s-master02:/usr/local/bin/

[root@k8s-master01 k8s-work]# scp -p etcd-v3.5.16-linux-amd64/etcd* k8s-master03:/usr/local/bin/

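5.5.3 Create the etcd Configuration Files

5.5.3.1 Create the etcd Configuration File

Required on all master nodes.

[root@k8s-master01 ~]# mkdir /etc/etcd

Notes:

ETCD_NAME: member name, unique within the cluster

ETCD_DATA_DIR: data directory

ETCD_LISTEN_PEER_URLS: listen address for peer (cluster) traffic

ETCD_LISTEN_CLIENT_URLS: listen address for client traffic

ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address advertised to the cluster

ETCD_ADVERTISE_CLIENT_URLS: client address advertised to clients

ETCD_INITIAL_CLUSTER: addresses of all cluster members

ETCD_INITIAL_CLUSTER_TOKEN: cluster token

ETCD_INITIAL_CLUSTER_STATE: state when joining; new creates a new cluster, existing joins an existing one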
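The per-node files that follow differ only in the member name and the node IP. As a hedged sketch, they could be rendered from one template instead of being typed three times. The function name `render_etcd_conf` and the `CLUSTER` variable are illustrative assumptions; the keys and values mirror the files in this section.

```shell
#!/bin/bash
# Hypothetical helper: print an etcd.conf for one member.
# Arguments: member name, member IP, shared initial-cluster string.
render_etcd_conf() {
    local name="$1" ip="$2" cluster="$3"
    cat <<EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379,http://127.0.0.1:2379"

#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="${cluster}"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Same member list on every node; only the name and IP arguments change.
CLUSTER="etcd1=https://10.0.0.40:2380,etcd2=https://10.0.0.41:2380,etcd3=https://10.0.0.42:2380"
# Example (not executed here): render_etcd_conf etcd2 10.0.0.41 "$CLUSTER" > /etc/etcd/etcd.conf
```

Keeping the member list in one variable reduces the chance that ETCD_INITIAL_CLUSTER drifts between nodes.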

k8s-master01

[root@k8s-master01 ~]# cat > /etc/etcd/etcd.conf << "EOF"

#[Member]

ETCD_NAME="etcd1"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://10.0.0.40:2380"

ETCD_LISTEN_CLIENT_URLS="https://10.0.0.40:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.0.0.40:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://10.0.0.40:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://10.0.0.40:2380,etcd2=https://10.0.0.41:2380,etcd3=https://10.0.0.42:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

k8s-master02

[root@k8s-master02 ~]# cat > /etc/etcd/etcd.conf <<"EOF"

#[Member]

ETCD_NAME="etcd2"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://10.0.0.41:2380"

ETCD_LISTEN_CLIENT_URLS="https://10.0.0.41:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.0.0.41:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://10.0.0.41:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://10.0.0.40:2380,etcd2=https://10.0.0.41:2380,etcd3=https://10.0.0.42:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

k8s-master03

[root@k8s-master03 ~]# cat > /etc/etcd/etcd.conf << "EOF"

#[Member]

ETCD_NAME="etcd3"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://10.0.0.42:2380"

ETCD_LISTEN_CLIENT_URLS="https://10.0.0.42:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.0.0.42:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://10.0.0.42:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://10.0.0.40:2380,etcd2=https://10.0.0.41:2380,etcd3=https://10.0.0.42:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

5.5.3.2 Prepare the Certificate Files and Create the etcd Service Unit

Required on all etcd nodes.

mkdir -p /etc/etcd/ssl

mkdir -p /var/lib/etcd/default.etcd

Run on k8s-master01

Prepare the certificate files

cd /data/k8s-work

cp ca*.pem /etc/etcd/ssl

cp etcd*.pem /etc/etcd/ssl

- Distribute the certificates to the other master nodes

scp ca*.pem k8s-master02:/etc/etcd/ssl

scp etcd*.pem k8s-master02:/etc/etcd/ssl

scp ca*.pem k8s-master03:/etc/etcd/ssl

scp etcd*.pem k8s-master03:/etc/etcd/ssl

Prepare the service unit file

- k8s-master01

[root@k8s-master01 ~]# cat > /etc/systemd/system/etcd.service <<"EOF"

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=-/etc/etcd/etcd.conf

WorkingDirectory=/var/lib/etcd/

ExecStart=/usr/local/bin/etcd \

--cert-file=/etc/etcd/ssl/etcd.pem \

--key-file=/etc/etcd/ssl/etcd-key.pem \

--trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-cert-file=/etc/etcd/ssl/etcd.pem \

--peer-key-file=/etc/etcd/ssl/etcd-key.pem \

--peer-trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-client-cert-auth \

--client-cert-auth

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

- k8s-master02

[root@k8s-master02 ~]# cat > /etc/systemd/system/etcd.service <<"EOF"

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=-/etc/etcd/etcd.conf

WorkingDirectory=/var/lib/etcd/

ExecStart=/usr/local/bin/etcd \

--cert-file=/etc/etcd/ssl/etcd.pem \

--key-file=/etc/etcd/ssl/etcd-key.pem \

--trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-cert-file=/etc/etcd/ssl/etcd.pem \

--peer-key-file=/etc/etcd/ssl/etcd-key.pem \

--peer-trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-client-cert-auth \

--client-cert-auth

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

- k8s-master03

[root@k8s-master03 ~]# cat > /etc/systemd/system/etcd.service <<"EOF"

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=-/etc/etcd/etcd.conf

WorkingDirectory=/var/lib/etcd/

ExecStart=/usr/local/bin/etcd \

--cert-file=/etc/etcd/ssl/etcd.pem \

--key-file=/etc/etcd/ssl/etcd-key.pem \

--trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-cert-file=/etc/etcd/ssl/etcd.pem \

--peer-key-file=/etc/etcd/ssl/etcd-key.pem \

--peer-trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-client-cert-auth \

--client-cert-auth

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

5.5.4 Start the etcd Cluster

Run on all etcd nodes.

systemctl daemon-reload

systemctl enable --now etcd.service

systemctl status etcd

5.5.5 Verify the etcd Cluster State

[root@k8s-master01 ~]# etcdctl member list

b3e5838df5f510, started, etcd2, https://10.0.0.41:2380, https://10.0.0.41:2379, false

1f6c7bd87e562ef7, started, etcd1, https://10.0.0.40:2380, https://10.0.0.40:2379, false

a437554da4f2a14c, started, etcd3, https://10.0.0.42:2380, https://10.0.0.42:2379, false

- Show the etcd cluster members in table format

[root@k8s-master01 ~]# etcdctl member list -w table

+------------------+---------+-------+------------------------+------------------------+------------+

| ID | STATUS | NAME | PEER ADDRS | CLIENT ADDRS | IS LEARNER |

+------------------+---------+-------+------------------------+------------------------+------------+

| b3e5838df5f510 | started | etcd2 | https://10.0.0.41:2380 | https://10.0.0.41:2379 | false |

| 1f6c7bd87e562ef7 | started | etcd1 | https://10.0.0.40:2380 | https://10.0.0.40:2379 | false |

| a437554da4f2a14c | started | etcd3 | https://10.0.0.42:2380 | https://10.0.0.42:2379 | false |

+------------------+---------+-------+------------------------+------------------------+------------+

- Check the health of each node in the etcd cluster

[root@k8s-master01 ~]# ETCDCTL_API=3 /usr/local/bin/etcdctl \

--write-out=table \

--cacert=/etc/etcd/ssl/ca.pem \

--cert=/etc/etcd/ssl/etcd.pem \

--key=/etc/etcd/ssl/etcd-key.pem \

--endpoints=https://10.0.0.40:2379,https://10.0.0.41:2379,https://10.0.0.42:2379 \

endpoint health

+------------------------+--------+------------+-------+

| ENDPOINT | HEALTH | TOOK | ERROR |

+------------------------+--------+------------+-------+

| https://10.0.0.40:2379 | true | 8.052138ms | |

| https://10.0.0.41:2379 | true | 8.980788ms | |

| https://10.0.0.42:2379 | true | 9.045293ms | |

+------------------------+--------+------------+-------+

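Meaning of each column:

● ENDPOINT: address of the node in the etcd cluster.

● HEALTH: health of the node; true means the node is healthy, false means it is unhealthy.

● TOOK: time taken by the health check request.

● ERROR: error details, shown when a node is unhealthy.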

[root@k8s-master01 k8s-work]# ETCDCTL_API=3 /usr/local/bin/etcdctl \

--write-out=table \

--cacert=/etc/etcd/ssl/ca.pem \

--cert=/etc/etcd/ssl/etcd.pem \

--key=/etc/etcd/ssl/etcd-key.pem \

--endpoints=https://10.0.0.40:2379,https://10.0.0.41:2379,https://10.0.0.42:2379 \

endpoint status

Explanation of each part:

ETCDCTL_API=3: use API v3 of etcdctl.

/usr/local/bin/etcdctl: path to the etcdctl binary.

--write-out=table: print the result as a table.

--cacert=/etc/etcd/ssl/ca.pem: CA certificate used to verify the identity of the etcd cluster.

--cert=/etc/etcd/ssl/etcd.pem: client certificate used for authentication.

--key=/etc/etcd/ssl/etcd-key.pem: client private key used for authentication.

--endpoints=https://10.0.0.40:2379,https://10.0.0.41:2379,https://10.0.0.42:2379: list of etcd cluster endpoints.

endpoint status: show the status of each etcd node.

+------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |

+------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| https://10.0.0.40:2379 | 1f6c7bd87e562ef7 | 3.5.16 | 20 kB | true | false | 2 | 15 | 15 | |

| https://10.0.0.41:2379 | b3e5838df5f510 | 3.5.16 | 20 kB | false | false | 2 | 15 | 15 | |

| https://10.0.0.42:2379 | a437554da4f2a14c | 3.5.16 | 25 kB | false | false | 2 | 15 | 15 | |

+------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

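Meaning of each column:

ENDPOINT: address of the etcd node.

ID: unique identifier of the etcd member.

VERSION: etcd version running on the node.

DB SIZE: size of the etcd database.

IS LEADER: whether the node is the cluster leader; true means leader, false means follower.

RAFT TERM: current Raft election term, that is, the term number in which the current leader was elected.

RAFT INDEX: current index of the Raft log, showing how far the node has progressed in the log.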

6. Kubernetes Cluster Deployment

6.1 Download the Kubernetes Release Package

:::info

GitHub address:

https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG/CHANGELOG-1.31.md#downloads-for-v1310

:::

[root@k8s-master01 k8s-work]# wget https://dl.k8s.io/v1.31.0/kubernetes-server-linux-amd64.tar.gz

6.2 Install the Kubernetes Binaries

6.2.1 Install on k8s-master01

[root@k8s-master01 k8s-work]# tar xf kubernetes-server-linux-amd64.tar.gz

[root@k8s-master01 k8s-work]# ls

kubernetes-server-linux-amd64.tar.gz

kubernetes

[root@k8s-master01 k8s-work]# ls kubernetes

addons kubernetes-src.tar.gz LICENSES server

[root@k8s-master01 k8s-work]# ls kubernetes/server/

bin

[root@k8s-master01 k8s-work]# ls kubernetes/server/bin

apiextensions-apiserver kube-apiserver.tar kubectl-convert kube-proxy kube-scheduler.tar

kubeadm kube-controller-manager kubectl.docker_tag kube-proxy.docker_tag mounter

kube-aggregator kube-controller-manager.docker_tag kubectl.tar kube-proxy.tar

kube-apiserver kube-controller-manager.tar kubelet kube-scheduler

kube-apiserver.docker_tag kubectl kube-log-runner kube-scheduler.docker_tag

[root@k8s-master01 k8s-work]# cd kubernetes/server/bin/

[root@k8s-master01 bin]# ls

apiextensions-apiserver kube-apiserver.tar kubectl-convert kube-proxy kube-scheduler.tar

kubeadm kube-controller-manager kubectl.docker_tag kube-proxy.docker_tag mounter

kube-aggregator kube-controller-manager.docker_tag kubectl.tar kube-proxy.tar

kube-apiserver kube-controller-manager.tar kubelet kube-scheduler

kube-apiserver.docker_tag kubectl kube-log-runner kube-scheduler.docker_tag

[root@k8s-master01 bin]# cp kube-apiserver kube-controller-manager kube-scheduler kubectl /usr/local/bin/

6.2.2 Install on k8s-master02

[root@k8s-master01 bin]# scp kube-apiserver kube-controller-manager kube-scheduler kubectl kubelet kube-proxy k8s-master02:/usr/local/bin/

6.2.3 Install on k8s-master03

[root@k8s-master01 bin]# scp kube-apiserver kube-controller-manager kube-scheduler kubectl kubelet kube-proxy k8s-master03:/usr/local/bin/

6.2.4 Install on k8s-worker01 and k8s-worker02

[root@k8s-master01 bin]# for i in k8s-worker01 k8s-worker02;do scp kubelet kube-proxy $i:/usr/local/bin/;done

6.3 Create Directories

Run on all Kubernetes master nodes.

mkdir -p /etc/kubernetes/

mkdir -p /etc/kubernetes/ssl

mkdir -p /var/log/kubernetes

6.4 kube-apiserver Deployment

6.4.1 Create the apiserver Certificate Request File

cd /data/k8s-work

[root@k8s-master01 k8s-work]# cat > kube-apiserver-csr.json << "EOF"

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"10.0.0.40",

"10.0.0.41",

"10.0.0.42",

"10.0.0.43",

"10.0.0.44",

"10.0.0.47",

"10.96.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "kubemsb",

"OU": "CN"

}

]

}

EOF

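Notes:

If the hosts field is not empty, it must list the IPs (including the VIP) or domain names authorized to use this certificate.

Because the certificate is used across the cluster, include the IPs of all nodes; adding a few reserved IPs makes later expansion easier.

Also include the first IP of the service network (normally the first IP of the range given to kube-apiserver by service-cluster-ip-range, for example 10.96.0.1).

About IPv6 addresses:

IPv6 addresses can be appended directly after the IPv4 addresses, and commonly used domain names can be added as well.

A separate CA certificate can also be created.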
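The "first IP of the service network" is the network address of the service CIDR plus one. A small sketch of that arithmetic follows, so the value placed in hosts can be checked against whatever service-cluster-ip-range is chosen. The function name `first_service_ip` is an illustrative assumption, and the sketch handles IPv4 only.

```shell
#!/bin/bash
# Hypothetical helper: print the first usable address of an IPv4 CIDR,
# i.e. the network address plus one.
first_service_ip() {
    local cidr="$1" ip prefix n mask old_ifs
    ip="${cidr%/*}"         # part before the slash
    prefix="${cidr#*/}"     # prefix length after the slash
    old_ifs="$IFS"
    IFS=.
    set -- $ip              # split the dotted quad into $1..$4
    IFS="$old_ifs"
    # Pack the four octets into one integer.
    n=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
    # Build the netmask for the prefix length and keep it to 32 bits.
    mask=$(( (4294967295 << (32 - prefix)) & 4294967295 ))
    # Network address plus one.
    n=$(( (n & mask) + 1 ))
    echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}
```

With the range used later in this guide, `first_service_ip 10.96.0.0/16` prints 10.96.0.1, which matches the entry in the hosts list above.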

[root@k8s-master01 k8s-work]# ls

kube-apiserver-csr.json

6.4.2 Generate the apiserver Certificate

[root@k8s-master01 k8s-work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-apiserver-csr.json | cfssljson -bare kube-apiserver

2024/09/11 15:44:09 [INFO] generate received request

2024/09/11 15:44:09 [INFO] received CSR

2024/09/11 15:44:09 [INFO] generating key: rsa-2048

2024/09/11 15:44:09 [INFO] encoded CSR

2024/09/11 15:44:09 [INFO] signed certificate with serial number 398546308011308782635416485447418910269925946219

Verify as follows:

[root@k8s-master01 k8s-work]# ls -l kube-apiserver*

kube-apiserver-csr.json

kube-apiserver-key.pem

kube-apiserver.pem

kube-apiserver.csr

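6.4.3 Create the Token Required for TLS Bootstrapping

TLS Bootstrapping: once TLS authentication is enabled on the master apiserver, kubelet and kube-proxy on each node must use valid certificates signed by the CA to communicate with kube-apiserver. With many nodes, issuing these client certificates takes considerable work and makes scaling the cluster more complex. To simplify the process, Kubernetes introduced the TLS bootstrapping mechanism to issue client certificates automatically: kubelet requests a certificate from the apiserver as a low-privileged user, and the kubelet certificate is signed dynamically through the apiserver. This approach is strongly recommended on nodes. It is currently used mainly for kubelet; kube-proxy still uses a certificate that we issue centrally.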

[root@k8s-master01 k8s-work]# cat > token.csv << EOF

$(head -c 16 /dev/urandom | od -An -t x | tr -d ' '),kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

[root@k8s-master01 k8s-work]# ls token.csv

token.csv

[root@k8s-master01 k8s-work]# cat token.csv

d1c7c9c3ce593b8b3c2cf40a542d43e2,kubelet-bootstrap,10001,"system:kubelet-bootstrap"
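Each line of token.csv has the form token,user,uid,"group". Below is a hedged sketch for generating a token in an equivalent way and checking a line before it is distributed. The function names `new_bootstrap_token` and `token_line_is_valid` are illustrative assumptions, and the check is tied to the user, UID, and group used in this guide.

```shell
#!/bin/bash
# Generate 16 random bytes as 32 hex characters (equivalent to the command above).
# od may insert spaces and newlines, so both are stripped.
new_bootstrap_token() {
    head -c 16 /dev/urandom | od -An -tx1 | tr -d ' \n'
}

# Hypothetical check: succeed only if the line has a 32-character lowercase hex token
# followed by the user, UID, and group used in this guide.
token_line_is_valid() {
    echo "$1" | grep -Eq '^[0-9a-f]{32},kubelet-bootstrap,10001,"system:kubelet-bootstrap"$'
}
```

For example, `token_line_is_valid "$(cat token.csv)"` returns success for a single-line file like the one shown above.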

6.4.4 Create the apiserver Configuration File

- k8s-master01

cat > /etc/kubernetes/kube-apiserver.conf << "EOF"

KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \

--anonymous-auth=false \

--bind-address=10.0.0.40 \

--advertise-address=10.0.0.40 \

--authorization-mode=Node,RBAC \

--runtime-config=api/all=true \

--enable-bootstrap-token-auth \

--service-cluster-ip-range=10.96.0.0/16 \

--token-auth-file=/etc/kubernetes/token.csv \

--service-node-port-range=30000-32767 \

--tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \

--tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--client-ca-file=/etc/kubernetes/ssl/ca.pem \

--kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem \

--kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-issuer=api \

--etcd-cafile=/etc/etcd/ssl/ca.pem \

--etcd-certfile=/etc/etcd/ssl/etcd.pem \

--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \

--etcd-servers=https://10.0.0.40:2379,https://10.0.0.41:2379,https://10.0.0.42:2379 \

--allow-privileged=true \

--apiserver-count=3 \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/var/log/kube-apiserver-audit.log \

--event-ttl=1h \

--v=4"

EOF

[root@k8s-master01 k8s-work]# ls /etc/kubernetes/

kube-apiserver.conf ssl

- k8s-master02

cat > /etc/kubernetes/kube-apiserver.conf << "EOF"

KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \

--anonymous-auth=false \

--bind-address=10.0.0.41 \

--secure-port=6443 \

--advertise-address=10.0.0.41 \

--authorization-mode=Node,RBAC \

--runtime-config=api/all=true \

--enable-bootstrap-token-auth \

--service-cluster-ip-range=10.96.0.0/16 \

--token-auth-file=/etc/kubernetes/token.csv \

--service-node-port-range=30000-32767 \

--tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \

--tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--client-ca-file=/etc/kubernetes/ssl/ca.pem \

--kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem \

--kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-issuer=api \

--etcd-cafile=/etc/etcd/ssl/ca.pem \

--etcd-certfile=/etc/etcd/ssl/etcd.pem \

--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \

--etcd-servers=https://10.0.0.40:2379,https://10.0.0.41:2379,https://10.0.0.42:2379 \

--allow-privileged=true \

--apiserver-count=3 \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/var/log/kube-apiserver-audit.log \

--event-ttl=1h \

--v=4"

EOF

- k8s-master03

cat > /etc/kubernetes/kube-apiserver.conf << "EOF"

KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \

--anonymous-auth=false \

--bind-address=10.0.0.42 \

--secure-port=6443 \

--advertise-address=10.0.0.42 \

--authorization-mode=Node,RBAC \

--runtime-config=api/all=true \

--enable-bootstrap-token-auth \

--service-cluster-ip-range=10.96.0.0/16 \

--token-auth-file=/etc/kubernetes/token.csv \

--service-node-port-range=30000-32767 \

--tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \

--tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--client-ca-file=/etc/kubernetes/ssl/ca.pem \

--kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem \

--kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-issuer=api \

--etcd-cafile=/etc/etcd/ssl/ca.pem \

--etcd-certfile=/etc/etcd/ssl/etcd.pem \

--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \

--etcd-servers=https://10.0.0.40:2379,https://10.0.0.41:2379,https://10.0.0.42:2379 \

--allow-privileged=true \

--apiserver-count=3 \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/var/log/kube-apiserver-audit.log \

--event-ttl=1h \

--v=4"

EOF

6.4.5 Create the apiserver systemd Unit File

Run on all master nodes.

[root@k8s-master01 k8s-work]# cat > /etc/systemd/system/kube-apiserver.service << "EOF"

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=etcd.service

Wants=etcd.service

[Service]

EnvironmentFile=-/etc/kubernetes/kube-apiserver.conf

ExecStart=/usr/local/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

RestartSec=5

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

[root@k8s-master01 k8s-work]# ls /etc/systemd/system/

kube-apiserver.service

6.4.6 Distribute the Certificates and Token File to the Master Nodes

[root@k8s-master01 k8s-work]# cp ca*.pem kube-apiserver*.pem /etc/kubernetes/ssl/

[root@k8s-master01 k8s-work]# cp token.csv /etc/kubernetes/

- Send to k8s-master02

[root@k8s-master01 k8s-work]# scp ca*.pem kube-apiserver*.pem k8s-master02:/etc/kubernetes/ssl/

[root@k8s-master01 k8s-work]# scp token.csv k8s-master02:/etc/kubernetes/

- Send to k8s-master03

[root@k8s-master01 k8s-work]# scp ca*.pem kube-apiserver*.pem k8s-master03:/etc/kubernetes/ssl/

[root@k8s-master01 k8s-work]# scp token.csv k8s-master03:/etc/kubernetes/

6.4.7 Start the apiserver Service

Run on all master nodes.

systemctl daemon-reload

systemctl enable --now kube-apiserver

systemctl status kube-apiserver

6.4.8 Verify Access to the apiserver

[root@k8s-master01 k8s-work]# curl --insecure https://10.0.0.40:6443/

{

"kind": "Status",

"apiVersion": "v1",

"metadata": {},

"status": "Failure",

"message": "Unauthorized",

"reason": "Unauthorized",

"code": 401

}

[root@k8s-master01 k8s-work]# curl --insecure https://10.0.0.41:6443/

{

"kind": "Status",

"apiVersion": "v1",

"metadata": {},

"status": "Failure",

"message": "Unauthorized",

"reason": "Unauthorized",

"code": 401

}

[root@k8s-master01 k8s-work]# curl --insecure https://10.0.0.42:6443/

{

"kind": "Status",

"apiVersion": "v1",

"metadata": {},

"status": "Failure",

"message": "Unauthorized",

"reason": "Unauthorized",

"code": 401

}

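6.5 kubectl Deployment

6.5.1 Create the kubectl Certificate Request File

kube-apiserver later uses RBAC to authorize requests from clients (such as kubelet, kube-proxy, and Pods).

kube-apiserver predefines some bindings used by RBAC; for example, the ClusterRoleBinding cluster-admin binds the group system:masters to the ClusterRole cluster-admin, which grants permission to call every kube-apiserver API.

O sets the certificate's group to system:masters. When kubectl uses this certificate to access kube-apiserver, authentication succeeds because the certificate is signed by the CA, and because the group is the pre-authorized system:masters, the client is granted access to all APIs.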

[root@k8s-master01 k8s-work]# cat > admin-csr.json << "EOF"

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:masters",

"OU": "system"

}

]

}

EOF

[root@k8s-master01 k8s-work]# ls admin-csr.json

admin-csr.json

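Notes:

This admin certificate is used later to generate the administrator's kubeconfig file. RBAC is the generally recommended way to control roles and permissions in Kubernetes; Kubernetes treats the CN field of a certificate as the User and the O field as the Group.

"O": "system:masters" must be exactly system:masters; otherwise the later kubectl create clusterrolebinding step reports an error.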
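To see which User and Group a certificate will map to, the CN and O fields can be read from its subject line. The sketch below parses a subject string in the comma-separated form that recent OpenSSL versions print for `openssl x509 -noout -subject`. The function name `subject_field` is an illustrative assumption, and values that themselves contain commas are not handled.

```shell
#!/bin/bash
# Hypothetical helper: extract one field (for example CN or O) from a subject string
# such as "subject=C = CN, ST = Beijing, O = system:masters, CN = admin".
subject_field() {
    local field="$1" subject="${2#subject=}"
    # One RDN per line, trim leading spaces, then print the value of the first match.
    echo "$subject" | tr ',' '\n' | sed -E 's/^[[:space:]]+//' \
        | sed -nE "s/^${field}[[:space:]]*=[[:space:]]*//p" | head -n 1
}
```

For the admin certificate requested above, CN should come back as admin (the User) and O as system:masters (the Group).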

6.5.2 Generate the admin Certificate Files

[root@k8s-master01 k8s-work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

2024/09/11 15:52:24 [INFO] generate received request

2024/09/11 15:52:24 [INFO] received CSR

2024/09/11 15:52:24 [INFO] generating key: rsa-2048

2024/09/11 15:52:24 [INFO] encoded CSR

2024/09/11 15:52:24 [INFO] signed certificate with serial number 409695840998916998415367169340473090495304452148

2024/09/11 15:52:24 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@k8s-master01 k8s-work]# ls admin*

admin.csr

admin-csr.json

admin-key.pem

admin.pem
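Because Kubernetes derives the User from CN and the Group from O, it can be worth confirming what the generated certificate actually carries. The sketch below is an illustration only: `subject_field` is a hypothetical helper, and it assumes the subject is printed in RFC 2253 form (`openssl x509 -nameopt RFC2253`) and that no field value contains a comma.

```shell
#!/usr/bin/env bash
# Print the value of one field (for example CN or O) from an RFC 2253
# subject line such as "subject=CN=admin,OU=system,O=system:masters,...".
# Limitation: values containing escaped commas are not handled.
subject_field() {
  local key="$1" subject="${2#subject=}"
  printf '%s\n' "${subject# }" | tr ',' '\n' | sed -n "s/^${key}=//p"
}

# Example call against the certificate generated above:
# subject_field O  "$(openssl x509 -in admin.pem -noout -subject -nameopt RFC2253)"
# subject_field CN "$(openssl x509 -in admin.pem -noout -subject -nameopt RFC2253)"
```

For the admin certificate, the O field should come back as system:masters and the CN field as admin, matching admin-csr.json.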

6.5.3 Copy the admin certificate to the target directory

[root@k8s-master01 k8s-work]# cp admin*.pem /etc/kubernetes/ssl/

[root@k8s-master01 k8s-work]# ls /etc/kubernetes/ssl/

admin-key.pem admin.pem ca-key.pem ca.pem kube-apiserver-key.pem kube-apiserver.pem

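6.5.4 Generate the admin kubeconfig file

kube.config is the configuration file for kubectl. It contains everything needed to reach the apiserver: the apiserver address, the CA certificate, and the client certificate that kubectl itself uses.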

[root@k8s-master01 k8s-work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.0.0.47:8443 --kubeconfig=kube.config

[root@k8s-master01 k8s-work]# cat kube.config

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURtakNDQW9LZ0F3SUJBZ0lVT2dyZGNuYXRLcmp1WCs3dlFRaFg2TjErL0Qwd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURreE1UQTNNRGN3TUZvWERUTTBNRGt3T1RBM01EY3dNRm93WlRFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RURBTwpCZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByZFdKbGNtNWxkR1Z6Ck1JSUJJakFOQmdrcWhraUc5dzBCQVFFRkFBT0NBUThBTUlJQkNnS0NBUUVBdEJwcmxVZGJ2T1dKcE1PcDdjcXIKK29OakRMYUhhN1ZtVStIaEVDQ1pWUWtkMWl2TmVXWXF5TjUxNlU3d3hqdk5jR1pzYkZkVzVaSjdIU1JjRS9RYgpNV2t1OEZheEo0NHpKWSttM1NKL2g0R2UrL0tONWhVeEUyWjhWbDMzTTd0ZlJVTzlQOG9YdDZWSG82L1JHai91Cmp3SXBWbzU5dmZnbDA3SlNjaGtCT200bGhvRjJCeWx3TkZEVUg3cmJYV0c0T1JieWhoSGpsMzcxd0RpZjliazcKMjE4WXpXdFRIV3JqS3g0dEJjR21KTk1TbDNMLzVpYWx3ZGVRczlQN1Z5OG1FdFlxRHpOSktZQVFNRzRJSHJrSAo1SElrZnBwSGpaRGF6Vzdtb3pjL1VISnZYcnlvYVNJSnhyNDVVaEN0MEEzOFc4NlQrcTVWUDNiYjl1aVdxZjAwCm1RSURBUUFCbzBJd1FEQU9CZ05WSFE4QkFmOEVCQU1DQVFZd0R3WURWUjBUQVFIL0JBVXdBd0VCL3pBZEJnTlYKSFE0RUZnUVVRb2liOFFELzJBRkRpeXBjQUxNSCtSM2oyNDh3RFFZSktvWklodmNOQVFFTEJRQURnZ0VCQUVHbgpra1hLcGpaM0k1elprYlVxU3BNUDA3ZGhKa1UxWFJhUFJoNVZ0ZUtyRGxtOU1YQzNhLy9DUFJCNk1ScG01YUZoClRxZ3I4aVZzbEl5YXd5ZkdRUkdrdklDbjMzdWxuR1R6Mm8zdXVnTDJSS0ZqclNVL2pqQmFqQ3NUbjdxenA1a0QKTG1qZk9RZWh4KzZQNXhTem92NlJaU1czQnZQZG5HRDRndFZoQUJSS2N6U0J1dHd2WW5JUXg1QXlsaS9jdlBpcgprSTVKdEphajUybTBZbG8vOWN3ZFlkbStGaWViTmlmRGhyMnNGdHZPWjcyaEoxUGR2YmsxRmh6VldjOTRZR0sxCnNXME1rWGxMWkdrYTI1Mm9Yd3RXZWtGV0ErWlBKeTZRSVd5L3pxdHd1WDZaOTdsZlIvSFlMUGRlaGFObkFDMEoKcEVUQnJvWTNuTERiTHdhd1RVaz0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

server: https://10.0.0.47:8443

name: kubernetes

contexts: null

current-context: ""

kind: Config

preferences: {}

users: null

[root@k8s-master01 k8s-work]# kubectl config set-credentials admin --client-certificate=admin.pem --client-key=admin-key.pem --embed-certs=true --kubeconfig=kube.config

[root@k8s-master01 k8s-work]# cat kube.config

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURtakNDQW9LZ0F3SUJBZ0lVT2dyZGNuYXRLcmp1WCs3dlFRaFg2TjErL0Qwd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURreE1UQTNNRGN3TUZvWERUTTBNRGt3T1RBM01EY3dNRm93WlRFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RURBTwpCZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByZFdKbGNtNWxkR1Z6Ck1JSUJJakFOQmdrcWhraUc5dzBCQVFFRkFBT0NBUThBTUlJQkNnS0NBUUVBdEJwcmxVZGJ2T1dKcE1PcDdjcXIKK29OakRMYUhhN1ZtVStIaEVDQ1pWUWtkMWl2TmVXWXF5TjUxNlU3d3hqdk5jR1pzYkZkVzVaSjdIU1JjRS9RYgpNV2t1OEZheEo0NHpKWSttM1NKL2g0R2UrL0tONWhVeEUyWjhWbDMzTTd0ZlJVTzlQOG9YdDZWSG82L1JHai91Cmp3SXBWbzU5dmZnbDA3SlNjaGtCT200bGhvRjJCeWx3TkZEVUg3cmJYV0c0T1JieWhoSGpsMzcxd0RpZjliazcKMjE4WXpXdFRIV3JqS3g0dEJjR21KTk1TbDNMLzVpYWx3ZGVRczlQN1Z5OG1FdFlxRHpOSktZQVFNRzRJSHJrSAo1SElrZnBwSGpaRGF6Vzdtb3pjL1VISnZYcnlvYVNJSnhyNDVVaEN0MEEzOFc4NlQrcTVWUDNiYjl1aVdxZjAwCm1RSURBUUFCbzBJd1FEQU9CZ05WSFE4QkFmOEVCQU1DQVFZd0R3WURWUjBUQVFIL0JBVXdBd0VCL3pBZEJnTlYKSFE0RUZnUVVRb2liOFFELzJBRkRpeXBjQUxNSCtSM2oyNDh3RFFZSktvWklodmNOQVFFTEJRQURnZ0VCQUVHbgpra1hLcGpaM0k1elprYlVxU3BNUDA3ZGhKa1UxWFJhUFJoNVZ0ZUtyRGxtOU1YQzNhLy9DUFJCNk1ScG01YUZoClRxZ3I4aVZzbEl5YXd5ZkdRUkdrdklDbjMzdWxuR1R6Mm8zdXVnTDJSS0ZqclNVL2pqQmFqQ3NUbjdxenA1a0QKTG1qZk9RZWh4KzZQNXhTem92NlJaU1czQnZQZG5HRDRndFZoQUJSS2N6U0J1dHd2WW5JUXg1QXlsaS9jdlBpcgprSTVKdEphajUybTBZbG8vOWN3ZFlkbStGaWViTmlmRGhyMnNGdHZPWjcyaEoxUGR2YmsxRmh6VldjOTRZR0sxCnNXME1rWGxMWkdrYTI1Mm9Yd3RXZWtGV0ErWlBKeTZRSVd5L3pxdHd1WDZaOTdsZlIvSFlMUGRlaGFObkFDMEoKcEVUQnJvWTNuTERiTHdhd1RVaz0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

server: https://10.0.0.47:8443

name: kubernetes

contexts: null

current-context: ""

kind: Config

preferences: {}

users:

- name: admin

user:

client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUQzVENDQXNXZ0F3SUJBZ0lVUjhObG1wL0FtbzRQRDkzUFEzMlNTamFzU0RRd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURreE1UQTNORGN3TUZvWERUTTBNRGt3T1RBM05EY3dNRm93YXpFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RnpBVgpCZ05WQkFvVERuTjVjM1JsYlRwdFlYTjBaWEp6TVE4d0RRWURWUVFMRXdaemVYTjBaVzB4RGpBTUJnTlZCQU1UCkJXRmtiV2x1TUlJQklqQU5CZ2txaGtpRzl3MEJBUUVGQUFPQ0FROEFNSUlCQ2dLQ0FRRUF6Y1ZaMUN6V3hVNGEKNHdaMkxpRjNJK2JaMjFLSUN2d3h5by96akNwZWNUZ3QxMXNjclZyRVlxLzM5a283d09lYzlVMk9NRG5jTzExNgp4anVBUVEvTGFqS1lSZmZjUGNLVUZadHdZMGgzVzNnUTdvV1hZcmg2eDFkUVJKUXRXZG16ZWtZUWZoOUt2aFo5CmNZYWFYTUQ2c3I0MkFFT1RxWnJYSmpUb2VKR01OcGhXbVIzU3hwNGEyb2VFOVhJZXYzS0RVdGhtTnJQa0FaUW0KeHp1SklQY2Z6TjdSelc2R2RTd1ZKK1o0REVQS0tERDlOb0p0ZzQ5RDlZVlhjTnFpdnFBT3I0VlF1dERVZE5MWgpxbDZ2S1hXTHlHMVJEcVd5YXVSbG5Pb3dwTmwySWxqNWQ0dVF5VE9xMWExTElhRTJTa1pzZWkrN2pZRzJyWmw1Ck5nTHZGOEw4NlFJREFRQUJvMzh3ZlRBT0JnTlZIUThCQWY4RUJBTUNCYUF3SFFZRFZSMGxCQll3RkFZSUt3WUIKQlFVSEF3RUdDQ3NHQVFVRkJ3TUNNQXdHQTFVZEV3RUIvd1FDTUFBd0hRWURWUjBPQkJZRUZDZVJFQlh1SzJWVQpnWmZLNWN5T09Cekdwc3RtTUI4R0ExVWRJd1FZTUJhQUZFS0ltL0VBLzlnQlE0c3FYQUN6Qi9rZDQ5dVBNQTBHCkNTcUdTSWIzRFFFQkN3VUFBNElCQVFCMDkrNzB1bFF5TG1TVExPdXR4NUVlZnJYQW90TFljdEUwWmprRWJURzMKNFVZTU9mWGF0RXZwYnBYWlBmYVRudk83RS9jdHl5RksvV1ZIS1RrNnFzandWZklYZTV4cm0zbzQ5T3ExRFFDTwpMMUI5ckIzSDRNcC9ic0tQbkxBeFA4V2ZiYmN3OTdCREdleVVjZHdzTTVFYy9BSDBkaUNTancxa0haSXlWVnh0CmtHby8zQVhKek9UZXZlUi9sTVJpcGNDNTV4SnQ1Z1I0Rzhhb0N3MGg5R1QzYk9zVThRVVVHaG9oc3ZPYmU0dzkKbkF6ZTU5eks2TEtqcXNyYXROUmdtNUJ1cTFsRm11cTJ5UDNBUDVPbWpBVlNQZnNqS3NHRUVCb3Q1R0NCVnlTegoyeVFXYldwTGFzakJjSU54bzdNRnB4YmZ0NUhHeFU0ZGg0Vy9EdXJoMEl5egotLS0tLUVORCBDRVJUSUZJQ0FURS0tLS0tCg==

client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFcEFJQkFBS0NBUUVBemNWWjFDeld4VTRhNHdaMkxpRjNJK2JaMjFLSUN2d3h5by96akNwZWNUZ3QxMXNjCnJWckVZcS8zOWtvN3dPZWM5VTJPTURuY08xMTZ4anVBUVEvTGFqS1lSZmZjUGNLVUZadHdZMGgzVzNnUTdvV1gKWXJoNngxZFFSSlF0V2RtemVrWVFmaDlLdmhaOWNZYWFYTUQ2c3I0MkFFT1RxWnJYSmpUb2VKR01OcGhXbVIzUwp4cDRhMm9lRTlYSWV2M0tEVXRobU5yUGtBWlFteHp1SklQY2Z6TjdSelc2R2RTd1ZKK1o0REVQS0tERDlOb0p0Cmc0OUQ5WVZYY05xaXZxQU9yNFZRdXREVWROTFpxbDZ2S1hXTHlHMVJEcVd5YXVSbG5Pb3dwTmwySWxqNWQ0dVEKeVRPcTFhMUxJYUUyU2tac2VpKzdqWUcyclpsNU5nTHZGOEw4NlFJREFRQUJBb0lCQVFEQ1c1d0RhdTdab25LRwo2VDJMU1JUTmxtbEVYZW9kNWlQcG5wcCtWQzZzWmxIMlRoc0NLdSsvLzFJSkVnanFwbHA4NE9waTV1UDhOc21XCm4vRCtnenF4Ym1TaUFnSEhYQmlmYUJoNXpxTGVoTVFKWjZtY0YzL3c5YW5kZk5CeFE4M2d1bmt0aDhVRFV4N2QKc2pQdlZGLzNvTzVFeFkrZDdhRTJkMWIxT3hUaklzR25CTzJHU3VvZE1WRDFBcXBueVJmR2NVSnM0dkFsM0tXSwp2cmxGTDEycnRqbTFNQzBHRjdwT3ZueHlkR0lhNkVZbjNFZEFCMjY1VHFhUHB4VGFDZjkwaHlQclNsVVNhbDU1Cm9BSzA5cFE0ckwxVmZFZXdUdEpCY0JIZFpMZk5GU0UyWjB5MVlFYk5QRFZ1RmswMEpOdE51L3ZOYWl6ckM4OGkKTkwzcjFWU1pBb0dCQU5IV1c5Zngxd0pyYXdnQTVEVWZ1S3RjbVZTMFJMZitJMmhiaWw1NUFlQUpRbC85TllyaQo2bG5oblUyS3RZUFlKamdEUVkvWi9iZG1ZZ1FBSm9LYVpZNFIwUkdSVGs5bm91emh1UUxQalRZbEo3ZnRCZzI0CjYvbzJwSjVnL0RuNjV1ZzMzZ0F2VTJQUm9VTGR0TFhtMTRCSloxeVJkYjE3VjJVZnlocnJHcVNYQW9HQkFQc0oKK21VZVZ1TXpWbzhrWld0bGJ0djVydER2cGlWYUU4bDNGSStTbDAxTXJDMitqREtmZG5FZEUySFdDc0RDODVDOApYdG80Wm1kWUh2ZTBMRHFKMGNSZSswa2lwdXRsUG5wOEpUaVowbFFSd1BaUUpGK3JqTVN4a2lQaGRjWk94MzJQCnJLQW9Bdk1FSWtFTG84MFgxYmYyMGs1OGNyRDUySkFVVWZTOFRCcC9Bb0dBQWwyN2JXVHh1cnBCVzdhKzNBWisKaTVnZ3RuN040NUUvRHZjeFNUMXVFdnVudnZOWS9qYnUwNUtpdG5RZzlkcWpHN0NWdGF5TW10dlJzUi9iVDArMApZM1M1K2N1OHFWS08yTUwyMWh4SENGeEU1V01MMVczSFkydm9VVXpncXpxMERkeExhWThmRHBvWGlteDdsQzJGCk1wSWhVejdrcC8xVEQvWGF6cERtSFFNQ2dZRUEyc2xHZGl4cjgxV0I0ZjBKZXdFTERpSmNibkgrYmwxRUUzaDUKN2VzSGZISVBPVXJ4YXdrNU03bndjM3NWSWd5R05DVkgwWTRJQ1pkdVhkbWtGbHlZK2prQmJpc0tLT3V5K1JNTAphWG4rS2hEVENKaXVLc2NiUnkydlBTQTVBZDBVMWVTS3dZWTlrOGlOaGZ6OEJEbjZwSHN6clAyZkE0aXNhbDJiClU5MXJ3a2NDZ1lCVE9aTzQvcGE1MUFRcjE
weE5EeitlYjNReTZmcTJJd0swWm0zb0NLOC96NlZNcllFTjR6ckYKRFVxVnh3VFB6akxYVGVzWEFFTmlDY1lOZGoweFRVcXdwaEliTWZVbkpDYzZYZkhMNEppNThQUE1ma0VKTGpHdwp1cGhIMjF1MDlNWnA4UmlDYkp3ZlE3TG90M0kvdlFvZktoczExSFdMSUNvT2psSzlrWUVpOWc9PQotLS0tLUVORCBSU0EgUFJJVkFURSBLRVktLS0tLQo=

[root@k8s-master01 k8s-work]# kubectl config set-context kubernetes --cluster=kubernetes --user=admin --kubeconfig=kube.config

[root@k8s-master01 k8s-work]# cat kube.config

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURtakNDQW9LZ0F3SUJBZ0lVT2dyZGNuYXRLcmp1WCs3dlFRaFg2TjErL0Qwd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURreE1UQTNNRGN3TUZvWERUTTBNRGt3T1RBM01EY3dNRm93WlRFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RURBTwpCZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByZFdKbGNtNWxkR1Z6Ck1JSUJJakFOQmdrcWhraUc5dzBCQVFFRkFBT0NBUThBTUlJQkNnS0NBUUVBdEJwcmxVZGJ2T1dKcE1PcDdjcXIKK29OakRMYUhhN1ZtVStIaEVDQ1pWUWtkMWl2TmVXWXF5TjUxNlU3d3hqdk5jR1pzYkZkVzVaSjdIU1JjRS9RYgpNV2t1OEZheEo0NHpKWSttM1NKL2g0R2UrL0tONWhVeEUyWjhWbDMzTTd0ZlJVTzlQOG9YdDZWSG82L1JHai91Cmp3SXBWbzU5dmZnbDA3SlNjaGtCT200bGhvRjJCeWx3TkZEVUg3cmJYV0c0T1JieWhoSGpsMzcxd0RpZjliazcKMjE4WXpXdFRIV3JqS3g0dEJjR21KTk1TbDNMLzVpYWx3ZGVRczlQN1Z5OG1FdFlxRHpOSktZQVFNRzRJSHJrSAo1SElrZnBwSGpaRGF6Vzdtb3pjL1VISnZYcnlvYVNJSnhyNDVVaEN0MEEzOFc4NlQrcTVWUDNiYjl1aVdxZjAwCm1RSURBUUFCbzBJd1FEQU9CZ05WSFE4QkFmOEVCQU1DQVFZd0R3WURWUjBUQVFIL0JBVXdBd0VCL3pBZEJnTlYKSFE0RUZnUVVRb2liOFFELzJBRkRpeXBjQUxNSCtSM2oyNDh3RFFZSktvWklodmNOQVFFTEJRQURnZ0VCQUVHbgpra1hLcGpaM0k1elprYlVxU3BNUDA3ZGhKa1UxWFJhUFJoNVZ0ZUtyRGxtOU1YQzNhLy9DUFJCNk1ScG01YUZoClRxZ3I4aVZzbEl5YXd5ZkdRUkdrdklDbjMzdWxuR1R6Mm8zdXVnTDJSS0ZqclNVL2pqQmFqQ3NUbjdxenA1a0QKTG1qZk9RZWh4KzZQNXhTem92NlJaU1czQnZQZG5HRDRndFZoQUJSS2N6U0J1dHd2WW5JUXg1QXlsaS9jdlBpcgprSTVKdEphajUybTBZbG8vOWN3ZFlkbStGaWViTmlmRGhyMnNGdHZPWjcyaEoxUGR2YmsxRmh6VldjOTRZR0sxCnNXME1rWGxMWkdrYTI1Mm9Yd3RXZWtGV0ErWlBKeTZRSVd5L3pxdHd1WDZaOTdsZlIvSFlMUGRlaGFObkFDMEoKcEVUQnJvWTNuTERiTHdhd1RVaz0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

server: https://10.0.0.47:8443

name: kubernetes

contexts:

- context:

cluster: kubernetes

user: admin

name: kubernetes

current-context: ""

kind: Config

preferences: {}

users:

- name: admin

user:

client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUQzVENDQXNXZ0F3SUJBZ0lVUjhObG1wL0FtbzRQRDkzUFEzMlNTamFzU0RRd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURreE1UQTNORGN3TUZvWERUTTBNRGt3T1RBM05EY3dNRm93YXpFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RnpBVgpCZ05WQkFvVERuTjVjM1JsYlRwdFlYTjBaWEp6TVE4d0RRWURWUVFMRXdaemVYTjBaVzB4RGpBTUJnTlZCQU1UCkJXRmtiV2x1TUlJQklqQU5CZ2txaGtpRzl3MEJBUUVGQUFPQ0FROEFNSUlCQ2dLQ0FRRUF6Y1ZaMUN6V3hVNGEKNHdaMkxpRjNJK2JaMjFLSUN2d3h5by96akNwZWNUZ3QxMXNjclZyRVlxLzM5a283d09lYzlVMk9NRG5jTzExNgp4anVBUVEvTGFqS1lSZmZjUGNLVUZadHdZMGgzVzNnUTdvV1hZcmg2eDFkUVJKUXRXZG16ZWtZUWZoOUt2aFo5CmNZYWFYTUQ2c3I0MkFFT1RxWnJYSmpUb2VKR01OcGhXbVIzU3hwNGEyb2VFOVhJZXYzS0RVdGhtTnJQa0FaUW0KeHp1SklQY2Z6TjdSelc2R2RTd1ZKK1o0REVQS0tERDlOb0p0ZzQ5RDlZVlhjTnFpdnFBT3I0VlF1dERVZE5MWgpxbDZ2S1hXTHlHMVJEcVd5YXVSbG5Pb3dwTmwySWxqNWQ0dVF5VE9xMWExTElhRTJTa1pzZWkrN2pZRzJyWmw1Ck5nTHZGOEw4NlFJREFRQUJvMzh3ZlRBT0JnTlZIUThCQWY4RUJBTUNCYUF3SFFZRFZSMGxCQll3RkFZSUt3WUIKQlFVSEF3RUdDQ3NHQVFVRkJ3TUNNQXdHQTFVZEV3RUIvd1FDTUFBd0hRWURWUjBPQkJZRUZDZVJFQlh1SzJWVQpnWmZLNWN5T09Cekdwc3RtTUI4R0ExVWRJd1FZTUJhQUZFS0ltL0VBLzlnQlE0c3FYQUN6Qi9rZDQ5dVBNQTBHCkNTcUdTSWIzRFFFQkN3VUFBNElCQVFCMDkrNzB1bFF5TG1TVExPdXR4NUVlZnJYQW90TFljdEUwWmprRWJURzMKNFVZTU9mWGF0RXZwYnBYWlBmYVRudk83RS9jdHl5RksvV1ZIS1RrNnFzandWZklYZTV4cm0zbzQ5T3ExRFFDTwpMMUI5ckIzSDRNcC9ic0tQbkxBeFA4V2ZiYmN3OTdCREdleVVjZHdzTTVFYy9BSDBkaUNTancxa0haSXlWVnh0CmtHby8zQVhKek9UZXZlUi9sTVJpcGNDNTV4SnQ1Z1I0Rzhhb0N3MGg5R1QzYk9zVThRVVVHaG9oc3ZPYmU0dzkKbkF6ZTU5eks2TEtqcXNyYXROUmdtNUJ1cTFsRm11cTJ5UDNBUDVPbWpBVlNQZnNqS3NHRUVCb3Q1R0NCVnlTegoyeVFXYldwTGFzakJjSU54bzdNRnB4YmZ0NUhHeFU0ZGg0Vy9EdXJoMEl5egotLS0tLUVORCBDRVJUSUZJQ0FURS0tLS0tCg==

client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFcEFJQkFBS0NBUUVBemNWWjFDeld4VTRhNHdaMkxpRjNJK2JaMjFLSUN2d3h5by96akNwZWNUZ3QxMXNjCnJWckVZcS8zOWtvN3dPZWM5VTJPTURuY08xMTZ4anVBUVEvTGFqS1lSZmZjUGNLVUZadHdZMGgzVzNnUTdvV1gKWXJoNngxZFFSSlF0V2RtemVrWVFmaDlLdmhaOWNZYWFYTUQ2c3I0MkFFT1RxWnJYSmpUb2VKR01OcGhXbVIzUwp4cDRhMm9lRTlYSWV2M0tEVXRobU5yUGtBWlFteHp1SklQY2Z6TjdSelc2R2RTd1ZKK1o0REVQS0tERDlOb0p0Cmc0OUQ5WVZYY05xaXZxQU9yNFZRdXREVWROTFpxbDZ2S1hXTHlHMVJEcVd5YXVSbG5Pb3dwTmwySWxqNWQ0dVEKeVRPcTFhMUxJYUUyU2tac2VpKzdqWUcyclpsNU5nTHZGOEw4NlFJREFRQUJBb0lCQVFEQ1c1d0RhdTdab25LRwo2VDJMU1JUTmxtbEVYZW9kNWlQcG5wcCtWQzZzWmxIMlRoc0NLdSsvLzFJSkVnanFwbHA4NE9waTV1UDhOc21XCm4vRCtnenF4Ym1TaUFnSEhYQmlmYUJoNXpxTGVoTVFKWjZtY0YzL3c5YW5kZk5CeFE4M2d1bmt0aDhVRFV4N2QKc2pQdlZGLzNvTzVFeFkrZDdhRTJkMWIxT3hUaklzR25CTzJHU3VvZE1WRDFBcXBueVJmR2NVSnM0dkFsM0tXSwp2cmxGTDEycnRqbTFNQzBHRjdwT3ZueHlkR0lhNkVZbjNFZEFCMjY1VHFhUHB4VGFDZjkwaHlQclNsVVNhbDU1Cm9BSzA5cFE0ckwxVmZFZXdUdEpCY0JIZFpMZk5GU0UyWjB5MVlFYk5QRFZ1RmswMEpOdE51L3ZOYWl6ckM4OGkKTkwzcjFWU1pBb0dCQU5IV1c5Zngxd0pyYXdnQTVEVWZ1S3RjbVZTMFJMZitJMmhiaWw1NUFlQUpRbC85TllyaQo2bG5oblUyS3RZUFlKamdEUVkvWi9iZG1ZZ1FBSm9LYVpZNFIwUkdSVGs5bm91emh1UUxQalRZbEo3ZnRCZzI0CjYvbzJwSjVnL0RuNjV1ZzMzZ0F2VTJQUm9VTGR0TFhtMTRCSloxeVJkYjE3VjJVZnlocnJHcVNYQW9HQkFQc0oKK21VZVZ1TXpWbzhrWld0bGJ0djVydER2cGlWYUU4bDNGSStTbDAxTXJDMitqREtmZG5FZEUySFdDc0RDODVDOApYdG80Wm1kWUh2ZTBMRHFKMGNSZSswa2lwdXRsUG5wOEpUaVowbFFSd1BaUUpGK3JqTVN4a2lQaGRjWk94MzJQCnJLQW9Bdk1FSWtFTG84MFgxYmYyMGs1OGNyRDUySkFVVWZTOFRCcC9Bb0dBQWwyN2JXVHh1cnBCVzdhKzNBWisKaTVnZ3RuN040NUUvRHZjeFNUMXVFdnVudnZOWS9qYnUwNUtpdG5RZzlkcWpHN0NWdGF5TW10dlJzUi9iVDArMApZM1M1K2N1OHFWS08yTUwyMWh4SENGeEU1V01MMVczSFkydm9VVXpncXpxMERkeExhWThmRHBvWGlteDdsQzJGCk1wSWhVejdrcC8xVEQvWGF6cERtSFFNQ2dZRUEyc2xHZGl4cjgxV0I0ZjBKZXdFTERpSmNibkgrYmwxRUUzaDUKN2VzSGZISVBPVXJ4YXdrNU03bndjM3NWSWd5R05DVkgwWTRJQ1pkdVhkbWtGbHlZK2prQmJpc0tLT3V5K1JNTAphWG4rS2hEVENKaXVLc2NiUnkydlBTQTVBZDBVMWVTS3dZWTlrOGlOaGZ6OEJEbjZwSHN6clAyZkE0aXNhbDJiClU5MXJ3a2NDZ1lCVE9aTzQvcGE1MUFRcjE
weE5EeitlYjNReTZmcTJJd0swWm0zb0NLOC96NlZNcllFTjR6ckYKRFVxVnh3VFB6akxYVGVzWEFFTmlDY1lOZGoweFRVcXdwaEliTWZVbkpDYzZYZkhMNEppNThQUE1ma0VKTGpHdwp1cGhIMjF1MDlNWnA4UmlDYkp3ZlE3TG90M0kvdlFvZktoczExSFdMSUNvT2psSzlrWUVpOWc9PQotLS0tLUVORCBSU0EgUFJJVkFURSBLRVktLS0tLQo=

[root@k8s-master01 k8s-work]# kubectl config use-context kubernetes --kubeconfig=kube.config

[root@k8s-master01 k8s-work]# cat kube.config

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURtakNDQW9LZ0F3SUJBZ0lVT2dyZGNuYXRLcmp1WCs3dlFRaFg2TjErL0Qwd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURreE1UQTNNRGN3TUZvWERUTTBNRGt3T1RBM01EY3dNRm93WlRFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RURBTwpCZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByZFdKbGNtNWxkR1Z6Ck1JSUJJakFOQmdrcWhraUc5dzBCQVFFRkFBT0NBUThBTUlJQkNnS0NBUUVBdEJwcmxVZGJ2T1dKcE1PcDdjcXIKK29OakRMYUhhN1ZtVStIaEVDQ1pWUWtkMWl2TmVXWXF5TjUxNlU3d3hqdk5jR1pzYkZkVzVaSjdIU1JjRS9RYgpNV2t1OEZheEo0NHpKWSttM1NKL2g0R2UrL0tONWhVeEUyWjhWbDMzTTd0ZlJVTzlQOG9YdDZWSG82L1JHai91Cmp3SXBWbzU5dmZnbDA3SlNjaGtCT200bGhvRjJCeWx3TkZEVUg3cmJYV0c0T1JieWhoSGpsMzcxd0RpZjliazcKMjE4WXpXdFRIV3JqS3g0dEJjR21KTk1TbDNMLzVpYWx3ZGVRczlQN1Z5OG1FdFlxRHpOSktZQVFNRzRJSHJrSAo1SElrZnBwSGpaRGF6Vzdtb3pjL1VISnZYcnlvYVNJSnhyNDVVaEN0MEEzOFc4NlQrcTVWUDNiYjl1aVdxZjAwCm1RSURBUUFCbzBJd1FEQU9CZ05WSFE4QkFmOEVCQU1DQVFZd0R3WURWUjBUQVFIL0JBVXdBd0VCL3pBZEJnTlYKSFE0RUZnUVVRb2liOFFELzJBRkRpeXBjQUxNSCtSM2oyNDh3RFFZSktvWklodmNOQVFFTEJRQURnZ0VCQUVHbgpra1hLcGpaM0k1elprYlVxU3BNUDA3ZGhKa1UxWFJhUFJoNVZ0ZUtyRGxtOU1YQzNhLy9DUFJCNk1ScG01YUZoClRxZ3I4aVZzbEl5YXd5ZkdRUkdrdklDbjMzdWxuR1R6Mm8zdXVnTDJSS0ZqclNVL2pqQmFqQ3NUbjdxenA1a0QKTG1qZk9RZWh4KzZQNXhTem92NlJaU1czQnZQZG5HRDRndFZoQUJSS2N6U0J1dHd2WW5JUXg1QXlsaS9jdlBpcgprSTVKdEphajUybTBZbG8vOWN3ZFlkbStGaWViTmlmRGhyMnNGdHZPWjcyaEoxUGR2YmsxRmh6VldjOTRZR0sxCnNXME1rWGxMWkdrYTI1Mm9Yd3RXZWtGV0ErWlBKeTZRSVd5L3pxdHd1WDZaOTdsZlIvSFlMUGRlaGFObkFDMEoKcEVUQnJvWTNuTERiTHdhd1RVaz0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

server: https://10.0.0.47:8443

name: kubernetes

contexts:

- context:

cluster: kubernetes

user: admin

name: kubernetes

current-context: kubernetes

kind: Config

preferences: {}

users:

- name: admin

user:

client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUQzVENDQXNXZ0F3SUJBZ0lVUjhObG1wL0FtbzRQRDkzUFEzMlNTamFzU0RRd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURreE1UQTNORGN3TUZvWERUTTBNRGt3T1RBM05EY3dNRm93YXpFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RnpBVgpCZ05WQkFvVERuTjVjM1JsYlRwdFlYTjBaWEp6TVE4d0RRWURWUVFMRXdaemVYTjBaVzB4RGpBTUJnTlZCQU1UCkJXRmtiV2x1TUlJQklqQU5CZ2txaGtpRzl3MEJBUUVGQUFPQ0FROEFNSUlCQ2dLQ0FRRUF6Y1ZaMUN6V3hVNGEKNHdaMkxpRjNJK2JaMjFLSUN2d3h5by96akNwZWNUZ3QxMXNjclZyRVlxLzM5a283d09lYzlVMk9NRG5jTzExNgp4anVBUVEvTGFqS1lSZmZjUGNLVUZadHdZMGgzVzNnUTdvV1hZcmg2eDFkUVJKUXRXZG16ZWtZUWZoOUt2aFo5CmNZYWFYTUQ2c3I0MkFFT1RxWnJYSmpUb2VKR01OcGhXbVIzU3hwNGEyb2VFOVhJZXYzS0RVdGhtTnJQa0FaUW0KeHp1SklQY2Z6TjdSelc2R2RTd1ZKK1o0REVQS0tERDlOb0p0ZzQ5RDlZVlhjTnFpdnFBT3I0VlF1dERVZE5MWgpxbDZ2S1hXTHlHMVJEcVd5YXVSbG5Pb3dwTmwySWxqNWQ0dVF5VE9xMWExTElhRTJTa1pzZWkrN2pZRzJyWmw1Ck5nTHZGOEw4NlFJREFRQUJvMzh3ZlRBT0JnTlZIUThCQWY4RUJBTUNCYUF3SFFZRFZSMGxCQll3RkFZSUt3WUIKQlFVSEF3RUdDQ3NHQVFVRkJ3TUNNQXdHQTFVZEV3RUIvd1FDTUFBd0hRWURWUjBPQkJZRUZDZVJFQlh1SzJWVQpnWmZLNWN5T09Cekdwc3RtTUI4R0ExVWRJd1FZTUJhQUZFS0ltL0VBLzlnQlE0c3FYQUN6Qi9rZDQ5dVBNQTBHCkNTcUdTSWIzRFFFQkN3VUFBNElCQVFCMDkrNzB1bFF5TG1TVExPdXR4NUVlZnJYQW90TFljdEUwWmprRWJURzMKNFVZTU9mWGF0RXZwYnBYWlBmYVRudk83RS9jdHl5RksvV1ZIS1RrNnFzandWZklYZTV4cm0zbzQ5T3ExRFFDTwpMMUI5ckIzSDRNcC9ic0tQbkxBeFA4V2ZiYmN3OTdCREdleVVjZHdzTTVFYy9BSDBkaUNTancxa0haSXlWVnh0CmtHby8zQVhKek9UZXZlUi9sTVJpcGNDNTV4SnQ1Z1I0Rzhhb0N3MGg5R1QzYk9zVThRVVVHaG9oc3ZPYmU0dzkKbkF6ZTU5eks2TEtqcXNyYXROUmdtNUJ1cTFsRm11cTJ5UDNBUDVPbWpBVlNQZnNqS3NHRUVCb3Q1R0NCVnlTegoyeVFXYldwTGFzakJjSU54bzdNRnB4YmZ0NUhHeFU0ZGg0Vy9EdXJoMEl5egotLS0tLUVORCBDRVJUSUZJQ0FURS0tLS0tCg==

client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFcEFJQkFBS0NBUUVBemNWWjFDeld4VTRhNHdaMkxpRjNJK2JaMjFLSUN2d3h5by96akNwZWNUZ3QxMXNjCnJWckVZcS8zOWtvN3dPZWM5VTJPTURuY08xMTZ4anVBUVEvTGFqS1lSZmZjUGNLVUZadHdZMGgzVzNnUTdvV1gKWXJoNngxZFFSSlF0V2RtemVrWVFmaDlLdmhaOWNZYWFYTUQ2c3I0MkFFT1RxWnJYSmpUb2VKR01OcGhXbVIzUwp4cDRhMm9lRTlYSWV2M0tEVXRobU5yUGtBWlFteHp1SklQY2Z6TjdSelc2R2RTd1ZKK1o0REVQS0tERDlOb0p0Cmc0OUQ5WVZYY05xaXZxQU9yNFZRdXREVWROTFpxbDZ2S1hXTHlHMVJEcVd5YXVSbG5Pb3dwTmwySWxqNWQ0dVEKeVRPcTFhMUxJYUUyU2tac2VpKzdqWUcyclpsNU5nTHZGOEw4NlFJREFRQUJBb0lCQVFEQ1c1d0RhdTdab25LRwo2VDJMU1JUTmxtbEVYZW9kNWlQcG5wcCtWQzZzWmxIMlRoc0NLdSsvLzFJSkVnanFwbHA4NE9waTV1UDhOc21XCm4vRCtnenF4Ym1TaUFnSEhYQmlmYUJoNXpxTGVoTVFKWjZtY0YzL3c5YW5kZk5CeFE4M2d1bmt0aDhVRFV4N2QKc2pQdlZGLzNvTzVFeFkrZDdhRTJkMWIxT3hUaklzR25CTzJHU3VvZE1WRDFBcXBueVJmR2NVSnM0dkFsM0tXSwp2cmxGTDEycnRqbTFNQzBHRjdwT3ZueHlkR0lhNkVZbjNFZEFCMjY1VHFhUHB4VGFDZjkwaHlQclNsVVNhbDU1Cm9BSzA5cFE0ckwxVmZFZXdUdEpCY0JIZFpMZk5GU0UyWjB5MVlFYk5QRFZ1RmswMEpOdE51L3ZOYWl6ckM4OGkKTkwzcjFWU1pBb0dCQU5IV1c5Zngxd0pyYXdnQTVEVWZ1S3RjbVZTMFJMZitJMmhiaWw1NUFlQUpRbC85TllyaQo2bG5oblUyS3RZUFlKamdEUVkvWi9iZG1ZZ1FBSm9LYVpZNFIwUkdSVGs5bm91emh1UUxQalRZbEo3ZnRCZzI0CjYvbzJwSjVnL0RuNjV1ZzMzZ0F2VTJQUm9VTGR0TFhtMTRCSloxeVJkYjE3VjJVZnlocnJHcVNYQW9HQkFQc0oKK21VZVZ1TXpWbzhrWld0bGJ0djVydER2cGlWYUU4bDNGSStTbDAxTXJDMitqREtmZG5FZEUySFdDc0RDODVDOApYdG80Wm1kWUh2ZTBMRHFKMGNSZSswa2lwdXRsUG5wOEpUaVowbFFSd1BaUUpGK3JqTVN4a2lQaGRjWk94MzJQCnJLQW9Bdk1FSWtFTG84MFgxYmYyMGs1OGNyRDUySkFVVWZTOFRCcC9Bb0dBQWwyN2JXVHh1cnBCVzdhKzNBWisKaTVnZ3RuN040NUUvRHZjeFNUMXVFdnVudnZOWS9qYnUwNUtpdG5RZzlkcWpHN0NWdGF5TW10dlJzUi9iVDArMApZM1M1K2N1OHFWS08yTUwyMWh4SENGeEU1V01MMVczSFkydm9VVXpncXpxMERkeExhWThmRHBvWGlteDdsQzJGCk1wSWhVejdrcC8xVEQvWGF6cERtSFFNQ2dZRUEyc2xHZGl4cjgxV0I0ZjBKZXdFTERpSmNibkgrYmwxRUUzaDUKN2VzSGZISVBPVXJ4YXdrNU03bndjM3NWSWd5R05DVkgwWTRJQ1pkdVhkbWtGbHlZK2prQmJpc0tLT3V5K1JNTAphWG4rS2hEVENKaXVLc2NiUnkydlBTQTVBZDBVMWVTS3dZWTlrOGlOaGZ6OEJEbjZwSHN6clAyZkE0aXNhbDJiClU5MXJ3a2NDZ1lCVE9aTzQvcGE1MUFRcjE
weE5EeitlYjNReTZmcTJJd0swWm0zb0NLOC96NlZNcllFTjR6ckYKRFVxVnh3VFB6akxYVGVzWEFFTmlDY1lOZGoweFRVcXdwaEliTWZVbkpDYzZYZkhMNEppNThQUE1ma0VKTGpHdwp1cGhIMjF1MDlNWnA4UmlDYkp3ZlE3TG90M0kvdlFvZktoczExSFdMSUNvT2psSzlrWUVpOWc9PQotLS0tLUVORCBSU0EgUFJJVkFURSBLRVktLS0tLQo=
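The four `kubectl config` steps above follow the same pattern for every component kubeconfig in this guide, so they can be wrapped in one function. This is a sketch under stated assumptions: `run` and `build_kubeconfig` are hypothetical helper names, the `DRY_RUN` variable is introduced here only to preview the commands, and ca.pem plus the client certificate files are assumed to be in the current directory.

```shell
#!/usr/bin/env bash
# Execute a command, or only print it when DRY_RUN=1 (hypothetical switch).
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# build_kubeconfig <user> <cert-basename> <context> <server-url> <output-file>
# Runs the same set-cluster, set-credentials, set-context, use-context
# sequence shown above.
build_kubeconfig() {
  local user="$1" cert="$2" context="$3" server="$4" out="$5"
  run kubectl config set-cluster kubernetes --certificate-authority=ca.pem \
    --embed-certs=true --server="${server}" --kubeconfig="${out}"
  run kubectl config set-credentials "${user}" \
    --client-certificate="${cert}.pem" --client-key="${cert}-key.pem" \
    --embed-certs=true --kubeconfig="${out}"
  run kubectl config set-context "${context}" --cluster=kubernetes \
    --user="${user}" --kubeconfig="${out}"
  run kubectl config use-context "${context}" --kubeconfig="${out}"
}

# Example call reproducing this section:
# build_kubeconfig admin admin kubernetes https://10.0.0.47:8443 kube.config
```

To inspect the result without printing the embedded private key, `kubectl config view --kubeconfig=kube.config` can be used instead of `cat`; it omits certificate and key data unless `--raw` is passed.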

6.5.5 Prepare the kubectl configuration file and bind the role

[root@k8s-master01 k8s-work]# mkdir -p ~/.kube

[root@k8s-master01 k8s-work]# cp kube.config ~/.kube/config

[root@k8s-master01 k8s-work]# kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user kubernetes --kubeconfig=/root/.kube/config
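The imperative `kubectl create clusterrolebinding` command fails if the binding already exists. As an alternative sketch, the same binding can be written as a manifest and applied repeatedly. The helper name `clusterrolebinding_yaml` is hypothetical, and the example assumes, as the command above does, that the user name kubernetes is the identity kube-apiserver presents to the kubelet.

```shell
#!/usr/bin/env bash
# Print a ClusterRoleBinding manifest that binds one User to one ClusterRole.
# Arguments: <binding-name> <clusterrole> <user>
clusterrolebinding_yaml() {
  cat <<EOF
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: $1
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: $2
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: $3
EOF
}

# Example call equivalent to the command above:
# clusterrolebinding_yaml kube-apiserver:kubelet-apis system:kubelet-api-admin kubernetes \
#   | kubectl apply -f -
```

Afterwards, `kubectl get clusterrolebinding kube-apiserver:kubelet-apis -o wide` shows the role and subject that were bound.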

6.5.6 Check cluster status

[root@k8s-master01 k8s-work]# kubectl cluster-info

Kubernetes control plane is running at https://10.0.0.47:8443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

[root@k8s-master01 k8s-work]# kubectl get componentstatuses

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

controller-manager Unhealthy Get "https://127.0.0.1:10257/healthz": dial tcp 127.0.0.1:10257: connect: connection refused

scheduler Unhealthy Get "https://127.0.0.1:10259/healthz": dial tcp 127.0.0.1:10259: connect: connection refused

etcd-0 Healthy ok

[root@k8s-master01 k8s-work]# kubectl get all --all-namespaces

NAMESPACE NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

default service/kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 49m
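In the output above, controller-manager and scheduler are reported as Unhealthy with "connection refused". That is consistent with kube-controller-manager only being deployed in section 6.6 (and, presumably, kube-scheduler after it). Since ComponentStatus is deprecated, the apiserver's own /readyz endpoint is another way to check health. The sketch below is an assumption-laden illustration: `failed_checks` is a hypothetical helper, and it assumes the verbose output marks passing checks with `[+]` and failing checks with `[-]`.

```shell
#!/usr/bin/env bash
# Read verbose /readyz (or /livez) output on stdin and print the name of
# every check that is marked as failed with a leading "[-]".
failed_checks() {
  grep '^\[-\]' | sed 's/^\[-\]//; s/ failed.*$//'
}

# Example call through the load balancer address, using the admin
# certificate generated in section 6.5.2:
# curl --silent --cacert ca.pem --cert admin.pem --key admin-key.pem \
#   'https://10.0.0.47:8443/readyz?verbose' | failed_checks
```

An empty result means no check was reported as failed.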

6.5.7 Sync the kubectl configuration file to the other master nodes

[root@k8s-master02 ~]# mkdir -p /root/.kube

[root@k8s-master03 ~]# mkdir -p /root/.kube

[root@k8s-master01 k8s-work]# scp /root/.kube/config k8s-master02:/root/.kube/config

[root@k8s-master01 k8s-work]# scp /root/.kube/config k8s-master03:/root/.kube/config
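The directory creation and copy can be driven from k8s-master01 in one loop. This is a minimal sketch, assuming root SSH access from k8s-master01 to the other masters by host name; `sync_kubeconfig` and the `RUN` variable are hypothetical names introduced here, with `RUN=echo` serving as a preview mode.

```shell
#!/usr/bin/env bash
# Create /root/.kube on each host and copy the local kubeconfig to it.
# Set RUN=echo to print the commands instead of executing them.
sync_kubeconfig() {
  local host
  for host in "$@"; do
    ${RUN:-} ssh "${host}" 'mkdir -p /root/.kube' &&
      ${RUN:-} scp /root/.kube/config "${host}:/root/.kube/config"
  done
}

# Example call:
# sync_kubeconfig k8s-master02 k8s-master03
```

Running `kubectl cluster-info` on each target afterwards confirms that the copied file works there.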

6.5.8 Configure kubectl command completion (optional)

yum install -y bash-completion

source /usr/share/bash-completion/bash_completion

source <(kubectl completion bash)

kubectl completion bash > ~/.kube/completion.bash.inc

source '/root/.kube/completion.bash.inc'

source $HOME/.bash_profile
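The commands above load completion into the current shell but do not appear to write anything to a startup file, so a new login shell may not have it. One way to persist it is sketched below; `append_once` is a hypothetical helper, and the choice of ~/.bashrc as the startup file is an assumption.

```shell
#!/usr/bin/env bash
# Append a line to a file only if that exact line is not already present,
# so the command can be re-run safely.
append_once() {
  grep -qxF -- "$1" "$2" 2>/dev/null || printf '%s\n' "$1" >> "$2"
}

# Example call that makes new shells load the saved completion script:
# append_once "source /root/.kube/completion.bash.inc" "$HOME/.bashrc"
```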

6.6 Deploy kube-controller-manager

6.6.1 Create the kube-controller-manager certificate signing request file

[root@k8s-master01 k8s-work]# cat > kube-controller-manager-csr.json << "EOF"

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"hosts": [

"127.0.0.1",

"10.0.0.40",

"10.0.0.41",

"10.0.0.42"

],

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-controller-manager",

"OU": "system"

}

]

}

EOF

Notes:

The hosts list contains the IPs of all kube-controller-manager nodes.

CN is system:kube-controller-manager and O is system:kube-controller-manager; the built-in Kubernetes ClusterRoleBinding system:kube-controller-manager grants kube-controller-manager the permissions it needs to do its work.

[root@k8s-master01 k8s-work]# ls kube-controller-manager-csr.json

kube-controller-manager-csr.json
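Since the hosts list has to name every kube-controller-manager node, it can help to generate the JSON array from a single list of addresses rather than typing it by hand. The helper `hosts_json` below is hypothetical and only formats its arguments; it does not validate that they are IP addresses.

```shell
#!/usr/bin/env bash
# Print the arguments as a JSON array of strings, suitable for the
# "hosts" field of a cfssl CSR file.
hosts_json() {
  local first=1 h
  printf '['
  for h in "$@"; do
    [ "$first" -eq 1 ] || printf ', '
    printf '"%s"' "$h"
    first=0
  done
  printf ']\n'
}

# Example call with the addresses used in this section:
# hosts_json 127.0.0.1 10.0.0.40 10.0.0.41 10.0.0.42
```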

6.6.2 Create the kube-controller-manager certificate files

[root@k8s-master01 k8s-work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

Output:

2024/09/11 16:40:36 [INFO] generate received request

2024/09/11 16:40:36 [INFO] received CSR

2024/09/11 16:40:36 [INFO] generating key: rsa-2048

2024/09/11 16:40:37 [INFO] encoded CSR

2024/09/11 16:40:37 [INFO] signed certificate with serial number 130097164205038572324452144298498763113561896404

[root@k8s-master01 k8s-work]# ls kube-controller-manager*

kube-controller-manager.csr

kube-controller-manager-csr.json

kube-controller-manager-key.pem

kube-controller-manager.pem
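After the certificate is issued, it is worth checking that every address from the hosts list ended up in the Subject Alternative Name extension. The following sketch assumes the SAN text has the form openssl prints it in (`IP Address:<ip>, IP Address:<ip>, ...`); `missing_sans` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Read the Subject Alternative Name text on stdin and print every expected
# IP (given as arguments) that does not appear in it.
missing_sans() {
  local san ip
  san=$(cat)
  for ip in "$@"; do
    # The trailing comma keeps 10.0.0.4 from matching 10.0.0.40.
    case "${san}," in
      *"IP Address:${ip},"*) ;;
      *) echo "${ip}" ;;
    esac
  done
}

# Example call against the certificate created above:
# openssl x509 -in kube-controller-manager.pem -noout -text \
#   | grep -A1 'Subject Alternative Name' \
#   | missing_sans 127.0.0.1 10.0.0.40 10.0.0.41 10.0.0.42
```

No output means all expected addresses are present in the certificate.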

6.6.3 Create the kube-controller-manager kubeconfig file

[root@k8s-master01 k8s-work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.0.0.47:8443 --kubeconfig=kube-controller-manager.kubeconfig

[root@k8s-master01 k8s-work]# cat kube-controller-manager.kubeconfig

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURtakNDQW9LZ0F3SUJBZ0lVT2dyZGNuYXRLcmp1WCs3dlFRaFg2TjErL0Qwd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURreE1UQTNNRGN3TUZvWERUTTBNRGt3T1RBM01EY3dNRm93WlRFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RURBTwpCZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByZFdKbGNtNWxkR1Z6Ck1JSUJJakFOQmdrcWhraUc5dzBCQVFFRkFBT0NBUThBTUlJQkNnS0NBUUVBdEJwcmxVZGJ2T1dKcE1PcDdjcXIKK29OakRMYUhhN1ZtVStIaEVDQ1pWUWtkMWl2TmVXWXF5TjUxNlU3d3hqdk5jR1pzYkZkVzVaSjdIU1JjRS9RYgpNV2t1OEZheEo0NHpKWSttM1NKL2g0R2UrL0tONWhVeEUyWjhWbDMzTTd0ZlJVTzlQOG9YdDZWSG82L1JHai91Cmp3SXBWbzU5dmZnbDA3SlNjaGtCT200bGhvRjJCeWx3TkZEVUg3cmJYV0c0T1JieWhoSGpsMzcxd0RpZjliazcKMjE4WXpXdFRIV3JqS3g0dEJjR21KTk1TbDNMLzVpYWx3ZGVRczlQN1Z5OG1FdFlxRHpOSktZQVFNRzRJSHJrSAo1SElrZnBwSGpaRGF6Vzdtb3pjL1VISnZYcnlvYVNJSnhyNDVVaEN0MEEzOFc4NlQrcTVWUDNiYjl1aVdxZjAwCm1RSURBUUFCbzBJd1FEQU9CZ05WSFE4QkFmOEVCQU1DQVFZd0R3WURWUjBUQVFIL0JBVXdBd0VCL3pBZEJnTlYKSFE0RUZnUVVRb2liOFFELzJBRkRpeXBjQUxNSCtSM2oyNDh3RFFZSktvWklodmNOQVFFTEJRQURnZ0VCQUVHbgpra1hLcGpaM0k1elprYlVxU3BNUDA3ZGhKa1UxWFJhUFJoNVZ0ZUtyRGxtOU1YQzNhLy9DUFJCNk1ScG01YUZoClRxZ3I4aVZzbEl5YXd5ZkdRUkdrdklDbjMzdWxuR1R6Mm8zdXVnTDJSS0ZqclNVL2pqQmFqQ3NUbjdxenA1a0QKTG1qZk9RZWh4KzZQNXhTem92NlJaU1czQnZQZG5HRDRndFZoQUJSS2N6U0J1dHd2WW5JUXg1QXlsaS9jdlBpcgprSTVKdEphajUybTBZbG8vOWN3ZFlkbStGaWViTmlmRGhyMnNGdHZPWjcyaEoxUGR2YmsxRmh6VldjOTRZR0sxCnNXME1rWGxMWkdrYTI1Mm9Yd3RXZWtGV0ErWlBKeTZRSVd5L3pxdHd1WDZaOTdsZlIvSFlMUGRlaGFObkFDMEoKcEVUQnJvWTNuTERiTHdhd1RVaz0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

server: https://10.0.0.47:8443

name: kubernetes

contexts: null

current-context: ""

kind: Config

preferences: {}

users: null

[root@k8s-master01 k8s-work]# kubectl config set-credentials system:kube-controller-manager --client-certificate=kube-controller-manager.pem --client-key=kube-controller-manager-key.pem --embed-certs=true --kubeconfig=kube-controller-manager.kubeconfig

[root@k8s-master01 k8s-work]# cat kube-controller-manager.kubeconfig

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURtakNDQW9LZ0F3SUJBZ0lVT2dyZGNuYXRLcmp1WCs3dlFRaFg2TjErL0Qwd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURreE1UQTNNRGN3TUZvWERUTTBNRGt3T1RBM01EY3dNRm93WlRFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RURBTwpCZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByZFdKbGNtNWxkR1Z6Ck1JSUJJakFOQmdrcWhraUc5dzBCQVFFRkFBT0NBUThBTUlJQkNnS0NBUUVBdEJwcmxVZGJ2T1dKcE1PcDdjcXIKK29OakRMYUhhN1ZtVStIaEVDQ1pWUWtkMWl2TmVXWXF5TjUxNlU3d3hqdk5jR1pzYkZkVzVaSjdIU1JjRS9RYgpNV2t1OEZheEo0NHpKWSttM1NKL2g0R2UrL0tONWhVeEUyWjhWbDMzTTd0ZlJVTzlQOG9YdDZWSG82L1JHai91Cmp3SXBWbzU5dmZnbDA3SlNjaGtCT200bGhvRjJCeWx3TkZEVUg3cmJYV0c0T1JieWhoSGpsMzcxd0RpZjliazcKMjE4WXpXdFRIV3JqS3g0dEJjR21KTk1TbDNMLzVpYWx3ZGVRczlQN1Z5OG1FdFlxRHpOSktZQVFNRzRJSHJrSAo1SElrZnBwSGpaRGF6Vzdtb3pjL1VISnZYcnlvYVNJSnhyNDVVaEN0MEEzOFc4NlQrcTVWUDNiYjl1aVdxZjAwCm1RSURBUUFCbzBJd1FEQU9CZ05WSFE4QkFmOEVCQU1DQVFZd0R3WURWUjBUQVFIL0JBVXdBd0VCL3pBZEJnTlYKSFE0RUZnUVVRb2liOFFELzJBRkRpeXBjQUxNSCtSM2oyNDh3RFFZSktvWklodmNOQVFFTEJRQURnZ0VCQUVHbgpra1hLcGpaM0k1elprYlVxU3BNUDA3ZGhKa1UxWFJhUFJoNVZ0ZUtyRGxtOU1YQzNhLy9DUFJCNk1ScG01YUZoClRxZ3I4aVZzbEl5YXd5ZkdRUkdrdklDbjMzdWxuR1R6Mm8zdXVnTDJSS0ZqclNVL2pqQmFqQ3NUbjdxenA1a0QKTG1qZk9RZWh4KzZQNXhTem92NlJaU1czQnZQZG5HRDRndFZoQUJSS2N6U0J1dHd2WW5JUXg1QXlsaS9jdlBpcgprSTVKdEphajUybTBZbG8vOWN3ZFlkbStGaWViTmlmRGhyMnNGdHZPWjcyaEoxUGR2YmsxRmh6VldjOTRZR0sxCnNXME1rWGxMWkdrYTI1Mm9Yd3RXZWtGV0ErWlBKeTZRSVd5L3pxdHd1WDZaOTdsZlIvSFlMUGRlaGFObkFDMEoKcEVUQnJvWTNuTERiTHdhd1RVaz0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

server: https://10.0.0.47:8443

name: kubernetes

contexts: null

current-context: ""

kind: Config

preferences: {}

users:

- name: system:kube-controller-manager

user:

client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUVMRENDQXhTZ0F3SUJBZ0lVRnNuQ00vUGFzKzNSbnUxL0ZJd01MN3RVdGRRd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURreE1UQTRNell3TUZvWERUTTBNRGt3T1RBNE16WXdNRm93Z1pReEN6QUoKQmdOVkJBWVRBa05PTVJBd0RnWURWUVFJRXdkQ1pXbHFhVzVuTVJBd0RnWURWUVFIRXdkQ1pXbHFhVzVuTVNjdwpKUVlEVlFRS0V4NXplWE4wWlcwNmEzVmlaUzFqYjI1MGNtOXNiR1Z5TFcxaGJtRm5aWEl4RHpBTkJnTlZCQXNUCkJuTjVjM1JsYlRFbk1DVUdBMVVFQXhNZWMzbHpkR1Z0T210MVltVXRZMjl1ZEhKdmJHeGxjaTF0WVc1aFoyVnkKTUlJQklqQU5CZ2txaGtpRzl3MEJBUUVGQUFPQ0FROEFNSUlCQ2dLQ0FRRUF1bWxITEVJNXhBKy9mY24xV0hGagpET2d0L3hLTHdaRnk1d0Nvb2IxMFFNR2VUNkRlcmRNdVYyMlNNbW9zZUt3YnpnV3dKN0J0dGRydVJ6cWRGTEZ0CkRpc0pnRlZxNGVZa3Z3VWh4RTV1aWhEdmZmZHlRbTA3cUh5d3BxbFFOeDlSS3Y5bmlkTGtQZVVOWEVKMnY1UWwKZ2J4NW9GSWMvcytvSzRORjAyaHk4K2dLUCtvZmlGKzZRV0VNby9HdzZlajNKM01ldXUvYVZKR29NdjJiVEFocAoyZ2V1L3hVenE1ZEJzS2s1MGt4ck5QY2JCOTQyTXZkUXh4MkpySURISGhSQjVHV0VuWVB4WGdPSUwwcHJvNXFnCnBDVVdpOUZNSjRBTFR6MHBXYmVIZ1FIcklsdkliazV4VWRFVGFUQkNBWE8rNDdwNUpnS0VUalRUNnBhSFlITkYKYndJREFRQUJvNEdqTUlHZ01BNEdBMVVkRHdFQi93UUVBd0lGb0RBZEJnTlZIU1VFRmpBVUJnZ3JCZ0VGQlFjRApBUVlJS3dZQkJRVUhBd0l3REFZRFZSMFRBUUgvQkFJd0FEQWRCZ05WSFE0RUZnUVVKMkpwNm1YYzhSQVdqRHkxClFMNC9CMXg4aEZJd0h3WURWUjBqQkJnd0ZvQVVRb2liOFFELzJBRkRpeXBjQUxNSCtSM2oyNDh3SVFZRFZSMFIKQkJvd0dJY0Vmd0FBQVljRUNnQUFLSWNFQ2dBQUtZY0VDZ0FBS2pBTkJna3Foa2lHOXcwQkFRc0ZBQU9DQVFFQQpxYXdYaWxaRDdGNVVWMmdUNktXakZmQ0pLckFkdG9UcXhucVQ0b0tPQnE5cDhTcjVmYlo4NzA2MkpaWFh6ZEdvCkp5NHFGZ29DajZyWXE3c3NtaEt6ZDU1a2hhSy8zcEp3dE81Z1NVbmVSVlNmb1IrbjNlaVhncU9GWFFuSUVXdnMKZzVDMHZkTUFleEtwZGJURDlKZ2tYZ3gwd1N1V09UZjdIR3IySEFEa3Zxdk5iV3d3NUljcFRtL1luYmxvNG04UgpkbkkwZEJ1ek1Wdkt0MFU4L05oN0RSU3dTZkkzNnUwUy9SYUNzSTlGQlNPb0svTktpaWdDQjVRdGUyU1hrenNsCm51cGxpb3BTS2xQck9QTjJ2VmhHRU15OVRrdSt0TlJJKzFkd1pCTnc5VSsrbTZtaFErbjFXZXZvNDArSHMwSHYKenlqanlsMDJmMllUdG95cnRrNndjZz0
9Ci0tLS0tRU5EIENFUlRJRklDQVRFLS0tLS0K

client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFb3dJQkFBS0NBUUVBdW1sSExFSTV4QSsvZmNuMVdIRmpET2d0L3hLTHdaRnk1d0Nvb2IxMFFNR2VUNkRlCnJkTXVWMjJTTW1vc2VLd2J6Z1d3SjdCdHRkcnVSenFkRkxGdERpc0pnRlZxNGVZa3Z3VWh4RTV1aWhEdmZmZHkKUW0wN3FIeXdwcWxRTng5Ukt2OW5pZExrUGVVTlhFSjJ2NVFsZ2J4NW9GSWMvcytvSzRORjAyaHk4K2dLUCtvZgppRis2UVdFTW8vR3c2ZWozSjNNZXV1L2FWSkdvTXYyYlRBaHAyZ2V1L3hVenE1ZEJzS2s1MGt4ck5QY2JCOTQyCk12ZFF4eDJKcklESEhoUkI1R1dFbllQeFhnT0lMMHBybzVxZ3BDVVdpOUZNSjRBTFR6MHBXYmVIZ1FIcklsdkkKYms1eFVkRVRhVEJDQVhPKzQ3cDVKZ0tFVGpUVDZwYUhZSE5GYndJREFRQUJBb0lCQVFDMEIwcTZYcmNsTjhSTApPb21kUWR4VU1jT0NUU24xNW4rZXd3OFpMVHdoOGh2dmNVQzloVytDOWdvMGNEL0V4d3NQWElUMHY3b2s0R3d4CkZGVnlENnh2KzNad240M2Ezd1pzQ1F2RVo2N3YzazA5VFlYbXkxSExkYWl4UEdHQTZ0amIrcy9HMW9xaGtCM28KRlRSVDcwS04yalZvZFFVVnZmei9FUWVWbFpFM0ppRExvT1B6ZEhxODBHeHhQcWJXanhaMkMvWnZLZG14bFdzbQp4Y0dUM2xFWHlrSVp6NlZqd3lhNHJPV2IzMEllaUgrbTJ3OHhjUWxWcnVpQUpKWXF3Sm5ZM2pOU3lDRVVOdUJKCk5xTVl6ZmFDalBEb0UyZzVIS2EySWN6RWpxNElYbHJzb29HRjBlNGUwWEpsMHlTY0g0VkR3WjFWS3VkNVVJME4KZDJkUHNBcXBBb0dCQVBKbWE0bnFoU0MvUCtrUmJKR3d0dnFzUHlVcS9GcTFTQStaMjVjUURnRFZoSzNGVnFDbwpiZ1BLOTB4UkxGSkU5MlhOVy9CT2ZVcDRweE1lb0hnU1BxTGhDZSs5Y1RQQ1FGOGhESzFPNjlSMDhNOWxVb2VVClFzdEM5ZmlRcEt4Zk1iaWhLNGxFNnA0cEVyeDBhR0hmYnIzL00wWmtKalVqY0R1TGc1eVJNWjVEQW9HQkFNVGUKc1hLd2ZHc2dkQ3ZTSDByeEI4V1hJVHpwOEt2L3F2REVkTEZFYTNxOHFCUXFvV2M1RlNWV3NWMUl2VEJXT2NYSwpReFk1VVNSVHhzY1FPVXBlaEVyVlZENWtXVCtEbmFQUHMrQU92dmlUcE5vSWEvVjZMK0FKUkV1RDZuZXJUR3FWCmpUdTU5eG1hTXRWdG54dC82SC9CelF5dmdUNmt4cEZJOThZMmhBZGxBb0dBVEN1bkMxV29zOXVsUjZYMENld1AKODhHQXJqdE54V3RGMDdFemNjclh1NmRjNUFZbzdKOUF3dXhhdlo2Y1lOWFBNQ3hTQWJlSVk0aDZaK1d0NDAxSQpaWUoxenVJbTJtN21MMzZCTDB5bmlzR2NrbTl5ZWF3N09RZzNwdjQ4NFBXZytEV2RLcXQvVm1mdHZVNlBKb0pCCm1HN0RQQkZvZURaRXBGRjQ4QkFvR1dVQ2dZQmQ1UHhxLytPSFVHWTMxREtha3BTclY2WkJvQzNxU3JranRmOFYKNE5VR0o5NWVKK3J0Q1Z1ZGdGaDlia2pWT2ZxNTYvck5LYThhalY1YjZNLzZPVlFOUU91NkNqQkt5Nkl1MDh3dAppN3JuWWJ1WlJiVC8walB0UFY0MlNnZFU1ZjAvUkc2azB0QVloT1BEeVZHK1V1WDNzTjMwTSt5SGpSMHJnOHF3CjNhVmd4UUtCZ0N4M3VHS1UzU2hTOGxETlh
lTHA2eFJyREJ6c2V6ZTExNWxSdkUydWx6bzZLNWVvNWN1M3M1a0wKYWsvT3hQUDZQanA5R0ZiYkdXNDM4UUFNakp0bVA2UjBZVVVHUkJkalViQko4OHV5MTBFaDZTbWlqM0JmM0phdwozemZiS1F5WWxLRGx2THIveUVxaTVIaU5xTmRZTWhFWjBYd0VSMlJMbk1YWkJPV29McVBrCi0tLS0tRU5EIFJTQSBQUklWQVRFIEtFWS0tLS0tCg==

[root@k8s-master01 k8s-work]# kubectl config set-context system:kube-controller-manager --cluster=kubernetes --user=system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

[root@k8s-master01 k8s-work]# cat kube-controller-manager.kubeconfig

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURtakNDQW9LZ0F3SUJBZ0lVT2dyZGNuYXRLcmp1WCs3dlFRaFg2TjErL0Qwd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURreE1UQTNNRGN3TUZvWERUTTBNRGt3T1RBM01EY3dNRm93WlRFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RURBTwpCZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByZFdKbGNtNWxkR1Z6Ck1JSUJJakFOQmdrcWhraUc5dzBCQVFFRkFBT0NBUThBTUlJQkNnS0NBUUVBdEJwcmxVZGJ2T1dKcE1PcDdjcXIKK29OakRMYUhhN1ZtVStIaEVDQ1pWUWtkMWl2TmVXWXF5TjUxNlU3d3hqdk5jR1pzYkZkVzVaSjdIU1JjRS9RYgpNV2t1OEZheEo0NHpKWSttM1NKL2g0R2UrL0tONWhVeEUyWjhWbDMzTTd0ZlJVTzlQOG9YdDZWSG82L1JHai91Cmp3SXBWbzU5dmZnbDA3SlNjaGtCT200bGhvRjJCeWx3TkZEVUg3cmJYV0c0T1JieWhoSGpsMzcxd0RpZjliazcKMjE4WXpXdFRIV3JqS3g0dEJjR21KTk1TbDNMLzVpYWx3ZGVRczlQN1Z5OG1FdFlxRHpOSktZQVFNRzRJSHJrSAo1SElrZnBwSGpaRGF6Vzdtb3pjL1VISnZYcnlvYVNJSnhyNDVVaEN0MEEzOFc4NlQrcTVWUDNiYjl1aVdxZjAwCm1RSURBUUFCbzBJd1FEQU9CZ05WSFE4QkFmOEVCQU1DQVFZd0R3WURWUjBUQVFIL0JBVXdBd0VCL3pBZEJnTlYKSFE0RUZnUVVRb2liOFFELzJBRkRpeXBjQUxNSCtSM2oyNDh3RFFZSktvWklodmNOQVFFTEJRQURnZ0VCQUVHbgpra1hLcGpaM0k1elprYlVxU3BNUDA3ZGhKa1UxWFJhUFJoNVZ0ZUtyRGxtOU1YQzNhLy9DUFJCNk1ScG01YUZoClRxZ3I4aVZzbEl5YXd5ZkdRUkdrdklDbjMzdWxuR1R6Mm8zdXVnTDJSS0ZqclNVL2pqQmFqQ3NUbjdxenA1a0QKTG1qZk9RZWh4KzZQNXhTem92NlJaU1czQnZQZG5HRDRndFZoQUJSS2N6U0J1dHd2WW5JUXg1QXlsaS9jdlBpcgprSTVKdEphajUybTBZbG8vOWN3ZFlkbStGaWViTmlmRGhyMnNGdHZPWjcyaEoxUGR2YmsxRmh6VldjOTRZR0sxCnNXME1rWGxMWkdrYTI1Mm9Yd3RXZWtGV0ErWlBKeTZRSVd5L3pxdHd1WDZaOTdsZlIvSFlMUGRlaGFObkFDMEoKcEVUQnJvWTNuTERiTHdhd1RVaz0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

server: https://10.0.0.47:8443

name: kubernetes

contexts:

- context:

cluster: kubernetes

user: system:kube-controller-manager

name: system:kube-controller-manager

current-context: ""

kind: Config

preferences: {}

users:

- name: system:kube-controller-manager

user:

client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUVMRENDQXhTZ0F3SUJBZ0lVRnNuQ00vUGFzKzNSbnUxL0ZJd01MN3RVdGRRd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURreE1UQTRNell3TUZvWERUTTBNRGt3T1RBNE16WXdNRm93Z1pReEN6QUoKQmdOVkJBWVRBa05PTVJBd0RnWURWUVFJRXdkQ1pXbHFhVzVuTVJBd0RnWURWUVFIRXdkQ1pXbHFhVzVuTVNjdwpKUVlEVlFRS0V4NXplWE4wWlcwNmEzVmlaUzFqYjI1MGNtOXNiR1Z5TFcxaGJtRm5aWEl4RHpBTkJnTlZCQXNUCkJuTjVjM1JsYlRFbk1DVUdBMVVFQXhNZWMzbHpkR1Z0T210MVltVXRZMjl1ZEhKdmJHeGxjaTF0WVc1aFoyVnkKTUlJQklqQU5CZ2txaGtpRzl3MEJBUUVGQUFPQ0FROEFNSUlCQ2dLQ0FRRUF1bWxITEVJNXhBKy9mY24xV0hGagpET2d0L3hLTHdaRnk1d0Nvb2IxMFFNR2VUNkRlcmRNdVYyMlNNbW9zZUt3YnpnV3dKN0J0dGRydVJ6cWRGTEZ0CkRpc0pnRlZxNGVZa3Z3VWh4RTV1aWhEdmZmZHlRbTA3cUh5d3BxbFFOeDlSS3Y5bmlkTGtQZVVOWEVKMnY1UWwKZ2J4NW9GSWMvcytvSzRORjAyaHk4K2dLUCtvZmlGKzZRV0VNby9HdzZlajNKM01ldXUvYVZKR29NdjJiVEFocAoyZ2V1L3hVenE1ZEJzS2s1MGt4ck5QY2JCOTQyTXZkUXh4MkpySURISGhSQjVHV0VuWVB4WGdPSUwwcHJvNXFnCnBDVVdpOUZNSjRBTFR6MHBXYmVIZ1FIcklsdkliazV4VWRFVGFUQkNBWE8rNDdwNUpnS0VUalRUNnBhSFlITkYKYndJREFRQUJvNEdqTUlHZ01BNEdBMVVkRHdFQi93UUVBd0lGb0RBZEJnTlZIU1VFRmpBVUJnZ3JCZ0VGQlFjRApBUVlJS3dZQkJRVUhBd0l3REFZRFZSMFRBUUgvQkFJd0FEQWRCZ05WSFE0RUZnUVVKMkpwNm1YYzhSQVdqRHkxClFMNC9CMXg4aEZJd0h3WURWUjBqQkJnd0ZvQVVRb2liOFFELzJBRkRpeXBjQUxNSCtSM2oyNDh3SVFZRFZSMFIKQkJvd0dJY0Vmd0FBQVljRUNnQUFLSWNFQ2dBQUtZY0VDZ0FBS2pBTkJna3Foa2lHOXcwQkFRc0ZBQU9DQVFFQQpxYXdYaWxaRDdGNVVWMmdUNktXakZmQ0pLckFkdG9UcXhucVQ0b0tPQnE5cDhTcjVmYlo4NzA2MkpaWFh6ZEdvCkp5NHFGZ29DajZyWXE3c3NtaEt6ZDU1a2hhSy8zcEp3dE81Z1NVbmVSVlNmb1IrbjNlaVhncU9GWFFuSUVXdnMKZzVDMHZkTUFleEtwZGJURDlKZ2tYZ3gwd1N1V09UZjdIR3IySEFEa3Zxdk5iV3d3NUljcFRtL1luYmxvNG04UgpkbkkwZEJ1ek1Wdkt0MFU4L05oN0RSU3dTZkkzNnUwUy9SYUNzSTlGQlNPb0svTktpaWdDQjVRdGUyU1hrenNsCm51cGxpb3BTS2xQck9QTjJ2VmhHRU15OVRrdSt0TlJJKzFkd1pCTnc5VSsrbTZtaFErbjFXZXZvNDArSHMwSHYKenlqanlsMDJmMllUdG95cnRrNndjZz0
9Ci0tLS0tRU5EIENFUlRJRklDQVRFLS0tLS0K

client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFb3dJQkFBS0NBUUVBdW1sSExFSTV4QSsvZmNuMVdIRmpET2d0L3hLTHdaRnk1d0Nvb2IxMFFNR2VUNkRlCnJkTXVWMjJTTW1vc2VLd2J6Z1d3SjdCdHRkcnVSenFkRkxGdERpc0pnRlZxNGVZa3Z3VWh4RTV1aWhEdmZmZHkKUW0wN3FIeXdwcWxRTng5Ukt2OW5pZExrUGVVTlhFSjJ2NVFsZ2J4NW9GSWMvcytvSzRORjAyaHk4K2dLUCtvZgppRis2UVdFTW8vR3c2ZWozSjNNZXV1L2FWSkdvTXYyYlRBaHAyZ2V1L3hVenE1ZEJzS2s1MGt4ck5QY2JCOTQyCk12ZFF4eDJKcklESEhoUkI1R1dFbllQeFhnT0lMMHBybzVxZ3BDVVdpOUZNSjRBTFR6MHBXYmVIZ1FIcklsdkkKYms1eFVkRVRhVEJDQVhPKzQ3cDVKZ0tFVGpUVDZwYUhZSE5GYndJREFRQUJBb0lCQVFDMEIwcTZYcmNsTjhSTApPb21kUWR4VU1jT0NUU24xNW4rZXd3OFpMVHdoOGh2dmNVQzloVytDOWdvMGNEL0V4d3NQWElUMHY3b2s0R3d4CkZGVnlENnh2KzNad240M2Ezd1pzQ1F2RVo2N3YzazA5VFlYbXkxSExkYWl4UEdHQTZ0amIrcy9HMW9xaGtCM28KRlRSVDcwS04yalZvZFFVVnZmei9FUWVWbFpFM0ppRExvT1B6ZEhxODBHeHhQcWJXanhaMkMvWnZLZG14bFdzbQp4Y0dUM2xFWHlrSVp6NlZqd3lhNHJPV2IzMEllaUgrbTJ3OHhjUWxWcnVpQUpKWXF3Sm5ZM2pOU3lDRVVOdUJKCk5xTVl6ZmFDalBEb0UyZzVIS2EySWN6RWpxNElYbHJzb29HRjBlNGUwWEpsMHlTY0g0VkR3WjFWS3VkNVVJME4KZDJkUHNBcXBBb0dCQVBKbWE0bnFoU0MvUCtrUmJKR3d0dnFzUHlVcS9GcTFTQStaMjVjUURnRFZoSzNGVnFDbwpiZ1BLOTB4UkxGSkU5MlhOVy9CT2ZVcDRweE1lb0hnU1BxTGhDZSs5Y1RQQ1FGOGhESzFPNjlSMDhNOWxVb2VVClFzdEM5ZmlRcEt4Zk1iaWhLNGxFNnA0cEVyeDBhR0hmYnIzL00wWmtKalVqY0R1TGc1eVJNWjVEQW9HQkFNVGUKc1hLd2ZHc2dkQ3ZTSDByeEI4V1hJVHpwOEt2L3F2REVkTEZFYTNxOHFCUXFvV2M1RlNWV3NWMUl2VEJXT2NYSwpReFk1VVNSVHhzY1FPVXBlaEVyVlZENWtXVCtEbmFQUHMrQU92dmlUcE5vSWEvVjZMK0FKUkV1RDZuZXJUR3FWCmpUdTU5eG1hTXRWdG54dC82SC9CelF5dmdUNmt4cEZJOThZMmhBZGxBb0dBVEN1bkMxV29zOXVsUjZYMENld1AKODhHQXJqdE54V3RGMDdFemNjclh1NmRjNUFZbzdKOUF3dXhhdlo2Y1lOWFBNQ3hTQWJlSVk0aDZaK1d0NDAxSQpaWUoxenVJbTJtN21MMzZCTDB5bmlzR2NrbTl5ZWF3N09RZzNwdjQ4NFBXZytEV2RLcXQvVm1mdHZVNlBKb0pCCm1HN0RQQkZvZURaRXBGRjQ4QkFvR1dVQ2dZQmQ1UHhxLytPSFVHWTMxREtha3BTclY2WkJvQzNxU3JranRmOFYKNE5VR0o5NWVKK3J0Q1Z1ZGdGaDlia2pWT2ZxNTYvck5LYThhalY1YjZNLzZPVlFOUU91NkNqQkt5Nkl1MDh3dAppN3JuWWJ1WlJiVC8walB0UFY0MlNnZFU1ZjAvUkc2azB0QVloT1BEeVZHK1V1WDNzTjMwTSt5SGpSMHJnOHF3CjNhVmd4UUtCZ0N4M3VHS1UzU2hTOGxETlh
lTHA2eFJyREJ6c2V6ZTExNWxSdkUydWx6bzZLNWVvNWN1M3M1a0wKYWsvT3hQUDZQanA5R0ZiYkdXNDM4UUFNakp0bVA2UjBZVVVHUkJkalViQko4OHV5MTBFaDZTbWlqM0JmM0phdwozemZiS1F5WWxLRGx2THIveUVxaTVIaU5xTmRZTWhFWjBYd0VSMlJMbk1YWkJPV29McVBrCi0tLS0tRU5EIFJTQSBQUklWQVRFIEtFWS0tLS0tCg==

[root@k8s-master01 k8s-work]# kubectl config use-context system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig
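After `use-context`, the `current-context` field in the kubeconfig should no longer be empty; the `cat` output above was captured before this step and still shows `""`. `kubectl config current-context --kubeconfig=kube-controller-manager.kubeconfig` reports the value directly. The sketch below is a kubectl-free alternative that reads the field from the file. The function name `kubeconfig_current_context` is hypothetical and not part of the original procedure.

```shell
#!/bin/sh
# Hypothetical helper (an assumption, not from the original steps):
# print the top-level "current-context:" value of a kubeconfig file.
kubeconfig_current_context() {
  # Match only the unindented key, then strip optional double quotes.
  awk '/^current-context:/ { gsub(/"/, "", $2); print $2 }' "$1"
}
```

Run in the `k8s-work` directory as `kubeconfig_current_context kube-controller-manager.kubeconfig`, this is expected to print `system:kube-controller-manager` once the `use-context` command has succeeded.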

6.6.4 Create the kube-controller-manager service configuration file

[root@k8s-master01 k8s-work]# cat > /etc/kubernetes/kube-controller-manager.conf << "EOF"

KUBE_CONTROLLER_MANAGER_OPTS=" \

--secure-port=10257 \

--bind-address=0.0.0.0 \

--kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \

--service-cluster-ip-range=10.96.0.0/16 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/etc/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--allocate-node-cidrs=true \

--cluster-cidr=10.244.0.0/16 \

--root-ca-file=/etc/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/etc/kubernetes/ssl/ca-key.pem \

--leader-elect=true \

--feature-gates=RotateKubeletServerCertificate=true \

--controllers=*,bootstrapsigner,tokencleaner \

--tls-cert-file=/etc/kubernetes/ssl/kube-controller-manager.pem \

--tls-private-key-file=/etc/kubernetes/ssl/kube-controller-manager-key.pem \

--use-service-account-credentials=true \

--v=2"

EOF
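The options above point at several files under `/etc/kubernetes` (the kubeconfig, the CA pair, and the component's TLS certificate and key). If any of them is missing, the service will likely fail at startup. As a minimal sketch, the helper below extracts every `--flag=/absolute/path` value from the conf file and reports paths that do not exist. The function name `check_conf_paths` is hypothetical, and the sketch assumes paths contain no spaces.

```shell
#!/bin/sh
# Hypothetical helper (an assumption, not from the original steps):
# report file paths referenced as --flag=/path in a conf file that do not exist.
check_conf_paths() {
  conf="$1"
  missing=0
  # grep -o keeps only the "=/path" part; cut drops the leading "=".
  for path in $(grep -oE '=/[^ "\\]+' "$conf" | cut -c2-); do
    if [ ! -e "$path" ]; then
      echo "missing: $path"
      missing=1
    fi
  done
  # Return 0 when every referenced path exists, 1 otherwise.
  return "$missing"
}
```

For example, `check_conf_paths /etc/kubernetes/kube-controller-manager.conf` can be run on each master node after the certificates and kubeconfig have been copied into place. Values such as `10.96.0.0/16` are ignored because they do not start with `/`.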

[root@k8s-master02 ~]# cat > /etc/kubernetes/kube-controller-manager.conf << "EOF"

KUBE_CONTROLLER_MANAGER_OPTS=" \