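Setting Up a Yarn HA Cluster

Prerequisites

Make sure the HDFS HA environment is already set up and running on the hosts.

Step 1: Edit the mapred-site.xml configuration file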

[root@node-01 ~]# cd /root/apps/hadoop-3.2.1/etc/hadoop/

[root@node-01 hadoop]# vim mapred-site.xml

<configuration>

<!-- Run MapReduce programs on yarn (defaults to local mode if not set) -->

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<!-- Classpath for MapReduce applications (hadoop configuration and jar directories) -->

<property>

<name>mapreduce.application.classpath</name>

<value>/root/apps/hadoop-3.2.1/etc/hadoop:/root/apps/hadoop-3.2.1/share/hadoop/common/lib/*:/root/apps/hadoop-3.2.1/share/hadoop/common/*:/root/apps/hadoop-3.2.1/share/hadoop/hdfs:/root/apps/hadoop-3.2.1/share/hadoop/hdfs/lib/*:/root/apps/hadoop-3.2.1/share/hadoop/hdfs/*:/root/apps/hadoop-3.2.1/share/hadoop/mapreduce/lib/*:/root/apps/hadoop-3.2.1/share/hadoop/mapreduce/*:/root/apps/hadoop-3.2.1/share/hadoop/yarn:/root/apps/hadoop-3.2.1/share/hadoop/yarn/lib/*:/root/apps/hadoop-3.2.1/share/hadoop/yarn/*</value>

</property>

</configuration>
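The classpath value above repeats the install path eleven times, so a typo is easy to make. As a minimal sketch, the same string could be generated from the install path and pasted into the `<value>` element. `build_mr_classpath` is a hypothetical helper name used for illustration, not a Hadoop command.

```shell
# Sketch: build the mapreduce.application.classpath value from one install path.
# build_mr_classpath is a hypothetical helper, not part of Hadoop.
build_mr_classpath() {
  home=$1
  share=$home/share/hadoop
  # The configuration directory comes first, then the jar directories.
  printf '%s' "$home/etc/hadoop"
  for sub in 'common/lib/*' 'common/*' hdfs 'hdfs/lib/*' 'hdfs/*' \
             'mapreduce/lib/*' 'mapreduce/*' yarn 'yarn/lib/*' 'yarn/*'; do
    # Quoted on purpose: the * must reach the XML file unexpanded.
    printf ':%s' "$share/$sub"
  done
  printf '\n'
}

build_mr_classpath /root/apps/hadoop-3.2.1
```

On a node where Hadoop is installed, the `hadoop classpath` command prints the classpath Hadoop itself uses, which is a useful cross-check.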

Step 2: Edit the yarn-env.sh configuration file

[root@node-01 ~]# cd /root/apps/hadoop-3.2.1/etc/hadoop

[root@node-01 hadoop]# echo 'export JAVA_HOME=${JAVA_HOME}' >> yarn-env.sh
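Because of the single quotes, the line appended to yarn-env.sh is the literal text `export JAVA_HOME=${JAVA_HOME}`, so it only re-exports whatever JAVA_HOME is already set when the daemons start. It may therefore be worth confirming that the variable really is set on every node. A minimal sketch, where `check_java_home` is a hypothetical helper name:

```shell
# Hypothetical helper: fail early if JAVA_HOME is empty or has no bin/java.
check_java_home() {
  if [ -z "${JAVA_HOME:-}" ]; then
    echo "JAVA_HOME is not set" >&2
    return 1
  fi
  if [ ! -x "$JAVA_HOME/bin/java" ]; then
    echo "no executable at $JAVA_HOME/bin/java" >&2
    return 1
  fi
  echo "JAVA_HOME=$JAVA_HOME"
}

# Usage on each node:
#   check_java_home || echo "fix JAVA_HOME before starting yarn"
```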

Step 3: Edit the yarn-site.xml configuration file

[root@node-01 ~]# cd /root/apps/hadoop-3.2.1/etc/hadoop/

[root@node-01 hadoop]# vim yarn-site.xml

<configuration>

<!-- Auxiliary service run on each NodeManager (the shuffle service that reduce tasks use to fetch map output) -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<!-- Cluster id that identifies the yarn cluster -->

<property>

<name>yarn.resourcemanager.cluster-id</name>

<value>yarncluster</value>

</property>

<!-- Enable yarn HA (high availability) -->

<property>

<name>yarn.resourcemanager.ha.enabled</name>

<value>true</value>

</property>

<!-- Logical ids of the resourcemanagers -->

<property>

<name>yarn.resourcemanager.ha.rm-ids</name>

<value>rm1,rm2</value>

</property>

<!-- Hostname on which resourcemanager rm1 runs -->

<property>

<name>yarn.resourcemanager.hostname.rm1</name>

<value>node-01</value>

</property>

<!-- Hostname on which resourcemanager rm2 runs -->

<property>

<name>yarn.resourcemanager.hostname.rm2</name>

<value>node-02</value>

</property>

<!-- Web UI address of resourcemanager rm1 -->

<property>

<name>yarn.resourcemanager.webapp.address.rm1</name>

<value>node-01:8088</value>

</property>

<!-- Web UI address of resourcemanager rm2 -->

<property>

<name>yarn.resourcemanager.webapp.address.rm2</name>

<value>node-02:8088</value>

</property>

<!-- Addresses of the zk (ZooKeeper) ensemble -->

<property>

<name>hadoop.zk.address</name>

<value>node-01:2181,node-02:2181,node-03:2181</value>

</property>

<!-- Enable automatic state recovery when a resourcemanager restarts -->

<property>

<name>yarn.resourcemanager.recovery.enabled</name>

<value>true</value>

</property>

<!-- Three persistent StateStore implementations are available, backed by zookeeper, HDFS, and leveldb; an HA cluster should use ZKRMStateStore -->

<property>

<name>yarn.resourcemanager.store.class</name>

<value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>

</property>

<!-- Enable automatic detection of hardware capabilities (off by default) -->

<property>

<name>yarn.nodemanager.resource.detect-hardware-capabilities</name>

<value>true</value>

</property>

<!-- Memory (in MB) and CPU vcores that each nodemanager makes available to containers -->

<property>

<name>yarn.nodemanager.resource.memory-mb</name>

<value>1024</value>

</property>

<property>

<name>yarn.nodemanager.resource.cpu-vcores</name>

<value>1</value>

</property>

</configuration>
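Before distributing the file, it can help to read a few key values back out of it and confirm they are what was intended. The sketch below is a line-based lookup, not a real XML parser: it assumes each `<name>` and each `<value>` element sits entirely on one line, as in the listing above. `get_prop` is a hypothetical helper name.

```shell
# get_prop FILE NAME: print the <value> that follows <name>NAME</name>.
# Hypothetical helper; assumes every <name> and <value> fits on one line.
get_prop() {
  awk -v want="$2" '
    /<name>/ {
      n = $0
      sub(/.*<name>/, "", n)
      sub(/<\/name>.*/, "", n)
      hit = (n == want)          # remember whether this is the property we want
    }
    hit && /<value>/ {
      v = $0
      sub(/.*<value>/, "", v)
      sub(/<\/value>.*/, "", v)
      print v
      exit
    }
  ' "$1"
}

# Usage, from the etc/hadoop directory:
#   get_prop yarn-site.xml yarn.resourcemanager.ha.rm-ids
#   get_prop yarn-site.xml hadoop.zk.address
```

With the configuration shown above, the first call should print `rm1,rm2` and the second the three ZooKeeper addresses.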

Step 4: Copy the modified configuration files to the other nodes with scp

[root@node-01 ~]# cd /root/apps/hadoop-3.2.1/etc/hadoop/

[root@node-01 hadoop]# scp mapred-site.xml node-02:$PWD

[root@node-01 hadoop]# scp mapred-site.xml node-03:$PWD

[root@node-01 hadoop]# scp yarn-env.sh node-02:$PWD

[root@node-01 hadoop]# scp yarn-env.sh node-03:$PWD

[root@node-01 hadoop]# scp yarn-site.xml node-02:$PWD

[root@node-01 hadoop]# scp yarn-site.xml node-03:$PWD
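The six scp commands follow one pattern (three files, two hosts), so they could also be written as a loop. The sketch below only prints the commands by default so they can be reviewed first. `sync_conf` and the `DRY_RUN` variable are hypothetical names introduced for illustration.

```shell
# Hypothetical helper: print (or run) one scp per file and host.
# DRY_RUN defaults to 1 (print only); set DRY_RUN=0 to actually copy.
sync_conf() {
  conf_dir=$1
  shift
  for host in "$@"; do
    for f in mapred-site.xml yarn-env.sh yarn-site.xml; do
      if [ "${DRY_RUN:-1}" = 1 ]; then
        echo "scp $conf_dir/$f $host:$conf_dir/"
      else
        scp "$conf_dir/$f" "$host:$conf_dir/"
      fi
    done
  done
}

sync_conf /root/apps/hadoop-3.2.1/etc/hadoop node-02 node-03
```

Using the absolute directory instead of `$PWD` means the result does not depend on which directory the command is run from.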

Step 5: Start the yarn cluster

[root@node-01 ~]# start-yarn.sh

stop-yarn.sh: stops the yarn cluster

Step 6: Check the yarn processes with jps

[root@node-01 ~]# jps

16800 ResourceManager

12050 NameNode

11878 JournalNode

12362 DFSZKFailoverController

11739 QuorumPeerMain

16941 NodeManager

12174 DataNode

[root@node-02 ~]# jps

11616 JournalNode

13492 ResourceManager

11926 DataNode

11803 NameNode

11452 QuorumPeerMain

12046 DFSZKFailoverController

# Manually start the nodemanager process on node-02 and node-03

[root@node-02 ~]# yarn --daemon start nodemanager

[root@node-03 ~]# yarn --daemon start nodemanager

yarn --daemon stop nodemanager: stops the nodemanager process
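Reading jps listings by eye works for three nodes but is easy to get wrong. As a rough sketch, the output can be piped into a small check that reports which expected daemons are absent. `check_daemons` is a hypothetical helper name. It reads jps-style lines (pid, then class name) on standard input.

```shell
# check_daemons NAME...: read jps output on stdin and report missing daemons.
# Hypothetical helper; returns 0 only if every named daemon is present.
check_daemons() {
  running=$(awk '{print $2}')   # second column of jps output is the class name
  missing=0
  for d in "$@"; do
    if ! printf '%s\n' "$running" | grep -qx "$d"; then
      echo "missing: $d"
      missing=1
    fi
  done
  return $missing
}

# Usage on a node:
#   jps | check_daemons ResourceManager NodeManager
```

Applied to the node-02 listing above, which was taken before the nodemanager was started by hand, this would report `missing: NodeManager`.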

Step 7: View the yarn web UI in a browser

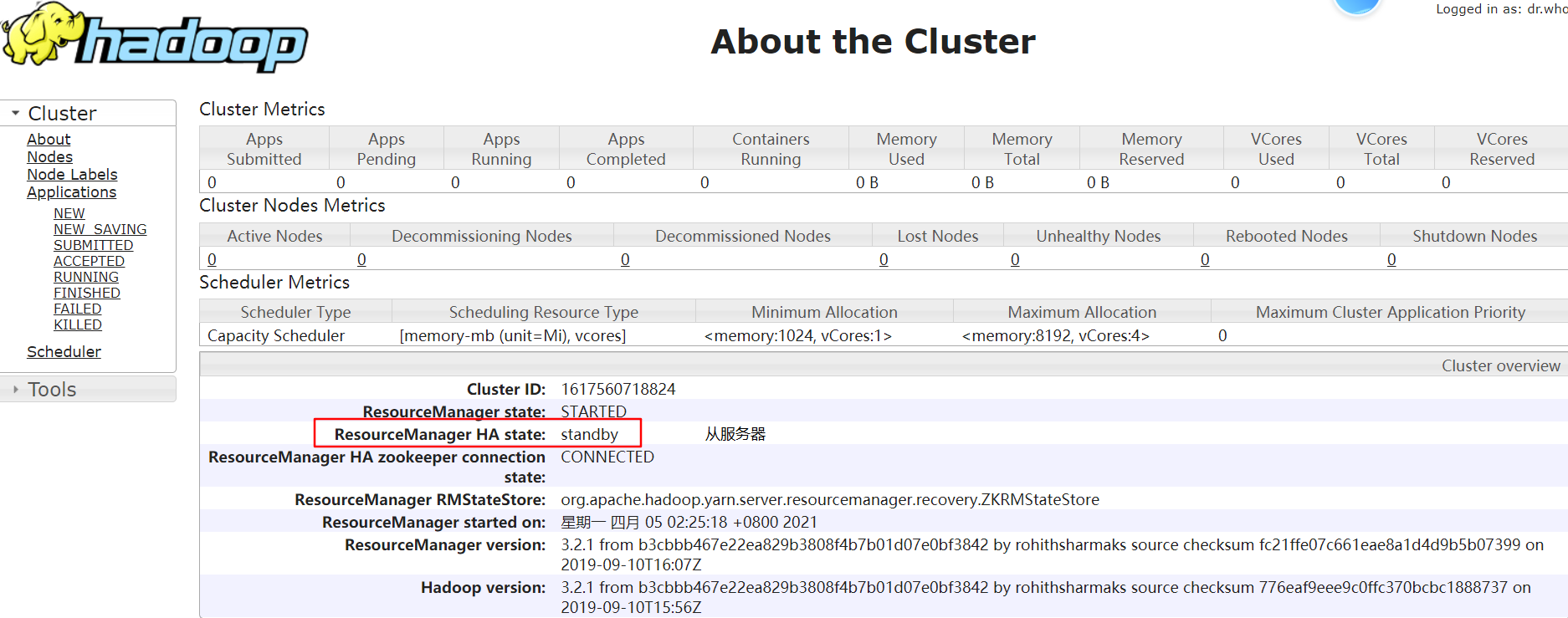

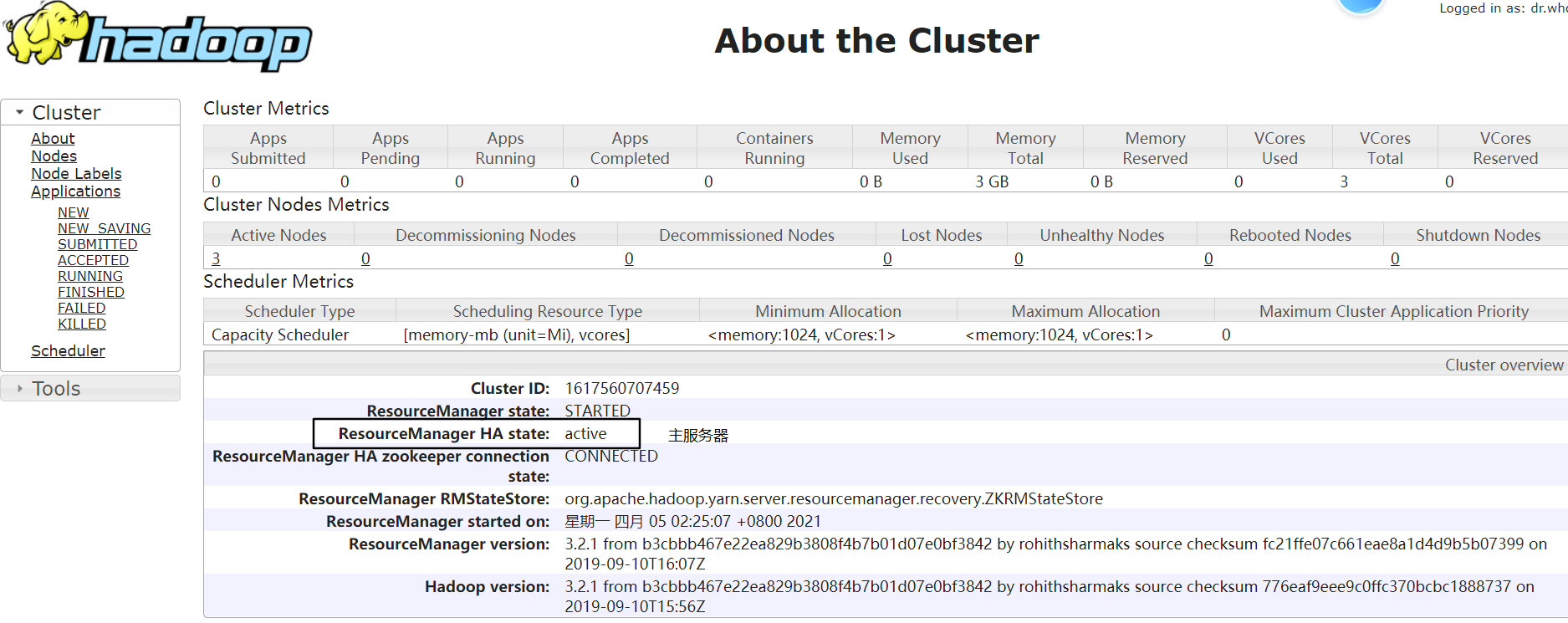

node-01:http://192.168.229.21:8088/cluster/cluster

node-02:http://192.168.229.22:8088/cluster/cluster

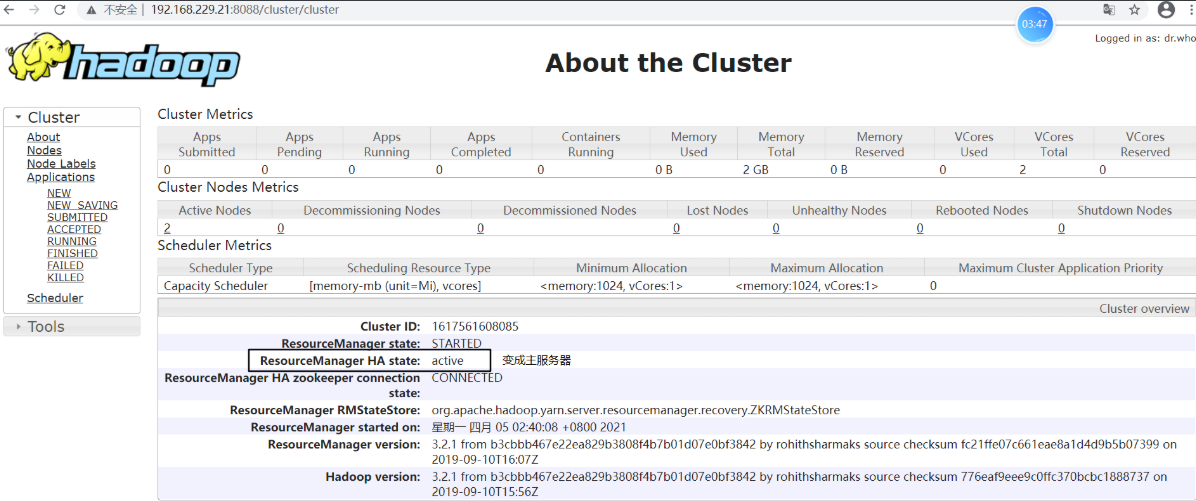

Step 8: Test ResourceManager failover

# Stop the resourcemanager process on node-02

[root@node-02 logs]# yarn --daemon stop resourcemanager

Open node-01 at http://192.168.229.21:8088/cluster/cluster. Its state has changed from standby to active, which shows that failover has taken place.

Start the resourcemanager process on node-02 again:

[root@node-02 logs]# yarn --daemon start resourcemanager

The resourcemanager on node-02 now comes up in the standby state, and the failover test is complete.
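The active/standby state can also be read from the command line with `yarn rmadmin -getServiceState`, which avoids switching between browser tabs during the test. A minimal sketch that loops over the resourcemanager ids follows. `rm_states` and the `YARN_CMD` override variable are hypothetical names, the latter only there so the function can be tried with a stand-in command.

```shell
# rm_states ID...: print "<id> <state>" for each resourcemanager id.
# Hypothetical helper; YARN_CMD is an assumed override, defaulting to yarn.
rm_states() {
  for id in "$@"; do
    # A stopped resourcemanager makes the command fail, reported as unreachable.
    state=$("${YARN_CMD:-yarn}" rmadmin -getServiceState "$id" 2>/dev/null) || state=unreachable
    echo "$id $state"
  done
}

# Usage with the ids configured in yarn-site.xml:
#   rm_states rm1 rm2
```

Run while the resourcemanager on node-02 is stopped, rm2 should be reported as unreachable. After it is started again, the expectation from the test above is rm1 active and rm2 standby.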

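Step 9: Run the wordcount program on the Yarn cluster

Package the wordcount program as a jar, upload it, and run it.

Command format for running a MapReduce program: hadoop jar xxxx.jar fully.qualified.ClassName (the package and name of the class that contains the main method)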

[root@node-01 ~]# ll

total 138368

drwxr-xr-x. 5 root root 69 Apr 4 23:36 apps

-rw-r--r--. 1 root root 6870038 Apr 8 13:12 MapReduceDemo-1.0-SNAPSHOT.jar

[root@node-01 ~]# hadoop jar MapReduceDemo-1.0-SNAPSHOT.jar wordcount.JobSubmitterLinuxToYarn

2021-04-08 20:00:17,739 INFO mapreduce.Job: Job job_1617883180833_0001 completed successfully # the Job ran successfully
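The job id in the log line maps directly to a yarn application id: the `job_` prefix becomes `application_` and the rest stays the same. That id is what the `yarn application -status` and `yarn logs -applicationId` commands expect. A small sketch of the conversion, where `job_to_app_id` is a hypothetical helper name:

```shell
# job_to_app_id JOB_ID: convert a MapReduce job id to its yarn application id.
# Hypothetical helper; rejects anything that does not start with job_.
job_to_app_id() {
  case $1 in
    job_*) echo "application_${1#job_}" ;;
    *) echo "not a job id: $1" >&2; return 1 ;;
  esac
}

job_to_app_id job_1617883180833_0001   # prints application_1617883180833_0001

# Usage on the cluster:
#   yarn application -status "$(job_to_app_id job_1617883180833_0001)"
```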
