TensorFlow in Practice, Lesson 7 (Solving overfitting with dropout)

Solving overfitting with dropout

Overfitting, also called over-learning or over-fitting, is a common problem in machine learning.

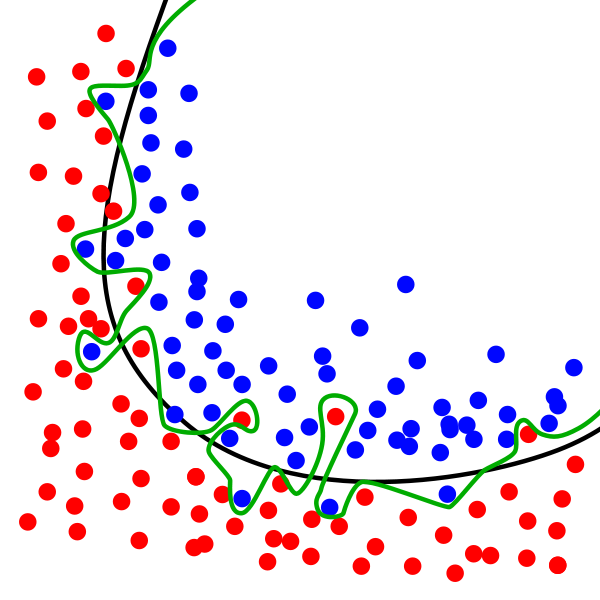

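In the figure, the black curve is a normal model and the green curve is an overfitted model. Although the green curve separates all of the training data precisely, it does not capture the overall structure of the data, so it generalizes poorly to new test data.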

Here is a regression example.

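The third curve suffers from overfitting: although it passes through every training point, it does not reflect the trend of the data, so its predictive ability is severely lacking. TensorFlow provides dropout to address the overfitting problem.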
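To make the regression example concrete, here is a small pure-Python sketch (no TensorFlow needed). The data are made up for illustration: ten training points on the line y = 2x + 1 with a fixed "noise" offset added, and test points halfway between them. A degree-9 polynomial through all ten points (Lagrange interpolation) plays the role of the overfitting curve, and an ordinary least-squares line plays the role of a reasonable model. All function names here are hypothetical helpers, not part of any library.

```python
def lagrange_predict(xs, ys, x):
    """Evaluate the unique degree-(n-1) polynomial through all (xs, ys) at x."""
    total = 0.0
    for j, (xj, yj) in enumerate(zip(xs, ys)):
        basis = 1.0
        for k, xk in enumerate(xs):
            if k != j:
                basis *= (x - xk) / (xj - xk)
        total += yj * basis
    return total

def fit_line(xs, ys):
    """Ordinary least-squares fit y = a*x + b; returns (a, b)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    a = sxy / sxx
    return a, my - a * mx

def mse(pred, target):
    """Mean squared error between two equal-length lists."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(target)

# Hypothetical data: true trend y = 2x + 1, plus fixed noise on the training points
noise = [0.5, -0.4, 0.3, -0.6, 0.2, -0.1, 0.4, -0.5, 0.6, -0.3]
x_train = [float(i) for i in range(10)]
y_train = [2 * x + 1 + e for x, e in zip(x_train, noise)]
x_test = [i + 0.5 for i in range(9)]      # new points between the training points
y_test = [2 * x + 1 for x in x_test]      # the true trend, without noise

a, b = fit_line(x_train, y_train)
line_train = mse([a * x + b for x in x_train], y_train)
line_test = mse([a * x + b for x in x_test], y_test)
poly_train = mse([lagrange_predict(x_train, y_train, x) for x in x_train], y_train)
poly_test = mse([lagrange_predict(x_train, y_train, x) for x in x_test], y_test)

print(f"line:          train MSE={line_train:.4f}  test MSE={line_test:.4f}")
print(f"degree-9 poly: train MSE={poly_train:.4f}  test MSE={poly_test:.4f}")
```

The polynomial reaches zero training error because it passes through every training point, yet its test error is larger than the line's: it has fitted the noise rather than the trend.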

Building the dropout layer

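This lesson uses a dataset that ships with sklearn (scikit-learn), so sklearn needs to be installed. If you don't have it yet, it can be installed with `pip install scikit-learn`. The imports are: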

```python
import tensorflow as tf
from sklearn.datasets import load_digits
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import LabelBinarizer
```

```python
keep_prob = tf.placeholder(tf.float32)
...
...
Wx_plus_b = tf.nn.dropout(Wx_plus_b, keep_prob)
```

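Here keep_prob is the keep probability, i.e. the fraction of the results we keep. It is a placeholder, so its value is fed in when sess.run is called. With keep_prob=1, 100% of the values are kept, which means dropout has no effect.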
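To see what keep_prob does to a layer's outputs, here is a pure-Python sketch of the per-element rule that tf.nn.dropout applies: each value is kept with probability keep_prob and scaled by 1/keep_prob (so the expected value stays the same), otherwise it is set to 0. This is an illustration of the rule, not TensorFlow's actual implementation, and the function name `dropout` is hypothetical. (In TensorFlow 2.x, the second argument of tf.nn.dropout is `rate`, the drop probability, i.e. 1 - keep_prob.)

```python
import random

def dropout(values, keep_prob, rng=random):
    """Inverted dropout on a flat list (a sketch of tf.nn.dropout's per-element rule)."""
    if not 0.0 < keep_prob <= 1.0:
        raise ValueError("keep_prob must be in (0, 1]")
    # rng.random() is uniform on [0, 1), so keep_prob=1 keeps every value
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]

rng = random.Random(42)                          # fixed seed so runs are repeatable
print(dropout([1.0, 2.0, 3.0, 4.0], 1.0))        # keep_prob=1: values pass through unchanged
print(dropout([1.0, 2.0, 3.0, 4.0], 0.5, rng))   # survivors doubled, the rest zeroed

# Averaged over many units, the output keeps roughly the same expected value
out = dropout([1.0] * 10_000, 0.5, rng)
print(sum(out) / len(out))                       # close to 1.0
```

Because keep_prob=1 leaves every value untouched, feeding keep_prob: 1 when evaluating turns dropout off, while keep_prob: 0.5 during training randomly silences about half of the units on each step.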

Next, prepare the data:

```python
digits = load_digits()
X = digits.data
y = digits.target
y = LabelBinarizer().fit_transform(y)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=.3)
```
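For readers curious about what the two sklearn helpers do to the data, here is a pure-Python sketch under simplifying assumptions. `one_hot` mimics how LabelBinarizer encodes a multi-class target such as the ten digit classes (one column per class, classes in sorted order); for a two-class target LabelBinarizer returns a single 0/1 column instead, which this sketch does not reproduce. `split` shuffles the samples and holds out a fraction for testing, rounding the test count up. Both names are hypothetical. Note that train_test_split also shuffles by default, and since the code above passes no random_state, the split differs from run to run.

```python
import math
import random

def one_hot(labels):
    """One-hot encode a multi-class target: one column per class, sorted order."""
    classes = sorted(set(labels))
    index = {c: i for i, c in enumerate(classes)}
    return [[1 if index[lab] == i else 0 for i in range(len(classes))]
            for lab in labels]

def split(X, y, test_size=0.3, seed=0):
    """Shuffle the sample indices, then hold out ceil(test_size * n) for testing."""
    order = list(range(len(X)))
    random.Random(seed).shuffle(order)
    n_test = math.ceil(test_size * len(X))
    test, train = order[:n_test], order[n_test:]
    return ([X[i] for i in train], [X[i] for i in test],
            [y[i] for i in train], [y[i] for i in test])

print(one_hot([3, 0, 9, 3]))   # classes [0, 3, 9] -> [[0, 1, 0], [1, 0, 0], [0, 0, 1], [0, 1, 0]]
X_tr, X_te, y_tr, y_te = split(list(range(7)), list("abcdefg"))
print(len(X_tr), len(X_te))    # 4 3
```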

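Here X_train is the training data and X_test is the test data (test_size=.3 holds out 30% of the samples for testing). Next, add the hidden layer and the output layer: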

```python
# add hidden layer and output layer (add_layer is defined in the full code at the end)
l1 = add_layer(xs, 64, 50, 'l1', activation_function=tf.nn.tanh)
prediction = add_layer(l1, 50, 10, 'l2', activation_function=tf.nn.softmax)
```

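The hidden layer uses tanh as its activation function. (Honestly, I'm not sure why; Morvan (莫烦) says in his course that with other activation functions you get a None error, so I'll leave it at that. One plausible but unverified explanation: if a softmax output becomes exactly 0, tf.log(prediction) in the loss produces NaN values.)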
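For readers who want to see what the two add_layer calls compute, here is a pure-Python sketch of one forward pass through the 64 → 50 → 10 network for a single sample: each layer computes inputs·Weights + biases and then applies its activation, tanh for the hidden layer and softmax for the output layer. Dropout is left out to keep it short. The weights are random (mirroring tf.random_normal and the 0.1 bias in add_layer) and the input image is hypothetical random data, so the resulting probabilities carry no meaning; `dense`, `init` and the other names are hypothetical helpers.

```python
import math
import random

def dense(inputs, weights, biases, activation=None):
    """One fully connected layer for one sample: activation(inputs @ weights + biases).
    weights is a list of in_size rows, each holding out_size values."""
    z = [sum(x * w_row[j] for x, w_row in zip(inputs, weights)) + biases[j]
         for j in range(len(biases))]
    return activation(z) if activation else z

def tanh(z):
    return [math.tanh(v) for v in z]          # squashes each value into [-1, 1]

def softmax(z):
    m = max(z)                                # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]          # non-negative, sums to 1

rng = random.Random(0)

def init(in_size, out_size):
    # standard-normal weights and biases of 0.1, as in add_layer
    W = [[rng.gauss(0.0, 1.0) for _ in range(out_size)] for _ in range(in_size)]
    b = [0.1] * out_size
    return W, b

W1, b1 = init(64, 50)
W2, b2 = init(50, 10)
x = [rng.uniform(0, 16) for _ in range(64)]   # hypothetical 8x8 image, pixels in 0-16
hidden = dense(x, W1, b1, tanh)               # like l1
probs = dense(hidden, W2, b2, softmax)        # like prediction
print([round(p, 3) for p in probs])
```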

Training

```python
sess.run(train_step, feed_dict={xs: X_train, ys: y_train, keep_prob: 0.5})
# sess.run(train_step, feed_dict={xs: X_train, ys: y_train, keep_prob: 1})
```

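keep_prob is the fraction of values that are kept. We try 0.5 and 1.0 and compare the visualizations TensorBoard generates from the logs (start it with `tensorboard --logdir=logs`).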
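The scalar that TensorBoard plots as "loss" is the cross-entropy defined in the full code, tf.reduce_mean(-tf.reduce_sum(ys * tf.log(prediction), reduction_indices=[1])). Here is the same formula as a small pure-Python sketch over one-hot labels and predicted probabilities; the function name and the toy numbers are illustrative only.

```python
import math

def cross_entropy(ys, predictions):
    """Mean over samples of -sum_k y_k * log(p_k)."""
    per_sample = []
    for y_row, p_row in zip(ys, predictions):
        # Terms with y_k == 0 contribute nothing, so they are skipped; this also
        # avoids math.log(0.0), which raises ValueError in Python.
        per_sample.append(-sum(y * math.log(p) for y, p in zip(y_row, p_row) if y > 0))
    return sum(per_sample) / len(per_sample)

ys = [[0, 1, 0], [1, 0, 0]]                    # one-hot labels for two samples
predictions = [[0.2, 0.7, 0.1], [0.9, 0.05, 0.05]]
print(cross_entropy(ys, predictions))          # equals (-log 0.7 - log 0.9) / 2
```

Only the probability given to the correct class matters, so the loss falls toward 0 as that probability approaches 1. Recording it separately on the training and test sets is what makes the gap between the two curves visible.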

Visualization results

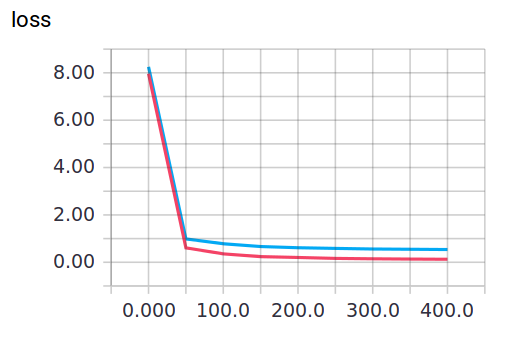

With keep_prob=1 the overfitting problem shows up; with keep_prob=0.5, dropout does its job.

When keep_prob=1, the model fits the training data better than the test data, i.e. it overfits. The red line is the training loss and the blue line is the test loss.

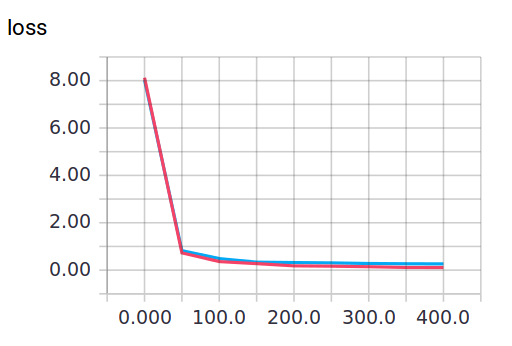

When keep_prob=0.5:

The difference in the loss curves is clearly visible.

The complete code, with some comments, is below:

```python
import tensorflow as tf  # TensorFlow 1.x API (tf.placeholder, tf.Session)
from sklearn.datasets import load_digits
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import LabelBinarizer

# Load the data
digits = load_digits()
X = digits.data
y = digits.target
# preprocessing.LabelBinarizer is a very handy tool:
# e.g. it can turn yes/no into 0/1,
# or incident/normal into 0/1; here it one-hot encodes the digits 0-9
y = LabelBinarizer().fit_transform(y)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=.3)


def add_layer(inputs, in_size, out_size, layer_name, activation_function=None):
    Weights = tf.Variable(tf.random_normal([in_size, out_size]))
    biases = tf.Variable(tf.zeros([1, out_size]) + 0.1)
    Wx_plus_b = tf.matmul(inputs, Weights) + biases
    # here to drop (keep_prob is the global placeholder defined below;
    # it exists by the time add_layer is called)
    Wx_plus_b = tf.nn.dropout(Wx_plus_b, keep_prob)
    if activation_function is None:
        outputs = Wx_plus_b
    else:
        outputs = activation_function(Wx_plus_b)
    tf.summary.histogram(layer_name + 'outputs', outputs)
    return outputs


# Define placeholders for the inputs to the network
keep_prob = tf.placeholder(tf.float32)  # keep probability: fraction of outputs to keep
xs = tf.placeholder(tf.float32, [None, 64])  # 8*8 pixels
ys = tf.placeholder(tf.float32, [None, 10])

# Add the hidden layer and the output layer
l1 = add_layer(xs, 64, 50, 'l1', activation_function=tf.nn.tanh)
prediction = add_layer(l1, 50, 10, 'l2', activation_function=tf.nn.softmax)

# Loss: cross-entropy between prediction and real data
cross_entropy = tf.reduce_mean(-tf.reduce_sum(ys * tf.log(prediction),
                                              reduction_indices=[1]))
tf.summary.scalar('loss', cross_entropy)
train_step = tf.train.GradientDescentOptimizer(0.5).minimize(cross_entropy)

sess = tf.Session()
merged = tf.summary.merge_all()
# Summary writers go in here: one log directory for train, one for test
train_writer = tf.summary.FileWriter("logs/train", sess.graph)
test_writer = tf.summary.FileWriter("logs/test", sess.graph)

init = tf.global_variables_initializer()  # variable initialization
sess.run(init)
for i in range(500):
    # Here to determine the keeping probability (0.5 while training; try 1 to compare)
    sess.run(train_step, feed_dict={xs: X_train, ys: y_train, keep_prob: 0.5})
    if i % 50 == 0:
        # Record the loss with dropout switched off (keep_prob = 1)
        train_result = sess.run(merged, feed_dict={xs: X_train, ys: y_train, keep_prob: 1})
        test_result = sess.run(merged, feed_dict={xs: X_test, ys: y_test, keep_prob: 1})
        train_writer.add_summary(train_result, i)
        test_writer.add_summary(test_result, i)
```