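Deploying a Highly Available Hadoop Cluster on Linux

I. Base Environment

1.1 Installation Notes

II. Host Configuration

III. Installing and Configuring Hadoop

3.1 Create the Directories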

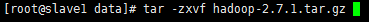

3.2 Download

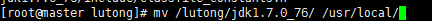

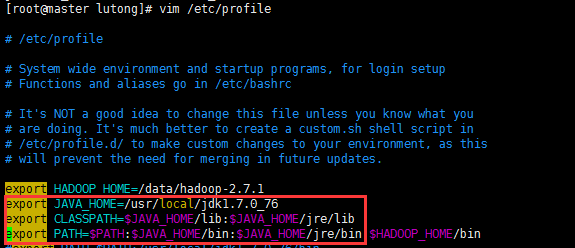

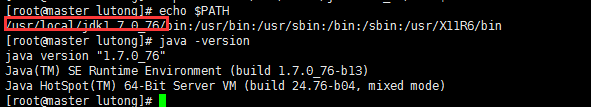

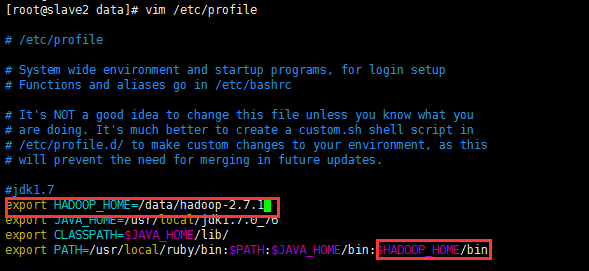

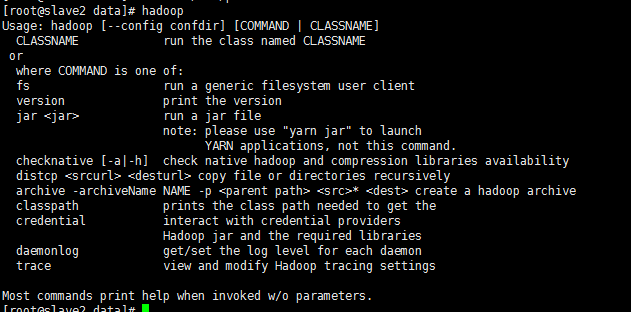

3.3 Configure Environment Variables

3.4 Hadoop Configuration

core-site.xml:

<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<!--
  Licensed under the Apache License, Version 2.0 (the "License");
  you may not use this file except in compliance with the License.
  You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

  Unless required by applicable law or agreed to in writing, software
  distributed under the License is distributed on an "AS IS" BASIS,
  WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
  See the License for the specific language governing permissions and
  limitations under the License. See accompanying LICENSE file.
-->

<!-- Put site-specific property overrides in this file. -->
<configuration>
    <property>
        <name>hadoop.tmp.dir</name>
        <value>file:/data/hdfs/tmp</value>
        <description>A base for other temporary directories.</description>
    </property>
    <property>
        <name>io.file.buffer.size</name>
        <value>131072</value>
    </property>
    <property>
        <!-- fs.default.name is the deprecated name of this key in Hadoop 2.x -->
        <name>fs.defaultFS</name>
        <value>hdfs://master:9000</value>
    </property>
    <property>
        <name>hadoop.proxyuser.root.hosts</name>
        <value>*</value>
    </property>
    <property>
        <name>hadoop.proxyuser.root.groups</name>
        <value>*</value>
    </property>
</configuration>

hdfs-site.xml:

<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<!--
  Licensed under the Apache License, Version 2.0 (the "License");
  you may not use this file except in compliance with the License.
  You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

  Unless required by applicable law or agreed to in writing, software
  distributed under the License is distributed on an "AS IS" BASIS,
  WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
  See the License for the specific language governing permissions and
  limitations under the License. See accompanying LICENSE file.
-->

<!-- Put site-specific property overrides in this file. -->

<configuration>
    <property>
        <name>dfs.replication</name>
        <value>2</value>
    </property>
    <property>
        <name>dfs.namenode.name.dir</name>
        <value>file:/data/hdfs/name</value>
        <final>true</final>
    </property>
    <property>
        <name>dfs.datanode.data.dir</name>
        <value>file:/data/hdfs/data</value>
        <final>true</final>
    </property>
    <property>
        <name>dfs.namenode.secondary.http-address</name>
        <value>master:9001</value>
    </property>
    <property>
        <name>dfs.webhdfs.enabled</name>
        <value>true</value>
    </property>
    <property>
        <!-- dfs.permissions is the deprecated name of this key in Hadoop 2.x -->
        <name>dfs.permissions.enabled</name>
        <value>false</value>
    </property>
</configuration>

Note: the values of dfs.namenode.name.dir and dfs.datanode.data.dir must point to the directories created earlier.
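As a minimal sketch of that correspondence, the function below creates the three local directories named in the configuration above (tmp, name and data under /data/hdfs). The function name `make_hdfs_dirs` and its base-directory argument are illustrative and do not come from the original post.

```shell
# Create the local directories referenced by hadoop.tmp.dir,
# dfs.namenode.name.dir and dfs.datanode.data.dir.
# make_hdfs_dirs and its argument are hypothetical names for illustration.
make_hdfs_dirs() {
    local base="${1:-/data/hdfs}"
    local d
    for d in tmp name data; do
        # mkdir -p is idempotent, so re-running on an existing layout is safe
        mkdir -p "$base/$d" || return 1
    done
}
```

Running `make_hdfs_dirs /data/hdfs` on each node would produce the paths used in core-site.xml and hdfs-site.xml.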

Copy the template to create mapred-site.xml with the following command:

cp mapred-site.xml.template mapred-site.xml

<?xml version="1.0"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<!--
  Licensed under the Apache License, Version 2.0 (the "License");
  you may not use this file except in compliance with the License.
  You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

  Unless required by applicable law or agreed to in writing, software
  distributed under the License is distributed on an "AS IS" BASIS,
  WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
  See the License for the specific language governing permissions and
  limitations under the License. See accompanying LICENSE file.
-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

    <property>
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
    </property>

</configuration>

yarn-site.xml:

<?xml version="1.0"?>
<!--
  Licensed under the Apache License, Version 2.0 (the "License");
  you may not use this file except in compliance with the License.
  You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

  Unless required by applicable law or agreed to in writing, software
  distributed under the License is distributed on an "AS IS" BASIS,
  WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
  See the License for the specific language governing permissions and
  limitations under the License. See accompanying LICENSE file.
-->
<configuration>

    <!-- Site specific YARN configuration properties -->
    <property>
        <name>yarn.resourcemanager.address</name>
        <value>master:18040</value>
    </property>
    <property>
        <name>yarn.resourcemanager.scheduler.address</name>
        <value>master:18030</value>
    </property>
    <property>
        <name>yarn.resourcemanager.webapp.address</name>
        <value>master:18088</value>
    </property>
    <property>
        <name>yarn.resourcemanager.resource-tracker.address</name>
        <value>master:18025</value>
    </property>
    <property>
        <name>yarn.resourcemanager.admin.address</name>
        <value>master:18141</value>
    </property>
    <property>
        <!-- Since Hadoop 2.2 the service name must be mapreduce_shuffle;
             the older mapreduce.shuffle is rejected as an invalid name -->
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
    </property>
    <property>
        <name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>
        <value>org.apache.hadoop.mapred.ShuffleHandler</value>
    </property>
</configuration>

Finally, use scp to copy the entire hadoop-2.7.1 folder, including its subfolders, to the same directory on slave1 and slave2:

scp -r /data/hadoop-2.7.1 root@slave1:/data
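The command above covers slave1 only; the same copy has to be repeated for slave2. A small sketch of looping over the hosts follows. The function name `push_hadoop` and the `DRY_RUN` switch are assumptions added for illustration, and the target user `root` and directory `/data` are taken from the command above.

```shell
# Copy a Hadoop directory to /data on each listed host.
# push_hadoop and DRY_RUN are hypothetical helpers, not part of Hadoop.
# With DRY_RUN=1 the commands are only printed, not executed.
push_hadoop() {
    local src="$1"
    shift
    local host
    for host in "$@"; do
        if [ "${DRY_RUN:-0}" = "1" ]; then
            echo "scp -r $src root@$host:/data"
        else
            scp -r "$src" "root@$host:/data" || return 1
        fi
    done
}
```

For example, `push_hadoop /data/hadoop-2.7.1 slave1 slave2` would copy to both slaves in turn; setting `DRY_RUN=1` first shows what would be run.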

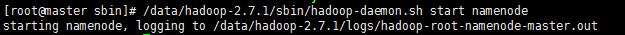

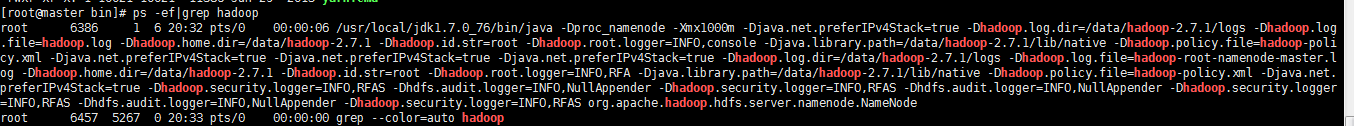

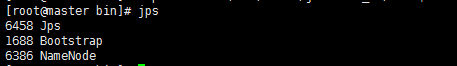

Run the jps command on Master; the result is as follows:

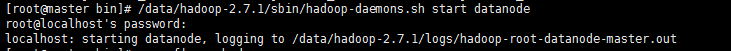

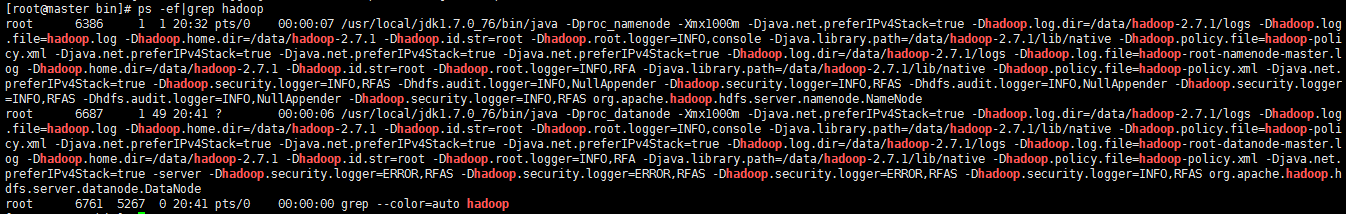

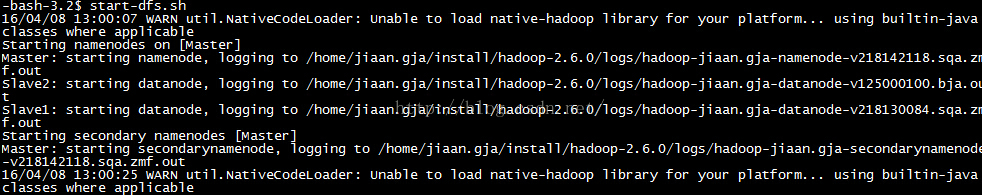

4.3 Start the DataNodes

master

slave1

slave2

4.4 Run YARN

This shows that the ResourceManager is running normally.

4.5 Check Whether the Cluster Started Successfully:

jps

Master shows:

SecondaryNameNode

ResourceManager

NameNode

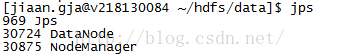

Each slave shows:

NodeManager

DataNode
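The check above can also be scripted. The sketch below reads jps-style output (lines of the form `<pid> <ClassName>`) on standard input and prints every expected daemon that is missing. The function name `missing_daemons` is hypothetical; the daemon names are the ones listed above.

```shell
# Print each expected daemon name that does not appear in jps-style input.
# missing_daemons is a hypothetical helper for illustration.
missing_daemons() {
    local input name
    input="$(cat)"
    for name in "$@"; do
        # Compare the second column exactly, so that "NameNode" does not
        # match a "SecondaryNameNode" line.
        printf '%s\n' "$input" \
            | awk -v n="$name" '$2 == n { found = 1 } END { exit !found }' \
            || echo "$name"
    done
}
```

On Master this could be used as `jps | missing_daemons NameNode SecondaryNameNode ResourceManager`, and on a slave as `jps | missing_daemons NodeManager DataNode`; empty output means every listed daemon is present.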

V. Testing Hadoop

5.1 Test HDFS

Finally, test whether the Hadoop cluster you built by hand runs correctly. The test commands are shown in the figure below:

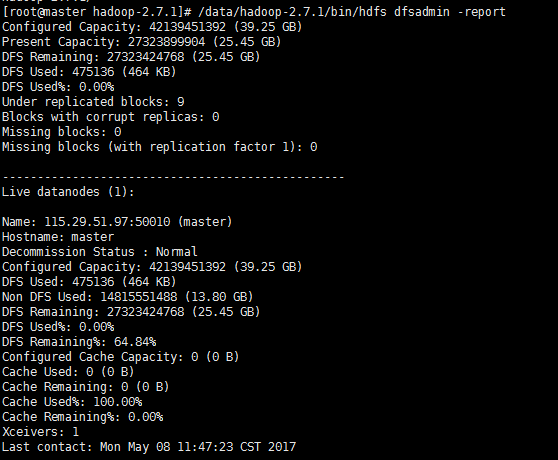

5.2 Check the Cluster Status

/data/hadoop-2.7.1/bin/hdfs dfsadmin -report
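To turn the report into a quick check, one option is to extract the live DataNode count. The sketch below assumes the report contains a line of the form `Live datanodes (N):`, which is what Hadoop 2.7.x is expected to print; other versions may word this line differently. The function name `live_datanodes` is hypothetical.

```shell
# Extract N from a "Live datanodes (N):" line read on standard input.
# live_datanodes is a hypothetical helper; the line format is an assumption
# about the dfsadmin -report output of this Hadoop version.
live_datanodes() {
    sed -n 's/^Live datanodes (\([0-9][0-9]*\)):.*/\1/p' | head -n 1
}
```

Usage would look like `/data/hadoop-2.7.1/bin/hdfs dfsadmin -report | live_datanodes`. If nothing is printed, the expected line was not found in the report.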

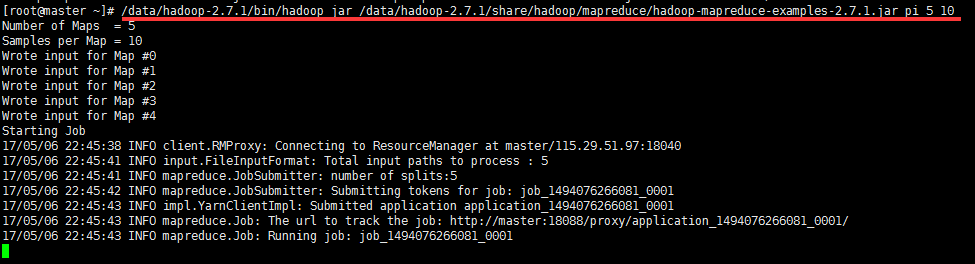

5.3 Test YARN

5.4 Test MapReduce

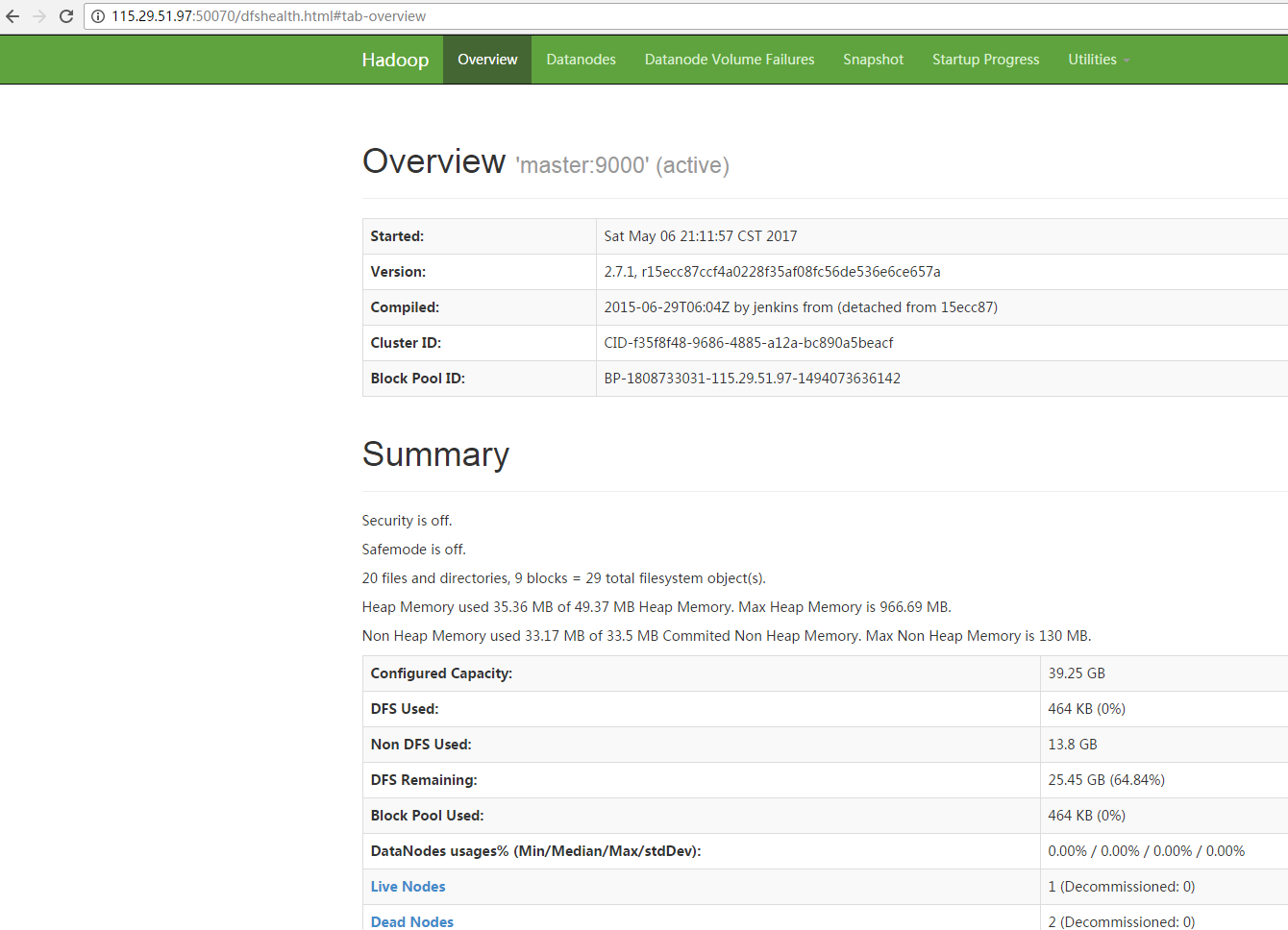

5.5 View HDFS in the Browser:

http://115.29.51.97:50070/dfshealth.html#tab-overview

VI. Problems Encountered While Configuring and Running Hadoop

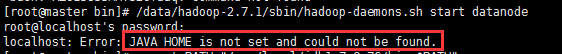

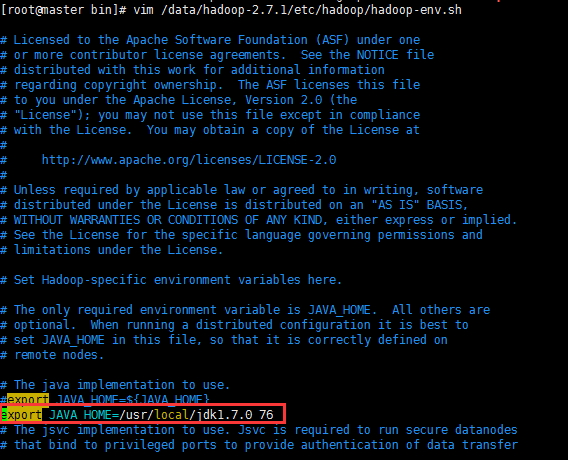

6.1 JAVA_HOME Is Not Set

In that case, edit /data/hadoop-2.7.1/etc/hadoop/hadoop-env.sh and add the JAVA_HOME path.
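A minimal sketch of that edit is shown below. It replaces an existing `export JAVA_HOME=` line if there is one and appends the line otherwise. The function name `set_java_home` is hypothetical, and the JDK path in the usage example is a placeholder, because the original post does not state where the JDK is installed.

```shell
# Set JAVA_HOME in a hadoop-env.sh style file.
# set_java_home is a hypothetical helper for illustration.
set_java_home() {
    local file="$1" java_home="$2" tmp
    tmp="$(mktemp)" || return 1
    if grep -q '^export JAVA_HOME=' "$file"; then
        # Rewrite the existing line and copy every other line unchanged
        awk -v jh="$java_home" '
            /^export JAVA_HOME=/ { print "export JAVA_HOME=" jh; next }
            { print }
        ' "$file" > "$tmp"
    else
        cat "$file" > "$tmp"
        echo "export JAVA_HOME=$java_home" >> "$tmp"
    fi
    # Write back through cat so the original file keeps its permissions
    cat "$tmp" > "$file" && rm -f "$tmp"
}
```

For example: `set_java_home /data/hadoop-2.7.1/etc/hadoop/hadoop-env.sh /path/to/your/jdk`, where `/path/to/your/jdk` stands for the actual JDK location on the machine.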

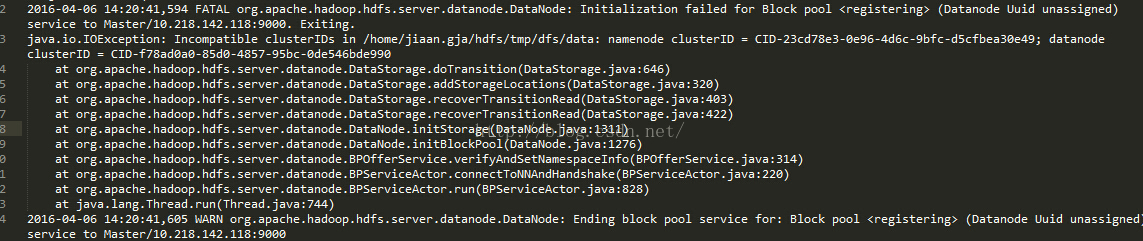

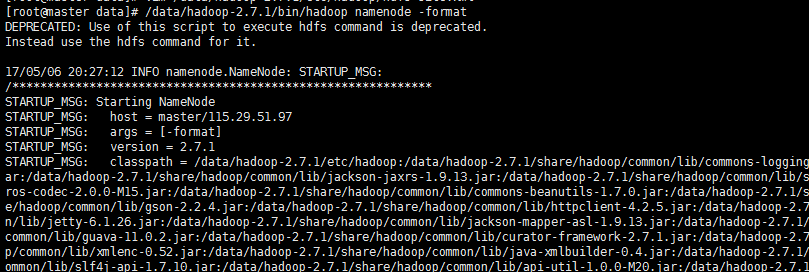

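6.2 Incompatible clusterIDs

Configuring a Hadoop cluster rarely works on the first attempt. It usually goes through a cycle of configure -> run -> ... -> configure -> run. As a result, when a DataNode fails to start, checking its log often reveals the following problem: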
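A common cause of this error is that the NameNode was formatted again, which generates a new clusterID, while a DataNode still holds the old one. One way to confirm this is to compare the `clusterID=` entries in the `current/VERSION` files under the NameNode and DataNode directories. The sketch below does that; the function names `cluster_id` and `same_cluster_id` are hypothetical, and the paths in the usage note follow the directories configured in hdfs-site.xml.

```shell
# Print the clusterID stored in a Hadoop VERSION file.
# cluster_id and same_cluster_id are hypothetical helpers for illustration.
cluster_id() {
    sed -n 's/^clusterID=//p' "$1"
}

# Succeed only when both VERSION files carry the same clusterID.
same_cluster_id() {
    [ "$(cluster_id "$1")" = "$(cluster_id "$2")" ]
}
```

On a node that holds both directories, `same_cluster_id /data/hdfs/name/current/VERSION /data/hdfs/data/current/VERSION || echo "clusterID mismatch"` would flag the problem. For a DataNode on another host, print its ID with `cluster_id` and compare it with the NameNode's value by hand.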

6.3 The NativeCodeLoader Warning

While testing Hadoop, attentive readers may have noticed the warning message in the screenshots:

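Learning is a process of imitating, practicing, and eventually creating something of your own.

I may never write anything that surpasses the existing posts of the same type and topic, so why write at all?

For me, a blog post is mainly a way to summarize what I have learned. I write as if I had an audience, because teaching is the best way to learn (see the figure below). For readers, if you pick up something useful along the way, that is a win for both of us.

My ability is limited, so the text may contain inaccurate descriptions. Corrections and additions are welcome!

Thank you for reading. If this article was useful to you, please give it a like as encouragement.

Author: 南辞、归

All articles on this blog are for learning, research, and discussion only. Non-commercial reposting is welcome.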

