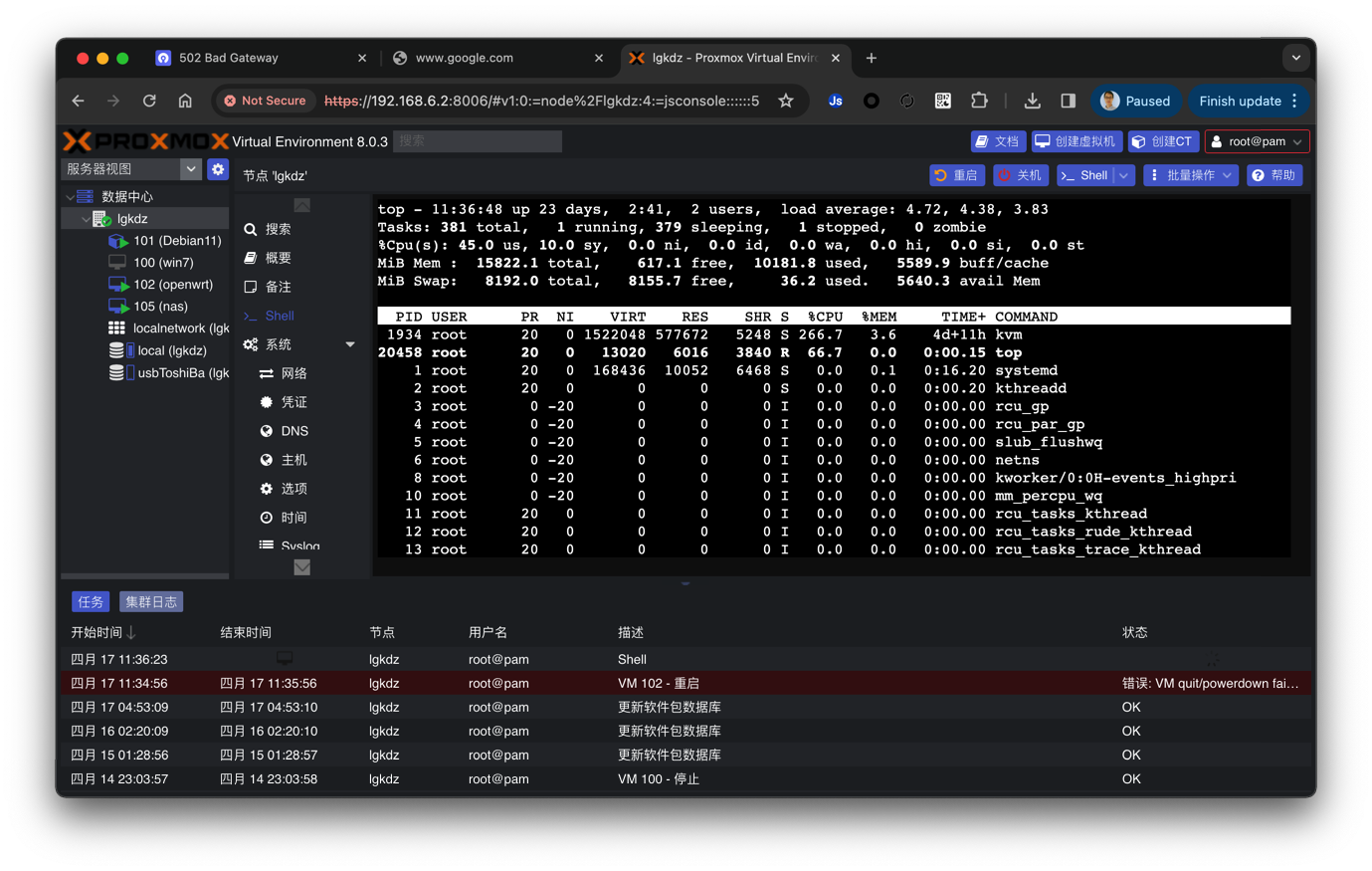

PVE Synology NAS Repair Notes

A mischievous friend broke the NAS; here is the full repair process.

Summary of troubleshooting and repair results

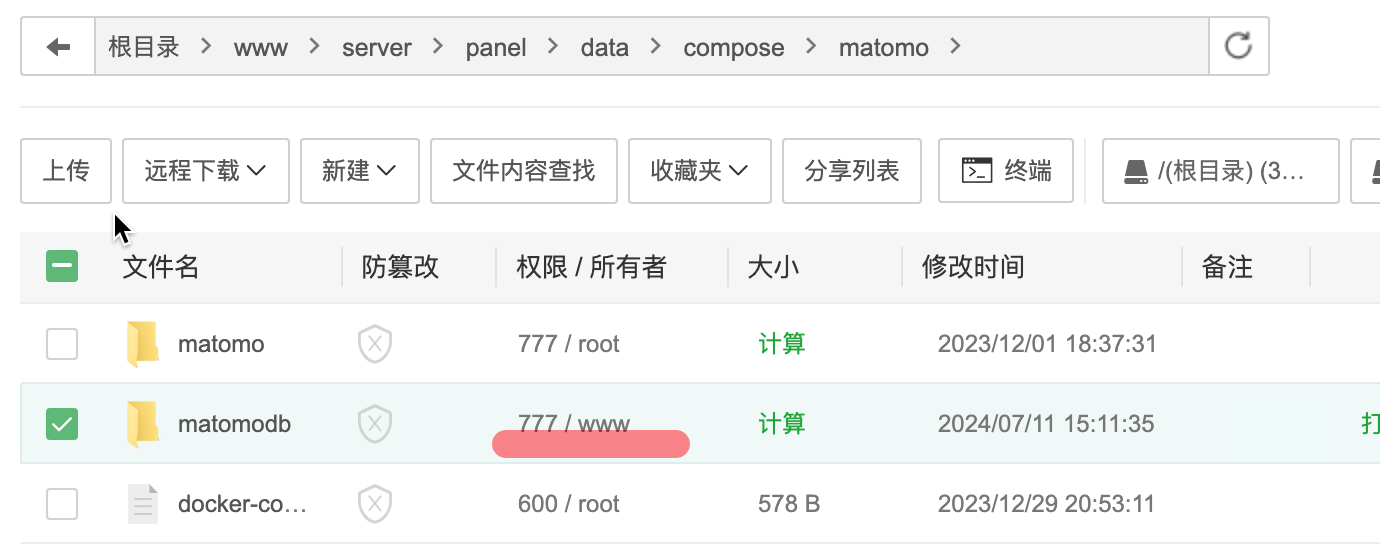

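- Repairing MariaDB required granting permissions on the two mapped (bind-mounted) directories to the mysql user, with directory mode 777.

- The yourls SQL database still could not be repaired. I had to reinstall everything and then import the data with the import/export plugin, losing a few weeks of data. Sigh.

- Deleted all stale log files and all large, redundant log files in PVE and Debian, and set new rules for log files.

- Learned more about how PVE disks and partitions work, so the next round of re-partitioning can allocate space more sensibly. Can't wait...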

What this is for

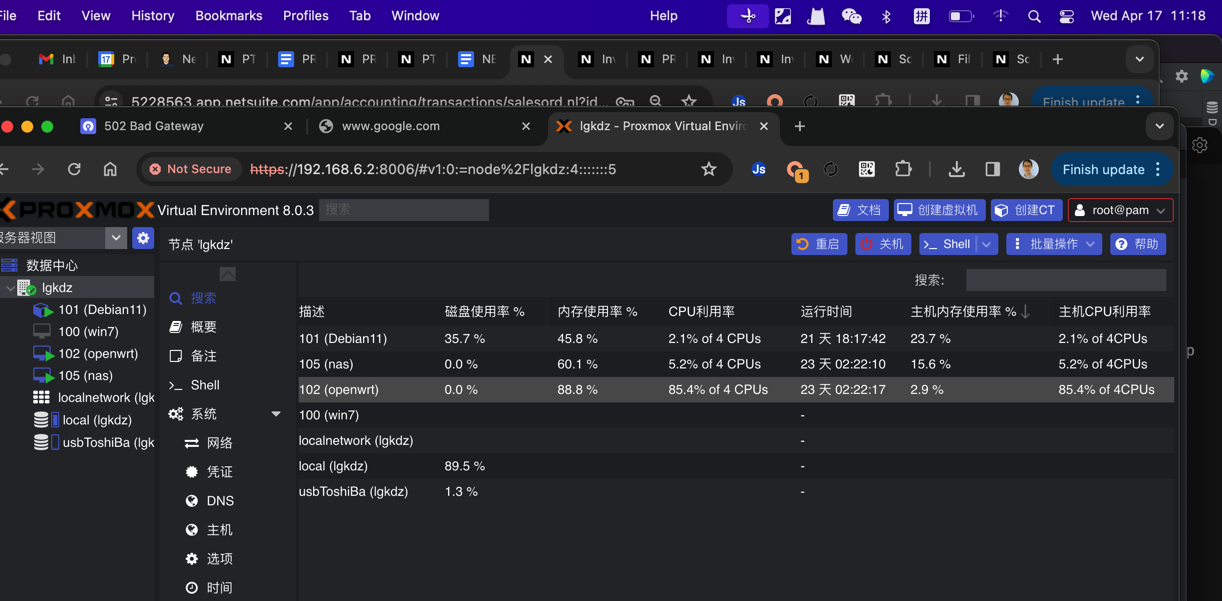

Troubleshooting the NAS runtime error in PVE. Error message on the console (it prevented Linux from booting normally):

sata boot support on this platform is experimental

After shutting down the VM and trying to start it again, PVE reported:

WARN: no efidisk configured! Using temporary efivars disk.

Warning: unable to close filehandle GEN7208 properly: No space left on device at /usr/share/perl5/PVE/Tools.pm line 254.

TASK ERROR: unable to write '/tmp/105-ovmf.fd.tmp.29425' - No space left on device

Current consequences (2023-12-29)

All the Docker containers running on the NAS went down as well

Book

emby

aria2

Data, including the data stored on the NAS (now left leaderless)

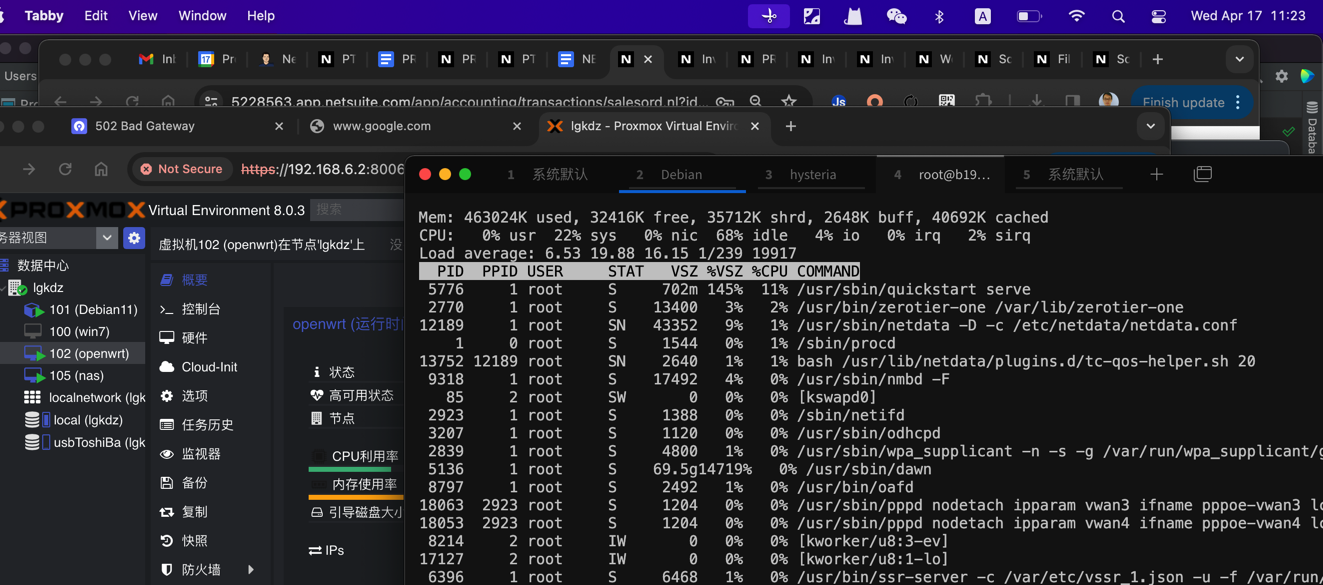

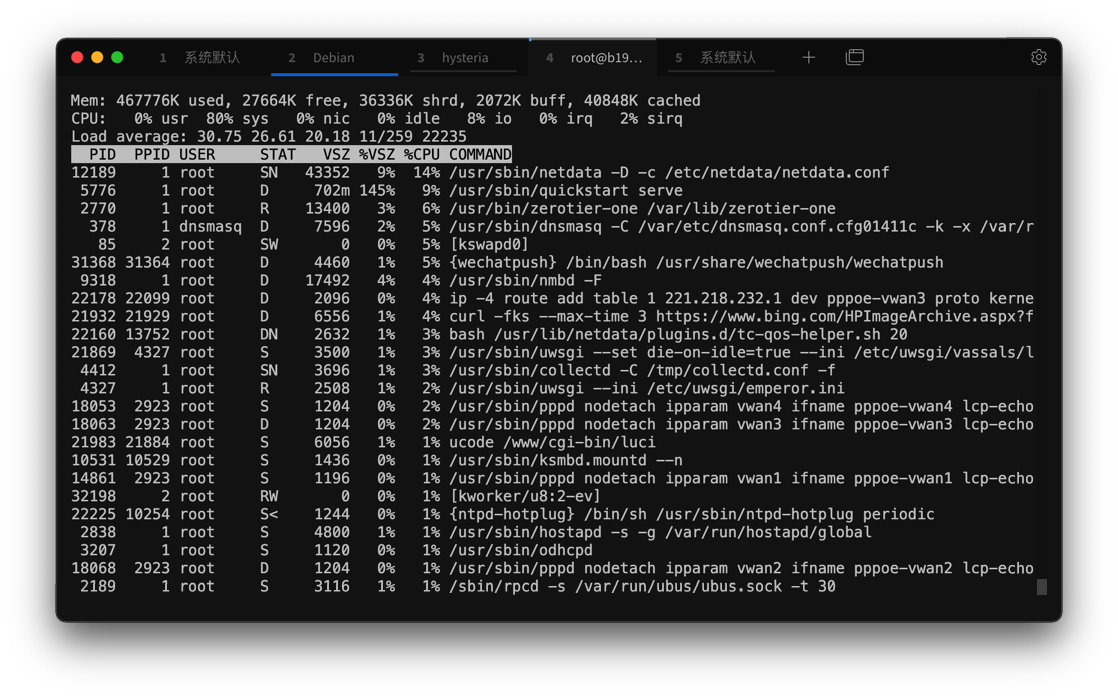

Actions taken

qm list

qm stop 105

cd /

du -sh *

102G var

The var directory takes up 102G, so keep digging into it

100G lib

root@lgkdz:/var/lib/vz/images# du -sh *

26G 100

50G 101

656M 102

21G 105

# Sort by size

du -s /usr/share/* | sort -nr
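The drill-down above (run du, pick the biggest directory, repeat) can be wrapped in a small helper. This is a minimal sketch, assuming GNU du and sort; `biggest_entries` is a made-up name for illustration, not a PVE or Debian tool.

```shell
# List the N largest entries directly under a directory, biggest first.
# biggest_entries is a hypothetical helper name.
biggest_entries() {
    dir=${1:-/}
    count=${2:-10}
    # -s: one total per entry
    # -k: sizes in KiB, so sort -n compares plain numbers
    # -x: do not descend into other file systems mounted below an entry
    du -skx -- "${dir%/}"/* 2>/dev/null | sort -nr | head -n "$count"
}

# Example: the five largest entries under /var/lib
#   biggest_entries /var/lib 5
```

Sorting on KiB rather than on the human-readable `-h` output avoids having to rely on `sort -h`.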

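SSH into Debian and use tasksel to uninstall the desktop environment.

Disk usage is still 99.99%.

It looks like Debian's disk usage may be steadily eating into the 128G SSD: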

4.4G usr

18G var

5.8G www

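Since the last check (around 2023-12-15?; uptime is 39 days now and was 24 days then, so about two weeks ago), var has grown from 12G by another 6G in two weeks.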

root@Debian11:/var/lib/docker# du -sh *

108K buildkit

1.3G containers

4.0K engine-id

27M image

244K network

15G overlay2

16K plugins

4.0K runtimes

4.0K swarm

4.0K tmp

900K volumes

Clean up Docker:

> docker system df

TYPE TOTAL ACTIVE SIZE RECLAIMABLE

Images 21 18 6.589GB 153.2MB (2%)

Containers 18 17 483.5MB 555.6kB (0%)

Local Volumes 0 0 0B 0B

Build Cache 0 0 0B 0B

> docker system prune -a

y

Total reclaimed space: 392MB

> docker system df

TYPE TOTAL ACTIVE SIZE RECLAIMABLE

Images 17 17 6.197GB 7.335MB (0%)

Containers 17 17 482.9MB 0B (0%)

Local Volumes 0 0 0B 0B

Build Cache 0 0 0B 0B

sudo systemctl restart docker

Still 99.99% (108.18 GiB of 108.20 GiB)

# PVE status

root@lgkdz:/# systemctl status pveproxy.service

● pveproxy.service - PVE API Proxy Server

Loaded: loaded (/lib/systemd/system/pveproxy.service; enabled; preset: enabled)

Active: active (running) since Sun 2023-11-19 19:25:04 CST; 1 month 9 days ago

Process: 989 ExecStartPre=/usr/bin/pvecm updatecerts --silent (code=exited, status=0/SUCCESS)

Process: 991 ExecStart=/usr/bin/pveproxy start (code=exited, status=0/SUCCESS)

Process: 56738 ExecReload=/usr/bin/pveproxy restart (code=exited, status=0/SUCCESS)

Main PID: 993 (pveproxy)

Tasks: 4

Memory: 162.3M

CPU: 2h 15min 3.182s

CGroup: /system.slice/pveproxy.service

├─ 993 pveproxy

├─12296 "pveproxy worker"

├─12300 "pveproxy worker"

└─12302 "pveproxy worker"

Dec 29 10:17:46 lgkdz pveproxy[993]: worker 12296 started

Dec 29 10:17:49 lgkdz pveproxy[12280]: worker exit

Dec 29 10:17:49 lgkdz pveproxy[993]: worker 12280 finished

Dec 29 10:17:49 lgkdz pveproxy[993]: starting 1 worker(s)

Dec 29 10:17:49 lgkdz pveproxy[993]: worker 12300 started

Dec 29 10:17:49 lgkdz pveproxy[993]: worker 12295 finished

Dec 29 10:17:49 lgkdz pveproxy[993]: starting 1 worker(s)

Dec 29 10:17:49 lgkdz pveproxy[993]: worker 12302 started

Dec 29 10:17:49 lgkdz pveproxy[12300]: Warning: unable to close filehandle GEN5 properly: No space left on device at /usr/share/perl5/PVE/APIServer/AnyEvent.pm line 1901.

Dec 29 10:17:49 lgkdz pveproxy[12300]: error writing access log

Mount it to take a look at the 500G of data on the Kingchuxing disk:

mount /dev/sda5 /mnt/sda5

Cleaning up and managing Linux logs

rm -rf /var/log/*.gz

rm -rf /var/log/*.1

journalctl --disk-usage # show how much disk space the journals use

Archived and active journals take up 2.5G in the file system.

# One-off cleanup: delete the oldest archived journals until they take up no more than 512M

journalctl --vacuum-size=512M

Vacuuming done, freed 2.0G of archived journals from /var/log/journal/2afbdd1662c14f99a11ce27fcda8ab85.

Vacuuming done, freed 0B of archived journals from /run/log/journal.

# One-off cleanup: delete archived journals older than 2 days

journalctl --vacuum-time=2d

# Disk usage after this round of log cleanup:

98.33% (106.39 GiB of 108.20 GiB)

Try starting the NAS again

WARN: no efidisk configured! Using temporary efivars disk.

TASK WARNINGS: 1

Debian log cleanup and maintenance

> journalctl --disk-usage # show how much disk space the journals use

Archived and active journals take up 104.0M in the file system.

1. Use find to locate files larger than 800M under /

find / -size +800M -exec ls -lh {} \;

>root@lgkdz:/var/log# find / -size +800M -exec ls -lh {} \;

-r-------- 1 root root 128T Nov 19 19:24 /proc/kcore

find: ‘/proc/3193/task/3251/fd/34’: No such file or directory

find: ‘/proc/3193/task/3251/fd/35’: No such file or directory

find: ‘/proc/14759’: No such file or directory

find: ‘/proc/14779’: No such file or directory

find: ‘/proc/14780’: No such file or directory

find: ‘/proc/14781/task/14781/fd/5’: No such file or directory

find: ‘/proc/14781/task/14781/fdinfo/5’: No such file or directory

find: ‘/proc/14781/fd/6’: No such file or directory

find: ‘/proc/14781/fdinfo/6’: No such file or directory

-rw-r--r-- 1 root root 1.3G Oct 14 20:50 /var/lib/vz/dump/vzdump-lxc-101-2023_10_14-20_48_21.tar.zst

-rw-r----- 1 root root 51G Dec 25 09:46 /var/lib/vz/images/100/vm-100-disk-0.qcow2

-rw-r----- 1 root root 11G Dec 29 13:34 /var/lib/vz/images/102/vm-102-disk-0.qcow2

-rw-r----- 1 root root 101G Dec 29 13:34 /var/lib/vz/images/105/vm-105-disk-2.qcow2

-rw-r----- 1 root root 50G Dec 29 13:34 /var/lib/vz/images/101/vm-101-disk-0.raw

-rw------- 1 root root 4.6G Nov 4 10:52 /core

> root@Debian11:~# find / -size +800M -exec ls -lh {} \;

-rw-r----- 1 root root 1.2G Dec 29 13:43 /var/lib/docker/containers/a611cae746aa6c4b1e3bda308a7935180b79e0f684a757919104309891e2c979/a611cae746aa6c4b1e3bda308a7935180b79e0f684a757919104309891e2c979-json.log

-r-------- 1 root root 128T Nov 19 19:26 /proc/kcore

-r-------- 1 root root 128T Dec 17 09:32 /dev/.lxc/proc/kcore
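Most of the noise in the output above (the 128T /proc/kcore entry and the "No such file or directory" lines) comes from find walking into /proc. A minimal sketch that stays on the starting file system, assuming GNU find; `find_big_files` is a hypothetical helper name.

```shell
# Print regular files larger than a threshold without leaving the
# starting file system. find_big_files is a hypothetical helper name.
find_big_files() {
    start=${1:-/}
    size=${2:-+800M}
    # -xdev   : do not descend into /proc, /sys or any other mounted file system
    # -type f : regular files only (no directories or device nodes)
    # "{} +"  : pass many file names to each ls call instead of one at a time
    find "$start" -xdev -type f -size "$size" -exec ls -lh {} + 2>/dev/null
}

# Example: files over 800M on the root file system
#   find_big_files / +800M
```

Because of `-xdev`, anything on a separately mounted disk is skipped; run the helper again with that mount point as the first argument to cover it.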

Inspect the abnormally large log file

cd /var/lib/docker/containers/a611cae746aa6c4b1e3bda308a7935180b79e0f684a757919104309891e2c979

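I believe container a611cae746aa6c4b1e3bda308a7935180b79e0f684a757919104309891e2c979 is the frp container. Try deleting its 1.2G log file!

Deleted it directly with rm and saw nothing go wrong. How did such a large log file get generated in the first place?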
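A likely answer: Docker's default json-file log driver keeps everything a container writes to stdout and stderr and does not rotate it unless told to. The sketch below shows two things under stated assumptions: emptying oversized logs in place (a running container keeps its log file open, so truncating is usually the safer choice than rm), and writing a daemon.json that caps log size. The helper names and the 10m / 3 values are illustrative, not settings taken from this machine.

```shell
# Empty oversized container logs in place instead of deleting them.
# truncate_container_logs is a hypothetical helper name.
truncate_container_logs() {
    log_dir=${1:-/var/lib/docker/containers}
    min_size=${2:-+100M}
    # truncate keeps the file itself and only drops its content
    find "$log_dir" -type f -name '*-json.log' -size "$min_size" \
        -exec truncate -s 0 {} +
}

# Write a daemon.json that caps the json-file log of newly created containers.
# WARNING: this overwrites the target file; merge by hand if one already exists.
write_docker_log_limits() {
    target=${1:-/etc/docker/daemon.json}
    cat > "$target" <<'EOF'
{
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "10m",
    "max-file": "3"
  }
}
EOF
}

# Example (as root):
#   truncate_container_logs /var/lib/docker/containers +100M
#   write_docker_log_limits /etc/docker/daemon.json
#   systemctl restart docker
```

The limits in daemon.json apply only to containers created after the daemon restart; existing containers keep their old logging settings until they are recreated.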

4:28PM >

Analyzing data usage across the 6 partitions on the two disks

Use df -h to view the file systems and their space usage

> root@lgkdz:/var/log# df -h

Filesystem Size Used Avail Use% Mounted on

udev 7.7G 0 7.7G 0% /dev

tmpfs 1.6G 864K 1.6G 1% /run

/dev/mapper/pve-root 109G 107G 0 100% /

tmpfs 7.8G 43M 7.7G 1% /dev/shm

tmpfs 5.0M 0 5.0M 0% /run/lock

/dev/sdb2 1022M 352K 1022M 1% /boot/efi

/dev/fuse 128M 16K 128M 1% /etc/pve

tmpfs 1.6G 0 1.6G 0% /run/user/0

# df -T also shows the file system type

> df -T

Filesystem Type 1K-blocks Used Available Use% Mounted on

udev devtmpfs 8066408 0 8066408 0% /dev

tmpfs tmpfs 1620188 864 1619324 1% /run

/dev/mapper/pve-root ext4 113455880 111702412 0 100% /

tmpfs tmpfs 8100928 43680 8057248 1% /dev/shm

tmpfs tmpfs 5120 0 5120 0% /run/lock

/dev/sdb2 vfat 1046508 352 1046156 1% /boot/efi

/dev/fuse fuse 131072 16 131056 1% /etc/pve

tmpfs tmpfs 1620184 0 1620184 0% /run/user/0
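Since the root file system reached 100% without anyone noticing, a small check that can be run from cron may help. This is a minimal sketch; `usage_percent` and `warn_if_full` are hypothetical helper names and the 90% default is an arbitrary assumption.

```shell
# Print the usage percentage (digits only) of the file system holding a path.
usage_percent() {
    # -P forces one line per file system, so column 5 is always the percentage
    df -P -- "$1" | awk 'NR == 2 { sub(/%/, "", $5); print $5 }'
}

# Print a warning and return non-zero when usage reaches the limit.
warn_if_full() {
    path=$1
    limit=${2:-90}
    used=$(usage_percent "$path")
    if [ "$used" -ge "$limit" ]; then
        echo "WARNING: $path is ${used}% full (limit ${limit}%)"
        return 1
    fi
}

# Example:
#   warn_if_full / 90
```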

/dev/mapper/pve-root is the logical volume root in the pve volume group

> root@lgkdz:/var/log# pvdisplay

--- Physical volume ---

PV Name /dev/sdb3

VG Name pve

PV Size 118.24 GiB / not usable <3.32 MiB

Allocatable yes (but full)

PE Size 4.00 MiB

Total PE 30269

Free PE 0

Allocated PE 30269

PV UUID jEzPvE-ELri-mvlq-5Jpi-s96g-a44F-SWWM4N

> root@lgkdz:/var/log# vgdisplay

--- Volume group ---

VG Name pve

System ID

Format lvm2

Metadata Areas 1

Metadata Sequence No 9

VG Access read/write

VG Status resizable

MAX LV 0

Cur LV 2

Open LV 2

Max PV 0

Cur PV 1

Act PV 1

VG Size <118.24 GiB

PE Size 4.00 MiB

Total PE 30269

Alloc PE / Size 30269 / <118.24 GiB

Free PE / Size 0 / 0

VG UUID aBqMlz-dH1H-PEif-LGT5-khnl-oEXf-sMtWTc

> root@lgkdz:/var/log# lvdisplay

--- Logical volume ---

LV Path /dev/pve/swap

LV Name swap

VG Name pve

LV UUID Wl0zSQ-Rlkj-4TLc-yyuM-Ntg1-1T27-QK3KOg

LV Write Access read/write

LV Creation host, time proxmox, 2023-07-01 20:13:17 +0800

LV Status available

# open 2

LV Size 8.00 GiB

Current LE 2048

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 256

Block device 253:0

--- Logical volume ---

LV Path /dev/pve/root

LV Name root

VG Name pve

LV UUID dFYnFo-1PQw-3qUM-sR9V-2eqf-BKn8-yTASwe

LV Write Access read/write

LV Creation host, time proxmox, 2023-07-01 20:13:17 +0800

LV Status available

# open 1

LV Size <110.24 GiB

Current LE 28221

Segments 1

Allocation inherit

Read ahead sectors auto

- currently set to 256

Block device 253:1
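The LVM output above can be cross-checked with a bit of arithmetic: every size is an extent count multiplied by the 4 MiB PE size, and the two logical volumes together use all 30269 extents, which is why Free PE is 0. A minimal sketch; `extents_to_gib` is a hypothetical helper name.

```shell
# Convert a number of LVM physical extents into GiB.
# extents_to_gib is a hypothetical helper; the PE size defaults to 4 MiB.
extents_to_gib() {
    awk -v n="$1" -v pe_mib="${2:-4}" 'BEGIN { printf "%.2f\n", n * pe_mib / 1024 }'
}

extents_to_gib 30269   # whole volume group -> 118.24
extents_to_gib 2048    # swap LV            -> 8.00
extents_to_gib 28221   # root LV            -> 110.24

# swap + root extents add up to the whole volume group
echo $((2048 + 28221))  # -> 30269
```

With no free extents left in the volume group, the root LV cannot be grown until another physical volume is added or the layout is redone.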

lsblk lists all existing disks and partitions (whether or not they are mounted)

root@lgkdz:/var/log# lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINTS

loop0 7:0 0 50G 0 loop

sda 8:0 0 476.9G 0 disk

├─sda1 8:1 0 8G 0 part

├─sda2 8:2 0 2G 0 part

├─sda3 8:3 0 1K 0 part

└─sda5 8:5 0 466.7G 0 part

sdb 8:16 0 119.2G 0 disk

├─sdb1 8:17 0 1007K 0 part

├─sdb2 8:18 0 1G 0 part /boot/efi

└─sdb3 8:19 0 118.2G 0 part

├─pve-swap 253:0 0 8G 0 lvm [SWAP]

└─pve-root 253:1 0 110.2G 0 lvm /

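A partition that has reached 100% usage can be dealt with by cleaning up large files or directories, by expanding the disk, or by buying a new disk. The steps are as follows:

No other option: get rid of Windows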

> scp root@192.168.6.2:/var/lib/vz/images/100/vm-100-disk-0.qcow2 .

root@192.168.6.2's password:

vm-100-disk-0.qcow2 100% 50GB 45.1MB/s 18:55
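Before the original 50GB image is deleted from the PVE host, it may be worth confirming that the copy is intact. A minimal sketch, assuming sha256sum from GNU coreutils is available; `same_sha256` is a hypothetical helper name.

```shell
# Succeed only when two files have identical SHA-256 digests.
# same_sha256 is a hypothetical helper name.
same_sha256() {
    a=$(sha256sum < "$1" | cut -d ' ' -f 1)
    b=$(sha256sum < "$2" | cut -d ' ' -f 1)
    [ "$a" = "$b" ]
}

# For a remote original, compare the digests by eye instead:
#   ssh root@192.168.6.2 sha256sum /var/lib/vz/images/100/vm-100-disk-0.qcow2
#   sha256sum vm-100-disk-0.qcow2
```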

Matomo and yourls were shut down uncleanly, so their databases failed to start:

Matomo-DB | 2023-12-29 10:46:40+00:00 [Note] [Entrypoint]: Switching to dedicated user 'mysql'

Matomo-DB | 2023-12-29 10:46:40+00:00 [Note] [Entrypoint]: Entrypoint script for MariaDB Server 1:11.2.2+maria~ubu2204 started.

Matomo-DB | 2023-12-29 10:46:40+00:00 [Note] [Entrypoint]: Initializing database files

Matomo-DB | 2023-12-29 10:46:40 0 [Warning] Can't create test file '/var/lib/mysql/6be765c4a185.lower-test' (Errcode: 13 "Permission denied")

Matomo-DB | /usr/sbin/mariadbd: Can't change dir to '/var/lib/mysql/' (Errcode: 13 "Permission denied")

Matomo-DB | 2023-12-29 10:46:40 0 [ERROR] Aborting

Matomo-DB |

Matomo-DB | Installation of system tables failed! Examine the logs in

Matomo-DB | /var/lib/mysql/ for more information.

Matomo-DB |

Matomo-DB | The problem could be conflicting information in an external

Matomo-DB | my.cnf files. You can ignore these by doing:

Matomo-DB |

Matomo-DB | shell> /usr/bin/mariadb-install-db --defaults-file=~/.my.cnf

Matomo-DB |

Matomo-DB | You can also try to start the mariadbd daemon with:

Matomo-DB |

Matomo-DB | shell> /usr/sbin/mariadbd --skip-grant-tables --general-log &

Matomo-DB |

Matomo-DB | and use the command line tool /usr/bin/mariadb

Matomo-DB | to connect to the mysql database and look at the grant tables:

Matomo-DB |

Matomo-DB | shell> /usr/bin/mariadb -u root mysql

Matomo-DB | MariaDB> show tables;

Matomo-DB |

Matomo-DB | Try '/usr/sbin/mariadbd --help' if you have problems with paths. Using

Matomo-DB | --general-log gives you a log in /var/lib/mysql/ that may be helpful.

Matomo-DB |

Matomo-DB | The latest information about mariadb-install-db is available at

Matomo-DB | https://mariadb.com/kb/en/installing-system-tables-mysql_install_db

Matomo-DB | You can find the latest source at https://downloads.mariadb.org and

Matomo-DB | the maria-discuss email list at https://launchpad.net/~maria-discuss

Matomo-DB |

Matomo-DB | Please check all of the above before submitting a bug report

Matomo-DB | at https://mariadb.org/jira

Matomo-DB |

Matomo-DB exited with code 1

2023-12-29 2:43:11 0 [Note] InnoDB: IO Error: 5during write of 16384 bytes, for file ./matomodb/matomo_log_visit.ibd(16), returned 0

Docker: error after starting the MySQL container: [ERROR] --initialize specified but the data directory has files in it. Abort

2023-12-29 11:14:38+00:00 [Note] [Entrypoint]: Entrypoint script for MySQL Server 5.7.44-1.el7 started.

2023-12-29 11:14:39+00:00 [Note] [Entrypoint]: Switching to dedicated user 'mysql'

2023-12-29 11:14:39+00:00 [Note] [Entrypoint]: Entrypoint script for MySQL Server 5.7.44-1.el7 started.

2023-12-29 11:14:39+00:00 [Note] [Entrypoint]: Initializing database files

2023-12-29T11:14:39.612526Z 0 [Warning] TIMESTAMP with implicit DEFAULT value is deprecated. Please use --explicit_defaults_for_timestamp server option (see documentation for more details).

2023-12-29T11:14:39.615112Z 0 [ERROR] --initialize specified but the data directory has files in it. Aborting.

2023-12-29T11:14:39.615174Z 0 [ERROR] Aborting

Rescuing MariaDB:

2023-12-29 13:00:07+00:00 [Note] [Entrypoint]: Switching to dedicated user 'mysql'

Matomo-DB | 2023-12-29 13:00:07+00:00 [Note] [Entrypoint]: Entrypoint script for MariaDB Server 1:11.2.2+maria~ubu2204 started.

Matomo-DB | 2023-12-29 13:00:07+00:00 [Note] [Entrypoint]: Initializing database files

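It turned out to be a permissions problem: when MariaDB is run via docker-compose, the mysql user needs execute permission on the mapped directories!

MariaDB started successfully! Next problem: Matomo itself would not run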

You don't have permission to access this resource.Server unable to read htaccess file, denying access to be safe

Matomo | [Fri Dec 29 21:28:33.351828 2023] [core:crit] [pid 53] (13)Permission denied: [client 172.21.0.1:5230] AH00529: /var/www/html/.htaccess pcfg_openfile: unable to check htaccess file, ensure it is readable and that '/var/www/html/' is executable, referer: https://query.carlzeng.com:3/

unable to check htaccess file, ensure it is readable and that '/var/www/html/' is executable

Grant 777 permissions on the matomo folder

MariaDB repaired successfully
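Mode 777 works, but it lets every user on the host write to the database files. A narrower option is to make the mapped directory owned by the UID the database runs as inside the container. The sketch below only inspects the directory; `check_datadir_owner` is a hypothetical helper, and the default UID 999 is an assumption that should be confirmed for the image in use (for example with `docker exec <container> id mysql` while it is running).

```shell
# Report owner and mode of a bind-mounted data directory and succeed only
# when the numeric owner matches the UID the database runs as.
# check_datadir_owner is a hypothetical helper; 999 is an assumed UID.
check_datadir_owner() {
    dir=$1
    want_uid=${2:-999}
    owner=$(stat -c '%u' "$dir")
    mode=$(stat -c '%a' "$dir")
    echo "$dir owner=$owner mode=$mode"
    [ "$owner" = "$want_uid" ]
}

# If the check fails, a narrower fix than 777 (as root, path is illustrative):
#   chown -R 999:999 ./db
#   chmod 750 ./db
```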

MySQL was rebuilt successfully, but with data loss: I don't know how to recover the data from 12/3 to 12/28...

After fixing the permissions, it shows:

Go to /www/server/panel/data/compose/yourls_service/template and run docker-compose up -d again

PVE showed that the disk had not been mounted properly; in that case run

mount /dev/sdd2 /mnt/usbToshiBa

How to configure and limit log generation in PVE 8.0

You can limit it by editing "#SystemMaxUse=" to something like "SystemMaxUse=100M" in "/etc/systemd/journald.conf" and then do a:

systemctl restart systemd-journald
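The same edit can be scripted so that it works whether the key is still commented out, already set, or missing. A minimal sketch, assuming GNU sed; `set_journald_max_use` is a hypothetical helper name.

```shell
# Set SystemMaxUse in a journald.conf-style file.
# set_journald_max_use is a hypothetical helper name.
set_journald_max_use() {
    conf=$1
    limit=$2
    if grep -Eq '^#?SystemMaxUse=' "$conf"; then
        # Replace the commented-out or existing line in place
        sed -i -E "s/^#?SystemMaxUse=.*/SystemMaxUse=$limit/" "$conf"
    else
        # Key not present: append it (journald.conf has only the [Journal] section)
        printf 'SystemMaxUse=%s\n' "$limit" >> "$conf"
    fi
}

# Example (as root):
#   set_journald_max_use /etc/systemd/journald.conf 100M
#   systemctl restart systemd-journald
```

Unlike the one-off `journalctl --vacuum-size` cleanup, this limit is persistent and journald enforces it from then on.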

The next step is to clean up disk usage in the Debian system

root@lgkdz:/var/lib/vz# du -s /var/lib/vz/images/* | sort -nr

51647072 /var/lib/vz/images/101

25876720 /var/lib/vz/images/105

Cleaning up the apt cache on Debian

How to clear the APT cache and delete everything from /var/cache/apt/archives/

The clean command clears out the local repository of retrieved package files. It removes everything but the lock file from /var/cache/apt/archives/ and /var/cache/apt/archives/partial/. The syntax is:

sudo apt clean

OR

sudo apt-get clean

Delete all useless files from the APT cache

The syntax is as follows to delete only the package files in /var/cache/apt/archives/ that can no longer be downloaded:

sudo apt autoclean

OR

sudo apt-get autoclean

docker system prune

How to schedule daily restarts of Docker containers

Scheduled restarts for the disk-hungry containers on the NAS

echo "0 3 * * * root docker restart embyserver" >> /etc/cron.d/my-cron


echo "11 3 * * * root docker restart portainer" >> /etc/cron.d/my-cron

echo "16 3 * * * root docker restart it-tools" >> /etc/cron.d/my-cron

echo "21 3 * * * root docker restart espeakbox" >> /etc/cron.d/my-cron

echo "26 3 * * * root docker restart book-searcher" >> /etc/cron.d/my-cron

echo "31 3 * * * root docker restart aria2-webui" >> /etc/cron.d/my-cron

echo "36 3 * * * root docker restart alistaria2-webui" >> /etc/cron.d/my-cron

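20230109: added more restarts

Keep watching: are these Docker containers really restarted on schedule?

Answer: yes, they are now restarted on schedule every day.

Scheduled restarts for the disk-hungry containers on Debian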

#At 02:00am, only on Monday

echo "0 2 * * 1 root docker restart iptvchecker-website-1" >> /etc/cron.d/my-cron

echo "0 3 * * * root docker restart frps" >> /etc/cron.d/my-cron

echo "10 3 * * * root docker restart chatgpt-next-web" >> /etc/cron.d/my-cron

echo "15 3 * * * root docker restart hideipnetwork" >> /etc/cron.d/my-cron

echo "20 3 * * * root docker restart mmPlayer" >> /etc/cron.d/my-cron

echo "25 3 * * * root docker restart hysteria" >> /etc/cron.d/my-cron
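Because `>>` always appends, running the same echo twice leaves a duplicate line in /etc/cron.d/my-cron. A minimal sketch of an idempotent variant; `add_restart_job` is a hypothetical helper name.

```shell
# Append a "docker restart" entry to a cron.d-style file only when the
# exact line is not already present. add_restart_job is a hypothetical name.
add_restart_job() {
    cron_file=$1
    schedule=$2      # the five cron time fields, e.g. "0 3 * * *"
    container=$3
    line="$schedule root docker restart $container"
    touch "$cron_file"
    # -x: match the whole line, -F: treat the pattern as a fixed string
    grep -qxF -- "$line" "$cron_file" || printf '%s\n' "$line" >> "$cron_file"
}

# Example (as root):
#   add_restart_job /etc/cron.d/my-cron "0 3 * * *" frps
#   add_restart_job /etc/cron.d/my-cron "0 2 * * 1" iptvchecker-website-1
```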

How to resize /dev/sda5?

root@lgkdz:~# df -hT

Filesystem Type Size Used Avail Use% Mounted on

udev devtmpfs 7.7G 0 7.7G 0% /dev

tmpfs tmpfs 1.6G 880K 1.6G 1% /run

/dev/mapper/pve-root ext4 109G 85G 19G 82% /

tmpfs tmpfs 7.8G 46M 7.7G 1% /dev/shm

tmpfs tmpfs 5.0M 0 5.0M 0% /run/lock

/dev/sdb2 vfat 1022M 352K 1022M 1% /boot/efi

/dev/fuse fuse 128M 16K 128M 1% /etc/pve

tmpfs tmpfs 1.6G 0 1.6G 0% /run/user/0

Mounting a new disk in PVE

ls /dev/disk/by-id

qm set 105 -sata2 /dev/disk/by-id/ata-Kingchuxing_512GB_2023050801426

qm set 105 -sata3 /dev/disk/by-id/usb-TOSHIBA_External_USB_3.0_20140612002491C-0:0

# These commands attach a disk to a specific VM;

# what I need is to mount it on PVE itself!

fdisk /dev/sdc

Format

mkfs.ext4 /dev/sdc2

/dev/sdc2 contains a hfsplus file system labelled 'Time Machine Backups'

Proceed anyway? (y,N) y

Creating filesystem with 244106668 4k blocks and 61030400 inodes

Filesystem UUID: 3991ce4f-dcec-4b28-9370-3ebb751c1912

Superblock backups stored on blocks:

32768, 98304, 163840, 229376, 294912, 819200, 884736, 1605632, 2654208,

4096000, 7962624, 11239424, 20480000, 23887872, 71663616, 78675968,

102400000, 214990848

Allocating group tables: done

Writing inode tables: done

Creating journal (262144 blocks):

done

Writing superblocks and filesystem accounting information: done

mount /dev/sdc2 /mnt/usbToshiBa1T

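Still to do: test PVE's GPU passthrough and make sure the screen at boot shows PVE itself, so that if the following command turns out to be wrong (and the machine fails to boot) the recovery steps can still be carried out:

echo /dev/sdc2 /mnt/usbToshiBa1T ext4 defaults 0 0 >> /etc/fstab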

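If you make a mistake here, PVE may fail to boot. In that case connect a monitor during boot, enter the repair mode (repair filesystem), and type the root password to get in.

At this point / is mounted read-only, so editing /etc/fstab fails with a "cannot save (read-only)" message. Remount / as read-write with: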

mount -o remount,rw,auto /

Then /etc/fstab can be edited. After a reboot the system starts normally.

After that, simply reboot PVE

reboot

This shows that the mount succeeded and the new disk is ready to use

> df -hT

Filesystem Type Size Used Avail Use% Mounted on

udev devtmpfs 7.7G 0 7.7G 0% /dev

tmpfs tmpfs 1.6G 904K 1.6G 1% /run

/dev/mapper/pve-root ext4 109G 85G 19G 82% /

tmpfs tmpfs 7.8G 46M 7.7G 1% /dev/shm

tmpfs tmpfs 5.0M 0 5.0M 0% /run/lock

/dev/sdb2 vfat 1022M 352K 1022M 1% /boot/efi

/dev/fuse fuse 128M 16K 128M 1% /etc/pve

tmpfs tmpfs 1.6G 0 1.6G 0% /run/user/0

/dev/sdc2 ext4 916G 12K 869G 1% /mnt/usbToshiBa1T

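References for this section:

The next day, sdc was gone and the disk had become sdd.

Re-plugging the USB cable still got it detected as sdd. Removed yesterday's sdc2 mount.

In the PVE UI, go to Disks > /dev/sdd2 and click 'Wipe Disk'; the Usage column changed from ext4 (yesterday's format) to No.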

umount /mnt/usbToshiBa1T

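In the UI (under Disks > LVM), create a new Volume Group.

After a long time poking around with top and ps, it was the restart command inside PVE that finally did the trick 😃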

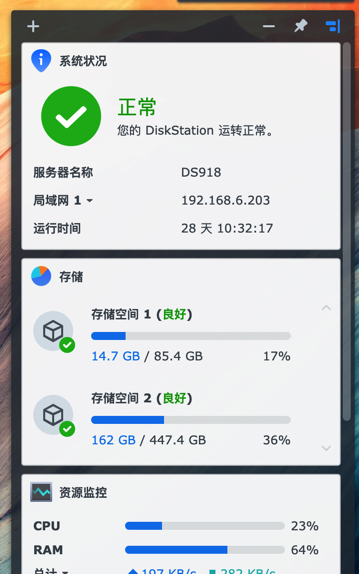

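Freeing up space on the new disk in Synology

In daily use I manually delete the files I no longer need, yet disk usage kept climbing, first to 75% and then to 82% (which triggers an alert).

Today I found out (I had actually cleaned this up once before) that files deleted in Synology are moved to the 'Recycle Bin' by default, and the space is not freed until I manually delete the '#recovery' folder in the root of that disk. Disk usage dropped back to 36%. Happy 😃

Assigning PVE's new USB disk to Debian

Debian runs on PVE as an LXC container. In the PVE UI, click Debian11 > 'Resources' > Add 'Mount Point'

Allocated 400G of the USB Toshiba disk to Debian.

Then I backed up Debian and OpenWrt into that 400G space on the USB Toshiba disk. Once both backups had finished, PVE again showed the disk status as unknown

Clicking the disk and selecting 'Backups' shows this error: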

mkdir /mnt/usbToshiBa//dump: Input/output error at /usr/share/perl5/PVE/Storage/Plugin.pm line 1389. (500)

Re-plugged the USB Toshiba portable drive; the status is still unknown

Fix:

Switch to the PVE shell and run

mount /dev/sdc2 /mnt/usbToshiBa

# 20240423/CZ: trying to get automatic mounting by editing /etc/fstab; not sure yet whether it will mount automatically next time

echo /dev/sdc2 /mnt/usbToshiBa ext4 defaults 0 0 >> /etc/fstab
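One caveat with this entry: the USB disk showed up as sdc one day and sdd the next, so a /dev/sdX name in fstab may point at nothing, or at the wrong disk, after a reboot. Keying the entry on the file system UUID avoids that. A minimal sketch; `fstab_entry` is a hypothetical helper name, and the `nofail` option is added on the assumption that the host should still boot when the USB disk is unplugged.

```shell
# Build an fstab entry keyed on the file system UUID instead of /dev/sdX.
# fstab_entry is a hypothetical helper name.
fstab_entry() {
    uuid=$1
    mount_point=$2
    fs_type=${3:-ext4}
    # nofail : booting continues even if the disk is missing
    # "0 2"  : no dump; fsck runs after the root file system
    printf 'UUID=%s %s %s defaults,nofail 0 2\n' "$uuid" "$mount_point" "$fs_type"
}

# Example (as root; look the UUID up first, do not guess it):
#   uuid=$(blkid -s UUID -o value /dev/sdc2)
#   fstab_entry "$uuid" /mnt/usbToshiBa >> /etc/fstab
#   mount -a    # mounts everything listed in fstab now, so a typo shows up before the next reboot
```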


Zhejiang Public Network Security Filing No. 33010602011771
