python:爬虫初体验

最近帮老妈在58上找保姆的工作,无奈58上的中介服务太多 了,我想找一些私人发布的保姆招聘信息,所以倒腾了一个python的爬虫,将数据爬出来之后通过Excel进行过滤中介,因为代码实在是太简单,这里就不解释了

代码不多,如下:

#!/usr/bin/python #coding=utf-8 import requests from bs4 import BeautifulSoup import xlwt url1 = "https://gz.58.com/job/pn" url2 = "/?key=%E4%BF%9D%E5%A7%86&final=1&jump=1&PGTID=0d302408-0000-3bd9-3b86-d29895d9ee5d&ClickID=3" book = xlwt.Workbook(encoding='utf-8') sheet = book.add_sheet(u'qingyuan',cell_overwrite_ok=True) kk = 0 for i in range(1,54): print("*******************第"+str(i)+"页****************************") html = requests.get(url1+str(i)+url2) soup = BeautifulSoup(html.text, "lxml") address = soup.select('#list_con > li.job_item > div.job_title > div.job_name > a > span.address') jobTitle = soup.select('#list_con > li.job_item > div.job_title > div.job_name > a > span.name') salary = soup.select('#list_con > li.job_item > div.job_title > p.job_salary') company = soup.select('#list_con > li.job_item > div.job_comp > div.comp_name > a') link = soup.select("#list_con > li.job_item > div.job_title > div.job_name > a") if len(address)==0: print("*******************第" + i + "页被拦截****************************") break for j in range(len(address)): sheet.write(j+kk, 0, address[j].get_text()) sheet.write(j+kk, 1, jobTitle[j].get_text()) sheet.write(j+kk, 2, salary[j].get_text()) sheet.write(j+kk, 3, company[j].get('title')) sheet.write(j+kk, 4, link[j].get('href')) kk = kk+len(address) path = 'E:/58广州保姆招聘信息爬虫结果.xls' book.save(path)

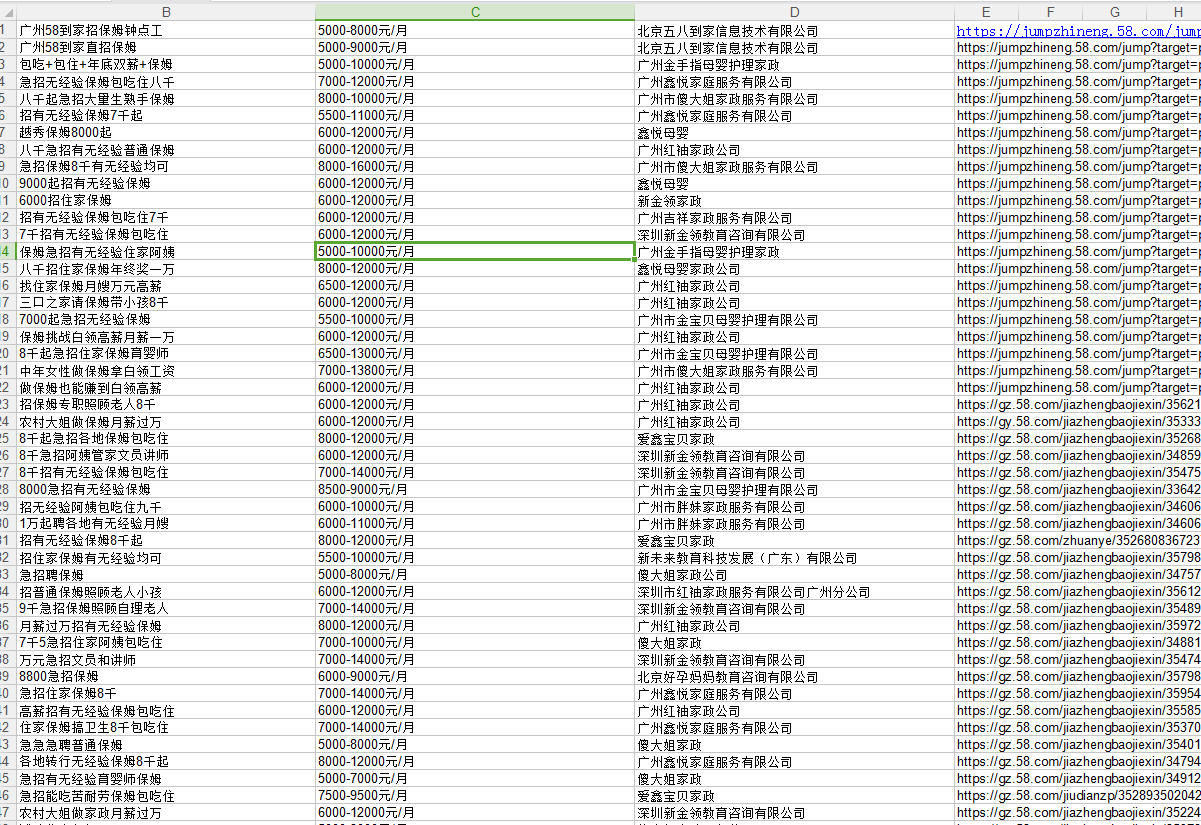

这是最后排出来的Excel的数据样子