Error stack trace:

2017-06-15 16:24:50,449 INFO [main] org.apache.sqoop.mapreduce.db.DBRecordReader: Executing query: select "CTJX60","CTJX61","CTJX62","CTJX63","CTJX64","CTJX65","CTJX66","CTJX67","CTJX68","CTJX69","CTJX70","CTJX71","CTJX72","CTJX73","CTJX74","CTJX75","CTJX76","CTJX77","CTJX78","CTJX79","CTJX80","CTJX81","CTJX82","CTJX83","CTJX84","CTJX85","CTJX86","CTJX87","CTJX88","CTJX89","CTJX90","CTJX91","CTJX92","CTJX93","CTJX94","CTJX95","CTJX96","CTJX97","CTJX98","CTJX99","CTJX55","IID","IEXAM_IID","CTSBGLX","CTJX01","CTJX02","CTJX03","CTJX04","CTJX05","CTJX06","CTJX07","CTJX08","CTJX09","CTJX10","CTJX11","CTJX12","CTJX13","CTJX14","CTJX15","CTJX16","CTJX17","CTJX18","CTJX19","CTJX20","CTJX21","CTJX22","CTJX23","CTJX24","CTJX25","CTJX26","CTJX27","CTJX28","CTJX29","CTJX30","CTJX31","CTJX32","CTJX33","CTJX34","CTJX35","CTJX36","CTJX37","CTJX38","CTJX39","CTJX40","CTJX41","CTJX42","CTJX43","CTJX44","CTJX45","CTJX46","CTJX47","CTJX48","CTJX49","CTJX50","CTJX51","CTJX52","CTJX53","CTJX54","CTJX56","CTJX57","CTJX58","CTJX59" from RIS."ICNRIS_UIS_EXAM_TJX" tbl where ( 1=1 ) AND ( 1=1 )
2017-06-15 16:24:50,589 INFO [Thread-13] org.apache.sqoop.mapreduce.AutoProgressMapper: Auto-progress thread is finished. keepGoing=false
2017-06-15 16:24:50,604 FATAL [main] org.apache.hadoop.mapred.YarnChild: Error running child : java.lang.OutOfMemoryError: Java heap space
    at oracle.jdbc.driver.OracleStatement.prepareAccessors(OracleStatement.java:870)
    at oracle.jdbc.driver.OracleStatement.executeMaybeDescribe(OracleStatement.java:1047)
    at oracle.jdbc.driver.T4CPreparedStatement.executeMaybeDescribe(T4CPreparedStatement.java:850)
    at oracle.jdbc.driver.OracleStatement.doExecuteWithTimeout(OracleStatement.java:1134)
    at oracle.jdbc.driver.OraclePreparedStatement.executeInternal(OraclePreparedStatement.java:3339)
    at oracle.jdbc.driver.OraclePreparedStatement.executeQuery(OraclePreparedStatement.java:3384)
    at org.apache.sqoop.mapreduce.db.DBRecordReader.executeQuery(DBRecordReader.java:111)
    at org.apache.sqoop.mapreduce.db.DBRecordReader.nextKeyValue(DBRecordReader.java:235)
    at org.apache.hadoop.mapred.MapTask$NewTrackingRecordReader.nextKeyValue(MapTask.java:556)
    at org.apache.hadoop.mapreduce.task.MapContextImpl.nextKeyValue(MapContextImpl.java:80)
    at org.apache.hadoop.mapreduce.lib.map.WrappedMapper$Context.nextKeyValue(WrappedMapper.java:91)
    at org.apache.hadoop.mapreduce.Mapper.run(Mapper.java:145)
    at org.apache.sqoop.mapreduce.AutoProgressMapper.run(AutoProgressMapper.java:64)
    at org.apache.hadoop.mapred.MapTask.runNewMapper(MapTask.java:787)
    at org.apache.hadoop.mapred.MapTask.run(MapTask.java:341)
    at org.apache.hadoop.mapred.YarnChild$2.run(YarnChild.java:164)
    at java.security.AccessController.doPrivileged(Native Method)
    at javax.security.auth.Subject.doAs(Subject.java:422)
    at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1657)
    at org.apache.hadoop.mapred.YarnChild.main(YarnChild.java:158)

Solution: lower the value of Sqoop's --fetch-size parameter.
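As a rough illustration of the fix, the sketch below assembles a `sqoop import` command with a reduced `--fetch-size`. Only the table name comes from the log above; the JDBC URL, user name, password file, target directory, the single mapper, and the value 100 are placeholders assumed for the example, not the settings actually used here. The script only prints the command so it can be reviewed before running.

```shell
#!/bin/sh
# Sketch only: connection details below are hypothetical placeholders.
FETCH_SIZE=100   # rows fetched per round trip; Sqoop's default is 1000

SQOOP_CMD="sqoop import \
--connect jdbc:oracle:thin:@//db-host.example:1521/ORCL \
--username ris_reader \
--password-file /user/etl/.oracle.pw \
--table RIS.ICNRIS_UIS_EXAM_TJX \
--fetch-size ${FETCH_SIZE} \
--target-dir /tmp/icnris_uis_exam_tjx \
-m 1"

# Print the command for review; use: eval "$SQOOP_CMD" to actually run it.
echo "$SQOOP_CMD"
```

A smaller fetch size means more round trips to the database, so it is probably worth starting from a moderate value and lowering it only as far as needed to stop the OutOfMemoryError.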

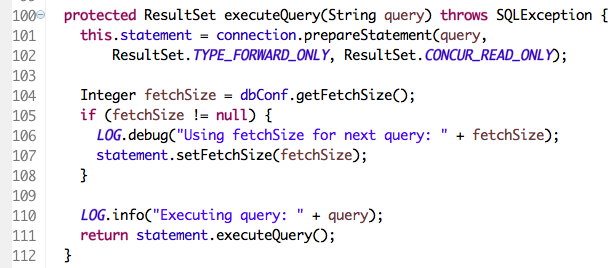

How I got there: while reading the Sqoop source code I came across fetchSize, which led me to try adjusting this parameter:

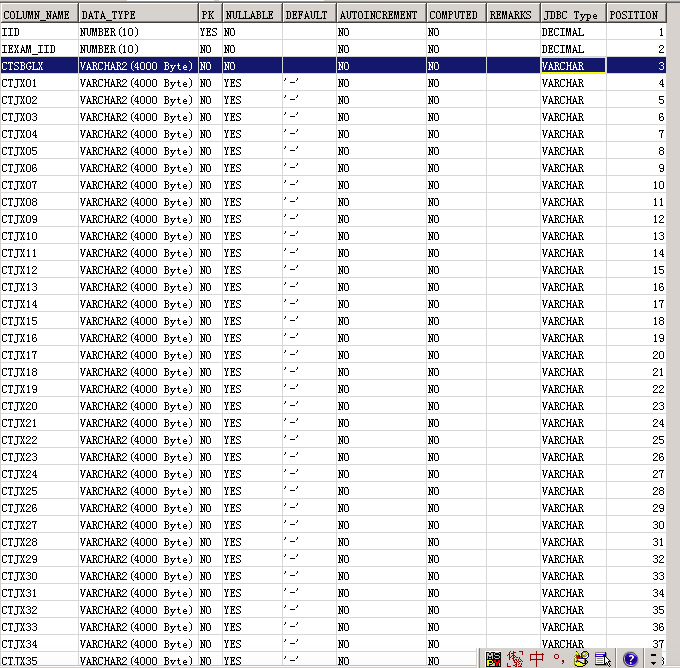

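P.S. Increasing the mapper memory settings did not help. I had tried: -D mapreduce.map.memory.mb=8192 -D yarn.app.mapreduce.am.resource.mb=6144. The problem occurs while fetching data from the database, which matches the stack trace: the OutOfMemoryError is thrown inside OracleStatement.prepareAccessors during executeQuery. The source table does not have that many columns, but each column is very wide.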
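A back-of-the-envelope estimate helps explain why wide columns matter more than the row count. Older Oracle JDBC drivers are documented to preallocate fetch buffers sized roughly as fetch size × number of columns × maximum column width, with about 2 bytes per character for character columns. The sketch below applies that approximation. The 102 columns are counted from the query in the log; the width of 4000 characters per column is purely an assumption for illustration, since the real column definitions are not shown here, and the exact allocation depends on the driver version.

```shell
#!/bin/sh
# Rough estimate of the JDBC fetch buffer, in whole MiB.
# Usage: estimate_mib FETCH_SIZE COLUMNS MAX_CHARS_PER_COLUMN
# Assumes 2 bytes per character (an approximation, not an exact driver formula).
estimate_mib() {
  echo $(( $1 * $2 * $3 * 2 / 1048576 ))
}

# 102 columns as in the logged query; 4000 chars per column is hypothetical.
echo "fetch-size 1000: $(estimate_mib 1000 102 4000) MiB"   # 778 MiB
echo "fetch-size 100:  $(estimate_mib 100 102 4000) MiB"    # 77 MiB
```

Under these assumptions the default fetch size of 1000 would need several hundred MiB for a single statement's buffers, while a fetch size of 100 needs about a tenth of that.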
