k8s notes (installation; continuously updated)

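The kubeadm approach is billed as a one-command install, and many people have tried it and succeeded without trouble. In my case, though, problems with my OS setup led me into quite a few pitfalls.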

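- The advantage of kubeadm is that it automatically configures the required services and sets up secure authentication by default. etcd, apiserver, controller-manager, scheduler, and kube-proxy all run as pods rather than as operating-system processes, so their state can be checked continuously and they can be migrated (I am not sure whether migration actually works).

- kubeadm ships configurations for many components that can be used as-is.

- The drawbacks are that it lacks a high-availability cluster mode and that it is currently positioned as beta.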

[kubeadm] WARNING: kubeadm is in beta, please do not use it for production clusters.

- Preparation

Disable SELinux

vi /etc/selinux/config (set SELINUX=disabled)
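The manual vi edit can also be scripted. The sketch below is one possible way to do it, assuming the config file contains a line beginning with `SELINUX=`. The function name `disable_selinux_in` is made up for illustration. The file change only takes effect after a reboot; `setenforce 0` switches the running system to permissive mode in the meantime.

```shell
# Hypothetical helper: rewrite the SELINUX= line of the given config file.
# It goes through a temp file so it works with any POSIX sed and keeps
# the original file (and any symlink pointing at it) in place.
disable_selinux_in() {
    cfg="$1"
    tmp="$(mktemp)" || return 1
    sed 's/^SELINUX=.*/SELINUX=disabled/' "$cfg" > "$tmp" && cat "$tmp" > "$cfg"
    rc=$?
    rm -f "$tmp"
    return $rc
}

# Usage on a real host (as root):
#   disable_selinux_in /etc/selinux/config
#   setenforce 0    # permissive until the next reboot
```

The pattern `^SELINUX=` leaves the `SELINUXTYPE=` line alone, because the character after `SELINUX` there is not `=`.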

Disable firewalld and iptables

systemctl disable firewalld
systemctl stop firewalld
systemctl disable iptables
systemctl stop iptables

Set the hostname first

hostnamectl set-hostname k8s-1

Edit the /etc/hosts file

cat /etc/hosts
127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
192.168.0.105 k8s-1
192.168.0.106 k8s-2
192.168.0.107 k8s-3
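Since the same three entries have to exist on every machine, a small idempotent helper can save some typing. This is only a sketch: `add_host_entry` is a hypothetical name, and it assumes the usual hosts format of an IP address followed by one or more names.

```shell
# Hypothetical helper: append "IP NAME" to a hosts file unless NAME is
# already listed as a name on some non-comment line.
add_host_entry() {
    file="$1"; ip="$2"; name="$3"
    if awk -v n="$name" '
        !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$file"; then
        return 0    # already present, nothing to do
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Usage on a real host (as root):
#   add_host_entry /etc/hosts 192.168.0.105 k8s-1
#   add_host_entry /etc/hosts 192.168.0.106 k8s-2
#   add_host_entry /etc/hosts 192.168.0.107 k8s-3
```

Running it twice leaves the file unchanged, so it is safe to put in a provisioning script.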

Change the network configuration to a static IP, then run

service network restart

- Install docker, kubectl, kubelet, kubeadm

Install docker

yum install docker

Verify with docker version

[root@k8s-master1 ~]# service docker start
Redirecting to /bin/systemctl start docker.service
[root@k8s-master1 ~]# docker version
Client:
 Version: 1.12.6
 API version: 1.24
 Package version: docker-1.12.6-61.git85d7426.el7.centos.x86_64
 Go version: go1.8.3
 Git commit: 85d7426/1.12.6
 Built: Tue Oct 24 15:40:21 2017
 OS/Arch: linux/amd64

Server:
 Version: 1.12.6
 API version: 1.24
 Package version: docker-1.12.6-61.git85d7426.el7.centos.x86_64
 Go version: go1.8.3
 Git commit: 85d7426/1.12.6
 Built: Tue Oct 24 15:40:21 2017
 OS/Arch: linux/amd64

Enable at boot

[root@k8s-master1 ~]# systemctl enable docker
Created symlink from /etc/systemd/system/multi-user.target.wants/docker.service to /usr/lib/systemd/system/docker.service.
[root@k8s-master1 ~]# systemctl start docker

Create the yum repo file for Kubernetes

[root@k8s-1 network-scripts]# cat /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0

Install kubelet, kubectl, kubernetes-cni, and kubeadm; the default install is version 1.7.5

yum install kubectl kubelet kubernetes-cni kubeadm

sysctl net.bridge.bridge-nf-call-iptables=1
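The `sysctl` command above only changes the running kernel, so the value is lost on reboot. A minimal sketch for persisting it follows; the helper name `write_bridge_sysctl` and the file name `k8s.conf` are arbitrary choices made here, not something taken from the steps above.

```shell
# Hypothetical helper: write the bridge netfilter setting to a sysctl drop-in file.
write_bridge_sysctl() {
    printf 'net.bridge.bridge-nf-call-iptables = 1\n' > "$1"
}

# Usage on a real host (as root):
#   write_bridge_sysctl /etc/sysctl.d/k8s.conf
#   sysctl --system    # reload all sysctl configuration files
```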

If you need a different version, remove the installed packages with yum remove first.

Edit the kubelet startup configuration file, mainly to set --cgroup-driver to cgroupfs (it only has to match the cgroup driver that /usr/lib/systemd/system/docker.service configures for docker; if the two already match, no change is needed!)

[root@k8s-1 bin]# cat /etc/systemd/system/kubelet.service.d/10-kubeadm.conf
[Service]
Environment="KUBELET_KUBECONFIG_ARGS=--kubeconfig=/etc/kubernetes/kubelet.conf --require-kubeconfig=true"
Environment="KUBELET_SYSTEM_PODS_ARGS=--pod-manifest-path=/etc/kubernetes/manifests --allow-privileged=true"
Environment="KUBELET_NETWORK_ARGS=--network-plugin=cni --cni-conf-dir=/etc/cni/net.d --cni-bin-dir=/opt/cni/bin"
Environment="KUBELET_DNS_ARGS=--cluster-dns=10.96.0.10 --cluster-domain=cluster.local"
Environment="KUBELET_AUTHZ_ARGS=--authorization-mode=Webhook --client-ca-file=/etc/kubernetes/pki/ca.crt"
Environment="KUBELET_CADVISOR_ARGS=--cadvisor-port=0"
Environment="KUBELET_CGROUP_ARGS=--cgroup-driver=cgroupfs"
ExecStart=
ExecStart=/usr/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_SYSTEM_PODS_ARGS $KUBELET_NETWORK_ARGS $KUBELET_DNS_ARGS $KUBELET_AUTHZ_ARGS $KUBELET_CADVISOR_ARGS $KUBELET_CGROUP_ARGS $KUBELET_EXTRA_ARGS
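Whether the file needs editing depends on what docker itself reports, so it can help to compare the two values before touching anything. The sketch below assumes that `docker info` prints a `Cgroup Driver:` line (docker 1.12 does, as far as I know); both function names are hypothetical.

```shell
# Hypothetical helper: print the --cgroup-driver value found in a kubelet drop-in file.
kubelet_cgroup_driver() {
    sed -n 's/.*--cgroup-driver=\([A-Za-z0-9_-]*\).*/\1/p' "$1" | head -n 1
}

# Hypothetical helper: read `docker info` text on stdin and print its "Cgroup Driver" value.
docker_cgroup_driver() {
    sed -n 's/^[[:space:]]*Cgroup Driver:[[:space:]]*//p' | head -n 1
}

# Usage on a real host:
#   k="$(kubelet_cgroup_driver /etc/systemd/system/kubelet.service.d/10-kubeadm.conf)"
#   d="$(docker info 2>/dev/null | docker_cgroup_driver)"
#   if [ "$k" = "$d" ]; then echo "drivers match: $k"; else echo "mismatch: kubelet=$k docker=$d"; fi
```

If the file is changed, systemd has to re-read it (`systemctl daemon-reload`) before kubelet is restarted.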

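Start docker and kubelet

systemctl enable docker
systemctl enable kubelet
systemctl start docker
systemctl start kubelet

- Download images

Before running kubeadm, a set of images has to be downloaded locally. To find out their names and versions, run kubeadm init and then interrupt it.

The manifests are generated under /etc/kubernetes/manifests, and grep can list the images in them, for example

grep 'gcr\.io' etcd.yaml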

image: gcr.io/google_containers/etcd-amd64:3.0.17
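To list the images from every manifest at once rather than file by file, something like the following could be used. It is a sketch that assumes each image appears on its own `image:` line in the generated YAML; `list_manifest_images` is a hypothetical name.

```shell
# Hypothetical helper: print the unique image references found in the
# *.yaml files of a directory (default: the kubeadm manifests directory).
list_manifest_images() {
    dir="${1:-/etc/kubernetes/manifests}"
    cat "$dir"/*.yaml 2>/dev/null |
        sed -n 's/^[[:space:]]*-\{0,1\}[[:space:]]*image:[[:space:]]*//p' |
        sort -u
}

# Usage on a real host, after an interrupted kubeadm init:
#   list_manifest_images
```

Lines such as `imagePullPolicy:` are not matched, because the pattern requires the colon directly after `image`.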

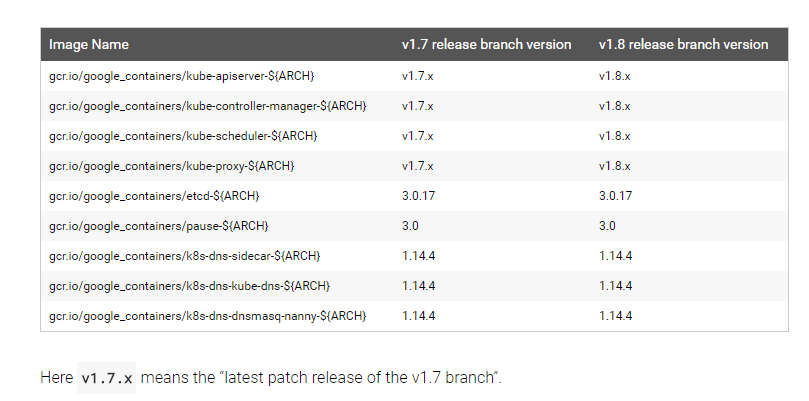

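So which images, and which versions, need to be downloaded? See the Kubernetes kubeadm manual at

https://kubernetes.io/docs/admin/kubeadm/

It lays out the versions and how they correspond fairly clearly.

How to get the images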

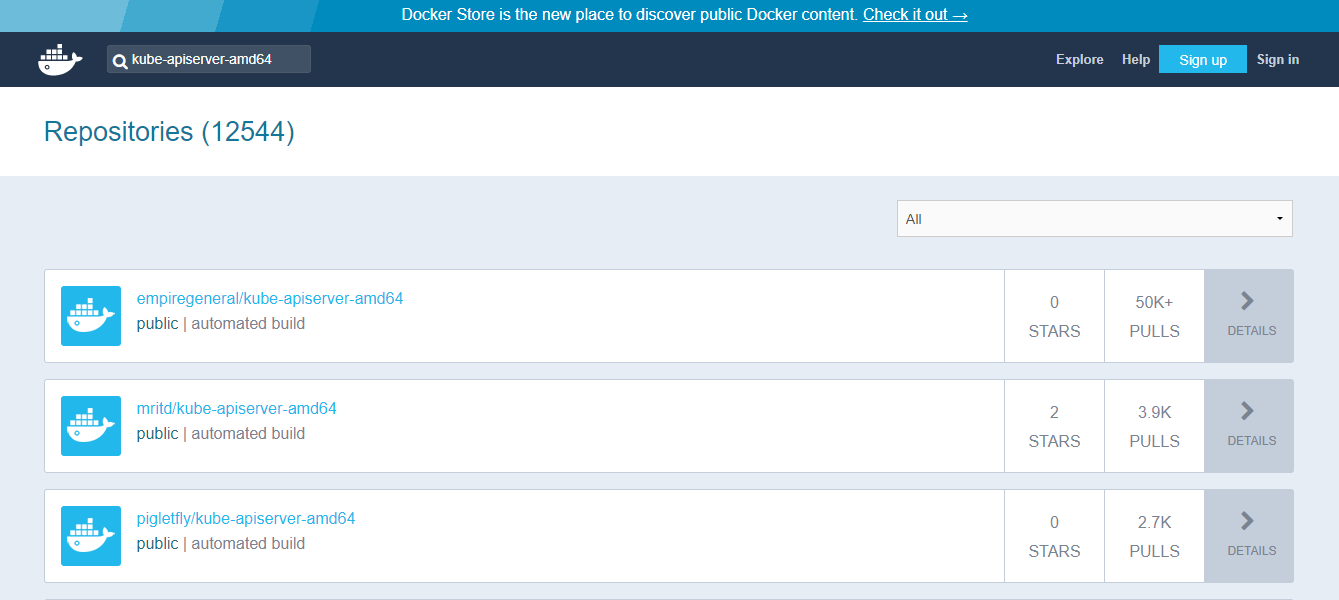

In mainland China gcr.io is blocked, so you either fetch the images through a proxy or find another way. My approach was to go to https://hub.docker.com/ and search for kube-apiserver-amd64, which lists images that other people have already built.

Pick the matching versions and pull them

docker pull cloudnil/etcd-amd64:3.0.17
docker pull cloudnil/pause-amd64:3.0
docker pull cloudnil/kube-proxy-amd64:v1.7.2
docker pull cloudnil/kube-scheduler-amd64:v1.7.2
docker pull cloudnil/kube-controller-manager-amd64:v1.7.2
docker pull cloudnil/kube-apiserver-amd64:v1.7.2
docker pull cloudnil/kubernetes-dashboard-amd64:v1.6.1
docker pull cloudnil/k8s-dns-sidecar-amd64:1.14.4
docker pull cloudnil/k8s-dns-kube-dns-amd64:1.14.4
docker pull cloudnil/k8s-dns-dnsmasq-nanny-amd64:1.14.4
docker tag cloudnil/etcd-amd64:3.0.17 gcr.io/google_containers/etcd-amd64:3.0.17
docker tag cloudnil/pause-amd64:3.0 gcr.io/google_containers/pause-amd64:3.0
docker tag cloudnil/kube-proxy-amd64:v1.7.2 gcr.io/google_containers/kube-proxy-amd64:v1.7.2
docker tag cloudnil/kube-scheduler-amd64:v1.7.2 gcr.io/google_containers/kube-scheduler-amd64:v1.7.2
docker tag cloudnil/kube-controller-manager-amd64:v1.7.2 gcr.io/google_containers/kube-controller-manager-amd64:v1.7.2
docker tag cloudnil/kube-apiserver-amd64:v1.7.2 gcr.io/google_containers/kube-apiserver-amd64:v1.7.2
docker tag cloudnil/kubernetes-dashboard-amd64:v1.6.1 gcr.io/google_containers/kubernetes-dashboard-amd64:v1.6.1
docker tag cloudnil/k8s-dns-sidecar-amd64:1.14.4 gcr.io/google_containers/k8s-dns-sidecar-amd64:1.14.4
docker tag cloudnil/k8s-dns-kube-dns-amd64:1.14.4 gcr.io/google_containers/k8s-dns-kube-dns-amd64:1.14.4
docker tag cloudnil/k8s-dns-dnsmasq-nanny-amd64:1.14.4 gcr.io/google_containers/k8s-dns-dnsmasq-nanny-amd64:1.14.4

The end result

[root@k8s-1 ~]# docker images
REPOSITORY  TAG  IMAGE ID  CREATED  SIZE
gcr.io/google_containers/kube-apiserver-amd64  v1.7.2  25c5958099a8  3 months ago  186.1 MB
gcr.io/google_containers/kube-controller-manager-amd64  v1.7.2  83d607ba9358  3 months ago  138 MB
gcr.io/google_containers/kube-scheduler-amd64  v1.7.2  6282cca6de74  3 months ago  77.18 MB
gcr.io/google_containers/kube-proxy-amd64  v1.7.2  69f8faa3d08d  3 months ago  114.7 MB
gcr.io/google_containers/k8s-dns-kube-dns-amd64  1.14.4  2d6a3bea02c4  3 months ago  49.38 MB
gcr.io/google_containers/k8s-dns-dnsmasq-nanny-amd64  1.14.4  13117b1d461f  3 months ago  41.41 MB
gcr.io/google_containers/k8s-dns-sidecar-amd64  1.14.4  c413c7235eb4  3 months ago  41.81 MB
gcr.io/google_containers/etcd-amd64  3.0.17  393e48d05c4e  4 months ago  168.9 MB
gcr.io/google_containers/kubernetes-dashboard-amd64  v1.6.1  c14ffb751676  4 months ago  134.4 MB
gcr.io/google_containers/pause-amd64  3.0  66c684b679d2  4 months ago  746.9 kB

- Initialize the master node

With the images ready, start the init

kubeadm init --kubernetes-version=v1.7.2 --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=0.0.0.0 --apiserver-cert-extra-sans=192.168.0.105,192.168.0.106,192.168.0.107,127.0.0.1,k8s-1,k8s-2,k8s-3,192.168.0.1 --skip-preflight-checks

[root@k8s-1 network-scripts]# kubeadm init --kubernetes-version=v1.7.2 --pod-network-cidr=10.244.0.0/12 --apiserver-advertise-address=0.0.0.0 --apiserver-cert-extra-sans=192.168.0.105,192.168.0.106,192.168.0.107,127.0.0.1,k8s-1,k8s-2,k8s-3,192.168.0.1 --skip-preflight-checks
[kubeadm] WARNING: kubeadm is in beta, please do not use it for production clusters.
[init] Using Kubernetes version: v1.7.2
[init] Using Authorization modes: [Node RBAC]
[preflight] Skipping pre-flight checks
[kubeadm] WARNING: starting in 1.8, tokens expire after 24 hours by default (if you require a non-expiring token use --token-ttl 0)
[certificates] Using the existing CA certificate and key.
[certificates] Using the existing API Server certificate and key.
[certificates] Using the existing API Server kubelet client certificate and key.
[certificates] Using the existing service account token signing key.
[certificates] Using the existing front-proxy CA certificate and key.
[certificates] Using the existing front-proxy client certificate and key.
[certificates] Valid certificates and keys now exist in "/etc/kubernetes/pki"
[kubeconfig] Using existing up-to-date KubeConfig file: "/etc/kubernetes/scheduler.conf"
[kubeconfig] Using existing up-to-date KubeConfig file: "/etc/kubernetes/admin.conf"
[kubeconfig] Using existing up-to-date KubeConfig file: "/etc/kubernetes/kubelet.conf"
[kubeconfig] Using existing up-to-date KubeConfig file: "/etc/kubernetes/controller-manager.conf"
[apiclient] Created API client, waiting for the control plane to become ready
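One detail worth noticing: the command shown before this transcript passes --pod-network-cidr=10.244.0.0/16, while the transcript itself uses 10.244.0.0/12. The address 10.244.0.0 does not sit on a /12 boundary (the enclosing /12 network is 10.240.0.0), and the stock flannel manifest is normally configured for 10.244.0.0/16. Nothing in these notes establishes that this caused the hang, but a quick sanity check on the CIDR is cheap. The sketch below uses a hypothetical helper name and plain POSIX shell arithmetic.

```shell
# Hypothetical helper: succeed if the address part of A.B.C.D/LEN has no
# host bits set, i.e. it really is the network address for that prefix.
cidr_is_aligned() (
    addr="${1%/*}"
    len="${1#*/}"
    old_ifs="$IFS"; IFS=.
    set -- $addr                 # split the dotted quad into $1..$4
    IFS="$old_ifs"
    ip=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
    host_mask=$(( (1 << (32 - len)) - 1 ))   # the low (32 - len) bits
    [ $(( ip & host_mask )) -eq 0 ]
)

# Usage:
#   cidr_is_aligned 10.244.0.0/16 && echo "ok"
#   cidr_is_aligned 10.244.0.0/12 || echo "host bits set, check the prefix length"
```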

Here comes the pitfall: it hangs on that last line. Check the logs with journalctl

journalctl -xeu kubelet > a

Oct 30 10:01:30 k8s-1 systemd[1]: Starting kubelet: The Kubernetes Node Agent...

-- Subject: Unit kubelet.service has begun start-up

-- Defined-By: systemd

-- Support: http://lists.freedesktop.org/mailman/listinfo/systemd-devel

--

-- Unit kubelet.service has begun starting up.

Oct 30 10:01:30 k8s-1 kubelet[4646]: I1030 10:01:30.326586 4646 feature_gate.go:144] feature gates: map[]

Oct 30 10:01:30 k8s-1 kubelet[4646]: error: failed to run Kubelet: invalid kubeconfig: stat /etc/kubernetes/kubelet.conf: no such file or directory

Oct 30 10:01:30 k8s-1 systemd[1]: kubelet.service: main process exited, code=exited, status=1/FAILURE

Oct 30 10:01:30 k8s-1 systemd[1]: Unit kubelet.service entered failed state.

Oct 30 10:01:30 k8s-1 systemd[1]: kubelet.service failed.

Oct 30 10:01:40 k8s-1 systemd[1]: kubelet.service holdoff time over, scheduling restart.

Oct 30 10:01:40 k8s-1 systemd[1]: Started kubelet: The Kubernetes Node Agent.

-- Subject: Unit kubelet.service has finished start-up

-- Defined-By: systemd

-- Support: http://lists.freedesktop.org/mailman/listinfo/systemd-devel

--

-- Unit kubelet.service has finished starting up.

--

-- The start-up result is done.

Oct 30 10:01:40 k8s-1 systemd[1]: Starting kubelet: The Kubernetes Node Agent...

-- Subject: Unit kubelet.service has begun start-up

-- Defined-By: systemd

-- Support: http://lists.freedesktop.org/mailman/listinfo/systemd-devel

--

-- Unit kubelet.service has begun starting up.

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.709684 4676 feature_gate.go:144] feature gates: map[]

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.712602 4676 client.go:72] Connecting to docker on unix:///var/run/docker.sock

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.712647 4676 client.go:92] Start docker client with request timeout=2m0s

Oct 30 10:01:40 k8s-1 kubelet[4676]: W1030 10:01:40.714086 4676 cni.go:189] Unable to update cni config: No networks found in /etc/cni/net.d

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.725461 4676 manager.go:143] cAdvisor running in container: "/"

Oct 30 10:01:40 k8s-1 kubelet[4676]: W1030 10:01:40.752809 4676 manager.go:151] unable to connect to Rkt api service: rkt: cannot tcp Dial rkt api service: dial tcp [::1]:15441: getsockopt: connection refused

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.762789 4676 fs.go:117] Filesystem partitions: map[/dev/mapper/cl-root:{mountpoint:/ major:253 minor:0 fsType:xfs blockSize:0} /dev/sda1:{mountpoint:/boot major:8 minor:1 fsType:xfs blockSize:0}]

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.763579 4676 manager.go:198] Machine: {NumCores:1 CpuFrequency:2496238 MemoryCapacity:1041182720 MachineID:a146a47b0c6b4c28a794c88309119e62 SystemUUID:B9DF3269-4A23-458F-8717-21EC1D216DD4 BootID:62e18038-ea14-438f-9688-e6a4abf265a1 Filesystems:[{Device:/dev/mapper/cl-root DeviceMajor:253 DeviceMinor:0 Capacity:39700664320 Type:vfs Inodes:19394560 HasInodes:true} {Device:/dev/sda1 DeviceMajor:8 DeviceMinor:1 Capacity:1063256064 Type:vfs Inodes:524288 HasInodes:true}] DiskMap:map[253:1:{Name:dm-1 Major:253 Minor:1 Size:2147483648 Scheduler:none} 253:2:{Name:dm-2 Major:253 Minor:2 Size:107374182400 Scheduler:none} 8:0:{Name:sda Major:8 Minor:0 Size:42949672960 Scheduler:cfq} 253:0:{Name:dm-0 Major:253 Minor:0 Size:39720058880 Scheduler:none}] NetworkDevices:[{Name:enp0s3 MacAddress:08:00:27:e2:ae:0a Speed:1000 Mtu:1500} {Name:virbr0 MacAddress:52:54:00:ed:58:71 Speed:0 Mtu:1500} {Name:virbr0-nic MacAddress:52:54:00:ed:58:71 Speed:0 Mtu:1500}] Topology:[{Id:0 Memory:1073274880 Cores:[{Id:0 Threads:[0] Caches:[{Size:32768 Type:Data Level:1} {Size:32768 Type:Instruction Level:1} {Size:262144 Type:Unified Level:2}]}] Caches:[{Size:3145728 Type:Unified Level:3}]}] CloudProvider:Unknown InstanceType:Unknown InstanceID:None}

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.765607 4676 manager.go:204] Version: {KernelVersion:3.10.0-514.21.1.el7.x86_64 ContainerOsVersion:CentOS Linux 7 (Core) DockerVersion:1.12.6 DockerAPIVersion:1.24 CadvisorVersion: CadvisorRevision:}

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.766218 4676 server.go:536] --cgroups-per-qos enabled, but --cgroup-root was not specified. defaulting to /

Oct 30 10:01:40 k8s-1 kubelet[4676]: W1030 10:01:40.767731 4676 container_manager_linux.go:218] Running with swap on is not supported, please disable swap! This will be a fatal error by default starting in K8s v1.6! In the meantime, you can opt-in to making this a fatal error by enabling --experimental-fail-swap-on.

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.767779 4676 container_manager_linux.go:246] container manager verified user specified cgroup-root exists: /

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.767789 4676 container_manager_linux.go:251] Creating Container Manager object based on Node Config: {RuntimeCgroupsName: SystemCgroupsName: KubeletCgroupsName: ContainerRuntime:docker CgroupsPerQOS:true CgroupRoot:/ CgroupDriver:cgroupfs ProtectKernelDefaults:false NodeAllocatableConfig:{KubeReservedCgroupName: SystemReservedCgroupName: EnforceNodeAllocatable:map[pods:{}] KubeReserved:map[] SystemReserved:map[] HardEvictionThresholds:[{Signal:memory.available Operator:LessThan Value:{Quantity:100Mi Percentage:0} GracePeriod:0s MinReclaim:<nil>} {Signal:nodefs.available Operator:LessThan Value:{Quantity:<nil> Percentage:0.1} GracePeriod:0s MinReclaim:<nil>} {Signal:nodefs.inodesFree Operator:LessThan Value:{Quantity:<nil> Percentage:0.05} GracePeriod:0s MinReclaim:<nil>}]} ExperimentalQOSReserved:map[]}

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.767924 4676 kubelet.go:263] Adding manifest file: /etc/kubernetes/manifests

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.767935 4676 kubelet.go:273] Watching apiserver

Oct 30 10:01:40 k8s-1 kubelet[4676]: E1030 10:01:40.782325 4676 reflector.go:190] k8s.io/kubernetes/pkg/kubelet/kubelet.go:408: Failed to list *v1.Node: Get https://192.168.0.105:6443/api/v1/nodes?fieldSelector=metadata.name%3Dk8s-1&resourceVersion=0: dial tcp 192.168.0.105:6443: getsockopt: connection refused

Oct 30 10:01:40 k8s-1 kubelet[4676]: E1030 10:01:40.782380 4676 reflector.go:190] k8s.io/kubernetes/pkg/kubelet/kubelet.go:400: Failed to list *v1.Service: Get https://192.168.0.105:6443/api/v1/services?resourceVersion=0: dial tcp 192.168.0.105:6443: getsockopt: connection refused

Oct 30 10:01:40 k8s-1 kubelet[4676]: E1030 10:01:40.782413 4676 reflector.go:190] k8s.io/kubernetes/pkg/kubelet/config/apiserver.go:46: Failed to list *v1.Pod: Get https://192.168.0.105:6443/api/v1/pods?fieldSelector=spec.nodeName%3Dk8s-1&resourceVersion=0: dial tcp 192.168.0.105:6443: getsockopt: connection refused

Oct 30 10:01:40 k8s-1 kubelet[4676]: W1030 10:01:40.783607 4676 kubelet_network.go:70] Hairpin mode set to "promiscuous-bridge" but kubenet is not enabled, falling back to "hairpin-veth"

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.783625 4676 kubelet.go:508] Hairpin mode set to "hairpin-veth"

Oct 30 10:01:40 k8s-1 kubelet[4676]: W1030 10:01:40.784179 4676 cni.go:189] Unable to update cni config: No networks found in /etc/cni/net.d

Oct 30 10:01:40 k8s-1 kubelet[4676]: W1030 10:01:40.784915 4676 cni.go:189] Unable to update cni config: No networks found in /etc/cni/net.d

Oct 30 10:01:40 k8s-1 kubelet[4676]: W1030 10:01:40.793823 4676 cni.go:189] Unable to update cni config: No networks found in /etc/cni/net.d

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.793839 4676 docker_service.go:208] Docker cri networking managed by cni

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.798395 4676 docker_service.go:225] Setting cgroupDriver to cgroupfs

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.804276 4676 remote_runtime.go:42] Connecting to runtime service unix:///var/run/dockershim.sock

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.806221 4676 kuberuntime_manager.go:166] Container runtime docker initialized, version: 1.12.6, apiVersion: 1.24.0

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.807620 4676 server.go:943] Started kubelet v1.7.5

Oct 30 10:01:40 k8s-1 kubelet[4676]: E1030 10:01:40.808001 4676 kubelet.go:1229] Image garbage collection failed once. Stats initialization may not have completed yet: unable to find data for container /

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.808008 4676 kubelet_node_status.go:247] Setting node annotation to enable volume controller attach/detach

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.808464 4676 server.go:132] Starting to listen on 0.0.0.0:10250

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.809166 4676 server.go:310] Adding debug handlers to kubelet server.

Oct 30 10:01:40 k8s-1 kubelet[4676]: E1030 10:01:40.811544 4676 event.go:209] Unable to write event: 'Post https://192.168.0.105:6443/api/v1/namespaces/default/events: dial tcp 192.168.0.105:6443: getsockopt: connection refused' (may retry after sleeping)

Oct 30 10:01:40 k8s-1 kubelet[4676]: E1030 10:01:40.818965 4676 kubelet.go:1729] Failed to check if disk space is available for the runtime: failed to get fs info for "runtime": unable to find data for container /

Oct 30 10:01:40 k8s-1 kubelet[4676]: E1030 10:01:40.818965 4676 kubelet.go:1737] Failed to check if disk space is available on the root partition: failed to get fs info for "root": unable to find data for container /

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.826012 4676 fs_resource_analyzer.go:66] Starting FS ResourceAnalyzer

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.826058 4676 status_manager.go:140] Starting to sync pod status with apiserver

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.826130 4676 kubelet.go:1809] Starting kubelet main sync loop.

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.826196 4676 kubelet.go:1820] skipping pod synchronization - [container runtime is down PLEG is not healthy: pleg was last seen active 2562047h47m16.854775807s ago; threshold is 3m0s]

Oct 30 10:01:40 k8s-1 kubelet[4676]: W1030 10:01:40.826424 4676 container_manager_linux.go:747] CPUAccounting not enabled for pid: 980

Oct 30 10:01:40 k8s-1 kubelet[4676]: W1030 10:01:40.826429 4676 container_manager_linux.go:750] MemoryAccounting not enabled for pid: 980

Oct 30 10:01:40 k8s-1 kubelet[4676]: W1030 10:01:40.826465 4676 container_manager_linux.go:747] CPUAccounting not enabled for pid: 4676

Oct 30 10:01:40 k8s-1 kubelet[4676]: W1030 10:01:40.826468 4676 container_manager_linux.go:750] MemoryAccounting not enabled for pid: 4676

Oct 30 10:01:40 k8s-1 kubelet[4676]: E1030 10:01:40.826495 4676 container_manager_linux.go:543] [ContainerManager]: Fail to get rootfs information unable to find data for container /

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.826504 4676 volume_manager.go:245] Starting Kubelet Volume Manager

Oct 30 10:01:40 k8s-1 kubelet[4676]: W1030 10:01:40.829827 4676 cni.go:189] Unable to update cni config: No networks found in /etc/cni/net.d

Oct 30 10:01:40 k8s-1 kubelet[4676]: E1030 10:01:40.829892 4676 kubelet.go:2136] Container runtime network not ready: NetworkReady=false reason:NetworkPluginNotReady message:docker: network plugin is not ready: cni config uninitialized

Oct 30 10:01:40 k8s-1 kubelet[4676]: E1030 10:01:40.844934 4676 factory.go:336] devicemapper filesystem stats will not be reported: usage of thin_ls is disabled to preserve iops

Oct 30 10:01:40 k8s-1 kubelet[4676]: I1030 10:01:40.845787 4676 factory.go:351] Registering Docker factory

It looks like a CNI initialization problem. There are plenty of posts about it online, but none of the suggested fixes worked.
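With this much output it is easy to lose track of which messages actually repeat. One way to condense it, sketched below, is to count warning and error lines by the source location that kubelet prints. The helper name is hypothetical, and the pattern assumes the journal line layout shown above (`kubelet[PID]: W1030 ... file.go:NNN] message`).

```shell
# Hypothetical helper: read journal text on stdin, keep kubelet warning (W)
# and error (E) lines, and count them per "file.go:line" source location.
kubelet_problem_summary() {
    grep -E 'kubelet\[[0-9]+\]: [EW][0-9]{4} ' |
        sed 's/.* \([a-z_]*\.go:[0-9]*\)] .*/\1/' |
        sort | uniq -c | sort -rn
}

# Usage on a real host:
#   journalctl -u kubelet --no-pager | kubelet_problem_summary
```

The most frequent location ends up on top, which at least shows where to start reading.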

=============================================================================

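After going round in circles without success, I began to suspect my OS, so I reinstalled with a fresh CentOS 7.4 and followed the same steps. It went through immediately. Maddening: the earlier problem had cost me a whole day!

I also borrowed a script to automate the image download

images=(etcd-amd64:3.0.17 pause-amd64:3.0 kube-proxy-amd64:v1.7.2 kube-scheduler-amd64:v1.7.2 kube-controller-manager-amd64:v1.7.2 kube-apiserver-amd64:v1.7.2 kubernetes-dashboard-amd64:v1.6.1 k8s-dns-sidecar-amd64:1.14.4 k8s-dns-kube-dns-amd64:1.14.4 k8s-dns-dnsmasq-nanny-amd64:1.14.4)

# Pull each image from the cloudnil mirror on Docker Hub, retag it with the
# gcr.io/google_containers name that kubeadm expects, then drop the mirror tag.
for imageName in "${images[@]}"; do
  docker pull "cloudnil/$imageName"
  docker tag "cloudnil/$imageName" "gcr.io/google_containers/$imageName"
  docker rmi "cloudnil/$imageName"
done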

[root@k8s-1 ~]# kubeadm init --kubernetes-version=v1.7.2 --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=0.0.0.0 --apiserver-cert-extra-sans=192.168.0.105,192.168.0.106,192.168.0.107,127.0.0.1,k8s-1,k8s-2,k8s-3,192.168.0.1
[kubeadm] WARNING: kubeadm is in beta, please do not use it for production clusters.
[init] Using Kubernetes version: v1.7.2
[init] Using Authorization modes: [Node RBAC]
[preflight] Running pre-flight checks
[kubeadm] WARNING: starting in 1.8, tokens expire after 24 hours by default (if you require a non-expiring token use --token-ttl 0)
[certificates] Generated CA certificate and key.
[certificates] Generated API server certificate and key.
[certificates] API Server serving cert is signed for DNS names [k8s-1 kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local k8s-1 k8s-2 k8s-3] and IPs [192.168.0.105 192.168.0.106 192.168.0.107 127.0.0.1 192.168.0.1 10.96.0.1 192.168.0.105]
[certificates] Generated API server kubelet client certificate and key.
[certificates] Generated service account token signing key and public key.
[certificates] Generated front-proxy CA certificate and key.
[certificates] Generated front-proxy client certificate and key.
[certificates] Valid certificates and keys now exist in "/etc/kubernetes/pki"
[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/admin.conf"
[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/kubelet.conf"
[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/controller-manager.conf"
[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/scheduler.conf"
[apiclient] Created API client, waiting for the control plane to become ready
[apiclient] All control plane components are healthy after 55.001211 seconds
[token] Using token: 22d578.d921a7cf51352441
[apiconfig] Created RBAC rules
[addons] Applied essential addon: kube-proxy
[addons] Applied essential addon: kube-dns

Your Kubernetes master has initialized successfully!

To start using your cluster, you need to run (as a regular user):

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  http://kubernetes.io/docs/admin/addons/

You can now join any number of machines by running the following on each node
as root:

  kubeadm join --token 22d578.d921a7cf51352441 192.168.0.105:6443
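The join command at the end of this output is needed again on every worker, so it is worth saving. A minimal sketch, assuming the init output was captured to a file with `tee` (the log file name and the function name are made up for illustration):

```shell
# Hypothetical helper: print the first "kubeadm join ..." command found in
# text on stdin, with surrounding whitespace removed.
extract_join_command() {
    sed -n 's/^[[:space:]]*\(kubeadm join .*[^[:space:]]\)[[:space:]]*$/\1/p' | head -n 1
}

# Usage on the master:
#   kubeadm init ... | tee kubeadm-init.log
#   extract_join_command < kubeadm-init.log
```

Keep in mind that the token is a credential; the saved log should not be left world-readable.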

Then

export KUBECONFIG=/etc/kubernetes/admin.conf
[root@k8s-1 ~]# kubectl get pods --all-namespaces
NAMESPACE  NAME  READY  STATUS  RESTARTS  AGE
kube-system  etcd-k8s-1  1/1  Running  0  5m
kube-system  kube-apiserver-k8s-1  1/1  Running  0  4m
kube-system  kube-controller-manager-k8s-1  1/1  Running  0  4m
kube-system  kube-dns-2425271678-j8mnw  0/3  Pending  0  5m
kube-system  kube-proxy-6k4sb  1/1  Running  0  5m
kube-system  kube-scheduler-k8s-1  1/1  Running  0  4m

- Install the flannel network

The kube-dns service cannot start yet because the pod network has not been configured.
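A quick way to spot pods in this state is to filter the pod listing by its STATUS column. The sketch below assumes the six-column layout that `kubectl get pods --all-namespaces` printed above; `pods_not_running` is a hypothetical helper name.

```shell
# Hypothetical helper: from `kubectl get pods --all-namespaces` text on stdin,
# print "namespace/name status" for every pod whose STATUS is not Running.
pods_not_running() {
    awk 'NR > 1 && $4 != "Running" { print $1 "/" $2, $4 }'
}

# Usage on the master:
#   kubectl get pods --all-namespaces | pods_not_running
```

An empty result means every listed pod reports Running; it says nothing about readiness, which is shown in the READY column.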

Configure the flannel network

Download kube-flannel.yml and kube-flannel-rbac.yml from https://github.com/winse/docker-hadoop/tree/master/kube-deploy/kubeadm

Then run:

[root@k8s-1 ~]# kubectl apply -f kube-flannel.yml
serviceaccount "flannel" created
configmap "kube-flannel-cfg" created
daemonset "kube-flannel-ds" created
[root@k8s-1 ~]# kubectl apply -f kube-flannel-rbac.yml
clusterrole "flannel" created
clusterrolebinding "flannel" created

After a while the pods come up and the configuration is complete

[root@k8s-1 ~]# kubectl get pods --all-namespaces
NAMESPACE  NAME  READY  STATUS  RESTARTS  AGE
kube-system  etcd-k8s-1  1/1  Running  1  3h
kube-system  kube-apiserver-k8s-1  1/1  Running  1  3h
kube-system  kube-controller-manager-k8s-1  1/1  Running  1  3h
kube-system  kube-dns-2425271678-j8mnw  3/3  Running  0  3h
kube-system  kube-flannel-ds-j491k  2/2  Running  0  1h
kube-system  kube-proxy-6k4sb  1/1  Running  1  3h
kube-system  kube-scheduler-k8s-1  1/1  Running  1  3h

Worker nodes

Install the images

images=(pause-amd64:3.0 kube-proxy-amd64:v1.7.2)

# Workers only need the pause and kube-proxy images up front.
for imageName in "${images[@]}"; do
  docker pull "cloudnil/$imageName"
  docker tag "cloudnil/$imageName" "gcr.io/google_containers/$imageName"
  docker rmi "cloudnil/$imageName"
done

[root@k8s-3 ~]# docker images
REPOSITORY  TAG  IMAGE ID  CREATED  SIZE
gcr.io/google_containers/kube-proxy-amd64  v1.7.2  69f8faa3d08d  3 months ago  114.7 MB
gcr.io/google_containers/pause-amd64  3.0  66c684b679d2  4 months ago  746.9 kB

Join the cluster

[root@k8s-2 ~]# kubeadm join --token 22d578.d921a7cf51352441 192.168.0.105:6443
[kubeadm] WARNING: kubeadm is in beta, please do not use it for production clusters.
[preflight] Running pre-flight checks
[discovery] Trying to connect to API Server "192.168.0.105:6443"
[discovery] Created cluster-info discovery client, requesting info from "https://192.168.0.105:6443"
[discovery] Cluster info signature and contents are valid, will use API Server "https://192.168.0.105:6443"
[discovery] Successfully established connection with API Server "192.168.0.105:6443"
[bootstrap] Detected server version: v1.7.2
[bootstrap] The server supports the Certificates API (certificates.k8s.io/v1beta1)
[csr] Created API client to obtain unique certificate for this node, generating keys and certificate signing request
[csr] Received signed certificate from the API server, generating KubeConfig...
[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/kubelet.conf"

Node join complete:
* Certificate signing request sent to master and response received.
* Kubelet informed of new secure connection details.

Run 'kubectl get nodes' on the master to see this machine join.

Verify

[root@k8s-1 ~]# kubectl get nodes
NAME  STATUS  AGE  VERSION
k8s-1  Ready  4h  v1.7.5
k8s-2  Ready  1m  v1.7.5

Verify again after node 3 has joined

[root@k8s-1 ~]# kubectl get nodes
NAME  STATUS  AGE  VERSION
k8s-1  Ready  4h  v1.7.5
k8s-2  Ready  5m  v1.7.5
k8s-3  Ready  50s  v1.7.5

[root@k8s-1 ~]# kubectl get pods -n kube-system -o wide
NAME  READY  STATUS  RESTARTS  AGE  IP  NODE
etcd-k8s-1  1/1  Running  1  4h  192.168.0.105  k8s-1
kube-apiserver-k8s-1  1/1  Running  1  4h  192.168.0.105  k8s-1
kube-controller-manager-k8s-1  1/1  Running  1  4h  192.168.0.105  k8s-1
kube-dns-2425271678-j8mnw  3/3  Running  0  4h  10.244.0.2  k8s-1
kube-flannel-ds-d8vvr  2/2  Running  0  1m  192.168.0.107  k8s-3
kube-flannel-ds-fgvr1  2/2  Running  0  5m  192.168.0.106  k8s-2
kube-flannel-ds-j491k  2/2  Running  0  1h  192.168.0.105  k8s-1
kube-proxy-6k4sb  1/1  Running  1  4h  192.168.0.105  k8s-1
kube-proxy-p6v69  1/1  Running  0  5m  192.168.0.106  k8s-2
kube-proxy-tk2jq  1/1  Running  0  1m  192.168.0.107  k8s-3
kube-scheduler-k8s-1  1/1  Running  1  4h  192.168.0.105  k8s-1

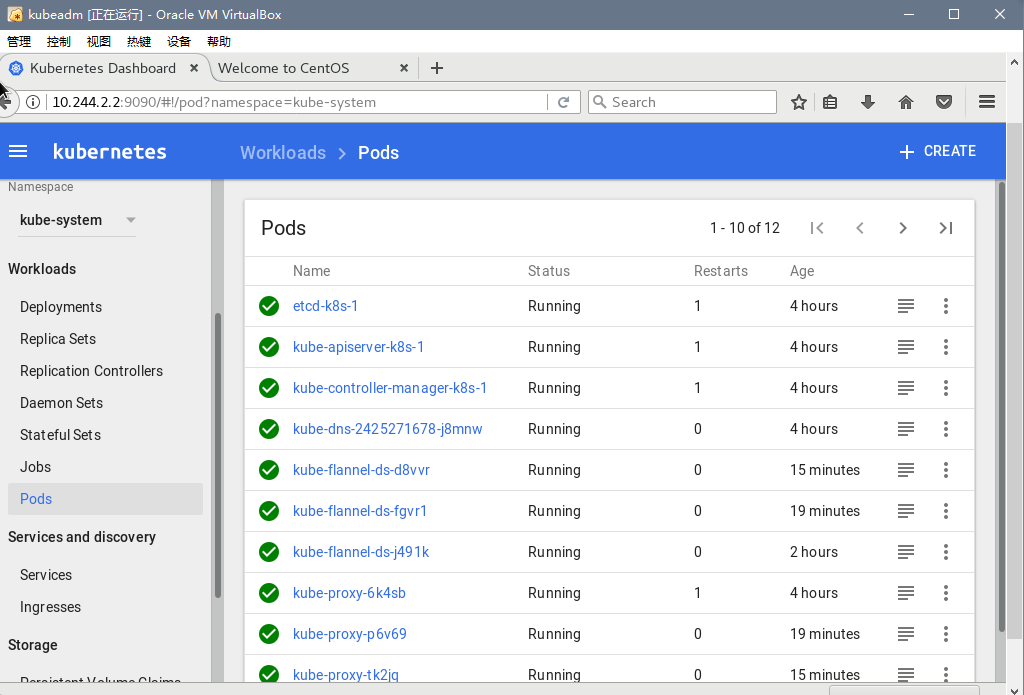

- Set up a dashboard

Run the following on all three machines

images=(kubernetes-dashboard-amd64:v1.6.0)

# Same pull / retag / clean-up pattern, this time from the k8scn mirror.
for imageName in "${images[@]}"; do
  docker pull "k8scn/$imageName"
  docker tag "k8scn/$imageName" "gcr.io/google_containers/$imageName"
  docker rmi "k8scn/$imageName"
done

Then download the kubernetes-dashboard.yaml file from https://github.com/winse/docker-hadoop/tree/master/kube-deploy/kubeadm

[root@k8s-1 ~]# kubectl create -f kubernetes-dashboard.yaml
serviceaccount "kubernetes-dashboard" created
clusterrolebinding "kubernetes-dashboard" created
deployment "kubernetes-dashboard" created
service "kubernetes-dashboard" created
[root@k8s-1 ~]# kubectl get pods -n kube-system -o wide
NAME  READY  STATUS  RESTARTS  AGE  IP  NODE
etcd-k8s-1  1/1  Running  1  4h  192.168.0.105  k8s-1
kube-apiserver-k8s-1  1/1  Running  1  4h  192.168.0.105  k8s-1
kube-controller-manager-k8s-1  1/1  Running  1  4h  192.168.0.105  k8s-1
kube-dns-2425271678-j8mnw  3/3  Running  0  4h  10.244.0.2  k8s-1
kube-flannel-ds-d8vvr  2/2  Running  0  13m  192.168.0.107  k8s-3
kube-flannel-ds-fgvr1  2/2  Running  0  18m  192.168.0.106  k8s-2
kube-flannel-ds-j491k  2/2  Running  0  2h  192.168.0.105  k8s-1
kube-proxy-6k4sb  1/1  Running  1  4h  192.168.0.105  k8s-1
kube-proxy-p6v69  1/1  Running  0  18m  192.168.0.106  k8s-2
kube-proxy-tk2jq  1/1  Running  0  13m  192.168.0.107  k8s-3
kube-scheduler-k8s-1  1/1  Running  1  4h  192.168.0.105  k8s-1
kubernetes-dashboard-3044843954-42k3c  1/1  Running  0  4s  10.244.2.2  k8s-3

Opening http://10.244.2.2:9090/ in Firefox brings up this whole pile of Pods right away.

Thanks to the experts who guided me out of the pits:

http://www.cnblogs.com/liangDream/p/7358847.html

http://www.winseliu.com/blog/2017/08/13/kubeadm-install-k8s-on-centos7-with-resources/

I spent a full week getting Kubernetes 1.7.3 deployed (with Calico as the network component) and finally succeeded by following the official documentation and working around the pitfalls one by one. I am writing this up in the hope that it helps more people deploy Kubernetes 1.7 successfully.

Installation

Preparation

Operating system: CentOS 7.3

[root@centos7-base-ok]# cat /etc/redhat-release
CentOS Linux release 7.3.1611 (Core)

Machines: k8s-1 is the master node; k8s-2 and k8s-3 are slave nodes.

[root@centos7-base-ok]# cat /etc/hosts
127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
192.168.80.28 k8s-1
192.168.80.35 k8s-2
192.168.80.14 k8s-3

Installation steps

Install docker 1.12 (all nodes)

Note: docker has since moved on to the CE releases, but the official Kubernetes documentation says it was tested against 1.12 and that compatibility with more recent versions is untested. To avoid big pitfalls later, we play it safe and install docker 1.12.

1. Create a docker.repo file and place it in /etc/yum.repos.d/

[root@centos7-base-ok]# cat /etc/yum.repos.d/docker.repo
[dockerrepo]
name=Docker Repository
baseurl=https://yum.dockerproject.org/repo/main/centos/7/
enabled=1
gpgcheck=1
gpgkey=https://yum.dockerproject.org/gpg

2. Run yum to find the docker version to install

[root@centos7-base-ok]# yum list | grep docker | sort -r
python2-avocado-plugins-runner-docker.noarch
python-dockerpty.noarch                            0.4.1-6.el7                         epel
python-dockerfile-parse.noarch                     0.0.5-1.el7                         epel
python-docker-scripts.noarch                       0.4.4-1.el7                         epel
python-docker-pycreds.noarch                       1.10.6-1.el7                        extras
python-docker-py.noarch                            1.10.6-1.el7                        extras
kdocker.x86_64                                     4.9-1.el7                           epel
golang-github-fsouza-go-dockerclient-devel.x86_64
docker.x86_64                                      2:1.12.6-32.git88a4867.el7.centos
docker-v1.10-migrator.x86_64                       2:1.12.6-32.git88a4867.el7.centos
docker-unit-test.x86_64                            2:1.12.6-32.git88a4867.el7.centos
docker-registry.x86_64                             0.9.1-7.el7                         extras
docker-registry.noarch                             0.6.8-8.el7                         extras
docker-python.x86_64                               1.4.0-115.el7                       extras
docker-novolume-plugin.x86_64                      2:1.12.6-32.git88a4867.el7.centos
docker-lvm-plugin.x86_64                           2:1.12.6-32.git88a4867.el7.centos
docker-logrotate.x86_64                            2:1.12.6-32.git88a4867.el7.centos
docker-latest.x86_64                               1.13.1-13.gitb303bf6.el7.centos
docker-latest-v1.10-migrator.x86_64                1.13.1-13.gitb303bf6.el7.centos
docker-latest-logrotate.x86_64                     1.13.1-13.gitb303bf6.el7.centos
docker-forward-journald.x86_64                     1.10.3-44.el7.centos                extras
docker-engine.x86_64                               17.05.0.ce-1.el7.centos             dockerrepo
docker-engine.x86_64                               1.12.6-1.el7.centos                 @dockerrepo
docker-engine-selinux.noarch                       17.05.0.ce-1.el7.centos             @dockerrepo
docker-engine-debuginfo.x86_64                     17.05.0.ce-1.el7.centos             dockerrepo
docker-distribution.x86_64                         2.6.1-1.el7                         extras
docker-devel.x86_64                                1.3.2-4.el7.centos                  extras
docker-compose.noarch                              1.9.0-5.el7                         epel
docker-common.x86_64                               2:1.12.6-32.git88a4867.el7.centos
docker-client.x86_64                               2:1.12.6-32.git88a4867.el7.centos
docker-client-latest.x86_64                        1.13.1-13.gitb303bf6.el7.centos
cockpit-docker.x86_64                              141-3.el7.centos                    extras

3. Once you have found the version, run yum install -y with the package name plus version to install docker-engine 1.12

[root@centos7-base-ok]# yum install -y docker-engine-1.12.6-1.el7.centos.x86_64

4. Run docker version to check the installed version, and docker run to check that docker actually works

[root@centos7-base-ok]# docker version
Client:
 Version: 1.12.6
 API version: 1.24
 Go version: go1.6.4
 Git commit: 78d1802
 Built: Tue Jan 10 20:20:01 2017
 OS/Arch: linux/amd64
Server:
 Version: 1.12.6
 API version: 1.24
 Go version: go1.6.4
 Git commit: 78d1802
 Built: Tue Jan 10 20:20:01 2017
 OS/Arch: linux/amd64

5. Enable docker at boot and start the service. The docker installation is now complete.

[root@centos7-base-ok]# systemctl enable docker && systemctl start docker

Install kubectl, kubelet, kubeadm (on whichever nodes need them)

Note: this is where the pitfalls begin, because the yum repository from the official documentation cannot be reached from within China. After the installation, keep an eye on /var/log/messages: it will be flooded with errors. Don't panic, just follow along and we will fill in the holes one at a time.

1. Create a kubernetes.repo file and place it in /etc/yum.repos.d/ (all nodes)

[root@centos7-base-ok]# cat /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0

2. Install kubectl, kubelet and kubeadm with yum (all nodes)

[root@centos7-base-ok]# yum install -y kubectl kubelet kubeadm

3. Modify the kubelet configuration and start kubelet (all nodes)

Note: keep watching /var/log/messages; you will see kubelet failing to start over and over. The kubelet's cgroup driver has to match the one docker uses, which you can check in the output of docker info.

Edit the 10-kubeadm.conf file and change the cgroup-driver setting:

[root@centos7-base-ok]# cat /etc/systemd/system/kubelet.service.d/10-kubeadm.conf
[Service]
Environment="KUBELET_KUBECONFIG_ARGS=--kubeconfig=/etc/kubernetes/kubelet.conf --require-kubeconfig=true"
Environment="KUBELET_SYSTEM_PODS_ARGS=--pod-manifest-path=/etc/kubernetes/manifests --allow-privileged=true"
Environment="KUBELET_NETWORK_ARGS=--network-plugin=cni --cni-conf-dir=/etc/cni/net.d --cni-bin-dir=/opt/cni/bin"
Environment="KUBELET_DNS_ARGS=--cluster-dns=10.96.0.10 --cluster-domain=cluster.local"
Environment="KUBELET_AUTHZ_ARGS=--authorization-mode=Webhook --client-ca-file=/etc/kubernetes/pki/ca.crt"
Environment="KUBELET_CADVISOR_ARGS=--cadvisor-port=0"
Environment="KUBELET_CGROUP_ARGS=--cgroup-driver=cgroupfs"
ExecStart=
ExecStart=/usr/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_SYSTEM_PODS_ARGS $KUBELET_NETWORK_ARGS $KUBELET_DNS_ARGS $KUBELET_AUTHZ_ARGS $KUBELET_CADVISOR_ARGS $KUBELET_CGROUP_ARGS $KUBELET_EXTRA_ARGS

Change "--cgroup-driver=systemd" to "--cgroup-driver=cgroupfs" (the listing above already shows the changed value), reload systemd so that the edited file is picked up, and restart kubelet.

[root@centos7-base-ok]# systemctl daemon-reload
[root@centos7-base-ok]# systemctl restart kubelet

4. Download the images that k8s depends on

Note: this step is critical. Kubernetes initialization depends on these images, and from mainland China you will not be able to pull them from Google's registry. Plenty of people who get past the previous hurdle get stuck on this one. The images must already be on the local machine, otherwise kubeadm will not get any further. Below I list the images needed on the master node and on the node machines. (Remark: the images may change as Kubernetes versions move on. You can first run kubeadm init to generate the configuration files. The log will stall at the line [apiclient] Created API client, waiting for the control plane to become ready; press ctrl + c to abort the command, then run ls -ltr /etc/kubernetes/manifests/ to list the yaml files. Each file contains the address and version of the image it needs.)

One way to solve the problem of downloading Google's images: buy a machine that can reach them, install proxy software on it, and set the HTTP_PROXY option for docker on the machines that need the images so that it points at your own proxy. (Alternatively, do the installation directly on a server that can reach Google.)
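The remark above about reading the image addresses out of the yaml files can be sketched as a small shell helper. This is only a rough sketch: the function name list_manifest_images is made up for illustration, and it assumes each manifest names its image on a line of the form "image: <repository>:<tag>", so check the result against the files themselves.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of kubeadm): print the unique container
# images referenced by the yaml files in a directory. The default is the
# directory kubeadm writes its static pod manifests to.
list_manifest_images() {
  local dir="${1:-/etc/kubernetes/manifests}"
  # Keep lines such as "    image: repo/name:tag" or "  - image: repo/name:tag",
  # strip everything up to the image reference, drop quotes, de-duplicate.
  grep -hE '^[[:space:]]*(-[[:space:]]+)?image:' "$dir"/*.yaml 2>/dev/null \
    | sed -E 's/^[[:space:]]*(-[[:space:]]+)?image:[[:space:]]*//; s/[[:space:]]+$//' \
    | tr -d "\"'" \
    | sort -u
}

# Example use on a machine that can reach the registries:
#   list_manifest_images | while read -r img; do docker pull "$img"; done
```

The output is one image reference per line, which makes it easy to feed into docker pull on a machine with registry access and then move the images over with docker save and docker load.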
Master node:

REPOSITORY                                               TAG       IMAGE ID       CREATED         SIZE
quay.io/calico/kube-policy-controller                    v0.7.0    fe3174230993   3 days ago      21.94 MB
kubernetesdashboarddev/kubernetes-dashboard-amd64        head      e2cadb73b2df   5 days ago      136.5 MB
quay.io/calico/node                                      v2.4.1    7643422fdf0f   6 days ago      277.4 MB
gcr.io/google_containers/kube-controller-manager-amd64   v1.7.3    d014f402b272   11 days ago     138 MB
gcr.io/google_containers/kube-apiserver-amd64            v1.7.3    a1cc3a3d8d0d   11 days ago     186.1 MB
gcr.io/google_containers/kube-scheduler-amd64            v1.7.3    51967bf607d3   11 days ago     77.2 MB
gcr.io/google_containers/kube-proxy-amd64                v1.7.3    54d2a8698e3c   11 days ago     114.7 MB
quay.io/calico/cni                                       v1.10.0   88ca805c8ddd   13 days ago     70.25 MB
gcr.io/google_containers/kubernetes-dashboard-amd64      v1.6.3    691a82db1ecd   2 weeks ago     139 MB
quay.io/coreos/etcd                                      v3.1.10   47bb9dd99916   4 weeks ago     34.56 MB
gcr.io/google_containers/k8s-dns-sidecar-amd64           1.14.4    38bac66034a6   7 weeks ago     41.81 MB
gcr.io/google_containers/k8s-dns-kube-dns-amd64          1.14.4    a8e00546bcf3   7 weeks ago     49.38 MB
gcr.io/google_containers/k8s-dns-dnsmasq-nanny-amd64     1.14.4    f7f45b9cb733   7 weeks ago     41.41 MB
gcr.io/google_containers/etcd-amd64                      3.0.17    243830dae7dd   5 months ago    168.9 MB
gcr.io/google_containers/pause-amd64                     3.0       99e59f495ffa   15 months ago   746.9 kB

Node machines:

[root@centos7-base-ok]# docker images
REPOSITORY                                               TAG       IMAGE ID       CREATED         SIZE
kubernetesdashboarddev/kubernetes-dashboard-amd64        head      e2cadb73b2df   5 days ago      137MB
quay.io/calico/node                                      v2.4.1    7643422fdf0f   6 days ago      277MB
gcr.io/google_containers/kube-proxy-amd64                v1.7.3    54d2a8698e3c   11 days ago     115MB
quay.io/calico/cni                                       v1.10.0   88ca805c8ddd   13 days ago     70.3MB
gcr.io/google_containers/kubernetes-dashboard-amd64      v1.6.3    691a82db1ecd   2 weeks ago     139MB
nginx                                                    latest    b8efb18f159b   2 weeks ago     107MB
hello-world                                              latest    1815c82652c0   2 months ago    1.84kB
gcr.io/google_containers/pause-amd64                     3.0       99e59f495ffa   15 months ago   747kB

5. Initialize the services with kubeadm (master node)

Note: if you already ran kubeadm init in the previous step, that is not a problem. Just add the --skip-preflight-checks option when you run it this time.
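Before running kubeadm init it may be worth confirming that none of the images listed above is missing on the machine. The helper below is a minimal sketch of such a check. The function name missing_images and the file names in the usage comment are hypothetical, and it assumes your docker version supports docker images --format, which is worth verifying on your installation.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print every image from the "required" list that does
# not appear in the "local" list. Both arguments are files containing one
# repository:tag entry per line.
missing_images() {
  local required="$1" local_list="$2"
  # -F fixed strings, -x whole-line match, -f read patterns from a file,
  # -v invert: keep only required entries with no exact match locally.
  # grep exits non-zero when nothing is missing, so mask that status.
  grep -vxFf "$local_list" "$required" || true
}

# Example use (file names are placeholders):
#   docker images --format '{{.Repository}}:{{.Tag}}' > /tmp/local-images.txt
#   missing_images required-images.txt /tmp/local-images.txt
```

An empty result means every required image is already present, and anything printed still has to be pulled or loaded first.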
Note: the --pod-network-cidr argument of kubeadm init depends on the network component you choose; see the official documentation for details. This article uses Calico.

[root@centos7-base-ok]# kubeadm init --pod-network-cidr=192.168.0.0/16 --apiserver-advertise-address=0.0.0.0 --apiserver-cert-extra-sans=192.168.80.28,192.168.80.14,192.168.80.35,127.0.0.1,k8s-1,k8s-2,k8s-3,192.168.0.1 --skip-preflight-checks

Parameters:

Parameter: pod-network-cidr
Required: Yes
Description: For certain networking solutions the Kubernetes master can also play a role in allocating network ranges (CIDRs) to each node. This includes many cloud providers and flannel. You can specify a subnet range that will be broken down and handed out to each node with the --pod-network-cidr flag. This should be a minimum of a /16 so controller-manager is able to assign /24 subnets to each node in the cluster. If you are using flannel with this manifest you should use --pod-network-cidr=10.244.0.0/16. Most CNI based networking solutions do not require this flag.

Parameter: apiserver-advertise-address
Required: Yes
Description: This is the address the API Server will advertise to other members of the cluster. This is also the address used to construct the suggested kubeadm join line at the end of the init process. If not set (or set to 0.0.0.0) then IP for the default interface will be used.

Parameter: apiserver-cert-extra-sans
Required: Yes
Description: Additional hostnames or IP addresses that should be added to the Subject Alternate Name section for the certificate that the API Server will use. If you expose the API Server through a load balancer and public DNS you could specify this with.

For the other kubeadm parameters, refer to the official documentation.

6. Sit back quietly and wait for the installation to finish. When it succeeds you will see log output like the following (master node):

Note: save this log output; you will need it later when adding the node machines.

[apiclient] All control plane components are healthy after 22.003243 seconds
[token] Using token: 33729e.977f7b5d0a9b5f3e
[apiconfig] Created RBAC rules
[addons] Applied essential addon: kube-proxy
[addons] Applied essential addon: kube-dns

Your Kubernetes master has initialized successfully!
To start using your cluster, you need to run (as a regular user):

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  http://kubernetes.io/docs/admin/addons/

You can now join any number of machines by running the following on each node as root:

  kubeadm join --token xxxxxxx 192.168.80.28:6443

7. Create the kube directory and add the kubectl configuration (master node)

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

8. Add the Calico network component with kubectl (master node)

kubectl apply -f http://docs.projectcalico.org/v2.4/getting-started/kubernetes/installation/hosted/kubeadm/1.6/calico.yaml

Note: the pitfall here is that this file may not download. It is better to download it in advance and then run kubectl apply -f <local path>.

9. Confirm that the installation succeeded (master node)

9.1 Open /var/log/messages and check for errors. In theory, about 5 minutes after the previous step the log should be free of errors. If errors keep appearing and are still there after 10 minutes, go back and check the earlier steps.

9.2 Run kubectl get pods --all-namespaces and look at the result. If every STATUS is Running, congratulations: your master is installed.

Note: the number of rows you see may differ from mine, because my output includes entries for the node machines and you have not added any yet.

[root@centos7-base-ok]# kubectl get pods --all-namespaces
NAMESPACE     NAME                                        READY   STATUS    RESTARTS   AGE
default       nginx-app-1666850838-4z2tb                  1/1     Running   0          3d
kube-system   calico-etcd-0ssdd                           1/1     Running   0          3d
kube-system   calico-node-1zfxd                           2/2     Running   1          3d
kube-system   calico-node-s2gfs                           2/2     Running   1          3d
kube-system   calico-node-xx30v                           2/2     Running   1          3d
kube-system   calico-policy-controller-336633499-wgl8j    1/1     Running   0          3d
kube-system   etcd-k8s-1                                  1/1     Running   0          3d
kube-system   kube-apiserver-k8s-1                        1/1     Running   0          3d
kube-system   kube-controller-manager-k8s-1               1/1     Running   0          3d
kube-system   kube-dns-2425271678-trmxx                   3/3     Running   1          3d
kube-system   kube-proxy-79kkh                            1/1     Running   0          3d
kube-system   kube-proxy-n1g6j                            1/1     Running   0          3d
kube-system   kube-proxy-vccr6                            1/1     Running   0          3d
kube-system   kube-scheduler-k8s-1                        1/1     Running   0          3d

10. Install the node machines by running the join command printed in the master's success log (run on each node)
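Note: before running the command below, first make sure the node has already downloaded the images listed earlier; otherwise node initialization will fail because the images cannot be pulled. Second, keep watching /var/log/messages: if the image versions have changed, the log will tell you which images need to be downloaded. Third, be patient. If your network can pull the images, you can simply sit back, because kubeadm does everything for you. Once /var/log/messages stops showing errors, it has taken care of everything and you can go and play.

[root@centos7-base-ok]# kubeadm join --token xxxxxxxx 192.168.80.28:6443

11. Verify the nodes: on the master node, run kubectl get nodes to check the node status.

Note: a node's status changes over time; it only shows Ready once it has been added successfully.

[root@centos7-base-ok]# kubectl get nodes
NAME    STATUS   AGE   VERSION
k8s-1   Ready    3d    v1.7.3
k8s-2   Ready    3d    v1.7.3
k8s-3   Ready    3d    v1.7.3

12. Congratulations, you can now happily start your Kubernetes 1.7.3 journey.

Postscript

Kubernetes, loving you is not easy. Other teams and individuals are welcome to exchange ideas with our team; if you are interested, leave me a message in the comments.

Addendum: officially, the HEAD version of dashboard is said to support 1.7, but when I tried it the dashboard really did not work. I hope this gets fixed soon, and that more ways of pinpointing errors become available; otherwise it is hard to report a specific problem.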
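As a small aid for the node verification step, the Ready check can be scripted instead of read off by eye. The sketch below assumes the column layout shown in the kubectl get nodes output above (NAME first, STATUS second); the function name not_ready_nodes is made up for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get nodes` output on stdin and print
# the name of every node whose STATUS column is not exactly "Ready".
# A combined status such as "Ready,SchedulingDisabled" is reported too.
not_ready_nodes() {
  # NR > 1 skips the header row; $1 is NAME and $2 is STATUS.
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Example use on the master node:
#   kubectl get nodes | not_ready_nodes
```

No output means every listed node reports Ready. Any name printed is a node whose /var/log/messages deserves a look.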

Zhejiang Public Network Security Filing No. 33010602011771