1. Containerizing ZooKeeper and Kafka

1.1 ZooKeeper + Kafka: single-node Docker mode

docker pull bitnami/zookeeper:3.6.3-debian-11-r46

docker pull bitnami/kafka:3.1.1-debian-11-r36

docker run -itd --name zookeeper -p 2181:2181 -p 2888:2888 -p 3888:3888 -p 8080:8080 -e ALLOW_ANONYMOUS_LOGIN=yes -e ALLOW_PLAINTEXT_LISTENER=yes bitnami/zookeeper:3.6.3-debian-11-r46

docker run -itd --name kafka -p 9092:9092 -e ALLOW_ANONYMOUS_LOGIN=yes -e ALLOW_PLAINTEXT_LISTENER=yes -e KAFKA_CFG_ZOOKEEPER_CONNECT=10.0.0.101:2181 bitnami/kafka:3.1.1-debian-11-r36

# Test:

### Create a topic

[root@small-node1 ~]# docker exec -it kafka bash

I have no name!@3772fdf40be2:/opt/bitnami/kafka/bin$ ./kafka-topics.sh --create --bootstrap-server 10.0.0.101:9092 --replication-factor 1 --partitions 1 --topic test2

Created topic test2.

### Create a producer

I have no name!@3772fdf40be2:/opt/bitnami/kafka/bin$ ./kafka-console-producer.sh --broker-list 10.0.0.101:9092 --topic test2

>halou world!

>

### Create a consumer

[root@small-node1 ~]# docker exec -it kafka bash

I have no name!@3772fdf40be2:/$ cd /opt/bitnami/kafka/bin/

I have no name!@3772fdf40be2:/opt/bitnami/kafka/bin$ ./kafka-console-consumer.sh --bootstrap-server 10.0.0.101:9092 --topic test2 --from-beginning

halou world!

### List topics

I have no name!@3772fdf40be2:/opt/bitnami/kafka/bin$ ./kafka-topics.sh --bootstrap-server 10.0.0.101:9092 --list

__consumer_offsets

small-kafka

test1

test2

I have no name!@3772fdf40be2:/opt/bitnami/kafka/bin$
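When these test steps are scripted, running `--create` again for a topic that already exists fails. As a minimal sketch (the helper name `topic_in_list` is hypothetical, not part of Kafka), the `--list` output shown above can be checked for an exact topic name first. For the creation step alone, `kafka-topics.sh --create` also accepts `--if-not-exists`.

```shell
#!/bin/sh
# Hypothetical helper (not part of Kafka): succeeds if topic $1 appears as a
# whole line in `kafka-topics.sh --list` output read from stdin.
topic_in_list() {
    grep -qxF -- "$1"
}

# The listing shown above; inside the container you would instead pipe
# ./kafka-topics.sh --bootstrap-server 10.0.0.101:9092 --list
listing='__consumer_offsets
small-kafka
test1
test2'

if printf '%s\n' "$listing" | topic_in_list test2; then
    echo "test2 exists, skipping --create"
fi
```

Matching whole lines (`-x`) matters here: a plain substring search for `test` would wrongly report a hit because of `test1` and `test2`.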

1.2 Deploying an NFS dynamic StorageClass

# Install the NFS service

yum install -y nfs-utils

# Create a data directory for each service

mkdir -p /data/k8s-nfs/{zookeeper,redis,mysql,kafka}

# Edit the NFS exports file

[root@small-master ~/yml/zk-ka]# cat /etc/exports

/data/k8s-nfs/ *(rw,no_root_squash)

# Enable and start the NFS server

[root@small-master ~/yml/zk-ka]# systemctl enable --now nfs-server

# Check the NFS export

[root@master ~/yml/sys]# showmount -e 10.0.0.151

Export list for 10.0.0.151:

/data/k8s-nfs/ *

# Fetch the nfs-client provisioner manifests

git clone https://gitee.com/yinzhengjie/k8s-external-storage.git

mv k8s-external-storage yml/nfs-storage

cd yml/nfs-storage/nfs-client/deploy/

# Create the dynamic StorageClass

[root@small-master ~/yml/nfs-storage/nfs-client/deploy/]# cat class.yaml

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: managed-nfs-storage
provisioner: fuseim.pri/ifs # or choose another name; must match the deployment's env PROVISIONER_NAME
parameters:
  # archiveOnDelete: "false"
  archiveOnDelete: "true"

# Create the RBAC objects (ServiceAccount, roles and bindings)

[root@small-master ~/yml/nfs-storage/nfs-client/deploy/]# cat rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
rules:
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: default
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
rules:
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: default
roleRef:
  kind: Role
  name: leader-locking-nfs-client-provisioner
  apiGroup: rbac.authorization.k8s.io

# Deploy the NFS dynamic provisioner

[root@small-master ~/yml/nfs-storage/nfs-client/deploy]# cat deployment.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  labels:
    app: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
spec:
  replicas: 1
  strategy:
    type: Recreate
  selector:
    matchLabels:
      app: nfs-client-provisioner
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: small-node1:5000/small/saas/nfs-client:latest
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: fuseim.pri/ifs
            - name: NFS_SERVER
              # NFS server address
              value: 10.0.0.100
            - name: NFS_PATH
              # NFS export path
              value: /data/k8s-nfs/
      volumes:
        - name: nfs-client-root
          # the NFS share mounted into the provisioner
          nfs:
            server: 10.0.0.100
            # path: /ifs/kubernetes
            path: /data/k8s-nfs/

kubectl apply -f class.yaml

kubectl apply -f rbac.yaml

kubectl apply -f deployment.yaml

# Verify the StorageClass

[root@small-master ~/yml/nfs-storage/nfs-client/deploy]# kubectl get sc

NAME                  PROVISIONER      RECLAIMPOLICY   VOLUMEBINDINGMODE   ALLOWVOLUMEEXPANSION   AGE
managed-nfs-storage   fuseim.pri/ifs   Delete          Immediate           false                  57m

1.3 ZooKeeper + Kafka: single-node on K8s

Reference: K8s StatefulSet explained

1)zookeeper

# NFS shared storage deployment: omitted here (see 1.2)

# Deploy the ZooKeeper headless Service used by the StatefulSet

[root@small-master ~/yml/zk-ka]# cat zk-svc-headless.yml

apiVersion: v1
kind: Service
metadata:
  name: zookeeper-headless
  labels:
    app: zookeeper
spec:
  type: ClusterIP
  clusterIP: None
  publishNotReadyAddresses: true
  ports:
    - name: client
      port: 2181
      targetPort: client
    - name: follower
      port: 2888
      targetPort: follower
    - name: election
      port: 3888
      targetPort: election
  selector:
    app: zookeeper

# Deploy the ZooKeeper StatefulSet

[root@small-master ~/yml/zk-ka]# cat zk-sts.yml

apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: zookeeper
  labels:
    app: zookeeper
spec:
  serviceName: zookeeper-headless
  replicas: 1
  podManagementPolicy: Parallel
  updateStrategy:
    type: RollingUpdate
  selector:
    matchLabels:
      app: zookeeper
  template:
    metadata:
      name: zookeeper
      labels:
        app: zookeeper
    spec:
      securityContext:
        fsGroup: 1001
      containers:
        - name: zookeeper
          image: docker.io/bitnami/zookeeper:3.4.14-debian-9-r25
          imagePullPolicy: IfNotPresent
          securityContext:
            runAsUser: 1001
          command:
            - bash
            - -ec
            - |
              # Execute entrypoint as usual after obtaining ZOO_SERVER_ID based on POD hostname
              HOSTNAME=`hostname -s`
              if [[ $HOSTNAME =~ (.*)-([0-9]+)$ ]]; then
                ORD=${BASH_REMATCH[2]}
                export ZOO_SERVER_ID=$((ORD+1))
              else
                echo "Failed to get index from hostname $HOSTNAME"
                exit 1
              fi
              . /opt/bitnami/base/functions
              . /opt/bitnami/base/helpers
              print_welcome_page
              . /init.sh
              nami_initialize zookeeper
              exec tini -- /run.sh
          env:
            - name: ZOO_PORT_NUMBER
              value: "2181"
            - name: ZOO_TICK_TIME
              value: "2000"
            - name: ZOO_INIT_LIMIT
              value: "10"
            - name: ZOO_SYNC_LIMIT
              value: "5"
            - name: ZOO_MAX_CLIENT_CNXNS
              value: "60"
            - name: ZOO_SERVERS
              value: "
                zookeeper-0.zookeeper-headless:2888:3888,
                "
            - name: ZOO_ENABLE_AUTH
              value: "no"
            - name: ZOO_HEAP_SIZE
              value: "1024"
            - name: ZOO_LOG_LEVEL
              value: "ERROR"
            - name: ALLOW_ANONYMOUS_LOGIN
              value: "yes"
          ports:
            - name: client
              containerPort: 2181
            - name: follower
              containerPort: 2888
            - name: election
              containerPort: 3888
          livenessProbe:
            tcpSocket:
              port: client
            initialDelaySeconds: 30
            periodSeconds: 10
            timeoutSeconds: 5
            successThreshold: 1
            failureThreshold: 6
          readinessProbe:
            tcpSocket:
              port: client
            initialDelaySeconds: 5
            periodSeconds: 10
            timeoutSeconds: 5
            successThreshold: 1
            failureThreshold: 6
          volumeMounts:
            - name: data
              mountPath: /bitnami/zookeeper
  volumeClaimTemplates:
    - metadata:
        name: data
      spec:
        accessModes: [ "ReadWriteOnce" ]
        resources:
          requests:
            storage: 10Gi
        storageClassName: managed-nfs-storage
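The `command` above turns the pod ordinal into `ZOO_SERVER_ID`: pod `zookeeper-N` gets id `N+1`, because StatefulSet ordinals start at 0 while the ZooKeeper documentation expects server ids between 1 and 255. The sketch below restates that mapping in POSIX sh (instead of bash's `[[ =~ ]]`) and adds a `zoo_servers` helper that builds a `ZOO_SERVERS` value in the same `host:2888:3888` format for more replicas. Both function names are hypothetical and for illustration only.

```shell
#!/bin/sh
# Hypothetical helper: same id mapping as the StatefulSet command above.
zk_server_id() {
    ord=${1##*-}                  # text after the last '-', e.g. "0" in zookeeper-0
    case $ord in
        ''|*[!0-9]*) echo "Failed to get index from hostname $1" >&2; return 1 ;;
    esac
    echo $((ord + 1))             # ZooKeeper ids start at 1, ordinals at 0
}

# Hypothetical helper: ZOO_SERVERS value for $1 replicas, comma-separated.
zoo_servers() {
    i=0; list=
    while [ "$i" -lt "$1" ]; do
        list="${list:+$list,}zookeeper-$i.zookeeper-headless:2888:3888"
        i=$((i + 1))
    done
    echo "$list"
}

zk_server_id zookeeper-0
zoo_servers 3
```

If `replicas` is raised, every pod needs the full server list, which is why generating it from the replica count is less error-prone than editing the string by hand.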

# Deploy a Service for external access to ZooKeeper

[root@small-master ~/yml/zk-ka]# cat zk-svc.yml

apiVersion: v1
kind: Service
metadata:
  name: zookeeper
  labels:
    app: zookeeper
spec:
  type: NodePort
  ports:
    - name: client
      port: 2181
      targetPort: 2181
      nodePort: 30107
    - name: follower
      port: 2888
      targetPort: follower
    - name: election
      port: 3888
      targetPort: election
  selector:
    app: zookeeper

2)kafka

# Deploy the headless Service used by the StatefulSet

[root@small-master ~/yml/zk-ka]# cat kafka-svc-headless.yml

apiVersion: v1
kind: Service
metadata:
  name: kafka-svc
  labels:
    app: kafka
spec:
  ports:
    - port: 9092
      name: server
  clusterIP: None
  selector:
    app: kafka

# Deploy the Kafka StatefulSet

# Note the address zookeeper-0.zookeeper-headless.default.svc.cluster.local below: if ZooKeeper was deployed in another namespace, replace default with that namespace's name

[root@small-master ~/yml/zk-ka]# cat kafka-sts.yml

apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: kafka-pdb
spec:
  selector:
    matchLabels:
      app: kafka
  # with replicas: 1 this budget can never be met, so voluntary evictions
  # of the broker pod (for example by kubectl drain) will be refused
  minAvailable: 2
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: kafka
spec:
  selector:
    matchLabels:
      app: kafka
  serviceName: kafka-svc
  replicas: 1
  template:
    metadata:
      labels:
        app: kafka
    spec:
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            - labelSelector:
                matchExpressions:
                  - key: "app"
                    operator: In
                    values:
                      - kafka
              topologyKey: "kubernetes.io/hostname"
        podAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
            - weight: 1
              podAffinityTerm:
                labelSelector:
                  matchExpressions:
                    - key: "app"
                      operator: In
                      values:
                        # the ZooKeeper pods in this section are labelled app: zookeeper
                        - zookeeper
                topologyKey: "kubernetes.io/hostname"
      terminationGracePeriodSeconds: 300
      containers:
        - name: k8skafka
          imagePullPolicy: IfNotPresent
          image: small-node1:5000/small/saas/kafka:v1
          ports:
            - containerPort: 9092
              name: server
          command:
            - sh
            - -c
            - "exec kafka-server-start.sh /opt/kafka/config/server.properties --override broker.id=${HOSTNAME##*-} \
              --override listeners=PLAINTEXT://:9092 \
              --override zookeeper.connect=zookeeper-0.zookeeper-headless.default.svc.cluster.local:2181 \
              --override log.dir=/var/lib/kafka \
              --override auto.create.topics.enable=true \
              --override auto.leader.rebalance.enable=true \
              --override background.threads=10 \
              --override compression.type=producer \
              --override delete.topic.enable=false \
              --override leader.imbalance.check.interval.seconds=300 \
              --override leader.imbalance.per.broker.percentage=10 \
              --override log.flush.interval.messages=9223372036854775807 \
              --override log.flush.offset.checkpoint.interval.ms=60000 \
              --override log.flush.scheduler.interval.ms=9223372036854775807 \
              --override log.retention.bytes=-1 \
              --override log.retention.hours=168 \
              --override log.roll.hours=168 \
              --override log.roll.jitter.hours=0 \
              --override log.segment.bytes=1073741824 \
              --override log.segment.delete.delay.ms=60000 \
              --override message.max.bytes=1000012 \
              --override min.insync.replicas=1 \
              --override num.io.threads=8 \
              --override num.network.threads=3 \
              --override num.recovery.threads.per.data.dir=1 \
              --override num.replica.fetchers=1 \
              --override offset.metadata.max.bytes=4096 \
              --override offsets.commit.required.acks=-1 \
              --override offsets.commit.timeout.ms=5000 \
              --override offsets.load.buffer.size=5242880 \
              --override offsets.retention.check.interval.ms=600000 \
              --override offsets.retention.minutes=1440 \
              --override offsets.topic.compression.codec=0 \
              --override offsets.topic.num.partitions=50 \
              --override offsets.topic.replication.factor=3 \
              --override offsets.topic.segment.bytes=104857600 \
              --override queued.max.requests=500 \
              --override quota.consumer.default=9223372036854775807 \
              --override quota.producer.default=9223372036854775807 \
              --override replica.fetch.min.bytes=1 \
              --override replica.fetch.wait.max.ms=500 \
              --override replica.high.watermark.checkpoint.interval.ms=5000 \
              --override replica.lag.time.max.ms=10000 \
              --override replica.socket.receive.buffer.bytes=65536 \
              --override replica.socket.timeout.ms=30000 \
              --override request.timeout.ms=30000 \
              --override socket.receive.buffer.bytes=102400 \
              --override socket.request.max.bytes=104857600 \
              --override socket.send.buffer.bytes=102400 \
              --override unclean.leader.election.enable=true \
              --override zookeeper.session.timeout.ms=6000 \
              --override zookeeper.set.acl=false \
              --override broker.id.generation.enable=true \
              --override connections.max.idle.ms=600000 \
              --override controlled.shutdown.enable=true \
              --override controlled.shutdown.max.retries=3 \
              --override controlled.shutdown.retry.backoff.ms=5000 \
              --override controller.socket.timeout.ms=30000 \
              --override default.replication.factor=1 \
              --override fetch.purgatory.purge.interval.requests=1000 \
              --override group.max.session.timeout.ms=300000 \
              --override group.min.session.timeout.ms=6000 \
              --override inter.broker.protocol.version=0.10.2-IV0 \
              --override log.cleaner.backoff.ms=15000 \
              --override log.cleaner.dedupe.buffer.size=134217728 \
              --override log.cleaner.delete.retention.ms=86400000 \
              --override log.cleaner.enable=true \
              --override log.cleaner.io.buffer.load.factor=0.9 \
              --override log.cleaner.io.buffer.size=524288 \
              --override log.cleaner.io.max.bytes.per.second=1.7976931348623157E308 \
              --override log.cleaner.min.cleanable.ratio=0.5 \
              --override log.cleaner.min.compaction.lag.ms=0 \
              --override log.cleaner.threads=1 \
              --override log.cleanup.policy=delete \
              --override log.index.interval.bytes=4096 \
              --override log.index.size.max.bytes=10485760 \
              --override log.message.timestamp.difference.max.ms=9223372036854775807 \
              --override log.message.timestamp.type=CreateTime \
              --override log.preallocate=false \
              --override log.retention.check.interval.ms=300000 \
              --override max.connections.per.ip=2147483647 \
              --override num.partitions=1 \
              --override producer.purgatory.purge.interval.requests=1000 \
              --override replica.fetch.backoff.ms=1000 \
              --override replica.fetch.max.bytes=1048576 \
              --override replica.fetch.response.max.bytes=10485760 \
              --override reserved.broker.max.id=1000 "
          env:
            - name: KAFKA_HEAP_OPTS
              value: "-Xmx512M -Xms512M"
            - name: KAFKA_OPTS
              value: "-Dlogging.level=INFO"
          volumeMounts:
            - name: data
              mountPath: /var/lib/kafka
          readinessProbe:
            tcpSocket:
              port: 9092
            initialDelaySeconds: 30
            periodSeconds: 30
          livenessProbe:
            tcpSocket:
              port: 9092
            initialDelaySeconds: 60
      securityContext:
        runAsUser: 1000
        fsGroup: 1000
  volumeClaimTemplates:
    - metadata:
        name: data
      spec:
        accessModes: [ "ReadWriteOnce" ]
        resources:
          requests:
            storage: 10Gi
        storageClassName: managed-nfs-storage
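`broker.id=${HOSTNAME##*-}` is expanded by `sh -c` inside the container; Kubernetes itself only substitutes the `$(VAR)` form in `command`. The `##*-` operator removes the longest prefix ending in `-`, leaving the StatefulSet ordinal, so pod `kafka-0` becomes broker 0. A quick local check of that expansion (the function name `broker_id` is only for illustration):

```shell
#!/bin/sh
# Same parameter expansion as the broker.id override above:
# strip everything up to and including the last '-'.
broker_id() {
    printf '%s\n' "${1##*-}"
}

for pod in kafka-0 kafka-1 kafka-2; do
    echo "$pod -> broker.id=$(broker_id "$pod")"
done
```

Because the match is greedy, a StatefulSet name that itself contains dashes (for example `my-kafka-2`) still yields just the ordinal.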

# Deploy a Service for external access to Kafka

[root@small-master ~/yml/zk-ka]# cat kafka-svc.yml

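apiVersion: v1
kind: Service
metadata:
  # must differ from the headless Service kafka-svc, otherwise applying this
  # file tries to turn the headless Service into a NodePort and fails
  name: kafka
  labels:
    app: kafka
spec:
  type: NodePort
  ports:
    - port: 9092
      name: server
      targetPort: 9092
      nodePort: 30108
  selector:
    app: kafka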

3) Test

# Create a topic

[root@small-master ~]# kubectl exec -it kafka-0 -- bash

kafka@kafka-0:/$ kafka-topics.sh --create --zookeeper zookeeper-0.zookeeper-headless.default.svc.cluster.local:2181 --replication-factor 1 --partitions 1 --topic aaa

Created topic "aaa".

# Start a producer

kafka@kafka-0:/$ kafka-console-producer.sh --topic aaa --broker-list localhost:9092

fsdafdsfs

# In a second terminal, start a consumer

[root@small-master ~]# kubectl exec -it kafka-0 -- bash

kafka@kafka-0:/$ kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic aaa

fsdafdsfs

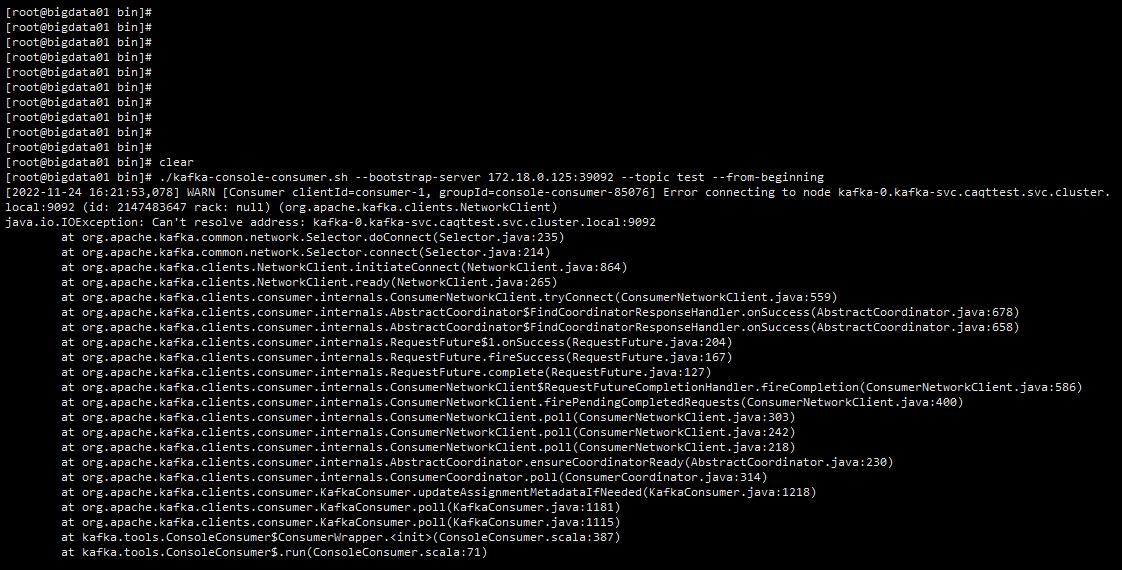

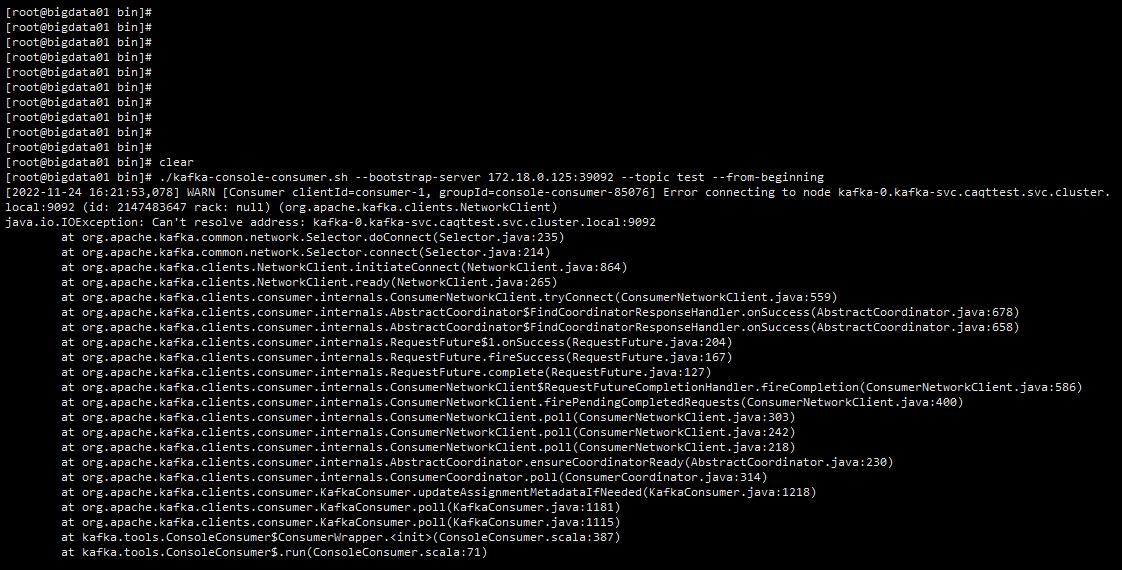

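4) Connecting to Kafka from outside the K8s cluster

If clients outside the K8s cluster need to connect to Kafka, add the line --override advertised.listeners=PLAINTEXT://172.18.0.125:39092 \ to the broker command, where the IP is the address of a K8s cluster host and the port is the one external clients use to reach the broker. Otherwise the broker advertises its in-cluster hostname, which external clients cannot resolve, and the connection fails.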
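The advertised address is what the broker returns to clients in its metadata, so it must be an address external clients can actually reach, for example a node IP plus the broker's NodePort (30108 in kafka-svc.yml above). Kubernetes' default NodePort range is 30000-32767 (configurable with the API server's `--service-node-port-range`), so a port such as 39092 only works if that range was widened or the port is exposed some other way. A minimal sketch, with the hypothetical helper `advertised_override`, that builds the override line and rejects ports outside the default range:

```shell
#!/bin/sh
# Hypothetical helper: print the advertised.listeners override for a node IP
# and a NodePort, assuming the default NodePort range 30000-32767.
advertised_override() {
    ip=$1 port=$2
    case $port in
        ''|*[!0-9]*) echo "port must be numeric" >&2; return 1 ;;
    esac
    if [ "$port" -lt 30000 ] || [ "$port" -gt 32767 ]; then
        echo "port $port is outside the default NodePort range 30000-32767" >&2
        return 1
    fi
    # printf keeps the trailing backslash literal (echo may treat it as an escape)
    printf '%s\n' "--override advertised.listeners=PLAINTEXT://$ip:$port \\"
}

advertised_override 172.18.0.125 30108
```

With a single PLAINTEXT listener, clients inside the cluster are handed the same external address, so it must be reachable from the pods as well.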

1.4 ZooKeeper + Kafka: cluster on K8s

1)zookeeper

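# Remove the taint from the master node so pods can be scheduled on it (the required podAntiAffinity below allows only one ZooKeeper pod per node, so three replicas need three schedulable nodes)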

[root@small-master ~/yml/zk-ka]# kubectl taint node small-master node-role.kubernetes.io/master-

node/small-master untainted

# YAML manifests

[root@small-master ~/yml/zk-ka]# cat zookeeper-cm.yml

apiVersion: v1
kind: ConfigMap
metadata:
  name: zk-cm
data:
  jvm.heap: "1G"
  tick: "2000"
  init: "10"
  sync: "5"
  client.cnxns: "60"
  snap.retain: "3"
  purge.interval: "0"

[root@small-master ~/yml/zk-ka]# cat zookeeper-svc.yml

apiVersion: v1
kind: Service
metadata:
  name: zk-svc
  labels:
    app: zk-svc
spec:
  ports:
    - port: 2888
      name: server
    - port: 3888
      name: leader-election
  clusterIP: None
  selector:
    app: zk

[root@small-master ~/yml/zk-ka]# cat zookeeper-sts.yml

apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: zk-pdb
spec:
  selector:
    matchLabels:
      app: zk
  minAvailable: 2
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: zk
spec:
  selector:
    matchLabels:
      app: zk
  serviceName: zk-svc
  replicas: 3
  template:
    metadata:
      labels:
        app: zk
    spec:
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            - labelSelector:
                matchExpressions:
                  - key: "app"
                    operator: In
                    values:
                      - zk
              topologyKey: "kubernetes.io/hostname"
      containers:
        - name: k8szk
          imagePullPolicy: IfNotPresent
          image: small-node1:5000/small/saas/zookeeper:v1
          resources:
            limits:
              cpu: "1"
              memory: "2Gi"
            requests:
              cpu: "0.25"
              memory: "256Mi"
          ports:
            - containerPort: 2181
              name: client
            - containerPort: 2888
              name: server
            - containerPort: 3888
              name: leader-election
          env:
            - name: ZK_REPLICAS
              value: "3"
            - name: ZK_HEAP_SIZE
              valueFrom:
                configMapKeyRef:
                  name: zk-cm
                  key: jvm.heap
            - name: ZK_TICK_TIME
              valueFrom:
                configMapKeyRef:
                  name: zk-cm
                  key: tick
            - name: ZK_INIT_LIMIT
              valueFrom:
                configMapKeyRef:
                  name: zk-cm
                  key: init
            - name: ZK_SYNC_LIMIT
              valueFrom:
                configMapKeyRef:
                  name: zk-cm
                  key: sync
            - name: ZK_MAX_CLIENT_CNXNS
              valueFrom:
                configMapKeyRef:
                  name: zk-cm
                  key: client.cnxns
            - name: ZK_SNAP_RETAIN_COUNT
              valueFrom:
                configMapKeyRef:
                  name: zk-cm
                  key: snap.retain
            - name: ZK_PURGE_INTERVAL
              valueFrom:
                configMapKeyRef:
                  name: zk-cm
                  key: purge.interval
            - name: ZK_CLIENT_PORT
              value: "2181"
            - name: ZK_SERVER_PORT
              value: "2888"
            - name: ZK_ELECTION_PORT
              value: "3888"
          command:
            - sh
            - -c
            - zkGenConfig.sh && zkServer.sh start-foreground
          readinessProbe:
            exec:
              command:
                - "zkOk.sh"
            initialDelaySeconds: 10
            timeoutSeconds: 5
          livenessProbe:
            exec:
              command:
                - "zkOk.sh"
            initialDelaySeconds: 10
            timeoutSeconds: 5
          volumeMounts:
            - name: data
              mountPath: /var/lib/zookeeper
      securityContext:
        runAsUser: 1000
        fsGroup: 1000
  # Volume claim template: creates a unique PVC for each pod and binds it to that pod
  volumeClaimTemplates:
    - metadata:
        # this name must match the name in volumeMounts above
        name: data
      spec:
        accessModes: [ "ReadWriteOnce" ]
        resources:
          requests:
            storage: 10Gi
        # the custom dynamic StorageClass, i.e. the name of the sc resource
        storageClassName: managed-nfs-storage
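`minAvailable: 2` in zk-pdb follows from ZooKeeper's majority rule: an ensemble of n servers keeps serving only while floor(n/2)+1 of them are up, so with 3 replicas at most one pod may be voluntarily evicted at a time. A small sketch (the helper name `zk_quorum` is hypothetical) for sizing the budget if `replicas` changes:

```shell
#!/bin/sh
# Majority quorum for an ensemble of $1 servers: floor(n/2) + 1.
zk_quorum() {
    echo $(( $1 / 2 + 1 ))
}

# Tolerated failures = replicas - quorum.
for n in 1 3 5; do
    q=$(zk_quorum "$n")
    echo "replicas=$n minAvailable=$q tolerated_failures=$((n - q))"
done
```

An even ensemble gains nothing: 4 servers need a quorum of 3 and still tolerate only one failure, the same as 3 servers.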

# Deploy

[root@small-master ~/yml/zk-ka]# kubectl apply -f zookeeper-cm.yml

[root@small-master ~/yml/zk-ka]# kubectl apply -f zookeeper-svc.yml

[root@small-master ~/yml/zk-ka]# kubectl apply -f zookeeper-sts.yml

# Verify

[root@small-master ~/yml/zk-ka]# kubectl get po

NAME                                    READY   STATUS    RESTARTS      AGE
nfs-client-provisioner-8b8f8bd6-mpjb2   1/1     Running   1 (34m ago)   52m
zk-0                                    1/1     Running   0             75s
zk-1                                    1/1     Running   0             53s
zk-2                                    1/1     Running   0             31s

[root@small-master ~/yml/zk-ka]# for i in 0 1 2; do echo "myid zk-$i";kubectl exec zk-$i -- cat /var/lib/zookeeper/data/myid; done

myid zk-0

1

myid zk-1

2

myid zk-2

3

[root@small-master ~/yml/zk-ka]# for i in 0 1 2; do kubectl exec zk-$i -- hostname -f; done

zk-0.zk-svc.default.svc.cluster.local

zk-1.zk-svc.default.svc.cluster.local

zk-2.zk-svc.default.svc.cluster.local
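Each pod's stable DNS name has the form `<pod>.<headless service>.<namespace>.svc.cluster.local`, and the comma-separated list of these names plus the client port is what a Kafka `zookeeper.connect` setting needs. A sketch, assuming the default cluster domain `cluster.local` and using the hypothetical helper `zk_connect`, that builds the string for any replica count or namespace:

```shell
#!/bin/sh
# Build a zookeeper.connect string: zk_connect <replicas> [namespace] [port]
zk_connect() {
    n=$1 ns=${2:-default} port=${3:-2181}
    i=0; out=
    while [ "$i" -lt "$n" ]; do
        out="${out:+$out,}zk-$i.zk-svc.$ns.svc.cluster.local:$port"
        i=$((i + 1))
    done
    echo "$out"
}

zk_connect 3
```

Passing a namespace as the second argument covers the case where ZooKeeper is not deployed in `default`.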

# Expose each ZooKeeper pod outside the cluster through its own NodePort Service

kubectl label pod zk-0 zkInst=0

kubectl label pod zk-1 zkInst=1

kubectl label pod zk-2 zkInst=2

kubectl expose po zk-0 --port=2181 --target-port=2181 --name=zk-0 --selector=zkInst=0 --type=NodePort

kubectl expose po zk-1 --port=2181 --target-port=2181 --name=zk-1 --selector=zkInst=1 --type=NodePort

kubectl expose po zk-2 --port=2181 --target-port=2181 --name=zk-2 --selector=zkInst=2 --type=NodePort
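Labels added with `kubectl label` live only on the current pod object, so they are lost if the pod is deleted and recreated from the StatefulSet template. The StatefulSet controller itself labels every pod with `statefulset.kubernetes.io/pod-name=<pod name>`, which is a more durable selector. A sketch with a hypothetical helper that only prints the expose commands using that label, so they can be reviewed before being piped to `sh`:

```shell
#!/bin/sh
# Hypothetical helper: print (not run) one expose command per ZooKeeper pod,
# selecting on the pod-name label the StatefulSet controller adds itself.
zk_expose_cmds() {
    i=0
    while [ "$i" -lt "$1" ]; do
        echo "kubectl expose pod zk-$i --port=2181 --target-port=2181 --name=zk-$i --type=NodePort --selector=statefulset.kubernetes.io/pod-name=zk-$i"
        i=$((i + 1))
    done
}

# Review the output first, then run it with: zk_expose_cmds 3 | sh
zk_expose_cmds 3
```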

2)kafka

# Create the headless Service

[root@small-master ~/yml/zk-ka]# cat kafka-svc.yml

apiVersion: v1
kind: Service
metadata:
  name: kafka-svc
  labels:
    app: kafka
spec:
  ports:
    - port: 9093
      name: server
  # Setting clusterIP to None makes this a headless Service
  clusterIP: None
  selector:
    app: kafka

# Create the StatefulSet

# Note the zookeeper.connect addresses zk-N.zk-svc.default.svc.cluster.local below: if ZooKeeper was deployed in another namespace, replace default with that namespace's name

[root@small-master ~/yml/zk-ka]# cat kafka-sts.yml

---
apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: kafka-pdb
spec:
  selector:
    matchLabels:
      app: kafka
  minAvailable: 2
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: kafka
spec:
  selector:
    matchLabels:
      app: kafka
  # the headless Service declared above
  serviceName: kafka-svc
  replicas: 3
  template:
    metadata:
      labels:
        app: kafka
    spec:
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            - labelSelector:
                matchExpressions:
                  - key: "app"
                    operator: In
                    values:
                      - kafka
              topologyKey: "kubernetes.io/hostname"
        podAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
            - weight: 1
              podAffinityTerm:
                labelSelector:
                  matchExpressions:
                    - key: "app"
                      operator: In
                      values:
                        - zk
                topologyKey: "kubernetes.io/hostname"
      terminationGracePeriodSeconds: 300
      containers:
        - name: k8skafka
          imagePullPolicy: IfNotPresent
          image: small-node1:5000/small/saas/kafka:v1
          resources:
            limits:
              cpu: "1"
              memory: "2Gi"
            requests:
              cpu: "0.25"
              memory: "256Mi"
          ports:
            - containerPort: 9093
              name: server
          command:
            - sh
            - -c
            - "exec kafka-server-start.sh /opt/kafka/config/server.properties --override broker.id=${HOSTNAME##*-} \
              --override listeners=PLAINTEXT://:9093 \
              --override zookeeper.connect=zk-0.zk-svc.default.svc.cluster.local:2181,zk-1.zk-svc.default.svc.cluster.local:2181,zk-2.zk-svc.default.svc.cluster.local:2181 \
              --override log.dir=/var/lib/kafka \
              --override auto.create.topics.enable=true \
              --override auto.leader.rebalance.enable=true \
              --override background.threads=10 \
              --override compression.type=producer \
              --override delete.topic.enable=false \
              --override leader.imbalance.check.interval.seconds=300 \
              --override leader.imbalance.per.broker.percentage=10 \
              --override log.flush.interval.messages=9223372036854775807 \
              --override log.flush.offset.checkpoint.interval.ms=60000 \
              --override log.flush.scheduler.interval.ms=9223372036854775807 \
              --override log.retention.bytes=-1 \
              --override log.retention.hours=168 \
              --override log.roll.hours=168 \
              --override log.roll.jitter.hours=0 \
              --override log.segment.bytes=1073741824 \
              --override log.segment.delete.delay.ms=60000 \
              --override message.max.bytes=1000012 \
              --override min.insync.replicas=1 \
              --override num.io.threads=8 \
              --override num.network.threads=3 \
              --override num.recovery.threads.per.data.dir=1 \
              --override num.replica.fetchers=1 \
              --override offset.metadata.max.bytes=4096 \
              --override offsets.commit.required.acks=-1 \
              --override offsets.commit.timeout.ms=5000 \
              --override offsets.load.buffer.size=5242880 \
              --override offsets.retention.check.interval.ms=600000 \
              --override offsets.retention.minutes=1440 \
              --override offsets.topic.compression.codec=0 \
              --override offsets.topic.num.partitions=50 \
              --override offsets.topic.replication.factor=3 \
              --override offsets.topic.segment.bytes=104857600 \
              --override queued.max.requests=500 \
              --override quota.consumer.default=9223372036854775807 \
              --override quota.producer.default=9223372036854775807 \
              --override replica.fetch.min.bytes=1 \
              --override replica.fetch.wait.max.ms=500 \
              --override replica.high.watermark.checkpoint.interval.ms=5000 \
              --override replica.lag.time.max.ms=10000 \
              --override replica.socket.receive.buffer.bytes=65536 \
              --override replica.socket.timeout.ms=30000 \
              --override request.timeout.ms=30000 \
              --override socket.receive.buffer.bytes=102400 \
              --override socket.request.max.bytes=104857600 \
              --override socket.send.buffer.bytes=102400 \
              --override unclean.leader.election.enable=true \
              --override zookeeper.session.timeout.ms=6000 \
              --override zookeeper.set.acl=false \
              --override broker.id.generation.enable=true \
              --override connections.max.idle.ms=600000 \
              --override controlled.shutdown.enable=true \
              --override controlled.shutdown.max.retries=3 \
              --override controlled.shutdown.retry.backoff.ms=5000 \
              --override controller.socket.timeout.ms=30000 \
              --override default.replication.factor=1 \
              --override fetch.purgatory.purge.interval.requests=1000 \
              --override group.max.session.timeout.ms=300000 \
              --override group.min.session.timeout.ms=6000 \
              --override inter.broker.protocol.version=0.10.2-IV0 \
              --override log.cleaner.backoff.ms=15000 \
              --override log.cleaner.dedupe.buffer.size=134217728 \
              --override log.cleaner.delete.retention.ms=86400000 \
              --override log.cleaner.enable=true \
              --override log.cleaner.io.buffer.load.factor=0.9 \
              --override log.cleaner.io.buffer.size=524288 \
              --override log.cleaner.io.max.bytes.per.second=1.7976931348623157E308 \
              --override log.cleaner.min.cleanable.ratio=0.5 \
              --override log.cleaner.min.compaction.lag.ms=0 \
              --override log.cleaner.threads=1 \
              --override log.cleanup.policy=delete \
              --override log.index.interval.bytes=4096 \
              --override log.index.size.max.bytes=10485760 \
              --override log.message.timestamp.difference.max.ms=9223372036854775807 \
              --override log.message.timestamp.type=CreateTime \
              --override log.preallocate=false \
              --override log.retention.check.interval.ms=300000 \
              --override max.connections.per.ip=2147483647 \
              --override num.partitions=1 \
              --override producer.purgatory.purge.interval.requests=1000 \
              --override replica.fetch.backoff.ms=1000 \
              --override replica.fetch.max.bytes=1048576 \
              --override replica.fetch.response.max.bytes=10485760 \
              --override reserved.broker.max.id=1000 "
          env:
            - name: KAFKA_HEAP_OPTS
              value: "-Xmx512M -Xms512M"
            - name: KAFKA_OPTS
              value: "-Dlogging.level=INFO"
          volumeMounts:
            - name: data
              mountPath: /var/lib/kafka
          readinessProbe:
            tcpSocket:
              port: 9093
            initialDelaySeconds: 30
            periodSeconds: 30
          livenessProbe:
            tcpSocket:
              port: 9093
            initialDelaySeconds: 60
            periodSeconds: 3
      securityContext:
        runAsUser: 1000
        fsGroup: 1000
  volumeClaimTemplates:
    - metadata:
        name: data
      spec:
        accessModes: [ "ReadWriteOnce" ]
        resources:
          requests:
            storage: 10Gi
        storageClassName: managed-nfs-storage

[root@small-master ~/yml/zk-ka]# kubectl apply -f kafka-svc.yml

[root@small-master ~/yml/zk-ka]# kubectl apply -f kafka-sts.yml

# Check

[root@small-master ~/yml/zk-ka]# kubectl get po

NAME                                      READY   STATUS    RESTARTS   AGE
kafka-0                                   1/1     Running   1          48m
kafka-1                                   1/1     Running   0          48m
kafka-2                                   1/1     Running   0          47m
nfs-client-provisioner-85fc7b9595-shrl4   1/1     Running   1          3h56m
zk-0                                      1/1     Running   0          3h51m
zk-1                                      1/1     Running   0          3h50m
zk-2                                      1/1     Running   0          3h51m

[root@small-master ~/yml/zk-ka]# ll /data/k8s-nfs/

total 0

drwxrwxrwx 2 root root 6 Oct 13 18:34 archived-default-data-kafka-0-pvc-e11ab794-69be-46c7-98d7-0648599199e1

drwxrwxrwx 2 root root 6 Oct 13 18:36 default-data-kafka-0-pvc-90dacc70-3a01-4225-b17c-c94ebdbf72a0

drwxrwxrwx 2 root root 6 Oct 13 18:36 default-data-kafka-1-pvc-caf25d03-d143-4a83-a453-869413983dc2

drwxrwxrwx 2 root root 6 Oct 13 18:36 default-data-kafka-2-pvc-fde88742-8c3c-4908-ae26-634b8afa038a

drwxrwxrwx 4 root root 29 Oct 13 18:29 default-data-zk-0-pvc-8d49b10a-e772-4949-8402-b48288be1dc5

drwxrwxrwx 4 root root 29 Oct 13 18:30 default-data-zk-1-pvc-cd5f6119-5fab-4750-9554-f33a1a5f58a0

drwxrwxrwx 4 root root 29 Oct 13 18:31 default-data-zk-2-pvc-b866fe09-6650-4b12-a4bc-707becab1798
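These directory names follow the nfs-client provisioner's convention `<namespace>-<pvc name>-<pv name>`. Because class.yaml sets `archiveOnDelete: "true"`, the directory of a deleted claim is renamed with an `archived-` prefix instead of being removed, which is what the first entry above shows. A sketch with a hypothetical helper `nfs_dir_info` that classifies an entry and splits out its parts, assuming PV names of the form `pvc-<uuid>` as listed and a namespace that contains no `-`:

```shell
#!/bin/sh
# Hypothetical helper: describe a directory created by nfs-client-provisioner.
# Assumes <namespace>-<pvc name>-pvc-<uuid> and a namespace without '-'.
nfs_dir_info() {
    d=$1 state=active
    case $d in
        archived-*) state=archived; d=${d#archived-} ;;
    esac
    case $d in
        *-pvc-*) ;;
        *) echo "unrecognised: $1" >&2; return 1 ;;
    esac
    pv="pvc-${d##*-pvc-}"     # PV name, e.g. pvc-90dacc70-...
    prefix=${d%-pvc-*}        # <namespace>-<pvc name>
    ns=${prefix%%-*}
    pvc=${prefix#*-}
    echo "$state ns=$ns pvc=$pvc pv=$pv"
}

nfs_dir_info default-data-kafka-0-pvc-90dacc70-3a01-4225-b17c-c94ebdbf72a0
nfs_dir_info archived-default-data-kafka-0-pvc-e11ab794-69be-46c7-98d7-0648599199e1
```

On the NFS server the same function could be applied to the `basename` of each entry under /data/k8s-nfs/ to spot archived data that is safe to clean up.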

# Verify:

[root@small-master ~]# kubectl exec -it kafka-0 -- bash

# Create a topic

kafka@kafka-0:/opt/kafka/config$ kafka-topics.sh --create --topic ans --zookeeper zk-0.zk-svc.default.svc.cluster.local:2181,zk-1.zk-svc.default.svc.cluster.local:2181,zk-2.zk-svc.default.svc.cluster.local:2181 --partitions 3 --replication-factor 2

Created topic "ans".

# Start a producer

kafka@kafka-0:/opt/kafka/config$ kafka-console-producer.sh --topic ans --broker-list localhost:9093

fsdfsd

# Start a consumer on another broker pod

[root@small-master ~]# kubectl exec -it kafka-1 -- bash

kafka@kafka-1:/opt/kafka/bin$ kafka-console-consumer.sh --bootstrap-server localhost:9093 --topic ans

fsdfsd

# Check the ZooKeeper state

[root@small-master ~/yml/zk-ka]# kubectl exec -it zk-0 -- bash

zookeeper@zk-0:/$ zkCli.sh

Connecting to localhost:2181

2022-10-14 07:54:30,109 [myid:] - INFO [main:Environment@100] - Client environment:zookeeper.version=3.4.10-39d3a4f269333c922ed3db283be479f9deacaa0f, built on 03/23/2017 10:13 GMT

2022-10-14 07:54:30,112 [myid:] - INFO [main:Environment@100] - Client environment:host.name=zk-0.zk-svc.default.svc.cluster.local

2022-10-14 07:54:30,112 [myid:] - INFO [main:Environment@100] - Client environment:java.version=1.8.0_131

2022-10-14 07:54:30,114 [myid:] - INFO [main:Environment@100] - Client environment:java.vendor=Oracle Corporation

2022-10-14 07:54:30,114 [myid:] - INFO [main:Environment@100] - Client environment:java.home=/usr/lib/jvm/java-8-openjdk-amd64/jre

2022-10-14 07:54:30,114 [myid:] - INFO [main:Environment@100] - Client environment:java.class.path=/usr/bin/../build/classes:/usr/bin/../build/lib/*.jar:/usr/bin/../share/zookeeper/zookeeper-3.4.10.jar:/usr/bin/../share/zookeeper/slf4j-log4j12-1.6.1.jar:/usr/bin/../share/zookeeper/slf4j-api-1.6.1.jar:/usr/bin/../share/zookeeper/netty-3.10.5.Final.jar:/usr/bin/../share/zookeeper/log4j-1.2.16.jar:/usr/bin/../share/zookeeper/jline-0.9.94.jar:/usr/bin/../src/java/lib/*.jar:/usr/bin/../etc/zookeeper:

2022-10-14 07:54:30,114 [myid:] - INFO [main:Environment@100] - Client environment:java.library.path=/usr/java/packages/lib/amd64:/usr/lib/x86_64-linux-gnu/jni:/lib/x86_64-linux-gnu:/usr/lib/x86_64-linux-gnu:/usr/lib/jni:/lib:/usr/lib

2022-10-14 07:54:30,114 [myid:] - INFO [main:Environment@100] - Client environment:java.io.tmpdir=/tmp

2022-10-14 07:54:30,114 [myid:] - INFO [main:Environment@100] - Client environment:java.compiler=<NA>

2022-10-14 07:54:30,114 [myid:] - INFO [main:Environment@100] - Client environment:os.name=Linux

2022-10-14 07:54:30,114 [myid:] - INFO [main:Environment@100] - Client environment:os.arch=amd64

2022-10-14 07:54:30,114 [myid:] - INFO [main:Environment@100] - Client environment:os.version=3.10.0-1160.el7.x86_64

2022-10-14 07:54:30,115 [myid:] - INFO [main:Environment@100] - Client environment:user.name=zookeeper

2022-10-14 07:54:30,115 [myid:] - INFO [main:Environment@100] - Client environment:user.home=/home/zookeeper

2022-10-14 07:54:30,115 [myid:] - INFO [main:Environment@100] - Client environment:user.dir=/

2022-10-14 07:54:30,116 [myid:] - INFO [main:ZooKeeper@438] - Initiating client connection, connectString=localhost:2181 sessionTimeout=30000 watcher=org.apache.zookeeper.ZooKeeperMain$MyWatcher@42110406

Welcome to ZooKeeper!

2022-10-14 07:54:30,161 [myid:] - INFO [main-SendThread(localhost:2181):ClientCnxn$SendThread@1032] - Opening socket connection to server localhost/127.0.0.1:2181. Will not attempt to authenticate using SASL (unknown error)

JLine support is enabled

2022-10-14 07:54:30,367 [myid:] - INFO [main-SendThread(localhost:2181):ClientCnxn$SendThread@876] - Socket connection established to localhost/127.0.0.1:2181, initiating session

2022-10-14 07:54:30,494 [myid:] - INFO [main-SendThread(localhost:2181):ClientCnxn$SendThread@1299] - Session establishment complete on server localhost/127.0.0.1:2181, sessionid = 0x183d569ae680000, negotiated timeout = 30000

WATCHER::

WatchedEvent state:SyncConnected type:None path:null

# Check the registered brokers

[zk: localhost:2181(CONNECTED) 0] ls /

[cluster, controller, controller_epoch, brokers, zookeeper, admin, isr_change_notification, consumers, config]

[zk: localhost:2181(CONNECTED) 1] ls /brokers

[ids, topics, seqid]

[zk: localhost:2181(CONNECTED) 4] ls /brokers/ids

[0, 1, 2]

# All three brokers (ids 0, 1, 2) are registered in ZooKeeper, so Kafka is connected successfully
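To script the same check, the bracketed line printed by `ls /brokers/ids` (for example `[0, 1, 2]`) can be compared with the expected broker count; `zkCli.sh -server localhost:2181 ls /brokers/ids` can typically run that single command without the interactive shell. A sketch with the hypothetical helper `brokers_registered`, assuming the bracketed, comma-separated format shown above:

```shell
#!/bin/sh
# Hypothetical helper: succeed if the zkCli output on stdin contains an id
# list like "[0, 1, 2]" with exactly $1 entries. Prompt lines such as
# "[zk: localhost:2181(CONNECTED) 4] ..." do not match the id-list pattern.
brokers_registered() {
    expected=$1
    line=$(grep '^\[[0-9, ]*]$' | tail -n 1)
    # drop brackets and spaces, one id per line, count non-empty lines
    count=$(printf '%s\n' "$line" | sed 's/[][ ]//g' | tr ',' '\n' | grep -c .)
    [ "$count" -eq "$expected" ]
}

if printf '%s\n' '[0, 1, 2]' | brokers_registered 3; then
    echo "all 3 brokers registered"
fi
```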

2. Containerizing Redis Sentinel and MySQL

2.1 Redis standalone mode

[root@small-master ~/yml/redis]# cat redis-alone.yaml

apiVersion: v1
kind: Service
metadata:
  name: redis-svc
  labels:
    app: redis
spec:
  ports:
    - port: 6379
      name: server
  clusterIP: None
  selector:
    app: redis
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: redis-conf
data:
  redis.conf: |
    bind 0.0.0.0
    port 6379
    requirepass Shuli@123
    appendonly yes
    pidfile /data/middleware-data/redis/log/redis-6379.pid
    dir /data/middleware-data/redis/data/
    logfile "/data/middleware-data/redis/log/redis-6379.log"
    protected-mode no
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: redis
spec:
  # must be the name of the headless Service above (a key may appear only once)
  serviceName: redis-svc
  replicas: 1
  selector:
    matchLabels:
      app: redis
  template:
    metadata:
      labels:
        app: redis
    spec:
      initContainers:
        - name: init-redis
          image: busybox
          command: ['sh', '-c', 'mkdir -p /data/middleware-data/redis/log/;mkdir -p /data/middleware-data/redis/conf/;mkdir -p /data/middleware-data/redis/data/']
          volumeMounts:
            - name: data
              mountPath: /data/middleware-data/redis/
      containers:
        - name: redis
          image: harbor.data4truth.com:8443/paas/redis:5.0.5-alpine
          imagePullPolicy: IfNotPresent
          command:
            - sh
            - -c
            - "exec redis-server /data/middleware-data/redis/conf/redis.conf"
          ports:
            - containerPort: 6379
              name: redis
              protocol: TCP
          volumeMounts:
            - name: redis-config
              mountPath: /data/middleware-data/redis/conf/
            - name: data
              mountPath: /data/middleware-data/redis/
      volumes:
        - name: redis-config
          configMap:
            name: redis-conf
  volumeClaimTemplates:
    - metadata:
        name: data
      spec:
        accessModes: [ "ReadWriteOnce" ]
        resources:
          requests:
            storage: 10Gi
        storageClassName: managed-nfs-storage
---
kind: Service
apiVersion: v1
metadata:
  labels:
    name: redis
  name: redis
spec:
  type: NodePort
  ports:
    - name: redis
      port: 6379
      targetPort: 6379
      nodePort: 31379
  selector:
    # must match the pod labels set in the StatefulSet template
    app: redis

#Verify:

[root@small-master ~/yml/redis]# kubectl get po

NAME READY STATUS RESTARTS AGE

nfs-client-provisioner-85fc7b9595-h85pv 1/1 Running 0 61m

redis-0 1/1 Running 0 38s

[root@small-master ~/yml/redis]# kubectl exec -it redis-0 -- sh

/data # redis-cli

127.0.0.1:6379> auth Shuli@123

OK

127.0.0.1:6379> info Replication

# Replication

role:master

connected_slaves:0

master_replid:8571592c4a5fb224947f9e60a5846e489ac16030

master_replid2:0000000000000000000000000000000000000000

master_repl_offset:0

second_repl_offset:-1

repl_backlog_active:0

repl_backlog_size:1048576

repl_backlog_first_byte_offset:0

repl_backlog_histlen:0
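To check the role without an interactive session, the INFO output can be parsed. This is a minimal sketch that assumes redis-cli prints INFO as `key:value` lines, as shown above. `info_field` is a hypothetical helper name.

```shell
#!/bin/sh
# Extract one field (e.g. role, connected_slaves) from redis-cli INFO output.
# info_field is a hypothetical helper. Raw INFO lines may end in \r, so
# carriage returns are removed before splitting on the first ":".
info_field() {
  printf '%s\n' "$1" | tr -d '\r' | awk -F: -v key="$2" '$1 == key { print $2; exit }'
}

# Live usage against the standalone pod (password from the ConfigMap above):
#   out=$(kubectl exec redis-0 -- redis-cli -a 'Shuli@123' info replication)
#   [ "$(info_field "$out" role)" = "master" ] && echo "standalone instance is master"
```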

2.2 Redis Sentinel mode

#dockerfile

[root@small-master ~/yml/redis/dockerfile]# cat Dockerfile

FROM redis:6.0
LABEL maintainer="Ansheng"
COPY *.conf /opt/conf/
COPY run.sh /opt/run.sh
RUN apt-get update -y && apt-get install -y vim net-tools && apt-get clean && \
    chmod +x /opt/run.sh
CMD ["/opt/run.sh"]

#Redis configuration file

[root@small-master ~/yml/redis/dockerfile]# cat redis.conf

#Address to bind to; 0.0.0.0 allows connections from any host

bind 0.0.0.0

#Protected mode, added in Redis 3.2: when enabled and neither a bind address nor a password is configured, only 127.0.0.1 may connect

protected-mode no

#Port exposed to clients

port 6379

#Password required to log in

requirepass shuli@123

#Password replicas use to authenticate to the master

masterauth shuli@123

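#Length of the queue of connections that completed the TCP three-way handshake (the server received the client's ACK) and are waiting to be accepted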

tcp-backlog 511

#Close client connections idle for this many seconds; 0 means never time out

timeout 30

#Example of a connection password "hello" (commented out)

#requirepass hello

#TCP keepalive interval: 300 s

tcp-keepalive 300

#Run Redis as a daemon; when enabled, a pid file is written

daemonize yes

supervised no

#Path of the pid file

pidfile /var/run/redis_6379.pid

#Log level

loglevel notice

logfile /var/log/redis.log

#Number of databases

databases 16

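#Snapshot rules: save after 900 s if at least 1 key changed, after 300 s if at least 10 changed, after 60 s if at least 10000 changed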

save 900 1

save 300 10

save 60 10000

#Stop accepting writes if the last background save failed

stop-writes-on-bgsave-error yes

#Compress the RDB file

rdbcompression yes

#Add a CRC64 checksum to the RDB file

rdbchecksum yes

#Name of the RDB file

dbfilename dump.rdb

#Directory for the RDB and AOF files (the PVC is mounted at /data in the StatefulSet)

dir /data

slave-serve-stale-data yes

slave-read-only yes

repl-diskless-sync no

repl-diskless-sync-delay 5

repl-disable-tcp-nodelay no

slave-priority 100

#Enable AOF persistence

appendonly yes

#AOF file name

appendfilename "appendonly.aof"

appendfsync everysec

no-appendfsync-on-rewrite no

auto-aof-rewrite-percentage 100

auto-aof-rewrite-min-size 64mb

aof-load-truncated yes

lua-time-limit 5000

slowlog-log-slower-than 10000

slowlog-max-len 128

latency-monitor-threshold 0

notify-keyspace-events ""

hash-max-ziplist-entries 512

hash-max-ziplist-value 64

list-max-ziplist-size -2

list-compress-depth 0

set-max-intset-entries 512

zset-max-ziplist-entries 128

zset-max-ziplist-value 64

hll-sparse-max-bytes 3000

activerehashing yes

client-output-buffer-limit normal 0 0 0

client-output-buffer-limit slave 256mb 64mb 60

client-output-buffer-limit pubsub 32mb 8mb 60

hz 10

aof-rewrite-incremental-fsync yes

#Maximum number of client connections

maxclients 20000

lazyfree-lazy-eviction yes

lazyfree-lazy-expire yes

lazyfree-lazy-server-del yes

slave-lazy-flush yes
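The three `save` lines are alternatives: a snapshot is taken as soon as any one rule is satisfied. The sketch below models that check. `snapshot_due` is a hypothetical helper, and the model is simplified. It assumes the elapsed time must exceed the rule's seconds. Redis evaluates the rules periodically, so a real snapshot can start slightly later than the model suggests.

```shell
#!/bin/sh
# Simplified model of "save 900 1", "save 300 10" and "save 60 10000":
# a snapshot is due if, for at least one rule, more than <seconds> have
# passed since the last save AND at least <changes> writes happened.
# snapshot_due is a hypothetical helper for illustration only.
snapshot_due() {
  elapsed=$1   # seconds since the last successful save
  changes=$2   # writes since the last save
  for rule in "900 1" "300 10" "60 10000"; do
    set -- $rule   # $1 = seconds, $2 = changes for this rule
    if [ "$elapsed" -gt "$1" ] && [ "$changes" -ge "$2" ]; then
      echo yes
      return 0
    fi
  done
  echo no
}
```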

#Sentinel configuration file

[root@small-master ~/yml/redis/dockerfile]# cat sentinel.conf

# Port the Sentinel instance listens on (default 26379)

port 26379

# Sentinel working directory

dir "/tmp"

sentinel deny-scripts-reconfig yes

sentinel monitor mymaster redis-0.redis 6379 2

sentinel auth-pass mymaster shuli@123

# Consider the master down if it does not respond for 5 seconds; this line must come after the "sentinel monitor" line

sentinel down-after-milliseconds mymaster 5000

# Failover timeout of 15 seconds; it also bounds how soon Sentinel retries a failover of the same master

sentinel failover-timeout mymaster 15000

# Number of replicas that may resync from the new master in parallel after a failover. A larger value finishes the switchover faster, but replicas that are resyncing may fail reads, so more clients are affected. The safest value is 1: only one replica resyncs at a time while the others keep serving, at the cost of a slower overall switchover.

sentinel parallel-syncs mymaster 2

sentinel config-epoch mymaster 3

sentinel leader-epoch mymaster 3
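The quorum of 2 in `sentinel monitor` only decides when the master is considered objectively down. To actually start a failover, one Sentinel must also be elected leader by a majority of all Sentinels. The worked example below assumes one Sentinel per pod, as run.sh starts them. `majority` is a hypothetical helper.

```shell
#!/bin/sh
# Majority of n Sentinels: floor(n/2) + 1. majority is a hypothetical helper.
majority() {
  echo $(( $1 / 2 + 1 ))
}

sentinels=3   # one Sentinel per pod, replicas: 3 in the StatefulSet
quorum=2      # last argument of "sentinel monitor"
echo "quorum=$quorum majority=$(majority "$sentinels")"
# With 3 Sentinels the majority is 2, so a failover is still possible after losing one pod.
```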

#Startup script

[root@small-master ~/yml/redis/dockerfile]# cat run.sh

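#!/bin/bash
# Ordinal of this pod, e.g. POD_NAME=redis-1 gives 1
pod_seq=$(echo "$POD_NAME" | awk -F"-" '{print $2}')
if [[ ${pod_seq} -ne 0 ]]; then    # not redis-0, so this pod is a replica
    sed -i '/^slaveof /d' /opt/conf/redis.conf
    echo "slaveof redis-0.redis 6379" >> /opt/conf/redis.conf    # redis-0.redis is the address of the first Redis pod
fi
# redis.conf sets daemonize yes, so redis-server forks and this call returns
/usr/local/bin/redis-server /opt/conf/redis.conf
sleep 15    # if redis-0 is not up yet, the Sentinel in this pod cannot start either, so wait before starting it
/usr/local/bin/redis-sentinel /opt/conf/sentinel.conf &
# keep the container in the foreground by following the Redis log
tail -f /var/log/redis.log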
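run.sh takes the second `-`-separated field of `POD_NAME`. That works for `redis-0`, but it returns the wrong value if the StatefulSet name itself contains a hyphen. For example, a hypothetical `redis-ha-1` yields `ha`. The sketch below is a more robust alternative that uses POSIX parameter expansion. `pod_ordinal` is a hypothetical helper name.

```shell
#!/bin/sh
# Ordinal of a StatefulSet pod: everything after the last "-" in its name.
# pod_ordinal is a hypothetical helper, a possible replacement for the awk line in run.sh.
pod_ordinal() {
  echo "${1##*-}"
}

# In run.sh this could become:
#   pod_seq=$(pod_ordinal "$POD_NAME")
#   if [ "$pod_seq" -ne 0 ]; then ... fi
```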

[root@small-master ~/yml/redis/dockerfile]# docker build --pull -t harbor.ans.cn/saas/redis_sentinel:6.0 .

[root@small-master ~/yml/redis/dockerfile]# docker push harbor.ans.cn/saas/redis_sentinel:6.0

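#Kubernetes manifest for Redis with Sentinel. run.sh and sentinel.conf reach the first pod as redis-0.redis. Kubernetes only publishes such per-pod DNS names through a headless governing Service (clusterIP: None) named in serviceName. If redis-0.redis does not resolve, create a headless Service named redis and give the NodePort Service below a different name.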

[root@small-master ~/yml/redis]# cat redis-sentinel.yml

apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: redis
spec:
  serviceName: redis
  selector:
    matchLabels:
      app: redis
  replicas: 3
  volumeClaimTemplates:
    - metadata:
        name: data
      spec:
        accessModes: [ "ReadWriteOnce" ]
        resources:
          requests:
            storage: 10Gi
        storageClassName: managed-nfs-storage
  template:
    metadata:
      labels:
        app: redis
    spec:
      restartPolicy: Always
      containers:
        - name: redis
          image: harbor.ans.cn/saas/redis_sentinel:6.0
          imagePullPolicy: Always
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
          livenessProbe:
            tcpSocket:
              port: 6379
            initialDelaySeconds: 3
            periodSeconds: 5
          readinessProbe:
            tcpSocket:
              port: 6379
            initialDelaySeconds: 3
            periodSeconds: 5
          ports:
            - containerPort: 6379
          resources:
            limits:
              cpu: "1"
              memory: "2Gi"
            requests:
              cpu: "0.25"
              memory: "256Mi"
          volumeMounts:
            - name: data
              mountPath: /data
---
apiVersion: v1
kind: Service
metadata:
  name: redis
spec:
  type: NodePort
  ports:
    - name: redis
      port: 6379
      targetPort: 6379
      nodePort: 32222
  selector:
    app: redis

[root@small-master ~/yml/redis]# kubectl apply -f redis-sentinel.yml

statefulset.apps/redis created

service/redis created

[root@small-master ~/yml/redis]# kubectl get po

NAME READY STATUS RESTARTS AGE

redis-0 1/1 Running 0 5m13s

redis-1 1/1 Running 0 5m6s

redis-2 1/1 Running 0 4m59s

[root@small-master ~/yml/redis]# cd /data/k8s-nfs/

[root@small-master /data/k8s-nfs]# ll

total 0

drwxrwxrwx 2 root root 44 Oct 18 15:09 default-data-redis-0-pvc-875ac01e-d5d8-427f-8cd5-ba590e714902

drwxrwxrwx 2 root root 44 Oct 18 15:08 default-data-redis-1-pvc-7268bf13-4619-48c7-987f-fe2ccc4d218d

drwxrwxrwx 2 root root 44 Oct 18 15:09 default-data-redis-2-pvc-41dc4e4c-65fe-42cb-b41e-9117a0659eba

[root@small-master /data/k8s-nfs]# tree

.

├── default-data-redis-0-pvc-875ac01e-d5d8-427f-8cd5-ba590e714902

│ ├── appendonly.aof

│ └── dump.rdb

├── default-data-redis-1-pvc-7268bf13-4619-48c7-987f-fe2ccc4d218d

│ ├── appendonly.aof

│ └── dump.rdb

└── default-data-redis-2-pvc-41dc4e4c-65fe-42cb-b41e-9117a0659eba

├── appendonly.aof

└── dump.rdb

3 directories, 6 files
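The directory names above follow the nfs-client provisioner's pattern `<namespace>-<PVC name>-<PV name>`. A StatefulSet's PVC is named `<volumeClaimTemplate>-<StatefulSet>-<ordinal>`, which gives `data-redis-0` here. The sketch below locates a given pod's data directory on the NFS server. `nfs_dir_prefix` is a hypothetical helper, and the `pvc-<uid>` suffix is generated per volume.

```shell
#!/bin/sh
# Directory prefix the nfs-client provisioner creates for a StatefulSet pod.
# Arguments: namespace, volumeClaimTemplate name, StatefulSet name, ordinal.
# nfs_dir_prefix is a hypothetical helper; the trailing PV name (pvc-<uid>) varies.
nfs_dir_prefix() {
  echo "$1-$2-$3-$4"
}

# Example: show the data directory of redis-0 on the NFS server
#   ls -d /data/k8s-nfs/"$(nfs_dir_prefix default data redis 0)"-pvc-*
```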

#Verify

[root@small-master ~/yml/redis]# kubectl exec -it redis-0 -- bash

#Check master/replica status

root@redis-0:/data# redis-cli

127.0.0.1:6379> auth shuli@123

OK

127.0.0.1:6379> info Replication

# Replication

role:master

connected_slaves:2

slave0:ip=10.244.2.165,port=6379,state=online,offset=72640,lag=0

slave1:ip=10.244.1.31,port=6379,state=online,offset=72503,lag=0

master_replid:9531e163c328f057a33e37c9611bb828a2e077a3

master_replid2:0000000000000000000000000000000000000000

master_repl_offset:72640

second_repl_offset:-1

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:1

repl_backlog_histlen:72640

127.0.0.1:6379> exit
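For scripted checks, the number of healthy replicas can be read from the same `info replication` output. This is a minimal sketch. `count_online_replicas` is a hypothetical helper that counts `slaveN:` lines with `state=online`. The expected count of 2 assumes `replicas: 3`.

```shell
#!/bin/sh
# Count replicas reported as online in "info replication" output from the master.
# count_online_replicas is a hypothetical helper.
count_online_replicas() {
  printf '%s\n' "$1" | tr -d '\r' | grep -c '^slave[0-9][0-9]*:.*state=online'
}

# Live usage (3 pods, so 2 online replicas are expected):
#   out=$(kubectl exec redis-0 -- redis-cli -a 'shuli@123' info replication)
#   [ "$(count_online_replicas "$out")" -eq 2 ] || echo "replication incomplete"
```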

#Check Sentinel status

root@redis-0:/data# redis-cli -p 26379

127.0.0.1:26379> info sentinel

# Sentinel

sentinel_masters:1

sentinel_tilt:0

sentinel_running_scripts:0

sentinel_scripts_queue_length:0

sentinel_simulate_failure_flags:0

master0:name=mymaster,status=ok,address=10.244.1.30:6379,slaves=2,sentinels=3
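The current master address can be extracted from the `master0:` line for use in scripts. The sketch below uses a hypothetical `master_addr` helper. Sentinel also offers `SENTINEL get-master-addr-by-name mymaster`, which returns the IP and port directly.

```shell
#!/bin/sh
# Pull the address=<ip>:<port> value out of the "master0:" line of "info sentinel".
# master_addr is a hypothetical helper.
master_addr() {
  printf '%s\n' "$1" | tr -d '\r' | grep '^master0:' | tr ',' '\n' \
    | sed -n 's/^address=//p'
}

# Live usage:
#   out=$(kubectl exec redis-0 -- redis-cli -p 26379 info sentinel)
#   master_addr "$out"
# or ask Sentinel directly:
#   kubectl exec redis-0 -- redis-cli -p 26379 sentinel get-master-addr-by-name mymaster
```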

#Connecting to Redis

#Other Java applications in the Kubernetes cluster connect through Redis Sentinel (Spring Boot configuration)

spring:
  redis:
    database: 4
    # Redis Sentinel addresses: <pod>.<service>.<namespace>.svc.cluster.local:26379 (namespace redis-demo here)
    sentinel:
      master: mymaster
      nodes: redis-0.redis.redis-demo.svc.cluster.local:26379,redis-1.redis.redis-demo.svc.cluster.local:26379,redis-2.redis.redis-demo.svc.cluster.local:26379
    # Redis password (empty by default)
    password: shuli@123
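The `nodes` value follows the StatefulSet DNS pattern `<pod>.<service>.<namespace>.svc.cluster.local`, so it can be generated from the replica count instead of typed by hand. The sketch below uses a hypothetical `sentinel_nodes` helper. `redis-demo` is only the namespace used in the example above.

```shell
#!/bin/sh
# Build the spring.redis.sentinel.nodes value for a StatefulSet with N pods.
# Arguments: StatefulSet name, governing Service name, namespace, replicas, port.
# sentinel_nodes is a hypothetical helper.
sentinel_nodes() {
  sts=$1 svc=$2 ns=$3 replicas=$4 port=$5
  list=""
  i=0
  while [ "$i" -lt "$replicas" ]; do
    node="$sts-$i.$svc.$ns.svc.cluster.local:$port"
    list="${list:+$list,}$node"   # add a comma only between entries
    i=$((i + 1))
  done
  echo "$list"
}
```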

#Connecting from a Redis client tool

Node IP:32222, password: shuli@123

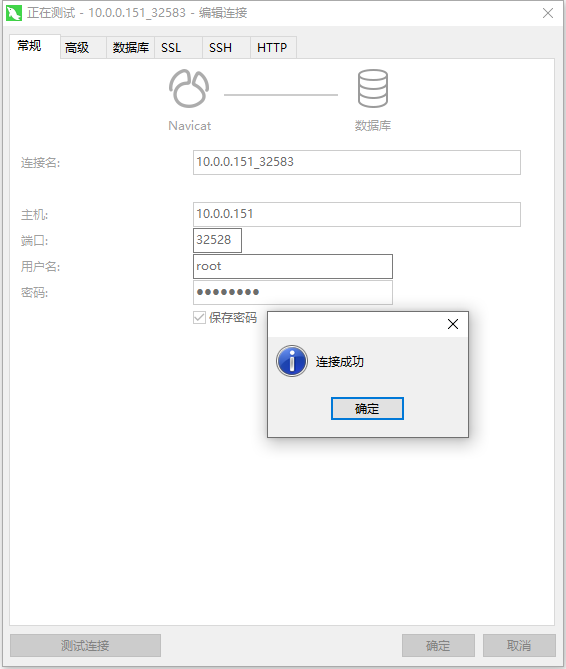

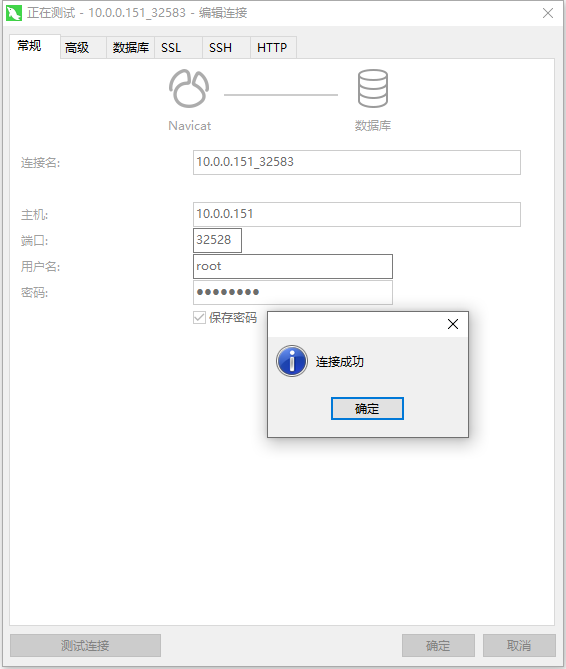

2.3 MySQL

Using a Secret resource:

[root@small-master ~/yml/zk-ka]# echo -n "shuli@123" |base64

c2h1bGlAMTIz

[root@small-master ~/yml/zk-ka]# echo "c2h1bGlAMTIz" |base64 -d

shuli@123[root@small-master ~/yml/zk-ka]#

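#Use a Secret to supply the MySQL root password. Note that Secret values are base64-encoded, which is an encoding, not encryption. Use echo -n when encoding the password so that no trailing newline is added. Otherwise the value passed to the container ends with a newline and login fails.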
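The effect of the trailing newline is easy to see by encoding the password both ways. The sketch below uses printf, which behaves the same in every POSIX shell (unlike `echo -n`). The kubectl alternative in the comment lets kubectl do the encoding itself. `--dry-run=client` needs kubectl 1.18 or later.

```shell
#!/bin/sh
# Why "echo -n" matters: a trailing newline becomes part of the encoded secret.
printf '%s' 'shuli@123' | base64     # c2h1bGlAMTIz      (no newline, correct)
printf '%s\n' 'shuli@123' | base64   # c2h1bGlAMTIzCg==  (newline encoded as "Cg==")

# kubectl can do the encoding itself, which avoids the newline problem entirely:
#   kubectl create secret generic mysql-secret \
#     --from-literal=MYSQL_ROOT_PASSWORD='shuli@123' --dry-run=client -o yaml
```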

[root@small-master ~/yml/mysql]# cat mysql-secret.yml

kind: Secret
apiVersion: v1
metadata:
  name: mysql-secret
data:
  MYSQL_ROOT_PASSWORD: c2h1bGlAMTIz
type: Opaque

#Mount the MySQL configuration file from a ConfigMap

[root@small-master ~/yml/mysql]# cat mysql-cm.yml

kind: ConfigMap
apiVersion: v1
metadata:
  name: mysql-cnf
data:
  custom.cnf: |-
    [mysqld]
    #performance settings
    lock_wait_timeout = 3600
    open_files_limit = 65535
    back_log = 1024
    max_connections = 512
    max_connect_errors = 1000000
    table_open_cache = 1024
    table_definition_cache = 1024
    thread_stack = 512K
    sort_buffer_size = 4M
    join_buffer_size = 4M
    read_buffer_size = 8M
    read_rnd_buffer_size = 4M
    bulk_insert_buffer_size = 64M
    thread_cache_size = 768
    interactive_timeout = 600
    wait_timeout = 600
    tmp_table_size = 32M
    max_heap_table_size = 32M
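A quick sanity check on these settings. The per-session buffers (sort, join, read and read_rnd, plus the thread stack) are allocated per connection, so multiplying their sum by max_connections gives a rough worst case. This is only a heuristic upper bound. MySQL allocates these buffers on demand, and some can be allocated more than once per query. Global memory such as the InnoDB buffer pool is not included.

```shell
#!/bin/sh
# Rough worst-case per-connection buffer memory for custom.cnf above (values in KiB).
sort_buffer=4096       # sort_buffer_size = 4M
join_buffer=4096       # join_buffer_size = 4M
read_buffer=8192       # read_buffer_size = 8M
read_rnd_buffer=4096   # read_rnd_buffer_size = 4M
thread_stack=512       # thread_stack = 512K
max_connections=512

per_conn_kib=$((sort_buffer + join_buffer + read_buffer + read_rnd_buffer + thread_stack))
total_mib=$((per_conn_kib * max_connections / 1024))
echo "per connection: ${per_conn_kib} KiB, all connections: ${total_mib} MiB"
# Prints: per connection: 20992 KiB, all connections: 10496 MiB
```

Compare this with the 2Gi memory limit set on the MySQL container in the StatefulSet. Roughly 10 GiB of potential session buffers suggests lowering max_connections or the buffer sizes if many concurrent connections are expected.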

#Create a headless Service for the StatefulSet

[root@small-master ~/yml/mysql]# cat mysql-headless.yml

kind: Service
apiVersion: v1
metadata:
  name: mysql-headless
  labels:
    app: mysql
spec:
  ports:
    - name: tcp-mysql
      protocol: TCP
      port: 3306
      targetPort: 3306
  selector:
    app: mysql
  clusterIP: None
  type: ClusterIP

#Create a NodePort Service for external access

[root@small-master ~/yml/mysql]# cat mysql-svc.yml

kind: Service
apiVersion: v1
metadata:
  name: mysql-external
  labels:
    app: mysql-external
spec:
  ports:
    - name: tcp-mysql-external
      protocol: TCP
      port: 3306
      targetPort: 3306
  selector:
    app: mysql
  type: NodePort

#Deploy MySQL as a StatefulSet

[root@small-master ~/yml/mysql]# cat mysql-sts.yml

kind: StatefulSet
apiVersion: apps/v1
metadata:
  name: mysql
  labels:
    app: mysql
spec:
  replicas: 1
  selector:
    matchLabels:
      app: mysql
  template:
    metadata:
      labels:
        app: mysql
    spec:
      volumes:
        - name: host-time
          hostPath:
            path: /etc/localtime
            type: ''
        - name: volume-cnf
          configMap:
            name: mysql-cnf
            items:
              - key: custom.cnf
                path: custom.cnf
            defaultMode: 420
      containers:
        - name: lstack-mysql
          image: 'harbor.ans.cn/saas/mysql:5.7.38'
          ports:
            - name: tcp-mysql
              containerPort: 3306
              protocol: TCP
          env:
            - name: MYSQL_ROOT_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: mysql-secret
                  key: MYSQL_ROOT_PASSWORD
          livenessProbe:
            tcpSocket:
              port: 3306
            initialDelaySeconds: 3
            periodSeconds: 5
          readinessProbe:
            tcpSocket:
              port: 3306
            initialDelaySeconds: 3
            periodSeconds: 5
          resources:
            limits:
              cpu: '1'
              memory: '2Gi'
            requests:
              cpu: '0.25'
              memory: '256Mi'
          volumeMounts:
            - name: host-time
              mountPath: /etc/localtime
            - name: data
              mountPath: /var/lib/mysql
            - name: volume-cnf
              mountPath: /etc/mysql/conf.d/custom.cnf
              subPath: custom.cnf
  serviceName: mysql-headless
  volumeClaimTemplates:
    - metadata:
        name: data
      spec:
        accessModes: [ "ReadWriteOnce" ]
        resources:
          requests:
            storage: 10Gi
        storageClassName: managed-nfs-storage

#Verify:

[root@small-master ~/yml/mysql]# kubectl exec -it mysql-0 -- bash

bash-4.2# mysql -uroot -pshuli@123

mysql: [Warning] Using a password on the command line interface can be insecure.

mysql>

3. Script to expose services

[root@small-master ~/yml/redis]# cat service.sh

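#!/bin/bash
#author: ans
#function: expose cluster service ports
#Usage: sh service.sh <pod-name keyword> <port> [namespace]; the namespace defaults to default
service=$1
port=$2
namespace=${3:-default}
if [ -z "$service" ]; then
    echo "Pass a pod-name keyword as the first argument, e.g. redis, kafka, mysql"
elif [ -z "$port" ]; then
    echo "Pass the service port as the second argument, e.g. 3306, 6379"
else
    for i in $(kubectl get po -n "$namespace" --no-headers | grep "$service" | awk '{print $1}')
    do
        # label each pod with its own name so that its Service selects exactly that pod
        kubectl label pod -n "$namespace" "$i" name="$i" --overwrite
        kubectl expose po -n "$namespace" "$i" --port="$port" --target-port="$port" --name="$i" --selector=name="$i" --type=NodePort
    done
fi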

#Run the script

[root@small-master ~/yml/redis]# sh service.sh redis 6379 redis

pod/redis-0 labeled

service/redis-0 exposed

pod/redis-1 labeled

service/redis-1 exposed

pod/redis-2 labeled

service/redis-2 exposed
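service.sh selects pods with `grep $service`, which also matches any pod whose name merely contains the keyword. A hypothetical `redis-exporter-...` pod would match `redis`, for example. The sketch below is stricter and keeps only StatefulSet pods of the form `<name>-<ordinal>`. `sts_pods` is a hypothetical helper.

```shell
#!/bin/sh
# Keep only pod names of the form <statefulset>-<ordinal>, e.g. redis-0, redis-1.
# sts_pods is a hypothetical helper; pod names are read one per line from stdin.
sts_pods() {
  grep -E "^$1-[0-9]+\$"
}

# Possible use inside service.sh:
#   kubectl get po -n "$namespace" --no-headers -o custom-columns=NAME:.metadata.name \
#     | sts_pods "$service"
```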

浙公网安备 33010602011771号
