SUSE CaaS Platform 4 - Using Ceph RBD as a Pod Storage Volume

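Network storage volume series

(2) SUSE CaaS Platform 4 - Installation and Deployment

(3) SUSE CaaS Platform 4 - Installation Tips

(4) SUSE CaaS Platform 4 - Using Ceph RBD as a Pod Storage Volume

(5) SUSE CaaS Platform 4 - Using Ceph RBD as Persistent Storage (Static)

(6) SUSE CaaS Platform 4 - Using Ceph RBD as Persistent Storage (Dynamic)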

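RBD Storage Volumes

CaaSP 4 supports many volume types. This article uses Ceph RBD (RADOS Block Device), mainly for these reasons:

- Ceph has been developed over many years, is very mature, and has an active community;

- Ceph provides object storage, block storage, and file system interfaces in one system.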

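Prerequisites

1. Environment

- Operating system: SLES 15 SP1, installed without swap

- Kernel version: 4.12.14-197.18-default

- Kubernetes version: CaaSP 4, v1.15.2

- Ceph: SUSE Enterprise Storage 6

- VMware Workstation 14

2. References for building the virtualized environment and installing the systems:

- SUSE Storage6 environment setup, step by step - Win10 + VMware WorkStation

- SUSE Linux Enterprise 15 SP1 system installation

- SUSE Ceph quick deployment - Storage6

- SUSE CaaS Platform 4 - Installation and Deployment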

3. Goal

Configure a Pod to use an RBD storage volume.

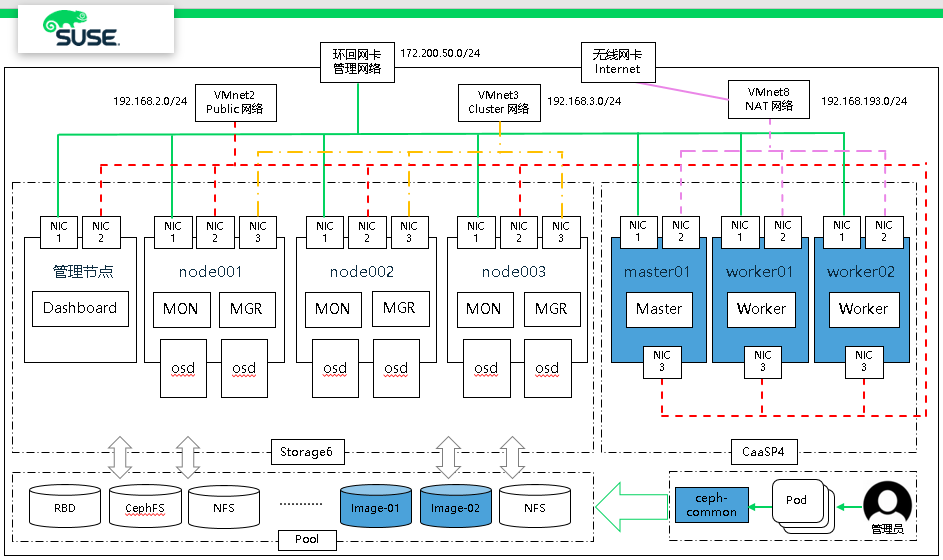

Figure 1: Environment architecture

1. Install ceph-common on all nodes

# zypper -n in ceph-common

Copy ceph.conf to the worker nodes:

# scp admin:/etc/ceph/ceph.conf /etc/ceph/
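With several worker nodes, the two steps above can be repeated per node from the admin node instead. The sketch below uses hypothetical helpers (prepare_nodes, run_or_echo); it assumes it runs on the admin node with password-less SSH to each worker, and the node names in the usage line are placeholders. Setting DRY_RUN=1 only prints the commands.

```shell
# Hypothetical helper: run a command, or only print it when DRY_RUN=1.
run_or_echo() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Hypothetical helper: install ceph-common on each node and push ceph.conf to it.
# Assumes it is run on the admin node with password-less SSH to every node.
prepare_nodes() {
  for node in "$@"; do
    run_or_echo ssh "$node" zypper -n in ceph-common
    run_or_echo scp /etc/ceph/ceph.conf "$node:/etc/ceph/"
  done
}
```

For example, DRY_RUN=1 prepare_nodes worker01 worker02 prints the four commands without executing them; drop DRY_RUN to run them.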

2. Create the pool caasp4 with 128 placement groups

# ceph osd pool create caasp4 128
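The 128 above is the pool's placement-group (PG) count; the article does not say how it was chosen. A common rule of thumb from the Ceph documentation of this era is (number of OSDs × 100) / replica count, rounded to a power of two. The hypothetical pg_count helper below rounds up, which is a simplification of that guidance. On Nautilus-based releases such as Storage 6, a pool intended for RBD is usually also initialized with rbd pool init caasp4, which tags it for the rbd application.

```shell
# Hypothetical helper: rule-of-thumb PG count for a single pool.
# target = OSDs * 100 / replica count, then rounded UP to a power of two
# (a simplification of the usual "nearest power of two" guidance).
pg_count() {
  osds=$1
  replicas=$2
  target=$(( osds * 100 / replicas ))
  pgs=1
  while [ "$pgs" -lt "$target" ]; do
    pgs=$(( pgs * 2 ))
  done
  echo "$pgs"
}
```

With an assumed 3 OSDs and 3 replicas, pg_count 3 3 prints 128, the value used above; the article does not state its actual OSD count.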

3. Create a key for client.caasp4 and save the keyring in /etc/ceph/

# ceph auth get-or-create client.caasp4 mon 'allow r' \
    osd 'allow rwx pool=caasp4' -o /etc/ceph/caasp4.keyring

4. Create a 2 GB RBD image (the -s size is given in MB)

# rbd create caasp4/ceph-image-test -s 2048

5. List the Ceph cluster keys and produce a base64-encoded key

# ceph auth list

.......
client.admin
    key: AQA9w4VdAAAAABAAHZr5bVwkALYo6aLVryt7YA==
    caps: [mds] allow *
    caps: [mgr] allow *
    caps: [mon] allow *
    caps: [osd] allow *
.......
client.caasp4
    key: AQD1VJddM6QIJBAAlDbIWRT/eiGhG+aD8SB+5A==
    caps: [mon] allow r
    caps: [osd] allow rwx pool=caasp4
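Only the client.caasp4 key is needed for the Secret. ceph auth get-key client.caasp4 prints just that key, which avoids copying it by hand. If you only have saved ceph auth list output like the listing above, the hypothetical awk helper below picks out one entity's key; it assumes entity names start at column 0 and their key: lines are indented, as shown.

```shell
# Hypothetical helper: read `ceph auth list` output on stdin and print the
# key of the entity named in $1 (for example client.caasp4).
auth_key_for() {
  awk -v entity="$1" '
    /^[^ \t]/ { current = $1 }                           # unindented line: entity name
    current == entity && $1 == "key:" { print $2; exit } # first key of that entity
  '
}
```

Usage: ceph auth list | auth_key_for client.caasp4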

Base64-encode the client.caasp4 key:

# echo AQD1VJddM6QIJBAAlDbIWRT/eiGhG+aD8SB+5A== | base64

QVFEMVZKZGRNNlFJSkJBQWxEYklXUlQvZWlHaEcrYUQ4U0IrNUE9PQo=
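echo appends a newline, so the value above encodes the key plus a trailing newline, which is why it ends in PQo= rather than PQ==. The Pod in this walkthrough still started, so the newline appears to have been tolerated here, but encoding the exact key avoids relying on that. A minimal sketch using printf (encode_key is a hypothetical name):

```shell
# Hypothetical helper: base64-encode a Ceph key exactly, without the
# trailing newline that `echo` would add.
encode_key() {
  printf '%s' "$1" | base64
}
```

encode_key AQD1VJddM6QIJBAAlDbIWRT/eiGhG+aD8SB+5A== prints QVFEMVZKZGRNNlFJSkJBQWxEYklXUlQvZWlHaEcrYUQ4U0IrNUE9PQ==.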

6. On the master node, create a Secret resource containing the base64-encoded key

# vi ceph-secret-test.yaml

apiVersion: v1
kind: Secret
metadata:
  name: ceph-secret-test
data:
  key: QVFEMVZKZGRNNlFJSkJBQWxEYklXUlQvZWlHaEcrYUQ4U0IrNUE9PQo=

# kubectl create -f ceph-secret-test.yaml

secret/ceph-secret-test created

# kubectl get secrets ceph-secret-test
NAME               TYPE     DATA   AGE
ceph-secret-test   Opaque   1      14s
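Instead of pasting the encoded value into a file by hand, the manifest can be generated from the raw key. The hypothetical helper below (ceph_secret_manifest) prints a Secret of the same shape; it encodes the key without a trailing newline, so its key: value ends in PQ== rather than PQo=. kubectl can also do the encoding itself: kubectl create secret generic ceph-secret-test --from-literal=key="$(ceph auth get-key client.caasp4)" creates an equivalent Opaque Secret.

```shell
# Hypothetical helper: print a Secret manifest for a raw (not yet encoded) Ceph key.
# $1 = Secret name, $2 = key as printed by `ceph auth get-key`.
ceph_secret_manifest() {
  name=$1
  encoded=$(printf '%s' "$2" | base64 | tr -d '\n')  # encode the key alone, no newline
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: $name
type: Opaque
data:
  key: $encoded
EOF
}
```

Usage: ceph_secret_manifest ceph-secret-test "$(ceph auth get-key client.caasp4)" | kubectl create -f -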

7. Create a Pod that uses the storage volume

# mkdir /root/ceph_rbd_pod

# vim /root/ceph_rbd_pod/ceph-rbd-pod.yaml

apiVersion: v1
kind: Pod
metadata:
  name: vol-rbd-pod
spec:
  containers:
  - name: ceph-busybox
    image: busybox
    command: ["sleep", "60000"]
    volumeMounts:
    - name: ceph-vol-test
      mountPath: /usr/share/busybox
      readOnly: false
  volumes:
  - name: ceph-vol-test
    rbd:
      monitors:
      - 192.168.2.40:6789
      - 192.168.2.41:6789
      - 192.168.2.42:6789
      pool: caasp4
      image: ceph-image-test
      user: caasp4
      secretRef:
        name: ceph-secret-test
      fsType: ext4
      readOnly: false
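The article does not show the command that submits this manifest; presumably it is kubectl create -f /root/ceph_rbd_pod/ceph-rbd-pod.yaml, as in step 6. To reuse the spec for other images, it can also be generated. The sketch below (rbd_pod_manifest, hypothetical) keeps the pool, Ceph user and Secret name from this article fixed, takes the Pod name, RBD image and monitor addresses as arguments, and omits the two readOnly: false lines because false is the default.

```shell
# Hypothetical helper: print a Pod manifest like the one above.
# $1 = Pod name, $2 = RBD image, remaining args = monitor addresses (host:port).
# Pool, Ceph user and Secret name are fixed to this article's values.
rbd_pod_manifest() {
  pod=$1
  image=$2
  shift 2
  cat <<EOF
apiVersion: v1
kind: Pod
metadata:
  name: $pod
spec:
  containers:
  - name: ceph-busybox
    image: busybox
    command: ["sleep", "60000"]
    volumeMounts:
    - name: ceph-vol-test
      mountPath: /usr/share/busybox
  volumes:
  - name: ceph-vol-test
    rbd:
      monitors:
EOF
  for mon in "$@"; do
    printf '      - %s\n' "$mon"   # one list entry per monitor
  done
  cat <<EOF
      pool: caasp4
      image: $image
      user: caasp4
      secretRef:
        name: ceph-secret-test
      fsType: ext4
EOF
}
```

Usage: rbd_pod_manifest vol-rbd-pod ceph-image-test 192.168.2.40:6789 192.168.2.41:6789 192.168.2.42:6789 | kubectl create -f -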

8. Check the Pod status and mounts

# kubectl get pods
NAME          READY   STATUS    RESTARTS   AGE
vol-rbd-pod   1/1     Running   0          22s

# kubectl get pods -o wide
NAME          READY   STATUS    RESTARTS   AGE   IP            NODE       NOMINATED NODE   READINESS GATES
vol-rbd-pod   1/1     Running   0          27s   10.244.2.59   worker02   <none>           <none>
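The NODE column shows where the RBD image is mapped, which is the node to inspect in step 9. kubectl get pod vol-rbd-pod -o jsonpath='{.spec.nodeName}' prints it directly; if you are working from the wide listing instead, the hypothetical awk helper below reads the seventh column, which assumes the column layout shown above (RESTARTS as a single field).

```shell
# Hypothetical helper: read `kubectl get pods -o wide` output on stdin and print
# the NODE column (7th field) of the Pod named $1. Relies on the layout shown above.
pod_node() {
  awk -v pod="$1" 'NR > 1 && $1 == pod { print $7 }'
}
```

Usage: kubectl get pods -o wide | pod_node vol-rbd-pod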

# kubectl describe pods vol-rbd-pod
Containers:
  ceph-busybox:
    .....
    Mounts:    # mounts
      /usr/share/busybox from ceph-vol-test (rw)
      /var/run/secrets/kubernetes.io/serviceaccount from default-token-4hslq (ro)
.....
Volumes:
  ceph-vol-test:
    Type:          RBD (a Rados Block Device mount on the host that shares a pod's lifetime)
    CephMonitors:  [192.168.2.40:6789 192.168.2.41:6789 192.168.2.42:6789]
    RBDImage:      ceph-image-test      # RBD image name
    FSType:        ext4                 # file system type
    RBDPool:       caasp4               # pool
    RadosUser:     caasp4               # user
    Keyring:       /etc/ceph/keyring    # default path; overridden by SecretRef
    SecretRef:     &LocalObjectReference{Name:ceph-secret-test,}
    ReadOnly:      false
  default-token-4hslq:
    Type:        Secret (a volume populated by a Secret)
    SecretName:  default-token-4hslq
    Optional:    false
.....
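To confirm from inside the container that /usr/share/busybox really is an ext4 file system on an RBD device, /proc/mounts can be read through kubectl exec (the busybox image includes cat). The helper below is a hypothetical check; that the container's /proc/mounts lists the source as /dev/rbdN is an assumption, not something the article shows.

```shell
# Hypothetical helper: succeed if /proc/mounts-style input on stdin shows mount
# point $1 backed by a /dev/rbdN device with file system type $2.
has_rbd_mount() {
  awk -v mp="$1" -v fs="$2" '
    $2 == mp && $3 == fs && $1 ~ /^\/dev\/rbd[0-9]+$/ { found = 1 }
    END { exit !found }   # exit status 0 only when a matching line was seen
  '
}
```

Usage: kubectl exec vol-rbd-pod -- cat /proc/mounts | has_rbd_mount /usr/share/busybox ext4 && echo "RBD volume mounted"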

9. Check the RBD mapping on worker02

worker02:~ # rbd showmapped

id pool   namespace image           snap device
0  caasp4           ceph-image-test -    /dev/rbd0
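On a node with many mapped images, the device for one image can be picked out of rbd showmapped. The hypothetical awk helper below matches the pool and image name and prints the last column; it scans the middle fields for the image name because the namespace column is empty here and therefore produces no field. rbd showmapped also accepts --format json, which may be more robust for scripting.

```shell
# Hypothetical helper: read `rbd showmapped` output on stdin and print the
# device mapped for pool $1 / image $2 (nothing if it is not mapped).
rbd_device_for_image() {
  awk -v pool="$1" -v image="$2" '
    NR > 1 && $2 == pool {
      for (i = 3; i < NF; i++)          # image column shifts when namespace is empty
        if ($i == image) { print $NF; exit }
    }'
}
```

Usage: rbd showmapped | rbd_device_for_image caasp4 ceph-image-test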
