SUSE Ceph Quick Deployment - Storage6

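SUSE Storage learning series

(1) SUSE Storage6 lab environment setup, step by step - Win10 + VMware Workstation

(2) SUSE Linux Enterprise 15 SP1 system installation

(4) SUSE Ceph: adding nodes, removing nodes, deleting OSD disks, and related operations - Storage6

(5) Understanding the DeepSea and Salt deployment tools in depth - Storage6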

一、安装环境描述

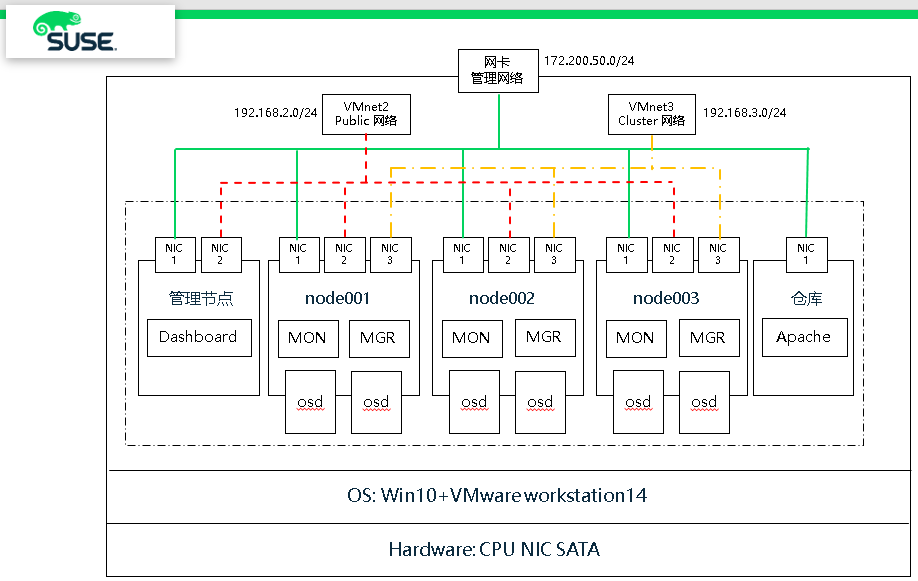

整个环境采用VMware workstation搭建,一共5台虚拟机,所有虚拟机安装SLES15SP1系统,其中一台安装apache作为仓库使用,剩余4台用于搭建Storage6集群。

(1) Hardware environment:

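- One laptop with sufficient CPU, memory, and disk space

- A loopback interface created on the laptop for the distributed storage management network

(2) Software environment:

- Laptop operating system: Win10

- Virtualization: VMware Workstation 14 Pro

VMnet2 and VMnet3 serve as the public and cluster networks of the distributed storage

- Guest operating system: SLES15SP1 (enterprise edition)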

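1. Network

| Hostname | Public network | Management network | Cluster network | Description |
|---|---|---|---|---|
| smt | | 172.200.50.19 | | SUSE repository |
| admin | 192.168.2.39 | 172.200.50.39 | 192.168.3.39 | Admin host |
| node001 | 192.168.2.41 | 172.200.50.41 | 192.168.3.41 | MON |
| node002 | 192.168.2.42 | 172.200.50.42 | 192.168.3.42 | MON |
| node003 | 192.168.2.43 | 172.200.50.43 | 192.168.3.43 | MON |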
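The addressing above follows a simple scheme: a host uses the same last octet on all three networks. As a minimal sketch of that scheme, the helper below derives the three addresses from the octet. The function name `host_addrs` is made up for illustration, and smt is an exception because it only has a management address.

```shell
#!/bin/sh
# Hypothetical helper: print the three addresses of a cluster host,
# given the last octet it uses on every network.
host_addrs() {
    octet=$1
    printf 'public=192.168.2.%s management=172.200.50.%s cluster=192.168.3.%s\n' \
        "$octet" "$octet" "$octet"
}

# The admin host uses octet 39 on all three networks.
host_addrs 39
```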

2. Disks

Each node has two OSD disks and one NVMe disk.

    # lsblk
    NAME            MAJ:MIN RM  SIZE RO TYPE MOUNTPOINT
    sda               8:0    0   20G  0 disk              # operating system disk
    ├─sda1            8:1    0    1G  0 part /boot
    └─sda2            8:2    0   19G  0 part
      ├─vgoo-lvroot 254:0    0   17G  0 lvm  /
      └─vgoo-lvswap 254:1    0    2G  0 lvm  [SWAP]
    sdb               8:16   0   10G  0 disk              # OSD data disk
    sdc               8:32   0   10G  0 disk              # OSD data disk
    nvme0n1         259:0    0   20G  0 disk              # WAL / DB

II. Initial operating system setup

1. Set a temporary IP address

    ip link set eth0 up
    ip addr add 172.200.50.50/24 dev eth0

Once the host is reachable, set the permanent address:

    yast lan list
    yast lan edit id=0 ip=192.168.2.40 netmask=255.255.255.0

2. Set bash environment variables and aliases

    # vim /root/.bash_profile
    alias cd..='cd ..'
    alias dir='ls -l'
    alias egrep='egrep --color=auto'
    alias fgrep='fgrep --color=auto'
    alias grep='grep --color=auto'
    alias l='ls -alF'
    alias la='ls -la'
    alias ll='ls -l'
    alias ls-l='ls -l'

3. Configure the after.local file

    touch /etc/init.d/after.local
    chmod 744 /etc/init.d/after.local

Copy the following content into the file:

    #! /bin/sh
    #
    # Copyright (c) 2010 SuSE LINUX Products GmbH, Germany.  All rights reserved.
    #
    # Author: Werner Fink, 2010
    #
    # /etc/init.d/after.local
    #
    # script with local commands to be executed from init after all scripts
    # of a runlevel have been executed.
    #
    # Here you should add things, that should happen directly after
    # runlevel has been reached.
    #

4. Repository configuration (all nodes and admin)

    ## Pool
    zypper ar http://172.200.50.19/repo/SUSE/Products/SLE-Product-SLES/15-SP1/x86_64/product/ SLE-Product-SLES15-SP1-Pool
    zypper ar http://172.200.50.19/repo/SUSE/Products/SLE-Module-Basesystem/15-SP1/x86_64/product/ SLE-Module-Basesystem-SLES15-SP1-Pool
    zypper ar http://172.200.50.19/repo/SUSE/Products/SLE-Module-Server-Applications/15-SP1/x86_64/product/ SLE-Module-Server-Applications-SLES15-SP1-Pool
    zypper ar http://172.200.50.19/repo/SUSE/Products/SLE-Module-Legacy/15-SP1/x86_64/product/ SLE-Module-Legacy-SLES15-SP1-Pool
    zypper ar http://172.200.50.19/repo/SUSE/Products/Storage/6/x86_64/product/ SUSE-Enterprise-Storage-6-Pool
    ## Update
    zypper ar http://172.200.50.19/repo/SUSE/Updates/SLE-Product-SLES/15-SP1/x86_64/update/ SLE-Product-SLES15-SP1-Updates
    zypper ar http://172.200.50.19/repo/SUSE/Updates/SLE-Module-Basesystem/15-SP1/x86_64/update/ SLE-Module-Basesystem-SLES15-SP1-Upadates
    zypper ar http://172.200.50.19/repo/SUSE/Updates/SLE-Module-Server-Applications/15-SP1/x86_64/update/ SLE-Module-Server-Applications-SLES15-SP1-Upadates
    zypper ar http://172.200.50.19/repo/SUSE/Updates/SLE-Module-Legacy/15-SP1/x86_64/update/ SLE-Module-Legacy-SLES15-SP1-Updates
    zypper ar http://172.200.50.19/repo/SUSE/Updates/Storage/6/x86_64/update/ SUSE-Enterprise-Storage-6-Updates

List the repositories to confirm that all ten were added:

    # zypper lr
    #  | Alias                                              | Name
    ---+----------------------------------------------------+----------------------------------------------------
     1 | SLE-Module-Basesystem-SLES15-SP1-Pool              | SLE-Module-Basesystem-SLES15-SP1-Pool
     2 | SLE-Module-Basesystem-SLES15-SP1-Upadates          | SLE-Module-Basesystem-SLES15-SP1-Upadates
     3 | SLE-Module-Legacy-SLES15-SP1-Pool                  | SLE-Module-Legacy-SLES15-SP1-Pool
     4 | SLE-Module-Legacy-SLES15-SP1-Updates               | SLE-Module-Legacy-SLES15-SP1-Updates
     5 | SLE-Module-Server-Applications-SLES15-SP1-Pool     | SLE-Module-Server-Applications-SLES15-SP1-Pool
     6 | SLE-Module-Server-Applications-SLES15-SP1-Upadates | SLE-Module-Server-Applications-SLES15-SP1-Upadates
     7 | SLE-Product-SLES15-SP1-Pool                        | SLE-Product-SLES15-SP1-Pool
     8 | SLE-Product-SLES15-SP1-Updates                     | SLE-Product-SLES15-SP1-Updates
     9 | SUSE-Enterprise-Storage-6-Pool                     | SUSE-Enterprise-Storage-6-Pool
    10 | SUSE-Enterprise-Storage-6-Updates                  | SUSE-Enterprise-Storage-6-Updates
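Typing ten nearly identical `zypper ar` lines by hand invites typos. The sketch below generates the same ten commands from a short list of path and alias pairs and only prints them, so they can be reviewed first. The function name `repo_commands` is made up for illustration, and the generated aliases all end in `-Pool` or `-Updates`, so two of them differ in spelling from the hand-typed aliases above.

```shell
#!/bin/sh
# Hypothetical helper: print one Pool and one Updates "zypper ar" command
# per product, for the repository base URL given as the first argument.
repo_commands() {
    base=$1
    while read -r path stem; do
        echo "zypper ar $base/Products/$path/x86_64/product/ $stem-Pool"
        echo "zypper ar $base/Updates/$path/x86_64/update/ $stem-Updates"
    done <<'EOF'
SLE-Product-SLES/15-SP1 SLE-Product-SLES15-SP1
SLE-Module-Basesystem/15-SP1 SLE-Module-Basesystem-SLES15-SP1
SLE-Module-Server-Applications/15-SP1 SLE-Module-Server-Applications-SLES15-SP1
SLE-Module-Legacy/15-SP1 SLE-Module-Legacy-SLES15-SP1
Storage/6 SUSE-Enterprise-Storage-6
EOF
}

# Dry run: print the commands for the repository server used in this article.
repo_commands http://172.200.50.19/repo/SUSE
```

After checking the printed commands, the output can be piped to `sh` on each node to actually add the repositories.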

5. Install basic packages (all nodes and admin)

    zypper in -y -t pattern yast2_basis base
    zypper in -y net-tools vim man sudo tuned irqbalance
    zypper in -y ethtool rsyslog iputils less supportutils-plugin-ses
    zypper in -y net-tools-deprecated tree wget

6. Disable IPv6 (all nodes and admin)

    # vim /etc/sysctl.conf
    # Disable IPv6
    net.ipv6.conf.all.disable_ipv6 = 1
    net.ipv6.conf.default.disable_ipv6 = 1
    net.ipv6.conf.lo.disable_ipv6 = 1
    # With 128 GB of RAM, reserve 2 GB for the system
    vm.min_free_kbytes = 2097152
    # Raise the thread (PID) limit to the maximum
    kernel.pid_max = 4194303

Apply the settings:

    # sysctl -p
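The value of `vm.min_free_kbytes` is expressed in KiB, so a 2 GB reservation works out to 2 × 1024 × 1024. A small sketch of that arithmetic, with `reserve_gb` as an illustrative variable name:

```shell
#!/bin/sh
# Convert a reservation given in GB into the KiB value sysctl expects.
reserve_gb=2
min_free_kbytes=$((reserve_gb * 1024 * 1024))
echo "vm.min_free_kbytes = $min_free_kbytes"
```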

7. Apply the network tuning profile (all nodes and admin)

    # tuned-adm profile throughput-performance
    # tuned-adm active
    # systemctl start tuned.service
    # systemctl enable tuned.service

8. Edit the hosts file (all nodes and admin)

    # vim /etc/hosts
    192.168.2.39 admin.example.com admin
    192.168.2.40 node001.example.com node001
    192.168.2.41 node002.example.com node002
    192.168.2.42 node003.example.com node003
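Because the same file has to be edited on every host, a quick check that no name was forgotten can save time later. The sketch below reports which of the given names have no entry in a hosts file; the function name `missing_hosts` and the name `node004` are made up for illustration.

```shell
#!/bin/sh
# Hypothetical helper: print every name that has no entry in the hosts file.
# Usage: missing_hosts FILE NAME...
missing_hosts() {
    hosts_file=$1
    shift
    for name in "$@"; do
        # A name counts as present if it equals any field after the address
        # on a line that is not a comment.
        if ! awk -v n="$name" '
            /^[[:space:]]*#/ { next }
            { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }' "$hosts_file"; then
            echo "$name"
        fi
    done
}

# Demo on a temporary copy of the entries shown above.
sample=$(mktemp)
cat > "$sample" <<'EOF'
192.168.2.39 admin.example.com admin
192.168.2.40 node001.example.com node001
192.168.2.41 node002.example.com node002
192.168.2.42 node003.example.com node003
EOF
missing_hosts "$sample" admin node001 node002 node003 node004
```

On a real host the call would be `missing_hosts /etc/hosts admin node001 node002 node003`, which prints nothing when every name is present.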

9. Update the operating system and reboot (all nodes and admin)

    # zypper ref
    # zypper -n update
    # reboot

III. Install the Storage6 cluster

1. Install Salt (admin node)

    zypper -n in deepsea
    systemctl restart salt-master.service
    systemctl enable salt-master.service
    systemctl status salt-master.service

On the OSD nodes and the admin node:

    zypper -n in salt-minion
    sed -i '17i\master: 192.168.2.39' /etc/salt/minion
    systemctl restart salt-minion.service
    systemctl enable salt-minion.service
    systemctl status salt-minion.service
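The `sed` command above inserts the `master:` line at a fixed line number, so running it twice adds the line twice. As an alternative sketch, the helper below sets the master only once: it rewrites an existing uncommented `master:` line or appends one if none exists. The function name `set_salt_master` is made up for illustration, and the demo works on a temporary file rather than on /etc/salt/minion.

```shell
#!/bin/sh
# Hypothetical helper: make sure CONF contains exactly one active
# "master: ADDRESS" line. Usage: set_salt_master CONF ADDRESS
set_salt_master() {
    conf=$1
    master=$2
    if grep -q '^master:' "$conf" 2>/dev/null; then
        # Rewrite the existing setting via a temporary file.
        sed "s/^master:.*/master: $master/" "$conf" > "$conf.tmp" &&
            mv "$conf.tmp" "$conf"
    else
        # No active setting yet: append one.
        echo "master: $master" >> "$conf"
    fi
}

# Demo on a temporary file; calling the helper twice leaves a single line.
demo=$(mktemp)
printf '#master: salt\n' > "$demo"
set_salt_master "$demo" 192.168.2.39
set_salt_master "$demo" 192.168.2.39
cat "$demo"
```

On a minion the call would be `set_salt_master /etc/salt/minion 192.168.2.39`, followed by the same `systemctl restart salt-minion.service`.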

Accept all key requests (admin node):

    salt-key
    salt-key --accept-all
    salt-key
    salt '*' test.ping

2. Configure the NTP service on the admin node

If no NTP server is available, the admin node acts as the NTP server by default.

Admin node:

    # vim /etc/chrony.conf
    # Sync to local clock (add the local clock as a time source)
    server 127.0.0.1
    allow 127.0.0.0/8
    allow 192.168.2.0/24
    allow 172.200.50.0/24
    local stratum 10

    systemctl restart chronyd.service
    systemctl enable chronyd.service
    systemctl status chronyd.service

    # chronyc sources
    210 Number of sources = 1
    MS Name/IP address         Stratum Poll Reach LastRx Last sample
    ===============================================================================
    ^* 127.127.1.0                  12   6    37    23  +1461ns[+3422ns] +/-  166us

    # chronyc -n sources -v

3. Change the DeepSea minion group (admin node)

    cp -p /srv/pillar/ceph/deepsea_minions.sls /tmp/
    sed -i "4c # deepsea_minions: 'G@deepsea:*'" /srv/pillar/ceph/deepsea_minions.sls
    sed -i "6c deepsea_minions: '*'" /srv/pillar/ceph/deepsea_minions.sls

4. Monitor from a second terminal (admin node)

This tool gives a detailed, real-time view of what is being executed while `salt-run state.orch` runs.

    # deepsea monitor

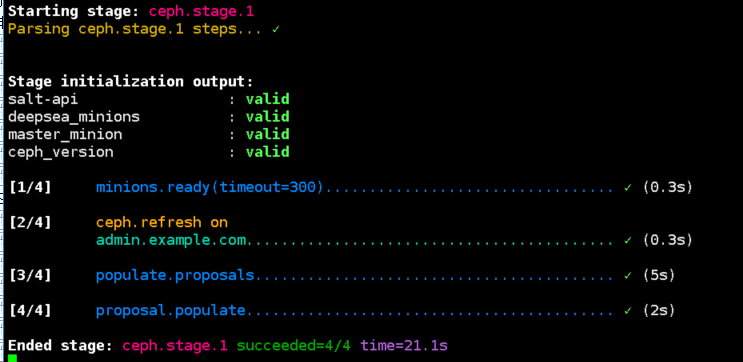

5. Apply patches and collect hardware information (admin node)

    salt-run state.orch ceph.stage.0
    salt-run state.orch ceph.stage.1

The following messages can be ignored:

    No minions matched the target. No command was sent, no jid was assigned.
    No minions matched the target. No command was sent, no jid was assigned.
    [ERROR ] Exception during resolving address: [Errno 2] Host name lookup failure
    [ERROR ] Exception during resolving address: [Errno 2] Host name lookup failure
    [WARNING ] /usr/lib/python3.6/site-packages/salt/grains/core.py:2827: DeprecationWarning: This server_id is computed nor by Adler32 neither by CRC32. Please use "server_id_use_crc" option and define algorithm you prefer ( default "Adler32"). The server_id will be computed with Adler32 by default.

GitHub issue: https://github.com/SUSE/DeepSea/issues/1593

6. Review the network configuration file (admin node)

    # vim /srv/pillar/ceph/proposals/config/stack/default/ceph/cluster.yml
    cluster_network: 192.168.3.0/24
    fsid: 10aca2da-ead5-438d-b104-da37870b50b8
    public_network: 192.168.2.0/24

7. Configure the cluster policy.cfg file (admin node)

(1) Copy the policy.cfg-rolebased template

    # ll /usr/share/doc/packages/deepsea/examples/
    total 12
    -rw-r--r-- 1 root root 329 Jun 13 16:00 policy.cfg-generic
    -rw-r--r-- 1 root root 489 Jun 13 16:00 policy.cfg-regex
    -rw-r--r-- 1 root root 577 Jun 13 16:00 policy.cfg-rolebased

    # cp /usr/share/doc/packages/deepsea/examples/policy.cfg-rolebased /srv/pillar/ceph/proposals/policy.cfg

(2) Edit the copied template (admin node)

    # vim /srv/pillar/ceph/proposals/policy.cfg
    ## Cluster Assignment
    cluster-ceph/cluster/*.sls

    ## Roles
    # ADMIN
    role-master/cluster/admin*.sls
    role-admin/cluster/admin*.sls

    # Monitoring
    role-prometheus/cluster/admin*.sls
    role-grafana/cluster/admin*.sls

    # MON
    role-mon/cluster/node00[1-3]*.sls

    # MGR (mgrs are usually colocated with mons)
    role-mgr/cluster/node00[1-3]*.sls

    # COMMON
    config/stack/default/global.yml
    config/stack/default/ceph/cluster.yml

    # Storage
    # Assign the storage role
    role-storage/cluster/node00*.sls

(3) Run stage 2 (admin node)

    # salt-run state.orch ceph.stage.2
    # salt '*' pillar.items        # check that the settings are correct

Check in particular that NTP, the role assignments, and the public network are defined correctly:

    public_network: 192.168.2.0/24
    roles:
        - mon
        - mgr
        - storage
    time_server: admin.example.com
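Scanning the full `pillar.items` output by eye is error-prone, so the check can be scripted. The sketch below reads pillar output on standard input and reports which of the three keys named above are missing. The function name `check_pillar` is made up for illustration, and it only tests that a key is present, not that its value is right.

```shell
#!/bin/sh
# Hypothetical helper: read pillar output on stdin and list the expected
# keys that do not appear in it. Returns non-zero if any key is missing.
check_pillar() {
    input=$(cat)
    status=0
    for key in public_network roles time_server; do
        if ! printf '%s\n' "$input" | grep -q "^ *$key:"; then
            echo "missing: $key"
            status=1
        fi
    done
    return $status
}

# Demo with the excerpt shown above; all three keys are present.
check_pillar <<'EOF' && echo "pillar looks complete"
public_network: 192.168.2.0/24
roles:
    - mon
    - mgr
    - storage
time_server: admin.example.com
EOF
```

Against a live cluster, the input would come from a command such as `salt 'node001*' pillar.items | check_pillar`.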

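(4) Adjust the validation for a three-node cluster (admin node)

This test environment uses only three OSD nodes, whereas the official guidance for production is four or more. The following command lowers the minimum enforced by the validation runner from 4 to 2:

    # sed -i 's/if (not self.in_dev_env and len(storage) < 4/if (not self.in_dev_env and len(storage) < 2/g' /srv/modules/runners/validate.py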

8. Define and create the OSD disks

(1) Back up the configuration file

    # cp /srv/salt/ceph/configuration/files/drive_groups.yml /srv/salt/ceph/configuration/files/drive_groups.yml.bak

(2) Check the disks on the OSD nodes (node001, node002, node003)

    # ceph-volume inventory
     stderr: blkid: error: /dev/sr0: No medium found

    Device Path      Size         rotates  available  Model name
    /dev/nvme0n1     20.00 GB     False    True       VMware Virtual NVMe Disk
    /dev/sdb         10.00 GB     True     True       VMware Virtual S
    /dev/sdc         10.00 GB     True     True       VMware Virtual S
    /dev/sda         20.00 GB     True     False      VMware Virtual S
    /dev/sr0         1024.00 MB   True     False      VMware SATA CD01

(3) Edit the configuration file

    # vim /srv/salt/ceph/configuration/files/drive_groups.yml
    drive_group_hdd_nvme:
      # Target the nodes that hold the storage role
      target: 'I@roles:storage'
      data_devices:
        size: '9GB:12GB'       # select data devices by size, between 9 GB and 12 GB
      db_devices:
        rotational: 0          # non-rotational devices (SSD or NVMe)
      block_db_size: '2G'      # DB size of 2 GB (adjust to the actual environment)
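To see why this filter picks sdb and sdc as data devices but not nvme0n1, the size test can be mimicked in a few lines. This is only a simplified illustration with whole-GB sizes and a made-up function name, `size_matches`; treating both bounds as inclusive is an assumption, and the real matching is done by DeepSea and ceph-volume.

```shell
#!/bin/sh
# Hypothetical helper: succeed if SIZE_GB lies within a 'LOWGB:HIGHGB' range.
# Both bounds are treated as inclusive (an assumption of this sketch).
size_matches() {
    size_gb=$1
    range=$2
    low=${range%%:*}
    low=${low%GB}
    high=${range##*:}
    high=${high%GB}
    [ "$size_gb" -ge "$low" ] && [ "$size_gb" -le "$high" ]
}

# Disk names and sizes as reported by ceph-volume inventory above.
for disk in sdb:10 sdc:10 nvme0n1:20; do
    name=${disk%%:*}
    size=${disk##*:}
    if size_matches "$size" '9GB:12GB'; then
        echo "$name: selected as data device"
    else
        echo "$name: not selected as data device"
    fi
done
```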

(4) Show the OSD layout report

The report clearly shows nvme0n1 serving as the BlueStore DB device, carved into two LVs (the configured 2G each, reported as 1.86 GB), one for each of the two OSD data disks.

    # salt-run disks.report
    node003.example.com:
        |_
          - 0
          -
            Total OSDs: 2

            Solid State VG:
              Targets:   block.db                  Total size: 19.00 GB
              Total LVs: 2                         Size per LV: 1.86 GB
              Devices:   /dev/nvme0n1

              Type            Path                 LV Size         % of device
            ----------------------------------------------------------------
              [data]          /dev/sdb             9.00 GB         100.0%
              [block.db]      vg: vg/lv            1.86 GB         10%
            ----------------------------------------------------------------
              [data]          /dev/sdc             9.00 GB         100.0%
              [block.db]      vg: vg/lv            1.86 GB         10%
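The report lists 1.86 GB per LV although `block_db_size` is '2G'. One reading that is consistent with the numbers, offered here as an assumption rather than confirmed behavior, is that '2G' is taken as 2 × 10^9 bytes and the report prints binary gigabytes. The arithmetic can be checked as follows:

```shell
#!/bin/sh
# Assumption: '2G' means 2 * 10^9 bytes and the report divides by 1024^3.
db_gib=$(LC_ALL=C awk 'BEGIN { printf "%.2f", 2 * 1000 * 1000 * 1000 / (1024 * 1024 * 1024) }')
# Share of the 19.00 GB volume group used by one DB LV, rounded to a whole percent.
db_share=$(LC_ALL=C awk 'BEGIN { printf "%.0f", 100 * (2 * 1000 * 1000 * 1000 / (1024 * 1024 * 1024)) / 19 }')
echo "size per LV: $db_gib GB, share of device: $db_share%"
```

Both results match the "Size per LV" and "% of device" columns in the report.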

Note: if a disk is not recognized, wipe it with the commands below. The disk must not carry a GPT partition table.

    # ceph-volume lvm zap /dev/xx
    # ceph-volume lvm zap /dev/xx --destroy

(5) Run stage 3 and stage 4

    # salt-run state.orch ceph.stage.3
    # salt-run state.orch ceph.stage.4
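Stage 4 should only start once stage 3 has finished cleanly. A minimal sketch of a wrapper that runs stages in order and stops at the first failure is shown below. The function name `run_stages` is made up for illustration, and the sketch assumes that the runner command exits with a non-zero status when a stage fails, which should be verified for the Salt version in use.

```shell
#!/bin/sh
# Hypothetical helper: run the given DeepSea stages in order with RUNNER,
# stopping at the first one that fails.
# Usage: run_stages "RUNNER COMMAND" STAGE...
run_stages() {
    runner=$1
    shift
    for stage in "$@"; do
        echo "running ceph.stage.$stage"
        # $runner is left unquoted on purpose so that it splits into words.
        if ! $runner "ceph.stage.$stage"; then
            echo "ceph.stage.$stage failed, stopping" >&2
            return 1
        fi
    done
}

# Dry run: "echo" stands in for the real runner and just prints the command.
run_stages "echo salt-run state.orch" 3 4
```

On the admin node the real call would be `run_stages "salt-run state.orch" 3 4`.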

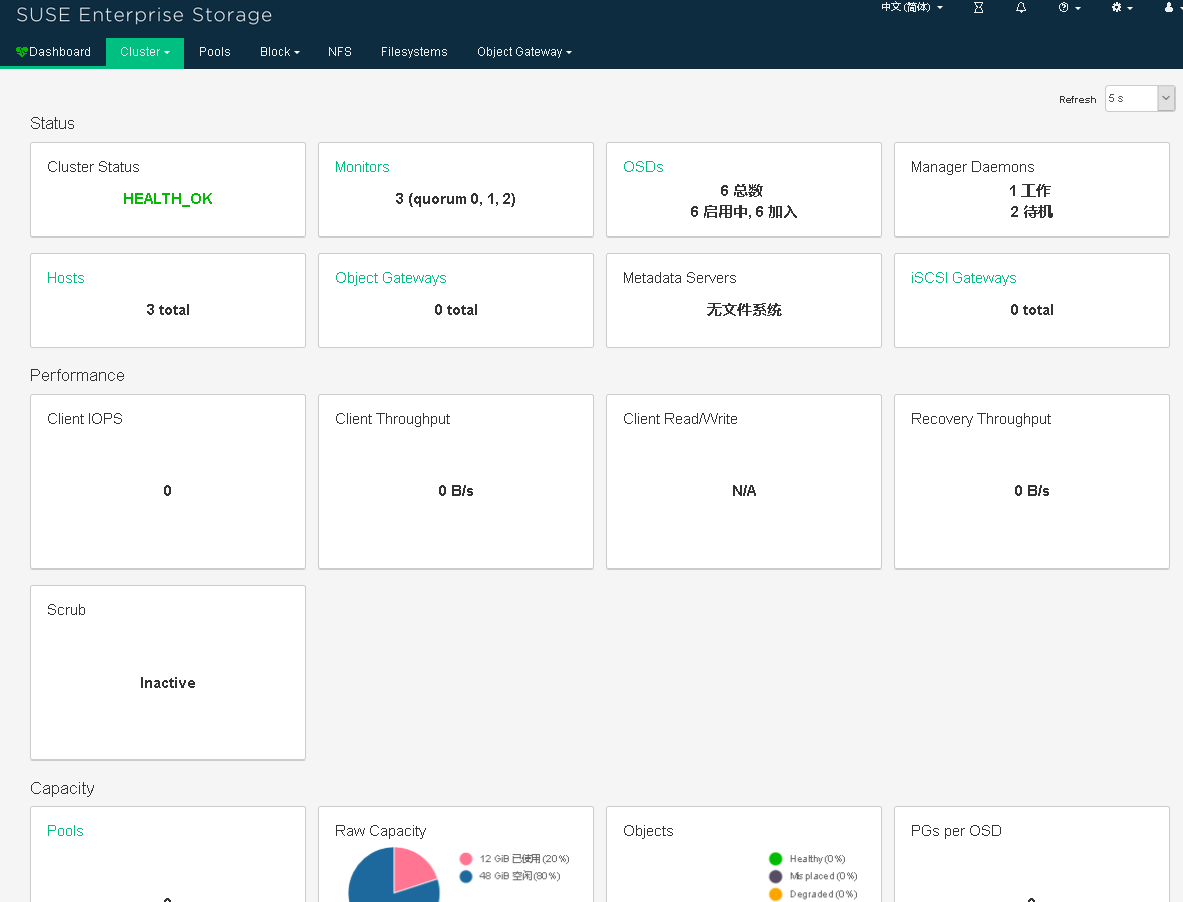

IV. Configure HAProxy and the Dashboard

1. Add the SUSE HA repository and install HAProxy (admin node)

    # zypper ar http://172.200.50.19/repo/SUSE/Products/SLE-Product-HA/15-SP1/x86_64/product/ SLE-Products-HA-SLES15-SP1-Pool
    # zypper -n in haproxy

2. Configure HAProxy

    # vim /etc/haproxy/haproxy.cfg
    ……
    frontend http_web
        option tcplog
        bind 0.0.0.0:8443      # socket to listen on; if node001 also serves as the admin node, change the port to 9443
        mode tcp
        default_backend dashboard

    backend dashboard
        mode tcp
        option log-health-checks
        option httpchk GET /
        http-check expect status 200
        server mgr1 172.200.50.40:8443 check ssl verify none
        server mgr2 172.200.50.41:8443 check ssl verify none
        server mgr3 172.200.50.42:8443 check ssl verify none

3. Start the haproxy service

    # systemctl start haproxy.service
    # systemctl enable haproxy.service
    # systemctl status haproxy.service

4. Look up the dashboard administrator password:

    # salt-call grains.get dashboard_creds
    local:
        ----------
        admin:
            9KyIXZSrdW

5. Add name resolution on the Windows host

    C:\Windows\System32\drivers\etc\hosts
    127.0.0.1 localhost
    172.200.50.39 admin.example.com

6. Open the SES6 Dashboard page

    http://172.200.50.39:8443/#/dashboard
