Docker, Kubernetes, Swarm: container orchestration, k8s, CI/CD deployment (麦兜)

1. Docker version

docker 17.09

https://docs.docker.com/

appledeAir:~ apple$ docker version

Client: Docker Engine - Community

Version: 18.09.0

API version: 1.39

Go version: go1.10.4

Git commit: 4d60db4

Built: Wed Nov 7 00:47:43 2018

OS/Arch: darwin/amd64

Experimental: false

Server: Docker Engine - Community

Engine:

Version: 18.09.0

API version: 1.39 (minimum version 1.12)

Go version: go1.10.4

Git commit: 4d60db4

Built: Wed Nov 7 00:55:00 2018

OS/Arch: linux/amd64

Experimental: false

vagrant

Create a Linux virtual machine

Create a directory

mkdir centos7

vagrant init centos/7 # creates a Vagrantfile

vagrant up # start the VM

vagrant ssh # log in to the VM

vagrant status

vagrant halt # shut the VM down

vagrant destroy # delete the VM

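docker machine: a tool that automatically installs Docker inside a virtual machine

docker-machine create demo # automatically starts a VM in VirtualBox

docker-machine ls # list the VMs that are running

docker-machine ssh demo # log in to the machine

docker-machine create demo1 # create a second VM with Docker installed

docker-machine stop demo1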

docker playground https://labs.play-with-docker.com/

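Running Docker

docker run -dit ubuntu /bin/bash

Run a command in the running container (the container does not exit)

docker exec -it 33 /bin/bash

Add a regular user to the docker group so that sudo is not needed

sudo gpasswd -a alex docker

/etc/init.d/docker restart

Log out of the shell (exit) and log back in

Verify with docker version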

Create your own image

FROM scratch

ADD app.py /

CMD ["/app.py"]

Build your own image

docker build -t alex/helloworld .

List the containers that are currently running

docker container ls

docker container ls -a

List the IDs of containers in the exited state

docker container ls -f "status=exited" -q

Remove a container

docker container rm 89123

docker rm 89123

Or remove all containers at once

docker rm $(docker container ls -aq)

Remove the containers in the exited state

docker rm $(docker container ls -f "status=exited" -q)

Remove an image that is no longer needed

docker rmi 98766

Commit a container as a new image

docker commit 12312312 alexhe/changed_a_lot:v1.0

docker image ls

docker history 901923123 # the image ID

Dockerfile example:

cat Dockerfile

FROM centos

ENV name Docker

CMD echo "hello $name"

Build an image from the Dockerfile

docker build -t alexhe/firstblood:latest .

Pull from a registry

docker pull ubuntu:18.04

Dockerfile example:

cat Dockerfile

FROM centos

RUN yum install -y vim

Dockerfile example:

FROM ubuntu

RUN apt-get update && apt-get install -y python

Dockerfile syntax overview and best practices

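FROM ubuntu:18.04

LABEL maintainer="alex@alexhe.net"

LABEL version="1.0"

LABEL description="This is comment"

RUN apt-get update && apt-get install -y vim \

python-dev # every RUN adds a layer, so chain related commands into a single RUN

WORKDIR /root # change into the directory; it is created automatically if it does not exist

WORKDIR demo # now in /root/demo

ADD hello /

ADD test.tar.gz / # added to the root directory and extracted

WORKDIR /root

ADD hello test/ # /root/test/hello

WORKDIR /root

COPY hello test/ # /root/test/hello

In most cases COPY is preferable to ADD. ADD does what COPY does plus extras such as extracting archives. To add remote files or directories, use curl or wget.

ENV MYSQL_VERSION 5.6 # define a constant

RUN apt-get install -y mysql-server="${MYSQL_VERSION}" && rm -rf /var/lib/apt/lists/* # reference the constant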

RUN vs CMD vs ENTRYPOINT

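RUN: executes a command and creates a new image layer

CMD: sets the default command and arguments executed once the container starts

ENTRYPOINT: sets the command that runs when the container starts

Shell form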

RUN apt-get install -y vim

CMD echo "hello docker"

ENTRYPOINT echo "hello docker"

Exec form

RUN ["apt-get","install","-y","vim"]

CMD ["/bin/echo","hello docker"]

ENTRYPOINT ["/bin/echo","hello docker"]

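Example (note the difference):

FROM centos

ENV name Docker

ENTRYPOINT ["/bin/bash","-c","echo hello $name"] # Correct: the whole command is passed to bash as one -c string, so the shell expands $name. With ["echo","hello $name"] the output is still the literal hello $name, because exec form runs echo directly rather than through a shell, so no variable substitution happens.

Compare with the shell form:

FROM centos

ENV name Docker

ENTRYPOINT echo "hello $name" # Works and prints hello Docker: the command is run by a shell, which expands the variable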
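The difference between the two forms can be reproduced outside Docker. The following is a minimal sketch, assuming a Linux host with echo and /bin/sh available; it mimics exec form by starting the program directly and shell form by going through /bin/sh -c. It only illustrates the expansion behaviour and is not how Docker itself is implemented.

```python
import os
import subprocess

# Same variable as ENV name Docker in the Dockerfile above.
env = dict(os.environ, name="Docker")

# Exec form: the program is started directly, so "$name" stays literal.
exec_form = subprocess.run(
    ["echo", "hello $name"], env=env, capture_output=True, text=True
).stdout

# Shell form: /bin/sh -c interprets the string, so the shell expands $name.
shell_form = subprocess.run(
    ["/bin/sh", "-c", "echo hello $name"], env=env, capture_output=True, text=True
).stdout

print(repr(exec_form))   # 'hello $name\n'
print(repr(shell_form))  # 'hello Docker\n'
```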

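CMD:

The default command executed when the container starts. If docker run specifies another command, CMD is ignored. If several CMD instructions are defined, only the last one takes effect.

ENTRYPOINT:

Lets the container run as an application or service. Unlike CMD, it is not replaced by a command given to docker run; it always runs. Best practice: write a shell script and use it as the entrypoint.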

COPY docker-entrypoint.sh /usr/local/bin/

ENTRYPOINT ["docker-entrypoint.sh"]

EXPOSE 27017

CMD ["mongod"]
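How ENTRYPOINT and CMD combine can be modelled in a few lines. This is a simplified sketch that covers exec form only; the function name effective_command is made up for illustration, and the --entrypoint flag and shell form are deliberately left out.

```python
def effective_command(entrypoint, cmd, run_args=None):
    """Model of how exec-form ENTRYPOINT and CMD are combined.

    Arguments given to docker run after the image name replace CMD;
    whatever remains is appended to ENTRYPOINT.
    """
    args = list(run_args) if run_args else list(cmd)
    return list(entrypoint) + args


# The mongo-style image above: CMD supplies the default argument.
default = effective_command(["docker-entrypoint.sh"], ["mongod"])

# docker run IMAGE mongod --version: CMD is replaced, ENTRYPOINT still runs.
override = effective_command(
    ["docker-entrypoint.sh"], ["mongod"], ["mongod", "--version"]
)

# An image with only CMD: docker run IMAGE /bin/bash ignores CMD entirely.
cmd_only = effective_command([], ["python", "app.py"], ["/bin/bash"])

print(default)   # ['docker-entrypoint.sh', 'mongod']
print(override)  # ['docker-entrypoint.sh', 'mongod', '--version']
print(cmd_only)  # ['/bin/bash']
```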

Publishing an image

docker login

docker push alexhe/hello-world:latest

docker rmi alexhe/hello-world # remove the local copy

docker pull alexhe/hello-world:latest # pull it back

Local registry (private repository)

https://docs.docker.com/v17.09/registry/

1. Start a private registry

docker run -d -p 5000:5000 -v /opt/registry:/var/lib/registry --restart always --name registry registry:2

2. Test from another machine: telnet x.x.x.x 5000

3. Push to the private registry

3.1 Build from the Dockerfile and tag the image

docker build -t x.x.x.x:5000/hello-world .

3.2 Allow the insecure private registry

vim /etc/docker/daemon.json

{

"insecure-registries" : ["x.x.x.x:5000"]

}

vim /lib/systemd/system/docker.service

EnvironmentFile=/etc/docker/daemon.json

/etc/init.d/docker restart

3.3 Push

docker push x.x.x.x:5000/hello-world

3.4 Verify

The registry has an API: https://docs.docker.com/v17.09/registry/spec/api/#listing-repositories

GET /v2/_catalog

docker pull x.x.x.x:5000/hello-world
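The verification step can be scripted. A rough sketch, assuming the registry is served over plain HTTP as configured above; the helper names and the sample response body are illustrative only, the body being what a registry holding just hello-world would be expected to return.

```python
import json


def catalog_url(registry, scheme="http"):
    """Build the URL of the repository-listing endpoint."""
    return f"{scheme}://{registry}/v2/_catalog"


def parse_catalog(body):
    """Extract the repository names from a /v2/_catalog response body."""
    return json.loads(body).get("repositories", [])


# Hypothetical response body, used here instead of a live registry.
sample_body = '{"repositories": ["hello-world"]}'

url = catalog_url("x.x.x.x:5000")
repos = parse_catalog(sample_body)

print(url)    # http://x.x.x.x:5000/v2/_catalog
print(repos)  # ['hello-world']
```

Against a real registry the body would come from something like urllib.request.urlopen(url).read(), with x.x.x.x replaced by the registry address.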

Many Dockerfile examples on GitHub: https://github.com/docker-library/docs https://docs.docker.com/engine/reference/builder/#add

Dockerfile example: install flask, copy app.py from the build directory to /app/, change into /app, expose port 5000, and run app.py

cat Dockerfile

FROM python:2.7

LABEL maintainer="alex he<alex@alexhe.net>"

RUN pip install flask

COPY app.py /app/

WORKDIR /app

EXPOSE 5000

CMD ["python", "app.py"]

cat app.py

from flask import Flask

app = Flask(__name__)

@app.route('/')

def hello():

    return "hello docker"

if __name__ == '__main__':

    app.run(host="0.0.0.0", port=5000)

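docker build -t alexhe/flask-hello-world . # build the image

If the build fails:

docker run -it (ID shown at the step where the build failed) /bin/bash

Once inside, look for what is failing

Finally, docker run -d alexhe/flask-hello-world # run the container in the background

Run a command in a running container:

docker exec -it xxxxxx /bin/bash

Show the IP address:

docker exec -it xxxx ip a

docker inspect xxxxxxid

Show the output produced by the container:

docker logs xxxxx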

Dockerfile example:

The Linux stress tool

cat Dockerfile

FROM ubuntu

RUN apt-get update && apt-get install -y stress

ENTRYPOINT ["/usr/bin/stress"] # combine ENTRYPOINT with CMD: CMD is empty, so the arguments given to docker run are passed through

CMD []

Usage:

docker build -t alexhe/ubuntu-stress .

# docker run -it alexhe/ubuntu-stress # run with no arguments; it only prints the usage text

docker run -it alexhe/ubuntu-stress --vm 1 --verbose # equivalent to running stress --vm 1 --verbose

Container resource limits: CPU and RAM

docker run --memory=200M alexhe/ubuntu-stress --vm 1 --vm-bytes 500M --verbose # fails immediately for lack of memory: the container is given 200M but the stress test tries to use 500M

docker run --cpu-shares=10 --name=test1 alexhe/ubuntu-stress --vm 1 # started together with the one below, it gets about 66% of the CPU

docker run --cpu-shares=5 --name=test2 alexhe/ubuntu-stress --vm 1 # started together, it gets about 33% of the CPU
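The 66% and 33% figures follow from the ratio of the shares. A small worked example, assuming both containers are competing for the same CPU at full load (shares are relative weights and only matter under contention); the function name cpu_split is made up for illustration.

```python
def cpu_split(shares):
    """Percentage of contended CPU time each container gets from its relative share."""
    total = sum(shares.values())
    return {name: round(100 * value / total, 1) for name, value in shares.items()}


split = cpu_split({"test1": 10, "test2": 5})
print(split)  # {'test1': 66.7, 'test2': 33.3}
```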

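Container networking

Single host: bridge, host, none

Multiple hosts: overlay

Network namespaces in Linux

docker run -dit --name test1 busybox /bin/sh -c "while true;do sleep 3600;done"

docker exec -it xxxxx /bin/sh

ip a # show the network interfaces

exit

Back on the host, run ip a to show the host's interfaces

The network namespaces of the container and the host are isolated from each other

docker run -dit --name test2 busybox /bin/sh -c "while true;do sleep 3600;done"

docker exec xxxxx ip a # look at the second container's network

Containers on the same host can reach each other over the network.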

The following shows how connectivity between Linux network namespaces works (Docker does something similar):

On the host, run ip netns list to see the local network namespaces

ip netns delete test1

ip netns add test1 # create a network namespace

ip netns add test2 # create a network namespace

Run ip link inside the test1 network namespace

ip netns exec test1 ip link # lo is currently DOWN

ip netns exec test1 ip link set dev lo up # the state becomes UNKNOWN; it only becomes UP once both ends are connected

Create a veth pair and put one end into the test1 namespace and the other into the test2 namespace.

Create the veth pair:

ip link add veth-test1 type veth peer name veth-test2

Move veth-test1 into the test1 namespace:

ip link set veth-test1 netns test1

Check the test1 namespace:

ip netns exec test1 ip link # test1 now has an extra veth, in state DOWN

Check ip link on the host:

ip link # one interface fewer, because it has moved into the test1 namespace

Move veth-test2 into the test2 namespace:

ip link set veth-test2 netns test2

Check ip link on the host:

ip link # one fewer again, because it has moved into the test2 namespace

Check the test2 namespace

ip netns exec test2 ip link # test2 now has an extra veth, in state DOWN

Assign IP addresses to the two veth ends:

ip netns exec test1 ip addr add 192.168.1.1/24 dev veth-test1

ip netns exec test2 ip addr add 192.168.1.2/24 dev veth-test2

Check the links in test1 and test2

ip netns exec test1 ip link # no address is listed (ip link only shows link state) and the interface is still DOWN

ip netns exec test2 ip link # no address is listed and the interface is still DOWN

Bring both interfaces up

ip netns exec test1 ip link set dev veth-test1 up

ip netns exec test2 ip link set dev veth-test2 up

Check test1 and test2 again

ip netns exec test1 ip a # the IP address is shown and the interface is UP

ip netns exec test2 ip a # the IP address is shown and the interface is UP

From veth-test1 in the test1 namespace, ping veth-test2 in the test2 namespace

ip netns exec test1 ping 192.168.1.2

ip netns exec test2 ping 192.168.1.1
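The ping works without any routing because both veth ends were given addresses in the same /24. A quick check of that with Python's standard ipaddress module, using the two addresses from the commands above:

```python
import ipaddress

veth_test1 = ipaddress.ip_interface("192.168.1.1/24")
veth_test2 = ipaddress.ip_interface("192.168.1.2/24")

# Both interfaces belong to the same network, so they are directly reachable.
same_subnet = veth_test1.network == veth_test2.network

print(veth_test1.network)                # 192.168.1.0/24
print(same_subnet)                       # True
print(veth_test2.ip in veth_test1.network)  # True
```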

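Docker's bridge network, docker0:

The two containers test1 and test2 can ping each other, which shows that their network namespaces are connected.

Only the test1 container is kept for now; the test2 container is removed

Show the Docker networks:

docker network ls

NETWORK ID NAME DRIVER SCOPE

9d133c1c82ff bridge bridge local

e44acf9eff90 host host local

bc660dbbb8b6 none null local

Show the details of the bridge network:

docker network inspect xxxxxx (the ID of the bridge network shown above)

The veth on the host and eth0 inside the container form a veth pair

ip link #veth6aa1698@if18

docker exec test1 ip link #18: eth0@if19

This veth pair is attached to docker0 on the host.

yum install bridge-utils

brctl show # the host's veth6aa is attached to docker0

Start a new test2 container:

docker run -dit --name test2 busybox /bin/sh -c "while true;do sleep 3600;done"

Look at the Docker network:

docker network inspect bridge

There is now one more container, and both have addresses

Running ip a on the host shows one more veth.

Running brctl show shows two interfaces on docker0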

Linking Docker containers with --link

Only the test1 container exists at this point

Now create a second container, test2

docker run -d --name test2 --link test1 busybox /bin/sh -c "while true;do sleep 3600;done"

docker exec -it test2 /bin/sh

Inside test2, pinging test1's IP address works, and pinging test1 by name also works.

Inside test1, pinging test2's IP address works, but pinging test2 by name does not.

Create your own Docker bridge network and connect containers to it.

docker network create -d bridge my-bridge

Create a container, test3

docker run -d --name test3 --network my-bridge busybox /bin/sh -c "while true;do sleep 3600;done"

Inspect it with brctl show.

Connect test2 to my-bridge as well

docker network connect my-bridge test2 # once connected, test2 has two IP addresses.

Note: containers attached to a user-defined network can ping each other by name. The default bridge does not allow this, as the test above showed.

Docker port mapping

Create an nginx container

docker run --name web -d -p 80:80 nginx

Docker's host and none networks

none network:

docker run -d --name test1 --network none busybox /bin/sh -c "while true;do sleep 3600;done"

docker network inspect none # shows that the none network has one container attached

Enter the container:

docker exec -it test1 /bin/sh # look at the network: there is no interface apart from lo

host network:

docker run -d --name test1 --network host busybox /bin/sh -c "while true;do sleep 3600;done"

docker network inspect host

Enter the container:

docker exec -it test1 /bin/sh # ip a shows exactly the same interfaces and addresses as the host; the container has no network namespace of its own

Deploying a multi-container application:

Flask + redis: the flask container accesses the redis container

cat app.py

from flask import Flask

from redis import Redis

import os

import socket

app = Flask(__name__)

redis = Redis(host=os.environ.get('REDIS_HOST', '127.0.0.1'), port=6379)

@app.route('/')

def hello():

    redis.incr('hits')

    return 'Hello Container World! I have been seen %s times and my hostname is %s.\n' % (redis.get('hits'),socket.gethostname())

if __name__ == "__main__":

    app.run(host="0.0.0.0", port=5000, debug=True)

cat Dockerfile

FROM python:2.7

LABEL maintainer="Peng Xiao xiaoquwl@gmail.com"

COPY . /app

WORKDIR /app

RUN pip install flask redis

EXPOSE 5000

CMD [ "python", "app.py" ]

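1. Create the redis container:

docker run -d --name redis redis

2. docker build -t alexhe/flask-redis .

3. Create the container

docker run -d -p 5000:5000 --link redis --name flask-redis -e REDIS_HOST=redis alexhe/flask-redis # REDIS_HOST matches the variable read in the source above

4. Enter that container and run env to take a look:

docker exec -it flask-redis /bin/bash

env # environment variables

ping redis # redis can be pinged from inside the container

5. On the host, curl 127.0.0.1:5000 reaches the app

Communication between containers across multiple hosts

Docker networking: overlay and underlay:

Two Linux hosts: 192.168.205.10 and 192.168.205.11

VXLAN packets (search for the VXLAN concept)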

cat multi-host-network.md

# Multi-host networking with etcd

## Set up the etcd cluster

On docker-node1

```
vagrant@docker-node1:~$ wget https://github.com/coreos/etcd/releases/download/v3.0.12/etcd-v3.0.12-linux-amd64.tar.gz
vagrant@docker-node1:~$ tar zxvf etcd-v3.0.12-linux-amd64.tar.gz
vagrant@docker-node1:~$ cd etcd-v3.0.12-linux-amd64
vagrant@docker-node1:~$ nohup ./etcd --name docker-node1 --initial-advertise-peer-urls http://192.168.205.10:2380 \
--listen-peer-urls http://192.168.205.10:2380 \
--listen-client-urls http://192.168.205.10:2379,http://127.0.0.1:2379 \
--advertise-client-urls http://192.168.205.10:2379 \
--initial-cluster-token etcd-cluster \
--initial-cluster docker-node1=http://192.168.205.10:2380,docker-node2=http://192.168.205.11:2380 \
--initial-cluster-state new&
```

On docker-node2

```
vagrant@docker-node2:~$ wget https://github.com/coreos/etcd/releases/download/v3.0.12/etcd-v3.0.12-linux-amd64.tar.gz
vagrant@docker-node2:~$ tar zxvf etcd-v3.0.12-linux-amd64.tar.gz
vagrant@docker-node2:~$ cd etcd-v3.0.12-linux-amd64/
vagrant@docker-node2:~$ nohup ./etcd --name docker-node2 --initial-advertise-peer-urls http://192.168.205.11:2380 \
--listen-peer-urls http://192.168.205.11:2380 \
--listen-client-urls http://192.168.205.11:2379,http://127.0.0.1:2379 \
--advertise-client-urls http://192.168.205.11:2379 \
--initial-cluster-token etcd-cluster \
--initial-cluster docker-node1=http://192.168.205.10:2380,docker-node2=http://192.168.205.11:2380 \
--initial-cluster-state new&
```

Check the cluster state

```
vagrant@docker-node2:~/etcd-v3.0.12-linux-amd64$ ./etcdctl cluster-health
member 21eca106efe4caee is healthy: got healthy result from http://192.168.205.10:2379
member 8614974c83d1cc6d is healthy: got healthy result from http://192.168.205.11:2379
cluster is healthy
```

## Restart the docker service

On docker-node1

```
$ sudo service docker stop
$ sudo /usr/bin/dockerd -H tcp://0.0.0.0:2375 -H unix:///var/run/docker.sock --cluster-store=etcd://192.168.205.10:2379 --cluster-advertise=192.168.205.10:2375&
```

On docker-node2

```
$ sudo service docker stop
$ sudo /usr/bin/dockerd -H tcp://0.0.0.0:2375 -H unix:///var/run/docker.sock --cluster-store=etcd://192.168.205.11:2379 --cluster-advertise=192.168.205.11:2375&
```

## Create the overlay network

On docker-node1, create an overlay network named demo

```
vagrant@docker-node1:~$ sudo docker network ls
NETWORK ID          NAME                DRIVER              SCOPE
0e7bef3f143a        bridge              bridge              local
a5c7daf62325        host                host                local
3198cae88ab4        none                null                local
vagrant@docker-node1:~$ sudo docker network create -d overlay demo
3d430f3338a2c3496e9edeccc880f0a7affa06522b4249497ef6c4cd6571eaa9
vagrant@docker-node1:~$ sudo docker network ls
NETWORK ID          NAME                DRIVER              SCOPE
0e7bef3f143a        bridge              bridge              local
3d430f3338a2        demo                overlay             global
a5c7daf62325        host                host                local
3198cae88ab4        none                null                local
vagrant@docker-node1:~$ sudo docker network inspect demo
[
    {
        "Name": "demo",
        "Id": "3d430f3338a2c3496e9edeccc880f0a7affa06522b4249497ef6c4cd6571eaa9",
        "Scope": "global",
        "Driver": "overlay",
        "EnableIPv6": false,
        "IPAM": {
            "Driver": "default",
            "Options": {},
            "Config": [
                {
                    "Subnet": "10.0.0.0/24",
                    "Gateway": "10.0.0.1/24"
                }
            ]
        },
        "Internal": false,
        "Containers": {},
        "Options": {},
        "Labels": {}
    }
]
```

On node2 the demo overlay network shows up as well, created by synchronization

```
vagrant@docker-node2:~$ sudo docker network ls
NETWORK ID          NAME                DRIVER              SCOPE
c9947d4c3669        bridge              bridge              local
3d430f3338a2        demo                overlay             global
fa5168034de1        host                host                local
c2ca34abec2a        none                null                local
```

Looking at the etcd key-value store shows that the demo network was synchronized from node1 to node2 through etcd

```
vagrant@docker-node2:~/etcd-v3.0.12-linux-amd64$ ./etcdctl ls /docker
/docker/network
/docker/nodes
vagrant@docker-node2:~/etcd-v3.0.12-linux-amd64$ ./etcdctl ls /docker/nodes
/docker/nodes/192.168.205.11:2375
/docker/nodes/192.168.205.10:2375
vagrant@docker-node2:~/etcd-v3.0.12-linux-amd64$ ./etcdctl ls /docker/network/v1.0/network
/docker/network/v1.0/network/3d430f3338a2c3496e9edeccc880f0a7affa06522b4249497ef6c4cd6571eaa9
vagrant@docker-node2:~/etcd-v3.0.12-linux-amd64$ ./etcdctl get /docker/network/v1.0/network/3d430f3338a2c3496e9edeccc880f0a7affa06522b4249497ef6c4cd6571eaa9 | jq .
{
  "addrSpace": "GlobalDefault",
  "enableIPv6": false,
  "generic": {
    "com.docker.network.enable_ipv6": false,
    "com.docker.network.generic": {}
  },
  "id": "3d430f3338a2c3496e9edeccc880f0a7affa06522b4249497ef6c4cd6571eaa9",
  "inDelete": false,
  "ingress": false,
  "internal": false,
  "ipamOptions": {},
  "ipamType": "default",
  "ipamV4Config": "[{\"PreferredPool\":\"\",\"SubPool\":\"\",\"Gateway\":\"\",\"AuxAddresses\":null}]",
  "ipamV4Info": "[{\"IPAMData\":\"{\\\"AddressSpace\\\":\\\"GlobalDefault\\\",\\\"Gateway\\\":\\\"10.0.0.1/24\\\",\\\"Pool\\\":\\\"10.0.0.0/24\\\"}\",\"PoolID\":\"GlobalDefault/10.0.0.0/24\"}]",
  "labels": {},
  "name": "demo",
  "networkType": "overlay",
  "persist": true,
  "postIPv6": false,
  "scope": "global"
}
```

## Create containers attached to the demo network

On docker-node1

```
vagrant@docker-node1:~$ sudo docker run -d --name test1 --net demo busybox sh -c "while true; do sleep 3600; done"
Unable to find image 'busybox:latest' locally
latest: Pulling from library/busybox
56bec22e3559: Pull complete
Digest: sha256:29f5d56d12684887bdfa50dcd29fc31eea4aaf4ad3bec43daf19026a7ce69912
Status: Downloaded newer image for busybox:latest
a95a9466331dd9305f9f3c30e7330b5a41aae64afda78f038fc9e04900fcac54
vagrant@docker-node1:~$ sudo docker ps
CONTAINER ID        IMAGE               COMMAND                  CREATED             STATUS              PORTS               NAMES
a95a9466331d        busybox             "sh -c 'while true; d"   4 seconds ago       Up 3 seconds                            test1
vagrant@docker-node1:~$ sudo docker exec test1 ifconfig
eth0      Link encap:Ethernet  HWaddr 02:42:0A:00:00:02
          inet addr:10.0.0.2  Bcast:0.0.0.0  Mask:255.255.255.0
          inet6 addr: fe80::42:aff:fe00:2/64 Scope:Link
          UP BROADCAST RUNNING MULTICAST  MTU:1450  Metric:1
          RX packets:15 errors:0 dropped:0 overruns:0 frame:0
          TX packets:8 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:1206 (1.1 KiB)  TX bytes:648 (648.0 B)

eth1      Link encap:Ethernet  HWaddr 02:42:AC:12:00:02
          inet addr:172.18.0.2  Bcast:0.0.0.0  Mask:255.255.0.0
          inet6 addr: fe80::42:acff:fe12:2/64 Scope:Link
          UP BROADCAST RUNNING MULTICAST  MTU:1500  Metric:1
          RX packets:8 errors:0 dropped:0 overruns:0 frame:0
          TX packets:8 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:648 (648.0 B)  TX bytes:648 (648.0 B)

lo        Link encap:Local Loopback
          inet addr:127.0.0.1  Mask:255.0.0.0
          inet6 addr: ::1/128 Scope:Host
          UP LOOPBACK RUNNING  MTU:65536  Metric:1
          RX packets:0 errors:0 dropped:0 overruns:0 frame:0
          TX packets:0 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:1
          RX bytes:0 (0.0 B)  TX bytes:0 (0.0 B)

vagrant@docker-node1:~$
```

On docker-node2

```
vagrant@docker-node2:~$ sudo docker run -d --name test1 --net demo busybox sh -c "while true; do sleep 3600; done"
Unable to find image 'busybox:latest' locally
latest: Pulling from library/busybox
56bec22e3559: Pull complete
Digest: sha256:29f5d56d12684887bdfa50dcd29fc31eea4aaf4ad3bec43daf19026a7ce69912
Status: Downloaded newer image for busybox:latest
fad6dc6538a85d3dcc958e8ed7b1ec3810feee3e454c1d3f4e53ba25429b290b
docker: Error response from daemon: service endpoint with name test1 already exists.
# the name test1 is already taken on this network, so it cannot be used again
vagrant@docker-node2:~$ sudo docker run -d --name test2 --net demo busybox sh -c "while true; do sleep 3600; done"
9d494a2f66a69e6b861961d0c6af2446265bec9b1d273d7e70d0e46eb2e98d20
```

Verify connectivity.

```
vagrant@docker-node2:~$ sudo docker exec -it test2 ifconfig
eth0      Link encap:Ethernet  HWaddr 02:42:0A:00:00:03
          inet addr:10.0.0.3  Bcast:0.0.0.0  Mask:255.255.255.0
          inet6 addr: fe80::42:aff:fe00:3/64 Scope:Link
          UP BROADCAST RUNNING MULTICAST  MTU:1450  Metric:1
          RX packets:208 errors:0 dropped:0 overruns:0 frame:0
          TX packets:201 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:20008 (19.5 KiB)  TX bytes:19450 (18.9 KiB)

eth1      Link encap:Ethernet  HWaddr 02:42:AC:12:00:02
          inet addr:172.18.0.2  Bcast:0.0.0.0  Mask:255.255.0.0
          inet6 addr: fe80::42:acff:fe12:2/64 Scope:Link
          UP BROADCAST RUNNING MULTICAST  MTU:1500  Metric:1
          RX packets:8 errors:0 dropped:0 overruns:0 frame:0
          TX packets:8 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:648 (648.0 B)  TX bytes:648 (648.0 B)

lo        Link encap:Local Loopback
          inet addr:127.0.0.1  Mask:255.0.0.0
          inet6 addr: ::1/128 Scope:Host
          UP LOOPBACK RUNNING  MTU:65536  Metric:1
          RX packets:0 errors:0 dropped:0 overruns:0 frame:0
          TX packets:0 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:1
          RX bytes:0 (0.0 B)  TX bytes:0 (0.0 B)

vagrant@docker-node1:~$ sudo docker exec test1 sh -c "ping 10.0.0.3"
PING 10.0.0.3 (10.0.0.3): 56 data bytes
64 bytes from 10.0.0.3: seq=0 ttl=64 time=0.579 ms
64 bytes from 10.0.0.3: seq=1 ttl=64 time=0.411 ms
64 bytes from 10.0.0.3: seq=2 ttl=64 time=0.483 ms
^C
vagrant@docker-node1:~$
```

Persistent storage and data sharing in Docker:

There are two ways to persist data: 1. Data Volume 2. Bind Mounting

The first way, Data Volume:

Containers produce data, such as logs and databases, that we want to keep

For example https://hub.docker.com/_/mysql

docker run -d -e MYSQL_ALLOW_EMPTY_PASSWORD=yes --name mysql1 mysql

List volumes:

docker volume ls

Remove a volume

docker volume rm xxxxxxxxxx

Show details:

docker volume inspect xxxxxxxxx

Create a second mysql container

docker run -d -e MYSQL_ALLOW_EMPTY_PASSWORD=yes --name mysql2 mysql

Show details:

docker volume inspect xxxxxxxxx

Removing a container does not remove its volume:

docker stop mysql1 mysql2

docker rm mysql1 mysql2

docker volume ls # the data is still there

Recreate mysql1:

docker run -d -v mysq:/var/lib/mysql -e MYSQL_ALLOW_EMPTY_PASSWORD=yes --name mysql1 mysql # mysq here is the name of the volume

docker volume ls # shows mysq

Enter the mysql1 container

Create a new database

create database docker;

Leave the container and remove the mysql1 container

docker rm -f mysql1 # force-stop and remove the mysql1 container

List volumes:

docker volume ls # still there

Create a new mysql2 container that uses the earlier mysq volume

docker run -d -v mysq:/var/lib/mysql -e MYSQL_ALLOW_EMPTY_PASSWORD=yes --name mysql2 mysql

Enter the mysql2 container and check whether the database is still there

show databases; # the database is still there

The second way to persist data: bind mounting

How does it differ from the first? With a data volume, the volume is typically declared in the Dockerfile. Bind mounting does not need that: you only specify, at run time, the mapping between a local directory and a container directory.

The two are then kept in sync: files on the local system and files in the container are the same, so a change to a local file also changes the file in the container directory.

cat Dockerfile

# this sample shows how we can extend/change an existing official image from Docker Hub

FROM nginx:latest

# highly recommend you always pin versions for anything beyond dev/learn

WORKDIR /usr/share/nginx/html

# change working directory to root of nginx webhost

# using WORKDIR is preferred to using 'RUN cd /some/path'

COPY index.html index.html

# I don't have to specify EXPOSE or CMD because they're in my FROM

Any index.html will do

docker build -t alexhe/my-nginx .

docker run -d -p 80:80 -v $(pwd):/usr/share/nginx/html --name web alexhe/my-nginx

Another bind mount example:

cat Dockerfile

FROM python:2.7

LABEL maintainer="alexhe<alex@alexhe.net>"

COPY . /skeleton

WORKDIR /skeleton

RUN pip install -r requirements.txt

EXPOSE 5000

ENTRYPOINT ["scripts/dev.sh"]

Build the image

docker build -t alexhe/flask-skeleton .

docker run -d -p 80:5000 -v $(pwd):/skeleton --name flask alexhe/flask-skeleton

The rest of the source is in

/Users/apple/temp/docker-k8s-devops-master/chapter5/labs/flask-skeleton

Deploy a WordPress:

docker run -d -v mysql-data:/var/lib/mysql -e MYSQL_ROOT_PASSWORD=root -e MYSQL_DATABASE=wordpress --name mysql mysql

docker run -d -e WORDPRESS_DB_HOST=mysql:3306 -e WORDPRESS_DB_PASSWORD=root --link mysql -p 8080:80 wordpress

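docker compose:

Official introduction: https://docs.docker.com/compose/overview/

A multi-container Docker application is defined in a single yml file

A single command then creates or manages all of those containers according to the yml file

The three main concepts in docker compose: Services, Networks, Volumes

The v2 file format runs on a single host; v3 can also run across multiple hosts

services: a service represents a container, which can be created from an image on Docker Hub or from an image built from a local Dockerfile

Starting a service is similar to docker run: we can specify a network and a volume for it, so a service can reference networks and volumes.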

Example:

services:
  db: # the container is named db
    image: postgres:9.4 # pulled from Docker Hub
    volumes:
      - "db-data:/var/lib/postgresql/data"
    networks:
      - back-tier

This is equivalent to:

docker run -d --network back-tier -v db-data:/var/lib/postgresql/data postgres:9.4

Example:

services:
  worker: # the container name
    build: ./worker # built locally instead of pulled from Docker Hub
    links:
      - db
      - redis
    networks:
      - back-tier

Example:

cat docker-compose.yml

version: '3'

services:

  wordpress:
    image: wordpress
    ports:
      - 8080:80
    environment:
      WORDPRESS_DB_HOST: mysql
      WORDPRESS_DB_PASSWORD: root
    networks:
      - my-bridge

  mysql:
    image: mysql
    environment:
      MYSQL_ROOT_PASSWORD: root
      MYSQL_DATABASE: wordpress
    volumes:
      - mysql-data:/var/lib/mysql
    networks:
      - my-bridge

volumes:
  mysql-data:

networks:
  my-bridge:
    driver: bridge

Installing docker-compose and basic usage:

Installation: https://docs.docker.com/compose/install/

curl -L "https://github.com/docker/compose/releases/download/1.23.2/docker-compose-$(uname -s)-$(uname -m)" -o /usr/local/bin/docker-compose

chmod +x /usr/local/bin/docker-compose

docker-compose --version

docker-compose version 1.23.2, build 1110ad01

Usage:

Using the docker-compose.yml above

docker-compose up # starts the docker-compose.yml in the current directory: 1. creates the bridge network wordpress_my-bridge; 2. creates the two services wordpress_wordpress_1 and wordpress_mysql_1 and starts the containers

docker-compose -f xxxx/docker-compose.yml up # use the docker-compose.yml in a specific directory

docker-compose ps # show the current services

docker-compose stop

docker-compose down # stop and remove, but images are not deleted

docker-compose start

docker-compose up -d # run in the background without showing logs

docker-compose images # show the containers defined in the yml and the images they use

docker-compose exec mysql bash # mysql is a service defined in the yml; this opens bash in the mysql container

Example: docker-compose building from a Dockerfile

The source is in /Users/apple/temp/docker-k8s-devops-master/chapter6/labs/flask-redis

cat docker-compose.yml

version: "3"

services:

  redis:
    image: redis

  web:
    build:
      context: . # location of the Dockerfile
      dockerfile: Dockerfile # use the Dockerfile in that directory
    ports:
      - 8080:5000
    environment:
      REDIS_HOST: redis

cat Dockerfile

FROM python:2.7

LABEL maintainer="alexhe alex@alexhe.net"

COPY . /app

WORKDIR /app

RUN pip install flask redis

EXPOSE 5000

CMD [ "python", "app.py" ]

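Usage: docker-compose up -d # without -d it stays in the foreground and the web output is printed to the terminal

scale in docker-compose

docker-compose up # using the yml above

docker-compose up --scale web=3 -d # fails because host port 8080 is already in use; the ports: - 8080:5000 entry above has to be removed

After removing it, run the command again. Three containers start, each listening on port 5000 inside the container. Check with docker-compose ps

But this is not enough: port 5000 inside the containers cannot be reached from the host. We need to add haproxy to the yml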
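The scale failure is the ordinary "address already in use" problem: only one listener can own a given host port. A minimal illustration with plain sockets, not Docker, where two sockets stand in for two web replicas that both want the same host port:

```python
import socket

# First "replica" takes a host port (port 0 lets the OS pick a free one).
first = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
first.bind(("127.0.0.1", 0))
first.listen()
port = first.getsockname()[1]

# Second "replica" asks for the same host port and is refused.
second = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
try:
    second.bind(("127.0.0.1", port))
    conflict = False
except OSError:
    conflict = True
finally:
    second.close()
    first.close()

print(conflict)  # True
```

This is why the fixed host port is dropped from the web service and a single load balancer publishes the port instead.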

cat docker-compose.yml

version: "3"

services:

  redis:
    image: redis

  web:
    build:
      context: .
      dockerfile: Dockerfile
    environment:
      REDIS_HOST: redis

  lb:
    image: dockercloud/haproxy
    links:
      - web
    ports:
      - 8080:80
    volumes:
      - /var/run/docker.sock:/var/run/docker.sock

cat Dockerfile

FROM python:2.7

LABEL maintainer="alex alex@alexhe.net"

COPY . /app

WORKDIR /app

RUN pip install flask redis

EXPOSE 80

CMD [ "python", "app.py" ]

cat app.py

from flask import Flask

from redis import Redis

import os

import socket

app = Flask(__name__)

redis = Redis(host=os.environ.get('REDIS_HOST', '127.0.0.1'), port=6379)

@app.route('/')

def hello():

    redis.incr('hits')

    return 'Hello Container World! I have been seen %s times and my hostname is %s.\n' % (redis.get('hits'),socket.gethostname())

if __name__ == "__main__":

    app.run(host="0.0.0.0", port=80, debug=True)

docker-compose up -d

curl 127.0.0.1:8080

docker-compose up --scale web=3 -d

Example: deploying a more complex application, a voting system

Source:

/Users/apple/temp/docker-k8s-devops-master/chapter6/labs/example-voting-app

Python front end + redis + Java worker + pg database + results app

cat docker-compose.yml

version: "3"

services:

  voting-app:
    build: ./voting-app/.
    volumes:
      - ./voting-app:/app
    ports:
      - "5000:80"
    links:
      - redis
    networks:
      - front-tier
      - back-tier

  result-app:
    build: ./result-app/.
    volumes:
      - ./result-app:/app
    ports:
      - "5001:80"
    links:
      - db
    networks:
      - front-tier
      - back-tier

  worker:
    build: ./worker
    links:
      - db
      - redis
    networks:
      - back-tier

  redis:
    image: redis
    ports: ["6379"]
    networks:
      - back-tier

  db:
    image: postgres:9.4
    volumes:
      - "db-data:/var/lib/postgresql/data"
    networks:
      - back-tier

volumes:
  db-data:

networks:
  front-tier: # no driver specified, so it defaults to bridge
  back-tier:

docker-compose up

Vote in the browser on port 5000 and view the results on port 5001

docker-compose build # builds the images ahead of time; up builds first and then starts.

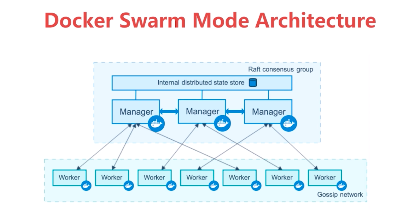

docker swarm

Create a 3-node swarm cluster

manager 192.168.205.10

worker1 192.168.205.11

worker2 192.168.205.12

manager:

docker swarm init --advertise-addr=192.168.205.10

worker1 and 2:

docker swarm join xxxxx

manager:

docker node ls # list all nodes

docker service create --name demo busybox sh -c "while true;do sleep 3600;done"

docker service ls

docker service ps demo # see which machine the service runs on

docker service scale demo=5 # scale out to 5 replicas

Suppose a container is force-removed on worker2: docker rm -f xxxxxxxxx.

docker service ls then shows REPLICAS 4/5 and, a moment later, 5/5 again. docker service ps demo lists a task in the Shutdown state
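The 4/5 then 5/5 behaviour is a reconciliation loop: swarm compares the desired replica count with what is running and starts replacements. A toy model of one such pass, with made-up task numbers and no claim to match swarm's actual scheduler:

```python
def reconcile(desired, running):
    """Return the task list after one reconciliation pass.

    Missing tasks are replaced with new task IDs; surplus tasks are dropped.
    """
    tasks = list(running)
    next_id = max(tasks, default=0) + 1
    while len(tasks) < desired:
        tasks.append(next_id)
        next_id += 1
    return tasks[:desired]


running = [1, 2, 3, 4, 5]       # docker service scale demo=5
running.remove(3)               # docker rm -f on one node: 4/5
after = reconcile(5, running)   # swarm starts a replacement: 5/5

print(len(running))  # 4
print(after)         # [1, 2, 4, 5, 6]
```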

docker service rm demo # remove the whole service

docker service ps demo

Deploying WordPress as swarm services

docker network create -d overlay demo # create an overlay network; check with docker network ls

docker service create --name mysql --env MYSQL_ROOT_PASSWORD=root --env MYSQL_DATABASE=wordpress --network demo --mount type=volume,source=mysql-data,destination=/var/lib/mysql mysql # for a service, the equivalent of -v is this --mount form: the volume is named mysql-data and mounted at /var/lib/mysql

docker service ls

docker service ps mysql

docker service create --name wordpress -p 80:80 --env WORDPRESS_DB_PASSWORD=root --env WORDPRESS_DB_HOST=mysql --network demo wordpress

docker service ps wordpress

WordPress can be reached over HTTP on the manager or on any worker

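Communication between cluster services: Routing Mesh

swarm has built-in service discovery. Accessing a service by name goes over the overlay network and uses a VIP.

The demo overlay network must exist first.

docker service create --name whoami -p 8000:8000 --network demo -d jwilder/whoami

docker service ls

docker service ps whoami # running on the manager node

curl 127.0.0.1:8000

Create another service, busybox

docker service create --name client -d --network demo busybox sh -c "while true;do sleep 3600;done"

docker service ls

docker service ps client # running on the worker1 node

First go to the swarm worker1 node

docker exec -it xxxx sh # enter the busybox container

ping whoami # pinging the service name answers from 10.0.0.7, which is actually a VIP created through LVS

docker service scale whoami=2 # scale to 2 replicas

docker service ps whoami # one runs on worker1, one on worker2

Go to the worker1 node

docker exec -it xxx sh # enter the busybox container

ping whoami # still the same address

nslookup whoami # 10.0.0.7, the virtual IP

nslookup tasks.whoami # two addresses; these are the real addresses of the containers

iptables -t mangle -nL DOCKER-INGRESS # this is where the forwarding is set up

Two forms of Routing Mesh

Internal: within the network, containers talk to each other over the overlay network.

Ingress: if a service publishes a port, the service can be reached through that port on any swarm node. The service port is exposed on every swarm node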
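The relationship between the service VIP and the task addresses can be pictured with a toy model. In the sketch below, 10.0.0.7 is the VIP from the notes, while the two task IPs and the round-robin order are assumptions made for illustration; the real balancing is done by LVS in the kernel.

```python
from itertools import cycle

service_vip = "10.0.0.7"              # what nslookup whoami returns
task_ips = ["10.0.0.8", "10.0.0.9"]   # hypothetical results of nslookup tasks.whoami

backends = cycle(task_ips)


def connect(address):
    """Return the task that ends up serving a connection to the given address."""
    if address == service_vip:
        return next(backends)  # the VIP spreads connections over the tasks
    return address             # a task IP is reached directly


hits = [connect(service_vip) for _ in range(4)]
print(hits)  # ['10.0.0.8', '10.0.0.9', '10.0.0.8', '10.0.0.9']
```

The client only ever sees the VIP, which is why ping whoami keeps returning the same address after scaling.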

Deploying wordpress with docker stack

Reference for the compose yml: https://docs.docker.com/compose/compose-file/

Official example:

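version: "3.3"

services:

  wordpress:
    image: wordpress
    ports:
      - "8080:80"
    networks:
      - overlay
    deploy:
      mode: replicated
      replicas: 2
      endpoint_mode: vip # vip: when services talk to each other, a virtual IP is exposed and LVS underneath load-balances to the backend tasks. vip is the default.

  mysql:
    image: mysql
    volumes:
      - db-data:/var/lib/mysql/data
    networks:
      - overlay
    deploy:
      mode: replicated
      replicas: 2
      endpoint_mode: dnsrr # dnsrr: the service name resolves directly to the task IP addresses; after scaling out there may be three or four addresses, used in rotation.

volumes:
  db-data:

networks:
  overlay:

There is also labels: for attaching labels

mode: global or replicated. global runs one task on every node that satisfies the constraints and cannot be scaled out. replicated is the default and can be scaled out with docker service scale.

placement: restricts where a service may run. For example:

version: '3.3'

services:
  db:
    image: postgres
    deploy:
      placement:
        constraints:
          - node.role == manager # the db service is always placed on a manager node, and the operating system must be ubuntu 14.04
          - engine.labels.operatingsystem == ubuntu 14.04
        preferences:
          - spread: node.labels.zone

replicas: can be set when the mode is replicated

resources: resource limits and reservations.

restart_policy: restart condition, delay, and number of attempts

update_config: parameters for rolling out an update, for example updating 2 tasks at a time and waiting 10 seconds before updating the next batch.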
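The effect of parallelism and delay in update_config can be sketched as batching. This is a simplified model with hypothetical task names; it ignores the time each task takes to restart and counts only the configured delay between batches.

```python
def rolling_batches(tasks, parallelism):
    """Split the tasks into the batches that are updated together."""
    return [tasks[i:i + parallelism] for i in range(0, len(tasks), parallelism)]


def total_delay(tasks, parallelism, delay_seconds):
    """Seconds spent waiting between batches (none after the last one)."""
    batches = rolling_batches(tasks, parallelism)
    return delay_seconds * max(len(batches) - 1, 0)


web_tasks = ["web.1", "web.2", "web.3"]

# parallelism: 1, delay: 10s -> one task at a time, 20 seconds of waiting.
plan = rolling_batches(web_tasks, 1)
wait = total_delay(web_tasks, 1, 10)

# parallelism: 2 -> two tasks together, then the remaining one.
plan_two = rolling_batches(web_tasks, 2)

print(plan)      # [['web.1'], ['web.2'], ['web.3']]
print(wait)      # 20
print(plan_two)  # [['web.1', 'web.2'], ['web.3']]
```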

cat docker-compose.yml

version: '3'

services:

  web: # this service is named web
    image: wordpress
    ports:
      - 8080:80
    environment:
      WORDPRESS_DB_HOST: mysql
      WORDPRESS_DB_PASSWORD: root
    networks:
      - my-network
    depends_on:
      - mysql
    deploy:
      mode: replicated
      replicas: 3
      restart_policy:
        condition: on-failure
        delay: 5s
        max_attempts: 3
      update_config:
        parallelism: 1
        delay: 10s

  mysql: # this service is named mysql
    image: mysql
    environment:
      MYSQL_ROOT_PASSWORD: root
      MYSQL_DATABASE: wordpress
    volumes:
      - mysql-data:/var/lib/mysql
    networks:
      - my-network
    deploy:
      mode: global # one task per eligible node; it cannot be scaled with replicas
      placement:
        constraints:
          - node.role == manager

volumes:
  mysql-data:

networks:
  my-network:
    driver: overlay # the default is bridge, but in a multi-host cluster it has to be overlay.

Deploy:

docker stack deploy wordpress --compose-file=docker-compose.yml # the stack is named wordpress

Inspect:

docker stack ls

docker stack ps wordpress

docker stack services wordpress # show the replica status of the services.

Access: port 8080 on the IP of any node

Note: docker swarm cannot use build as in the voting system above, so the images have to be built beforehand

The voting system deployed with docker swarm:

cat docker-compose.yml

version: "3"

services:

  redis:
    image: redis:alpine
    ports:
      - "6379"
    networks:
      - frontend
    deploy:
      replicas: 2
      update_config:
        parallelism: 2
        delay: 10s
      restart_policy:
        condition: on-failure

  db:
    image: postgres:9.4
    volumes:
      - db-data:/var/lib/postgresql/data
    networks:
      - backend
    deploy:
      placement:
        constraints: [node.role == manager]

  vote:
    image: dockersamples/examplevotingapp_vote:before
    ports:
      - 5000:80
    networks:
      - frontend
    depends_on:
      - redis
    deploy:
      replicas: 2
      update_config:
        parallelism: 2
      restart_policy:
        condition: on-failure

  result:
    image: dockersamples/examplevotingapp_result:before
    ports:
      - 5001:80
    networks:
      - backend
    depends_on:
      - db
    deploy:
      replicas: 1
      update_config:
        parallelism: 2
        delay: 10s
      restart_policy:
        condition: on-failure

  worker:
    image: dockersamples/examplevotingapp_worker
    networks:
      - frontend
      - backend
    deploy:
      mode: replicated
      replicas: 1
      labels: [APP=VOTING]
      restart_policy:
        condition: on-failure
        delay: 10s
        max_attempts: 3
        window: 120s
      placement:
        constraints: [node.role == manager]

  visualizer:
    image: dockersamples/visualizer:stable
    ports:
      - "8080:8080"
    stop_grace_period: 1m30s
    volumes:
      - "/var/run/docker.sock:/var/run/docker.sock"
    deploy:
      placement:
        constraints: [node.role == manager]

networks:
  frontend: # in swarm mode the default driver is overlay
  backend:

volumes:
  db-data:

Start:

docker stack deploy voteapp --compose-file=docker-compose.yml

Managing docker secrets

The internal distributed store lives on all swarm manager nodes, so more than one manager node is recommended. Secrets are kept in the Raft database on the swarm manager nodes.

A secret can be assigned to a service, and that service can then see the secret

Inside the container a secret looks like a file, but it is actually held in memory.

Create a secret from a file:

vim alexpasswd

admin123

docker secret create my-pw alexpasswd # name this secret my-pw

List:

docker secret ls

Create from standard input:

echo "adminadmin" | docker secret create my-pw2 - # read the secret from standard input

Remove:

docker secret rm my-pw2

Expose a secret to a service

docker service create --name client --secret my-pw busybox sh -c "while true;do sleep 3600;done"

Find which node the container is on:

docker service ps client

Enter that container:

docker exec -it ccee sh

cat /run/secrets/my-pw # the secret's password is visible here

For example, with the mysql image:

docker service create --name db --secret my-pw -e MYSQL_ROOT_PASSWORD_FILE=/run/secrets/my-pw mysql
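The _FILE suffix used by MYSQL_ROOT_PASSWORD_FILE is a convention: the variable holds a path, and the application reads the value from that file. A minimal sketch of how an application of your own could support the same convention; the helper name read_setting is made up, and a temporary file stands in for /run/secrets/my-pw.

```python
import os
import tempfile


def read_setting(name, environ=os.environ):
    """Return a setting, preferring NAME_FILE (a path) over NAME (a plain value)."""
    file_path = environ.get(name + "_FILE")
    if file_path:
        with open(file_path) as handle:
            return handle.read().strip()
    return environ.get(name)


# Stand-in for the secret file that swarm mounts under /run/secrets/.
with tempfile.NamedTemporaryFile("w", suffix="-my-pw", delete=False) as secret:
    secret.write("admin123\n")

password = read_setting(
    "MYSQL_ROOT_PASSWORD", {"MYSQL_ROOT_PASSWORD_FILE": secret.name}
)
os.unlink(secret.name)

print(password)  # admin123
```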

Using a secret in a stack:

Given a password file:

cat password

adminadmin

The docker-compose.yml file:

version: '3'

services:

  web:
    image: wordpress
    ports:
      - 8080:80
    secrets:
      - my-pw
    environment:
      WORDPRESS_DB_HOST: mysql
      WORDPRESS_DB_PASSWORD_FILE: /run/secrets/my-pw
    networks:
      - my-network
    depends_on:
      - mysql
    deploy:
      mode: replicated
      replicas: 3
      restart_policy:
        condition: on-failure
        delay: 5s
        max_attempts: 3
      update_config:
        parallelism: 1
        delay: 10s

  mysql:
    image: mysql
    secrets:
      - my-pw
    environment:
      MYSQL_ROOT_PASSWORD_FILE: /run/secrets/my-pw
      MYSQL_DATABASE: wordpress
    volumes:
      - mysql-data:/var/lib/mysql
    networks:
      - my-network
    deploy:
      mode: global
      placement:
        constraints:
          - node.role == manager

volumes:
  mysql-data:

networks:
  my-network:
    driver: overlay

# secrets:
#   my-pw:
#     file: ./password

Usage:

docker stack deploy wordpress -c=docker-compose.yml

Updating a service:

First create the original service:

docker service create --name web --publish 8080:5000 --network demo alexhe/python-flask-demo:1.0

Scale out to at least 2 replicas:

docker service scale web=2

Check the service with curl 127.0.0.1:8080

while true;do curl 127.0.0.1:8080 && sleep 1;done

Update the service:

The secret, published port, image and so on can all be updated

docker service update --image alexhe/python-flask-demo:2.0 web

Update the port:

docker service update --publish-rm 8080:5000 --publish-add 8088:5000 web

k8s version