Prometheus Monitoring Operations

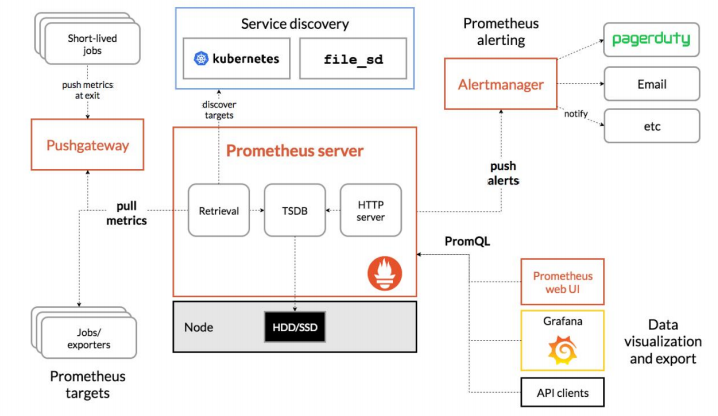

1. Architecture Overview

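➢ Prometheus Server: the core component of the Prometheus ecosystem. It scrapes and stores time-series data, serves queries, and manages the configuration of alerting rules.

➢ Alertmanager: the alerting component of the ecosystem. Prometheus Server sends alerts to Alertmanager, which uses its routing configuration to deliver them to the designated people or groups. Alertmanager can notify through email, Webhook, WeChat, DingTalk, SMS, and other channels.

➢ Grafana: displays the data so that it is easy to query and observe.

➢ Push Gateway: Prometheus collects data by pulling, but some monitoring data is short-lived and may be lost if it is not scraped in time. Push Gateway addresses this: clients push their data to the Push Gateway, and Prometheus then pulls it from there.

➢ Exporter: collects monitoring data. Host metrics can be collected with node_exporter and MySQL metrics with mysql_exporter. The Exporter then exposes an endpoint such as /metrics, from which Prometheus scrapes the data.

➢ PromQL: not really a Prometheus component but its query language. Just as a database is queried with SQL and Loki with LogQL, Prometheus data is queried with PromQL.

➢ Service Discovery: automatically discovers monitoring targets. Commonly used mechanisms are Kubernetes, Consul, Eureka, and file-based discovery.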
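To make the Exporter and pull model above more concrete, here is a minimal sketch of the plain-text format a scrape returns and of reading one sample from it. The sample text and the helper name metric_value are illustrative assumptions; on a real host the text would come from an exporter's /metrics endpoint.

```shell
# Hypothetical sample of the text an exporter serves on /metrics.
sample_metrics='# HELP node_load1 1m load average.
# TYPE node_load1 gauge
node_load1 0.42
# TYPE up gauge
up 1'

# metric_value NAME: read exposition text on stdin and print the value of the
# first unlabelled sample whose name is exactly NAME.
# (The function name is an assumption made for this sketch.)
metric_value() {
  awk -v name="$1" '$1 == name { print $2; exit }'
}

printf '%s\n' "$sample_metrics" | metric_value node_load1
```

Against a live exporter the same helper could be fed with curl, for example curl -s http://HOST:9100/metrics | metric_value node_load1, where HOST is a placeholder.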

2. Installing Prometheus


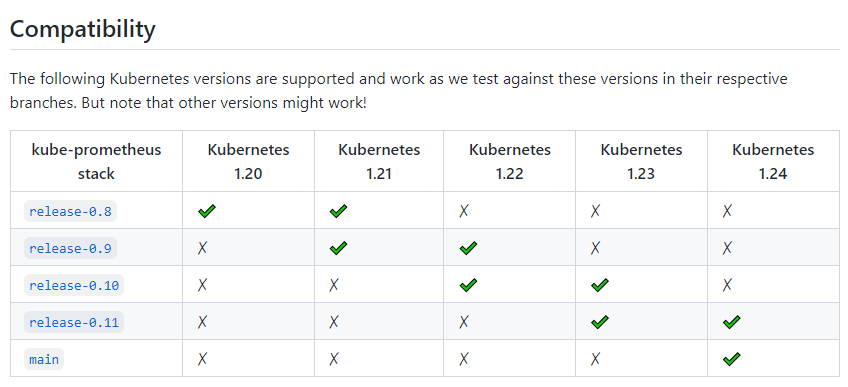

- Kube-Prometheus project: https://github.com/prometheus-operator/kube-prometheus/

First use the project page to find the Kube Prometheus Stack release that matches your Kubernetes version.

- Clone the repository. This guide installs the release-0.10 branch. Alternatively, download it with a browser and upload it to the server.

    git clone -b release-0.10 --single-branch https://github.com/prometheus-operator/kube-prometheus.git

- Change the image addresses. The images could not be pulled from their original k8s.gcr.io addresses.

    [root@k8s-master1 manifests]# vim prometheusAdapter-deployment.yaml
    image: k8s.gcr.io/prometheus-adapter/prometheus-adapter:v0.9.1
    # change to:
    image: willdockerhub/prometheus-adapter:v0.9.1

    [root@k8s-master1 manifests]# vim kubeStateMetrics-deployment.yaml
    image: k8s.gcr.io/kube-state-metrics/kube-state-metrics:v2.3.0
    # change to:
    image: bitnami/kube-state-metrics:2.3.0
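The same image substitution can be scripted instead of edited by hand. The following is a minimal sketch, assuming the manifests contain the image strings exactly as shown above; the function name swap_image is hypothetical, and the demonstration runs on a throwaway file rather than on the real manifest.

```shell
# swap_image FILE OLD NEW: replace the image reference OLD with NEW in FILE.
# It writes through a temporary file instead of using sed -i, whose syntax
# differs between GNU and BSD sed. (Function name is an assumption.)
swap_image() {
  file=$1 old=$2 new=$3
  sed "s|image: ${old}|image: ${new}|" "$file" > "${file}.tmp" && mv "${file}.tmp" "$file"
}

# Demonstration on a temporary file that mimics one line of the manifest.
demo=$(mktemp)
printf '        image: k8s.gcr.io/prometheus-adapter/prometheus-adapter:v0.9.1\n' > "$demo"
swap_image "$demo" \
  k8s.gcr.io/prometheus-adapter/prometheus-adapter:v0.9.1 \
  willdockerhub/prometheus-adapter:v0.9.1
cat "$demo"
```

In the manifests directory the call would take prometheusAdapter-deployment.yaml or kubeStateMetrics-deployment.yaml as the first argument.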

- Install Prometheus

    cd kube-prometheus/manifests
    # Install the Prometheus Operator:
    kubectl create -f setup/
    # Install the Prometheus Stack:
    kubectl create -f .

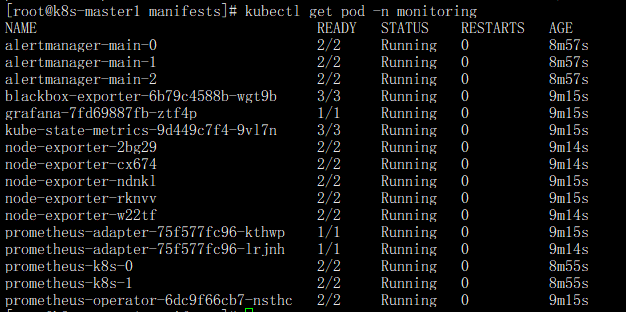

- Check the status of the Prometheus Pods:

    kubectl get pod -n monitoring

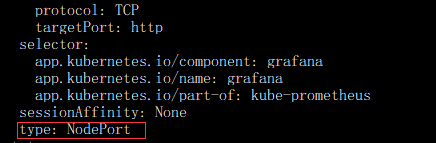

- Change the Grafana Service to type NodePort

    kubectl edit svc -n monitoring grafana

In the editor, find type: ClusterIP and change it to type: NodePort (marked by the red box in the screenshot).

- Look up the NodePort of the Grafana Service:

    [root@k8s-master1 manifests]# kubectl get svc -n monitoring grafana
    NAME      TYPE       CLUSTER-IP    EXTERNAL-IP   PORT(S)          AGE
    grafana   NodePort   10.8.14.157   <none>        3000:32734/TCP   6m32s

- Access Grafana at the IP address of any node that runs kube-proxy, on port 32734.

Note: the default Grafana login is admin/admin.
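Because the NodePort is assigned by the cluster, it has to be read from the Service each time. As a small sketch, the helper below extracts it from the PORT(S) column printed by kubectl get svc; the function name node_port is an assumption.

```shell
# node_port PORTS SERVICE_PORT: given the PORT(S) column of "kubectl get svc"
# (for example "9090:30671/TCP,8080:32548/TCP"), print the NodePort mapped to
# SERVICE_PORT. (Function name is an assumption.)
node_port() {
  printf '%s\n' "$1" | tr ',' '\n' | awk -F'[:/]' -v p="$2" '$1 == p { print $2 }'
}

node_port '3000:32734/TCP' 3000
```

On a live cluster the value can also be read directly with kubectl get svc -n monitoring grafana -o jsonpath='{.spec.ports[0].nodePort}'.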

- Enable the simple Prometheus web UI by changing the Prometheus Service to NodePort in the same way

    kubectl edit svc -n monitoring prometheus-k8s

Note: change type: ClusterIP to type: NodePort, exactly as was done for Grafana.

- Check the Service

    [root@k8s-master1 manifests]# kubectl get svc -n monitoring prometheus-k8s
    NAME             TYPE       CLUSTER-IP    EXTERNAL-IP   PORT(S)                         AGE
    prometheus-k8s   NodePort   10.6.102.49   <none>        9090:30671/TCP,8080:32548/TCP   9m52s

- Open NODE_IP:30671 in a browser

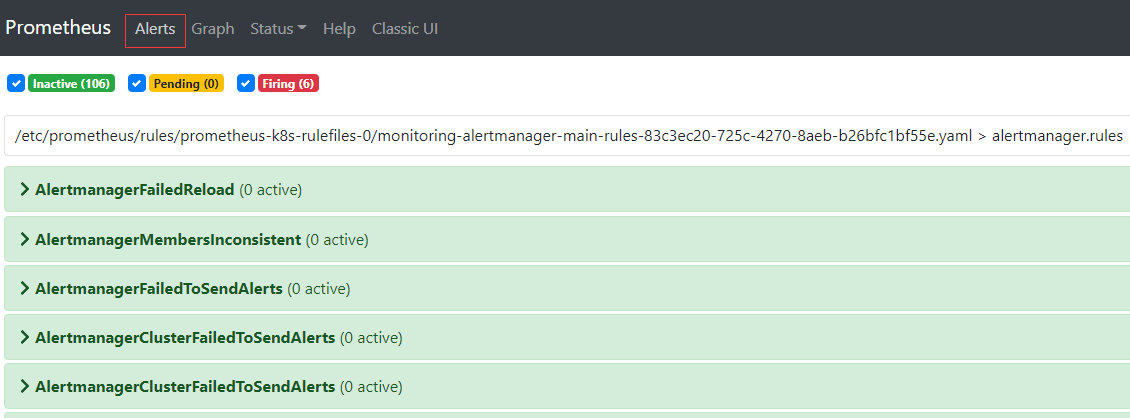

Note: a fresh installation shows a few alerts by default. Ignore them for now.

3. Monitoring Etcd

3-1. Testing access to the Etcd metrics endpoint

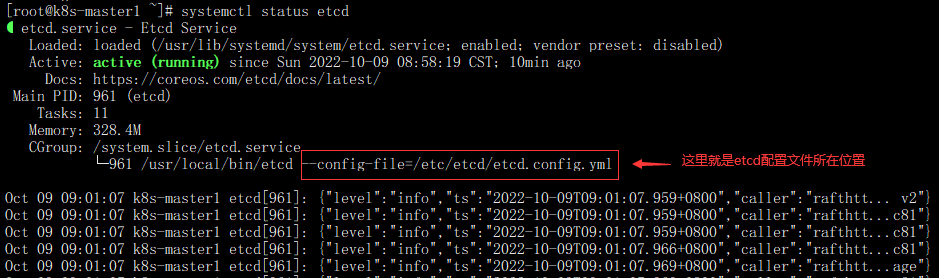

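- First locate the etcd certificates. Their paths are recorded in the Etcd configuration file. The location of that file differs between clusters: with a kubeadm installation it is typically /etc/kubernetes/manifests/etcd.yaml, and systemctl status etcd shows which configuration file a systemd-managed etcd uses, as in the screenshot.

- Once the configuration file is found, look up the certificate paths

    [root@k8s-master1 ~]# cat /etc/etcd/etcd.config.yml | grep -E "key-file|cert-file"
    cert-file: '/etc/kubernetes/pki/etcd/etcd.pem'
    key-file: '/etc/kubernetes/pki/etcd/etcd-key.pem'

- Test access to the Etcd metrics endpoint (note: etcd listens on port 2379)

    [root@k8s-master1 ~]# curl -s --cert /etc/kubernetes/pki/etcd/etcd.pem --key /etc/kubernetes/pki/etcd/etcd-key.pem \
        https://YOUR_ETCD_IP:2379/metrics -k | tail -n 10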

    # Output:
    # TYPE process_virtual_memory_max_bytes gauge
    process_virtual_memory_max_bytes 1.8446744073709552e+19
    # HELP promhttp_metric_handler_requests_in_flight Current number of scrapes being served.
    # TYPE promhttp_metric_handler_requests_in_flight gauge
    promhttp_metric_handler_requests_in_flight 1
    # HELP promhttp_metric_handler_requests_total Total number of scrapes by HTTP status code.
    # TYPE promhttp_metric_handler_requests_total counter
    promhttp_metric_handler_requests_total{code="200"} 0
    promhttp_metric_handler_requests_total{code="500"} 0
    promhttp_metric_handler_requests_total{code="503"} 0

3-2. Creating the Etcd Service YAML file

    vim etcd-service.yaml

    apiVersion: v1
    kind: Endpoints
    metadata:
      labels:
        app: etcd-prom
      name: etcd-prom
      namespace: kube-system
    subsets:
    - addresses:
      - ip: YOUR_ETCD_IP1   # IP addresses of the etcd servers; add one entry per etcd member
      - ip: YOUR_ETCD_IP2
      - ip: YOUR_ETCD_IP3
      ports:
      - port: 2379          # etcd listening port
        name: https-metrics
        protocol: TCP
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app: etcd-prom
      name: etcd-prom
      namespace: kube-system
    spec:
      type: ClusterIP
      ports:
      - port: 2379
        protocol: TCP
        targetPort: 2379
        name: https-metrics

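Notes:

1. Replace each YOUR_ETCD_IP placeholder with the IP address of one of your Etcd hosts. Also note that the port is named https-metrics; the ServiceMonitor created later must reference the same name.

2. The Endpoints and the Service must be in the same namespace (and carry the same name, which is how Kubernetes associates them).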
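When the cluster has many etcd members, the address entries of the Endpoints object can be generated rather than typed. A minimal sketch follows; the function name endpoint_addresses is hypothetical and the IP addresses are documentation placeholders, not values from this cluster.

```shell
# endpoint_addresses IP...: print one "- ip:" entry per argument, indented so
# the lines can be pasted under "subsets: - addresses:" in the Endpoints
# manifest. (Function name is an assumption.)
endpoint_addresses() {
  for ip in "$@"; do
    printf '  - ip: %s\n' "$ip"
  done
}

# Placeholder addresses; substitute the real etcd member IPs.
endpoint_addresses 192.0.2.11 192.0.2.12 192.0.2.13
```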

- Create the Etcd Service and look up its ClusterIP

    # Create
    kubectl create -f etcd-service.yaml
    # Look up the ClusterIP of the Service
    [root@k8s-master1 src]# kubectl get svc -n kube-system etcd-prom
    NAME        TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)    AGE
    etcd-prom   ClusterIP   10.4.62.48   <none>        2379/TCP   16h

- Test access through the ClusterIP

    [root@k8s-master1 src]# curl -s --cert /etc/kubernetes/pki/etcd/etcd.pem --key /etc/kubernetes/pki/etcd/etcd-key.pem https://10.4.62.48:2379/metrics -k | tail -n 10
    # TYPE process_virtual_memory_max_bytes gauge
    process_virtual_memory_max_bytes 1.8446744073709552e+19
    # HELP promhttp_metric_handler_requests_in_flight Current number of scrapes being served.
    # TYPE promhttp_metric_handler_requests_in_flight gauge
    promhttp_metric_handler_requests_in_flight 1
    # HELP promhttp_metric_handler_requests_total Total number of scrapes by HTTP status code.
    # TYPE promhttp_metric_handler_requests_total counter
    promhttp_metric_handler_requests_total{code="200"} 1
    promhttp_metric_handler_requests_total{code="500"} 0
    promhttp_metric_handler_requests_total{code="503"} 0

Note: the metrics returned through the ClusterIP of the Etcd Service match the earlier direct test, which shows that the Service works.

3-3. Creating a Secret for the Etcd certificates (adjust the certificate paths to your environment)

    [root@k8s-master1 src]# kubectl create secret generic etcd-ssl \
        --from-file=/etc/kubernetes/pki/etcd/etcd-ca.pem \
        --from-file=/etc/kubernetes/pki/etcd/etcd.pem \
        --from-file=/etc/kubernetes/pki/etcd/etcd-key.pem -n monitoring

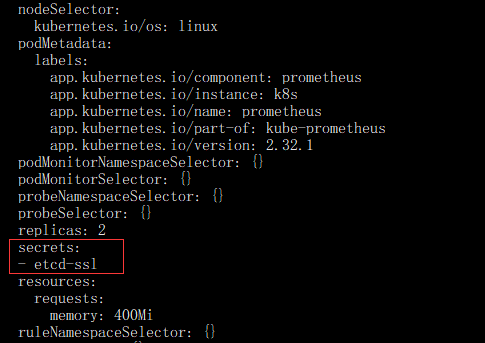

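- Mount the certificates into the Prometheus containers (Prometheus is deployed by the Operator, so only the Prometheus resource needs to be changed)

    kubectl edit prometheus k8s -n monitoring
    # add the following under spec:
    secrets:
    - etcd-ssl

As shown in the screenshot.

Note: after saving and exiting, the Prometheus Pods restart automatically. Once the restart has finished, check in any one of the Prometheus Pods that the certificates are mounted.

- Check the Pod status and whether the certificates are mounted

    # Check whether the restart has finished
    kubectl get pod -n monitoring
    # Check whether the certificates are mounted
    [root@k8s-master1 ~]# kubectl exec -n monitoring prometheus-k8s-0 -c prometheus -- ls /etc/prometheus/secrets/etcd-ssl/
    etcd-ca.pem  etcd-key.pem  etcd.pem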

- Create the Etcd ServiceMonitor

    vim servicemonitor.yaml

    apiVersion: monitoring.coreos.com/v1
    kind: ServiceMonitor
    metadata:
      name: etcd
      namespace: monitoring
      labels:
        app: etcd
    spec:
      jobLabel: k8s-app
      endpoints:
      - interval: 30s
        port: https-metrics   # must match Service.spec.ports.name
        scheme: https
        tlsConfig:
          caFile: /etc/prometheus/secrets/etcd-ssl/etcd-ca.pem   # certificate paths inside the Prometheus container
          certFile: /etc/prometheus/secrets/etcd-ssl/etcd.pem
          keyFile: /etc/prometheus/secrets/etcd-ssl/etcd-key.pem
          insecureSkipVerify: true   # skip certificate verification
      selector:
        matchLabels:
          app: etcd-prom   # must match the labels of the Service
      namespaceSelector:
        matchNames:
        - kube-system

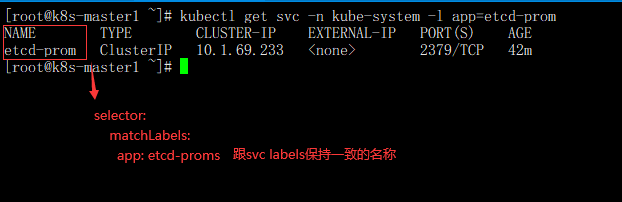

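- Verifying the app: etcd-prom label on the Service

    kubectl get svc -n kube-system -l app=etcd-prom

Note: compared with a ServiceMonitor for a plain HTTP endpoint, this one adds the tlsConfig block. Metrics served over http do not need it.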

- Create the ServiceMonitor

    [root@k8s-master1 src]# kubectl create -f servicemonitor.yaml
    servicemonitor.monitoring.coreos.com/etcd created

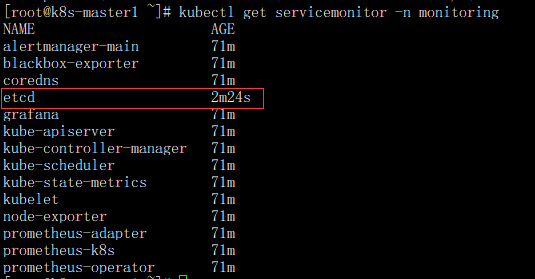

- Check that the Etcd monitoring configuration has been loaded

    kubectl get servicemonitor -n monitoring

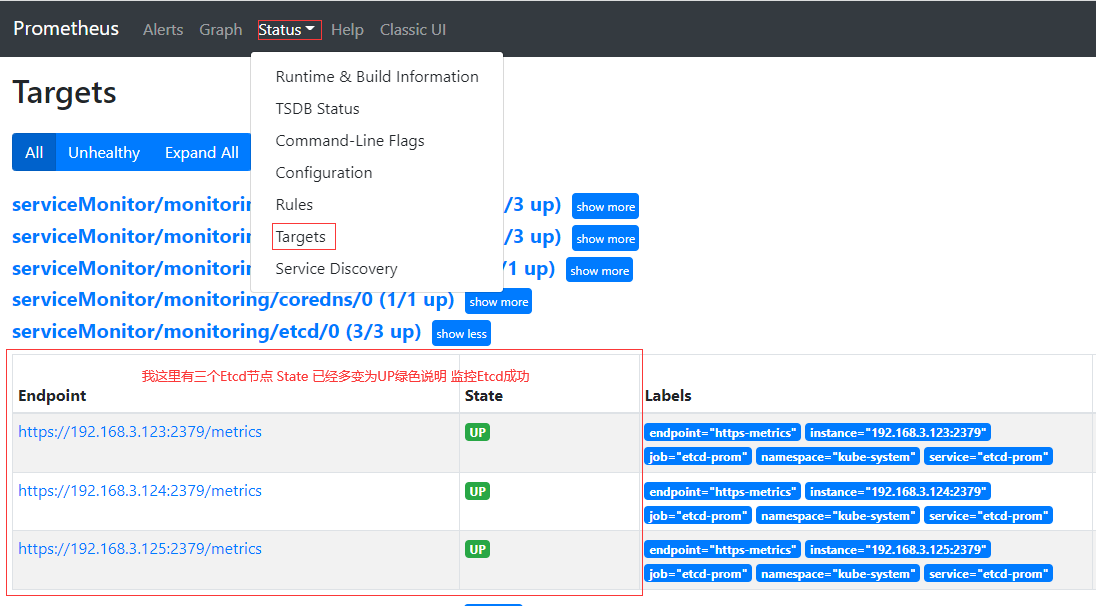

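- Check the targets in the simple Prometheus web UI

Note: every Etcd member should appear as a target. If any are missing, go back through the steps above to find the problem.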

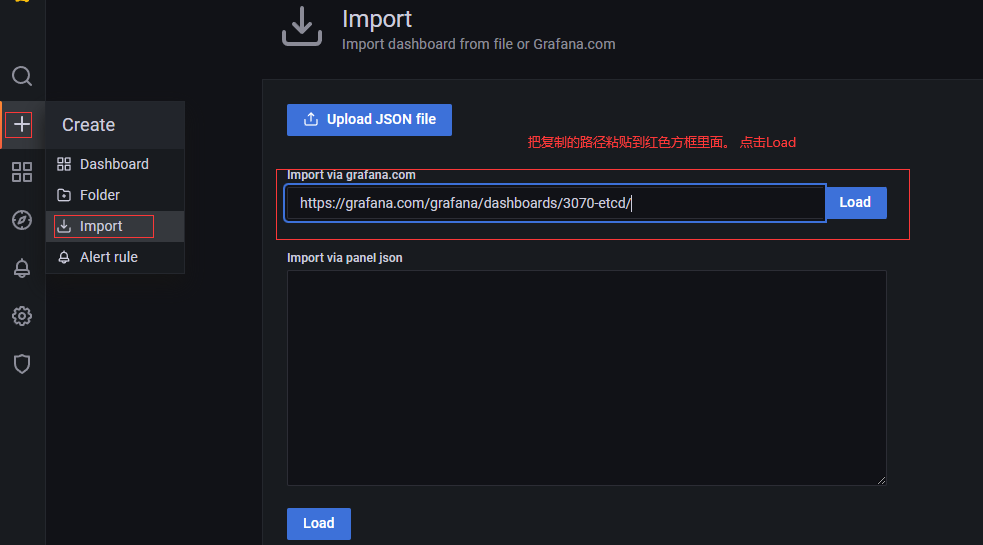

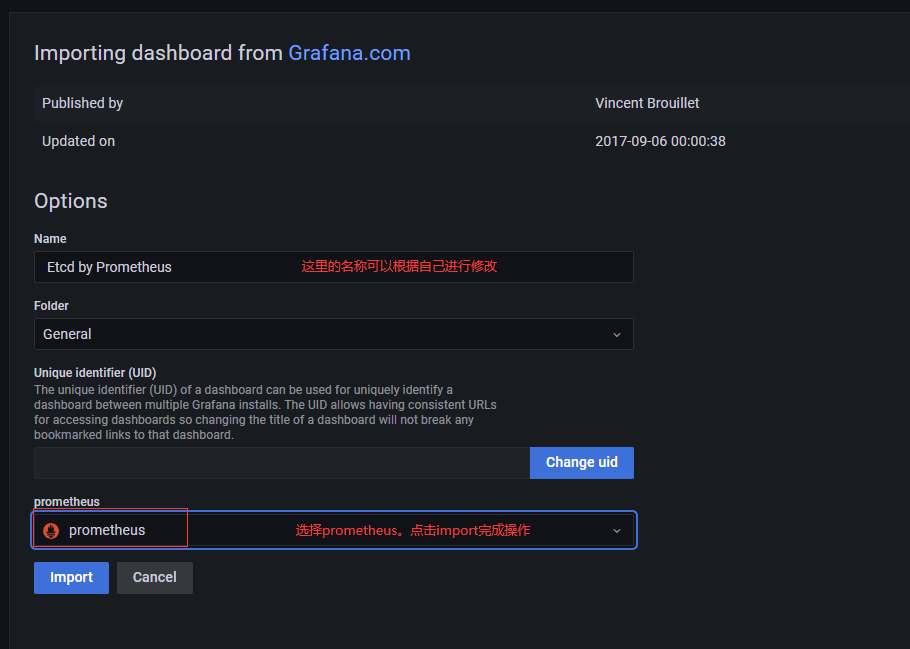

- Configure Grafana to display an etcd dashboard

Open https://grafana.com/grafana/dashboards and search for Etcd dashboards.

The Etcd dashboard imported here: https://grafana.com/grafana/dashboards/3070-etcd/

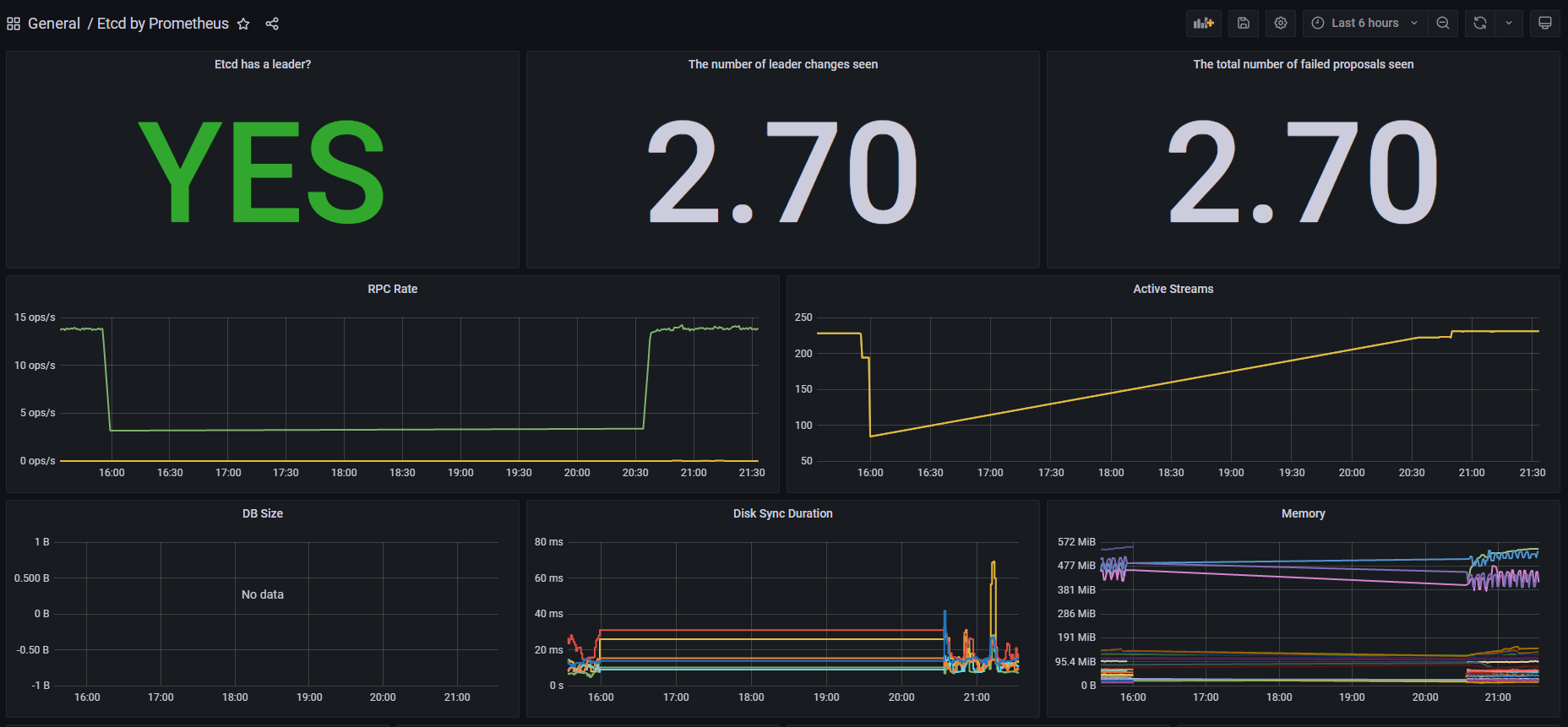

The dashboard after import is shown above.

Note: this completes the Etcd monitoring configuration.

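4. Monitoring Non-Cloud-Native Applications with Exporters

4-1. MySQL serves as the test case to show how an Exporter monitors a non-cloud-native application. Installing MySQL itself is not covered here.

- Log in to MySQL, create the user that the exporter will connect as, and grant it the privileges it needs

    # Create the MySQL user and grant privileges to that same user
    mysql> CREATE USER 'USERNAME'@'%' IDENTIFIED BY 'PASSWORD' WITH MAX_USER_CONNECTIONS 3;
    Query OK, 0 rows affected (0.01 sec)
    mysql> GRANT PROCESS, REPLICATION CLIENT, SELECT ON *.* TO 'USERNAME'@'%';
    Query OK, 0 rows affected (0.00 sec)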

- Configure the MySQL Exporter to collect MySQL metrics

    vim mysql-exporter.yaml

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: mysql-exporter
      namespace: monitoring
    spec:
      replicas: 1
      selector:
        matchLabels:
          k8s-app: mysql-exporter
      template:
        metadata:
          labels:
            k8s-app: mysql-exporter
        spec:
          containers:
          - name: mysql-exporter
            image: registry.cn-beijing.aliyuncs.com/dotbalo/mysqld-exporter
            env:
            - name: DATA_SOURCE_NAME
              #value: "USERNAME:PASSWORD@(mysql.default:3306)/"   # for a MySQL running inside the cluster
              value: "USERNAME:PASSWORD@(192.168.3.81:3306)/"     # for a MySQL running outside the cluster
            imagePullPolicy: IfNotPresent
            ports:
            - containerPort: 9104
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: mysql-exporter
      namespace: monitoring
      labels:
        k8s-app: mysql-exporter
    spec:
      type: ClusterIP
      selector:
        k8s-app: mysql-exporter
      ports:
      - name: api
        port: 9104
        protocol: TCP
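The DATA_SOURCE_NAME value above follows the pattern user:password@(host:port)/. As a small sketch of that pattern, the helper below assembles the string from its parts; the function name mysql_dsn and the sample credentials are assumptions for illustration only.

```shell
# mysql_dsn USER PASSWORD HOST [PORT]: print a DATA_SOURCE_NAME value in the
# user:password@(host:port)/ form used in the manifest. PORT defaults to 3306.
# (Function name is an assumption.)
mysql_dsn() {
  printf '%s:%s@(%s:%s)/\n' "$1" "$2" "$3" "${4:-3306}"
}

# Sample values only; use the MySQL user created for the exporter.
mysql_dsn exporter example-password 192.168.3.81
```

For a MySQL Service inside the cluster the host argument would be its DNS name, such as mysql.default.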

- Create the Exporter:

    # kubectl create -f mysql-exporter.yaml
    deployment.apps/mysql-exporter created
    service/mysql-exporter created

- Check the mysql-exporter Service

    [root@k8s-master1 src]# kubectl get svc -n monitoring mysql-exporter
    NAME             TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)    AGE
    mysql-exporter   ClusterIP   10.1.173.190   <none>        9104/TCP   10h

- Use the Service address to check that metrics can be fetched:

    [root@k8s-master1 src]# curl 10.1.173.190:9104/metrics | tail -1
      % Total    % Received % Xferd  Average Speed   Time    Time     Time  Current
                                     Dload  Upload   Total   Spent    Left  Speed
    100  119k    0  119k    0     0  1161k      0 --:--:-- --:--:-- --:--:-- 1179k
    promhttp_metric_handler_requests_total{code="503"} 0

- Configure the ServiceMonitor

    vim mysql-sm.yaml

    apiVersion: monitoring.coreos.com/v1
    kind: ServiceMonitor
    metadata:
      name: mysql-exporter
      namespace: monitoring
      labels:
        k8s-app: mysql-exporter
        namespace: monitoring
    spec:
      jobLabel: k8s-app
      endpoints:
      - port: api
        interval: 30s
        scheme: http
      selector:
        matchLabels:
          k8s-app: mysql-exporter
      namespaceSelector:
        matchNames:
        - monitoring

Note: matchLabels and the endpoints settings must agree with the labels and port name of the mysql-exporter Service.

- Create the ServiceMonitor

    # kubectl create -f mysql-sm.yaml
    servicemonitor.monitoring.coreos.com/mysql-exporter created

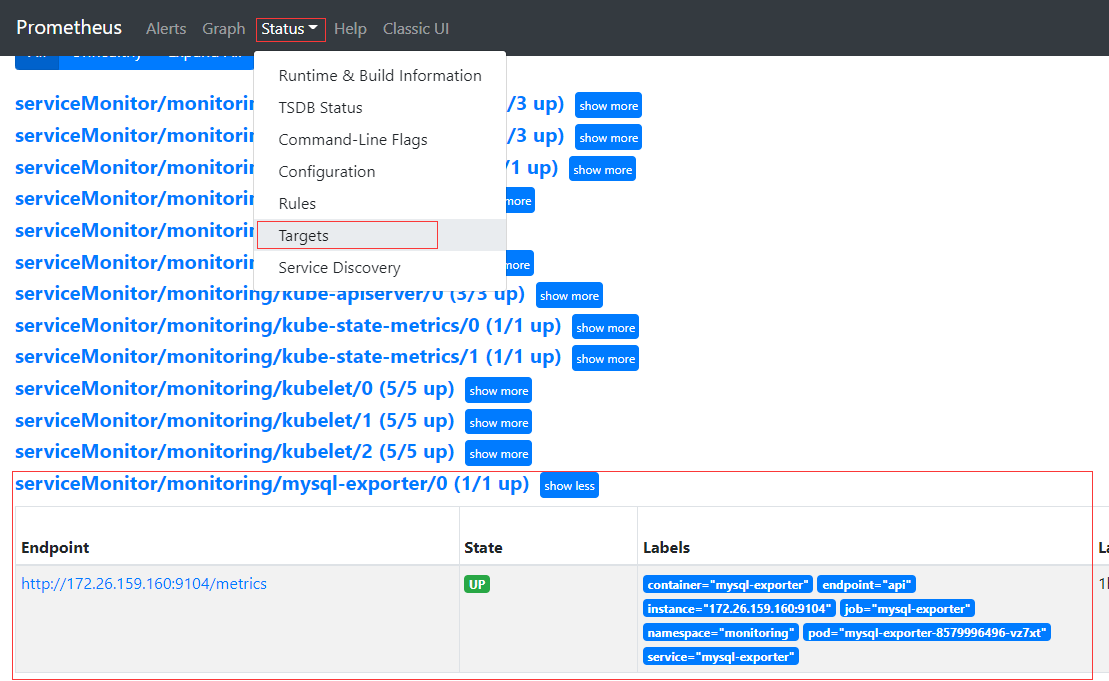

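- The new target now appears in the Prometheus Web UI

- Import the Grafana dashboard

Note: the dashboard address is https://grafana.com/grafana/dashboards/6239-mysql/. The import steps are the same as before and are not repeated here. After the import, the monitoring data is visible in Grafana.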

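5. Troubleshooting a ServiceMonitor That Finds No Targets

5-1. Troubleshooting steps

1) Confirm that the ServiceMonitor was created

2) Confirm that the ServiceMonitor labels are configured correctly

3) Confirm that Prometheus has generated the corresponding configuration

4) Confirm that a Service matching the ServiceMonitor exists

5) Confirm that the application's metrics endpoint is reachable through the Service

6) Confirm that the Service port and scheme agree with the ServiceMonitor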
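The read-only parts of this checklist can be collected into one helper. The sketch below covers steps 1, 2 and 4 using kubectl commands that appear elsewhere in this guide; the function name check_servicemonitor and the KUBECTL override variable are assumptions, and steps 3, 5 and 6 still need the Prometheus web UI and a manual curl.

```shell
# Command used to talk to the cluster. Overriding it (for example with "echo")
# previews the commands without running them.
KUBECTL=${KUBECTL:-kubectl}

# check_servicemonitor SM_NAMESPACE SM_NAME SVC_NAMESPACE SVC_LABEL
# (Function name and argument order are assumptions made for this sketch.)
check_servicemonitor() {
  sm_ns=$1 sm_name=$2 svc_ns=$3 svc_label=$4
  # Step 1: does the ServiceMonitor exist? kubectl fails if it does not.
  $KUBECTL get servicemonitor -n "$sm_ns" "$sm_name" || return 1
  # Step 2: print it so the selector, port name and scheme can be inspected.
  $KUBECTL get servicemonitor -n "$sm_ns" "$sm_name" -o yaml || return 1
  # Step 4: list Services carrying the label the selector expects.
  # "No resources found" here means the ServiceMonitor has nothing to match.
  $KUBECTL get svc -n "$svc_ns" -l "$svc_label" || return 1
}
```

For the Etcd setup in section 3 the call would be check_servicemonitor monitoring etcd kube-system app=etcd-prom.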

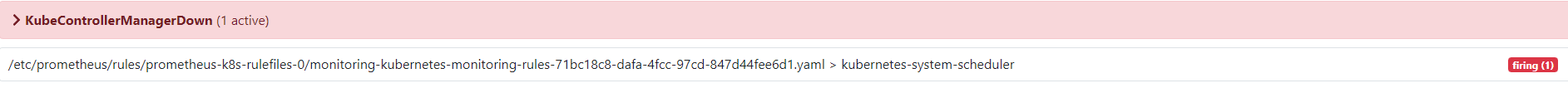

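5-2. Resolving the KubeControllerManagerDown (1 active) alert that appears after installation because no monitoring data is collected, as in the screenshot below. The steps are as follows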

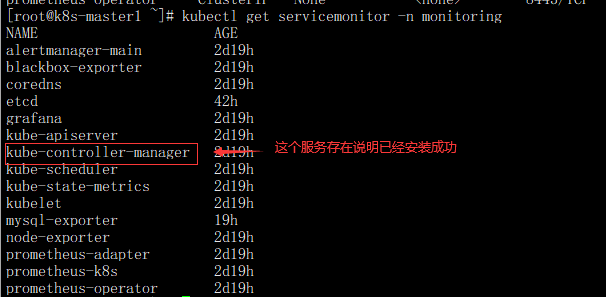

- 确认Service Monitor是否创建成功,查看命令

kubectl get servicemonitor -n monitoring

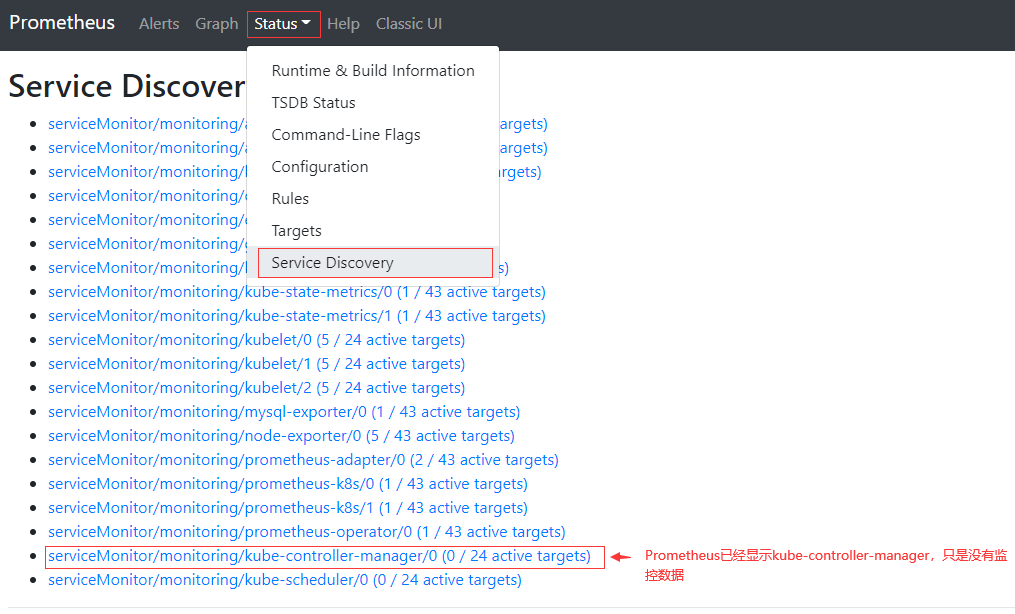

通过Prometheus web界面查看kube-controller-manager是否生成了相关配置

- 检查Service Monitor匹配的Service

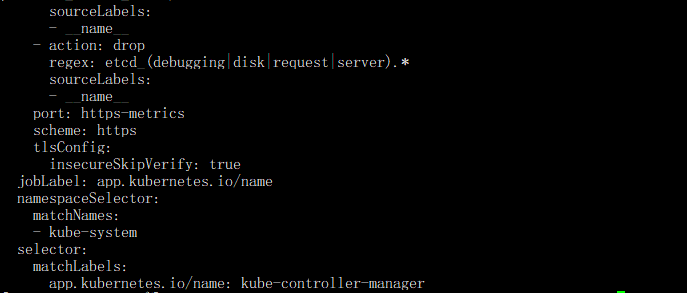

kubectl get servicemonitor -n monitoring kube-controller-manager -oyaml

注:该Service Monitor匹 配 的 是kube-system命名空间下 , 具有app.kubernetes.io/name=kube-controller-manager 标签

接下来通过该标签查看是否存在

[root@k8s-master1 ~]# kubectl get svc -l app.kubernetes.io/name=kube-controller-manager -n kube-system No resources found in kube-system namespace.

注:可以看到并没有此标签的 Service,所以导致了找不到需要监控的目标,此时可以手动创建该 Service 和

Endpoint 指向自己的 Controller Manage(kube-controller-manager )

- 创建该 Service 和 Endpoint 指向Controller Manage(kube-controller-manager )

    [root@k8s-master1 src]# vim controller-manager-svc.yaml

    apiVersion: v1
    kind: Endpoints
    metadata:
      labels:
        app.kubernetes.io/name: kube-controller-manager
      name: kube-controller-manager-prom
      namespace: kube-system
    subsets:
    - addresses:
      - ip: 192.168.3.123   # IP addresses of the controller-manager hosts; add one entry per instance
      - ip: 192.168.3.124
      - ip: 192.168.3.125
      ports:
      - port: 10257         # kube-controller-manager listening port
        name: https-metrics
        protocol: TCP
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app.kubernetes.io/name: kube-controller-manager   # must match the label selected by the ServiceMonitor
      name: kube-controller-manager-prom
      namespace: kube-system
    spec:
      type: ClusterIP
      ports:
      - port: 10257
        protocol: TCP
        targetPort: 10257
        name: https-metrics

    # Create
    [root@k8s-master1 src]# kubectl create -f controller-manager-svc.yaml
    endpoints/kube-controller-manager-prom created
    service/kube-controller-manager-prom created

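- On every k8s-master node, add the following flags to the unit file /usr/lib/systemd/system/kube-controller-manager.service

    --authentication-kubeconfig=/etc/kubernetes/controller-manager.kubeconfig \
    --authorization-kubeconfig=/etc/kubernetes/controller-manager.kubeconfig \

Note: if these flags are added at the end of the command, remove the trailing backslash from the last line. After editing the unit file, run systemctl daemon-reload and restart the service so that the change takes effect.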

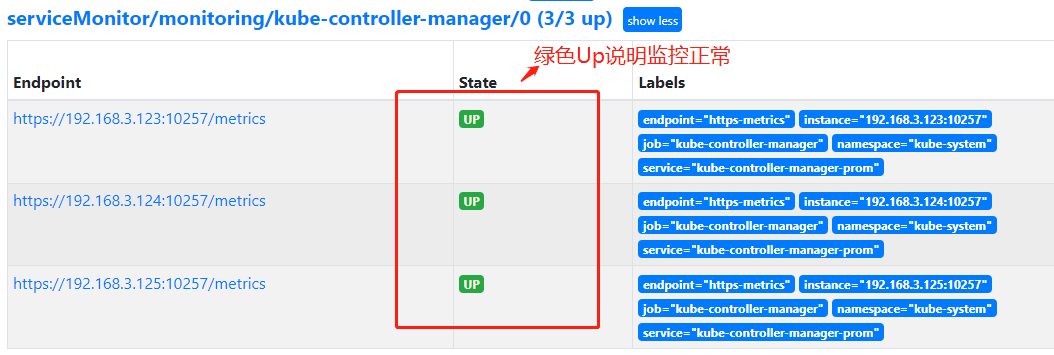

- Open the Prometheus Web UI and check the targets. The screenshot below shows kube-controller-manager being monitored normally

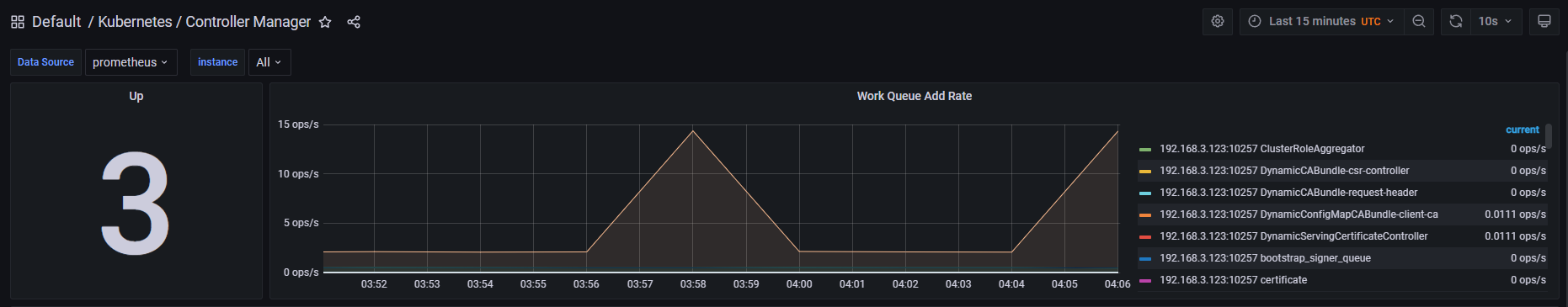

- Open Grafana and find the Controller Manager dashboard. If it shows all 3 master nodes, Grafana monitoring is working as well

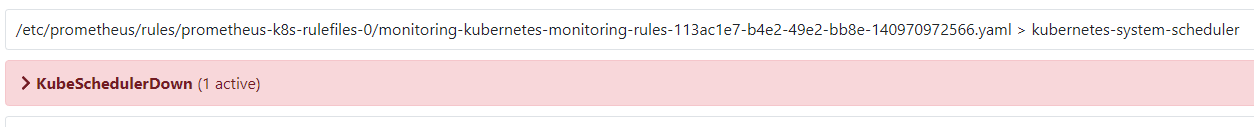

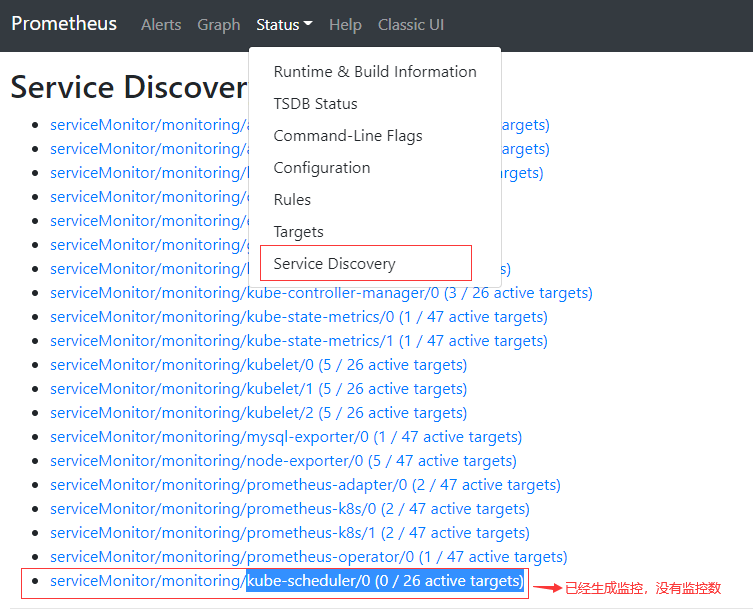

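5-3. Resolving the KubeSchedulerDown (1 active) alert that appears after installation because no monitoring data is collected, as in the screenshot below. The steps are as follows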

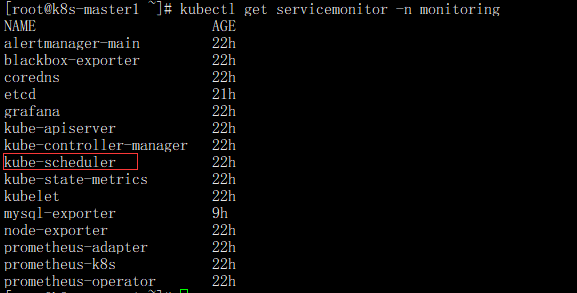

- Confirm that the ServiceMonitor was created:

    kubectl get servicemonitor -n monitoring

- In the Prometheus web UI, check whether configuration for kube-scheduler has been generated

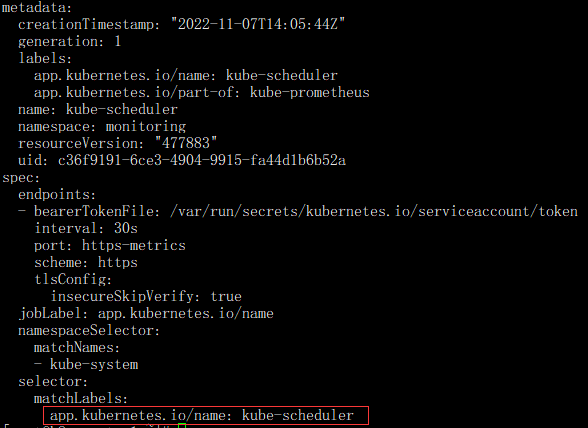

- Check which Service the ServiceMonitor matches

    kubectl get servicemonitor -n monitoring kube-scheduler -oyaml

Note: this ServiceMonitor matches Services in the kube-system namespace that carry the label app.kubernetes.io/name: kube-scheduler.

Next, check whether a Service with that label exists

    [root@k8s-master1 ~]# kubectl get svc -l app.kubernetes.io/name=kube-scheduler -n kube-system
    No resources found in kube-system namespace.

Note: no Service carries this label, which is why no monitoring target is found. The fix is to create the Service and Endpoints manually and point them at your own kube-scheduler.

- Create the Service and Endpoints pointing at kube-scheduler

    [root@k8s-master1 src]# vim scheduler.yaml

    apiVersion: v1
    kind: Endpoints
    metadata:
      labels:
        app.kubernetes.io/name: kube-scheduler
      name: kube-scheduler-prom
      namespace: kube-system
    subsets:
    - addresses:
      - ip: 192.168.3.123   # IP addresses of the scheduler hosts; add one entry per instance
      - ip: 192.168.3.124
      - ip: 192.168.3.125
      ports:
      - port: 10259         # kube-scheduler listening port
        name: https-metrics
        protocol: TCP
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app.kubernetes.io/name: kube-scheduler   # must match the label selected by the ServiceMonitor
      name: kube-scheduler-prom
      namespace: kube-system
    spec:
      type: ClusterIP
      ports:
      - port: 10259
        protocol: TCP
        targetPort: 10259
        name: https-metrics

- Create

    [root@k8s-master1 src]# kubectl create -f scheduler.yaml
    endpoints/kube-scheduler-prom created
    service/kube-scheduler-prom created

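- On every k8s-master node, add the following flags to the unit file /usr/lib/systemd/system/kube-scheduler.service

    --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.pem \
    --authentication-kubeconfig=/etc/kubernetes/scheduler.kubeconfig \
    --authorization-kubeconfig=/etc/kubernetes/scheduler.kubeconfig \

Note: if these flags are added at the end of the command, remove the trailing backslash from the last line. After editing the unit file, run systemctl daemon-reload and restart the service so that the change takes effect.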

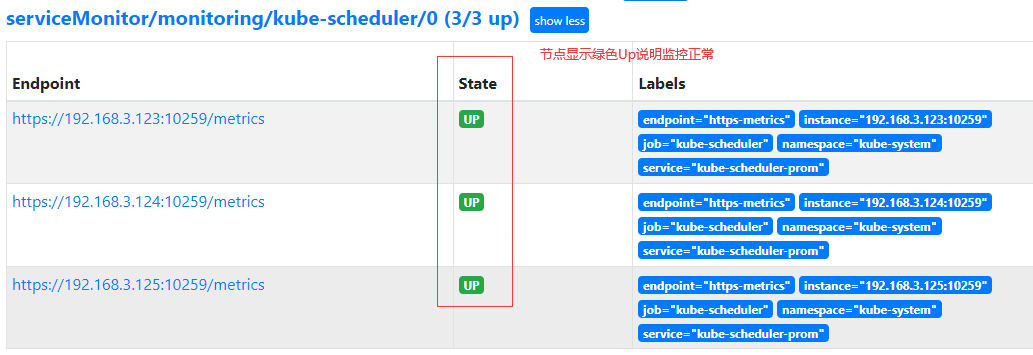

- Open the Prometheus Web UI and check the targets. The screenshot below shows kube-scheduler being monitored normally

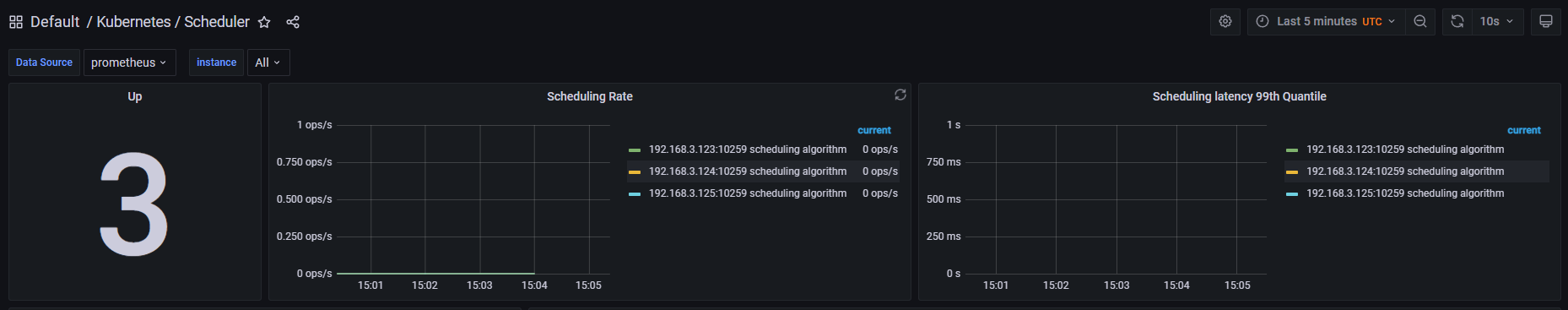

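- Open Grafana and find the kube-scheduler dashboard. If it shows all 3 master nodes, Grafana monitoring is working as well

Note: other errors of this kind can be investigated with the same steps.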

6. Monitoring Remote Linux Hosts with a Prometheus Static Configuration

Note: the remote host being monitored has the IP address 192.168.3.81.

6-1. Steps on the remote Linux host. The monitoring agent can be downloaded from https://prometheus.io/download/

    # Download the node_exporter agent
    wget https://github.com/prometheus/node_exporter/releases/download/v1.4.0/node_exporter-1.4.0.linux-amd64.tar.gz
    # Extract it
    tar zxf node_exporter-1.4.0.linux-amd64.tar.gz
    # Move the extracted directory to /usr/local/
    mv node_exporter-1.4.0.linux-amd64 /usr/local/node_exporter-1.4.0.linux-amd64

- Configure node_exporter as a systemd service on the remote Linux host

    vim /usr/lib/systemd/system/node_exporter.service

    [Unit]
    Description=node_exporter
    After=network.target

    [Service]
    ExecStart=/usr/local/node_exporter-1.4.0.linux-amd64/node_exporter --web.listen-address=0.0.0.0:9100
    ExecReload=/bin/kill -HUP $MAINPID
    KillMode=process
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target

    # Reload the systemd unit files
    systemctl daemon-reload
    # Enable at boot
    systemctl enable node_exporter.service
    # Start
    systemctl start node_exporter.service
    # Stop
    systemctl stop node_exporter.service
    # Restart
    systemctl restart node_exporter.service

Note: the ExecStart line has two parts

1. /usr/local/node_exporter-1.4.0.linux-amd64/node_exporter is the path of the node_exporter binary

2. --web.listen-address=0.0.0.0:9100 makes node_exporter listen on port 9100

6-2. The following steps are performed on K8s-master1 of the Kubernetes cluster, or on any machine where kubectl is configured

- First create an empty prometheus-additional.yaml file, then create a Secret from it. This Secret serves as the static configuration of Prometheus

    # Create the prometheus-additional.yaml file
    mkdir /prometeus
    cd /prometeus/
    touch /prometeus/prometheus-additional.yaml
    # Create the Secret
    [root@k8s-master1 prometeus]# kubectl create secret generic additional-configs \
        --from-file=/prometeus/prometheus-additional.yaml -n monitoring
    # Message on success
    secret/additional-configs created

- Check that additional-configs was created

    # Check the status
    [root@k8s-master1 ~]# kubectl get secrets -n monitoring additional-configs
    NAME                 TYPE     DATA   AGE
    additional-configs   Opaque   1      17m
    # Show the details
    [root@k8s-master1 ~]# kubectl describe secrets -n monitoring additional-configs
    Name:         additional-configs
    Namespace:    monitoring
    Labels:       <none>
    Annotations:  <none>

    Type:  Opaque

    Data
    ====
    prometheus-additional.yaml:  0 bytes

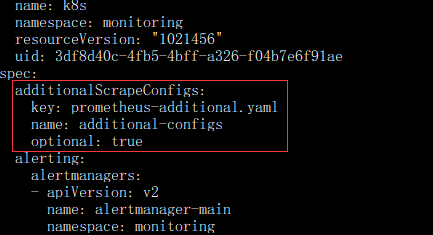

- After creating the Secret, edit the Prometheus resource

    [root@k8s-master1 src]# kubectl edit prometheus -n monitoring k8s
    prometheus.monitoring.coreos.com/k8s edited

    # add the following under spec:
    additionalScrapeConfigs:
      key: prometheus-additional.yaml
      name: additional-configs
      optional: true

Note: save and exit after adding the configuration above. It takes effect without restarting the Prometheus Pods.

- Edit prometheus-additional.yaml to monitor the Linux host

    vim prometheus-additional.yaml

    - job_name: 'LinuxServerMonitor'
      static_configs:
      - targets:
        - "192.168.3.81:9100"   # IP address and port of the Linux host; the default port is 9100
        labels:
          server_type: 'linux'
      relabel_configs:
      - source_labels: [__address__]
        target_label: instance

Note: example static configuration for monitoring several remote Linux hosts:

    - job_name: 'LinuxServerMonitor'
      static_configs:
      - targets:
        - "192.168.3.81:9100"
        - "192.168.3.82:9100"
        - "192.168.3.83:9100"
        # add one IP:port line per additional Linux host
        labels:
          server_type: 'linux'
      relabel_configs:
      - source_labels: [__address__]
        target_label: instance

- Update the Secret from the prometheus-additional.yaml file

    [root@k8s-master1 src]# kubectl create secret generic additional-configs \
        --from-file=/prometeus/prometheus-additional.yaml --dry-run=client -oyaml | \
        kubectl replace -f - -n monitoring
    # Message after the update
    secret/additional-configs replaced
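Since this update is repeated every time prometheus-additional.yaml changes, it can be wrapped in a small function. This is a sketch under the assumption that the Secret is named additional-configs and lives in the monitoring namespace as above; the function name and the KUBECTL override variable are hypothetical.

```shell
# Command used to talk to the cluster. Overriding it (for example with "echo")
# previews the second half of the pipeline without touching the cluster.
KUBECTL=${KUBECTL:-kubectl}

# update_additional_configs FILE: regenerate the additional-configs Secret
# from FILE and replace the existing one in the monitoring namespace.
# The Secret key is the file's base name, so FILE must be named
# prometheus-additional.yaml to match the key referenced by the Prometheus
# resource. (Function name is an assumption.)
update_additional_configs() {
  $KUBECTL create secret generic additional-configs \
    --from-file="$1" --dry-run=client -o yaml |
    $KUBECTL replace -f - -n monitoring
}
```

With the paths used in this guide the call would be update_additional_configs /prometeus/prometheus-additional.yaml.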

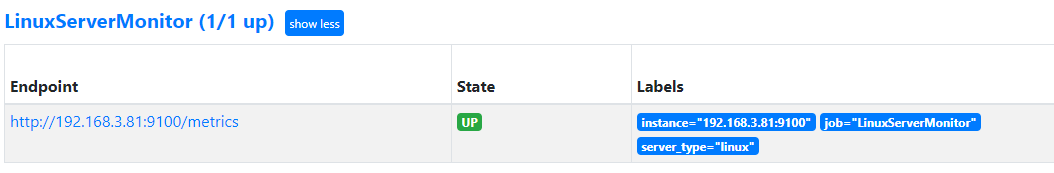

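- Open the Prometheus web UI. Targets shown as in the screenshot below mean that monitoring works.

Note: if the targets are missing, wait a moment and check again. If they still do not appear after a long time, review the steps and configuration files above.

- Open General and import the dashboard. The result looks like this:

Note: importing a dashboard is not repeated here. Follow the import steps described earlier.

Dashboard address: https://grafana.com/grafana/dashboards/12633-linux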

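7. Blackbox Monitoring: Checking Whether a Domain Is Reachable

Notes:

1. For configuring the prometheus-additional.yaml static file through a Secret, see the steps in 6-2

2. If blackbox monitoring is not installed, see https://github.com/prometheus/blackbox_exporter

7-1. Recent versions of the Prometheus Stack install Blackbox Exporter by default, which can be checked with the following command

    [root@k8s-master1 ~]# kubectl get pod -n monitoring -l app.kubernetes.io/name=blackbox-exporter
    NAME                                 READY   STATUS    RESTARTS       AGE
    blackbox-exporter-6b79c4588b-hvqgz   3/3     Running   21 (83m ago)   3d21h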

7-2. A Service is created as well. Blackbox Exporter is reached through this Service and receives its parameters there

    [root@k8s-master1 ~]# kubectl get svc -n monitoring -l app.kubernetes.io/name=blackbox-exporter

The PORT(S) column of this Service should include the probe port 19115, which the scrape configuration in 7-3 uses.

7-3. Add a static configuration to prometheus-additional.yaml to monitor domains

    vim prometheus-additional.yaml

    - job_name: 'WebUrl'
      metrics_path: /probe
      params:
        module: [http_2xx]   # Look for a HTTP 200 response.
      static_configs:
      - targets:
        - http://gaoxin.kubeasy.com   # Target to probe with http.
        - https://www.baidu.com       # Target to probe with https.
      relabel_configs:
      - source_labels: [__address__]
        target_label: __param_target
      - source_labels: [__param_target]
        target_label: instance
      - target_label: __address__
        replacement: blackbox-exporter:19115

➢ targets: the targets to probe; change them to suit your environment

➢ params: the module used for probing

➢ replacement:

1. blackbox-exporter: the address at which Blackbox Exporter is reached. Inside Kubernetes this is the Service name; if Blackbox Exporter runs elsewhere, use the address of the host it runs on

2. 19115: the blackbox-exporter port exposed by the Kubernetes Service

Note: the content is the same as in a traditional Prometheus configuration. Only the corresponding job needs to be added.
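To see what the three relabel rules accomplish, it helps to write out the request Prometheus ends up sending: the original target becomes the target query parameter, and the address is replaced by the Blackbox Exporter. A minimal sketch, with the hypothetical helper probe_url and the URL shown unencoded for readability:

```shell
# probe_url EXPORTER MODULE TARGET: print the URL that is requested once
# __param_target and __address__ have been rewritten by the relabel rules.
# (Function name is an assumption; the real request URL-encodes TARGET.)
probe_url() {
  printf 'http://%s/probe?module=%s&target=%s\n' "$1" "$2" "$3"
}

probe_url blackbox-exporter:19115 http_2xx https://www.baidu.com
```

Requesting such a URL with curl from inside the cluster is a quick way to test a module before adding targets; the response includes the probe_success metric.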

7-4. Update the Secret from the prometheus-additional.yaml file

    [root@k8s-master1 src]# kubectl create secret generic additional-configs \
        --from-file=/prometeus/prometheus-additional.yaml --dry-run=client -oyaml | \
        kubectl replace -f - -n monitoring
    secret/additional-configs replaced

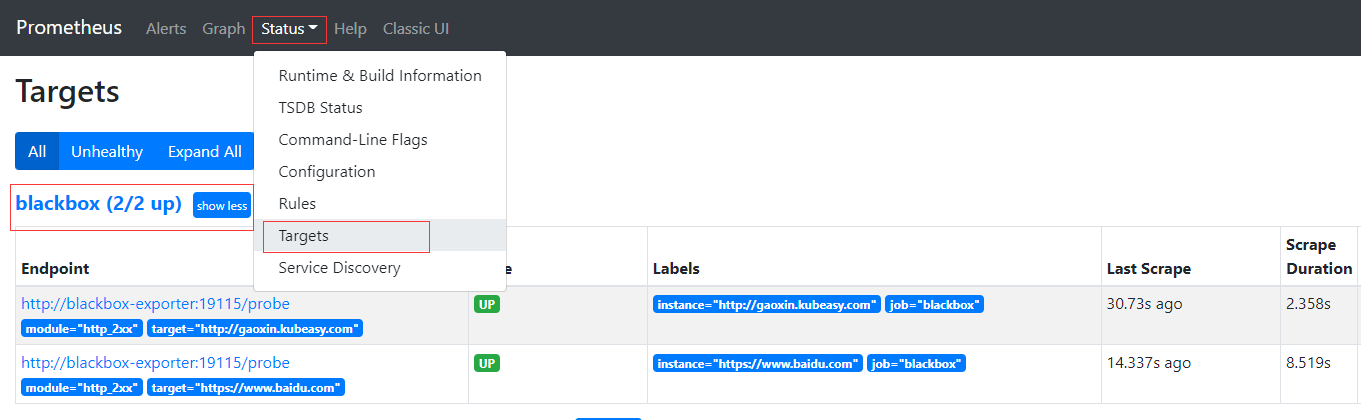

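7-5. Wait a moment after the update, then open the Prometheus Web UI to see the configuration shown in the screenshot

Note: once the targets are UP, import the blackbox dashboard into General

7-6. Import the dashboard into General. The result looks like this

Note: importing a dashboard is not repeated here. Follow the import steps described earlier.

Dashboard address: https://grafana.com/grafana/dashboards/13659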

7-7. Monitoring a port of a remote application

    - job_name: 'ServerPort'
      metrics_path: /probe
      params:
        module: [tcp_connect]
      static_configs:
      - targets:
        - 192.168.3.81:9000
        labels:
          tags: "server1"   # label for this target
      relabel_configs:
      - source_labels: [__address__]
        target_label: __param_target
      - source_labels: [__param_target]
        target_label: instance
      - target_label: __address__
        replacement: blackbox-exporter:19115

- Update the Secret from the prometheus-additional.yaml file

    [root@k8s-master1 src]# kubectl create secret generic additional-configs \
        --from-file=/prometeus/prometheus-additional.yaml --dry-run=client -oyaml | \
        kubectl replace -f - -n monitoring

Note: import the dashboard https://grafana.com/grafana/dashboards/13659 to display the results in General

8. Email Alerting Configuration

8-1. Locate the Alertmanager configuration file in the Prometheus installation directory.

    # For example, if the installation directory is /kube-prometheus-release-0.10, switch to manifests
    # and edit alertmanager-secret.yaml
    [root@k8s-master1 kube-prometheus-release-0.10]# cd manifests/
    [root@k8s-master1 manifests]# vim alertmanager-secret.yaml

    apiVersion: v1
    kind: Secret
    metadata:
      labels:
        app.kubernetes.io/component: alert-router
        app.kubernetes.io/instance: main
        app.kubernetes.io/name: alertmanager
        app.kubernetes.io/part-of: kube-prometheus
        app.kubernetes.io/version: 0.23.0
      name: alertmanager-main
      namespace: monitoring
    stringData:
      alertmanager.yaml: |-
        "global":
          "resolve_timeout": "5m"
        # ... remaining content omitted

- Add the email sending settings to the global section of alertmanager-secret.yaml as follows

      alertmanager.yaml: |-
        "global":
          "resolve_timeout": "5m"
          # add the following
          smtp_from: "123@126.com"             # sender address
          smtp_smarthost: "smtp.126.com:465"   # SMTP server of the sending mailbox
          smtp_hello: "126.com"
          smtp_auth_username: "123@126.com"    # sender account
          smtp_auth_password: "QWEZSDREDR"     # authorization code issued after enabling POP3/SMTP
          smtp_require_tls: false

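- Configure the recipient by changing the receivers section of alertmanager-secret.yaml as follows

        "receivers":
        - "name": "Default"
          # email recipient settings
          "email_configs":
          - to: "123@126.com"
            send_resolved: true

Notes:

➢ email_configs: notify by email

➢ to: the recipient, here 123@126.com; several recipients can be listed, separated by commas

➢ send_resolved: whether to send a notification when the alert is resolved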


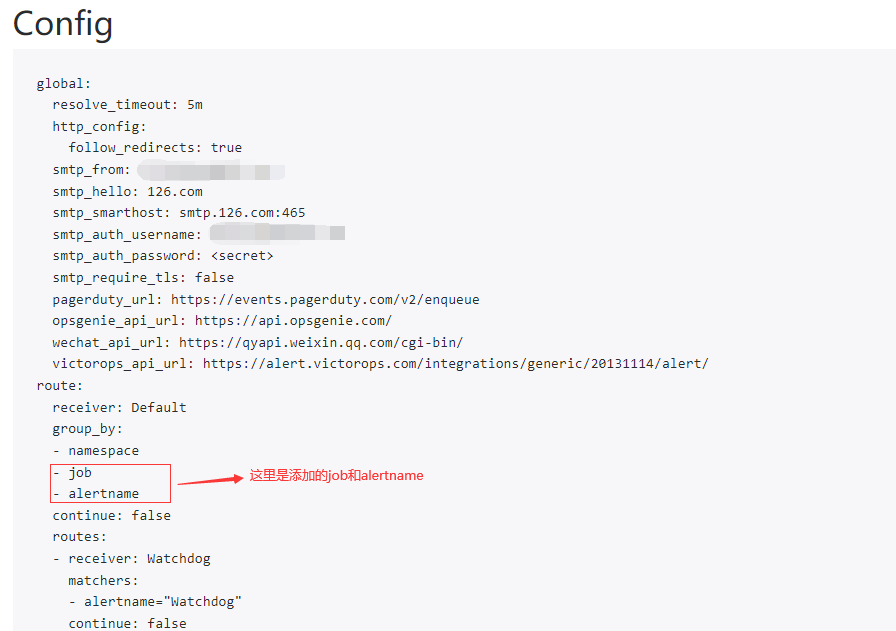

- Routing rules (the default grouping uses only namespace; job and alertname are added here, although leaving them out also works):

        "route":
          "group_by":
          - "namespace"
          # job and alertname grouping added below
          - "job"
          - "alertname"
          "group_interval": "5m"
          "group_wait": "30s"
          "receiver": "Default"
          "repeat_interval": "12h"
          "routes":
          - "matchers":
            - "alertname = Watchdog"
            "receiver": "Watchdog"
          - "matchers":
            - "severity = critical"
            "receiver": "Critical"

- Load the modified alertmanager-secret.yaml into Alertmanager

    kubectl replace -f alertmanager-secret.yaml

8-2. Open the Alertmanager Web UI to check the job and alertname grouping that was added

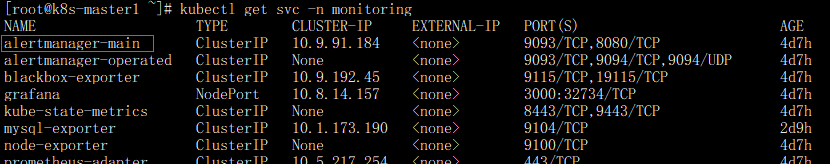

- Check that the alertmanager-main Service (red box) exists. If it does, edit it to expose the Alertmanager Web UI

    kubectl get svc -n monitoring

- 编辑svc alertmanager-main 。把type: ClusterIP 改为 type: NodePort 编辑命令。红色方框为编辑后

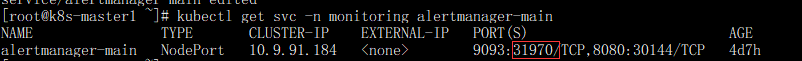

kubectl edit svc -n monitoring alertmanager-main

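- Look up the externally mapped port after editing (red box). The UI is reached at NODE_IP:PORT; the port number is assigned randomly

- Open the Alertmanager Web UI and check the grouping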

8-3. Write an alerting rule that sends email when MySQL cannot be reached or the MySQL service is down

    vim mysql-exporter.yaml

    apiVersion: monitoring.coreos.com/v1
    kind: PrometheusRule
    metadata:
      labels:
        app.kubernetes.io/component: exporter
        app.kubernetes.io/name: mysql-ruler
        prometheus: k8s
        role: alert-rules
      name: mysql-rules
      namespace: monitoring
    spec:
      groups:
      - name: mysql-ruler
        rules:
        - alert: MySQLDown
          annotations:
            description: 'MySQL instance {{ $labels.instance }} is down'
            summary: MySQL cannot be reached
          expr: mysql_up != 1
          for: 1m
          labels:
            level: high
            severity: warning
            type: MySQL
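Before relying on the rule, its condition can be checked by hand against the exporter's output. The sketch below evaluates the same mysql_up != 1 test on exposition text read from stdin; the function name mysql_alert_condition is an assumption, and the input here is a one-line sample rather than real exporter output.

```shell
# mysql_alert_condition: read exporter output on stdin and exit 0 when a
# mysql_up sample is present with a value other than 1, which is the
# condition of the MySQLDown rule. Exit 1 otherwise.
# (Function name is an assumption.)
mysql_alert_condition() {
  awk '$1 == "mysql_up" && $2 != 1 { hit = 1 } END { exit hit ? 0 : 1 }'
}

# Sample input standing in for the exporter's /metrics output.
if printf 'mysql_up 0\n' | mysql_alert_condition; then
  echo "MySQLDown condition holds"
else
  echo "MySQL is up"
fi
```

With the exporter Service from section 4 the input would come from curl -s CLUSTER_IP:9104/metrics. Because of for: 1m, the alert only fires once the condition has held for a full minute.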

