Hadoop High Availability (HA) Configuration Walkthrough (dudu)

Prerequisite: power on all of the virtual machines.

Step 1. Stop Hadoop. In a Linux terminal, stop HDFS with: stop-all.sh

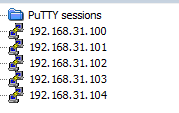

Step 2. Clone the virtual machine: clone 192.168.31.100 to create 192.168.31.105.

The procedure: right-click → click Next through every screen, changing the virtual machine name along the way.

Step 3. On [105]: start the cloned virtual machine, open it, and change the following settings from the command line.

① Set the hostname: sudo nano /etc/hostname and change the existing name to s105.

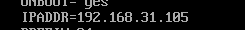

② Configure the static IP: sudo nano /etc/sysconfig/network-scripts/ifcfg-ens33 and change the last octet of the IP address to 105.

③ Reboot: reboot
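After the reboot, it can help to confirm that both changes took effect. The sketch below is a hypothetical helper (`last_octet` and `check_identity` are names introduced here, not part of the original steps) and assumes a Linux host where `hostname -I` is available and where the first address it lists is the one configured in ifcfg-ens33.

```shell
# Return the last octet of a dotted IPv4 address (192.168.31.105 -> 105).
last_octet() {
    printf '%s\n' "${1##*.}"
}

# Compare the expected hostname and last octet with what this machine reports.
# Read-only: nothing on the system is changed.
check_identity() {
    expected_host=$1
    expected_octet=$2
    actual_host=$(hostname)
    # hostname -I lists the configured addresses; take the first one (assumption).
    actual_ip=$(hostname -I | awk '{ print $1 }')
    if [ "$actual_host" = "$expected_host" ] &&
       [ "$(last_octet "$actual_ip")" = "$expected_octet" ]; then
        echo "ok: $actual_host $actual_ip"
    else
        echo "mismatch: $actual_host $actual_ip" >&2
        return 1
    fi
}
```

On the clone, `check_identity s105 105` should print an `ok` line once the hostname and IP edits are in place.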

All remaining steps are performed on 101.

On [101]:

③ Edit the hosts file: sudo nano /etc/hosts and add:

192.168.79.100 s100

192.168.79.101 s101

192.168.79.102 s102

192.168.79.103 s103

192.168.79.104 s104

192.168.79.105 s105

④ Distribute the file. Paste the following directly into the command line:

sudo scp /etc/hosts root@s102:/etc/

sudo scp /etc/hosts root@s103:/etc/

sudo scp /etc/hosts root@s104:/etc/

sudo scp /etc/hosts root@s105:/etc/
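The four copies above can also be written as a loop. This is only a sketch: the `distribute_hosts` function and the `DRY_RUN` switch are hypothetical names introduced here. By default it prints the commands instead of running them, so it is safe to try before touching the other nodes.

```shell
# Hosts that receive /etc/hosts, taken from the scp commands above.
TARGETS="s102 s103 s104 s105"

# Print (DRY_RUN=1, the default in this sketch) or run one scp per target host.
distribute_hosts() {
    for host in $TARGETS; do
        cmd="sudo scp /etc/hosts root@${host}:/etc/"
        if [ "${DRY_RUN:-1}" = "1" ]; then
            echo "$cmd"
        else
            $cmd || echo "copy to ${host} failed" >&2
        fi
    done
}

distribute_hosts
```

Running it with `DRY_RUN=0` set in the environment performs the actual copies, and a failure on one host is reported without stopping the rest.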

⑤ Create the ha directory and repoint the symbolic link. Do this inside /soft/hadoop/etc, i.e. run cd /soft/hadoop/etc first:

cp -r full/ ha

ln -sfT ha hadoop
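To see what these two commands do without touching /soft/hadoop, the sketch below replays them in a scratch directory. It assumes GNU coreutils (for `ln -T`) and assumes that `hadoop` starts out as a symbolic link to `full`; the placeholder file and the `scratch` variable are made up for the demonstration.

```shell
# Work in a throwaway directory so nothing under /soft/hadoop is touched.
scratch=$(mktemp -d)
mkdir -p "$scratch/etc/full"
echo "placeholder" > "$scratch/etc/full/core-site.xml"
ln -s full "$scratch/etc/hadoop"   # assumed starting point: hadoop -> full

cd "$scratch/etc"
cp -r full/ ha        # ha starts as a copy of the existing configuration
ln -sfT ha hadoop     # replace the link itself, so hadoop -> ha

link_target=$(readlink hadoop)
echo "hadoop -> $link_target"
```

The `-T` flag matters here: without it, GNU `ln` treats an existing link to a directory as the destination directory and creates the new link inside `full` instead of replacing `hadoop`.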

II. Edit the configuration files (on [101])

1. core-site.xml: paste in the content below.

Command: nano /soft/hadoop/etc/hadoop/core-site.xml

--------------------------------------

<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://mycluster</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/home/centos/ha</value>
  </property>
  <property>
    <name>dfs.journalnode.edits.dir</name>
    <value>/home/centos/ha/journal</value>
  </property>
</configuration>

2. hdfs-site.xml: paste in the content below.

Command: nano /soft/hadoop/etc/hadoop/hdfs-site.xml

<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <property>
    <name>dfs.replication</name>
    <value>3</value>
  </property>
  <!-- Declare the nameservice -->
  <property>
    <name>dfs.nameservices</name>
    <value>mycluster</value>
  </property>
  <!-- Declare the IDs of the two NameNodes -->
  <property>
    <name>dfs.ha.namenodes.mycluster</name>
    <value>nn1,nn2</value>
  </property>
  <!-- Map each NameNode ID to its actual host (RPC address) -->
  <property>
    <name>dfs.namenode.rpc-address.mycluster.nn1</name>
    <value>s101:8020</value>
  </property>
  <property>
    <name>dfs.namenode.rpc-address.mycluster.nn2</name>
    <value>s105:8020</value>
  </property>
  <!-- Map each NameNode ID to its actual host and web port -->
  <property>
    <name>dfs.namenode.http-address.mycluster.nn1</name>
    <value>s101:50070</value>
  </property>
  <property>
    <name>dfs.namenode.http-address.mycluster.nn2</name>
    <value>s105:50070</value>
  </property>
  <property>
    <name>dfs.namenode.shared.edits.dir</name>
    <value>qjournal://s104:8485;s103:8485;s102:8485/mycluster</value>
  </property>
  <property>
    <name>dfs.client.failover.proxy.provider.mycluster</name>
    <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>
  </property>
  <property>
    <name>dfs.ha.fencing.methods</name>
    <value>
      sshfence
      shell(/bin/true)
    </value>
  </property>
</configuration>
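Several property names in hdfs-site.xml are built from the nameservice name and the NameNode IDs, so a typo in either one silently breaks the configuration. The sketch below lists the names that follow from a given nameservice and set of IDs; `ha_property_names` is a hypothetical helper introduced here, not a Hadoop tool.

```shell
# Print the HA property names implied by a nameservice and its NameNode IDs.
# Usage: ha_property_names <nameservice> <nn-id>...
ha_property_names() {
    ns=$1
    shift
    echo "dfs.ha.namenodes.${ns}"
    echo "dfs.client.failover.proxy.provider.${ns}"
    for nn in "$@"; do
        echo "dfs.namenode.rpc-address.${ns}.${nn}"
        echo "dfs.namenode.http-address.${ns}.${nn}"
    done
}

ha_property_names mycluster nn1 nn2
```

On a node with the configuration in place, each printed name can be passed to `hdfs getconf -confKey <name>` to check that a value is actually set. The nameservice must also match the authority in fs.defaultFS (hdfs://mycluster).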

3. Sync the configuration files

scp -r /soft/hadoop/etc centos@s102:/soft/hadoop/

scp -r /soft/hadoop/etc centos@s103:/soft/hadoop/

scp -r /soft/hadoop/etc centos@s104:/soft/hadoop/

scp -r /soft/hadoop/etc centos@s105:/soft/hadoop/

4. Update the symbolic links

ssh s102 ln -sfT /soft/hadoop/etc/ha /soft/hadoop/etc/hadoop

ssh s103 ln -sfT /soft/hadoop/etc/ha /soft/hadoop/etc/hadoop

ssh s104 ln -sfT /soft/hadoop/etc/ha /soft/hadoop/etc/hadoop

ssh s105 ln -sfT /soft/hadoop/etc/ha /soft/hadoop/etc/hadoop
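Once the links are updated, a quick check that every node now points at the ha directory can catch a missed host. This is a sketch only: `report_link` and `check_all_links` are hypothetical names, and it assumes passwordless ssh from s101 to the other nodes, as the commands above already do.

```shell
EXPECTED=/soft/hadoop/etc/ha

# Report whether one node's link target matches the expected directory.
report_link() {
    host=$1
    target=$2
    if [ "$target" = "$EXPECTED" ]; then
        echo "$host ok"
    else
        echo "$host MISMATCH (${target:-no link})"
    fi
}

# Query every node over ssh; run this on s101 after updating the links.
check_all_links() {
    for host in s102 s103 s104 s105; do
        report_link "$host" "$(ssh "$host" readlink /soft/hadoop/etc/hadoop 2>/dev/null)"
    done
}
```

Calling `check_all_links` on s101 prints one line per node; any `MISMATCH` line names the host whose link still needs to be repointed.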

5. Start the JournalNodes

hadoop-daemons.sh start journalnode

6. Format the file system

hdfs namenode -format

7. Copy the ~/ha directory from s101 to the s105 node

scp -r ~/ha centos@s105:~/

8. Start HDFS: start-dfs.sh

9. Transition the NameNode on s101 to the active state

hdfs haadmin -transitionToActive nn1
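With manual failover, both NameNodes come up in standby, which is why the transition above is needed. The sketch below interprets the pair of states reported by `hdfs haadmin -getServiceState`; the `ha_summary` and `check_ha` helpers and their messages are hypothetical, introduced only for illustration.

```shell
# Classify the pair of states reported for nn1 and nn2.
ha_summary() {
    case "$1/$2" in
        active/standby|standby/active)
            echo "healthy: one active, one standby" ;;
        standby/standby)
            echo "no active NameNode: run transitionToActive" ;;
        active/active)
            echo "split brain: both NameNodes report active" ;;
        *)
            echo "unknown states: $1 / $2" ;;
    esac
}

# On the cluster, feed it the real states of both NameNodes.
check_ha() {
    ha_summary "$(hdfs haadmin -getServiceState nn1)" \
               "$(hdfs haadmin -getServiceState nn2)"
}
```

After the transition, `check_ha` on s101 should report the healthy case, with nn1 active and nn2 in standby.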

10. Check the processes

On s101 and s105, run the jps command. Each should list:

NameNode
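The jps check can be scripted so that it does not mistake a SecondaryNameNode for a NameNode. This is a minimal sketch: `has_namenode` is a hypothetical helper that reads jps output on standard input and matches the process name exactly.

```shell
# Succeed only if the jps output on stdin has a process named exactly NameNode.
# jps prints "<pid> <name>" per line, so the name is the second field.
has_namenode() {
    awk '$2 == "NameNode" { found = 1 } END { exit !found }'
}

# Example with captured output instead of a live cluster.
if printf '2101 NameNode\n2350 Jps\n' | has_namenode; then
    echo "NameNode is running"
fi
```

On the cluster this could be used as `ssh s105 jps | has_namenode`, assuming jps is on the PATH of the non-interactive ssh session.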
