利用selenium 爬取豆瓣 武林外传数据并且完成 数据可视化 情绪分析

全文的步骤可以大概分为几步:

一:数据获取,利用selenium+多进程(linux上selenium 多进程可能会有问题)+kafka写数据(linux首选必选耦合)windows直接采用的是写mysql

二:数据存储(kafka+hive 或者mysql)+数据清洗shell +python3

三: 数据可视化,词云 pyecharts jieba分词 snownlp (情绪化分析)

step 1

selenium 模拟登陆豆瓣,爬去武林外传的短评:

在最开始写爬虫的时候,抓取豆瓣评论,我们从F12里面是可以直接发现接口的,但是最近豆瓣更新,数据是JS异步加载的,所以没有找到合适的方法爬去,于是采用了selenium来模拟浏览器爬取。

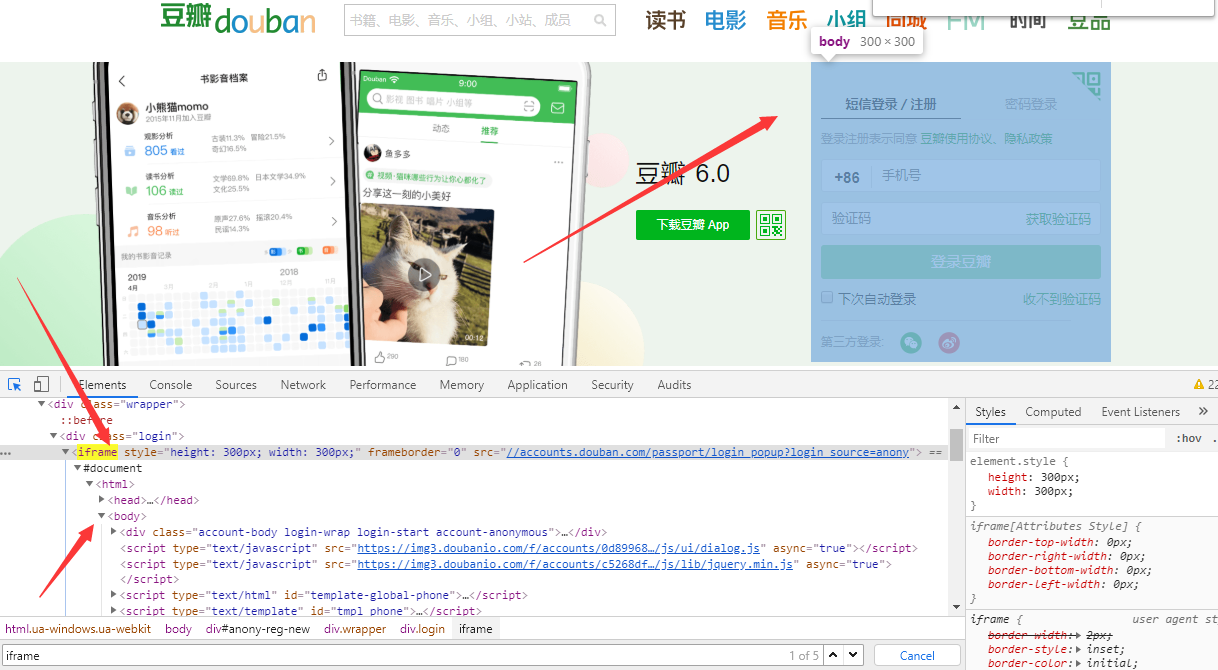

豆瓣登陆也是改了样式,我们可以发现登陆页面是在另一个frame里面

所以代码如下:

# -*- coding:utf-8 -*-

# 导包

import time

from selenium import webdriver

from selenium.webdriver.common.keys import Keys

# 创建chrome参数对象

opt = webdriver.ChromeOptions()

# 把chrome设置成无界面模式,不论windows还是linux都可以,自动适配对应参数

opt.set_headless()

# 用的是谷歌浏览器

driver = webdriver.Chrome(options=opt)

driver=webdriver.Chrome()

# 登录豆瓣网

driver.get("http://www.douban.com/")

# 切换到登录框架中来

driver.switch_to.frame(driver.find_elements_by_tag_name("iframe")[0])

# 点击"密码登录"

bottom1 = driver.find_element_by_xpath('/html/body/div[1]/div[1]/ul[1]/li[2]')

bottom1.click()

# # 输入密码账号

input1 = driver.find_element_by_xpath('//*[@id="username"]')

input1.clear()

input1.send_keys("xxxxx")

input2 = driver.find_element_by_xpath('//*[@id="password"]')

input2.clear()

input2.send_keys("xxxxx")

# 登录

bottom = driver.find_element_by_class_name('account-form-field-submit ')

bottom.click()

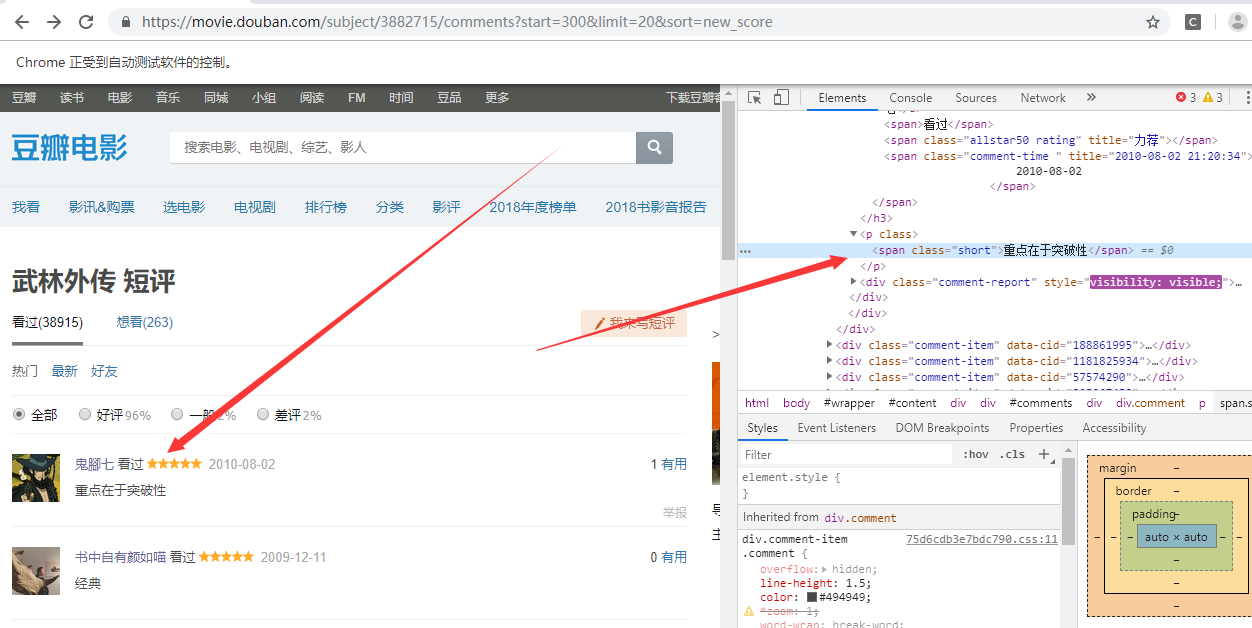

然后跳转到评论界面 https://movie.douban.com/subject/3882715/comments?sort=new_score

点击下一页发现url变化 https://movie.douban.com/subject/3882715/comments?start=20&limit=20&sort=new_score 所以我们观察到变化后可以直接写循环

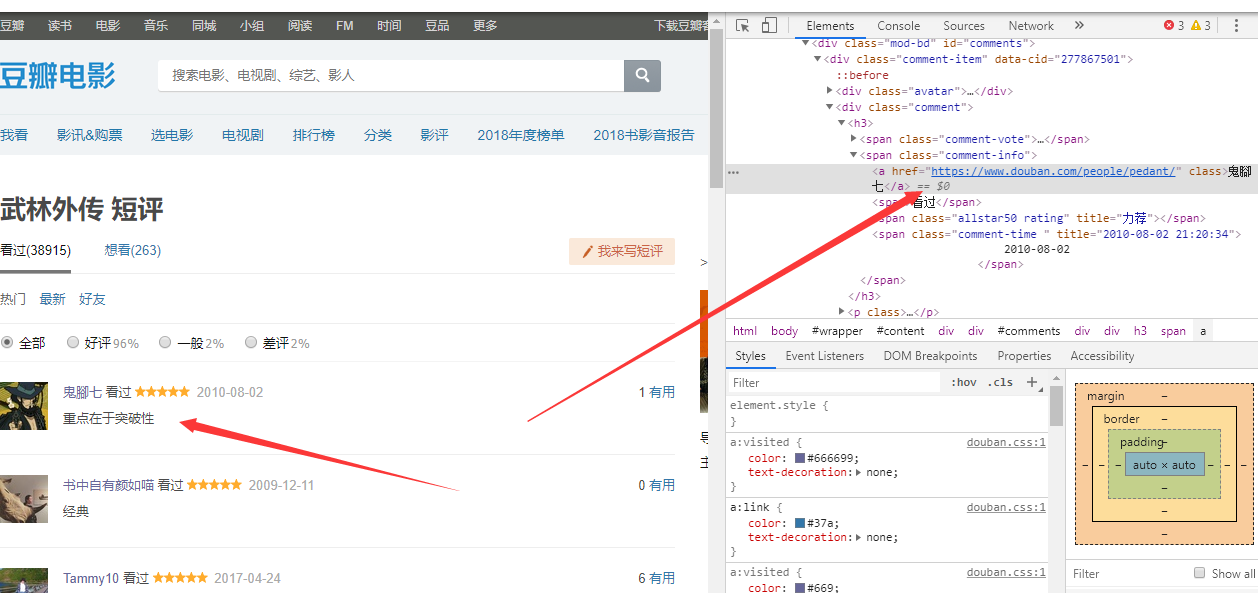

获取用户的姓名

driver.find_element_by_xpath('//*[@id="comments"]/div[{}]/div[2]/h3/span[2]/a'.format(str(i))).text

用户的评论

driver.find_element_by_xpath('//*[@id="comments"]/div[{}]/div[2]/p/span'.format(str(i))).text

然后我们想要知道用户的居住地:

1 #获取用户的url然后点击url获取居住地

2 userInfo=driver.find_element_by_xpath('//*[@id="comments"]/div[{}]/div[2]/h3/span[2]/a'.format(str(i))).get_attribute('href')

3 driver.get(userInfo)

4 try:

5 userLocation = driver.find_element_by_xpath('//*[@id="profile"]/div/div[2]/div[1]/div/a').text

6 print("用户的居之地是: ")

7 print(userLocation)

8 except Exception as e:

9 print(e)

这里要注意有些用户没有写居住地,所以必须要捕获异常

完整代码

# -*- coding:utf-8 -*- # 导包 import time from selenium import webdriver import pymysql from selenium.webdriver.common.keys import Keys from multiprocessing import Pool class doubanwlwz_spider(): def writeMysql(self,userName,userConment,userLocation): # 打开数据库连接 db = pymysql.connect("123XXX1", "zXXXan", "XXX1", "huXXXt") # 使用 cursor() 方法创建一个游标对象 cursor cursor = db.cursor() sql = "insert into userinfo(username,commont,location) values(%s, %s, %s)" cursor.execute(sql, [userName, userConment, userLocation]) db.commit() # 关闭数据库连接 cursor.close() db.close() def getInfo(self,page): # 切换到登录框架中来 # 登录豆瓣网 opt = webdriver.ChromeOptions() # 用的是谷歌浏览器 # 把chrome设置成无界面模式,不论windows还是linux都可以,自动适配对应参数 opt.add_argument('--no-sandbox') opt.add_argument('--disable-gpu') opt.add_argument('--hide-scrollbars') # 隐藏滚动条, 应对一些特殊页面 opt.add_argument('blink-settings=imagesEnabled=false') # 不加载图片, 提升速度 # 用的是谷歌浏览器 driver = webdriver.Chrome('D:\chromedriver_win32\chromedriver', options=opt) driver.get("http://www.douban.com/") driver.switch_to.frame(driver.find_elements_by_tag_name("iframe")[0]) # 点击"密码登录" bottom1 = driver.find_element_by_xpath('/html/body/div[1]/div[1]/ul[1]/li[2]') bottom1.click() # # 输入密码账号 input1 = driver.find_element_by_xpath('//*[@id="username"]') input1.clear() input1.send_keys("1XXX2") input2 = driver.find_element_by_xpath('//*[@id="password"]') input2.clear() input2.send_keys("zXXX344") # 登录 bottom = driver.find_element_by_class_name('account-form-field-submit ') bottom.click() time.sleep(1) #获取全部评论 一共有24页,每个页面20个评论,一共能抓取到480个 for i in range((page-1)*240,page*240,20): driver.get('https://movie.douban.com/subject/3882715/comments?start={}&limit=20&sort=new_score'.format(i)) print("开始抓取第%i页面"%(i)) search_window = driver.current_window_handle # pageSource=driver.page_source # print(pageSource) #获取用户的名字 每页20个 for i in range(1,21): userName=driver.find_element_by_xpath('//*[@id="comments"]/div[{}]/div[2]/h3/span[2]/a'.format(str(i))).text print("用户的名字是: %s"%(userName)) # 获取用户的评论 # print(driver.find_element_by_xpath('//*[@id="comments"]/div[1]/div[2]/p/span').text) userConment=driver.find_element_by_xpath('//*[@id="comments"]/div[{}]/div[2]/p/span'.format(str(i))).text print("用户的评论是: %s"%(userConment)) #获取用户的url然后点击url获取居住地 userInfo=driver.find_element_by_xpath('//*[@id="comments"]/div[{}]/div[2]/h3/span[2]/a'.format(str(i))).get_attribute('href') driver.get(userInfo) try: userLocation = driver.find_element_by_xpath('//*[@id="profile"]/div/div[2]/div[1]/div/a').text print("用户的居之地是 %s"%(userLocation)) driver.back() self.writeMysql(userName, userConment, userLocation) except Exception as e: userLocation='未填写' self.writeMysql(userName, userConment, userLocation) driver.back() driver.close() if __name__ == '__main__': AAA=doubanwlwz_spider() p = Pool(3) startTime = time.time() for i in range(1, 3): p.apply_async(AAA.getInfo, args=(i,)) p.close() p.join() stopTime = time.time() print('Running time: %0.2f Seconds' % (stopTime - startTime))

step 2

linux代码(这里需要注意 linux对于selenium和这里的多进程适配不是很好,建议使用windows,linux上面写的是kafka)kafka代码如下

# -*- coding: utf-8 -*- from kafka import KafkaProducer from kafka import KafkaConsumer from kafka.errors import KafkaError import json import time import sys class Kafka_producer(): ''' 使用kafka的生产模块 ''' def __init__(self, kafkahost, kafkaport, kafkatopic): self.kafkaHost = kafkahost self.kafkaPort = kafkaport self.kafkatopic = kafkatopic self.producer = KafkaProducer(bootstrap_servers='{kafka_host}:{kafka_port}'.format( kafka_host=self.kafkaHost, kafka_port=self.kafkaPort )) def sendjsondata(self, params): try: #parmas_message = json.dumps(params) parmas_message =params producer = self.producer kafkamessage=parmas_message.encode('utf-8') producer.send(self.kafkatopic, kafkamessage,partition= 0) # producer.send('test1', value= ingo1, partition= 0) producer.flush() except KafkaError as e: print(e) def main(message,topicname): ''' 测试consumer和producer :return: ''' # 测试生产模块 producer = Kafka_producer("10XXX2", "9098", topicname) print("进入kafka的数据是%s"%(message)) producer.sendjsondata(message) time.sleep(1)

# -*- coding:utf-8 -*- # 导包 import time from selenium import webdriver import pymysql from selenium.webdriver.common.keys import Keys from multiprocessing import Pool import producer class doubanwlwz_spider(): def writeMysql(self,userName,userConment,userLocation): # 打开数据库连接 db = pymysql.connect("123.207.35.161", "zhangfan", "N$nIpms1", "hupo_test") # 使用 cursor() 方法创建一个游标对象 cursor cursor = db.cursor() sql = "insert into userinfo(username,commont,location) values(%s, %s, %s)" cursor.execute(sql, [userName, userConment, userLocation]) db.commit() # 关闭数据库连接 cursor.close() db.close() def getInfo(self,page): # 切换到登录框架中来 # 登录豆瓣网 opt = webdriver.ChromeOptions() # 把chrome设置成无界面模式,不论windows还是linux都可以,自动适配对应参数 opt.add_argument('--no-sandbox') opt.add_argument('--disable-gpu') opt.add_argument('--hide-scrollbars') # 隐藏滚动条, 应对一些特殊页面 opt.add_argument('blink-settings=imagesEnabled=false') # 不加载图片, 提升速度 opt.add_argument('--headless') #浏览器不提供可视化页面. # 用的是谷歌浏览器 driver = webdriver.Chrome('/opt/scripts/zf/douban/chromedriver',options=opt) # 用的是谷歌浏览器 driver.get("http://www.douban.com/") driver.switch_to.frame(driver.find_elements_by_tag_name("iframe")[0]) # 点击"密码登录" bottom1 = driver.find_element_by_xpath('/html/body/div[1]/div[1]/ul[1]/li[2]') bottom1.click() # # 输入密码账号 input1 = driver.find_element_by_xpath('//*[@id="username"]') input1.clear() input1.send_keys("XXX2") input2 = driver.find_element_by_xpath('//*[@id="password"]') input2.clear() input2.send_keys("XX44") # 登录 bottom = driver.find_element_by_class_name('account-form-field-submit ') bottom.click() time.sleep(1) print("登录成功") #获取全部评论 一共有480页,每个页面20个评论 for i in range((page-1)*120,page*120): driver.get('https://movie.douban.com/subject/3882715/comments?start={}&limit=20&sort=new_score'.format(i)) print("开始抓取第%i页面"%(i)) search_window = driver.current_window_handle # pageSource=driver.page_source # print(pageSource) #获取用户的名字 每页20个 for i in range(1,21): userName=driver.find_element_by_xpath('//*[@id="comments"]/div[{}]/div[2]/h3/span[2]/a'.format(str(i))).text print("用户的名字是: %s"%(userName)) #传递两个参数 第一个topic信息 第二个topic名称 producer.main(userName,"username") # 获取用户的评论 # print(driver.find_element_by_xpath('//*[@id="comments"]/div[1]/div[2]/p/span').text) userConment=driver.find_element_by_xpath('//*[@id="comments"]/div[{}]/div[2]/p/span'.format(str(i))).text print("用户的评论是: %s"%(userConment)) producer.main(userConment,"usercomment") #获取用户的url然后点击url获取居住地 userInfo=driver.find_element_by_xpath('//*[@id="comments"]/div[{}]/div[2]/h3/span[2]/a'.format(str(i))).get_attribute('href') driver.get(userInfo) try: userLocation = driver.find_element_by_xpath('//*[@id="profile"]/div/div[2]/div[1]/div/a').text print("用户的居之地是 %s"%(userLocation)) producer.main(userLocation,"userlocation") except Exception as e: userLocation='NUll' producer.main("未填写","userlocation") ## self.writeMysql(userName, userConment, userLocation) #driver.back() if __name__ == '__main__': AAA=doubanwlwz_spider() p = Pool(4) startTime = time.time() for i in range(1, 5): p.apply_async(AAA.getInfo, args=(i,)) p.close() p.join() stopTime = time.time() print('Running time: %0.2f Seconds' % (stopTime - startTime))

step 3

在windows上面直接从mysql读取数据做可视化 对应linux需要消费kafka消息代码如下

rom kafka import KafkaConsumer from multiprocessing import Pool import json import re def writeTxt(topic): consumer = KafkaConsumer(topic, auto_offset_reset='earliest', group_id = "test1", bootstrap_servers=['10.8.31.2:9098']) for message in consumer: #print ("%s:%d:%d: key=%s value=%s" % (message.topic, message.partition, # message.offset, message.key, #message.value.decode('utf-8'))) #匹配中文(把中文符号替换为空) 需要注意的是kafka里面的数据是二进制这里必须decode string = re.sub("[\s+\.\!\/_,$%^*(+\"\']+|[+——!,。?、~@#¥%……&*()]+", " ", message.value.decode('utf-8')) print(string) f=open(topic,'a',encoding='utf-8') f.write(string) f.close() p = Pool(4) for i in {"userlocation","usercomment","username"}: print(i) p.apply_async(writeTxt, args=(i,)) p.close() p.join()

------------------------------------------华丽的分割线 ----------------------------到此已经获取到了所以的数据----------------------------------------------------

获取到数据之后,首先对用户location做可视化

第一步 做数据清洗,把里面的数据中文符号全部转为为空格

import re f = open('name.txt','r') for line in f.readlines(): string = re.sub("[\s+\.\!\/_,$%^*(+\"\']+|[+——!,。?、~@#¥%……&*()]+", " ", line) print(line) print(string) f1=open("newname.txt",'a',encoding='utf-8') f1.write(string) f1.close() f.close()

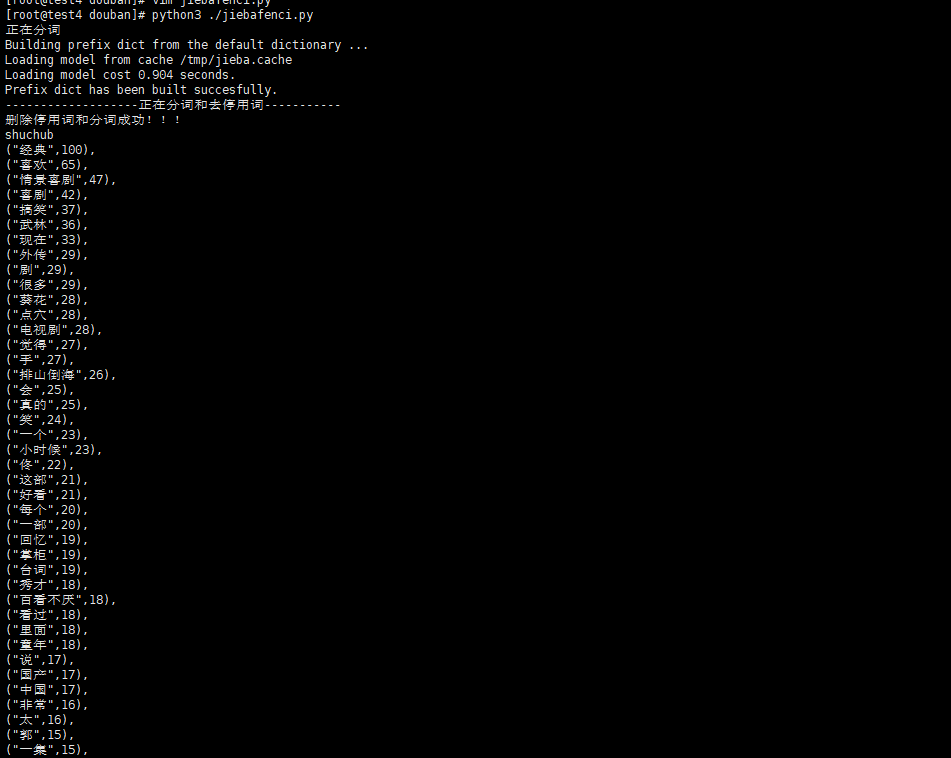

第二步 数据做词云,需要过滤停用词,然后分词

#定义结巴分词的方法以及处理过程 import jieba.analyse import jieba #需要输入需要分析的文本名称,分析后输入的文本名称 class worldAnalysis(): def __init__(self,inputfilename,outputfilename): self.inputfilename=inputfilename self.outputfilename=outputfilename self.start() #--------------------------------这里实现分词和去停用词--------------------------------------- # 创建停用词列表 def stopwordslist(self): stopwords = [line.strip() for line in open('ting.txt',encoding='UTF-8').readlines()] return stopwords # 对句子进行中文分词 def seg_depart(self,sentence): # 对文档中的每一行进行中文分词 print("正在分词") sentence_depart = jieba.cut(sentence.strip()) # 创建一个停用词列表 stopwords = self.stopwordslist() # 输出结果为outstr outstr = '' # 去停用词 for word in sentence_depart: if word not in stopwords: if word != '\t': outstr += word outstr += " " return outstr def start(self): # 给出文档路径 filename = self.inputfilename outfilename = self.outputfilename inputs = open(filename, 'r', encoding='UTF-8') outputs = open(outfilename, 'w', encoding='UTF-8') # 将输出结果写入ou.txt中 for line in inputs: line_seg = self.seg_depart(line) outputs.write(line_seg + '\n') print("-------------------正在分词和去停用词-----------") outputs.close() inputs.close() print("删除停用词和分词成功!!!") self.LyricAnalysis() #实现数据词频统计 def splitSentence(self): #下面的程序完成分析前十的数据出现的次数 f = open(self.outputfilename, 'r', encoding='utf-8') a = f.read().split() b = sorted([(x, a.count(x)) for x in set(a)], key=lambda x: x[1], reverse=True) #print(sorted([(x, a.count(x)) for x in set(a)], key=lambda x: x[1], reverse=True)) print("shuchub") # for i in range(0,100): # print(b[i][0],end=',') # print("---------") # for i in range(0,100): # print(b[i][1],end=',') for i in range(0,100): print("("+'"'+b[i][0]+'"'+","+ str(b[i][1])+')'+',') #输出频率最多的前十个字,里面调用splitSentence完成频率出现最多的前十个词的分析 def LyricAnalysis(self): import jieba file = self.outputfilename #这个技巧需要注意 alllyric = str([line.strip() for line in open(file,encoding="utf-8").readlines()]) #获取全部歌词,在一行里面 alllyric1=alllyric.replace("'","").replace(" ","").replace("?","").replace(",","").replace('"','').replace("?","").replace(".","").replace("!","").replace(":","") # print(alllyric1) self.splitSentence() #下面是词频(单个汉字)统计 import collections # 读取文本文件,把所有的汉字拆成一个list f = open(file, 'r', encoding='utf8') # 打开文件,并读取要处理的大段文字 txt1 = f.read() txt1 = txt1.replace('\n', '') # 删掉换行符 txt1 = txt1.replace(' ', '') # 删掉换行符 txt1 = txt1.replace('.', '') # 删掉逗号 txt1 = txt1.replace('.', '') # 删掉句号 txt1 = txt1.replace('o', '') # 删掉句号 mylist = list(txt1) mycount = collections.Counter(mylist) for key, val in mycount.most_common(10): # 有序(返回前10个) print("开始单词排序") print(key, val)

#输入文本为 newcomment.txt 输出 test.txt

AAA=worldAnalysis("newcomment.txt","test.txt")

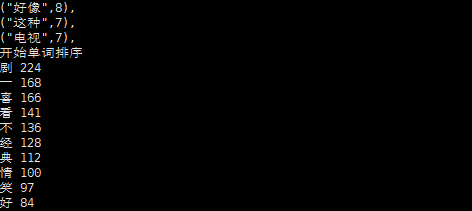

输入结果 这样输出的原因是后面需要用pyechart做数据的词云

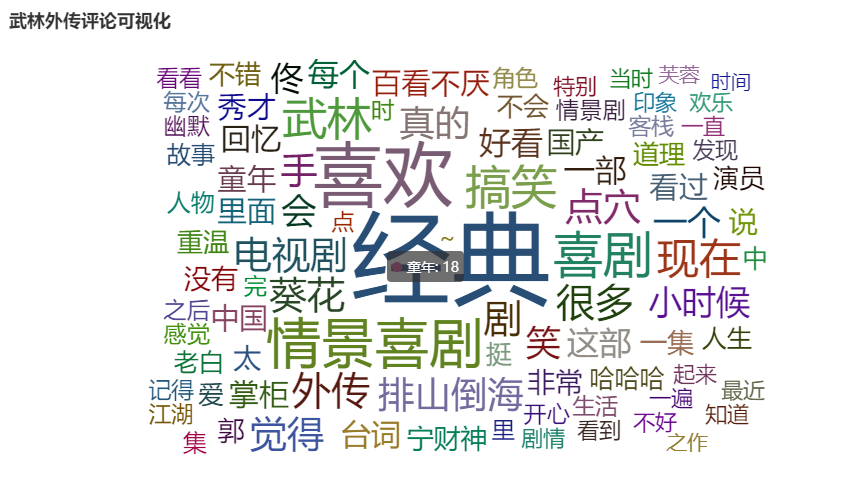

第三步 词云可视化

from pyecharts import options as opts from pyecharts.charts import Page, WordCloud from pyecharts.globals import SymbolType from pyecharts.charts import Bar from pyecharts.render import make_snapshot from snapshot_selenium import snapshot words = [ ("经典",100), ("喜欢",65), ("情景喜剧",47), ("喜剧",42), ("搞笑",37), ("武林",36), ("现在",33), ("剧",29), ("外传",29), ("很多",29), ("点穴",28), ("葵花",28), ("电视剧",28), ("手",27), ("觉得",27), ("排山倒海",26), ("真的",25), ("会",25), ("笑",24), ("一个",23), ("小时候",23), ("佟",22), ("好看",21), ("这部",21), ("一部",20), ("每个",20), ("掌柜",19), ("台词",19), ("回忆",19), ("看过",18), ("里面",18), ("百看不厌",18), ("童年",18), ("秀才",18), ("国产",17), ("说",17), ("中国",17), ("太",16), ("非常",16), ("一集",15), ("郭",15), ("宁财神",15), ("没有",15), ("不错",14), ("不会",14), ("道理",14), ("重温",14), ("挺",14), ("演员",14), ("爱",13), ("中",13), ("哈哈哈",13), ("人生",13), ("老白",13), ("人物",12), ("故事",12), ("集",12), ("情景剧",11), ("开心",11), ("感觉",11), ("之后",11), ("点",11), ("时",11), ("幽默",11), ("每次",11), ("角色",10), ("完",10), ("里",10), ("客栈",10), ("看看",10), ("发现",10), ("生活",10), ("江湖",10), ("~",10), ("记得",10), ("起来",9), ("特别",9), ("剧情",9), ("一直",9), ("一遍",9), ("印象",9), ("看到",9), ("不好",9), ("当时",9), ("最近",9), ("欢乐",9), ("知道",9), ("芙蓉",8), ("之作",8), ("绝对",8), ("无法",8), ("十年",8), ("依然",8), ("巅峰",8), ("好像",8), ("长大",8), ("深刻",8), ("无聊",8), ("以前",7), ("时间",7), ] def wordcloud_base() -> WordCloud: c = ( WordCloud() .add("", words, word_size_range=[20, 100],shape="triangle-forward",) .set_global_opts(title_opts=opts.TitleOpts(title="WordCloud-基本示例")) ) return c make_snapshot(snapshot, wordcloud_base().render(), "bar.png") # wordcloud_base().render()

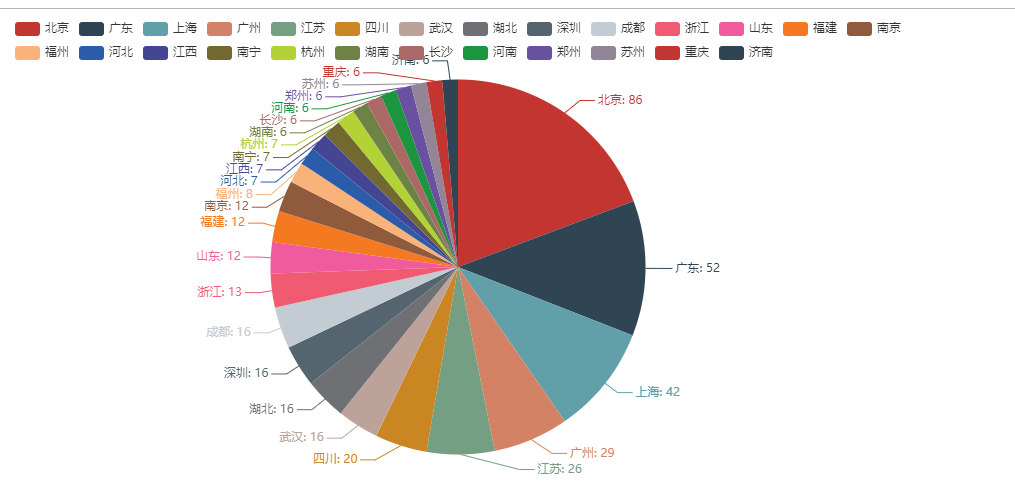

二 用户地址可视化

用户所在地成都热点图

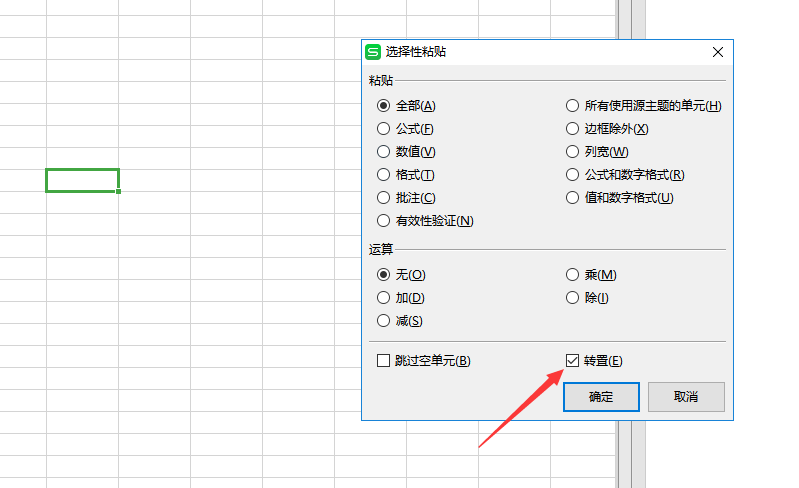

程序脚本:这里需要注意这里的城市一定要是中国城市的名称,为了处理元数据用了xlml(f)+py 随便放一下py脚本

数据处理 f=open("city.txt",'r') for i in f.readlines(): #print(i,end=",") print('"'+i.strip()+'"',end=",")

from example.commons import Faker from pyecharts import options as opts from pyecharts.charts import Geo from pyecharts.globals import ChartType, SymbolType def geo_base() -> Geo: c = ( Geo() .add_schema(maptype="china") .add("geo", [list(z) for z in zip(["北京","广东","上海","广州","江苏","四川","武汉","湖北","深圳","成都","浙江","山东","福建","南京","福州","河北","江西","南宁","杭州","湖南","长沙","河南","郑州","苏州","重庆","济南","黑龙江","石家庄","西安","南昌","陕西","哈尔滨","吉林","厦门","天津","沈阳","香港","青岛","无锡","贵州"], ["86","52","42","29","26","20","16","16","16","16","13","12","12","12","8","7","7","7","7","6","6","6","6","6","6","6","5","5","5","5","5","5","4","4","4","4","3","3","3","3"])]) .set_series_opts(label_opts=opts.LabelOpts(is_show=False)) .set_global_opts( visualmap_opts=opts.VisualMapOpts(), title_opts=opts.TitleOpts(title="城市热点图"), ) ) return c geo_base().render()

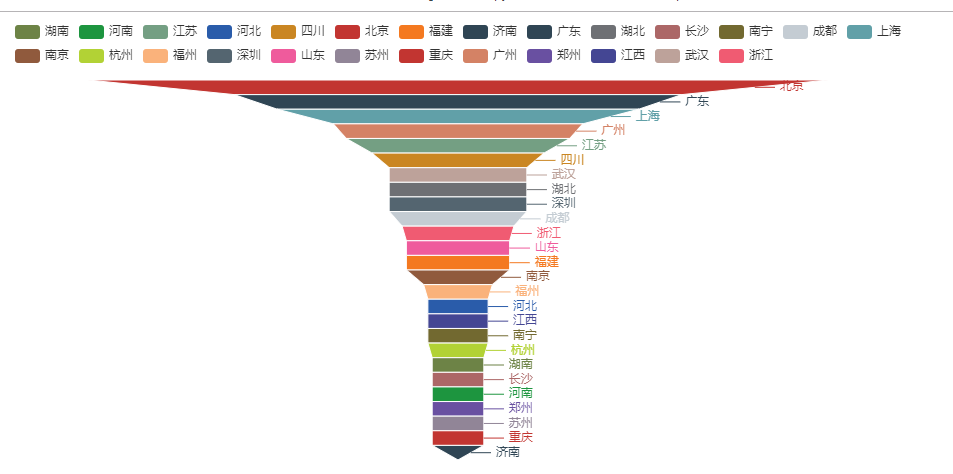

漏斗图 由于页面适配的问题这里已经筛减了很多城市了

from example.commons import Faker from pyecharts import options as opts from pyecharts.charts import Funnel, Page def funnel_base() -> Funnel: c = ( Funnel() .add("geo", [list(z) for z in zip( ["北京", "广东", "上海", "广州", "江苏", "四川", "武汉", "湖北", "深圳", "成都", "浙江", "山东", "福建", "南京", "福州", "河北", "江西", "南宁", "杭州", "湖南", "长沙", "河南", "郑州", "苏州", "重庆", "济南"], ["86", "52", "42", "29", "26", "20", "16", "16", "16", "16", "13", "12", "12", "12", "8", "7", "7", "7", "7", "6", "6", "6", "6", "6", "6", "6"])]) .set_global_opts(title_opts=opts.TitleOpts()) ) return c funnel_base().render('漏斗图.html')

饼图

from example.commons import Faker from pyecharts import options as opts from pyecharts.charts import Page, Pie def pie_base() -> Pie: c = ( Pie() .add("", [list(z) for z in zip( ["北京", "广东", "上海", "广州", "江苏", "四川", "武汉", "湖北", "深圳", "成都", "浙江", "山东", "福建", "南京", "福州", "河北", "江西", "南宁", "杭州", "湖南", "长沙", "河南", "郑州", "苏州", "重庆", "济南"], ["86", "52", "42", "29", "26", "20", "16", "16", "16", "16", "13", "12", "12", "12", "8", "7", "7", "7", "7", "6", "6", "6", "6", "6", "6", "6"])]) .set_global_opts(title_opts=opts.TitleOpts()) .set_series_opts(label_opts=opts.LabelOpts(formatter="{b}: {c}")) ) return c pie_base().render("饼图.html")

评论情绪化分析代码如下

from snownlp import SnowNLP f=open("comment.txt",'r') sentiments=0 count=0 point2=0 point3=0 point4=0 point5=0 point6=0 point7=0 point8=0 point9=0 for i in f.readlines(): s = SnowNLP(i) s1 = SnowNLP(s.sentences[0]) for p in s.sentences: s = SnowNLP(p) s1 = SnowNLP(s.sentences[0]) count+=1 if s1.sentiments > 0.9: point9+=1 elif s1.sentiments> 0.8 and s1.sentiments <=0.9: point8+=1 elif s1.sentiments> 0.7 and s1.sentiments <=0.8: point7+=1 elif s1.sentiments> 0.6 and s1.sentiments <=0.7: point6+=1 elif s1.sentiments> 0.5 and s1.sentiments <=0.6: point5+=1 elif s1.sentiments> 0.4 and s1.sentiments <=0.5: point4+=1 elif s1.sentiments> 0.3 and s1.sentiments <=0.4: point3+=1 elif s1.sentiments> 0.2 and s1.sentiments <=0.3: point2=1 print(s1.sentiments) sentiments+=s1.sentiments print(sentiments) print(count) avg1=int(sentiments)/int(count) print(avg1) print(point9) print(point8) print(point7) print(point6) print(point5) print(point4) print(point3) print(point2)

情绪可视化

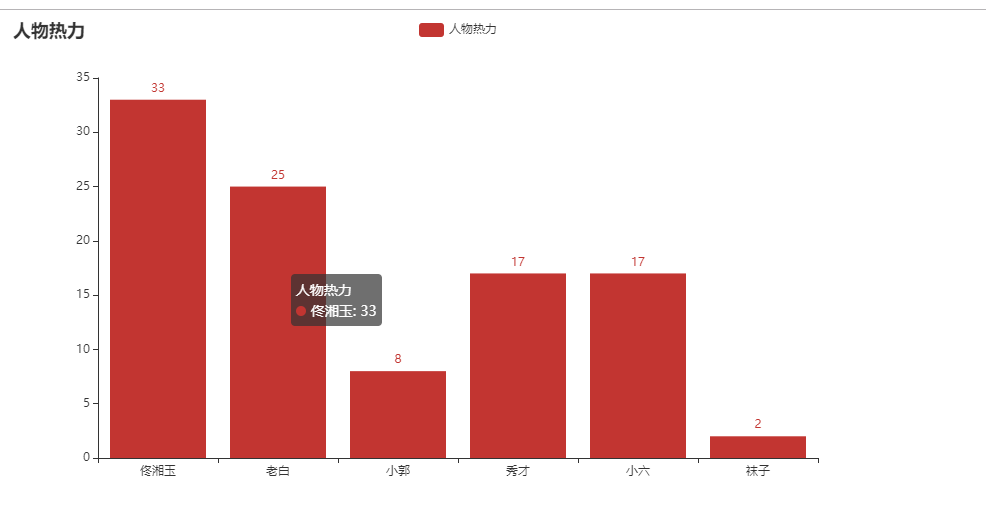

主要人物热力图

cat comment.txt | grep -E '佟|掌柜|湘玉|闫妮' | wc -l 33 cat comment.txt | grep -E '老白|展堂|盗圣' | wc -l 25 cat comment.txt | grep -E '大嘴' | wc -l 8 cat comment.txt | grep -E '小郭|郭|芙蓉' | wc -l 17 cat comment.txt | grep -E '秀才|吕轻侯' | wc -l 17 cat comment.txt | grep -E '小六' | wc -l 2

from pyecharts.charts import Bar from pyecharts import options as opts # V1 版本开始支持链式调用 bar = ( Bar() .add_xaxis(["佟湘玉", "老白", "小郭", "秀才", "小六", "袜子"]) .add_yaxis("人物热力", [33, 25, 8, 17, 17, 2]) .set_global_opts(title_opts=opts.TitleOpts(title="人物热力")) # 或者直接使用字典参数 # .set_global_opts(title_opts={"text": "主标题", "subtext": "副标题"}) ) bar.render("人物热力.html")

浙公网安备 33010602011771号

浙公网安备 33010602011771号