Winter Break Big Data Study Notes, Part 4

Today's topic is scraping images with Python.

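First, pick an image site you like, open the browser's developer tools, and look carefully through the page source for the markup that references the content you want to scrape.

The .JPG files are easy to spot. From there, fetch the source of the page, use a regular expression to filter out the matching .JPG URLs, then read each one and save it to disk. That's all there is to it!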
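To make the filtering step concrete, here is a minimal sketch of pulling image URLs out of a page's HTML with a regular expression. The `extract_image_urls` helper, the pattern, and the sample HTML with its `img.example.com` addresses are all made up for illustration; a real site will likely need a pattern tuned to its own markup.

```python
import re

# Hypothetical pattern: an http(s) URL that ends in a common image extension.
# Quotes, angle brackets and whitespace are excluded so a match stays inside one attribute.
IMAGE_URL_PATTERN = re.compile(r'https?://[^\s"\'<>]+?\.(?:jpe?g|png)', re.IGNORECASE)


def extract_image_urls(html):
    """Return the image URLs found in html, de-duplicated in first-seen order."""
    return list(dict.fromkeys(IMAGE_URL_PATTERN.findall(html)))


# Made-up sample standing in for a downloaded page
sample_html = '''
<img src="https://img.example.com/a/001.JPG" alt="first">
<img src="https://img.example.com/a/002.png">
<a href="https://img.example.com/a/001.JPG">again</a>
<a href="https://example.com/page.html">not an image</a>
'''

print(extract_image_urls(sample_html))
# ['https://img.example.com/a/001.JPG', 'https://img.example.com/a/002.png']
```

Using `dict.fromkeys` instead of `set` removes duplicates while keeping the order in which the images appear on the page.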

```python
import os
import random
import re
import time
from urllib import request

from fake_useragent import UserAgent


def url_open(url):
    """Fetch a URL and return the raw response bytes."""
    # Route the request through a randomly chosen proxy IP.
    # Note: an 'http' entry only applies to http:// URLs.
    proxies = ['39.106.114.143:80', '47.99.236.251:3128', '58.222.32.77:8080',
               '101.4.136.34:81', '39.137.95.71:80', '39.80.41.0:8060']
    proxy_support = request.ProxyHandler({'http': random.choice(proxies)})
    opener = request.build_opener(proxy_support)
    request.install_opener(opener)

    # Build the request headers
    header = {"User-Agent": UserAgent().random}
    if re.search(r'https://www\.xxx\.com/\d{6}', url):
        header = {
            "User-Agent": UserAgent().random,
            "Sec-Fetch-User": "?1",
            "Upgrade-Insecure-Requests": "1",
            "accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3;q=0.9",
            "cookie": "Hm_lvt_dbc355aef238b6c32b43eacbbf161c3c=1580652014; Hm_lpvt_dbc355aef238b6c32b43eacbbf161c3c=1580662027",
            # The referer value is missing from the original notes; the site
            # root is used here only as a placeholder assumption.
            "referer": "https://www.xxx.com/",
        }
    req = request.Request(url, headers=header)
    response = request.urlopen(req, timeout=300)
    html = response.read()
    # print(url)
    return html


def get_page(url):
    """Return the URLs of the pages that hold the images."""
    html = url_open(url).decode("utf-8")
    pattern = re.compile(r'https://www\.xxx\.com/\d{6}')
    # De-duplicate through a set, then convert back to a list
    # because sets do not support indexing
    return list(set(pattern.findall(html)))


def find_image(image_page_url):
    """Return the URL of every sub-page under one image page."""
    html = url_open(image_page_url).decode("utf-8")
    # An empty suffix yields the page URL itself; 1-3 digits yield its sub-pages
    pattern = re.compile(re.escape(image_page_url) + r"(\d{0,3})")
    suffixes = set(pattern.findall(html))
    return [image_page_url + x for x in suffixes]


def find_images_jpg(image_addr):
    """Return the first image URL found on each of the given pages."""
    images_url = []
    pattern = re.compile(r'https:[^\s]*?(?:jpeg|jpg|png|PNG|JPG)')
    for each in image_addr:
        html = url_open(each).decode("utf-8")
        matches = pattern.findall(html)
        # Skip pages without a match instead of raising IndexError
        if matches:
            images_url.append(matches[0])
    return images_url


def save_image(images, prefix):
    """Save the images to the current directory."""
    for i, each in enumerate(images, start=1):
        # Prefix the file name with the page id so that images from
        # different pages do not overwrite one another
        with open(f"{prefix}_{i}.jpg", "wb") as p:
            p.write(url_open(each))
        time.sleep(1)
        print(each)


def downloadimg(folder="meiimages"):
    """Main routine."""
    os.makedirs(folder, exist_ok=True)
    os.chdir(folder)
    url = "https://www.xxx.com/"
    # Collect the image page URLs (each ends in a six-digit id)
    image_page_url = get_page(url)
    # Visit each page in turn
    for i in image_page_url:
        image_addr = find_image(i + "/")
        time.sleep(2)
        images = find_images_jpg(image_addr)
        save_image(images, prefix=i.rsplit("/", 1)[-1])
        time.sleep(2)


# Program entry point
if __name__ == '__main__':
    downloadimg()
```
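One detail worth noting: the script saves every download with a `.jpg` extension, even though its pattern also matches PNG files. Below is a small sketch of deriving the local file name from the image URL instead. The `local_name` helper and the example URLs are hypothetical and not part of the original script.

```python
import os
from urllib.parse import urlparse


def local_name(image_url, index, prefix="img"):
    """Build a file name such as 'img_001.jpg' that keeps the URL's own extension."""
    # Only the path part matters; any query string (?size=large) is ignored
    path = urlparse(image_url).path
    # Fall back to .jpg when the URL carries no extension at all
    ext = os.path.splitext(path)[1].lower() or ".jpg"
    return f"{prefix}_{index:03d}{ext}"


print(local_name("https://img.example.com/a/001.JPG", 1))
# img_001.jpg
print(local_name("https://img.example.com/b/pic.png?size=large", 12, prefix="123456"))
# 123456_012.png
```

Passing the page id as `prefix` also keeps files from different pages apart.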

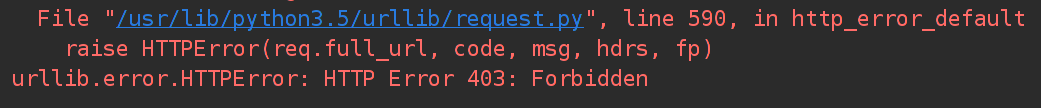

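The program's syntax and logic are sound, yet this crawl failed:

It hit a 403 error. The program triggered the site's anti-scraping mechanism, which stopped the run. The next task is to learn how to write request headers that make the server treat the script as an ordinary browser.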
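As a starting point for that next step, here is a rough sketch of sending browser-like headers with `urllib` and detecting a 403 response instead of crashing. The `build_request` and `fetch` helpers, the fixed User-Agent string, and the injectable `opener` parameter are my own assumptions for illustration. Headers alone may not be enough if a site also checks cookies, request rate, or IP address.

```python
import urllib.error
import urllib.request

# Assumed example of a desktop browser User-Agent string
DEFAULT_UA = ("Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
              "(KHTML, like Gecko) Chrome/120.0 Safari/537.36")


def build_request(url, referer=None, user_agent=DEFAULT_UA):
    """Create a Request that carries browser-like headers."""
    headers = {
        "User-Agent": user_agent,
        "Accept": "text/html,application/xhtml+xml,*/*;q=0.8",
    }
    if referer:
        # Some sites reject image requests that do not come from their own pages
        headers["Referer"] = referer
    return urllib.request.Request(url, headers=headers)


def fetch(url, referer=None, opener=urllib.request.urlopen, timeout=30):
    """Return the response body, or None when the server answers 403."""
    req = build_request(url, referer)
    try:
        with opener(req, timeout=timeout) as resp:
            return resp.read()
    except urllib.error.HTTPError as err:
        if err.code == 403:
            print("403 Forbidden for", url, "(the headers were probably rejected)")
            return None
        raise  # other HTTP errors are left for the caller to handle


# Example call (performs a real network request, so it is left commented out):
# data = fetch("https://www.xxx.com/", referer="https://www.xxx.com/")
```

Returning `None` on a 403 lets the calling loop skip that page and continue, and the `opener` parameter makes it possible to try the function without touching the network.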

Zhejiang Public Network Security Filing No. 33010602011771
