Deploying ELK + Filebeat to Collect nginx Logs

Preface

Introduction

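ELK (Elasticsearch, Logstash, Kibana) is an open-source solution for collecting and analyzing logs in near real time.

- Elasticsearch: an open-source, distributed search engine built on Lucene and accessed through a RESTful API. It provides near-real-time search and analytics for all types of data: structured or unstructured text, numeric data, and geospatial data are all stored and indexed in a way that supports fast search. In ELK it stores and analyzes the logs.

- Logstash: a log processing engine that collects, filters, and forwards logs. It can ingest data from multiple sources, transform it, and send it on to a "stash" such as Elasticsearch, regardless of format or complexity. In this article it receives and processes the data sent by Filebeat.

- Kibana: provides the web UI for visualization.

- Filebeat: collects the log data.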

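A complete logging system has the following basic features:

- Collection: gathers log data from multiple sources

- Transport: reliably parses, filters, and ships log data to the storage system

- Storage: stores the log data

- Analysis: supports analysis through a UI

- Alerting: provides error reporting and monitoring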

Deployment layout

| IP | Application | Version | Description |
|---|---|---|---|
| 192.168.2.249 | elasticsearch | 7.5.1 | Log storage and analysis, abbreviated "es" |
| 192.168.2.249 | logstash | 7.5.1 | Log processing |
| 192.168.2.249 | kibana | 7.5.1 | Data visualization |
| 192.168.2.249 | filebeat | 7.5.1 | Log collection |
| 192.168.2.249 | nginx | 1.21.5 | Log source |

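Steps

Preparation

- Install docker and docker-compose; see the cnblogs (博客园) post by 花酒锄作田, "linux离线安装docker与compose" (offline installation of Docker and Compose on Linux).

- Pull the Docker images for es, logstash, and kibana (version 7.5.1).

- Download the filebeat binary archive (from the official download page).

- Install nginx.

Commands used (run as the regular user admin, who has permission to use docker):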

# Pull the images
docker pull elasticsearch:7.5.1
docker pull logstash:7.5.1
docker pull kibana:7.5.1

# Create the directories for data and configuration files
mkdir -p "$HOME"/apps/{elasticsearch,logstash,kibana}
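Before moving on, it can help to confirm that the tools from the preparation list are actually on the PATH. The following is only a minimal sketch: the function name `require_cmds` is made up for this example and is not part of Docker or Compose.

```shell
# Hypothetical helper: report every command that is missing from PATH.
# Returns 0 when all commands are found, 1 otherwise.
require_cmds() {
    local missing=0
    local cmd
    for cmd in "$@"; do
        if ! command -v "$cmd" >/dev/null 2>&1; then
            echo "missing: $cmd" >&2
            missing=1
        fi
    done
    return "$missing"
}

# Check the two tools this article relies on.
require_cmds docker docker-compose || echo "install the missing tools first"
```

If nothing is printed, both commands were found and the `docker pull` commands above should be runnable by the current user, provided that user is allowed to talk to the Docker daemon.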

Installing elasticsearch

- Create es-compose.yaml:

version: '3'
services:
  elasticsearch:
    image: elasticsearch:7.5.1
    container_name: es
    environment:
      - discovery.type=single-node
      - bootstrap.memory_lock=true
      - "ES_JAVA_OPTS=-Xms1024m -Xmx1024m"
    ulimits:
      memlock:
        soft: -1
        hard: -1
    hostname: elasticsearch
    ports:
      - "9200:9200"
      - "9300:9300"

- Create and start the docker container:

docker-compose -f es-compose.yaml up -d

- Copy the data, config, modules, and plugins directories out of the container:

docker cp es:/usr/share/elasticsearch/data $HOME/apps/elasticsearch
docker cp es:/usr/share/elasticsearch/config $HOME/apps/elasticsearch
docker cp es:/usr/share/elasticsearch/modules $HOME/apps/elasticsearch
docker cp es:/usr/share/elasticsearch/plugins $HOME/apps/elasticsearch

- Modify es-compose.yaml (mainly to mount the directories that persist data):

version: '3'
services:
  elasticsearch:
    image: elasticsearch:7.5.1
    container_name: es
    environment:
      - discovery.type=single-node
      - bootstrap.memory_lock=true
      - "ES_JAVA_OPTS=-Xms1024m -Xmx1024m"
    ulimits:
      memlock:
        soft: -1
        hard: -1
    volumes:
      - /etc/localtime:/etc/localtime:ro
      - /etc/timezone:/etc/timezone:ro
      - /home/admin/apps/elasticsearch/modules:/usr/share/elasticsearch/modules
      - /home/admin/apps/elasticsearch/plugins:/usr/share/elasticsearch/plugins
      - /home/admin/apps/elasticsearch/data:/usr/share/elasticsearch/data
      - /home/admin/apps/elasticsearch/config:/usr/share/elasticsearch/config
    hostname: elasticsearch
    ports:
      - "9200:9200"
      - "9300:9300"

- Recreate the container so that the mounts take effect:

docker-compose -f es-compose.yaml up -d
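Elasticsearch usually needs some time after `up -d` before it answers requests. One way to check is the `_cluster/health` API. The sketch below assumes `curl` is installed and that the node is reachable at the address used in this article; `es_status` and `wait_for_es` are hypothetical helper names, and the `sed` expression is a rough extraction rather than a real JSON parser.

```shell
# Address taken from the deployment table; override ES_URL for another host.
ES_URL="${ES_URL:-http://192.168.2.249:9200}"

# Print the "status" field (green, yellow, or red) of the health response.
# Prints nothing if the node does not answer.
es_status() {
    curl -s --max-time 5 "$1/_cluster/health" |
        sed -n 's/.*"status":"\([a-z]*\)".*/\1/p'
}

# Hypothetical helper: poll until the node answers, at most $2 attempts.
wait_for_es() {
    local url="$1" tries="${2:-30}" status
    while [ "$tries" -gt 0 ]; do
        status="$(es_status "$url")"
        if [ -n "$status" ]; then
            echo "elasticsearch status: $status"
            return 0
        fi
        tries=$((tries - 1))
        sleep 2
    done
    echo "elasticsearch did not answer at $url" >&2
    return 1
}
```

Calling `wait_for_es "$ES_URL"` after the restart prints the status once the node responds. On a single-node setup like this one, `yellow` is common once indices exist, because replica shards have no second node to be allocated to.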

Installing logstash

- Create logstash-compose.yaml:

version: '3'
services:
  logstash:
    image: logstash:7.5.1
    container_name: logstash
    hostname: logstash
    ports:
      - "5044:5044"

- Start the docker container:

docker-compose -f logstash-compose.yaml up -d

- Copy the configuration out of the container:

docker cp logstash:/usr/share/logstash/config $HOME/apps/logstash
docker cp logstash:/usr/share/logstash/pipeline $HOME/apps/logstash

- Edit $HOME/apps/logstash/config/logstash.yml, for example:

http.host: "0.0.0.0"
xpack.monitoring.elasticsearch.hosts: [ "http://192.168.2.249:9200" ]

- Edit $HOME/apps/logstash/pipeline/logstash.conf so that Logstash listens for Beats on port 5044 and writes to Elasticsearch:

input {
  beats {
    port => 5044
    codec => "json"
  }
}

output {
  elasticsearch {
    hosts => ["http://192.168.2.249:9200"]
    index => "logstash-nginx-%{[@metadata][version]}-%{+YYYY.MM.dd}"
  }
}

- Modify logstash-compose.yaml to mount the copied configuration:

version: '3'
services:
  logstash:
    image: logstash:7.5.1
    container_name: logstash
    hostname: logstash
    ports:
      - "5044:5044"
    volumes:
      - /etc/localtime:/etc/localtime:ro
      - /etc/timezone:/etc/timezone:ro
      - /home/admin/apps/logstash/pipeline:/usr/share/logstash/pipeline
      - /home/admin/apps/logstash/config:/usr/share/logstash/config

- Recreate the container so that the mounts take effect:

docker-compose -f logstash-compose.yaml up -d
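The `index` setting in logstash.conf decides which Elasticsearch index each event lands in. As a rough illustration of how the pattern expands, the sketch below rebuilds the name by hand. `index_name` is a hypothetical helper, the version and date are example values, and the real expansion is done by Logstash itself.

```shell
# Sketch: mimic how the index option in logstash.conf expands for one event.
# $1 stands in for [@metadata][version], which Beats set to their own version.
# $2 stands in for the date of the event's @timestamp, in YYYY-MM-DD form.
index_name() {
    echo "logstash-nginx-$1-$(echo "$2" | tr '-' '.')"
}

index_name 7.5.1 2021-03-09   # prints logstash-nginx-7.5.1-2021.03.09
```

Logstash formats `%{+YYYY.MM.dd}` from `@timestamp` in UTC, so events logged shortly after local midnight in a UTC+8 timezone end up in the previous day's index.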

Installing kibana

- Create kibana-compose.yaml:

version: '3'
services:
  kibana:
    image: kibana:7.5.1
    container_name: kibana
    hostname: kibana
    ports:
      - "5601:5601"

- Start the container:

docker-compose -f kibana-compose.yaml up -d

- Copy the configuration out of the container:

docker cp kibana:/usr/share/kibana/config $HOME/apps/kibana

- Edit $HOME/apps/kibana/config/kibana.yml to set the es address and switch the UI language to Chinese:

server.name: kibana
server.host: "0"
elasticsearch.hosts: [ "http://192.168.2.249:9200" ]
xpack.monitoring.ui.container.elasticsearch.enabled: true
i18n.locale: "zh-CN"

- Modify kibana-compose.yaml to mount the copied configuration:

version: '3'
services:
  kibana:
    image: kibana:7.5.1
    container_name: kibana
    hostname: kibana
    ports:
      - "5601:5601"
    volumes:
      - /etc/localtime:/etc/localtime:ro
      - /etc/timezone:/etc/timezone:ro
      - /home/admin/apps/kibana/config:/usr/share/kibana/config

- Recreate the container so that the mounts take effect:

docker-compose -f kibana-compose.yaml up -d
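Kibana also takes a while to come up and cannot serve pages until it reaches Elasticsearch. A possible readiness check is to request its `/api/status` endpoint and look at the HTTP status code. This is a sketch under the assumption that `curl` is available and that a 200 response means Kibana is usable; `kibana_http_code` and `kibana_ready` are hypothetical helper names.

```shell
# Address taken from the deployment table; override KIBANA_URL if needed.
KIBANA_URL="${KIBANA_URL:-http://192.168.2.249:5601}"

# Print the HTTP status code returned by Kibana's /api/status endpoint.
kibana_http_code() {
    curl -s -o /dev/null --max-time 5 -w '%{http_code}' "$1/api/status"
}

# Succeed only when the status endpoint answers with 200.
kibana_ready() {
    [ "$(kibana_http_code "$1")" = "200" ]
}
```

For example, `kibana_ready "$KIBANA_URL" && echo "kibana is up"` can be run a minute or so after the restart. If it keeps failing, `docker logs kibana` shows what the container is waiting for.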

Changing the nginx log format

log_format json '{"@timestamp": "$time_iso8601", '
                '"connection": "$connection", '
                '"remote_addr": "$remote_addr", '
                '"remote_user": "$remote_user", '
                '"request_method": "$request_method", '
                '"request_uri": "$request_uri", '
                '"server_protocol": "$server_protocol", '
                '"status": "$status", '
                '"body_bytes_sent": "$body_bytes_sent", '
                '"http_referer": "$http_referer", '
                '"http_user_agent": "$http_user_agent", '
                '"http_x_forwarded_for": "$http_x_forwarded_for", '
                '"request_time": "$request_time"}';

Define the format in the http block and apply it there with: access_log logs/access.log json;
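After changing the format, `nginx -t` checks the configuration and `nginx -s reload` applies it. To see what a line in the new format looks like and to spot-check individual fields, something like the sketch below can be used. The sample line is made up for illustration, `json_field` is a hypothetical helper, and the `sed` expression only handles flat, single-line JSON with string values.

```shell
# Sketch: pull one string field out of a flat, single-line JSON log entry.
# This is a rough sed-based check, not a real JSON parser.
json_field() {
    printf '%s\n' "$1" | sed -n "s/.*\"$2\": *\"\([^\"]*\)\".*/\1/p"
}

# A made-up access log line in the format defined above.
sample='{"@timestamp": "2021-03-09T10:15:30+08:00", "connection": "12", "remote_addr": "192.168.2.10", "remote_user": "-", "request_method": "GET", "request_uri": "/index.html", "server_protocol": "HTTP/1.1", "status": "200", "body_bytes_sent": "612", "http_referer": "-", "http_user_agent": "curl/7.61.1", "http_x_forwarded_for": "-", "request_time": "0.000"}'

json_field "$sample" status        # prints 200
json_field "$sample" request_uri   # prints /index.html
```

A value that contains a double quote, such as an unusual user agent, would break the JSON produced by this format. nginx 1.11.8 and later accept `escape=json` in `log_format` (written as `log_format json escape=json '...'`), which escapes such characters; whether to adopt it is left to the reader.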

Installing filebeat

- Assume the filebeat archive has been downloaded, extracted, and renamed, so that the binary is located in /home/admin/apps/filebeat.

- Edit filebeat.yml, find the section below, disable the Elasticsearch output, and enable the Logstash output instead. Example:

#-------------------------- Elasticsearch output ------------------------------
#output.elasticsearch:
  # Array of hosts to connect to.
  #hosts: ["localhost:9200"]

  # Optional protocol and basic auth credentials.
  #protocol: "https"
  #username: "elastic"
  #password: "changeme"

#----------------------------- Logstash output --------------------------------
output.logstash:
  # The Logstash hosts
  hosts: ["192.168.2.249:5044"]

- Enable the nginx module:

./filebeat modules enable nginx

- Configure the nginx module by editing ./modules.d/nginx.yml. The main change is the path to the nginx logs. Example:

# Module: nginx
# Docs: https://www.elastic.co/guide/en/beats/filebeat/7.5/filebeat-module-nginx.html

- module: nginx
  # Access logs
  access:
    enabled: true

    # Set custom paths for the log files. If left empty,
    # Filebeat will choose the paths depending on your OS.
    var.paths: ["/home/admin/apps/nginx/logs/*access.log"]

  # Error logs
  error:
    enabled: true

    # Set custom paths for the log files. If left empty,
    # Filebeat will choose the paths depending on your OS.
    var.paths: ["/home/admin/apps/nginx/logs/*error.log"]

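- Check the configuration file and the connection to the output:

./filebeat test config
./filebeat test output

- Once both checks pass, start filebeat in the background:

nohup ./filebeat -e -c ./filebeat.yml > /dev/null 2>&1 &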

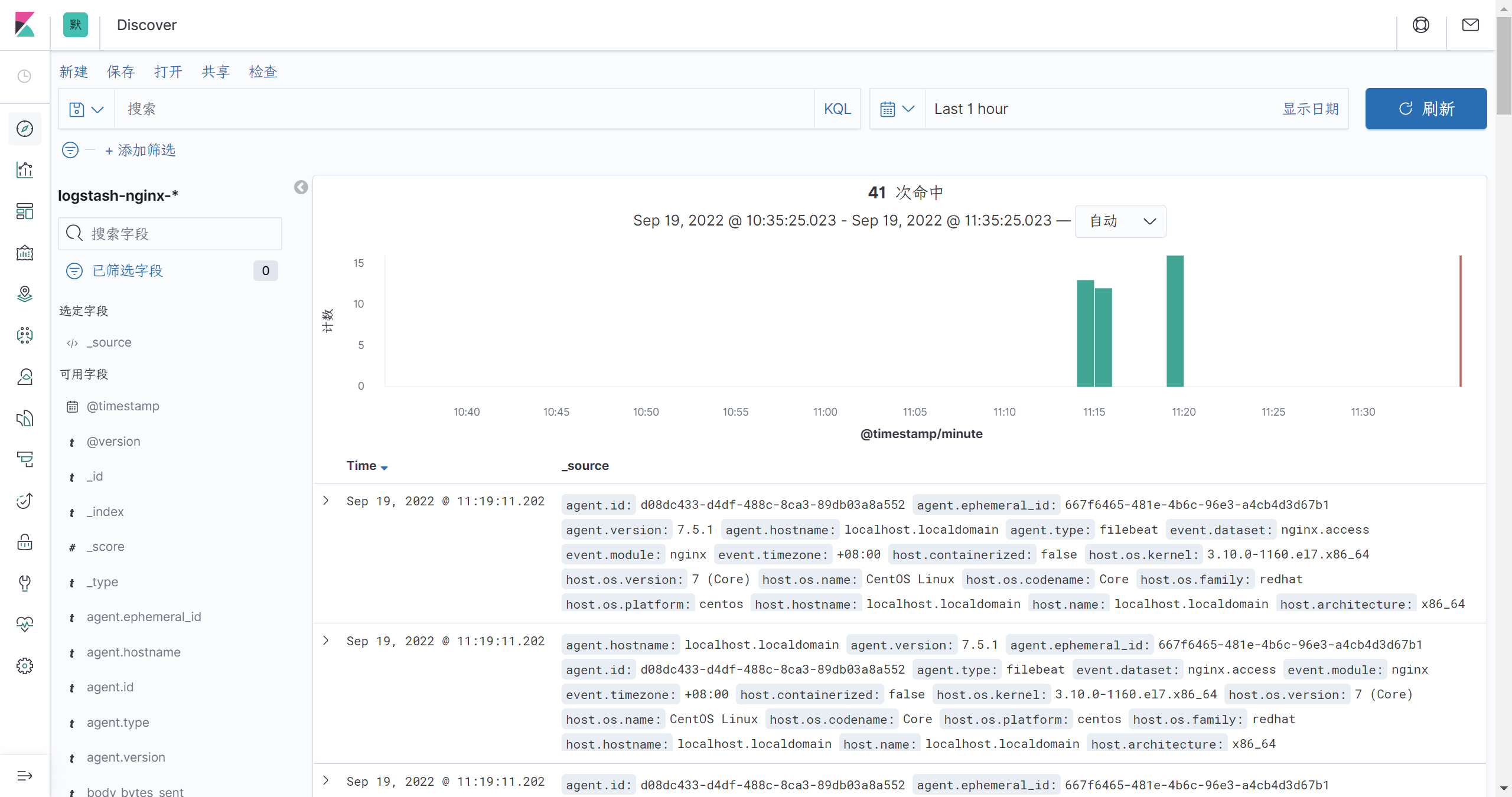

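Viewing the logs in Kibana

- Open the Kibana web page on port 5601.

- In the left-hand menu, open Management - Index Patterns - Create index pattern.

- Enter logstash-nginx-* and click Next step (if the button cannot be clicked, select the timestamp field).

- Open Discover in the left-hand menu and select logstash-nginx-* to browse the nginx logs.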
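If Kibana reports that no index matches logstash-nginx-*, it is worth checking whether any events have reached Elasticsearch at all. The sketch below queries the `_cat/indices` API for matching index names. It assumes `curl` is installed and that a wildcard pattern with no match returns an empty body; `list_indices` and `has_indices` are hypothetical helper names.

```shell
# Address taken from the deployment table; override ES_URL for another host.
ES_URL="${ES_URL:-http://192.168.2.249:9200}"

# Print the names of all indices matching pattern $2, one per line.
list_indices() {
    curl -s --max-time 5 "$1/_cat/indices/$2?h=index"
}

# Succeed when at least one index matches the pattern.
has_indices() {
    [ -n "$(list_indices "$1" "$2")" ]
}
```

Running `list_indices "$ES_URL" 'logstash-nginx-*'` after sending a few requests to nginx should list at least one index. An empty result points at filebeat or logstash rather than Kibana, so the next things to look at are `./filebeat test output` and `docker logs logstash`.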


This article comes from cnblogs (博客园), author: 花酒锄作田. When reposting, please cite the original link: https://www.cnblogs.com/XY-Heruo/p/14503356.html