Understanding How Web Crawlers Work

Assignment source: https://edu.cnblogs.com/campus/gzcc/GZCC-16SE1/homework/2881

1. Briefly explain how a crawler works

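A crawler sends HTTP requests to web pages, much as a browser does, and extracts the data it needs from the responses.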
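The request-then-extract cycle can be sketched with only the Python standard library. The sample HTML string, the `LinkCollector` class, and the `extract_links` helper are hypothetical names chosen for illustration; a real crawler would obtain the HTML over HTTP rather than from a literal.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag seen while parsing."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def extract_links(html, base_url):
    """Return absolute URLs for all links found in an HTML string."""
    collector = LinkCollector()
    collector.feed(html)
    return [urljoin(base_url, href) for href in collector.hrefs]


# Hypothetical page body standing in for a real HTTP response.
sample_html = (
    '<ul><li><a href="/html/a.html">A</a></li>'
    '<li><a href="b.html">B</a></li></ul>'
)
links = extract_links(sample_html, "http://news.gzcc.cn/html/xiaoyuanxinwen/")
print(links)
```

A crawler would typically feed the extracted links back into its queue of pages to visit, which is what turns a single fetch into a crawl.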

2. Understand the crawler development process

1). Briefly explain how a browser works;

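The browser parses the URL and resolves the domain name to an IP address through DNS. It then opens a network connection and sends an HTTP request, and the HTTP message travels to the server. The server receives the data, handles the request (for example through an MVC framework), and returns a response. The client receives the data, and the browser loads and renders the page, painting the web page that the user sees.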
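The first steps in that sequence, parsing the URL and composing the HTTP request, can be illustrated in a few lines. This is a simplified sketch, not what a browser actually executes: `build_get_request` is a hypothetical helper, and the DNS lookup, the TCP connection, and rendering are left out.

```python
from urllib.parse import urlsplit


def build_get_request(url):
    """Split a URL and compose the text of a minimal HTTP/1.1 GET request."""
    parts = urlsplit(url)
    host = parts.hostname
    default_port = 443 if parts.scheme == "https" else 80
    port = parts.port or default_port
    path = parts.path or "/"
    if parts.query:
        path += "?" + parts.query
    # The Host header carries the port only when it is not the scheme default.
    host_header = host if port == default_port else f"{host}:{port}"
    request = (
        f"GET {path} HTTP/1.1\r\n"
        f"Host: {host_header}\r\n"
        "Connection: close\r\n"
        "\r\n"
    )
    return host, port, request


host, port, request = build_get_request("http://news.gzcc.cn/html/xiaoyuanxinwen/")
print(host, port)
print(request)
```

The host name is what DNS resolves to an IP address, and the request text is what would be written to the connection once it is open.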

2). Use the requests library to fetch website data;

requests.get(url) fetches the HTML of the campus news home page:

import requests

url = 'http://news.gzcc.cn/html/xiaoyuanxinwen/'
html = requests.get(url)
html.encoding = 'utf-8'
print(html.text)
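Setting `html.encoding` matters because the response body arrives as bytes and has to be decoded with the right charset. When a server sends a text content type without declaring a charset, requests falls back to ISO-8859-1, which garbles Chinese text. The sketch below reproduces the effect with plain byte strings; the sample text is made up and no network request is involved.

```python
# Bytes as a server might send them for a UTF-8 encoded page (made-up sample text).
body = "校园新闻".encode("utf-8")

# Decoding with the wrong charset yields mojibake instead of raising an error,
# because every byte value maps to some ISO-8859-1 character.
wrong = body.decode("iso-8859-1")

# Decoding with the page's real charset recovers the text.
right = body.decode("utf-8")

print(repr(wrong))
print(right)
```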

3). Understand web pages

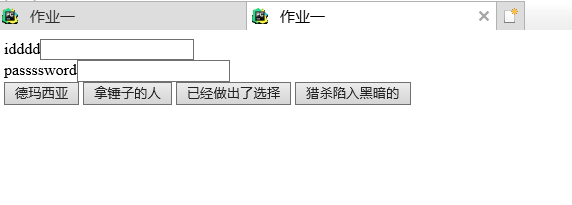

<!DOCTYPE html>
<html lang="en">
<head>
  <meta charset="UTF-8">
  <title>Assignment 1</title>
  <style>
  </style>
</head>
<body>
  <form>
    <div id="name" class='content'><label>idddd</label><input type="text"></div>
    <div id="password" class='content'><label>passssword</label><input type="text"></div>
  </form>
  <button>Demacia</button>
  <button>The one holding the hammer</button>
  <button>has already made a choice</button>
  <button>Hunt those lost in darkness</button>
</body>
</html>

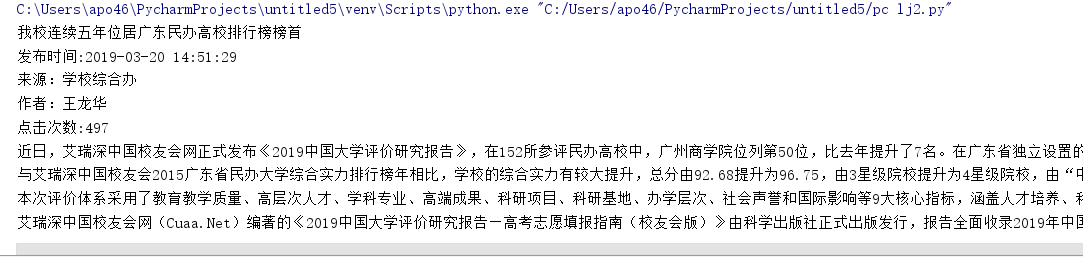
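To see how the tags, `id`s, and classes in a page like the one above become hooks for extraction, the sketch below walks a trimmed copy of that markup and records each element that carries an `id`. It uses the standard library's `html.parser` rather than BeautifulSoup only to stay dependency-free, and `IdIndexer` is a hypothetical name.

```python
from html.parser import HTMLParser


class IdIndexer(HTMLParser):
    """Maps each element id to its tag name and class attribute."""

    def __init__(self):
        super().__init__()
        self.by_id = {}

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if "id" in attributes:
            self.by_id[attributes["id"]] = (tag, attributes.get("class"))


# Trimmed copy of the form from the sample page.
page = """
<form>
<div id="name" class='content'><label>idddd</label><input type="text"></div>
<div id="password" class='content'><label>passssword</label><input type="text"></div>
</form>
"""

indexer = IdIndexer()
indexer.feed(page)
print(indexer.by_id)
```

With BeautifulSoup, the same elements would be reached through CSS selectors such as `#name` or `.content`.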

3. Extract the title, publication time, publishing unit, author, click count, and content of a campus news article

import requests
from bs4 import BeautifulSoup
from datetime import datetime

url = 'http://news.gzcc.cn/html/2019/xiaoyuanxinwen_0320/11029.html'
html = requests.get(url)
html.encoding = 'utf-8'  # decode the body as UTF-8 so Chinese text is not garbled
soup = BeautifulSoup(html.text, 'html.parser')

# Title
title = soup.select('.show-title')[0].text

# The info line is whitespace-separated. The first two tokens hold the date and time,
# and [5:] drops the leading 5-character '发布时间:' label.
info = soup.select('.show-info')[0].text.split()
time = ' '.join(info[0:2])[5:]
time = datetime.strptime(time, '%Y-%m-%d %H:%M:%S')
add = str(info[4])     # publishing unit
writer = str(info[2])  # author

# The click count comes from a separate API. Use the id of the article being
# scraped (taken from its URL) so the count belongs to the same article.
news_id = url.split('/')[-1].split('.')[0]
DingUrl = 'http://oa.gzcc.cn/api.php?op=count&id={}&modelid=80'.format(news_id)
ding = int(requests.get(DingUrl).text.split('.html')[-1][2:-3])
Ding = 'Clicks: {}'.format(ding)

# Body text, split on whitespace
Nr = soup.select('#content')[0].text.split()

print(title)
print('Published: {}'.format(time))
print(add)
print(writer)
print(Ding)
print(Nr[0]+'\n'+Nr[2]+'\n'+Nr[4]+'\n'+Nr[6])
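Positional indexing into `split()` output breaks as soon as a field is missing or reordered. As one possible alternative, the sketch below pulls labelled fields out with regular expressions. Both sample strings are assumptions reconstructed from how the code above slices its input, not captured from the site, and `parse_info` and `parse_hits` are hypothetical helpers.

```python
import re
from datetime import datetime

# Assumed shape of the '.show-info' text (sample values are made up).
info = "发布时间:2019-03-20 10:30:00 作者:张三 审核:李四 来源:新闻中心 点击:次"
# Assumed shape of the click-count API response.
count_js = "$('#hits').html('133');"


def parse_info(text):
    """Extract publish time, author, and source from a labelled info line."""
    # Accept both the ASCII and the full-width colon after each label.
    published = re.search(
        r"发布时间[::]\s*(\d{4}-\d{2}-\d{2})\s+(\d{2}:\d{2}:\d{2})", text
    )
    author = re.search(r"作者[::]\s*(\S+)", text)
    source = re.search(r"来源[::]\s*(\S+)", text)
    return {
        "published": (
            datetime.strptime(" ".join(published.groups()), "%Y-%m-%d %H:%M:%S")
            if published
            else None
        ),
        "author": author.group(1) if author else None,
        "source": source.group(1) if source else None,
    }


def parse_hits(js):
    """Return the integer inside $('#hits').html('...'), or None if absent."""
    match = re.search(r"\('#hits'\)\.html\('(\d+)'\)", js)
    return int(match.group(1)) if match else None


fields = parse_info(info)
hits = parse_hits(count_js)
print(fields)
print(hits)
```

Returning `None` for a missing field lets the caller decide how to handle an incomplete page instead of failing with an `IndexError`.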

Posted on 2019-04-01 21:10 by 刘杰_winslow