Deploying a Kafka Cluster on Kubernetes

System environment

- Kubernetes version: 1.23.17

- ZooKeeper version: 3.5.9

- Kafka version: 3.6.0

ZooKeeper deployment

The StatefulSet controller creates three Pods, each with one container running a ZooKeeper server.

---
apiVersion: v1
kind: Service
metadata:
  name: zk-hs
  labels:
    app: zk
spec:
  ports:
    - port: 2888
      name: server
    - port: 3888
      name: leader-election
  clusterIP: None
  selector:
    app: zk

---
apiVersion: v1
kind: Service
metadata:
  name: zk-cs
  labels:
    app: zk
spec:
  ports:
    - port: 2181
      name: client
  selector:
    app: zk

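---
# policy/v1beta1 still works on 1.23 but is deprecated since v1.21 and removed in v1.25;
# policy/v1 is the replacement.
apiVersion: policy/v1beta1
kind: PodDisruptionBudget
metadata:
  name: zk-pdb
spec:
  selector:
    matchLabels:
      app: zk
  maxUnavailable: 1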

---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: zk
spec:
  selector:
    matchLabels:
      app: zk
  serviceName: zk-hs
  replicas: 3
  updateStrategy:
    type: RollingUpdate
  podManagementPolicy: Parallel
  template:
    metadata:
      labels:
        app: zk
    spec:
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            - labelSelector:
                matchExpressions:
                  - key: app
                    operator: In
                    values:
                      - zk
              topologyKey: kubernetes.io/hostname
      containers:
        - name: kubernetes-zookeeper
          imagePullPolicy: IfNotPresent
          #image: 'kubebiz/zookeeper:3.5.9'
          image: 'registry.us-east-1.aliyuncs.com/y110/zookeeper:3.5.9'
          ports:
            - containerPort: 2181
              name: client
            - containerPort: 2888
              name: server
            - containerPort: 3888
              name: leader-election
          command:
            - sh
            - '-c'
            - >-
              start-zookeeper --servers=3
              --log_dir=/logs
              --data_dir=/data
              --data_log_dir=/datalog
              --conf_dir=/conf
              --client_port=2181
              --election_port=3888
              --server_port=2888
              --tick_time=2000
              --init_limit=10
              --sync_limit=5
              --heap=512M
              --max_client_cnxns=60
              --snap_retain_count=3
              --purge_interval=12
              --max_session_timeout=40000
              --min_session_timeout=4000
              --log_level=INFO
          readinessProbe:
            exec:
              command:
                - sh
                - '-c'
                - zookeeper-ready 2181
            initialDelaySeconds: 10
            timeoutSeconds: 5
          livenessProbe:
            exec:
              command:
                - sh
                - '-c'
                - zookeeper-ready 2181
            initialDelaySeconds: 10
            timeoutSeconds: 5
          volumeMounts:
            - name: datadir
              mountPath: /var/lib/zookeeper
      securityContext:
        runAsUser: 1000
        fsGroup: 1000
  volumeClaimTemplates:
    - metadata:
        name: datadir
      spec:
        accessModes:
          - ReadWriteOnce
        storageClassName: nfs-storage
        resources:
          requests:
            storage: 1Gi
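
The manifest pairs a three-server ensemble with a PodDisruptionBudget of maxUnavailable: 1. ZooKeeper stays available only while a majority of servers is up, so a rough arithmetic check of that pairing might look like the sketch below. The function names quorum_size and max_failures are hypothetical helpers, not part of any ZooKeeper or Kubernetes tooling.

```shell
# Hypothetical helpers: majority-quorum arithmetic for an ensemble of N servers.

# Smallest number of servers that forms a majority of N.
quorum_size() {
  echo $(( $1 / 2 + 1 ))
}

# Number of servers that can be down while a majority is still up.
max_failures() {
  echo $(( $1 - ($1 / 2 + 1) ))
}

quorum_size 3    # prints 2
max_failures 3   # prints 1
```

With three replicas the ensemble tolerates one unavailable server, which is the same limit that maxUnavailable: 1 places on voluntary disruptions.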

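Leader election

Because there is no terminating algorithm for electing a leader in an anonymous network, Zab requires explicit membership configuration to perform leader election. Each server in the ensemble needs a unique identifier, all servers need to know the global set of identifiers, and each identifier needs to be associated with a network address.

Use kubectl exec to get the hostnames of the Pods in the zk StatefulSet. The StatefulSet controller gives each Pod a unique hostname based on its ordinal index. Hostnames take the form `<statefulset name>-<ordinal index>`.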

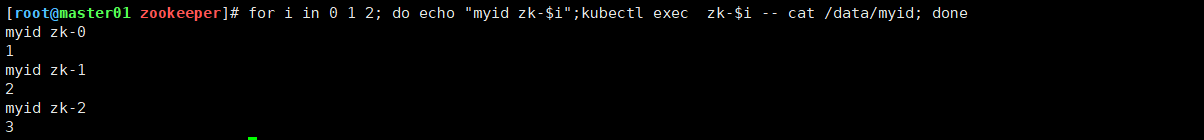
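
Since each hostname is the StatefulSet name followed by a hyphen and the ordinal, both parts can be recovered with plain POSIX parameter expansion. The following is a minimal sketch. The helper names pod_ordinal and statefulset_name are made up for illustration.

```shell
# Hypothetical helpers: split a StatefulSet Pod hostname such as "zk-2".

# Everything after the last hyphen is the ordinal index.
pod_ordinal() {
  printf '%s\n' "${1##*-}"
}

# Everything before the last hyphen is the StatefulSet name.
statefulset_name() {
  printf '%s\n' "${1%-*}"
}

pod_ordinal zk-2        # prints 2
statefulset_name zk-2   # prints zk
```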

Checking status

Check the contents of each server's myid file:

for i in 0 1 2; do echo "myid zk-$i";kubectl exec zk-$i -- cat /data/myid; done

Check the status of each ZooKeeper instance:

for i in 0 1 2; do echo "myid zk-$i";kubectl exec zk-$i -- zkServer.sh status; done

Get the fully qualified domain name (FQDN) of each Pod in the zk StatefulSet:

for i in 0 1 2; do kubectl exec zk-$i -- hostname -f; done
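
Behind a headless Service, each Pod's FQDN follows the pattern pod.service.namespace.svc.cluster-domain. As a sketch, a connection string for all ensemble members can be assembled from those parts. The helper name zk_connect is hypothetical, and the cluster domain cluster.local and the namespace default are assumptions that should be adjusted if the cluster differs.

```shell
# Hypothetical helper: build a comma-separated host:port list for the Pods
# of a StatefulSet behind a headless Service.
# Arguments: statefulset name, headless service, namespace, replicas, port.
# Assumes the cluster domain is "cluster.local".
zk_connect() {
  name=$1; svc=$2; ns=$3; replicas=$4; port=$5
  out=""
  i=0
  while [ "$i" -lt "$replicas" ]; do
    # Prepend a comma only when the list already has an entry.
    out="${out:+$out,}$name-$i.$svc.$ns.svc.cluster.local:$port"
    i=$((i + 1))
  done
  printf '%s\n' "$out"
}

zk_connect zk zk-hs default 3 2181
```

The resulting string has the same shape as the value Kafka expects for zookeeper.connect.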

Kafka deployment

---
apiVersion: v1
kind: Service
metadata:
  name: kafka-hs
  labels:
    app: kafka
spec:
  ports:
    - port: 9092
      name: server
  clusterIP: None
  selector:
    app: kafka

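---
# policy/v1beta1 still works on 1.23 but is deprecated since v1.21 and removed in v1.25;
# policy/v1 is the replacement.
apiVersion: policy/v1beta1
kind: PodDisruptionBudget
metadata:
  name: kafka-pdb
spec:
  selector:
    matchLabels:
      app: kafka
  maxUnavailable: 1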

---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: kafka
spec:
  serviceName: kafka-hs
  replicas: 3
  podManagementPolicy: Parallel
  updateStrategy:
    type: RollingUpdate
  selector:
    matchLabels:
      app: kafka
  template:
    metadata:
      labels:
        app: kafka
    spec:
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            - labelSelector:
                matchExpressions:
                  - key: app
                    operator: In
                    values:
                      - kafka
              topologyKey: kubernetes.io/hostname
        podAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
            - weight: 1
              podAffinityTerm:
                labelSelector:
                  matchExpressions:
                    - key: app
                      operator: In
                      values:
                        - zk
                topologyKey: kubernetes.io/hostname
      terminationGracePeriodSeconds: 300
      containers:
        - name: k8skafka
          imagePullPolicy: IfNotPresent
          image: 'harbor.jiajia.com/kafka/kafka:2.13-3.6.0'
          resources:
            requests:
              memory: 1Gi
              cpu: '0.5'
          ports:
            - containerPort: 9092
              name: server
          command:
            - sh
            - '-c'
            - >-
              exec kafka-server-start.sh /opt/kafka/config/server.properties
              --override broker.id=${HOSTNAME##*-}
              --override listeners=PLAINTEXT://$(hostname -i):9092
              --override zookeeper.connect=zk-0.zk-hs.default.svc.cluster.local:2181,zk-1.zk-hs.default.svc.cluster.local:2181,zk-2.zk-hs.default.svc.cluster.local:2181
              --override log.dirs=/var/lib/kafka
              --override auto.create.topics.enable=true
              --override auto.leader.rebalance.enable=true
              --override background.threads=10
              --override compression.type=producer
              --override delete.topic.enable=false
              --override leader.imbalance.check.interval.seconds=300
              --override leader.imbalance.per.broker.percentage=10
              --override log.flush.interval.messages=9223372036854775807
              --override log.flush.offset.checkpoint.interval.ms=60000
              --override log.flush.scheduler.interval.ms=9223372036854775807
              --override log.retention.bytes=-1
              --override log.retention.hours=168
              --override log.roll.hours=168
              --override log.roll.jitter.hours=0
              --override log.segment.bytes=1073741824
              --override log.segment.delete.delay.ms=60000
              --override message.max.bytes=1000012
              --override min.insync.replicas=1
              --override num.io.threads=8
              --override num.network.threads=3
              --override num.recovery.threads.per.data.dir=1
              --override num.replica.fetchers=1
              --override offset.metadata.max.bytes=4096
              --override offsets.commit.required.acks=-1
              --override offsets.commit.timeout.ms=5000
              --override offsets.load.buffer.size=5242880
              --override offsets.retention.check.interval.ms=600000
              --override offsets.retention.minutes=1440
              --override offsets.topic.compression.codec=0
              --override offsets.topic.num.partitions=50
              --override offsets.topic.replication.factor=3
              --override offsets.topic.segment.bytes=104857600
              --override queued.max.requests=500
              --override quota.consumer.default=9223372036854775807
              --override quota.producer.default=9223372036854775807
              --override replica.fetch.min.bytes=1
              --override replica.fetch.wait.max.ms=500
              --override replica.high.watermark.checkpoint.interval.ms=5000
              --override replica.lag.time.max.ms=10000
              --override replica.socket.receive.buffer.bytes=65536
              --override replica.socket.timeout.ms=30000
              --override request.timeout.ms=30000
              --override socket.receive.buffer.bytes=102400
              --override socket.request.max.bytes=104857600
              --override socket.send.buffer.bytes=102400
              --override unclean.leader.election.enable=true
              --override zookeeper.session.timeout.ms=6000
              --override zookeeper.set.acl=false
              --override broker.id.generation.enable=true
              --override connections.max.idle.ms=600000
              --override controlled.shutdown.enable=true
              --override controlled.shutdown.max.retries=3
              --override controlled.shutdown.retry.backoff.ms=5000
              --override controller.socket.timeout.ms=30000
              --override default.replication.factor=1
              --override fetch.purgatory.purge.interval.requests=1000
              --override group.max.session.timeout.ms=300000
              --override group.min.session.timeout.ms=6000
              --override log.cleaner.backoff.ms=15000
              --override log.cleaner.dedupe.buffer.size=134217728
              --override log.cleaner.delete.retention.ms=86400000
              --override log.cleaner.enable=true
              --override log.cleaner.io.buffer.load.factor=0.9
              --override log.cleaner.io.buffer.size=524288
              --override log.cleaner.io.max.bytes.per.second=1.7976931348623157E308
              --override log.cleaner.min.cleanable.ratio=0.5
              --override log.cleaner.min.compaction.lag.ms=0
              --override log.cleaner.threads=1
              --override log.cleanup.policy=delete
              --override log.index.interval.bytes=4096
              --override log.index.size.max.bytes=10485760
              --override log.message.timestamp.difference.max.ms=9223372036854775807
              --override log.message.timestamp.type=CreateTime
              --override log.preallocate=false
              --override log.retention.check.interval.ms=300000
              --override max.connections.per.ip=2147483647
              --override num.partitions=1
              --override producer.purgatory.purge.interval.requests=1000
              --override replica.fetch.backoff.ms=1000
              --override replica.fetch.max.bytes=1048576
              --override replica.fetch.response.max.bytes=10485760
              --override reserved.broker.max.id=1000
          env:
            - name: KAFKA_HEAP_OPTS
              value: '-Xmx512M -Xms512M'
            - name: KAFKA_OPTS
              value: '-Dlogging.level=INFO'
          volumeMounts:
            - name: datadir
              mountPath: /var/lib/kafka
          readinessProbe:
            exec:
              command:
                - sh
                - '-c'
                - >-
                  /opt/kafka/bin/kafka-broker-api-versions.sh
                  --bootstrap-server=$(hostname -i):9092
            timeoutSeconds: 5
      securityContext:
        runAsUser: 1000
        fsGroup: 1000
  volumeClaimTemplates:
    - metadata:
        name: datadir
      spec:
        accessModes:
          - ReadWriteOnce
        resources:
          requests:
            storage: 10Gi
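
The start command derives broker.id from the Pod hostname with ${HOSTNAME##*-}, so kafka-0, kafka-1 and kafka-2 become brokers 0, 1 and 2. Because the manifest also sets broker.id.generation.enable=true and reserved.broker.max.id=1000, a manually assigned id is expected to stay at or below that limit. The sketch below reproduces the derivation with a range check. The function name broker_id_from_hostname is a hypothetical helper, and the check is an illustration rather than what the Kafka image itself runs.

```shell
# Hypothetical helper: derive a broker id from a StatefulSet Pod hostname.
# Arguments: hostname, optional upper bound (defaults to 1000, matching
# reserved.broker.max.id in the manifest).
broker_id_from_hostname() {
  host=$1
  max_id=${2:-1000}
  # Same expansion as the manifest: keep what follows the last hyphen.
  id=${host##*-}
  case $id in
    ''|*[!0-9]*)
      echo "no numeric ordinal in hostname: $host" >&2
      return 1
      ;;
  esac
  if [ "$id" -gt "$max_id" ]; then
    echo "broker id $id exceeds reserved.broker.max.id=$max_id" >&2
    return 1
  fi
  printf '%s\n' "$id"
}

broker_id_from_hostname kafka-2   # prints 2
```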

Checking status and logs

[root@master01 sts]# kubectl get pods | grep kafka

kafka-0 1/1 Running 0 6m14s

kafka-1 1/1 Running 0 6m14s

kafka-2 1/1 Running 0 6m14s

kafka-ui-85cbf6f8dc-sf9gg 1/1 Running 26 (28h ago) 31d

Creating a topic

[root@master01 sts]# kubectl exec -it kafka-0 -- bash

kafka@kafka-0:/$ kafka-topics.sh --bootstrap-server kafka-0.kafka-hs.default.svc.cluster.local:9092 --create --topic test
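
The create command above passes no partition or replica counts, so the topic falls back to the broker defaults from the manifest (num.partitions=1 and default.replication.factor=1) and lives on a single broker. As a sketch, a command with explicit counts can be assembled and reviewed before running it. The helper topic_create_cmd and the topic name test3 are made up for illustration, while --partitions and --replication-factor are standard kafka-topics.sh options.

```shell
# Hypothetical helper: print (not run) a kafka-topics.sh create command with
# explicit partition and replica counts.
# Arguments: topic, partitions, replication factor, optional bootstrap server.
topic_create_cmd() {
  topic=$1; partitions=$2; replicas=$3
  bootstrap=${4:-kafka-0.kafka-hs.default.svc.cluster.local:9092}
  printf 'kafka-topics.sh --bootstrap-server %s --create --topic %s --partitions %s --replication-factor %s\n' \
    "$bootstrap" "$topic" "$partitions" "$replicas"
}

topic_create_cmd test3 3 3
```

The printed line can then be pasted into the shell inside the broker Pod. The replication factor must not exceed the number of brokers, which is three here.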

Producing and consuming messages

[root@master01 sts]# kubectl exec -it kafka-0 -- bash


kafka@kafka-0:/$ kafka-console-producer.sh --bootstrap-server kafka-0.kafka-hs.default.svc.cluster.local:9092 --topic test

>123

>456

>

[root@master01 sts]# kubectl exec -it kafka-2 -- bash

kafka@kafka-2:/$ kafka-console-consumer.sh --bootstrap-server kafka-1.kafka-hs.default.svc.cluster.local:9092 --topic test --from-beginning

123

456

This article is from cnblogs (博客园), author: &UnstopPable. When reposting, please cite the original link: https://www.cnblogs.com/Unstoppable9527/p/18339233
