Hadoop MapReduce Examples: WordCount + Mobile Phone Traffic Statistics

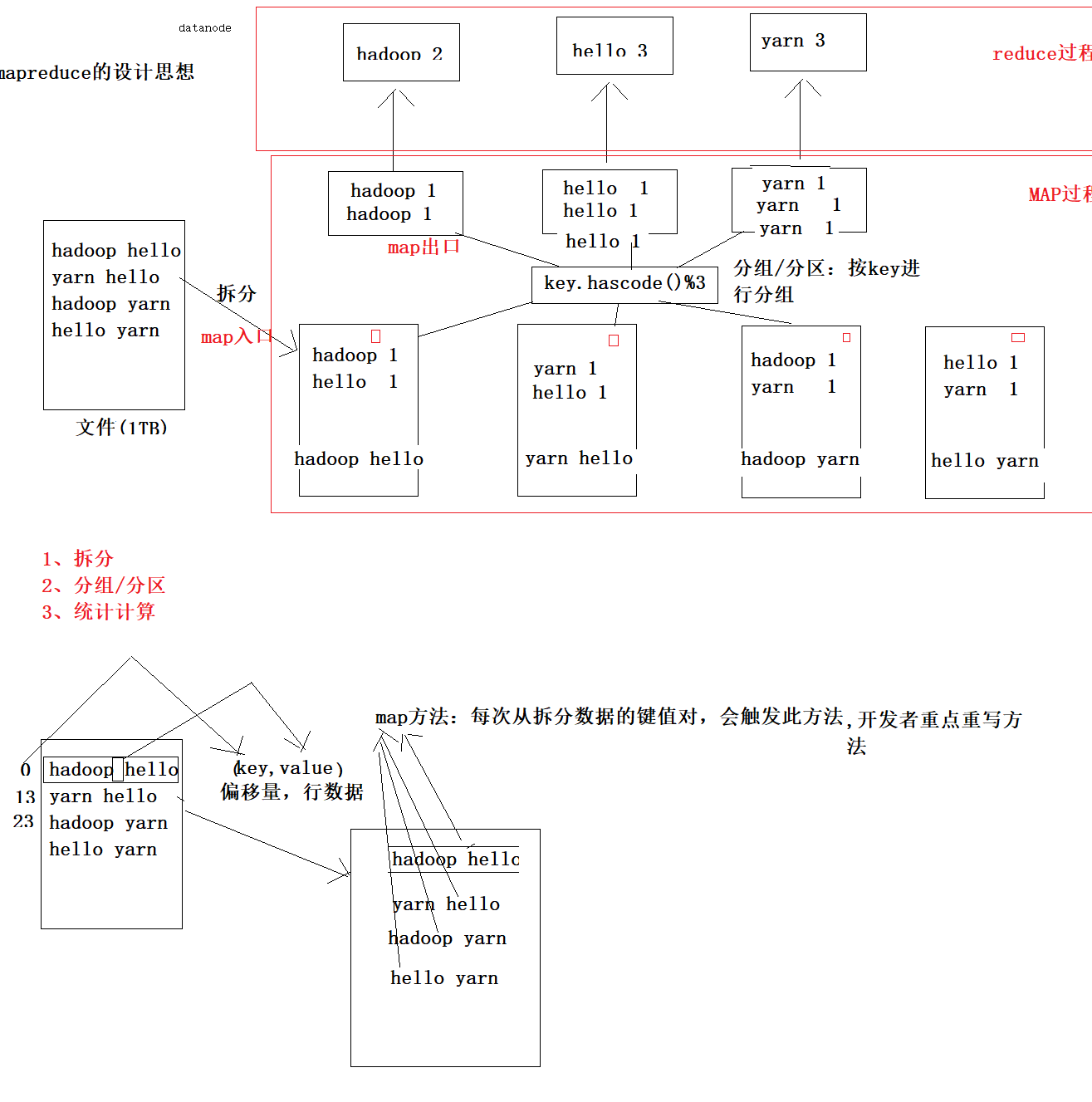

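MapReduce design concepts

Concept:

MapReduce is an application framework for distributed parallel computing.

It provides a simple API model. By writing a program that follows the rules of this model,

we get "distributed parallel computing" without implementing the distribution ourselves.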
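To make the model concrete, here is a minimal plain-Java sketch of the map, shuffle, and reduce steps for word counting. It does not use Hadoop at all; the class name `WordCountSketch` and its methods are hypothetical names chosen for illustration, and unlike the Hadoop mapper shown later it skips empty tokens.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java illustration of the map -> shuffle -> reduce flow (no Hadoop involved).
class WordCountSketch {

    // "map": turn one line into (word, 1) pairs.
    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String word : line.split(" ")) {
            if (!word.isEmpty()) {
                pairs.add(new AbstractMap.SimpleEntry<>(word, 1));
            }
        }
        return pairs;
    }

    // "shuffle": group all values that share a key, with keys kept in sorted order.
    static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return grouped;
    }

    // "reduce": sum the values collected for one key.
    static int reduce(List<Integer> values) {
        int sum = 0;
        for (int value : values) {
            sum += value;
        }
        return sum;
    }

    // Runs the three steps in sequence on a list of lines.
    static Map<String, Integer> wordCount(List<String> lines) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String line : lines) {
            pairs.addAll(map(line));
        }
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> group : shuffle(pairs).entrySet()) {
            counts.put(group.getKey(), reduce(group.getValue()));
        }
        return counts;
    }

    public static void main(String[] args) {
        // prints {hadoop=1, hello=2, world=1}
        System.out.println(wordCount(Arrays.asList("hello world", "hello hadoop")));
    }
}
```

In a real job the framework performs the shuffle and runs many map and reduce calls in parallel on different machines; only the `map` and `reduce` logic is written by hand.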

Case 1: the classic WordCount example

First, write the map class

package com.gec.demo;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

/*
 * Purpose: implements the map phase of the MapReduce job.
 * KEYIN:    type of the input key
 * VALUEIN:  type of the input value
 * KEYOUT:   type of the output key
 * VALUEOUT: type of the output value
 *
 * Input:  map(key, value) = (byte offset, line content)
 * Output: map(key, value) = (word, 1)
 *
 * Data types (Java type -> Hadoop type):
 *   int    -> IntWritable
 *   long   -> LongWritable
 *   String -> Text
 * All of these Hadoop types implement serialization.
 */
public class WcMapTask extends Mapper<LongWritable, Text, Text, IntWritable> {
    /*
     * Called once for every key/value pair of the input split, that is, once per line.
     * Parameter 1: the input key, the byte offset of the line
     * Parameter 2: the input value, the content of the line at that offset
     */
    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        String line = value.toString();
        String[] words = line.split(" ");
        for (String word : words) {
            context.write(new Text(word), new IntWritable(1));
        }
    }
}

Next is the reduce class

package com.gec.demo;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

/*
 * This class handles the reduce phase:
 * it sums up the occurrences of each word.
 * KEYIN:    type of the input key
 * VALUEIN:  type of the input value
 * KEYOUT:   type of the output key
 * VALUEOUT: type of the output value
 */
public class WcReduceTask extends Reducer<Text, IntWritable, Text, IntWritable> {
    /*
     * Parameter 1: the word
     * Parameter 2: the collection of counts emitted for that word
     */
    @Override
    protected void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
        int count = 0;
        for (IntWritable value : values) {
            count += value.get();
        }
        context.write(key, new IntWritable(count));
    }
}

Finally, the main (driver) class

package com.gec.demo;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

/*
 * Specifies the class that runs the map task
 * Specifies the class that runs the reduce task
 * Specifies the input file
 * Specifies the output path
 */
public class WcMrJob {
    public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {
        Configuration configuration = new Configuration();
        Job job = Job.getInstance(configuration);
        // set the driver class
        job.setJarByClass(WcMrJob.class);
        // set which map task to run
        job.setMapperClass(WcMapTask.class);
        // set which reduce task to run
        job.setReducerClass(WcReduceTask.class);
        // set the key type of the map output
        job.setMapOutputKeyClass(Text.class);
        // set the value type of the map output
        job.setMapOutputValueClass(IntWritable.class);
        // set the key and value types of the final output
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);
        // location of the data to process
        FileInputFormat.setInputPaths(job, "hdfs://hadoop-001:9000/wordcount/input/big.txt");
        // location where the result is saved
        FileOutputFormat.setOutputPath(job, new Path("hdfs://hadoop-001:9000/wordcount/output/"));
        // submit the job to the YARN cluster
        boolean res = job.waitForCompletion(true);
        System.exit(res ? 0 : 1);
    }
}

Double-click package to build the jar file mapreducewordcount-1.0-SNAPSHOT.jar, then copy the jar to hadoop-001.

The command is: hadoop jar mapreducewordcount-1.0-SNAPSHOT.jar com.gec.demo.WcMrJob

This runs the word count program.

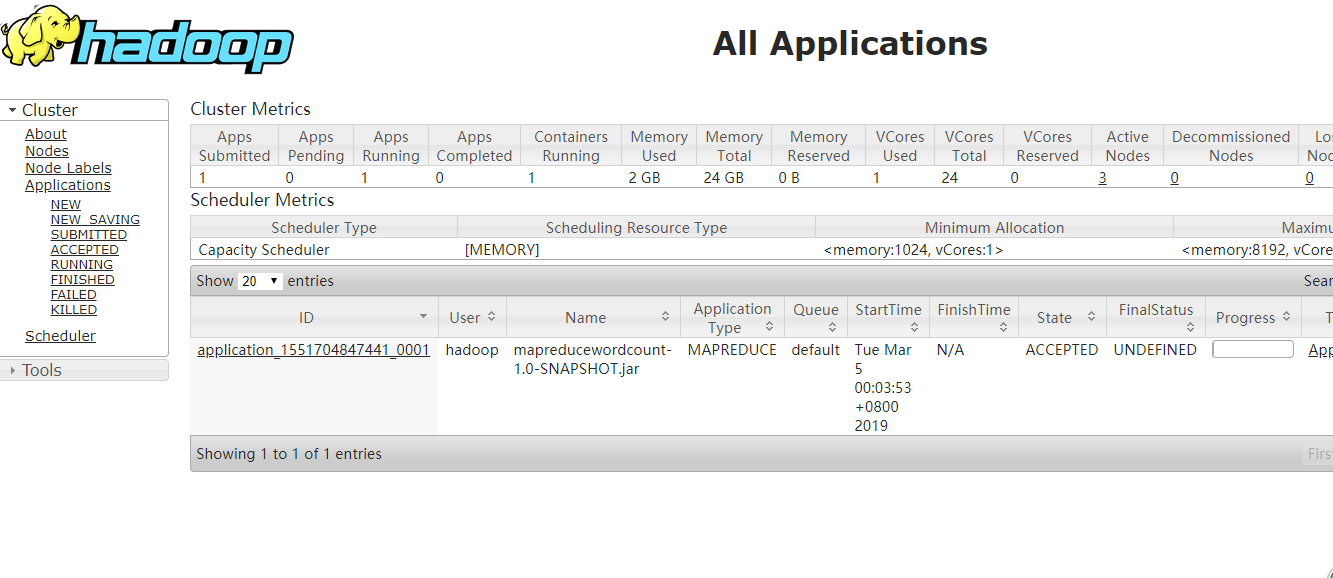

View the job in the YARN web UI in a browser

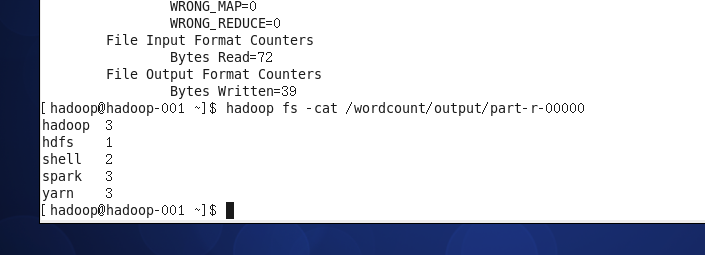

Use the following command to view the result:

hadoop fs -cat /wordcount/output/part-r-00000

Case 2: mobile phone traffic statistics

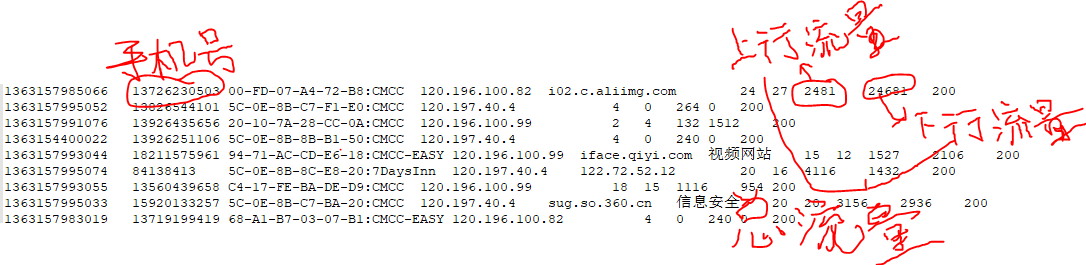

The file whose traffic is to be summarized has the following content:

1363157985066 13726230503 00-FD-07-A4-72-B8:CMCC 120.196.100.82 i02.c.aliimg.com 24 27 2481 24681 200

1363157995052 13826544101 5C-0E-8B-C7-F1-E0:CMCC 120.197.40.4 4 0 264 0 200

1363157991076 13926435656 20-10-7A-28-CC-0A:CMCC 120.196.100.99 2 4 132 1512 200

1363154400022 13926251106 5C-0E-8B-8B-B1-50:CMCC 120.197.40.4 4 0 240 0 200

1363157993044 18211575961 94-71-AC-CD-E6-18:CMCC-EASY 120.196.100.99 iface.qiyi.com 视频网站 15 12 1527 2106 200

1363157995074 84138413 5C-0E-8B-8C-E8-20:7DaysInn 120.197.40.4 122.72.52.12 20 16 4116 1432 200

1363157993055 13560439658 C4-17-FE-BA-DE-D9:CMCC 120.196.100.99 18 15 1116 954 200

1363157995033 15920133257 5C-0E-8B-C7-BA-20:CMCC 120.197.40.4 sug.so.360.cn 信息安全 20 20 3156 2936 200

1363157983019 13719199419 68-A1-B7-03-07-B1:CMCC-EASY 120.196.100.82 4 0 240 0 200

1363157984041 13660577991 5C-0E-8B-92-5C-20:CMCC-EASY 120.197.40.4 s19.cnzz.com 站点统计 24 9 6960 690 200

1363157973098 15013685858 5C-0E-8B-C7-F7-90:CMCC 120.197.40.4 rank.ie.sogou.com 搜索引擎 28 27 3659 3538 200

1363157986029 15989002119 E8-99-C4-4E-93-E0:CMCC-EASY 120.196.100.99 www.umeng.com 站点统计 3 3 1938 180 200

1363157992093 13560439658 C4-17-FE-BA-DE-D9:CMCC 120.196.100.99 15 9 918 4938 200

1363157986041 13480253104 5C-0E-8B-C7-FC-80:CMCC-EASY 120.197.40.4 3 3 180 180 200

1363157984040 13602846565 5C-0E-8B-8B-B6-00:CMCC 120.197.40.4 2052.flash2-http.qq.com 综合门户 15 12 1938 2910 200

1363157995093 13922314466 00-FD-07-A2-EC-BA:CMCC 120.196.100.82 img.qfc.cn 12 12 3008 3720 200

1363157982040 13502468823 5C-0A-5B-6A-0B-D4:CMCC-EASY 120.196.100.99 y0.ifengimg.com 综合门户 57 102 7335 110349 200

1363157986072 18320173382 84-25-DB-4F-10-1A:CMCC-EASY 120.196.100.99 input.shouji.sogou.com 搜索引擎 21 18 9531 2412 200

1363157990043 13925057413 00-1F-64-E1-E6-9A:CMCC 120.196.100.55 t3.baidu.com 搜索引擎 69 63 11058 48243 200

1363157988072 13760778710 00-FD-07-A4-7B-08:CMCC 120.196.100.82 2 2 120 120 200

1363157985066 13726238888 00-FD-07-A4-72-B8:CMCC 120.196.100.82 i02.c.aliimg.com 24 27 2481 24681 200

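In each line the phone number is the second field, and the upstream and downstream traffic are the third-to-last and second-to-last fields (the code below splits each line on tab characters). The goal is to compute the upstream traffic, downstream traffic, and total traffic for each phone number, where total traffic = upstream traffic + downstream traffic.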
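As a rough plain-Java sketch of that field extraction (no Hadoop involved), the helper below reads the phone number and the two traffic fields from one line. The class and method names are hypothetical, and the line is assumed to be tab-separated. The traffic fields are counted from the end of the line because some sample lines omit the host and category columns in the middle.

```java
// Plain-Java sketch of the per-line parsing; names are illustrative only.
class FlowLineSketch {

    // The phone number is the second tab-separated field.
    static String phoneOf(String line) {
        return line.split("\t")[1];
    }

    // Returns {upstream, downstream, total} for one tab-separated log line.
    static long[] parseFlow(String line) {
        String[] fields = line.split("\t");
        // Count from the end: the optional middle columns shift the positions otherwise.
        long up = Long.parseLong(fields[fields.length - 3]);
        long down = Long.parseLong(fields[fields.length - 2]);
        return new long[] {up, down, up + down};
    }

    public static void main(String[] args) {
        String line = "1363157985066\t13726230503\t00-FD-07-A4-72-B8:CMCC\t120.196.100.82"
                + "\ti02.c.aliimg.com\t24\t27\t2481\t24681\t200";
        long[] flow = parseFlow(line);
        // prints 13726230503 2481 24681 27162
        System.out.println(phoneOf(line) + " " + flow[0] + " " + flow[1] + " " + flow[2]);
    }
}
```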

The code is as follows:

FlowBean:

package com.gec.demo.bean;

import org.apache.hadoop.io.Writable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

/*

* A bean class that supports Hadoop serialization (Writable)

* */

public class FlowBean implements Writable

{

//upstream traffic

private long upFlow;

//downstream traffic

private long downFlow;

//total traffic

private long sumFlow;

public FlowBean() {

}

public FlowBean(long upFlow, long downFlow, long sumFlow) {

this.upFlow = upFlow;

this.downFlow = downFlow;

this.sumFlow = sumFlow;

}

public void setFlowData(long upFlow, long downFlow)

{

this.upFlow=upFlow;

this.downFlow=downFlow;

sumFlow=this.upFlow+this.downFlow;

}

public long getUpFlow() {

return upFlow;

}

public void setUpFlow(long upFlow) {

this.upFlow = upFlow;

}

public long getDownFlow() {

return downFlow;

}

public void setDownFlow(long downFlow) {

this.downFlow = downFlow;

}

public long getSumFlow() {

return sumFlow;

}

public void setSumFlow(long sumFlow) {

this.sumFlow = sumFlow;

}

//serialization

@Override

public void write(DataOutput out) throws IOException {

out.writeLong(this.getUpFlow());

out.writeLong(this.getDownFlow());

out.writeLong(this.getSumFlow());

}

//deserialization: fields must be read in the same order in which write() wrote them

@Override

public void readFields(DataInput in) throws IOException {

setUpFlow(in.readLong());

setDownFlow(in.readLong());

setSumFlow(in.readLong());

}

@Override

public String toString() {

return getUpFlow()+"\t"+getDownFlow()+"\t"+getSumFlow();

}

}
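The write/readFields pair above can be tried out without a cluster. The following is a minimal sketch that mimics the same pattern using only `java.io` streams; `FlowRecordSketch` is a hypothetical stand-in for FlowBean and does not implement Hadoop's `Writable` interface. It shows that the three long values survive a round trip as long as they are read in the order they were written.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// Stand-in for FlowBean that uses plain java.io streams instead of Hadoop's Writable.
class FlowRecordSketch {
    long upFlow;
    long downFlow;
    long sumFlow;

    // Serialize the three fields in a fixed order.
    void write(DataOutputStream out) throws IOException {
        out.writeLong(upFlow);
        out.writeLong(downFlow);
        out.writeLong(sumFlow);
    }

    // Deserialize in exactly the same order as write().
    void readFields(DataInputStream in) throws IOException {
        upFlow = in.readLong();
        downFlow = in.readLong();
        sumFlow = in.readLong();
    }

    // Writes a record to a byte array and reads it back into a new object.
    static FlowRecordSketch roundTrip(long up, long down) {
        FlowRecordSketch original = new FlowRecordSketch();
        original.upFlow = up;
        original.downFlow = down;
        original.sumFlow = up + down;
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            try (DataOutputStream out = new DataOutputStream(bytes)) {
                original.write(out);
            }
            FlowRecordSketch copy = new FlowRecordSketch();
            try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(bytes.toByteArray()))) {
                copy.readFields(in);
            }
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        FlowRecordSketch copy = roundTrip(2481L, 24681L);
        // prints 2481 24681 27162
        System.out.println(copy.upFlow + " " + copy.downFlow + " " + copy.sumFlow);
    }
}
```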

Mapper:

package com.gec.demo;

import com.gec.demo.bean.FlowBean;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.util.StringUtils;

import java.io.IOException;

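/*

KEYIN: LongWritable (byte offset of the line)

VALUEIN: Text (line content)

KEYOUT: Text (phone number)

VALUEOUT: FlowBean (traffic data)

*/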

public class PhoneFlowMapper extends Mapper<LongWritable, Text,Text, FlowBean>

{

private FlowBean flowBean=new FlowBean();

private Text keyText=new Text();

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

//get the line content

String line=value.toString();

String []fields=StringUtils.split(line,'\t');

//get the phone number

String phoneNum=fields[1];

//get the upstream traffic

long upflow=Long.parseLong(fields[fields.length-3]);

//get the downstream traffic

long downflow=Long.parseLong(fields[fields.length-2]);

flowBean.setFlowData(upflow,downflow);

keyText.set(phoneNum);

context.write(keyText,flowBean);

}

}

Reducer:

package com.gec.demo;

import com.gec.demo.bean.FlowBean;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class PhoneFlowReducer extends Reducer<Text, FlowBean,Text, FlowBean>

{

private FlowBean flowBean=new FlowBean();

/**

*key: the phone number

*values: the FlowBean records emitted for that phone number

*/

@Override

protected void reduce(Text key, Iterable<FlowBean> values, Context context) throws IOException, InterruptedException {

long sumDownFlow=0;

long sumUpFlow=0;

//sum up the traffic used by each phone

for (FlowBean value : values) {

sumUpFlow+=value.getUpFlow();

sumDownFlow+=value.getDownFlow();

}

flowBean.setFlowData(sumUpFlow,sumDownFlow);

context.write(key,flowBean);

}

}

Driver:

package com.gec.demo;

import com.gec.demo.bean.FlowBean;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

import java.io.IOException;

public class PhoneFlowApp {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

Configuration configuration=new Configuration();

Job job=Job.getInstance(configuration);

job.setJarByClass(PhoneFlowApp.class);

//job.setJar("");

job.setMapperClass(PhoneFlowMapper.class);

job.setReducerClass(PhoneFlowReducer.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(FlowBean.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(FlowBean.class);

job.setInputFormatClass(TextInputFormat.class);

job.setOutputFormatClass(TextOutputFormat.class);

//location of the data to process

FileInputFormat.setInputPaths(job, "hdfs://hadoop-001:9000/flowcount/input/HTTP_20130313143750.dat");

//location where the result is saved

FileOutputFormat.setOutputPath(job, new Path("hdfs://hadoop-001:9000/flowcount/output/"));

//submit the job to the YARN cluster

boolean res = job.waitForCompletion(true);

System.exit(res?0:1);

}

}

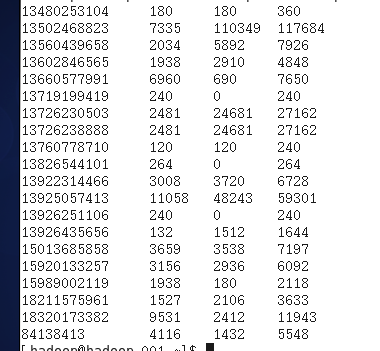

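The generated output file contains the following columns:

phone number, upstream traffic, downstream traffic, total traffic

To sort by total traffic and write the output to six different files according to the phone number, use the following code:

FlowBean: it must implement the WritableComparable interface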

package com.gec.demo.Bean;

import org.apache.hadoop.io.Writable;

import org.apache.hadoop.io.WritableComparable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

public class FlowBean implements WritableComparable<FlowBean> {

//upstream traffic

private long upFlow;

//downstream traffic

private long downFlow;

//total traffic

private long sumFlow;

public FlowBean() {

}

public long getUpFlow() {

return upFlow;

}

public void setUpFlow(long upFlow) {

this.upFlow = upFlow;

}

public long getDownFlow() {

return downFlow;

}

public void setDownFlow(long downFlow) {

this.downFlow = downFlow;

}

public long getSumFlow() {

return sumFlow;

}

public void setSumFlow(long sumFlow) {

this.sumFlow = sumFlow;

}

public FlowBean(long upFlow, long downFlow, long sumFlow) {

this.upFlow = upFlow;

this.downFlow = downFlow;

this.sumFlow = sumFlow;

}

public void setFlowData(long upFlow,long downFlow){

this.upFlow=upFlow;

this.downFlow=downFlow;

this.sumFlow=this.upFlow+this.downFlow;

}

@Override

public String toString() {

return getUpFlow()+" "+getDownFlow()+" "+getSumFlow();

}

//serialization

@Override

public void write(DataOutput dataOutput) throws IOException {

dataOutput.writeLong(this.getUpFlow());

dataOutput.writeLong(this.getDownFlow());

dataOutput.writeLong(this.getSumFlow());

}

//deserialization

@Override

public void readFields(DataInput dataInput) throws IOException {

setUpFlow(dataInput.readLong());

setDownFlow(dataInput.readLong());

setSumFlow(dataInput.readLong());

}

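//Sorts by total traffic in descending order. It never returns 0, so beans with equal totals are still treated as separate keys.

@Override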

public int compareTo(FlowBean o) {

if (o.getSumFlow()>this.getSumFlow()){

return 1;

}else

return -1;

}

}

//FlowPartitioner extends Partitioner and decides, based on the phone number prefix, which output file a record goes to

package com.gec.demo.partitioner;

import com.gec.demo.Bean.FlowBean;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Partitioner;

public class FlowPartitioner extends Partitioner<FlowBean, Text> {
    @Override
    public int getPartition(FlowBean flowBean, Text text, int i) {
        String phoneNum = text.toString();
        String headThreePhoneNum = phoneNum.substring(0, 3);
        if (headThreePhoneNum.equals("134")) {
            return 0;
        } else if (headThreePhoneNum.equals("135")) {
            return 1;
        } else if (headThreePhoneNum.equals("136")) {
            return 2;
        } else if (headThreePhoneNum.equals("137")) {
            return 3;
        } else if (headThreePhoneNum.equals("138")) {
            return 4;
        } else {
            return 5;
        }
    }
}
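The prefix-to-partition mapping can be checked on its own before running the job. This is a plain-Java sketch of the same rule with hypothetical names (`PrefixPartitionSketch`, `partitionFor`); unlike the partitioner above it also tolerates numbers shorter than three characters, which is an added assumption rather than part of the original code.

```java
// Plain-Java sketch of the prefix rule used by the partitioner; names are illustrative.
class PrefixPartitionSketch {

    // Maps a phone number to a partition index in the range 0..5.
    static int partitionFor(String phoneNum) {
        // Guard against very short strings (assumption: they go to the catch-all partition).
        String prefix = phoneNum.length() >= 3 ? phoneNum.substring(0, 3) : phoneNum;
        switch (prefix) {
            case "134": return 0;
            case "135": return 1;
            case "136": return 2;
            case "137": return 3;
            case "138": return 4;
            default:    return 5;
        }
    }

    public static void main(String[] args) {
        String[] samples = {"13726230503", "13560439658", "13826544101", "18211575961", "84138413"};
        for (String phone : samples) {
            System.out.println(phone + " -> partition " + partitionFor(phone));
        }
    }
}
```

Because the rule can return six distinct indices, the job needs six reduce tasks, which is why the driver calls job.setNumReduceTasks(6).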

package com.gec.demo;

import com.gec.demo.Bean.FlowBean;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.util.StringUtils;

import java.io.IOException;

public class PhoneFlowMapper extends Mapper<LongWritable, Text,FlowBean,Text> {

private FlowBean flowBean=new FlowBean();

private Text text=new Text();

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

String line=value.toString();

String[] fields= StringUtils.split(line,'\t');

String phoneNum=fields[1];

long upFlow=Long.parseLong(fields[fields.length-3]);

long downFlow=Long.parseLong(fields[fields.length-2]);

//setFlowData also computes sumFlow = upFlow + downFlow

flowBean.setFlowData(upFlow,downFlow);

text.set(phoneNum);

context.write(flowBean,text);

}

}

package com.gec.demo;

import com.gec.demo.Bean.FlowBean;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class PhoneFlowReducer extends Reducer<FlowBean,Text,Text,FlowBean> {

private FlowBean flowBean=new FlowBean();

@Override

protected void reduce(FlowBean key, Iterable<Text> values, Context context) throws IOException, InterruptedException {

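//compareTo never returns 0, so each key normally forms its own group; emit every phone number in the group to be safe

for (Text phoneNum : values) {

context.write(phoneNum,key);

}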

}

}

package com.gec.demo;

import com.gec.demo.Bean.FlowBean;

import com.gec.demo.partitioner.FlowPartitioner;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

import java.io.IOException;

public class PhoneFlowApp {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

Configuration configuration=new Configuration();

Job job=Job.getInstance(configuration);

job.setJarByClass(PhoneFlowApp.class);

job.setMapperClass(PhoneFlowMapper.class);

job.setReducerClass(PhoneFlowReducer.class);

job.setMapOutputKeyClass(FlowBean.class);

job.setMapOutputValueClass(Text.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(FlowBean.class);

job.setInputFormatClass(TextInputFormat.class);

job.setOutputFormatClass(TextOutputFormat.class);

job.setPartitionerClass(FlowPartitioner.class);

job.setNumReduceTasks(6);

//location of the data to process

FileInputFormat.setInputPaths(job, "D://Bigdata//4、mapreduce//day02//HTTP_20130313143750.dat");

//location where the result is saved

FileOutputFormat.setOutputPath(job, new Path("D://Bigdata//4、mapreduce//day02//output"));

//submit the job and wait for it to finish

boolean res = job.waitForCompletion(true);

System.exit(res?0:1);

}

}

Case 3:

Finding common friends

Task:

Given the following text, where each line has the form user:friend list,

A:B,C,D,F,E,O

B:A,C,E,K

C:F,A,D,I

D:A,E,F,L

E:B,C,D,M,L

F:A,B,C,D,E,O,M

G:A,C,D,E,F

H:A,C,D,E,O

I:A,O

J:B,O

K:A,C,D

L:D,E,F

M:E,F,G

O:A,H,I,J

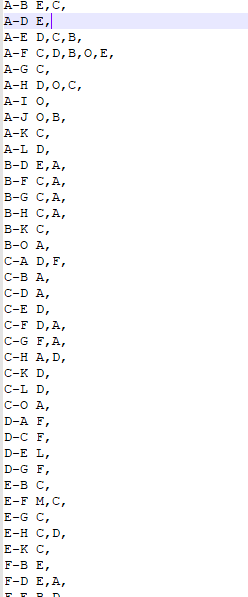

Find every pair of people who have common friends, and list who those common friends are.

b -a

c -a

d -a

a -b

c -b

b -e

b -j

Approach:

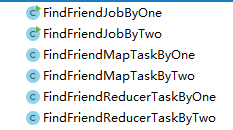

Write two MapReduce jobs

The first MR job produces output such as:

b -> a e j

c ->a b e f h

The second MR job produces output such as:

a-e b

a-j b

e-j b

a-b c

a-e c

For example, the final result looks like:

a-e b c d

a-m e f
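The two-pass idea can be sketched in plain Java before turning to the Hadoop code. In this sketch, which uses hypothetical names and no Hadoop classes, pass 1 inverts the input into "friend -> users who list that friend", and pass 2 emits every pair of those users with the shared friend. Sorted collections are used so that a pair is always written in the same order.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.TreeSet;

// Plain-Java sketch of the two-pass common-friends computation; names are illustrative.
class CommonFriendsSketch {

    // Input lines look like "A:B,C,D"; the result maps "user1-user2" to their common friends.
    static Map<String, List<String>> commonFriends(List<String> lines) {
        // Pass 1: friend -> sorted set of users who list that friend.
        Map<String, TreeSet<String>> usersByFriend = new TreeMap<>();
        for (String line : lines) {
            String[] parts = line.split(":");
            String user = parts[0];
            for (String friend : parts[1].split(",")) {
                usersByFriend.computeIfAbsent(friend, k -> new TreeSet<>()).add(user);
            }
        }
        // Pass 2: every pair of users sharing a friend -> list of shared friends.
        Map<String, List<String>> result = new TreeMap<>();
        for (Map.Entry<String, TreeSet<String>> entry : usersByFriend.entrySet()) {
            List<String> users = new ArrayList<>(entry.getValue()); // already sorted
            for (int i = 0; i < users.size() - 1; i++) {
                for (int j = i + 1; j < users.size(); j++) {
                    String pair = users.get(i) + "-" + users.get(j);
                    result.computeIfAbsent(pair, k -> new ArrayList<>()).add(entry.getKey());
                }
            }
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList("A:B,C,D,F,E,O", "B:A,C,E,K", "I:A,O", "J:B,O");
        for (Map.Entry<String, List<String>> e : commonFriends(lines).entrySet()) {
            System.out.println(e.getKey() + "\t" + e.getValue());
        }
    }
}
```

Sorting the users before pairing matters: without it the same two people could appear under both "A-B" and "B-A" and their common friends would be split across two keys.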

The code is as follows:

First mapper: FindFriendMapTaskByOne

package com.gec.demo;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class FindFriendMapTaskByOne extends Mapper<LongWritable, Text,Text,Text> {

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

String line=value.toString();

String[] datas=line.split(":");

String user=datas[0];

String []friends=datas[1].split(",");

for (String friend : friends) {

context.write(new Text(friend),new Text(user));

}

}

}

First reducer:

package com.gec.demo;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class FindFriendReducerTaskByOne extends Reducer<Text,Text,Text,Text> {

@Override

protected void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {

StringBuffer strBuf=new StringBuffer();

for (Text value : values) {

strBuf.append(value).append("-");

}

context.write(key,new Text(strBuf.toString()));

}

}

First job:

package com.gec.demo;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class FindFriendJobByOne {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

Configuration configuration=new Configuration();

Job job=Job.getInstance(configuration);

//set the driver class

job.setJarByClass(FindFriendJobByOne.class);

//set which map task to run

job.setMapperClass(FindFriendMapTaskByOne.class);

//set which reduce task to run

job.setReducerClass(FindFriendReducerTaskByOne.class);

//set the key type of the map output

job.setMapOutputKeyClass(Text.class);

//set the value type of the map output

job.setMapOutputValueClass(Text.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(Text.class);

//location of the data to process

FileInputFormat.setInputPaths(job, "D://Bigdata//4、mapreduce//day05//homework//friendhomework.txt");

//location where the result is saved

FileOutputFormat.setOutputPath(job, new Path("D://Bigdata//4、mapreduce//day05//homework//output"));

//submit the job and wait for it to finish

boolean res = job.waitForCompletion(true);

System.exit(res?0:1);

}

}

Result:

Second mapper:

package com.gec.demo;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

import java.util.Arrays;

public class FindFriendMapTaskByTwo extends Mapper<LongWritable, Text,Text,Text> {

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

String line=value.toString();

String []datas=line.split("\t");

String []userlist=datas[1].split("-");

//sort the users so that each pair always produces the same key (for example "A-B", never "B-A")

Arrays.sort(userlist);

for (int i=0;i<userlist.length-1;i++){

for (int j=i+1;j<userlist.length;j++){

String user1=userlist[i];

String user2=userlist[j];

String friendkey=user1+"-"+user2;

context.write(new Text(friendkey),new Text(datas[0]));

}

}

}

}

Second reducer:

package com.gec.demo;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class FindFriendReducerTaskByTwo extends Reducer<Text,Text,Text,Text> {

@Override

protected void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {

StringBuffer stringBuffer=new StringBuffer();

for (Text value : values) {

stringBuffer.append(value).append(",");

}

context.write(key,new Text(stringBuffer.toString()));

}

}

Second job:

package com.gec.demo;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class FindFriendJobByTwo {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

Configuration configuration=new Configuration();

Job job=Job.getInstance(configuration);

//set the driver class

job.setJarByClass(FindFriendJobByTwo.class);

//set which map task to run

job.setMapperClass(FindFriendMapTaskByTwo.class);

//set which reduce task to run

job.setReducerClass(FindFriendReducerTaskByTwo.class);

//set the key type of the map output

job.setMapOutputKeyClass(Text.class);

//set the value type of the map output

job.setMapOutputValueClass(Text.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(Text.class);

//location of the data to process

FileInputFormat.setInputPaths(job, "D://Bigdata//4、mapreduce//day05//homework//friendhomework3.txt");

//location where the result is saved; the output directory must not already exist, so remove the first job's output or use a different path

FileOutputFormat.setOutputPath(job, new Path("D://Bigdata//4、mapreduce//day05//homework//output"));

//submit the job and wait for it to finish

boolean res = job.waitForCompletion(true);

System.exit(res?0:1);

}

}

Result:

Case 4

Joining multiple tables in MapReduce

1) Requirement:

Order table t_order:

| id | pid | amount |
| --- | --- | --- |
| 1001 | 01 | 1 |
| 1002 | 02 | 2 |
| 1003 | 03 | 3 |

Product table t_product:

| id | pname |
| --- | --- |
| 01 | 小米 |
| 02 | 华为 |
| 03 | 格力 |

Merge the data from the product table into the order table according to the product id.

Final data format:

| id | pname | amount |
| --- | --- | --- |
| 1001 | 小米 | 1 |
| 1001 | 小米 | 1 |
| 1002 | 华为 | 2 |
| 1002 | 华为 | 2 |
| 1003 | 格力 | 3 |
| 1003 | 格力 | 3 |

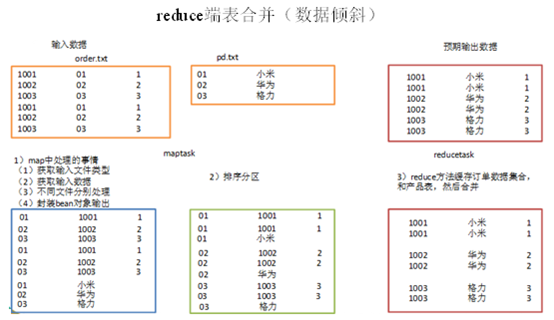

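3.4.1 Requirement 1: reduce-side join (prone to data skew)

The join key is used as the map output key. Records from both tables that satisfy the join condition are tagged with the file they came from and sent to the same reduce task, where the records are joined.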
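The tagging and grouping described above can be sketched in plain Java without Hadoop. In this sketch every record is tagged with its source table, grouped under the product id, and joined per group. All names are hypothetical, the input is assumed to be comma-separated, and the product names are written in English (Xiaomi, Huawei) only to keep the sample ASCII.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java sketch of a reduce-side join; names and sample data are illustrative.
class ReduceJoinSketch {

    // orders: "id,pid,amount"; products: "pid,pname". Returns "id<TAB>pname<TAB>amount" lines.
    static List<String> join(List<String> orders, List<String> products) {
        // "map" phase: tag each record with its source and group it under the join key (pid).
        Map<String, List<String[]>> byPid = new TreeMap<>();
        for (String order : orders) {
            String[] f = order.split(",");
            byPid.computeIfAbsent(f[1], k -> new ArrayList<>()).add(new String[] {"order", f[0], f[2]});
        }
        for (String product : products) {
            String[] f = product.split(",");
            byPid.computeIfAbsent(f[0], k -> new ArrayList<>()).add(new String[] {"product", f[1]});
        }
        // "reduce" phase: within each group, attach the product name to every order record.
        List<String> joined = new ArrayList<>();
        for (List<String[]> group : byPid.values()) {
            String pname = "";
            List<String[]> orderRecords = new ArrayList<>();
            for (String[] record : group) {
                if (record[0].equals("product")) {
                    pname = record[1];
                } else {
                    orderRecords.add(record);
                }
            }
            for (String[] order : orderRecords) {
                joined.add(order[1] + "\t" + pname + "\t" + order[2]);
            }
        }
        return joined;
    }

    public static void main(String[] args) {
        List<String> orders = Arrays.asList("1001,01,1", "1002,02,2", "1001,01,1");
        List<String> products = Arrays.asList("01,Xiaomi", "02,Huawei");
        for (String line : join(orders, products)) {
            System.out.println(line);
        }
    }
}
```

All records for one product id land in the same group, so a very popular product produces one very large group; that is the data skew mentioned in the heading.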

1) Create the bean class that holds the merged product and order data

package com.gec.mapreduce.table;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;
import org.apache.hadoop.io.Writable;

public class TableBean implements Writable {
    private String order_id; // order id
    private String p_id;     // product id
    private int amount;      // product quantity
    private String pname;    // product name
    private String flag;     // marks which table the record came from

    public TableBean() {
        super();
    }

    public TableBean(String order_id, String p_id, int amount, String pname, String flag) {
        super();
        this.order_id = order_id;
        this.p_id = p_id;
        this.amount = amount;
        this.pname = pname;
        this.flag = flag;
    }

    public String getFlag() { return flag; }

    public void setFlag(String flag) { this.flag = flag; }

    public String getOrder_id() { return order_id; }

    public void setOrder_id(String order_id) { this.order_id = order_id; }

    public String getP_id() { return p_id; }

    public void setP_id(String p_id) { this.p_id = p_id; }

    public int getAmount() { return amount; }

    public void setAmount(int amount) { this.amount = amount; }

    public String getPname() { return pname; }

    public void setPname(String pname) { this.pname = pname; }

    @Override
    public void write(DataOutput out) throws IOException {
        out.writeUTF(order_id);
        out.writeUTF(p_id);
        out.writeInt(amount);
        out.writeUTF(pname);
        out.writeUTF(flag);
    }

    @Override
    public void readFields(DataInput in) throws IOException {
        this.order_id = in.readUTF();
        this.p_id = in.readUTF();
        this.amount = in.readInt();
        this.pname = in.readUTF();
        this.flag = in.readUTF();
    }

    @Override
    public String toString() {
        return order_id + "\t" + p_id + "\t" + amount + "\t";
    }
}

2) Write the TableMapper class

package com.gec.mapreduce.table;

import java.io.IOException;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.lib.input.FileSplit;

public class TableMapper extends Mapper<LongWritable, Text, Text, TableBean> {
    TableBean bean = new TableBean();
    Text k = new Text();

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

        // 1 get the name of the input file
        FileSplit split = (FileSplit) context.getInputSplit();
        String name = split.getPath().getName();

        // 2 get the input line
        String line = value.toString();

        // 3 handle each file differently
        if (name.startsWith("order")) { // order table
            // 3.1 split the line
            String[] fields = line.split(",");

            // 3.2 fill the bean
            bean.setOrder_id(fields[0]);
            bean.setP_id(fields[1]);
            bean.setAmount(Integer.parseInt(fields[2]));
            bean.setPname("");
            bean.setFlag("0");

            k.set(fields[1]);
        } else { // product table
            // 3.3 split the line
            String[] fields = line.split(",");

            // 3.4 fill the bean
            bean.setP_id(fields[0]);
            bean.setPname(fields[1]);
            bean.setFlag("1");
            bean.setAmount(0);
            bean.setOrder_id("");

            k.set(fields[0]);
        }
        // 4 write out
        context.write(k, bean);
    }
}

3) Write the TableReducer class

package com.gec.mapreduce.table;

import java.io.IOException;
import java.util.ArrayList;
import org.apache.commons.beanutils.BeanUtils;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

public class TableReducer extends Reducer<Text, TableBean, TableBean, NullWritable> {

    @Override
    protected void reduce(Text key, Iterable<TableBean> values, Context context) throws IOException, InterruptedException {

        // 1 collection that holds the order records
        ArrayList<TableBean> orderBeans = new ArrayList<>();
        // 2 bean that holds the product record
        TableBean pdBean = new TableBean();

        for (TableBean bean : values) {

            if ("0".equals(bean.getFlag())) { // order table
                // copy each incoming order record into the collection
                // (Hadoop reuses the value object, so a copy is required)
                TableBean orderBean = new TableBean();
                try {
                    BeanUtils.copyProperties(orderBean, bean);
                } catch (Exception e) {
                    e.printStackTrace();
                }

                orderBeans.add(orderBean);
            } else { // product table
                try {
                    // copy the incoming product record into memory
                    BeanUtils.copyProperties(pdBean, bean);
                } catch (Exception e) {
                    e.printStackTrace();
                }
            }
        }

        // 3 join the tables: the product name is written into the p_id field,
        // because TableBean.toString() prints p_id in the second column
        for (TableBean bean : orderBeans) {
            bean.setP_id(pdBean.getPname());

            // 4 write the record out
            context.write(bean, NullWritable.get());
        }
    }
}

4) Write the TableDriver class

package com.gec.mapreduce.table;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class TableDriver {

    public static void main(String[] args) throws Exception {
        // 1 get the configuration and create the job instance
        Configuration configuration = new Configuration();
        Job job = Job.getInstance(configuration);

        // 2 specify the local path of the jar that contains this program
        job.setJarByClass(TableDriver.class);

        // 3 specify the mapper and reducer classes used by this job
        job.setMapperClass(TableMapper.class);
        job.setReducerClass(TableReducer.class);

        // 4 specify the key/value types of the mapper output
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(TableBean.class);

        // 5 specify the key/value types of the final output
        job.setOutputKeyClass(TableBean.class);
        job.setOutputValueClass(NullWritable.class);

        // 6 specify the input directory and the output directory of the job
        FileInputFormat.setInputPaths(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, new Path(args[1]));

        // 7 submit the job configuration and the jar containing the job's classes to YARN
        boolean result = job.waitForCompletion(true);
        System.exit(result ? 0 : 1);
    }
}

5) Run the program and check the result

1001 小米 1
1001 小米 1
1002 华为 2
1002 华为 2
1003 格力 3
1003 格力 3

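Drawback: with this approach the join is done entirely in the reduce phase. The reduce side carries a heavy processing load while the map nodes do very little work, so resource utilization is poor, and data skew arises very easily in the reduce phase.

Solution: perform the join on the map side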

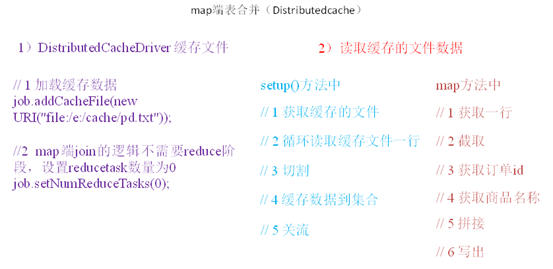

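3.4.2 Requirement 2: map-side join (DistributedCache)

1) Analysis

This approach is suitable when one of the joined tables is small.

The small table can be distributed to every map node, so that each map node joins the part of the large table it reads against its local copy and writes the final result directly. This greatly increases the parallelism of the join and speeds up processing.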
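The lookup at the heart of a map-side join can be sketched in plain Java. In this sketch the small table is loaded once into a HashMap and each line of the large table is joined by a simple lookup, with no shuffle or reduce step. The class and method names are hypothetical, both inputs are assumed to be tab-separated, and the product names are written in English to keep the sample ASCII.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Plain-Java sketch of a map-side join; names and sample data are illustrative.
class MapJoinSketch {

    // Loads the small table ("pid<TAB>pname" lines) into memory, as setup() would do once per map task.
    static Map<String, String> loadProducts(List<String> productLines) {
        Map<String, String> pdMap = new HashMap<>();
        for (String line : productLines) {
            String[] fields = line.split("\t");
            pdMap.put(fields[0], fields[1]);
        }
        return pdMap;
    }

    // Joins one order line ("id<TAB>pid<TAB>amount") by looking up the product name for its pid.
    static String joinLine(String orderLine, Map<String, String> pdMap) {
        String pid = orderLine.split("\t")[1];
        return orderLine + "\t" + pdMap.get(pid);
    }

    public static void main(String[] args) {
        Map<String, String> pdMap = loadProducts(Arrays.asList("01\tXiaomi", "02\tHuawei"));
        List<String> joined = new ArrayList<>();
        for (String order : Arrays.asList("1001\t01\t1", "1002\t02\t2")) {
            joined.add(joinLine(order, pdMap));
        }
        joined.forEach(System.out::println);
    }
}
```

The trade-off is memory: every map task holds a full copy of the small table, so the approach only works while that table fits comfortably in memory.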

2) Hands-on example

(1) First add the cache file in the driver

package test;

import java.net.URI;
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class DistributedCacheDriver {

    public static void main(String[] args) throws Exception {
        // 1 get the job instance
        Configuration configuration = new Configuration();
        Job job = Job.getInstance(configuration);

        // 2 set the jar by class
        job.setJarByClass(DistributedCacheDriver.class);

        // 3 set the mapper
        job.setMapperClass(DistributedCacheMapper.class);

        // 4 set the key/value types of the final output
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(NullWritable.class);

        // 5 set the input and output paths
        FileInputFormat.setInputPaths(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, new Path(args[1]));

        // 6 register the cache file
        job.addCacheFile(new URI("file:/e:/cache/pd.txt"));

        // 7 a map-side join needs no reduce phase, so set the number of reduce tasks to 0
        job.setNumReduceTasks(0);

        // 8 submit
        boolean result = job.waitForCompletion(true);
        System.exit(result ? 0 : 1);
    }
}

(2) Read the cached file data

package test;

import java.io.BufferedReader;
import java.io.FileInputStream;
import java.io.IOException;
import java.io.InputStreamReader;
import java.util.HashMap;
import java.util.Map;
import org.apache.commons.lang.StringUtils;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

public class DistributedCacheMapper extends Mapper<LongWritable, Text, Text, NullWritable> {

    Map<String, String> pdMap = new HashMap<>();

    @Override
    protected void setup(Mapper<LongWritable, Text, Text, NullWritable>.Context context) throws IOException, InterruptedException {
        // 1 open the cached file (replace the placeholder with the full path of pd.txt)
        BufferedReader reader = new BufferedReader(new InputStreamReader(new FileInputStream("<full path of pd.txt>")));

        String line;
        while (StringUtils.isNotEmpty(line = reader.readLine())) {
            // 2 split the line
            String[] fields = line.split("\t");

            // 3 cache the data in the map: product id -> product name
            pdMap.put(fields[0], fields[1]);
        }

        // 4 close the stream
        reader.close();
    }

    Text k = new Text();

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // 1 get one line
        String line = value.toString();

        // 2 split the line
        String[] fields = line.split("\t");

        // 3 get the product id (the join key) from the order record
        String pId = fields[1];

        // 4 look up the product name
        String pdName = pdMap.get(pId);

        // 5 concatenate
        k.set(line + "\t" + pdName);

        // 6 write out
        context.write(k, NullWritable.get());
    }
}

Case 5

Finding the most expensive product in each order (GroupingComparator)

1) Requirement

Given the following order data

| Order ID | Product ID | Price |
| --- | --- | --- |
| Order_0000001 | Pdt_01 | 222.8 |
| Order_0000001 | Pdt_05 | 25.8 |
| Order_0000002 | Pdt_03 | 522.8 |
| Order_0000002 | Pdt_04 | 122.4 |
| Order_0000002 | Pdt_05 | 722.4 |
| Order_0000003 | Pdt_01 | 222.8 |
| Order_0000003 | Pdt_02 | 33.8 |

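The task is to find the most expensive product in each order.

2) Input data

Expected output:

3) Analysis

(1) Use "order id and price" as the key. All order records read in the map phase are then partitioned by order id, sorted by price, and sent to the reduce side.

(2) On the reduce side, a GroupingComparator groups the key/value pairs that share an order id; the first record in each group is then the one with the highest price.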
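The two steps above can be sketched in plain Java without Hadoop: sort by order id ascending and price descending, then keep the first record of each order id group. The names `TopPerOrderSketch`, `Item`, and `maxPerOrder` are hypothetical and only serve the illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Plain-Java sketch of "secondary sort + take the first record per group"; names are illustrative.
class TopPerOrderSketch {

    static final class Item {
        final String orderId;
        final double price;

        Item(String orderId, double price) {
            this.orderId = orderId;
            this.price = price;
        }
    }

    // Returns orderId -> highest price, in ascending order of orderId.
    static Map<String, Double> maxPerOrder(List<Item> items) {
        List<Item> sorted = new ArrayList<>(items);
        // Composite ordering: order id ascending, then price descending.
        sorted.sort((a, b) -> {
            int byId = a.orderId.compareTo(b.orderId);
            return byId != 0 ? byId : Double.compare(b.price, a.price);
        });
        // Grouping by order id only: the first record seen for each id is the most expensive one.
        Map<String, Double> firstPerGroup = new LinkedHashMap<>();
        for (Item item : sorted) {
            firstPerGroup.putIfAbsent(item.orderId, item.price);
        }
        return firstPerGroup;
    }

    public static void main(String[] args) {
        List<Item> items = Arrays.asList(
                new Item("Order_0000001", 222.8), new Item("Order_0000001", 25.8),
                new Item("Order_0000002", 522.8), new Item("Order_0000002", 122.4),
                new Item("Order_0000002", 722.4), new Item("Order_0000003", 222.8),
                new Item("Order_0000003", 33.8));
        maxPerOrder(items).forEach((id, price) -> System.out.println(id + "\t" + price));
    }
}
```

In the Hadoop version the sort comes from the key's compareTo, and the grouping comes from the GroupingComparator, which compares order ids only.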

4) Implementation

Define the order bean OrderBean

package com.gec.mapreduce.order;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;
import org.apache.hadoop.io.WritableComparable;

public class OrderBean implements WritableComparable<OrderBean> {

    private String orderId;
    private double price;

    public OrderBean() {
        super();
    }

    public OrderBean(String orderId, double price) {
        super();
        this.orderId = orderId;
        this.price = price;
    }

    public String getOrderId() { return orderId; }

    public void setOrderId(String orderId) { this.orderId = orderId; }

    public double getPrice() { return price; }

    public void setPrice(double price) { this.price = price; }

    @Override
    public void readFields(DataInput in) throws IOException {
        this.orderId = in.readUTF();
        this.price = in.readDouble();
    }

    @Override
    public void write(DataOutput out) throws IOException {
        out.writeUTF(orderId);
        out.writeDouble(price);
    }

    @Override
    public int compareTo(OrderBean o) {
        // 1 sort by order id first (ascending)
        int result = this.orderId.compareTo(o.getOrderId());

        if (result == 0) {
            // 2 then sort by price (descending); returns 0 when the prices are equal
            result = Double.compare(o.getPrice(), this.price);
        }

        return result;
    }

    @Override
    public String toString() {
        return orderId + "\t" + price;
    }
}

Write the OrderSortMapper class

package com.gec.mapreduce.order;

import java.io.IOException;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

public class OrderSortMapper extends Mapper<LongWritable, Text, OrderBean, NullWritable> {
    OrderBean bean = new OrderBean();

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // 1 get one line
        String line = value.toString();

        // 2 split the fields
        String[] fields = line.split("\t");

        // 3 fill the bean
        bean.setOrderId(fields[0]);
        bean.setPrice(Double.parseDouble(fields[2]));

        // 4 write out
        context.write(bean, NullWritable.get());
    }
}

Write the OrderSortReducer class

package com.gec.mapreduce.order;

import java.io.IOException;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.mapreduce.Reducer;

public class OrderSortReducer extends Reducer<OrderBean, NullWritable, OrderBean, NullWritable> {
    @Override
    protected void reduce(OrderBean bean, Iterable<NullWritable> values, Context context) throws IOException, InterruptedException {
        // write the key out directly: it is the first (most expensive) record of the group
        context.write(bean, NullWritable.get());
    }
}

Write the OrderSortDriver class

package com.gec.mapreduce.order;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class OrderSortDriver {

    public static void main(String[] args) throws Exception {
        // 1 get the configuration
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf);

        // 2 set the jar by class
        job.setJarByClass(OrderSortDriver.class);

        // 3 set the map and reduce classes
        job.setMapperClass(OrderSortMapper.class);
        job.setReducerClass(OrderSortReducer.class);

        // 4 set the key and value types of the map output
        job.setMapOutputKeyClass(OrderBean.class);
        job.setMapOutputValueClass(NullWritable.class);

        // 5 set the key and value types of the final output
        job.setOutputKeyClass(OrderBean.class);
        job.setOutputValueClass(NullWritable.class);

        // 6 set the input and output paths
        FileInputFormat.setInputPaths(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, new Path(args[1]));

        // 7 set the grouping comparator used on the reduce side
        job.setGroupingComparatorClass(OrderSortGroupingComparator.class);

        // 8 set the partitioner
        job.setPartitionerClass(OrderSortPartitioner.class);

        // 9 set the number of reduce tasks
        job.setNumReduceTasks(3);

        // 10 submit
        boolean result = job.waitForCompletion(true);
        System.exit(result ? 0 : 1);
    }
}

Write the OrderSortPartitioner class

package com.gec.mapreduce.order;

import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.mapreduce.Partitioner;

public class OrderSortPartitioner extends Partitioner<OrderBean, NullWritable> {

    @Override
    public int getPartition(OrderBean key, NullWritable value, int numReduceTasks) {
        // partition by order id only, so all records of one order reach the same reduce task
        return (key.getOrderId().hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }
}

Write the OrderSortGroupingComparator class

package com.gec.mapreduce.order;

import org.apache.hadoop.io.WritableComparable;
import org.apache.hadoop.io.WritableComparator;

public class OrderSortGroupingComparator extends WritableComparator {

    protected OrderSortGroupingComparator() {
        super(OrderBean.class, true);
    }

    @Override
    public int compare(WritableComparable a, WritableComparable b) {

        OrderBean abean = (OrderBean) a;
        OrderBean bbean = (OrderBean) b;

        // treat all beans with the same orderId as one group
        return abean.getOrderId().compareTo(bbean.getOrderId());
    }
}
