Using regular expressions, getting click counts, and extracting functions

1. Use a regular expression to check whether an email address is well-formed.

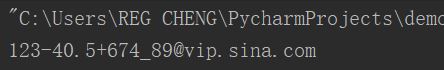

    import re

    # Local part, "@", domain name, then 1 to 3 dot-separated suffixes of 2 to 4 characters.
    r = r"^(\w)+([-+_.]\w+)*@(\w)+((\.\w{2,4}){1,3})$"
    e = "123-40.5+674_89@vip.sina.com"
    m = re.match(r, e)
    if m:
        print(m.group(0))
    else:
        print("error!")
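To see what the pattern above accepts and rejects, it helps to run it over a few addresses. The sketch below wraps the check in a helper; the name `is_valid_email` and every sample address except the first are assumptions for illustration, not part of the original exercise.

```python
import re

# Same pattern as in step 1, written as a raw string so the backslashes
# reach the regex engine unchanged.
EMAIL_RE = re.compile(r"^(\w)+([-+_.]\w+)*@(\w)+((\.\w{2,4}){1,3})$")


def is_valid_email(address):
    """Return True if the whole address matches EMAIL_RE (hypothetical helper)."""
    return EMAIL_RE.match(address) is not None


samples = [
    "123-40.5+674_89@vip.sina.com",  # the address from step 1
    "user@example.com",
    "user@example",                  # no dot-separated suffix
    "user@example.technology",       # suffix longer than 4 characters
]
for s in samples:
    print(s, is_valid_email(s))
```

The last sample shows a limit of this pattern: a suffix longer than four characters is rejected even though such domains exist.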

2. Use a regular expression to find all the phone numbers.

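    # Named "text" rather than "str" so the built-in str type is not shadowed.
    text = ("版权所有:广州商学院 地址:广州市黄埔区九龙大道206号"
            "学校学士办公室:020-82876130 学士招生电话:020-82872773"
            "学校硕士办公室:020-82876131 硕士招生电话:020-82872774"
            "粤公网安备 44011602000060号 粤ICP备15103669号")
    # The pattern has two groups, so each match is an (area code, number) tuple.
    numbers = re.findall(r"(\d{3,4})-(\d{6,8})", text)
    print(numbers)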
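Because the pattern above contains two capture groups, `re.findall` returns a tuple of (area code, number) per match rather than the full phone number. A minimal sketch of both variants follows; the shortened sample text is an assumption, built from two of the numbers above.

```python
import re

# Shortened sample text (assumed for illustration).
text = "office: 020-82876130 admissions: 020-82872773 record: 44011602000060"

# With capture groups, findall returns one tuple per match.
parts = re.findall(r"(\d{3,4})-(\d{6,8})", text)
print(parts)

# Without groups, findall returns each full match as a string.
full = re.findall(r"\d{3,4}-\d{6,8}", text)
print(full)
```

The long record number is skipped in both cases because it contains no hyphen.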

3. Use a regular expression to split English text into words: re.split('', news)

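    news = ("Facebook? Informs Data Leak Victims Whether They "
            "Need To Burn Down House, Cut Off Fingerprints, Start Anew,")
    # Split on runs of whitespace, commas, periods, question marks, and hyphens.
    # The text ends with a comma, so drop the empty string the split leaves behind.
    words = [w for w in re.split(r"[\s,.?\-]+", news) if w]
    print(words)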
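One detail of `re.split` worth checking: when the text ends with a separator, the result ends with an empty string. The sketch below shows this on a short sample (assumed for illustration) together with two common ways around it. Matching the words with `re.findall` is a swapped-in alternative, not the approach the exercise asks for.

```python
import re

news = "Cut Off Fingerprints, Start Anew,"

# Splitting on separators leaves a trailing "" because the text ends with ",".
pieces = re.split(r"[\s,.?\-]+", news)
print(pieces)

# Option 1: drop empty strings after splitting.
words = [w for w in pieces if w]
print(words)

# Option 2: match the words themselves instead of the separators.
matched = re.findall(r"[A-Za-z]+", news)
print(matched)
```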

4. Use a regular expression to get the news ID.

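    url = "http://news.gzcc.cn/html/2017/xiaoyuanxinwen_1225/8854.html"
    # Capture "1225/8854" between the underscore and ".html", then keep the last path segment.
    # The dot is escaped so it matches a literal "." rather than any character.
    newsId = re.findall(r"_(.*)\.html", url)[0].split("/")[-1]
    print(newsId)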
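The pattern above captures everything between the first underscore and `.html`, then relies on `split("/")` to isolate the ID. An alternative, sketched here under the assumption that the ID is always the run of digits immediately before a trailing `.html`, captures it directly. `extract_news_id` is a hypothetical helper name.

```python
import re


def extract_news_id(url):
    """Return the digits right before a trailing '.html', or None if absent."""
    m = re.search(r"/(\d+)\.html$", url)
    return m.group(1) if m else None


url = "http://news.gzcc.cn/html/2017/xiaoyuanxinwen_1225/8854.html"
print(extract_news_id(url))
# A URL without a numeric page name yields None instead of raising IndexError.
print(extract_news_id("http://news.gzcc.cn/html/xiaoyuanxinwen/"))
```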

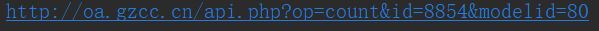

5. Build the Request URL for the click count.

    Rurl = "http://oa.gzcc.cn/api.php?op=count&id={}&modelid=80".format(newsId)
    print(Rurl)
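String formatting works here because the ID is a plain number. When parameters may contain characters that need escaping, the standard library's `urllib.parse.urlencode` can build the query string instead. A small sketch, with `build_count_url` as a hypothetical helper name:

```python
from urllib.parse import urlencode


def build_count_url(news_id):
    """Build the click-count request URL for a news ID (hypothetical helper)."""
    query = urlencode({"op": "count", "id": news_id, "modelid": 80})
    return "http://oa.gzcc.cn/api.php?" + query


print(build_count_url("8854"))
```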

6. Get the click count.

    import requests

    res = requests.get("http://oa.gzcc.cn/api.php?op=count&id={}&modelid=80".format(newsId))
    # Take the text after the last ".html", then strip the surrounding (' and ');
    print(int(res.text.split(".html")[-1].lstrip("('").rsplit("');")[0]))
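The chain of `split`, `lstrip`, and `rsplit` above implies that the response ends with a fragment of the form `.html('<number>');`. Under that assumption, a regular expression can pull the number out in one step. The sample response body below is made up to fit that shape, and `parse_click_count` is a hypothetical helper name.

```python
import re


def parse_click_count(body):
    """Return the integer inside the last .html('...') call in the body."""
    counts = re.findall(r"\.html\('(\d+)'\)", body)
    if not counts:
        raise ValueError("no click count found in response")
    return int(counts[-1])


# Assumed sample response, shaped to match what the parsing code above expects.
sample = "$('#hits').html('3385');"
print(parse_click_count(sample))

# The original string-method chain gives the same result on this sample.
print(int(sample.split(".html")[-1].lstrip("('").rsplit("');")[0]))
```

Raising `ValueError` on an unexpected body makes a changed response format easier to notice than an `IndexError` from deep inside a chained expression.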

7. Wrap steps 4, 5, and 6 in a function: def getClickCount(newsUrl):

    def getClickCount(newsUrl):
        # Step 4: extract the news ID from the article URL.
        newsId = re.findall(r"_(.*)\.html", newsUrl)[0].split("/")[-1]
        # Steps 5 and 6: request the click count and parse the number out of the response.
        res1 = requests.get("http://oa.gzcc.cn/api.php?op=count&id={}&modelid=80".format(newsId))
        return int(res1.text.split(".html")[-1].lstrip("('").rsplit("');")[0])

    print(getClickCount("http://news.gzcc.cn/html/2017/xiaoyuanxinwen_1225/8854.html"))
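A function that calls `requests.get` directly can only be checked against the live site. One way to make the logic testable offline, sketched here, is to pass the download step in as a parameter. The `fetch` parameter, the `fake_fetch` stub, and its canned response are all assumptions for illustration; the response shape is inferred from the parsing code in step 6.

```python
import re

COUNT_API = "http://oa.gzcc.cn/api.php?op=count&id={}&modelid=80"


def get_click_count(news_url, fetch):
    """Return the click count for news_url; fetch(url) must return the body text."""
    # Step 4: the news ID is the run of digits right before ".html".
    news_id = re.search(r"/(\d+)\.html$", news_url).group(1)
    # Steps 5 and 6: request the count and parse the number out of the body.
    body = fetch(COUNT_API.format(news_id))
    return int(re.findall(r"\.html\('(\d+)'\)", body)[-1])


requested = []


def fake_fetch(url):
    """Stand-in for a real download: record the URL, return a canned body."""
    requested.append(url)
    return "$('#hits').html('3385');"


print(get_click_count(
    "http://news.gzcc.cn/html/2017/xiaoyuanxinwen_1225/8854.html", fake_fetch))
print(requested[0])
```

With `requests` installed, the real call would pass `lambda u: requests.get(u).text` as `fetch`.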

8. Wrap the code that fetches a news article's details in a function: def getNewDetail(newsUrl):

    from bs4 import BeautifulSoup

    def getNewDetail(newsUrl):
        detail_res = requests.get(newsUrl)
        detail_res.encoding = "utf-8"
        detail_soup = BeautifulSoup(detail_res.text, "html.parser")
        # Article body and the metadata line shown with it.
        content = detail_soup.select("#content")[0].text
        info = detail_soup.select(".show-info")[0].text
        return content, info

    print(getNewDetail("http://news.gzcc.cn/html/2017/xiaoyuanxinwen_1225/8854.html"))

9. Get all the news items on one news list page, wrapped in a function: def getListPage(pageUrl):

    def getListPage(pageUrl):
        res = requests.get(pageUrl)
        res.encoding = "utf-8"
        soup = BeautifulSoup(res.text, "html.parser")
        newsUrls = []
        for news in soup.select("li"):
            # Only <li> elements that contain .news-list-info are news entries.
            if len(news.select(".news-list-info")) > 0:
                newsUrls.append(news.select("a")[0].attrs["href"])
        return newsUrls

    print(getListPage("http://news.gzcc.cn/html/xiaoyuanxinwen/"))

10. Get the total number of news articles and compute the total number of pages, wrapped in a function: def getPageN():

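    def getPageN():
        resurl = requests.get("http://news.gzcc.cn/html/xiaoyuanxinwen/")
        resurl.encoding = "utf-8"
        soup = BeautifulSoup(resurl.text, "html.parser")
        # The element text is the article count followed by "条" (items).
        total = int(soup.select(".al")[0].text.rstrip("条"))
        # Round up at 10 articles per page; total // 10 + 1 overcounts exact multiples of 10.
        return (total + 9) // 10

    print(getPageN())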
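The page count is a ceiling division: the total number of articles divided by the page size, rounded up. The sketch below assumes 10 articles per page, as the `// 10` above suggests, and uses a hypothetical helper `page_count`. It also prints the simpler formula `total // 10 + 1` alongside, which differs when the total is an exact multiple of the page size.

```python
def page_count(total, per_page=10):
    """Number of pages needed to show `total` items, rounding up."""
    return (total + per_page - 1) // per_page


# Columns: total articles, ceiling division, total // 10 + 1.
for total in (95, 100, 101):
    print(total, page_count(total), total // 10 + 1)
```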

11. Get the details of every news item on every news list page.

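    def getall():
        # range() excludes its stop value, so add 1 to reach the last numbered page.
        for num in range(2, getPageN() + 1):
            listurl = "http://news.gzcc.cn/html/xiaoyuanxinwen/{}.html".format(num)
            # Fetch the details of each article linked from this list page.
            for newsUrl in getListPage(listurl):
                print(getNewDetail(newsUrl))

    getall()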
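The loop above starts at page 2, which suggests that the first list page lives at the bare directory URL and only later pages are numbered. Under that assumption, the list-page URLs can be generated up front and checked without touching the network. `list_page_urls` is a hypothetical helper name.

```python
BASE = "http://news.gzcc.cn/html/xiaoyuanxinwen/"


def list_page_urls(page_total):
    """Return the URLs of list pages 1..page_total (page 1 is assumed to be BASE)."""
    urls = [BASE] if page_total >= 1 else []
    # range() excludes its stop value, so add 1 to include the last page.
    urls += [BASE + "{}.html".format(n) for n in range(2, page_total + 1)]
    return urls


for u in list_page_urls(3):
    print(u)
```

Building the list first also makes an off-by-one in the range easy to spot, since the last URL can be compared with the page total directly.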