Embedded Vision question

01 IFQ-Net: Integrated Fixed-point Quantization Networks for Embedded Vision (1911.08076)

Quantization networks (for example XNOR-Net and HWGQ-Net) quantize the data into 1 or 2 bits.

In this paper, we propose a fixed-point network

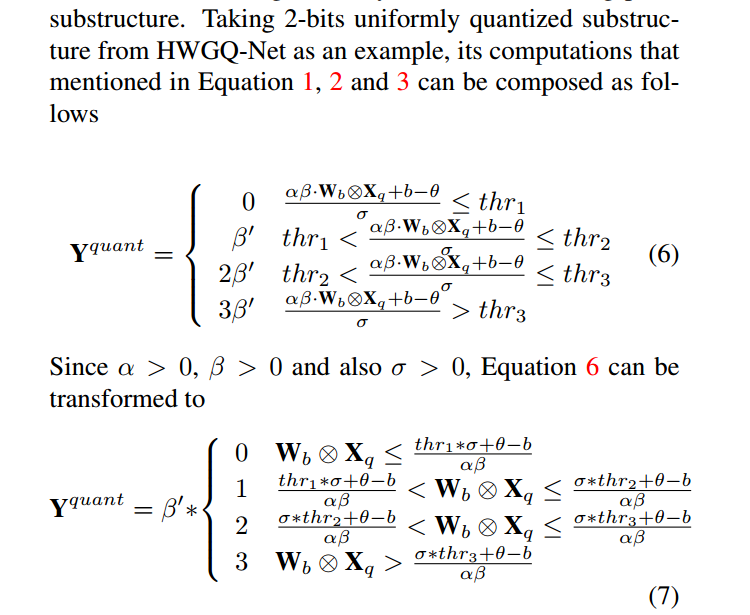

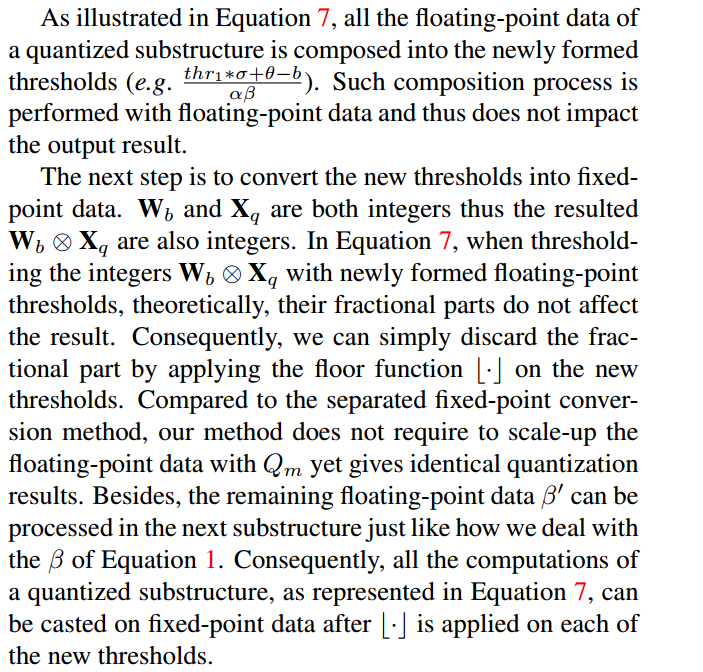

for embedded vision tasks through converting the floatingpoint data in a quantization network into fixed-point. Furthermore, to overcome the data loss caused by the conversion, we propose to compose floating-point data operations

across multiple layers (e.g. convolution, batch normalization and quantization layers) and convert them into fixedpoint.

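The floating-point data in the quantization network's layers is converted to fixed-point data.

To reduce data loss, the conversion is done jointly across multiple layers (convolution, batch normalization, quantization).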
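
One way to see why composing operations across layers can avoid conversion loss is to fold the floating-point steps that sit between an integer convolution accumulator and the quantizer into integer thresholds. The sketch below is a minimal illustration under assumptions, not the paper's exact procedure: the names `w_scale`, `gamma`, `mu`, `sigma`, `beta` and `thresholds` are hypothetical, and `w_scale`, `gamma` and `sigma` are assumed to be positive.

```python
import math

def float_pipeline(acc, w_scale, gamma, mu, sigma, beta, thresholds):
    """Reference path: rescale the integer accumulator, batch-normalize,
    then quantize by counting how many thresholds the result reaches."""
    x = acc * w_scale                      # convolution output in floating point
    y = gamma * (x - mu) / sigma + beta    # batch normalization
    return sum(1 for t in thresholds if y >= t)

def integrate_thresholds(w_scale, gamma, mu, sigma, beta, thresholds):
    """Fold rescaling + batch normalization + quantizer into integer
    thresholds on the accumulator (assumes w_scale, gamma, sigma > 0)."""
    int_thresholds = []
    for t in thresholds:
        # y >= t  <=>  acc >= ((t - beta) * sigma / gamma + mu) / w_scale
        bound = ((t - beta) * sigma / gamma + mu) / w_scale
        # acc is an integer, so comparing against ceil(bound) is equivalent
        int_thresholds.append(math.ceil(bound))
    return int_thresholds

def fixed_pipeline(acc, int_thresholds):
    """Integrated path: integer comparisons only, no floating-point data."""
    return sum(1 for t in int_thresholds if acc >= t)

# Hypothetical parameters for one output channel.
params = dict(w_scale=0.05, gamma=1.5, mu=0.2, sigma=0.8, beta=-0.1,
              thresholds=[0.0, 0.5, 1.0])
int_thr = integrate_thresholds(**params)
mismatches = [a for a in range(-50, 50)
              if float_pipeline(a, **params) != fixed_pipeline(a, int_thr)]
print(int_thr, mismatches)
```

In this sketch the three layers collapse into a handful of integer comparisons, so no intermediate floating-point value has to be rounded layer by layer. A threshold that lands exactly on an integer boundary could still be affected by floating-point rounding when the bound is computed, so a real conversion would need to treat that case carefully.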

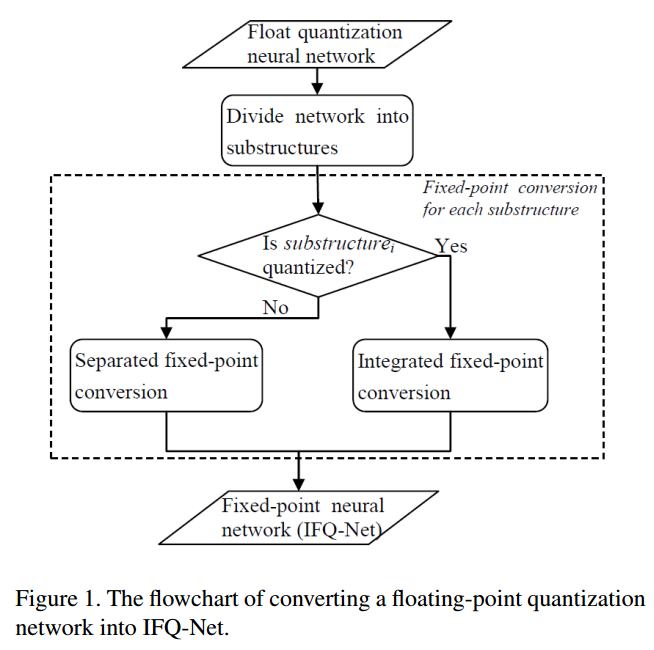

IFQ-Net is a fixed-point network for embedded vision. It divides a quantization network into substructures and then converts each substructure into fixed-point in either a separated or the proposed integrated manner.

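Note: the network is split into substructures, and each substructure is then converted to fixed-point, either separately or in the integrated way.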

First we divide a trained floating-point quantization network into substructures and then we convert each substructure into its fixed-point counterpart. We employ the HWGQ-Net algorithm to train a floating-point quantization network.

Note: set thresholds and normalize the values into a specified range.
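
The idea of thresholding values into a fixed range can be sketched with a generic clip-and-round quantizer. This is only an illustration under assumptions: the function names, the step size `step=0.5` and the 2-bit width are hypothetical, and this is not necessarily the exact quantizer used by HWGQ-Net.

```python
import math

def clip_and_quantize(x, step=0.5, bits=2):
    """Map a float activation to an integer code in [0, 2**bits - 1]."""
    max_code = (1 << bits) - 1
    code = math.floor(x / step + 0.5)      # round half up to the nearest step
    return max(0, min(max_code, code))     # clip: negatives to 0, large values to max_code

def dequantize(code, step=0.5):
    """Recover the representable float value for an integer code."""
    return code * step

for x in (-1.0, 0.2, 0.6, 0.8, 10.0):
    code = clip_and_quantize(x)
    print(x, code, dequantize(code))
```

With 2 bits, every activation ends up as one of four codes, which is where the memory saving of such networks comes from.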

02 DupNet: Towards Very Tiny Quantized CNN with Improved Accuracy for Face Detection (1911.05341)

【

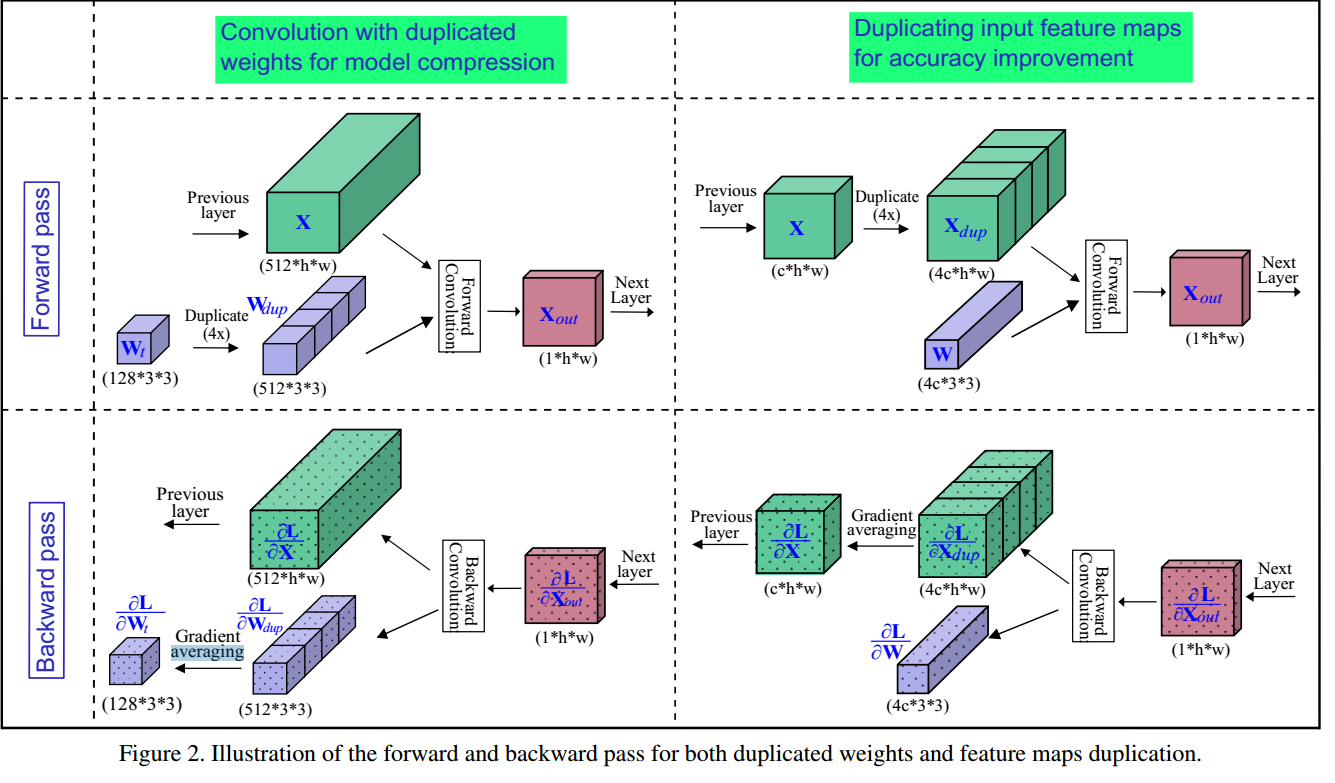

We propose DupNet, which consists of two parts. Firstly, we employ weights with duplicated channels for the weight-intensive layers to reduce the model size. Secondly, for the quantization-sensitive layers whose quantization causes a notable accuracy drop, we duplicate their input feature maps. This allows us to use more weight channels for convolving more representative outputs.

】

【

1) it reduces the model size of a quantized network by duplicating weights for weight-intensive layers;

2) it increases the accuracy by duplicating the input feature maps of its quantization-sensitive layers.

】

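1: Weight-intensive layers duplicate weight parameters (the same parameters are reused, which reduces the model size).

2: Quantization-sensitive layers duplicate their input feature maps (which increases accuracy).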
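
The two ideas can be sketched on a 1x1 (pointwise) convolution applied to a single pixel. This is a rough illustration, not the paper's implementation: the helper names are hypothetical, and duplicating along the input-channel axis is an assumption that may differ from how the paper defines it.

```python
def expand_duplicated_weights(stored, dup):
    """Part 1: tile each filter's stored input-channel weights `dup` times,
    so only len(row) weights per filter need to be stored."""
    return [row * dup for row in stored]

def duplicate_feature_maps(x, dup):
    """Part 2: repeat the input channels `dup` times so the layer can
    apply more weight channels to the same input."""
    return x * dup

def pointwise_conv(x, weights):
    """1x1 convolution at one pixel: x holds one value per input channel,
    weights holds one row of per-channel weights per output filter."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]

# Part 1: a weight-intensive layer with 4 input channels stores only 2 per filter.
stored = [[1, -1], [1, 1]]
full = expand_duplicated_weights(stored, dup=2)
out_a = pointwise_conv([1, 2, 3, 4], full)

# Part 2: a quantization-sensitive layer sees its 2-channel input twice,
# so each filter can use 4 independent weight channels.
x_dup = duplicate_feature_maps([3, 5], dup=2)
out_b = pointwise_conv(x_dup, [[1, -1, 1, 1]])
print(full, out_a, x_dup, out_b)
```

In the first part, 4 stored weights stand in for 8 effective ones, which is the model-size saving. In the second part, the stored weight count grows, but the filter has more channels with which to represent the same input.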

--