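Essential for Web Crawling: Scrapy

I. Introduction to Scrapy

Scrapy is an application framework written for crawling websites and extracting structured data. It can be used in a wide range of programs, including data mining, information processing, and archiving historical data.

It was originally designed for page scraping (more precisely, web scraping), but it can also be used to fetch data returned by APIs (such as Amazon Associates Web Services) or as a general-purpose web crawler. Scrapy is versatile and can be used for data mining, monitoring, and automated testing.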

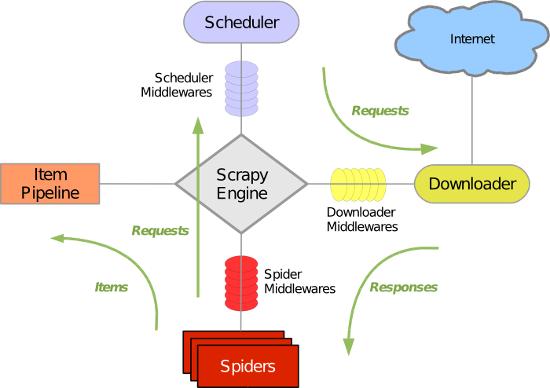

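Scrapy uses the Twisted asynchronous networking library to handle network communication. The overall architecture is roughly as shown in the figure above.

Scrapy mainly consists of the following components:

- Engine (Scrapy Engine)

Controls the data flow through the whole system and triggers events (the core of the framework).

- Scheduler

Accepts requests sent by the engine, pushes them onto a queue, and hands them back when the engine asks for the next one. It can be thought of as a priority queue of URLs (the addresses of the pages to crawl): it decides which URL is crawled next and also removes duplicate URLs.

- Downloader

Downloads page content and returns it to the spiders (the Scrapy downloader is built on Twisted's efficient asynchronous model).

- Spiders

Spiders do the main work: they extract the information you need from specific pages, the so-called items (Item). They can also extract links so that Scrapy goes on to crawl the next page.

- Item Pipeline

Processes the items that spiders extract from pages. Its main jobs are persisting items, validating them, and removing unwanted information. After a page is parsed by a spider, its items are sent to the item pipeline and processed by several components in a specific order.

- Downloader Middlewares

A layer between the Scrapy engine and the downloader that processes the requests and responses passed between them.

- Spider Middlewares

A layer between the Scrapy engine and the spiders that processes the spiders' response input and request output.

- Scheduler Middlewares

Middleware between the Scrapy engine and the scheduler that processes the requests and responses sent from the engine to the scheduler.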
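The scheduler's role described above (a priority queue of URLs that also filters duplicates) can be sketched with the standard library alone. The `ToyScheduler` class and its method names are hypothetical and only illustrate the idea; they are not Scrapy's scheduler API.

```python
import heapq
import itertools


class ToyScheduler:
    """Hypothetical priority queue of URLs that skips duplicates."""

    def __init__(self):
        self._heap = []
        self._seen = set()
        # Tie-breaker that keeps FIFO order among equal priorities
        self._counter = itertools.count()

    def enqueue(self, url, priority=0):
        """Queue a URL; return False if it was already seen."""
        if url in self._seen:
            return False
        self._seen.add(url)
        # heapq is a min-heap, so negate the priority to pop high priorities first
        heapq.heappush(self._heap, (-priority, next(self._counter), url))
        return True

    def next_url(self):
        """Return the next URL to crawl, or None when the queue is empty."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]


scheduler = ToyScheduler()
scheduler.enqueue("http://example.com/a")
scheduler.enqueue("http://example.com/b", priority=10)
duplicate_accepted = scheduler.enqueue("http://example.com/a")
order = [scheduler.next_url(), scheduler.next_url(), scheduler.next_url()]
print(duplicate_accepted, order)
```

The duplicate URL is rejected at enqueue time, so it never occupies a slot in the queue.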

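Scrapy's run flow is roughly as follows:

- The engine takes a URL from the scheduler for the next crawl

- The engine wraps the URL in a request (Request) and passes it to the downloader

- The downloader fetches the resource and wraps it in a response (Response)

- The spider parses the Response

- If the spider extracts items (Item), they are handed to the item pipeline for further processing

- If it extracts links (URL), the URLs are handed to the scheduler to wait for crawling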
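The flow above can be imitated in a few lines of plain Python. This is a toy model, assuming a hypothetical in-memory site (`FAKE_SITE`) in place of real HTTP; `download`, `parse`, and `run` are illustrative names, not Scrapy functions.

```python
from collections import deque

# Hypothetical in-memory "site": URL -> (page title, outgoing links)
FAKE_SITE = {
    "/": ("home", ["/page1", "/page2"]),
    "/page1": ("first", ["/page2"]),
    "/page2": ("second", []),
}


def download(url):
    """Stand-in for the downloader: returns a response-like dict."""
    title, links = FAKE_SITE[url]
    return {"url": url, "title": title, "links": links}


def parse(response):
    """Stand-in for a spider callback: yields one item, then follow-up URLs."""
    yield {"item": response["title"]}
    for link in response["links"]:
        yield link


def run(start_url):
    queue, seen, items = deque([start_url]), {start_url}, []
    while queue:
        url = queue.popleft()             # engine takes a URL from the scheduler
        response = download(url)          # downloader fetches it
        for result in parse(response):    # spider parses the response
            if isinstance(result, dict):  # an item goes to the pipeline
                items.append(result["item"])
            elif result not in seen:      # a new URL goes back to the scheduler
                seen.add(result)
                queue.append(result)
    return items


crawled = run("/")
print(crawled)
```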

II. Installation

```text
Linux
    pip3 install scrapy

Windows
    a. pip3 install wheel
    b. Download Twisted from http://www.lfd.uci.edu/~gohlke/pythonlibs/#twisted
    c. Change to the download directory and run: pip3 install Twisted-17.1.0-cp35-cp35m-win_amd64.whl
    d. pip3 install scrapy
    e. Download and install pywin32: https://sourceforge.net/projects/pywin32/files/
```
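After installing, a quick way to confirm that the packages are importable is a small standard-library check. This is only a sketch: `package_version` is a hypothetical helper, and it assumes Python 3.8 or later for `importlib.metadata`.

```python
import importlib.util
from importlib import metadata


def package_version(name):
    """Return the installed version of a package, or None if it is missing."""
    if importlib.util.find_spec(name) is None:
        return None
    try:
        return metadata.version(name)
    except metadata.PackageNotFoundError:
        return None


for pkg in ("scrapy", "twisted"):
    print(pkg, package_version(pkg) or "not installed")
```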

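III. Basic Usage

1. Basic commands

```text
1. scrapy startproject <project_name>
   - Creates a project directory in the current directory (similar to Django)

2. scrapy genspider [-t template] <name> <domain>
   - Creates a spider
   For example:
      scrapy genspider -t basic oldboy oldboy.com
      scrapy genspider -t xmlfeed autohome autohome.com.cn
   PS:
      List the available templates: scrapy genspider -l
      Show the contents of a template: scrapy genspider -d <template_name>

3. scrapy list
   - Lists the spiders in the project

4. scrapy crawl <spider_name>
   - Runs a single spider
```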

2. Project structure and spider overview

2.1. Directory structure

```text
project_name/
    scrapy.cfg
    project_name/
        __init__.py
        items.py
        pipelines.py
        settings.py
        spiders/
            __init__.py
            spider1.py
            spider2.py
            spider3.py
```

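2.2. File descriptions

- scrapy.cfg: the project's main configuration. (The settings that actually control crawling live in settings.py.)

- items.py: defines the data storage templates used to structure data, similar to a Django Model

- pipelines.py: data processing behavior, e.g. persisting structured data

- settings.py: configuration file, e.g. recursion depth, concurrency, download delay

- spiders: the spider directory, where you create files and write crawl rules

Note: spider files are usually named after the site's domain.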

2.3. Example

```python
import scrapy


class XiaoHuarSpider(scrapy.spiders.Spider):
    name = "xiaohuar"                    # spider name (important: used to start the spider)
    allowed_domains = ["xiaohuar.com"]   # allowed domains
    start_urls = [
        "http://www.xiaohuar.com/hua/",  # start URL
    ]

    def parse(self, response):
        # Callback invoked with the response of each start URL
        pass
```

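If an encoding error occurs on Windows, it can be fixed as follows:

```python
import io
import sys

# Re-wrap stdout so that printed text is encoded as gb18030
sys.stdout = io.TextIOWrapper(sys.stdout.buffer, encoding='gb18030')
```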

3. A first try

```python
import hashlib

import scrapy
from scrapy.http import Request


class DigSpider(scrapy.Spider):
    # Spider name; used to start the spider from the command line
    name = "dig"

    # Allowed domains
    allowed_domains = ["chouti.com"]

    # Start URLs
    start_urls = [
        'http://dig.chouti.com/',
    ]

    # Maps the MD5 of every page URL already requested to the URL itself
    has_request_set = {}

    def parse(self, response):
        print(response.url)

        # response.xpath() is the current form of the legacy
        # HtmlXPathSelector(response).select() call
        page_list = response.xpath(
            r'//div[@id="dig_lcpage"]//a[re:test(@href, "/all/hot/recent/\d+")]/@href'
        ).extract()
        for page in page_list:
            page_url = 'http://dig.chouti.com%s' % page
            key = self.md5(page_url)
            if key in self.has_request_set:
                continue
            self.has_request_set[key] = page_url
            yield Request(url=page_url, method='GET', callback=self.parse)

    @staticmethod
    def md5(val):
        ha = hashlib.md5()
        ha.update(bytes(val, encoding='utf-8'))
        return ha.hexdigest()
```

To run this spider, change to the project directory in a terminal and run:

```text
scrapy crawl dig --nolog
```

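The key points in the code above are:

- Request is the class that wraps a request; yielding a Request object from a callback tells Scrapy to go on and fetch that URL

- HtmlXPathSelector structures the HTML and provides selector functionality (newer Scrapy releases offer the same through Selector and response.xpath())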

4. Selectors

```python
#!/usr/bin/env python
# -*- coding:utf-8 -*-
from scrapy.selector import Selector
from scrapy.http import HtmlResponse

html = """<!DOCTYPE html>
<html>
    <head lang="en">
        <meta charset="UTF-8">
        <title></title>
    </head>
    <body>
        <ul>
            <li class="item-"><a id='i1' href="link.html">first item</a></li>
            <li class="item-0"><a id='i2' href="llink.html">first item</a></li>
            <li class="item-1"><a href="llink2.html">second item<span>vv</span></a></li>
        </ul>
        <div><a href="llink2.html">second item</a></div>
    </body>
</html>
"""
response = HtmlResponse(url='http://example.com', body=html, encoding='utf-8')
sel = Selector(response=response)

# Uncomment one query at a time to inspect its result.
# print(sel.xpath('//a'))
# print(sel.xpath('//a[2]'))
# print(sel.xpath('//a[@id]'))
# print(sel.xpath('//a[@id="i1"]'))
# print(sel.xpath('//a[@href="link.html"][@id="i1"]'))
# print(sel.xpath('//a[contains(@href, "link")]'))
# print(sel.xpath('//a[starts-with(@href, "link")]'))
# print(sel.xpath(r'//a[re:test(@id, "i\d+")]'))
# print(sel.xpath(r'//a[re:test(@id, "i\d+")]/text()').extract())
# print(sel.xpath(r'//a[re:test(@id, "i\d+")]/@href').extract())
# print(sel.xpath('/html/body/ul/li/a/@href').extract())
# print(sel.xpath('//body/ul/li/a/@href').extract_first())

# Relative queries start from each matched element:
# ul_list = sel.xpath('//body/ul/li')
# for item in ul_list:
#     v = item.xpath('./a/span')
#     # or, equivalently
#     # v = item.xpath('a/span')
#     # note: '*/a/span' looks one level deeper (li/*/a/span) and matches nothing here
#     # v = item.xpath('*/a/span')
#     print(v)
```
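To experiment with the simpler queries without Scrapy installed, the standard library's `xml.etree.ElementTree` can stand in. This is only an approximation: ElementTree supports a limited XPath subset (attribute predicates work, but `contains()`, `starts-with()`, and `re:test()` do not), and it assumes well-formed markup, so the fragment below is a cleaned-up version of the HTML above.

```python
import xml.etree.ElementTree as ET

# A well-formed fragment modeled on the HTML used above
MARKUP = """
<body>
  <ul>
    <li class="item-"><a id="i1" href="link.html">first item</a></li>
    <li class="item-0"><a id="i2" href="llink.html">first item</a></li>
    <li class="item-1"><a href="llink2.html">second item<span>vv</span></a></li>
  </ul>
  <div><a href="llink2.html">second item</a></div>
</body>
"""

root = ET.fromstring(MARKUP)  # root is the <body> element

all_links = root.findall(".//a")         # like //a
with_id = root.findall(".//a[@id]")      # like //a[@id]
first = root.findall(".//a[@id='i1']")   # like //a[@id="i1"]
# like //body/ul/li/a/@href
list_hrefs = [a.get("href") for a in root.findall("./ul/li/a")]
# relative query evaluated from each <li>, like item.xpath('./a/span')
spans = [li.find("./a/span") for li in root.findall("./ul/li")]

print(len(all_links), len(with_id), first[0].get("href"), list_hrefs)
```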

5. Logging in to Chouti to upvote or cancel upvotes automatically

```python
# -*- coding: utf-8 -*-
import urllib.parse

import scrapy
from scrapy.http import Request
from scrapy.http.cookies import CookieJar
from scrapy.selector import Selector


class ChoutiSpider(scrapy.Spider):
    name = 'chouti'
    allowed_domains = ['dig.chouti.com']
    start_urls = ['http://dig.chouti.com/']
    cookie_dict = {}
    news_dict = {}
    type = 'undo'  # 'undo' cancels upvotes, 'do' upvotes

    def start_requests(self):
        for url in self.start_urls:
            yield Request(url, dont_filter=True, callback=self.login)

    def login(self, response):
        print('login...\n')
        ck_jar = CookieJar()
        ck_jar.extract_cookies(response, response.request)  # read the cookies from the response
        # _cookies is a private attribute laid out as domain -> path -> name -> Cookie
        for k, v in ck_jar._cookies.items():
            for i, j in v.items():
                for m, n in j.items():
                    self.cookie_dict[m] = n.value
        print(self.cookie_dict, '\n')
        post_dict = {
            'phone': 123456789,
            'password': '**********',
            'oneMonth': 1,
        }

        yield Request(
            url='http://dig.chouti.com/login',
            method='POST',
            cookies=self.cookie_dict,
            body=urllib.parse.urlencode(post_dict),
            headers={'Content-Type': 'application/x-www-form-urlencoded; charset=UTF-8'},
            callback=self.get_news
        )

    def get_news(self, response):
        yield Request(url='http://dig.chouti.com/', cookies=self.cookie_dict, callback=self.do)

    def do(self, response):
        hxs = Selector(response)
        link_id_list = hxs.xpath('//div[@class="part2"]/@share-linkid').extract()
        print(link_id_list)
        undo_url = 'http://dig.chouti.com/vote/cancel/vote.do'
        for link_id in link_id_list:
            if self.type == 'undo':
                print('undo.........\n')
                post_dict = {'linksId': link_id}
                yield Request(
                    undo_url, method='POST',
                    cookies=self.cookie_dict,
                    body=urllib.parse.urlencode(post_dict),
                    headers={'Content-Type': 'application/x-www-form-urlencoded; charset=UTF-8'},
                    callback=self.showret)
            else:
                print('dododod.......\n')
                thumb_url = "http://dig.chouti.com/link/vote?linksId=%s" % (link_id,)
                yield Request(thumb_url, method='POST', cookies=self.cookie_dict, callback=self.showret)

        page_list = hxs.xpath('//a[@class="ct_pagepa"]/@href').extract()
        for page in page_list:
            # e.g. http://dig.chouti.com/all/hot/recent/2
            page_url = 'http://dig.chouti.com%s' % (page,)
            yield Request(page_url, method='GET', callback=self.do)

    def showret(self, response):
        print('show...\n', response.text)
```

Note: set DEPTH_LIMIT = 1 in settings.py to limit the depth of the "recursion", so that only the current page is processed.
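The effect of a depth limit can be illustrated with a toy link graph. This is a plain-Python sketch, not Scrapy's implementation: `LINKS` and `crawl` are hypothetical, the start page counts as depth 0, and a limit of 0 is treated as "no limit", as in the settings notes later in this article.

```python
from collections import deque

# Hypothetical link graph: page -> pages it links to
LINKS = {"A": ["B", "C"], "B": ["D"], "C": [], "D": ["E"], "E": []}


def crawl(start, depth_limit):
    """Visit pages breadth-first, following links at most depth_limit levels deep."""
    visited = [start]
    queue = deque([(start, 0)])
    while queue:
        page, depth = queue.popleft()
        if depth_limit and depth >= depth_limit:
            continue  # links found on this page would exceed the limit
        for nxt in LINKS[page]:
            if nxt not in visited:
                visited.append(nxt)
                queue.append((nxt, depth + 1))
    return visited


print(crawl("A", 1))
print(crawl("A", 2))
print(crawl("A", 0))
```

With a limit of 1, only the start page and the pages it links to directly are visited; nothing found on those pages is followed.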

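6. Structuring the output

The examples above do only simple processing, so everything is handled directly in the parse method. To process more data, you can use Scrapy's items to structure the data and then hand it to pipelines for uniform processing.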

```python
# spider file (under spiders/)
import hashlib

import scrapy
from scrapy.http import Request

from ..items import XiaoHuarItem


class XiaoHuarSpider(scrapy.Spider):
    # Spider name; used to start the spider from the command line
    name = "xiaohuar"
    # Allowed domains
    allowed_domains = ["xiaohuar.com"]

    start_urls = [
        "http://www.xiaohuar.com/list-1-1.html",
    ]
    # custom_settings = {
    #     'ITEM_PIPELINES': {
    #         'spider1.pipelines.JsonPipeline': 100
    #     }
    # }
    has_request_set = {}

    def parse(self, response):
        # Analyze the page:
        # find the content that matches the rules (the pictures) and save it,
        # then find all <a> tags and follow them, level by level

        # response.xpath() is the current form of HtmlXPathSelector(response).select()
        items = response.xpath('//div[@class="item_list infinite_scroll"]/div')
        for item in items:
            src = item.xpath('.//div[@class="img"]/a/img/@src').extract_first()
            name = item.xpath('.//div[@class="img"]/span/text()').extract_first()
            school = item.xpath('.//div[@class="img"]/div[@class="btns"]/a/text()').extract_first()
            url = "http://www.xiaohuar.com%s" % src
            yield XiaoHuarItem(name=name, school=school, url=url)

        # extract() turns the selectors into plain URL strings before hashing
        urls = response.xpath(
            r'//a[re:test(@href, "http://www.xiaohuar.com/list-1-\d+.html")]/@href'
        ).extract()
        for url in urls:
            key = self.md5(url)
            if key in self.has_request_set:
                continue
            self.has_request_set[key] = url
            yield Request(url=url, method='GET', callback=self.parse)

    @staticmethod
    def md5(val):
        ha = hashlib.md5()
        ha.update(bytes(val, encoding='utf-8'))
        return ha.hexdigest()
```

```python
# items.py
import scrapy


class XiaoHuarItem(scrapy.Item):
    name = scrapy.Field()
    school = scrapy.Field()
    url = scrapy.Field()
```

```python
# pipelines.py
import json
import os

import requests


class JsonPipeline(object):
    """Writes every item as one JSON line to xiaohua.txt."""

    def __init__(self):
        # utf-8 so that non-ASCII text written with ensure_ascii=False is stored correctly
        self.file = open('xiaohua.txt', 'w', encoding='utf-8')

    def process_item(self, item, spider):
        v = json.dumps(dict(item), ensure_ascii=False)
        self.file.write(v)
        self.file.write('\n')
        self.file.flush()
        return item


class FilePipeline(object):
    """Downloads the image referenced by each item into the imgs directory."""

    def __init__(self):
        if not os.path.exists('imgs'):
            os.makedirs('imgs')

    def process_item(self, item, spider):
        response = requests.get(item['url'], stream=True)
        file_name = '%s_%s.jpg' % (item['name'], item['school'])
        with open(os.path.join('imgs', file_name), mode='wb') as f:
            f.write(response.content)
        return item
```

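```python
# settings.py
ITEM_PIPELINES = {
    'spider1.pipelines.JsonPipeline': 100,
    'spider1.pipelines.FilePipeline': 300,
}
# The integer after each entry determines the run order: items pass through
# the pipelines from the lowest number to the highest. These values are
# conventionally kept in the 0-1000 range.
```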

A pipeline can do more than that, as shown below:

```python
from scrapy.exceptions import DropItem


class CustomPipeline(object):
    def __init__(self, v):
        self.value = v

    def process_item(self, item, spider):
        # Process the item and persist it here

        # Returning the item lets the following pipelines process it
        return item

        # Raising DropItem discards the item; later pipelines never see it
        # raise DropItem()

    @classmethod
    def from_crawler(cls, crawler):
        """
        Called at initialization to create the pipeline object
        :param crawler:
        :return:
        """
        val = crawler.settings.getint('MMMM')
        return cls(val)

    def open_spider(self, spider):
        """
        Called when the spider starts running
        :param spider:
        :return:
        """
        print('000000')

    def close_spider(self, spider):
        """
        Called when the spider is closed
        :param spider:
        :return:
        """
        print('111111')
```
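How the priority numbers and a dropped item interact can be shown without Scrapy. This is a simplified sketch: `DropItem` here is a local stand-in for `scrapy.exceptions.DropItem`, `process_item` omits the `spider` argument, and `StripName`, `RequireName`, and `run_pipelines` are hypothetical names.

```python
class DropItem(Exception):
    """Local stand-in for scrapy.exceptions.DropItem."""


class StripName:
    def process_item(self, item):
        item["name"] = item["name"].strip()
        return item


class RequireName:
    def process_item(self, item):
        if not item["name"]:
            raise DropItem("missing name")
        return item


# Lower numbers run first, as with ITEM_PIPELINES
PIPELINES = {RequireName: 300, StripName: 100}


def run_pipelines(item, registry):
    """Pass the item through every pipeline in priority order; None means dropped."""
    for cls, _order in sorted(registry.items(), key=lambda pair: pair[1]):
        try:
            item = cls().process_item(item)
        except DropItem:
            return None  # later pipelines never see a dropped item
    return item


kept = run_pipelines({"name": "  Ann "}, PIPELINES)
dropped = run_pipelines({"name": "   "}, PIPELINES)
print(kept, dropped)
```

Because StripName (100) runs before RequireName (300), a name made only of whitespace is already empty when it is validated, so the item is dropped.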

7. Middleware

```python
class SpiderMiddleware(object):

    def process_spider_input(self, response, spider):
        """
        Called after the download completes, before the response is handed to parse
        :param response:
        :param spider:
        :return:
        """
        pass

    def process_spider_output(self, response, result, spider):
        """
        Called with the results the spider returns after processing a response
        :param response:
        :param result:
        :param spider:
        :return: must return an iterable of Request or Item objects
        """
        return result

    def process_spider_exception(self, response, exception, spider):
        """
        Called when an exception is raised
        :param response:
        :param exception:
        :param spider:
        :return: None to let the following middlewares handle the exception;
                 or an iterable of Request or Item objects, which is passed
                 on to the scheduler or the pipeline
        """
        return None

    def process_start_requests(self, start_requests, spider):
        """
        Called when the spider starts
        :param start_requests:
        :param spider:
        :return: an iterable of Request objects
        """
        return start_requests
```

```python
class DownMiddleware1(object):
    def process_request(self, request, spider):
        """
        Called for every request that needs to be downloaded; the request
        passes through process_request of every downloader middleware
        :param request:
        :param spider:
        :return:
            None: continue with the remaining middlewares and download;
            Response object: stop running process_request and start running process_response;
            Request object: stop the middleware chain and hand the Request back to the scheduler;
            raise IgnoreRequest: stop running process_request and start running process_exception
        """
        pass

    def process_response(self, request, response, spider):
        """
        Called with the response returned by the downloader
        :param request:
        :param response:
        :param spider:
        :return:
            Response object: passed on to process_response of the other middlewares;
            Request object: stop the middleware chain; the request is rescheduled for download;
            raise IgnoreRequest: Request.errback is called
        """
        print('response1')
        return response

    def process_exception(self, request, exception, spider):
        """
        Called when a download handler or a process_request() of a
        downloader middleware raises an exception
        :param request:
        :param exception:
        :param spider:
        :return:
            None: pass the exception on to the following middlewares;
            Response object: stop the remaining process_exception methods;
            Request object: stop the middleware chain; the request is rescheduled for download
        """
        return None
```
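The return-value rules for process_request can be made concrete with a small stand-alone chain. This sketch covers only the None and Response cases, uses plain strings as requests and responses, and drops the `spider` argument; `run_request_chain`, `CacheMiddleware`, and `UpperMiddleware` are hypothetical names rather than Scrapy classes.

```python
def run_request_chain(middlewares, request, download):
    """Run process_request in order; a non-None result skips the download."""
    for mw in middlewares:
        result = mw.process_request(request)
        if result is not None:
            response = result  # short-circuit: the downloader is not called
            break
    else:
        response = download(request)
    # Responses travel back through the middlewares in reverse order
    for mw in reversed(middlewares):
        response = mw.process_response(request, response)
    return response


class CacheMiddleware:
    def __init__(self):
        self.cache = {}

    def process_request(self, request):
        return self.cache.get(request)  # None means "keep going"

    def process_response(self, request, response):
        self.cache[request] = response
        return response


class UpperMiddleware:
    def process_request(self, request):
        return None

    def process_response(self, request, response):
        return response.upper()


calls = []


def fake_download(request):
    calls.append(request)
    return "body of " + request


chain = [CacheMiddleware(), UpperMiddleware()]
first = run_request_chain(chain, "/a", fake_download)
second = run_request_chain(chain, "/a", fake_download)
print(first, second, calls)
```

The second call is answered from the cache, so `fake_download` runs only once.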

8. Custom commands

- Create a directory of any name at the same level as spiders, e.g. commands

- Create a crawlall.py file in it (the file name becomes the name of the custom command)

```python
from scrapy.commands import ScrapyCommand


class Command(ScrapyCommand):

    requires_project = True

    def syntax(self):
        return '[options]'

    def short_desc(self):
        return 'Runs all of the spiders'

    def run(self, args, opts):
        # Older Scrapy releases exposed the loader as crawler_process.spiders
        spider_list = self.crawler_process.spider_loader.list()
        for name in spider_list:
            self.crawler_process.crawl(name, **opts.__dict__)
        self.crawler_process.start()
```

- Add the setting COMMANDS_MODULE = '<project_name>.<directory_name>' to settings.py

- Run the command from the project directory: scrapy crawlall

9. Custom extensions

A custom extension uses signals to register the operations it wants to run at specific points.

```python
from scrapy import signals


class MyExtension(object):
    def __init__(self, value):
        self.value = value

    @classmethod
    def from_crawler(cls, crawler):
        val = crawler.settings.getint('MMMM')
        ext = cls(val)

        # Register the handlers for the signals of interest
        crawler.signals.connect(ext.spider_opened, signal=signals.spider_opened)
        crawler.signals.connect(ext.spider_closed, signal=signals.spider_closed)

        return ext

    def spider_opened(self, spider):
        print('open')

    def spider_closed(self, spider):
        print('close')
```
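The connect-then-dispatch pattern the extension relies on can be sketched in a few lines. This is a toy dispatcher, not Scrapy's signal machinery: `ToySignalManager`, `SPIDER_OPENED`, `SPIDER_CLOSED`, and `RecordingExtension` are hypothetical names.

```python
from collections import defaultdict

# Hypothetical signal identifiers; any unique objects would do
SPIDER_OPENED = object()
SPIDER_CLOSED = object()


class ToySignalManager:
    def __init__(self):
        self._receivers = defaultdict(list)

    def connect(self, receiver, signal):
        """Register a callable to run whenever the signal is sent."""
        self._receivers[signal].append(receiver)

    def send(self, signal, **kwargs):
        """Call every receiver registered for the signal."""
        for receiver in self._receivers[signal]:
            receiver(**kwargs)


class RecordingExtension:
    def __init__(self):
        self.events = []

    def spider_opened(self, spider):
        self.events.append(("open", spider))

    def spider_closed(self, spider):
        self.events.append(("close", spider))


manager = ToySignalManager()
ext = RecordingExtension()
manager.connect(ext.spider_opened, signal=SPIDER_OPENED)
manager.connect(ext.spider_closed, signal=SPIDER_CLOSED)

manager.send(SPIDER_OPENED, spider="demo")
manager.send(SPIDER_CLOSED, spider="demo")
print(ext.events)
```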

10. Avoiding duplicate requests

By default Scrapy deduplicates requests with scrapy.dupefilters.RFPDupeFilter (the module was named scrapy.dupefilter in old releases). The related settings are:

```python
DUPEFILTER_CLASS = 'scrapy.dupefilters.RFPDupeFilter'
DUPEFILTER_DEBUG = False
JOBDIR = "/root/"  # directory that stores the record of visited requests; the final path is /root/requests.seen
```

A custom filter can be written as follows and enabled through DUPEFILTER_CLASS:

```python
class RepeatUrl:
    def __init__(self):
        self.visited_url = set()

    @classmethod
    def from_settings(cls, settings):
        """
        Called at initialization
        :param settings:
        :return:
        """
        return cls()

    def request_seen(self, request):
        """
        Check whether the current request has already been visited
        :param request:
        :return: True if it has been visited, False otherwise
        """
        if request.url in self.visited_url:
            return True
        self.visited_url.add(request.url)
        return False

    def open(self):
        """
        Called when crawling starts
        :return:
        """
        print('open replication')

    def close(self, reason):
        """
        Called when crawling ends
        :param reason:
        :return:
        """
        print('close replication')

    def log(self, request, spider):
        """
        Log a duplicate request
        :param request:
        :param spider:
        :return:
        """
        print('repeat', request.url)
```
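RepeatUrl compares raw URL strings, so two URLs that differ only in query-parameter order or in a fragment count as different pages. One possible refinement is to hash a canonical form of the request instead. The sketch below uses only the standard library; `fingerprint` and `FingerprintFilter` are hypothetical names, and the canonicalization rules are an assumption for illustration, not Scrapy's exact algorithm.

```python
import hashlib
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def fingerprint(method, url):
    """Hash the method plus a canonical URL (sorted query, no fragment)."""
    parts = urlsplit(url)
    query = urlencode(sorted(parse_qsl(parts.query, keep_blank_values=True)))
    canonical = urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path or "/", query, "")
    )
    data = (method.upper() + " " + canonical).encode("utf-8")
    return hashlib.sha1(data).hexdigest()


class FingerprintFilter:
    def __init__(self):
        self.seen = set()

    def request_seen(self, method, url):
        """Return True if an equivalent request was seen before."""
        fp = fingerprint(method, url)
        if fp in self.seen:
            return True
        self.seen.add(fp)
        return False


dupe_filter = FingerprintFilter()
r1 = dupe_filter.request_seen("GET", "http://example.com/?a=1&b=2")
r2 = dupe_filter.request_seen("GET", "http://EXAMPLE.com/?b=2&a=1#top")
r3 = dupe_filter.request_seen("POST", "http://example.com/?a=1&b=2")
print(r1, r2, r3)
```

The second request is recognized as a duplicate of the first, while the POST to the same URL is kept because the method is part of the fingerprint.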

11. Miscellaneous

```python
# -*- coding: utf-8 -*-

# Scrapy settings for step8_king project
#
# For simplicity, this file contains only settings considered important or
# commonly used. You can find more settings consulting the documentation:
#
#     http://doc.scrapy.org/en/latest/topics/settings.html
#     http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html
#     http://scrapy.readthedocs.org/en/latest/topics/spider-middleware.html

# 1. Bot name
BOT_NAME = 'step8_king'

# 2. Spider module paths
SPIDER_MODULES = ['step8_king.spiders']
NEWSPIDER_MODULE = 'step8_king.spiders'

# Crawl responsibly by identifying yourself (and your website) on the user-agent
# 3. Client User-Agent request header
# USER_AGENT = 'step8_king (+http://www.yourdomain.com)'

# Obey robots.txt rules
# 4. Whether to obey the site's robots.txt rules
# ROBOTSTXT_OBEY = False

# Configure maximum concurrent requests performed by Scrapy (default: 16)
# 5. Number of concurrent requests
# CONCURRENT_REQUESTS = 4

# Configure a delay for requests for the same website (default: 0)
# See http://scrapy.readthedocs.org/en/latest/topics/settings.html#download-delay
# See also autothrottle settings and docs
# 6. Download delay in seconds
# DOWNLOAD_DELAY = 2


# The download delay setting will honor only one of:
# 7. Concurrent requests per domain; the download delay is also applied per domain
# CONCURRENT_REQUESTS_PER_DOMAIN = 2
# Concurrent requests per IP; if set, CONCURRENT_REQUESTS_PER_DOMAIN is ignored
# and the download delay is applied per IP
# CONCURRENT_REQUESTS_PER_IP = 3

# Disable cookies (enabled by default)
# 8. Whether cookies are supported; cookies are handled through a cookiejar
# COOKIES_ENABLED = True
# COOKIES_DEBUG = True

# Disable Telnet Console (enabled by default)
# 9. Telnet console for inspecting and controlling the running crawler
#    Connect with: telnet ip port, then issue commands
# TELNETCONSOLE_ENABLED = True
# TELNETCONSOLE_HOST = '127.0.0.1'
# TELNETCONSOLE_PORT = [6023,]


# 10. Default request headers
# Override the default request headers:
# DEFAULT_REQUEST_HEADERS = {
#     'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
#     'Accept-Language': 'en',
# }


# Configure item pipelines
# See http://scrapy.readthedocs.org/en/latest/topics/item-pipeline.html
# 11. Pipelines that process the items
# ITEM_PIPELINES = {
#    'step8_king.pipelines.JsonPipeline': 700,
#    'step8_king.pipelines.FilePipeline': 500,
# }


# 12. Custom extensions, invoked through signals
# Enable or disable extensions
# See http://scrapy.readthedocs.org/en/latest/topics/extensions.html
# EXTENSIONS = {
#     # 'step8_king.extensions.MyExtension': 500,
# }


# 13. Maximum crawl depth; the current depth is available through meta;
#     0 means no limit
# DEPTH_LIMIT = 3

# 14. Crawl order: 0 means depth-first, LIFO (default); 1 means breadth-first, FIFO

# Last in, first out: depth-first
# DEPTH_PRIORITY = 0
# SCHEDULER_DISK_QUEUE = 'scrapy.squeues.PickleLifoDiskQueue'
# SCHEDULER_MEMORY_QUEUE = 'scrapy.squeues.LifoMemoryQueue'

# First in, first out: breadth-first
# DEPTH_PRIORITY = 1
# SCHEDULER_DISK_QUEUE = 'scrapy.squeues.PickleFifoDiskQueue'
# SCHEDULER_MEMORY_QUEUE = 'scrapy.squeues.FifoMemoryQueue'

# 15. Scheduler class
# SCHEDULER = 'scrapy.core.scheduler.Scheduler'
# from scrapy.core.scheduler import Scheduler


# 16. URL deduplication
# DUPEFILTER_CLASS = 'step8_king.duplication.RepeatUrl'


# Enable and configure the AutoThrottle extension (disabled by default)
# See http://doc.scrapy.org/en/latest/topics/autothrottle.html

"""
17. AutoThrottle algorithm
    from scrapy.extensions.throttle import AutoThrottle

    How the delay is adjusted:
    1. Read the minimum delay: DOWNLOAD_DELAY
    2. Read the maximum delay: AUTOTHROTTLE_MAX_DELAY
    3. Set the initial download delay: AUTOTHROTTLE_START_DELAY
    4. When a download finishes, take its latency, i.e. the time between
       opening the connection and receiving the response headers
    5. Combine it with AUTOTHROTTLE_TARGET_CONCURRENCY:
        target_delay = latency / self.target_concurrency
        new_delay = (slot.delay + target_delay) / 2.0  # slot.delay is the previous delay
        new_delay = max(target_delay, new_delay)
        new_delay = min(max(self.mindelay, new_delay), self.maxdelay)
        slot.delay = new_delay
"""

# Enable AutoThrottle
# AUTOTHROTTLE_ENABLED = True
# The initial download delay
# AUTOTHROTTLE_START_DELAY = 5
# The maximum download delay to be set in case of high latencies
# AUTOTHROTTLE_MAX_DELAY = 10
# The average number of requests Scrapy should be sending in parallel to each remote server
# AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

# Enable showing throttling stats for every response received:
# AUTOTHROTTLE_DEBUG = True

# Enable and configure HTTP caching (disabled by default)
# See http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings


"""
18. HTTP cache
    Caches requests that have already been sent, together with their
    responses, so that they can be reused later

    from scrapy.downloadermiddlewares.httpcache import HttpCacheMiddleware
    from scrapy.extensions.httpcache import DummyPolicy
    from scrapy.extensions.httpcache import FilesystemCacheStorage
"""
# Whether the cache is enabled
# HTTPCACHE_ENABLED = True

# Cache policy: cache every request; later requests are served straight from the cache
# HTTPCACHE_POLICY = "scrapy.extensions.httpcache.DummyPolicy"
# Cache policy: follow HTTP response headers such as Cache-Control and Last-Modified
# HTTPCACHE_POLICY = "scrapy.extensions.httpcache.RFC2616Policy"

# Cache expiration time in seconds
# HTTPCACHE_EXPIRATION_SECS = 0

# Cache directory
# HTTPCACHE_DIR = 'httpcache'

# HTTP status codes that are not cached
# HTTPCACHE_IGNORE_HTTP_CODES = []

# Cache storage backend
# HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'


"""
19. Proxies
    from scrapy.downloadermiddlewares.httpproxy import HttpProxyMiddleware

    Option 1: use the default middleware, which reads the environment variables
        os.environ
        {
            http_proxy: http://root:woshiniba@192.168.11.11:9999/
            https_proxy: http://192.168.11.11:9999/
        }

    Option 2: use a custom downloader middleware

    import base64
    import random

    class ProxyMiddleware(object):
        def process_request(self, request, spider):
            PROXIES = [
                {'ip_port': '111.11.228.75:80', 'user_pass': ''},
                {'ip_port': '120.198.243.22:80', 'user_pass': ''},
                {'ip_port': '111.8.60.9:8123', 'user_pass': ''},
                {'ip_port': '101.71.27.120:80', 'user_pass': ''},
                {'ip_port': '122.96.59.104:80', 'user_pass': ''},
                {'ip_port': '122.224.249.122:8088', 'user_pass': ''},
            ]
            proxy = random.choice(PROXIES)
            request.meta['proxy'] = "http://%s" % proxy['ip_port']
            if proxy['user_pass']:
                # user_pass has the form 'user:password'
                encoded_user_pass = base64.b64encode(proxy['user_pass'].encode('utf-8'))
                request.headers['Proxy-Authorization'] = b'Basic ' + encoded_user_pass
                print("**************ProxyMiddleware have pass************" + proxy['ip_port'])
            else:
                print("**************ProxyMiddleware no pass************" + proxy['ip_port'])

    DOWNLOADER_MIDDLEWARES = {
       'step8_king.middlewares.ProxyMiddleware': 500,
    }
"""

"""
20. HTTPS
    There are two cases when crawling over HTTPS:
    1. The site uses a trusted certificate (supported by default)
        DOWNLOADER_HTTPCLIENTFACTORY = "scrapy.core.downloader.webclient.ScrapyHTTPClientFactory"
        DOWNLOADER_CLIENTCONTEXTFACTORY = "scrapy.core.downloader.contextfactory.ScrapyClientContextFactory"

    2. The site uses a custom certificate
        DOWNLOADER_HTTPCLIENTFACTORY = "scrapy.core.downloader.webclient.ScrapyHTTPClientFactory"
        DOWNLOADER_CLIENTCONTEXTFACTORY = "step8_king.https.MySSLFactory"

        # https.py
        from scrapy.core.downloader.contextfactory import ScrapyClientContextFactory
        from twisted.internet.ssl import (optionsForClientTLS, CertificateOptions, PrivateCertificate)

        class MySSLFactory(ScrapyClientContextFactory):
            def getCertificateOptions(self):
                from OpenSSL import crypto
                v1 = crypto.load_privatekey(crypto.FILETYPE_PEM, open('/Users/wupeiqi/client.key.unsecure', mode='r').read())
                v2 = crypto.load_certificate(crypto.FILETYPE_PEM, open('/Users/wupeiqi/client.pem', mode='r').read())
                return CertificateOptions(
                    privateKey=v1,   # PKey object
                    certificate=v2,  # X509 object
                    verify=False,
                    method=getattr(self, 'method', getattr(self, '_ssl_method', None))
                )
    Other:
        Related classes
            scrapy.core.downloader.handlers.http.HttpDownloadHandler
            scrapy.core.downloader.webclient.ScrapyHTTPClientFactory
            scrapy.core.downloader.contextfactory.ScrapyClientContextFactory
        Related settings
            DOWNLOADER_HTTPCLIENTFACTORY
            DOWNLOADER_CLIENTCONTEXTFACTORY
"""


"""
21. Spider middleware
    The SpiderMiddleware class is the one listed in section 7 (Middleware).

    Built-in spider middlewares:
        'scrapy.spidermiddlewares.httperror.HttpErrorMiddleware': 50,
        'scrapy.spidermiddlewares.offsite.OffsiteMiddleware': 500,
        'scrapy.spidermiddlewares.referer.RefererMiddleware': 700,
        'scrapy.spidermiddlewares.urllength.UrlLengthMiddleware': 800,
        'scrapy.spidermiddlewares.depth.DepthMiddleware': 900,
"""
# from scrapy.spidermiddlewares.referer import RefererMiddleware
# Enable or disable spider middlewares
# See http://scrapy.readthedocs.org/en/latest/topics/spider-middleware.html
SPIDER_MIDDLEWARES = {
    # 'step8_king.middlewares.SpiderMiddleware': 543,
}


"""
22. Downloader middleware
    The DownMiddleware1 class is the one listed in section 7 (Middleware).

    Default downloader middlewares:
    {
        'scrapy.downloadermiddlewares.robotstxt.RobotsTxtMiddleware': 100,
        'scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware': 300,
        'scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware': 350,
        'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware': 400,
        'scrapy.downloadermiddlewares.retry.RetryMiddleware': 500,
        'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware': 550,
        'scrapy.downloadermiddlewares.redirect.MetaRefreshMiddleware': 580,
        'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware': 590,
        'scrapy.downloadermiddlewares.redirect.RedirectMiddleware': 600,
        'scrapy.downloadermiddlewares.cookies.CookiesMiddleware': 700,
        'scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware': 750,
        'scrapy.downloadermiddlewares.chunked.ChunkedTransferMiddleware': 830,
        'scrapy.downloadermiddlewares.stats.DownloaderStats': 850,
        'scrapy.downloadermiddlewares.httpcache.HttpCacheMiddleware': 900,
    }
"""
# from scrapy.downloadermiddlewares.httpauth import HttpAuthMiddleware
# Enable or disable downloader middlewares
# See http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html
# DOWNLOADER_MIDDLEWARES = {
#    'step8_king.middlewares.DownMiddleware1': 100,
#    'step8_king.middlewares.DownMiddleware2': 500,
# }
```
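The delay update quoted in item 17 is easy to try out in isolation. The function below restates those four lines as a pure function; `next_delay` and its parameter names are hypothetical, and the latencies are made-up sample values.

```python
def next_delay(current_delay, latency, target_concurrency, min_delay, max_delay):
    """Apply the AutoThrottle-style update from item 17 once."""
    target_delay = latency / target_concurrency
    # Move halfway from the previous delay towards the target
    new_delay = (current_delay + target_delay) / 2.0
    # Never go below the target delay when latency rises
    new_delay = max(target_delay, new_delay)
    # Clamp to the configured bounds
    return min(max(min_delay, new_delay), max_delay)


delay = 5.0  # plays the role of AUTOTHROTTLE_START_DELAY
history = []
for latency in (0.5, 0.5, 0.5, 4.0):
    delay = next_delay(delay, latency, target_concurrency=1.0,
                       min_delay=0.0, max_delay=10.0)
    history.append(delay)

print(history)
```

With steady low latency the delay decays gradually towards the target, while a single slow response raises it to the new target immediately.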

12. TinyScrapy

```python
# -*- coding: utf-8 -*-
import queue
import types

from twisted.internet import defer, reactor
# getPage is deprecated in newer Twisted releases; twisted.web.client.Agent is the usual replacement
from twisted.web.client import getPage


class Response(object):
    def __init__(self, body, request):
        self.body = body
        self.request = request
        self.url = request.url

    @property
    def text(self):
        return self.body.decode('utf-8')


class Request(object):
    def __init__(self, url, callback=None):
        self.url = url
        self.callback = callback


class Scheduler(object):
    def __init__(self, engine):
        self.q = queue.Queue()
        self.engine = engine

    def enqueue_request(self, request):
        self.q.put(request)

    def next_request(self):
        try:
            req = self.q.get(block=False)
        except queue.Empty:
            req = None
        return req

    def size(self):
        return self.q.qsize()


class ExecutionEngine(object):
    def __init__(self):
        self._closewait = None
        self.running = True
        self.start_requests = None
        self.scheduler = Scheduler(self)
        self.inprogress = set()

    def check_empty(self, response):
        if not self.running:
            self._closewait.callback(None)

    def _next_request(self):
        # Move all start requests into the scheduler
        while self.start_requests:
            try:
                request = next(self.start_requests)
            except StopIteration:
                self.start_requests = None
            else:
                self.scheduler.enqueue_request(request)

        # At most 5 concurrent downloads
        while len(self.inprogress) < 5 and self.scheduler.size() > 0:
            request = self.scheduler.next_request()
            if not request:
                break
            self.inprogress.add(request)
            d = getPage(bytes(request.url, encoding='utf-8'))
            d.addBoth(self._handle_downloader_output, request)
            d.addBoth(lambda x, req: self.inprogress.remove(req), request)
            d.addBoth(lambda x: self._next_request())

        # Nothing in flight and nothing queued: the crawl is finished
        if not len(self.inprogress) and not self.scheduler.size():
            self._closewait.callback(None)

    def _handle_downloader_output(self, body, request):
        """
        Build the response, run the callback, and enqueue every request
        that the callback yields
        :param body:
        :param request:
        :return:
        """
        response = Response(body, request)
        func = request.callback or self.spider.parse
        gen = func(response)
        if isinstance(gen, types.GeneratorType):
            for req in gen:
                self.scheduler.enqueue_request(req)

    @defer.inlineCallbacks
    def start(self):
        self._closewait = defer.Deferred()
        yield self._closewait

    @defer.inlineCallbacks
    def open_spider(self, spider, start_requests):
        self.start_requests = start_requests
        self.spider = spider
        yield None
        reactor.callLater(0, self._next_request)


class Crawler(object):
    def __init__(self, spidercls):
        self.spidercls = spidercls
        self.spider = None
        self.engine = None

    @defer.inlineCallbacks
    def crawl(self):
        self.engine = ExecutionEngine()
        self.spider = self.spidercls()
        start_requests = iter(self.spider.start_requests())
        yield self.engine.open_spider(self.spider, start_requests)
        yield self.engine.start()


class CrawlerProcess(object):
    def __init__(self):
        self._active = set()
        self.crawlers = set()

    def crawl(self, spidercls, *args, **kwargs):
        crawler = Crawler(spidercls)
        self.crawlers.add(crawler)
        d = crawler.crawl(*args, **kwargs)
        self._active.add(d)
        return d

    def start(self):
        # Stop the reactor once every crawler has finished
        d1 = defer.DeferredList(self._active)
        d1.addBoth(self._stop_reactor)
        reactor.run()

    def _stop_reactor(self, _=None):
        reactor.stop()


class Spider(object):
    def start_requests(self):
        for url in self.start_urls:
            yield Request(url)


class ChoutiSpider(Spider):
    name = "chouti"
    start_urls = [
        'http://dig.chouti.com/',
    ]

    def parse(self, response):
        print(response.text)


class CnblogsSpider(Spider):
    name = "cnblogs"
    start_urls = [
        'http://www.cnblogs.com/',
    ]

    def parse(self, response):
        print(response.text)


if __name__ == '__main__':
    spider_cls_list = [ChoutiSpider, CnblogsSpider]
    crawler_process = CrawlerProcess()
    for spider_cls in spider_cls_list:
        crawler_process.crawl(spider_cls)
    crawler_process.start()
```

For more documentation, see: http://scrapy-chs.readthedocs.io/zh_CN/latest/index.html
