MapReduce案例-流量统计

一、MapReduce案例-流量统计

1: 需求一: 统计求和

统计每个手机号的上行数据包总和,下行数据包总和,上行总流量之和,下行总流量之和 分析:以手机号码作为key值,上行流量,下行流量,上行总流量,下行总流量四个字段作为value值,然后以这个key,和value作为map阶段的输出,reduce阶段的输入

1.1: 自定义map的输出value对象FlowBean

package flowcount;

import org.apache.hadoop.io.Writable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

/**

* @author MoooJL

* @data 2020/8/28-20:26

*/

public class FlowBean implements Writable {

private Integer upFlow; //上行数据包数

private Integer downFlow; //下行数据包数

private Integer upCountFlow; //上行流量总和

private Integer downCountFlow;//下行流量总和

public Integer getUpFlow() {

return upFlow;

}

public void setUpFlow(Integer upFlow) {

this.upFlow = upFlow;

}

public Integer getDownFlow() {

return downFlow;

}

public void setDownFlow(Integer downFlow) {

this.downFlow = downFlow;

}

public Integer getUpCountFlow() {

return upCountFlow;

}

public void setUpCountFlow(Integer upCountFlow) {

this.upCountFlow = upCountFlow;

}

public Integer getDownCountFlow() {

return downCountFlow;

}

public void setDownCountFlow(Integer downCountFlow) {

this.downCountFlow = downCountFlow;

}

@Override

public String toString() {

return upFlow +

"\t" + downFlow +

"\t" + upCountFlow +

"\t" + downCountFlow;

}

//序列化方法

@Override

public void write(DataOutput out) throws IOException {

out.writeInt(upFlow);

out.writeInt(downFlow);

out.writeInt(upCountFlow);

out.writeInt(downCountFlow);

}

@Override

public void readFields(DataInput in) throws IOException {

this.upFlow = in.readInt();

this.downFlow = in.readInt();

this.upCountFlow = in.readInt();

this.downCountFlow = in.readInt();

}

}

1.2:定义FlowMapper类

package flowcount;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.yarn.webapp.hamlet.Hamlet;

import java.io.IOException;

/**

* @author MoooJL

* @data 2020/8/28-20:31

*/

public class FlowCountMapper extends Mapper<LongWritable, Text,Text,FlowBean> {

/*

将K1和V1转为K2和V2:

K1 V1

0 1360021750219 128 1177 16852 200

------------------------------

K2 V2

13600217502 FlowBean(19 128 1177 16852)

*/

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

//1:拆分行文本数据,得到手机号--->K2

String[] split = value.toString().split("\t");

String phoneNum = split[1];

//2:创建FlowBean对象,并从行文本数据拆分出流量的四个四段,并将四个流量字段的值赋给FlowBean对象

FlowBean flowBean = new FlowBean();

flowBean.setUpFlow(Integer.parseInt(split[6]));

flowBean.setDownFlow(Integer.parseInt(split[7]));

flowBean.setUpCountFlow(Integer.parseInt(split[8]));

flowBean.setDownCountFlow(Integer.parseInt(split[9]));

//3:将K2和V2写入上下文中

context.write(new Text(phoneNum), flowBean);

}

}

1.3:定义FlowReducer类

package flowcount;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

/**

* @author MoooJL

* @data 2020/8/28-21:54

*/

public class FlowCountReducer extends Reducer<Text,FlowBean,Text,FlowBean> {

@Override

protected void reduce(Text key, Iterable<FlowBean> values, Context context) throws IOException, InterruptedException {

//1:遍历集合,并将集合中的对应的四个字段累计

Integer upFlow = 0; //上行数据包数

Integer downFlow = 0; //下行数据包数

Integer upCountFlow = 0; //上行流量总和

Integer downCountFlow = 0;//下行流量总和

for (FlowBean value : values) {

upFlow += value.getUpFlow();

downFlow += value.getDownFlow();

upCountFlow += value.getUpCountFlow();

downCountFlow += value.getDownCountFlow();

}

//2:创建FlowBean对象,并给对象赋值 V3

FlowBean flowBean = new FlowBean();

flowBean.setUpFlow(upFlow);

flowBean.setDownFlow(downFlow);

flowBean.setUpCountFlow(upCountFlow);

flowBean.setDownCountFlow(downCountFlow);

//3:将K3和V3下入上下文中

context.write(key, flowBean);

}

}

1.4:程序main函数入口FlowMain

package flowcount;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

/**

* @author MoooJL

* @data 2020/8/26-15:24

*/

public class jobMain extends Configured implements Tool {

//该方法用于指定一个job任务

@Override

public int run(String[] args) throws Exception {

//1、创建一个job对象

Job job = Job.getInstance(super.getConf(), "mapreduce_flowcount");

//2、配置job任务的8个对象

//第一步 指定文件的读取方式和路径

job.setInputFormatClass(TextInputFormat.class);

/*

如果打包运行出错 加配置

job.setJarByClass(jobMain.class);

*/

TextInputFormat.addInputPath(job,new Path("file:///D:\\input\\flowcount"));

//第二步 指定map阶段的处理方式和数据类型

job.setMapperClass(FlowCountMapper.class);

//设置map阶段k2的类型

job.setMapOutputKeyClass(Text.class);

//设置map阶段v2的类型

job.setMapOutputValueClass(FlowBean.class);

//第三(分区) 四(排序) 第五步(规约) 六(分组) 步 采用默认方式

//第七步 指定reduce阶段的处理方式和数据类型

job.setReducerClass(FlowCountReducer.class);

//设置k3 v3类型

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(FlowBean.class);

//第八步 设置输出类型

job.setOutputFormatClass(TextOutputFormat.class);

//本地运行模式

TextOutputFormat.setOutputPath(job,new Path("file:///D:\\output\\flowcount"));

//等待任务结束

boolean b = job.waitForCompletion(true);

return b ? 0:1;

}

public static void main(String[] args) throws Exception {

Configuration configuration=new Configuration();

//启动job任务

int run = ToolRunner.run(configuration, new jobMain(), args);

System.exit(run);

}

}

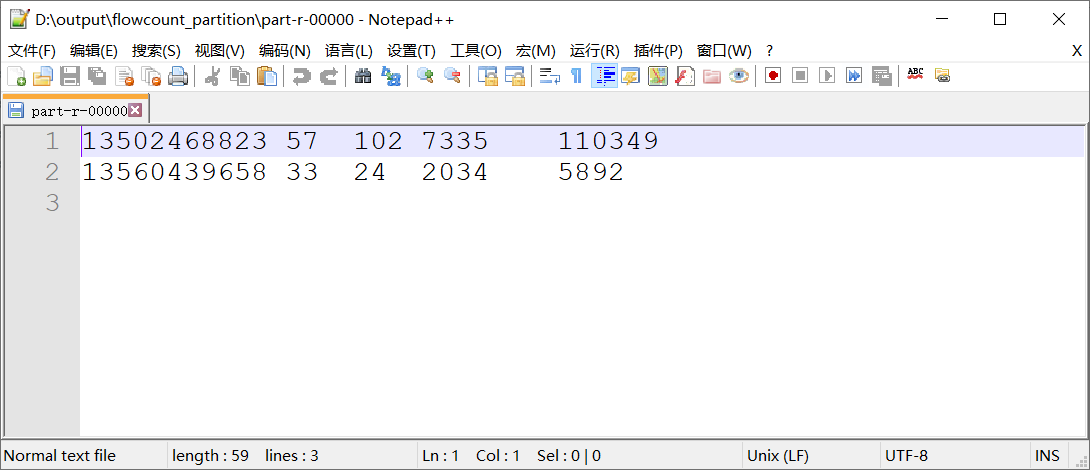

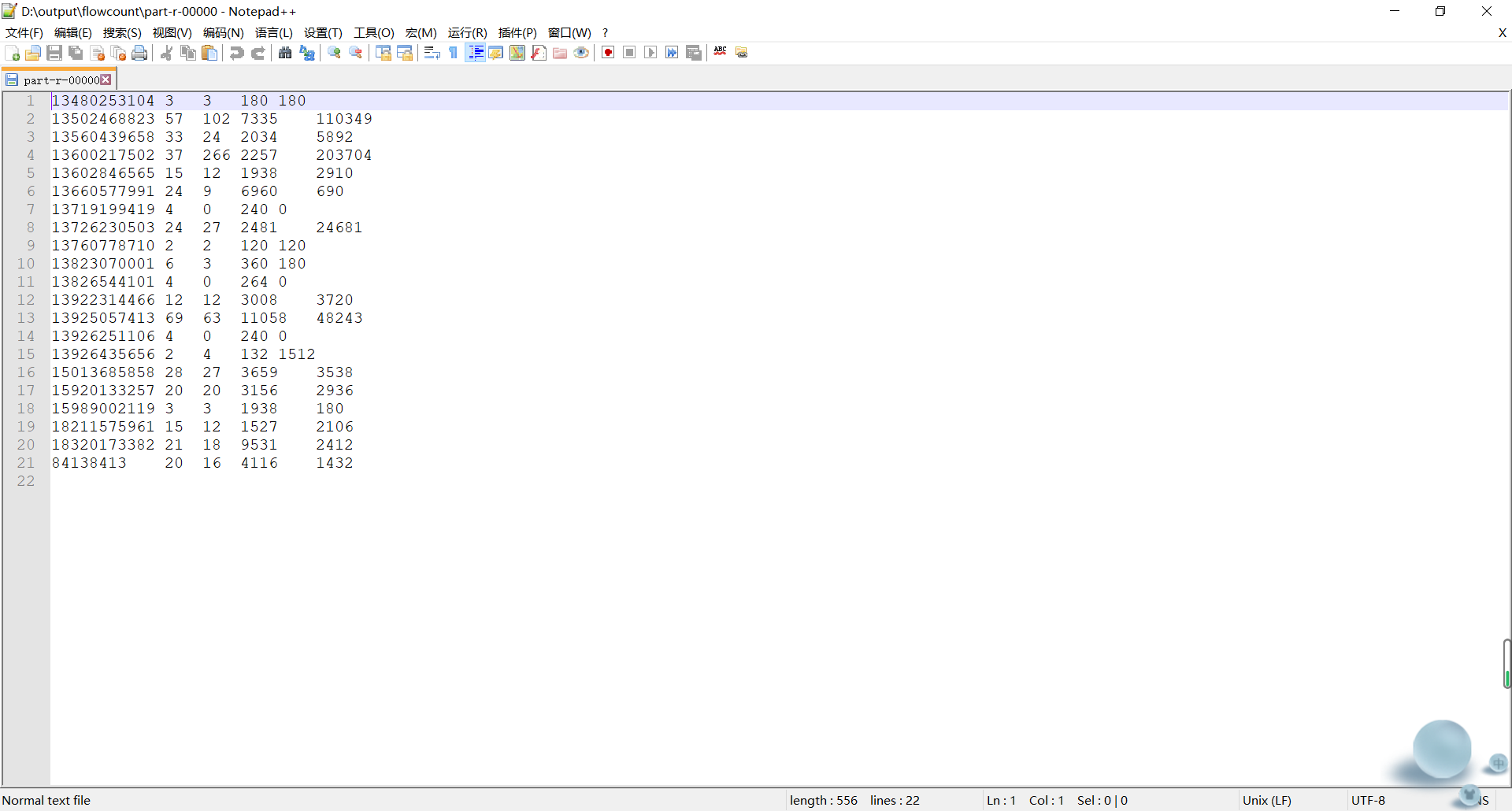

1.5:运行截图

2:需求二: 上行流量倒序排序(递减排序)

分析,以需求一的输出数据作为排序的输入数据,自定义FlowBean,以FlowBean为map输出的key,以手机号作为Map输出的value,因为MapReduce程序会对Map阶段输出的key进行排序

2.1: 定义FlowBean实现WritableComparable实现比较排序

Java 的 compareTo 方法说明:

- compareTo 方法用于将当前对象与方法的参数进行比较。

- 如果指定的数与参数相等返回 0。

- 如果指定的数小于参数返回 -1。

- 如果指定的数大于参数返回 1。

例如:o1.compareTo(o2); 返回正数的话,当前对象(调用 compareTo 方法的对象 o1)要排在比较对象(compareTo 传参对象 o2)后面,返回负数的话,放在前面

package flowcount.sort;

import org.apache.hadoop.io.Writable;

import org.apache.hadoop.io.WritableComparable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

/**

* @author MoooJL

* @data 2020/8/28-20:26

*/

public class FlowBean implements WritableComparable<FlowBean> {

private Integer upFlow; //上行数据包数

private Integer downFlow; //下行数据包数

private Integer upCountFlow; //上行流量总和

private Integer downCountFlow;//下行流量总和

public Integer getUpFlow() {

return upFlow;

}

public void setUpFlow(Integer upFlow) {

this.upFlow = upFlow;

}

public Integer getDownFlow() {

return downFlow;

}

public void setDownFlow(Integer downFlow) {

this.downFlow = downFlow;

}

public Integer getUpCountFlow() {

return upCountFlow;

}

public void setUpCountFlow(Integer upCountFlow) {

this.upCountFlow = upCountFlow;

}

public Integer getDownCountFlow() {

return downCountFlow;

}

public void setDownCountFlow(Integer downCountFlow) {

this.downCountFlow = downCountFlow;

}

@Override

public String toString() {

return upFlow +

"\t" + downFlow +

"\t" + upCountFlow +

"\t" + downCountFlow;

}

//序列化方法

@Override

public void write(DataOutput out) throws IOException {

out.writeInt(upFlow);

out.writeInt(downFlow);

out.writeInt(upCountFlow);

out.writeInt(downCountFlow);

}

@Override

public void readFields(DataInput in) throws IOException {

this.upFlow = in.readInt();

this.downFlow = in.readInt();

this.upCountFlow = in.readInt();

this.downCountFlow = in.readInt();

}

//指定排序规则

@Override

public int compareTo(FlowBean flowBean) {

// return this.upFlow.compareTo(flowBean.getUpFlow()) * -1;

return flowBean.upFlow - this.upFlow ;

}

}

2.2:定义FlowMapper

package flowcount.sort;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

/**

* @author MoooJL

* @data 2020/8/29-0:04

*/

public class FlowSortMapper extends Mapper<LongWritable, Text,FlowBean,Text> {

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

//1:拆分行文本数据(V1),得到四个流量字段,并封装FlowBean对象---->K2

String[] split = value.toString().split("\t");

FlowBean flowBean = new FlowBean();

flowBean.setUpFlow(Integer.parseInt(split[1]));

flowBean.setDownFlow(Integer.parseInt(split[2]));

flowBean.setUpCountFlow(Integer.parseInt(split[3]));

flowBean.setDownCountFlow(Integer.parseInt(split[4]));

//2:通过行文本数据,得到手机号--->V2

String phoneNum = split[0];

//3:将K2和V2下入上下文中

context.write(flowBean, new Text(phoneNum));

}

}

2.3:定义FlowReducer

package flowcount.sort;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

/**

* @author MoooJL

* @data 2020/8/29-0:10

*/

public class FlowSortReducer extends Reducer<FlowBean, Text,Text,FlowBean> {

@Override

protected void reduce(FlowBean key, Iterable<Text> values, Context context) throws IOException, InterruptedException {

//1:遍历集合,取出 K3,并将K3和V3写入上下文中

for (Text value : values) {

context.write(value, key);

}

}

}

2.4:程序main函数入口

package flowcount.sort;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

import sort.SortBean;

/**

* @author MoooJL

* @data 2020/8/26-15:24

*/

public class jobMain extends Configured implements Tool {

//该方法用于指定一个job任务

@Override

public int run(String[] args) throws Exception {

//1、创建一个job对象

Job job = Job.getInstance(super.getConf(), "mapreduce_flowcount_cort");

//2、配置job任务的8个对象

//第一步 指定文件的读取方式和路径

job.setInputFormatClass(TextInputFormat.class);

/*

如果打包运行出错 加配置

job.setJarByClass(jobMain.class);

*/

TextInputFormat.addInputPath(job,new Path("file:///D:\\output\\flowcount"));

//第二步 指定map阶段的处理方式和数据类型

job.setMapperClass(FlowSortMapper.class);

//设置map阶段k2的类型

job.setMapOutputKeyClass(FlowBean.class);

//设置map阶段v2的类型

job.setMapOutputValueClass(Text.class);

//第三(分区) 四(排序) 第五步(规约) 六(分组) 步 采用默认方式

//第七步 指定reduce阶段的处理方式和数据类型

job.setReducerClass(FlowSortReducer.class);

//设置k3 v3类型

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(SortBean.class);

//第八步 设置输出类型

job.setOutputFormatClass(TextOutputFormat.class);

//本地运行模式

TextOutputFormat.setOutputPath(job,new Path("file:///D:\\output\\flowcount_sort"));

//等待任务结束

boolean b = job.waitForCompletion(true);

return b ? 0:1;

}

public static void main(String[] args) throws Exception {

Configuration configuration=new Configuration();

//启动job任务

int run = ToolRunner.run(configuration, new jobMain(), args);

System.exit(run);

}

}

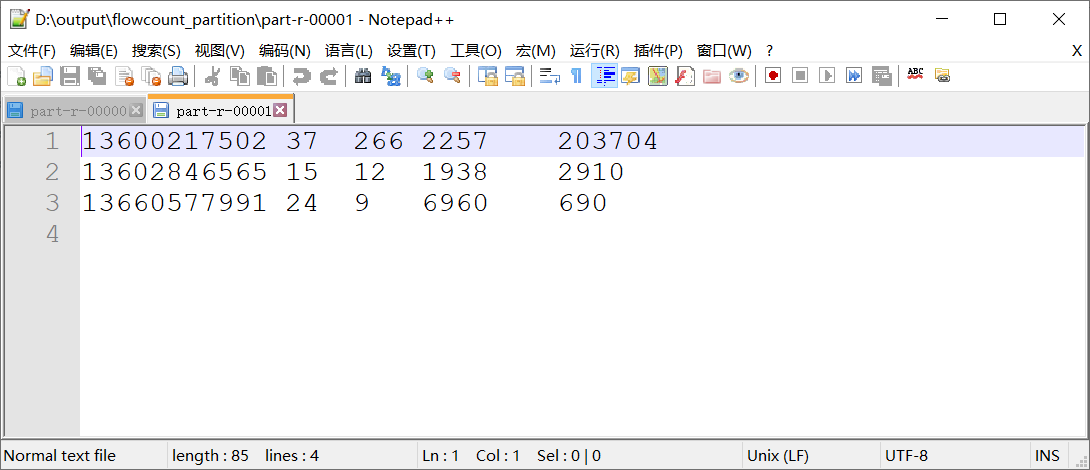

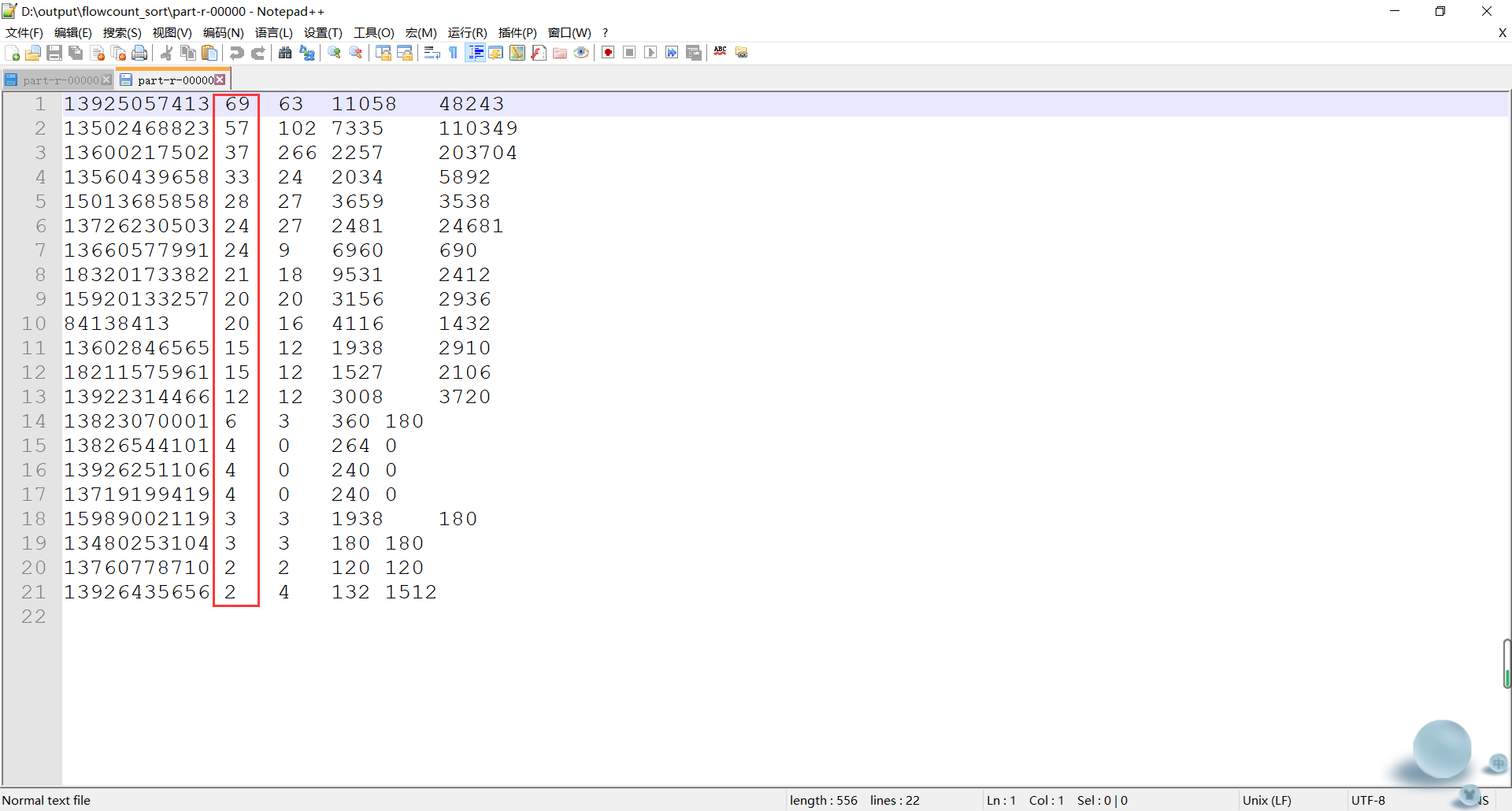

2.5:运行截图

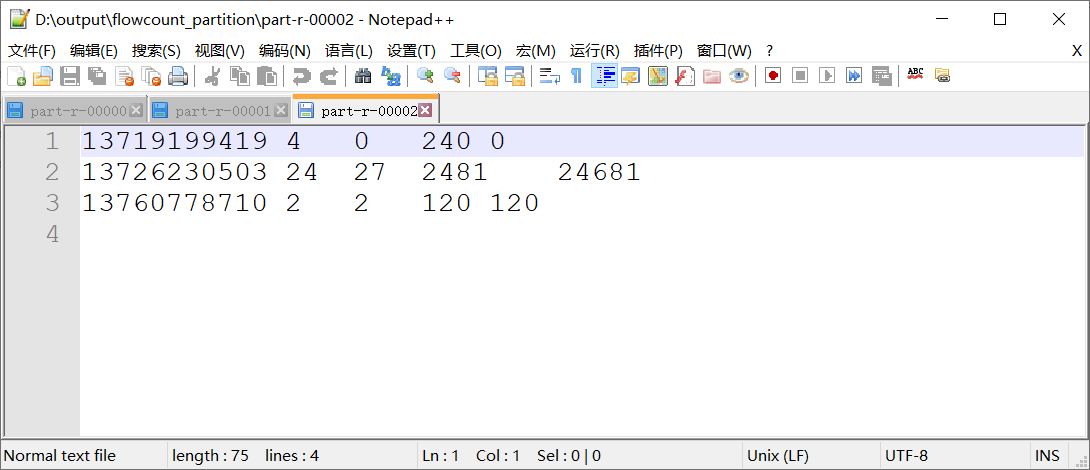

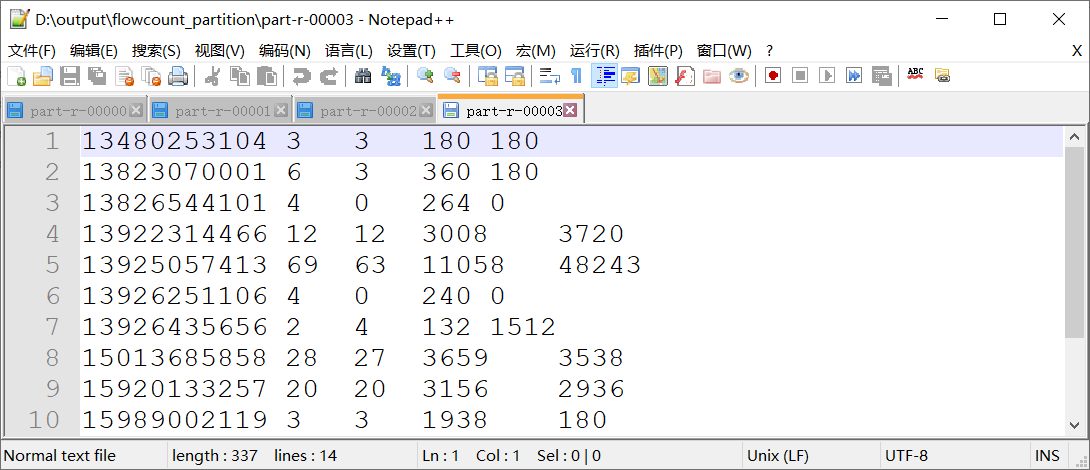

3:需求三: 手机号码分区

在需求一的基础上,继续完善,将不同的手机号分到不同的数据文件的当中去,需要自定义分区来实现,这里我们自定义来模拟分区,将以下数字开头的手机号进行分开

135 开头数据到一个分区文件

136 开头数据到一个分区文件

137 开头数据到一个分区文件

其他分区

3.1:自定义分区

package flowcount.partition;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Partitioner;

/**

* @author MoooJL

* @data 2020/8/29-0:28

*/

public class FlowCountPartition extends Partitioner<Text,FlowBean> {

/*

该方法用来指定分区的规则:

135 开头数据到一个分区文件

136 开头数据到一个分区文件

137 开头数据到一个分区文件

其他分区

参数:

text : K2 手机号

flowBean: V2

i : ReduceTask的个数

*/

@Override

public int getPartition(Text text, FlowBean flowBean, int i) {

//1:获取手机号

String phoneNum = text.toString();

//2:判断手机号以什么开头,返回对应的分区编号(0-3)

if(phoneNum.startsWith("135")){

return 0;

}else if(phoneNum.startsWith("136")){

return 1;

}else if(phoneNum.startsWith("137")){

return 2;

}else{

return 3;

}

}

}

3.2:作业运行设置

job.setPartitionerClass(FlowPartition.class);

job.setNumReduceTasks(4);

3.3:运行结果