Crawler: Lagou (parsing Ajax)

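Lagou's anti-crawler measures are fairly strict. The request headers need several extra fields (here Cookie, Referer, and Origin in addition to User-Agent) so that the site does not identify the request as coming from a crawler.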

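Find the URL the page actually requests for its data. It returns a JSON string, so parsing that JSON is all that is needed. Note that the request must be sent as a POST with form data.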
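As a rough sketch of the parsing step, the helper below pulls the position IDs out of the decoded JSON. The nesting `content` / `positionResult` / `result` and the `positionId` key are taken from the script in this post; the function name `extract_position_ids`, the sample payloads, and the fallback to an empty list when a key is missing are my own assumptions, not something the site documents.

```python
def extract_position_ids(result):
    """Return the positionId values from a decoded positionAjax.json response."""
    # Walk the nesting one level at a time so that a response without
    # these keys yields an empty list instead of raising KeyError.
    content = result.get('content') or {}
    position_result = content.get('positionResult') or {}
    positions = position_result.get('result') or []
    return [p['positionId'] for p in positions if 'positionId' in p]


# Made-up payloads: one shaped like the response the script expects,
# one standing in for a response that carries no job data.
sample = {'content': {'positionResult': {'result': [{'positionId': 101},
                                                    {'positionId': 102}]}}}
blocked = {'success': False, 'msg': 'hypothetical error payload'}

print(extract_position_ids(sample))
print(extract_position_ids(blocked))
```

Returning an empty list for an unexpected payload lets the paging loop continue instead of crashing, at the cost of hiding the failure; logging the payload in that case may be worthwhile.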

Paging is controlled by changing the value of pn in data.
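A minimal sketch of the paging idea, assuming the form fields `first`, `pn`, and `kd` behave as they do in the script in this post. The helper names `build_form_data` and `iter_form_data` are hypothetical. Each page gets its own dict, so no shared state is modified between requests.

```python
def build_form_data(keyword, page_num):
    """Build the POST form for one page of search results."""
    # 'pn' is the page number; 'kd' is the search keyword.
    return {'first': 'false', 'pn': page_num, 'kd': keyword}


def iter_form_data(keyword, first_page, last_page):
    """Yield one form dict per page, from first_page to last_page inclusive."""
    for page_num in range(first_page, last_page + 1):
        yield build_form_data(keyword, page_num)


forms = list(iter_form_data('爬虫', 1, 3))
print([form['pn'] for form in forms])
```

Each yielded dict can be passed as the `data` argument of the POST request.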

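job_name comes back as a list, for example ['JAVA高级工程师、爬虫工程师'], but I only want the string inside it. When I use job_name[0], an index error (IndexError) is raised partway through the crawl. I don't know why; I went over everything and found no problem, so in the end I left the list unprocessed.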
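One possible explanation, which I have not verified against the site: lxml's `xpath()` returns an empty list when no node matches, so `job_name[0]` raises IndexError on any detail page that lacks the `job-name` element (for instance, if the site serves a different page to a request it suspects is a crawler). A minimal guard, using a hypothetical helper named `first_or_none`:

```python
def first_or_none(values):
    """Return the first item of a list, or None if the list is empty."""
    return values[0] if values else None


# A normal xpath() result versus the empty list returned when nothing matches.
print(first_or_none(['JAVA高级工程师、爬虫工程师']))
print(first_or_none([]))
```

With this guard, a missing title shows up as None in the output, which also makes it easy to count how many pages did not return the expected content.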

```python
import requests
from lxml import etree
import time
import re

# Headers copied from a logged-in browser session; the Cookie is tied to that session.
headers = {
    'User-Agent': 'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/68.0.3440.75 Safari/537.36',
    'Cookie': '_ga=GA1.2.438907938.1533109215; user_trace_token=20180801154015-21630e48-955e-11e8-ac93-525400f775ce; LGUID=20180801154015-216316d6-955e-11e8-ac93-525400f775ce; WEBTJ-ID=08122018%2C193417-1652dea595ec0a-0ca5deb5d1dcb9-172f1503-2073600-1652dea595f51f; Hm_lvt_4233e74dff0ae5bd0a3d81c6ccf756e6=1533109214,1534073658; LGSID=20180812193418-a61634a5-9e23-11e8-bae9-525400f775ce; PRE_UTM=m_cf_cpt_baidu_pc; PRE_HOST=www.baidu.com; PRE_SITE=https%3A%2F%2Fwww.baidu.com%2Fs%3Fie%3Dutf-8%26f%3D8%26rsv_bp%3D0%26rsv_idx%3D1%26tn%3Dbaidu%26wd%3Dlagou%2520wang%2520%26rsv_pq%3D8f8c8d4500066afd%26rsv_t%3Deaa21Vp5dXD8YMkjoybb0H4UW4n0ReZfxSBiBne14tXfDsN5XKFydx2jVnA%26rqlang%3Dcn%26rsv_enter%3D1%26rsv_sug3%3D12%26rsv_sug1%3D2%26rsv_sug7%3D101%26rsv_sug2%3D0%26inputT%3D1970%26rsv_sug4%3D3064; PRE_LAND=https%3A%2F%2Fwww.lagou.com%2Flp%2Fhtml%2Fcommon.html%3Futm_source%3Dm_cf_cpt_baidu_pc; _gid=GA1.2.849726101.1534073658; X_HTTP_TOKEN=0d3a98743216c83f14e092a5154d2327; LG_LOGIN_USER_ID=5f3d6b41f24b2423bae0a956753632482a2ecabcb36ef97074ff9fdec683ae51; _putrc=3A0548631F844F3C123F89F2B170EADC; JSESSIONID=ABAAABAAAIAACBI302EB6DF4BD54C909C01751F65D4311D; login=true; unick=%E5%88%98%E4%BA%9A%E6%96%8C; showExpriedIndex=1; showExpriedCompanyHome=1; showExpriedMyPublish=1; hasDeliver=0; gate_login_token=c6678499c0aaeb807c1e37249f90d483317ec272762e7060fa18175f49d9c806; index_location_city=%E5%85%A8%E5%9B%BD; TG-TRACK-CODE=search_code; _gat=1; Hm_lpvt_4233e74dff0ae5bd0a3d81c6ccf756e6=1534074635; LGRID=20180812195035-ec87e3ce-9e25-11e8-a37b-5254005c3644; SEARCH_ID=85704a0c1aaf43d4b9f07489311f0707',
    'Referer': 'https://www.lagou.com/jobs/list_%E7%88%AC%E8%99%AB?city=%E5%85%A8%E5%9B%BD&cl=false&fromSearch=true&labelWords=sug&suginput=pachong',
    'Origin': 'https://www.lagou.com'
}

# POST form data: 'pn' is the page number, 'kd' is the search keyword ("crawler").
data = {
    'first': 'false',
    'pn': 1,
    'kd': '爬虫'
}


def get_list_page(page_num):
    # The Ajax endpoint that returns the job list as JSON.
    url = 'https://www.lagou.com/jobs/positionAjax.json?needAddtionalResult=false'
    data['pn'] = page_num
    response = requests.post(url, headers=headers, data=data)
    result = response.json()
    positions = result['content']['positionResult']['result']
    for position in positions:
        position_id = position['positionId']
        position_url = 'https://www.lagou.com/jobs/%s.html' % position_id

        parse_detail_page(position_url)
        # break


def parse_detail_page(url):
    job_information = {}
    response = requests.get(url, headers=headers)
    # response.raise_for_status()
    html = response.text
    html_element = etree.HTML(html)
    # xpath() returns a list; job_name is stored as a list (see the note on job_name[0]).
    job_name = html_element.xpath('//div[@class="job-name"]/@title')
    job_description = html_element.xpath('//dd[@class="job_bt"]//p//text()')
    # Strip non-breaking spaces from the description text.
    job_description = [text.replace('\xa0', '') for text in job_description]
    job_address = html_element.xpath('//div[@class="work_addr"]/a/text()')
    job_salary = html_element.xpath('//span[@class="salary"]/text()')

    # Clean up the address: drop the "查看地图" (view map) link text and empty strings.
    job_address = [text.replace('查看地图', '') for text in job_address]
    job_address = [text for text in job_address if text != '']

    # job_address_detail = html_element.xpath('//div[@class="work_addr"]/a[-2]/text()')
    # print(job_address_detail)
    job_information['job_name'] = job_name
    job_information['job_description'] = job_description
    job_information['job_address'] = job_address
    job_information['job_salary'] = job_salary
    print(job_information)


def main():
    for i in range(1, 31):
        get_list_page(i)
        # Separator line after each page ("第%s页" means "page %s").
        print('=' * 30 + '第%s页' % i + '=' * 30)


if __name__ == '__main__':
    main()
```

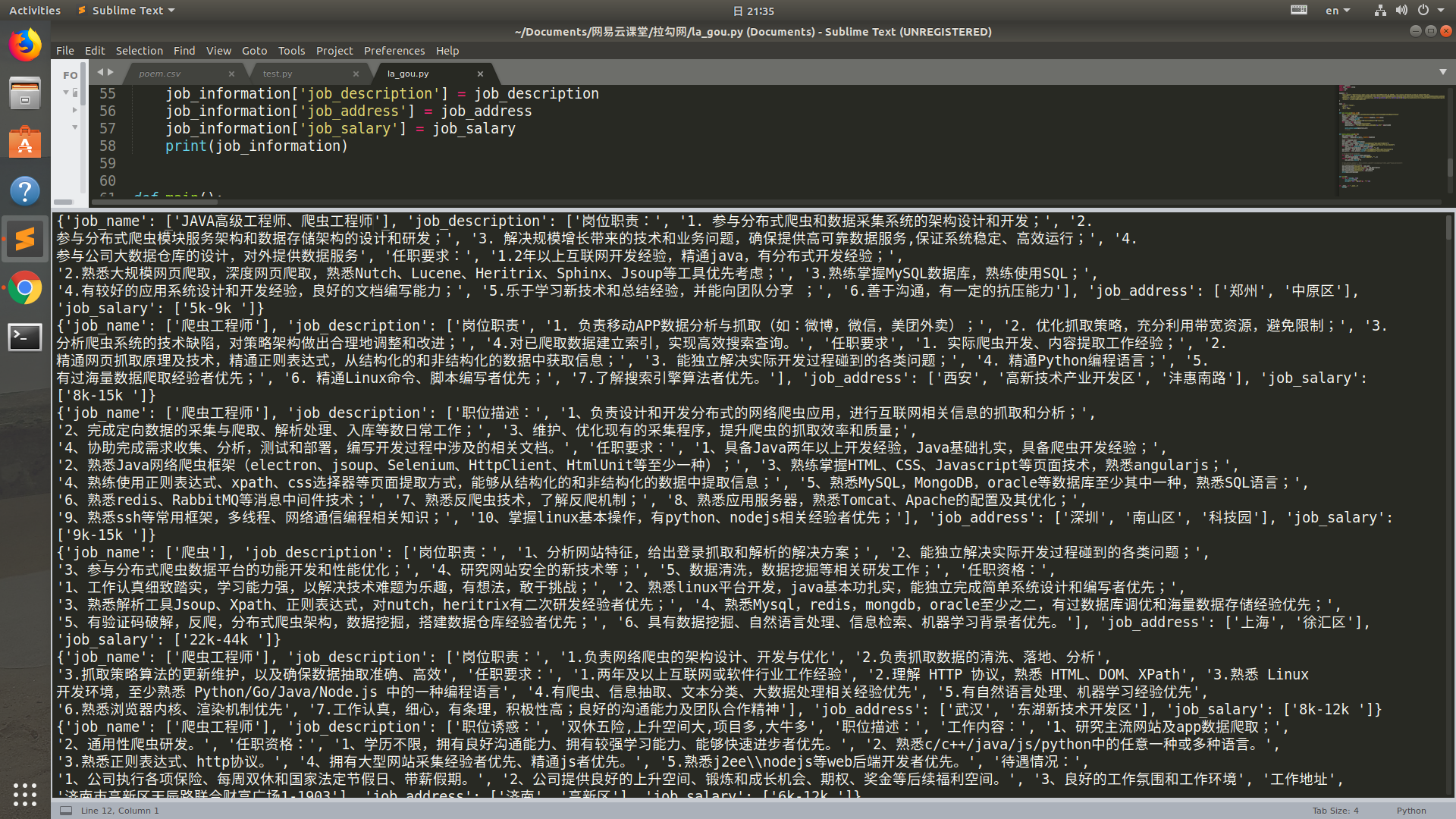

Output of the run