Chapter 11: Pod Data Persistence

https://kubernetes.io/docs/concepts/storage/volumes/

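- Volumes in Kubernetes give containers the ability to mount external storage.
- A Pod can use a Volume only after it defines both the volume source (spec.volumes) and the mount point (spec.containers[].volumeMounts).

Common volume types:

- Local volumes: hostPath, emptyDir
- Network volumes: nfs, ceph (cephfs, rbd), glusterfs
- Public cloud: aws, azure
- Kubernetes resources: downwardAPI, configMap, secret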
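The two pieces a Pod needs (a volume source and a mount point) can be sketched with a configMap volume, one of the Kubernetes-resource types listed above. This is a minimal illustration, not a manifest from this chapter: the names demo-config, volume-demo, and the file app.properties are assumptions made up for the example.

```yaml
# Hypothetical ConfigMap, used only for this illustration.
apiVersion: v1
kind: ConfigMap
metadata:
  name: demo-config
data:
  app.properties: |
    greeting=hello
---
apiVersion: v1
kind: Pod
metadata:
  name: volume-demo              # hypothetical Pod name
spec:
  containers:
  - name: busybox
    image: busybox
    # Print the projected file, then keep the container alive.
    command: ["sh", "-c", "cat /etc/demo/app.properties; sleep 3600"]
    volumeMounts:
    - name: config               # must match a name under spec.volumes
      mountPath: /etc/demo       # mount point inside the container
  volumes:
  - name: config                 # volume source
    configMap:
      name: demo-config          # each key of the ConfigMap becomes a file
```

The link between the two halves is only the volume name: volumeMounts[].name has to match an entry in spec.volumes.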

3.1 emptyDir

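emptyDir is comparable to a volume in Docker.

It creates an empty volume and mounts it into the containers of a Pod. When the Pod is deleted, the volume is deleted as well.

Use case: sharing data between the containers of a Pod.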

    apiVersion: v1
    kind: Pod
    metadata:
      name: my-pod
    spec:
      containers:
      - name: write
        image: centos
        command: ["bash","-c","for i in {1..100};do echo $i >> /data/hello;sleep 1;done"]
        volumeMounts:
        - name: data
          mountPath: /data
      - name: read
        image: centos
        command: ["bash","-c","tail -f /data/hello"]
        volumeMounts:
        - name: data
          mountPath: /data
      volumes:
      - name: data
        emptyDir: {}

# View the logs of the read container in the Pod

# kubectl logs my-pod -c read

# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE

my-pod 2/2 Running 5 15m 10.244.2.11 k8s-node2

# Inspect the volume directory (the emptyDir volume lives on the node where the Pod runs)

# Use docker ps to find the container name and the corresponding POD ID

# /var/lib/kubelet/pods/<POD ID>/volumes/kubernetes.io~empty-dir/data

[root@k8s-node2 ~]# docker ps | grep "my-pod"

[root@k8s-node2 ~]# cat /var/lib/kubelet/pods/7c521ebc-dec6-4487-a698-af4cc006cf57/volumes/kubernetes.io~empty-dir/data/hello
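As a variation on the example in this section, emptyDir also accepts the optional fields medium and sizeLimit. The sketch below assumes you want the scratch space backed by memory (tmpfs) rather than by the node's disk. The Pod name and the 64Mi limit are illustrative values, not taken from this chapter.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: emptydir-memory-demo     # hypothetical Pod name
spec:
  containers:
  - name: busybox
    image: busybox
    # Show the mounted filesystem, then keep the container alive.
    command: ["sh", "-c", "df -h /cache; sleep 3600"]
    volumeMounts:
    - name: cache
      mountPath: /cache
  volumes:
  - name: cache
    emptyDir:
      medium: Memory             # back the volume with tmpfs instead of node disk
      sizeLimit: 64Mi            # illustrative limit, chosen for this sketch
```

A memory-backed emptyDir is still tied to the Pod's lifetime, so it suits caches and scratch data rather than anything that must survive a restart of the Pod.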

3.2 hostPath

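hostPath is comparable to a bind mount in Docker. Typical users are log-collection agents and monitoring agents, for example an agent that reads the host's /proc.

It mounts a file or directory from the Node's filesystem into the containers of a Pod. The directory to be mounted must exist on every node where the Pod may run. Deleting the Pod does not delete the mounted data.

Use case: containers in a Pod need to access files on the host.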

    apiVersion: v1
    kind: Pod
    metadata:
      name: my-pod
    spec:
      containers:
      - name: busybox
        image: busybox
        args:
        - /bin/sh
        - -c
        - sleep 36000
        volumeMounts:
        - name: data
          mountPath: /data
      volumes:
      - name: data
        hostPath:
          path: /tmp
          type: Directory

Verification: the contents of /data inside the Pod match the contents of /tmp on the node where the Pod is running:

# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE

my-pod 1/1 Running 0 17s 10.244.2.13 k8s-node2

# kubectl exec -it my-pod -- sh

/ # ls /data/

[root@k8s-node2 ~]# ls /tmp/
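The monitoring-agent case mentioned at the start of this section can be sketched as follows: the host's /proc is mounted read-only into a container so the agent can read node-level metrics without being able to modify anything. This is an assumption-laden illustration, not a real agent: the Pod name, the /host/proc mount path, and the use of busybox are all made up for the example.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: host-proc-reader         # hypothetical Pod name
spec:
  containers:
  - name: busybox
    image: busybox
    # Read the node's load average through the mounted /proc, then idle.
    command: ["sh", "-c", "cat /host/proc/loadavg; sleep 3600"]
    volumeMounts:
    - name: proc
      mountPath: /host/proc      # illustrative path inside the container
      readOnly: true             # the container cannot write to the host path
  volumes:
  - name: proc
    hostPath:
      path: /proc
      type: Directory            # the path must already exist as a directory
```

Mounting host paths read-only wherever possible limits what a compromised container could do to the node.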

3.3 Network storage

Install NFS on node 172.16.1.72:

# yum install nfs-utils -y

# mkdir -p /ifs/kubernetes/

# vim /etc/exports

/ifs/kubernetes *(rw,no_root_squash,sync)

# systemctl start rpcbind.service

# systemctl enable rpcbind.service

# systemctl start nfs-server.service

# systemctl enable nfs-server.service

# rpcinfo -p

# Mount test

# mkdir -p /mntdata/

# mount -t nfs 172.16.1.72:/ifs/kubernetes /mntdata/

# df -hT

172.16.1.72:/ifs/kubernetes nfs4 58G 3.0G 55G 6% /mntdata

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: web
    spec:
      replicas: 3
      selector:
        matchLabels:
          app: web
      template:
        metadata:
          labels:
            app: web
        spec:
          containers:
          - name: nginx
            image: nginx
            volumeMounts:
            - name: wwwroot
              mountPath: /usr/share/nginx/html
          volumes:
          - name: wwwroot
            nfs:
              server: 172.16.1.72
              path: /ifs/kubernetes

Create an index.html home page in the NFS shared directory (/ifs/kubernetes) and access it:

# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE

web-856c696878-678lq 1/1 Running 0 6m19s 10.244.2.14 k8s-node2

web-856c696878-lbq4z 1/1 Running 0 6m19s 10.244.2.15 k8s-node2

web-856c696878-wvwn8 1/1 Running 0 6m19s 10.244.1.8 k8s-node1

# kubectl expose deployment web --port=80 --target-port=80 --type=NodePort

# kubectl get svc/web

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

web NodePort 10.96.174.135 <none> 80:30449/TCP 31s

[root@k8s-node2 ~]# echo "hello" >/ifs/kubernetes/index.html

# Access nginx

http://NodeIP:30449/

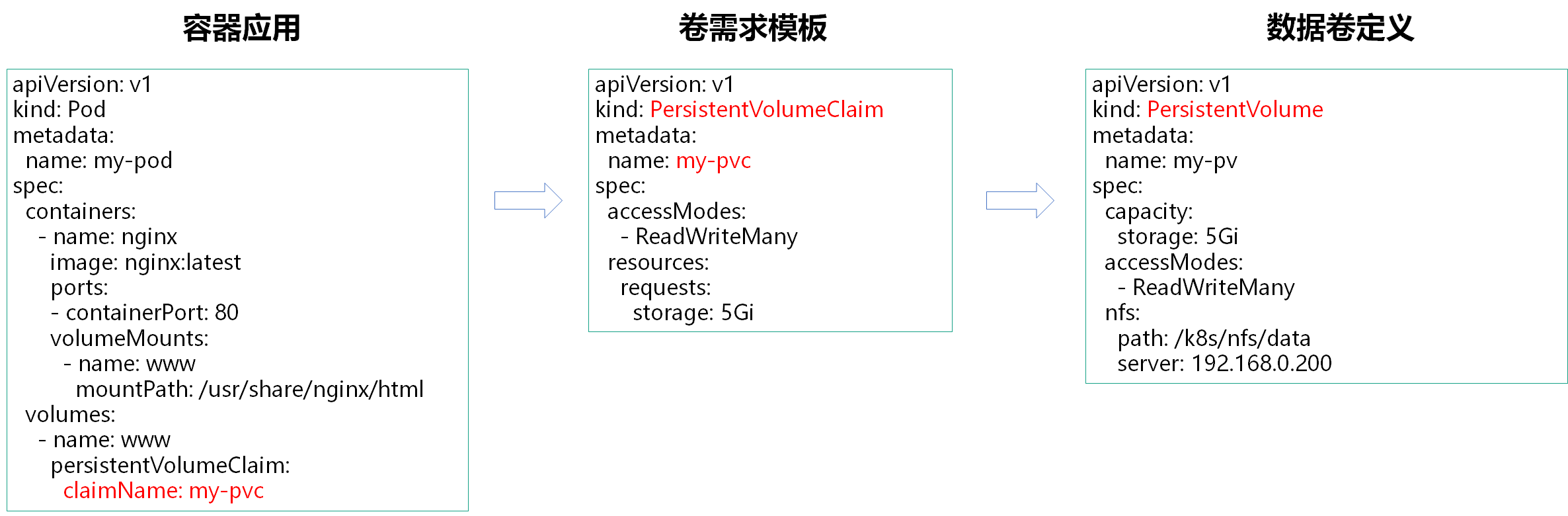

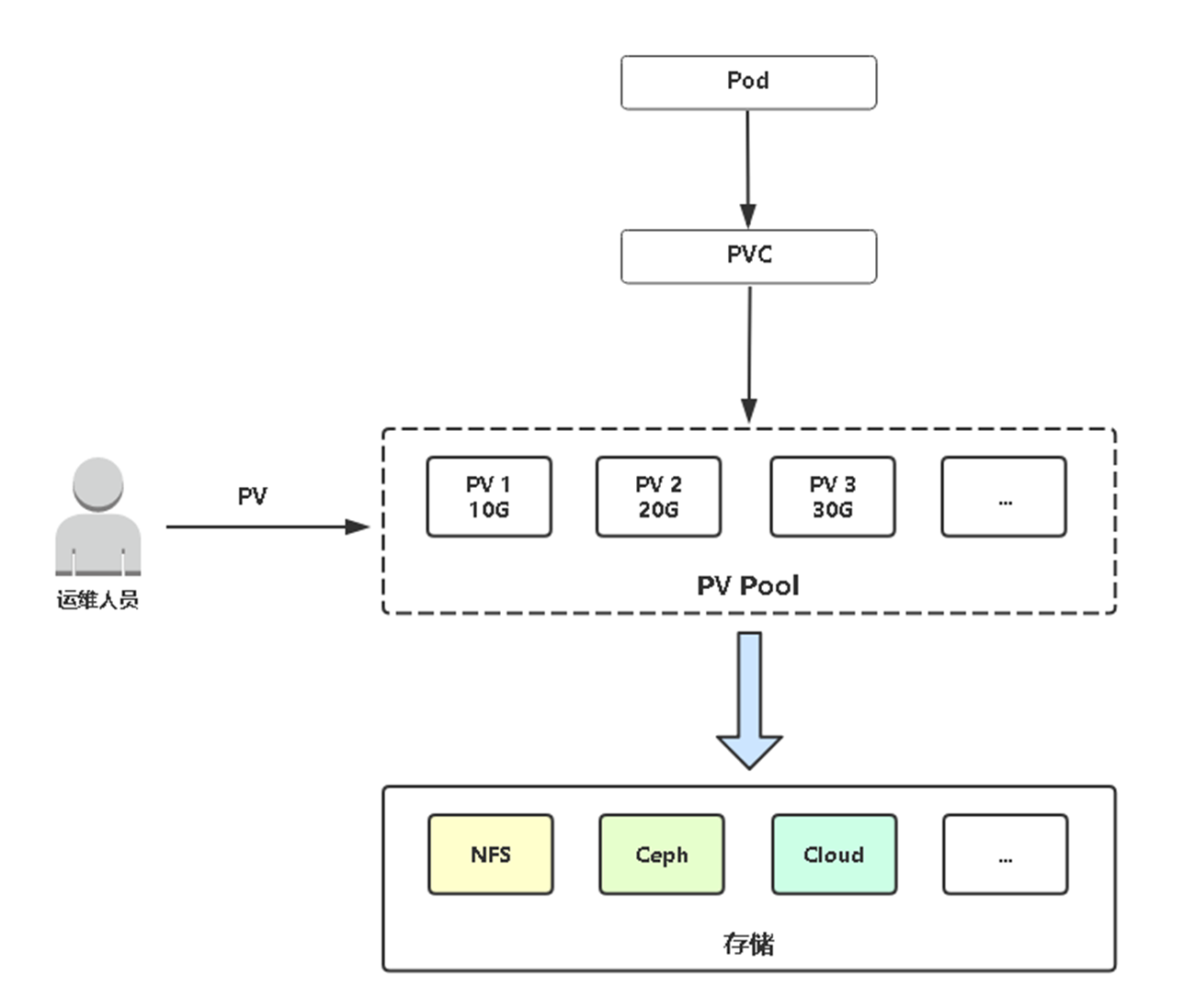
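The kubectl expose command used in this section can also be written as a manifest, which is easier to keep under version control. The sketch below is a declarative equivalent under the assumption that the Deployment's Pods carry the label app: web, as in the manifest above. No nodePort is set, so Kubernetes picks one, just as it picked 30449 in the output shown earlier.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: web
spec:
  type: NodePort
  selector:
    app: web                     # must match the Pod labels of the web Deployment
  ports:
  - protocol: TCP
    port: 80                     # port of the Service inside the cluster
    targetPort: 80               # container port that nginx listens on
```

Because all three replicas mount the same NFS export, any Pod chosen by the Service serves the same index.html.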

3.4 PV&PVC

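PersistentVolume (PV): an abstraction over how storage is created and used, so that storage can be managed as a resource of the cluster.

PV provisioning comes in two forms:

- Static
- Dynamic

PersistentVolumeClaim (PVC): lets users consume storage without having to care about the details of the underlying Volume implementation.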

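3.5 Static PV provisioning

Static provisioning means creating a number of PVs in advance so that they are ready for use.

First prepare an NFS server for testing (node 172.16.1.72 is used here).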

[root@k8s-node2 ~]# yum install nfs-utils -y

[root@k8s-node2 ~]# vim /etc/exports

/ifs/kubernetes *(rw,no_root_squash,sync)

[root@k8s-node2 ~]# mkdir -p /ifs/kubernetes

[root@k8s-node2 ~]# systemctl start nfs

[root@k8s-node2 ~]# systemctl enable nfs

Example: prepare three PVs of 5Gi, 10Gi, and 20Gi. Adjust the corresponding values below for each PV.

[root@k8s-node2 ~]# mkdir -p /ifs/kubernetes/{pv001,pv002,pv003}

[root@k8s-node2 ~]# ls /ifs/kubernetes/

pv001 pv002 pv003

[root@k8s-admin ~]# vim my-pv.yaml

    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv001                       # PV name, change per PV
    spec:
      capacity:
        storage: 5Gi                    # size, change per PV
      accessModes:
      - ReadWriteMany
      nfs:
        path: /ifs/kubernetes/pv001     # directory name, change per PV
        server: 172.16.1.72
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv002                       # PV name, change per PV
    spec:
      capacity:
        storage: 10Gi                   # size, change per PV
      accessModes:
      - ReadWriteMany
      nfs:
        path: /ifs/kubernetes/pv002     # directory name, change per PV
        server: 172.16.1.72
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv003                       # PV name, change per PV
    spec:
      capacity:
        storage: 20Gi                   # size, change per PV
      accessModes:
      - ReadWriteMany
      nfs:
        path: /ifs/kubernetes/pv003     # directory name, change per PV
        server: 172.16.1.72

Apply the manifest and view the PVs:

[root@k8s-admin ~]# kubectl apply -f my-pv.yaml

persistentvolume/pv001 created

persistentvolume/pv002 created

persistentvolume/pv003 created

[root@k8s-admin ~]# kubectl get pv

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE

pv001 5Gi RWX Retain Available 2m31s

pv002 10Gi RWX Retain Available 2m31s

pv003 20Gi RWX Retain Available 2m31s
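The RECLAIM POLICY column above shows Retain, which is what a manually created PV gets when the field is left out. A PV can also state the policy explicitly, as in the sketch below. The name pv004 and its directory are hypothetical additions for illustration, and the directory would have to be created on the NFS server first.

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv004                           # hypothetical fourth PV
spec:
  capacity:
    storage: 5Gi
  accessModes:
  - ReadWriteMany
  # Keep the PV object and its data after the bound PVC is deleted.
  persistentVolumeReclaimPolicy: Retain
  nfs:
    path: /ifs/kubernetes/pv004         # hypothetical directory on the NFS export
    server: 172.16.1.72
```

With Retain, a released PV is not offered to new claims automatically. An administrator has to clean it up or recreate it before it can be used again.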

Create a Pod that uses a PV:

[root@k8s-admin ~]# vim static-pv.yaml

    ---
    apiVersion: v1
    kind: Pod
    metadata:
      name: my-pod
    spec:
      containers:
      - name: nginx
        image: nginx:latest
        ports:
        - containerPort: 80
        volumeMounts:
        - name: www
          mountPath: /usr/share/nginx/html
      volumes:
      - name: www
        persistentVolumeClaim:
          claimName: my-pvc
    ---
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: my-pvc
    spec:
      # access mode
      accessModes:
      - ReadWriteMany
      # requested capacity
      resources:
        requests:
          storage: 5Gi

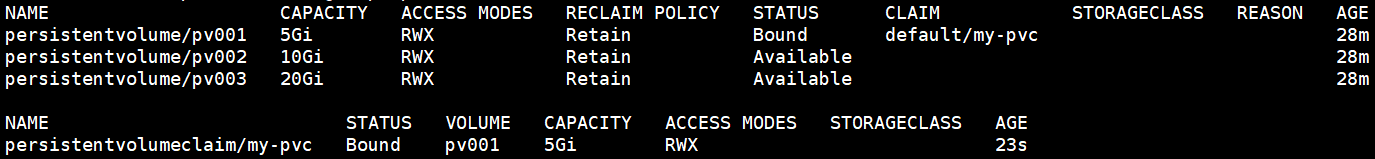

Create the resources and check the status of the PV and PVC:

[root@k8s-admin ~]# kubectl apply -f static-pv.yaml

pod/my-pod created

persistentvolumeclaim/my-pvc created

[root@k8s-admin ~]# kubectl get pv,pvc

The PVC is bound successfully to the 5Gi PV.

Then enter the container and create a test file under /usr/share/nginx/html (the PV mount directory):

[root@k8s-admin ~]# kubectl exec -it my-pod -- bash

root@my-pod:/# cd /usr/share/nginx/html

root@my-pod:/usr/share/nginx/html# echo "hello" >index.html

# Switch to the NFS server: the file just created in the container is there as well, which shows that everything works.

[root@k8s-node2 ~]# ls -l /ifs/kubernetes/pv001

total 4

-rw-r--r-- 1 root root 6 May 12 22:47 index.html

# kubectl delete pod/my-pod

# kubectl delete persistentvolumeclaim/my-pvc

# kubectl delete persistentvolume/pv001

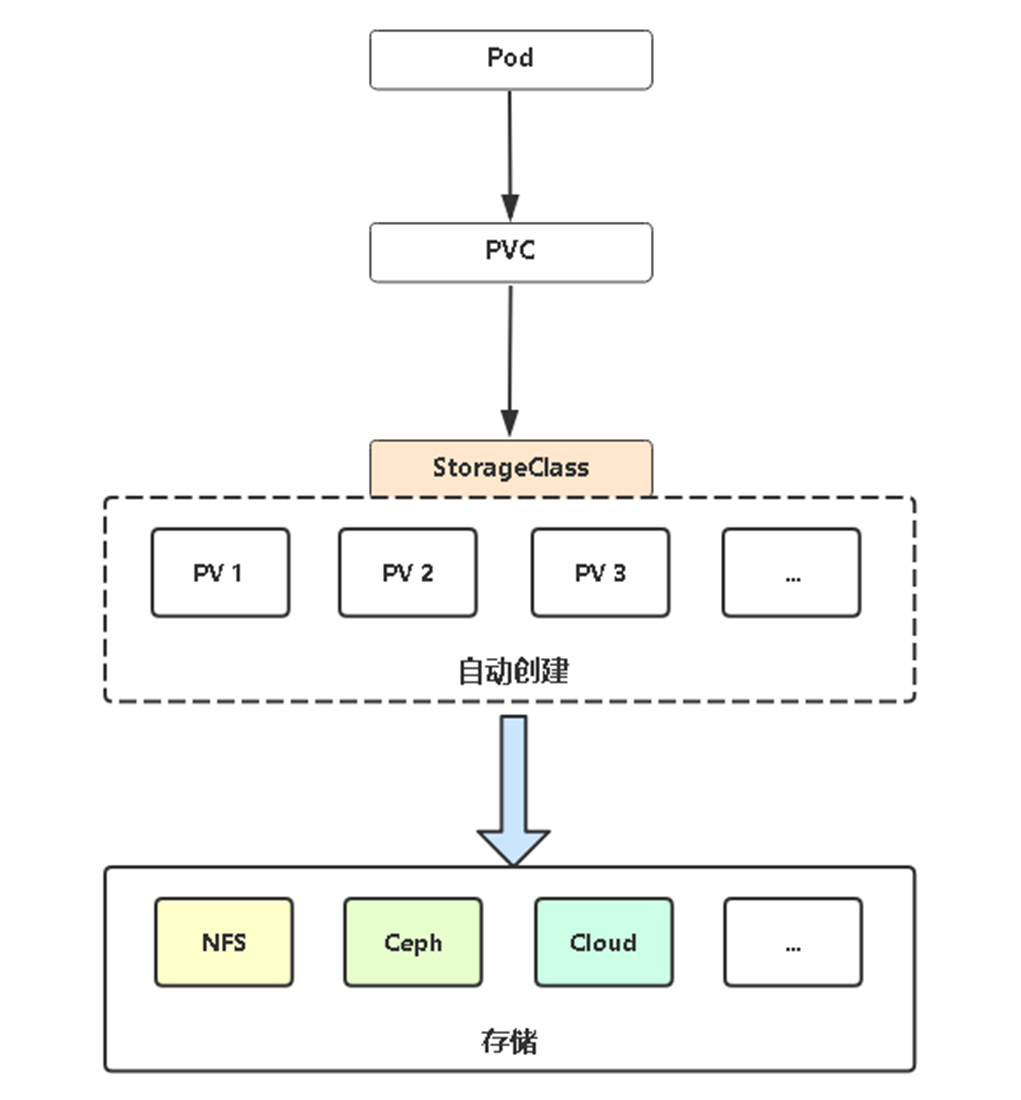
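The claim in this section leaves the choice of PV to Kubernetes, which matches on access mode and requested capacity. If a claim should use one particular pre-created PV, it can name that PV directly. The following sketch assumes pv002 from the manifest above is still Available. The claim name my-pvc-10g is hypothetical.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: my-pvc-10g               # hypothetical claim name
spec:
  storageClassName: ""           # empty string: bind only to PVs without a class
  volumeName: pv002              # bind to this specific pre-created PV
  accessModes:
  - ReadWriteMany
  resources:
    requests:
      storage: 10Gi              # must not exceed the capacity of pv002
```

Setting storageClassName to an empty string keeps a default StorageClass, if the cluster has one, from provisioning a new volume for the claim.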

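3.6 Dynamic PV provisioning

The PVs used so far were static: before a PVC can be used, a matching PV has to be created by hand. In many situations this does not meet the need. For example, an application with high concurrency demands on storage is a poor fit for static PVs. In such cases dynamic PVs, provisioned through a StorageClass, are needed.

The core of the Dynamic Provisioning mechanism is the StorageClass API object.

A StorageClass declares a storage plugin that is used to create PVs automatically.

Storage plugins for which Kubernetes supports dynamic provisioning: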

https://kubernetes.io/docs/concepts/storage/storage-classes/

https://github.com/kubernetes-retired/external-storage/

3.7 Dynamic PV provisioning in practice (NFS)

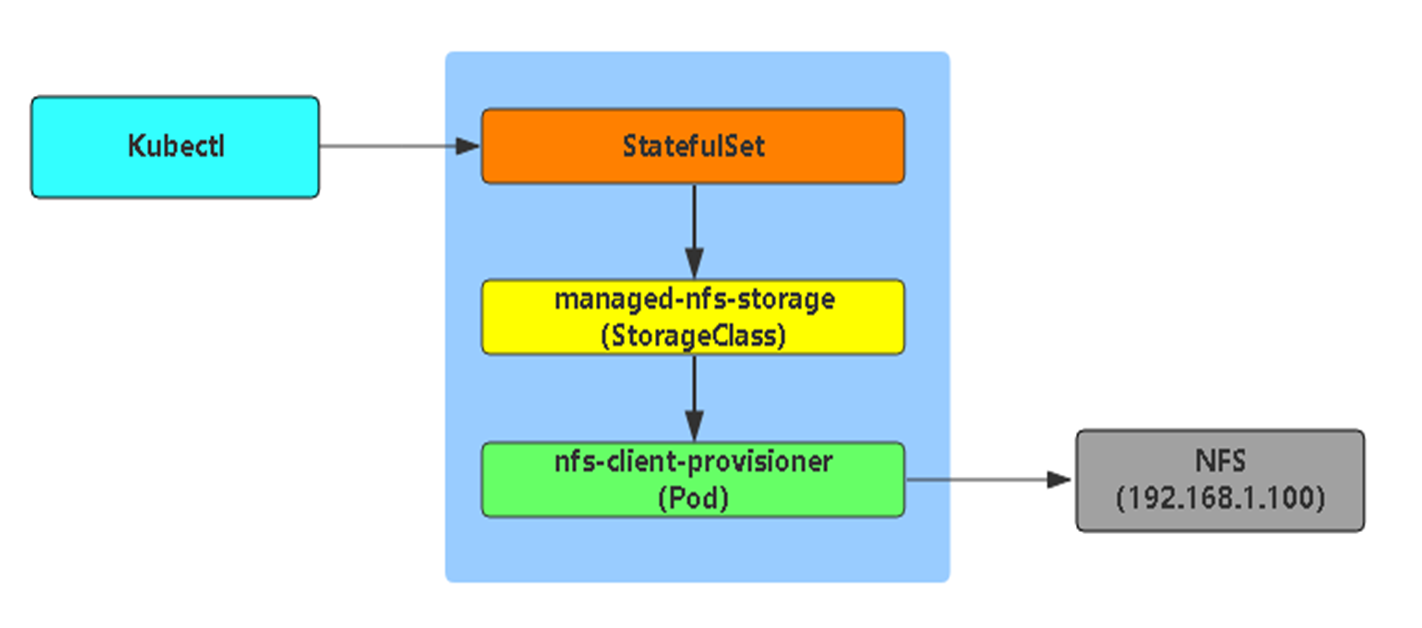

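Workflow

Kubernetes has no built-in dynamic provisioner for NFS, so the nfs-client-provisioner plugin shown in the figure above has to be installed first (nfs-client-provisioner automatically creates the shared directories on the NFS server):

1 Download the YAML files for nfs-client-provisioner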

https://github.com/kubernetes-retired/external-storage/tree/master/nfs-client/deploy

class.yaml deployment.yaml rbac.yaml

2 Set the NFS server address and the shared directory

[root@k8s-admin ~]# vim deployment.yaml

    ......
        spec:
          serviceAccountName: nfs-client-provisioner
          containers:
            - name: nfs-client-provisioner
              image: quay.io/external_storage/nfs-client-provisioner:latest
              volumeMounts:
                - name: nfs-client-root
                  mountPath: /persistentvolumes
              env:
                - name: PROVISIONER_NAME
                  value: fuseim.pri/ifs
                - name: NFS_SERVER
                  value: 172.16.1.72          # change to the NFS server address
                - name: NFS_PATH
                  value: /ifs/kubernetes      # change to the NFS shared directory
          volumes:
            - name: nfs-client-root
              nfs:
                server: 172.16.1.72           # change to the NFS server address
                path: /ifs/kubernetes         # change to the NFS shared directory
    ......

3 Review the StorageClass file used for dynamic provisioning

[root@k8s-admin ~]# cat class.yaml

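    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: managed-nfs-storage   # name of the StorageClass used for dynamic PV provisioning
    provisioner: fuseim.pri/ifs   # or choose another name, must match deployment's env PROVISIONER_NAME
    parameters:
      archiveOnDelete: "true"     # changed to "true" so that the automatically created NFS directory is kept after the PV is deleted; the default "false" deletes it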

4 Apply the YAML files

[root@k8s-admin ~]# kubectl apply -f .

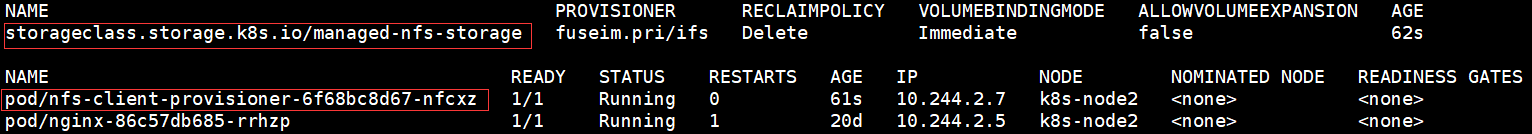

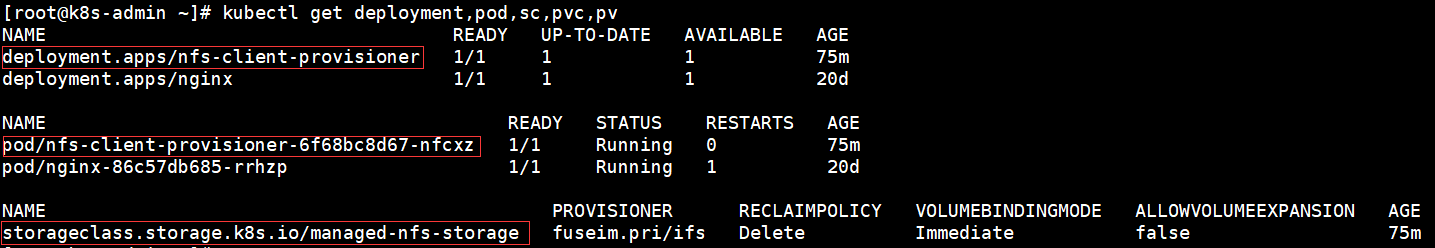

5 Check the result

[root@k8s-admin ~]# kubectl get sc,pod -o wide

6 Test dynamic provisioning

[root@k8s-admin ~]# vim dynamic-pv.yaml

    ---
    apiVersion: v1
    kind: Pod
    metadata:
      name: my-pod
    spec:
      containers:
      - name: nginx
        image: nginx:latest
        ports:
        - containerPort: 80
        volumeMounts:
        - name: www
          mountPath: /usr/share/nginx/html
      volumes:
      - name: www
        persistentVolumeClaim:
          claimName: my-pvc
    ---
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: my-pvc
    spec:
      storageClassName: "managed-nfs-storage"
      accessModes:
      - ReadWriteMany
      resources:
        requests:
          storage: 5Gi

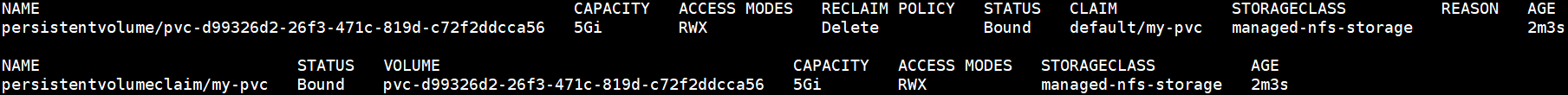

This time a 5Gi PV is created automatically and bound to the PVC.

[root@k8s-admin ~]# kubectl apply -f dynamic-pv.yaml

[root@k8s-admin ~]# kubectl get pv,pvc

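The test is the same as before: enter the container and create a test file under /usr/share/nginx/html (the PV mount directory).

Then switch to the NFS server, where the directory shown below now exists. It was created automatically as the backing directory of the PV, and it contains the file just created in the container.

[root@k8s-admin ~]# kubectl exec -it my-pod -- bash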

root@my-pod:/# cd /usr/share/nginx/html

root@my-pod:/usr/share/nginx/html# echo "hello" >index.html

# Switch to the NFS server: the file just created in the container is there as well, which shows that everything works.

[root@k8s-node2 ~]# ls /ifs/kubernetes/default-my-pvc-pvc-75be2f82-623e-4906-9137-211893ca1731/

index.html
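In the test above the PVC names its StorageClass explicitly. A cluster can also mark one StorageClass as the default, so that claims without storageClassName are provisioned from it. The sketch below is a variation on class.yaml under that assumption. It relies on the standard storageclass.kubernetes.io/is-default-class annotation, and the claim name my-default-pvc is hypothetical.

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: managed-nfs-storage
  annotations:
    # Mark this class as the cluster default (assumes no other default exists).
    storageclass.kubernetes.io/is-default-class: "true"
provisioner: fuseim.pri/ifs      # must match PROVISIONER_NAME in deployment.yaml
parameters:
  archiveOnDelete: "true"
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: my-default-pvc           # hypothetical claim name
spec:
  # No storageClassName: the default StorageClass is used.
  accessModes:
  - ReadWriteMany
  resources:
    requests:
      storage: 5Gi
```

Only one StorageClass should carry the default annotation at a time. Otherwise it becomes unclear which class serves claims that omit storageClassName.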

Notes:

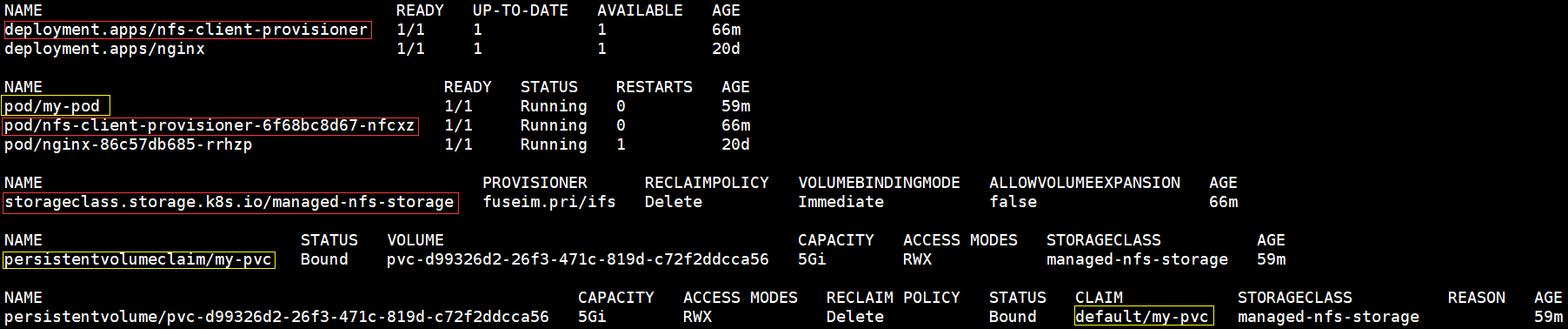

1 View the related resources

[root@k8s-admin ~]# kubectl get deployment,pod,sc,pvc,pv

2 Delete the pod, PVC, and PV

[root@k8s-admin ~]# kubectl delete pod/my-pod

[root@k8s-admin ~]# kubectl delete persistentvolumeclaim/my-pvc

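# With dynamic provisioning, deleting the PVC also deletes the PV. Because the

# archiveOnDelete parameter in class.yaml was set to "true" (archiveOnDelete: "true"),

# the NFS directory behind the PV is not deleted; it is kept with an archived- prefix.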

[root@k8s-node2 ~]# ls /ifs/kubernetes/

archived-default-my-pvc-pvc-d99326d2-26f3-471c-819d-c72f2ddcca56

[root@k8s-admin ~]# kubectl get deployment,pod,sc,pvc,pv

3 Delete the nfs-client-provisioner controller

[root@k8s-admin ~]# kubectl delete deployment.apps/nfs-client-provisioner

# Deleting the deployment also deletes its pods

[root@k8s-admin ~]# kubectl delete storageclass.storage.k8s.io/managed-nfs-storage