Overview of Ingress and Ingress Controller

Baidu Netdisk link: https://pan.baidu.com/s/15t_TSH5RRpCFXV-93JHpNw?pwd=8od3 (extraction code: 8od3)

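18 Overview of Ingress and Ingress Controller

18.1 Introduction to Ingress

Layer-4 proxying: a Service finds its associated Pods through a label selector, but it is a layer-4 proxy and can only forward traffic based on IP address and port.

The official definition of Ingress: an Ingress forwards requests entering the cluster to Services inside it, which exposes those Services outside the cluster. An Ingress can give in-cluster Services externally reachable URLs, load-balance traffic, and provide name-based virtual hosting.

Put simply: previously you had to edit the Nginx configuration by hand to map each domain name to a Service. Ingress abstracts that step into an Ingress object that you create from YAML. Instead of editing Nginx each time, you edit the YAML and create or update the object. That raises a question: how does Nginx pick up the change?

The Ingress Controller answers that question. It talks to the Kubernetes API to watch for changes to the Ingress rules in the cluster, reads them, renders an Nginx configuration from its own template, writes it into the Nginx Pod, and finally reloads Nginx.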

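18.2 Introduction to Ingress Controller

An Ingress Controller is a layer-7 load balancer. Client requests reach it first, and it reverse-proxies them to the backend Pods. Common layer-7 load balancers include nginx and Traefik. Taking the familiar nginx as an example: when a request reaches nginx, it is reverse-proxied to the backend Pod application through an upstream. Because the IP addresses of backend Pods change constantly, a Service is placed in front of the Pods. The Service only groups the Pods, so the upstream only needs to reference the Service.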

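18.3 Summary of Ingress and Ingress Controller

The Ingress Controller can be understood as a controller. It continuously talks to the Kubernetes API to track changes to backend Services and Pods (additions, deletions, and so on), combines them with the rules defined in Ingress objects to generate configuration, then updates the Nginx or Traefik load balancer and reloads it so the configuration takes effect. This is how automatic service discovery is achieved.

An Ingress defines the rules: requests for a given domain name are forwarded to a specified Service in the cluster. It is defined in a YAML file, and one or more Ingress rules can be defined for one or more Services.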
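The division of labour described above (Ingress objects hold the rules, the controller turns them into proxy configuration) can be illustrated with a small Python sketch. It is only a toy model under stated assumptions: the `Rule` class, the `render_server_blocks` function, and the upstream naming scheme `<service>-<port>` are hypothetical and are not how ingress-nginx actually renders its template.

```python
from dataclasses import dataclass


@dataclass
class Rule:
    """Hypothetical, flattened view of one Ingress rule: host + path -> Service."""
    host: str
    path: str
    service: str
    port: int


def render_server_blocks(rules, endpoints):
    """Render one nginx-style server block per host.

    `endpoints` maps (service, port) to the Pod addresses currently behind it,
    which is the information a controller would obtain from the Kubernetes API.
    """
    by_host = {}
    for rule in rules:
        by_host.setdefault(rule.host, []).append(rule)

    lines = []
    for host in sorted(by_host):
        lines.append("server {")
        lines.append(f"    server_name {host};")
        for rule in by_host[host]:
            backends = endpoints.get((rule.service, rule.port), [])
            lines.append(f"    location {rule.path} {{")
            lines.append(f"        # backends: {', '.join(backends) or 'no endpoints'}")
            lines.append(f"        proxy_pass http://{rule.service}-{rule.port};")
            lines.append("    }")
        lines.append("}")
    return "\n".join(lines)


if __name__ == "__main__":
    rules = [Rule("tomcat.lucky.com", "/", "tomcat", 8080)]
    endpoints = {("tomcat", 8080): ["10.244.209.172:8080", "10.244.209.173:8080"]}
    print(render_server_blocks(rules, endpoints))
```

Whenever a rule or an endpoint list changes, a controller would re-run a step like this and reload the proxy, which is the loop the summary describes.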

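18.4 Workflow for proxying in-cluster applications with an Ingress Controller

(1) Deploy the Ingress Controller; this tutorial uses nginx as the Ingress Controller

(2) Create the application Pods, for example through a controller such as a Deployment

(3) Create a Service to group the Pods

(4) Create an HTTP Ingress and test access to the application over HTTP

(5) Create an HTTPS Ingress and test access to the application over HTTPS

With the layer-7 load balancer (Ingress Controller) in place, a client request for an application inside the k8s cluster travels: client → Ingress Controller → Service → Pod.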

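18.5 High availability for the Ingress controller

The Ingress Controller is the entry layer for cluster traffic, so making it highly available is important. High availability for nginx-ingress-controller can be built on keepalived, as follows:

The Ingress controller is deployed as a Deployment with a nodeSelector and Pod anti-affinity so that it runs on two designated k8s worker nodes. The nginx-ingress-controller Pods share the host's IP (host network), and keepalived + nginx (set up in 18.5.1) then provides high availability for nginx-ingress-controller.

References: https://github.com/kubernetes/ingress-nginx

https://github.com/kubernetes/ingress-nginx/tree/main/deploy/static/provider/baremetal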

[root@master1 ~]# kubectl label node node1 kubernetes.io/ingress=nginx

[root@master1 ~]# kubectl label node node2 kubernetes.io/ingress=nginx

[root@node1 ~]# ctr -n=k8s.io images import ingress-nginx-controllerv1.1.0.tar.gz

[root@node1 ~]# ctr -n=k8s.io images import kube-webhook-certgen-v1.1.0.tar.gz

[root@node2 ~]# ctr -n=k8s.io images import ingress-nginx-controllerv1.1.0.tar.gz

[root@node2 ~]# ctr -n=k8s.io images import kube-webhook-certgen-v1.1.0.tar.gz

[root@master1 ~]# kubectl apply -f ingress-deploy.yaml

[root@master1 ~]# kubectl get pods -n ingress-nginx -o wide

NAME READY STATUS RESTARTS AGE IP NODE

ingress-nginx-admission-create-k4fmn 0/1 Completed 0 103s 10.244.1.12 node1

ingress-nginx-admission-patch-x87h8 0/1 Completed 1 103s 10.244.1.11 node1

ingress-nginx-controller-6c8ffbbfcf-cjj9t 1/1 Running 0 103s 192.168.40.181 node1

ingress-nginx-controller-6c8ffbbfcf-wpt26 1/1 Running 0 103s 192.168.40.182 node2

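18.5.1 High availability for nginx-ingress-controller with keepalived + nginx

1. Install nginx as a master/backup pair on node1 and node2

# yum install epel-release nginx nginx-mod-stream keepalived -y

2. Edit the nginx configuration file (identical on master and backup); run on both node1 and node2

# vim /etc/nginx/nginx.conf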

user nginx;

worker_processes auto;

error_log /var/log/nginx/error.log;

pid /run/nginx.pid;

include /usr/share/nginx/modules/*.conf;

events {

worker_connections 1024;

}

# Layer-4 load balancing across the two ingress-nginx controller nodes

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-ingress {

server 192.168.40.181:80; # ingress-nginx controller on node1, IP:PORT

server 192.168.40.182:80; # ingress-nginx controller on node2, IP:PORT

}

server {

listen 30080;

proxy_pass k8s-ingress;

}

}

http {

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

sendfile on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 65;

types_hash_max_size 2048;

include /etc/nginx/mime.types;

default_type application/octet-stream;

}

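Note: this nginx listens on a port above 30000 (30080 here), so the site is reached as domain:30080. It cannot listen on port 80, because the ingress-nginx controller Pods share the host network and already listen on port 80 of node1 and node2.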

3. keepalived configuration

Master keepalived:

[root@node1 ~]# vim /etc/keepalived/keepalived.conf

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_MASTER

}

vrrp_script check_nginx {

script "/etc/keepalived/check_nginx.sh"

}

vrrp_instance VI_1 {

state MASTER

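interface eth0 # change to the actual NIC name

virtual_router_id 51 # VRRP router ID; unique per VRRP instance

priority 100 # priority; the backup server uses 90

advert_int 1 # VRRP advertisement (heartbeat) interval; default is 1 second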

authentication {

auth_type PASS

auth_pass 1111

}

# virtual IP

virtual_ipaddress {

192.168.40.199

}

track_script {

check_nginx

}

}

[root@node1 ~]# vim /etc/keepalived/check_nginx.sh   # edit the keepalived health-check script

#!/bin/bash

counter=`ps -C nginx --no-header | wc -l`

if [ $counter -eq 0 ]; then

service nginx start

sleep 2

counter=`ps -C nginx --no-header | wc -l`

if [ $counter -eq 0 ]; then

service keepalived stop

fi

fi

[root@node1 ~]# chmod +x /etc/keepalived/check_nginx.sh

Backup keepalived:

[root@node2 ~]# vim /etc/keepalived/keepalived.conf

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_BACKUP

}

vrrp_script check_nginx {

script "/etc/keepalived/check_nginx.sh"

}

vrrp_instance VI_1 {

state BACKUP

interface eth0 # change to the actual NIC name

virtual_router_id 51 # VRRP router ID; unique per VRRP instance

priority 90 # priority; 90 on the backup server

advert_int 1 # VRRP advertisement (heartbeat) interval; default is 1 second

authentication {

auth_type PASS

auth_pass 1111

}

# virtual IP

virtual_ipaddress {

192.168.40.199

}

track_script {

check_nginx

}

}

[root@node2 ~]# vim /etc/keepalived/check_nginx.sh

#!/bin/bash

counter=`ps -C nginx --no-header | wc -l`

if [ $counter -eq 0 ]; then

service nginx start

sleep 2

counter=`ps -C nginx --no-header | wc -l`

if [ $counter -eq 0 ]; then

service keepalived stop

fi

fi

[root@node2 ~]# chmod +x /etc/keepalived/check_nginx.sh

# Note: keepalived decides whether to fail over based on the script's exit status (0 means healthy, non-zero means unhealthy).
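The effect of the two keepalived configurations can be pictured with a toy Python model: among the nodes where keepalived is still running, the one with the highest priority holds the VIP. This is only a sketch of the idea. The function `elect_master` and the dictionary fields are hypothetical, and real VRRP also involves advertisement timers and preemption settings that are not modelled here.

```python
def elect_master(nodes):
    """Return the name of the running node with the highest priority, or None."""
    running = [node for node in nodes if node["keepalived_running"]]
    if not running:
        return None
    return max(running, key=lambda node: node["priority"])["name"]


if __name__ == "__main__":
    # Priorities taken from the two keepalived.conf files above.
    nodes = [
        {"name": "node1", "priority": 100, "keepalived_running": True},
        {"name": "node2", "priority": 90, "keepalived_running": True},
    ]
    print(elect_master(nodes))            # node1 holds the VIP

    nodes[0]["keepalived_running"] = False  # check script stopped keepalived
    print(elect_master(nodes))            # VIP moves to node2
```

In the check script, stopping keepalived when nginx cannot be restarted is what removes a node from the set of candidates.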

4. Start the services on node1 and node2

# systemctl daemon-reload

# systemctl enable nginx keepalived

# systemctl start nginx keepalived

5. Check that the VIP is bound

# ip addr

......

inet 192.168.40.199/24 scope global secondary ens33

......

6. Test keepalived failover

Stop keepalived on node1; the VIP moves to node2:

[root@node1 ~]# service keepalived stop

[root@node2 ~]# ip addr

......

inet 192.168.40.199/24 scope global secondary ens33

Start keepalived on node1 again; the VIP moves back to node1:

[root@node1 ~]# service keepalived start

[root@node1 ~]# ip addr

......

inet 192.168.40.199/24 scope global secondary ens33

18.5.2 Testing Ingress HTTP proxying for an in-cluster site

1. Deploy the backend tomcat service

[root@master1 ~]# vim ingress-demo.yaml

apiVersion: v1
kind: Service
metadata:
  name: tomcat
  namespace: default
spec:
  selector:
    app: tomcat
    release: canary
  ports:
  - name: http
    targetPort: 8080
    port: 8080
  - name: ajp
    targetPort: 8009
    port: 8009
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: tomcat-deploy
  namespace: default
spec:
  replicas: 2
  selector:
    matchLabels:
      app: tomcat
      release: canary
  template:
    metadata:
      labels:
        app: tomcat
        release: canary
    spec:
      containers:
      - name: tomcat
        image: tomcat:8.5-jre8-alpine
        imagePullPolicy: IfNotPresent
        ports:
        - name: http
          containerPort: 8080
        - name: ajp
          containerPort: 8009

[root@master1 ~]# kubectl apply -f ingress-demo.yaml   # apply the manifest

[root@master1 ~]# kubectl get pods -l app=tomcat   # check that the Pods are running

NAME READY STATUS RESTARTS AGE

tomcat-deploy-66b67fcf7b-9h9qp 1/1 Running 0 32s

tomcat-deploy-66b67fcf7b-hxtkm 1/1 Running 0 32s

2. Write the Ingress rule

[root@master1 ~]# vim ingress-myapp.yaml   # write the Ingress manifest

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress-myapp
  namespace: default
  # annotations:                            # on Kubernetes 1.23, use the annotation form
  #   kubernetes.io/ingress.class: "nginx"
spec:
  ingressClassName: nginx                   # on Kubernetes 1.25, use ingressClassName
  rules:
  - host: tomcat.lucky.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: tomcat
            port:
              number: 8080

[root@master1 ~]# kubectl apply -f ingress-myapp.yaml   # apply the manifest

If the command fails with an error from the ingress-nginx admission webhook, delete the webhook configuration and apply the manifest again:

[root@master1 ~]# kubectl delete -A ValidatingWebhookConfiguration ingress-nginx-admission

[root@master1 ~]# kubectl apply -f ingress-myapp.yaml

[root@master1 ~]# kubectl describe ingress ingress-myapp   # show the details of ingress-myapp

Name: ingress-myapp

Namespace: default

Address:

Default backend: default-http-backend:80 (10.244.187.118:8080)

Rules:

Host Path Backends

---- ---- --------

tomcat.lucky.com

tomcat:8080 (10.244.209.172:8080,10.244.209.173:8080)

Annotations: kubernetes.io/ingress.class: nginx

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal CREATE 22s nginx-ingress-controller Ingress default/ingress-myapp

Edit the hosts file on your local computer and add the following line:

192.168.40.199 tomcat.lucky.com

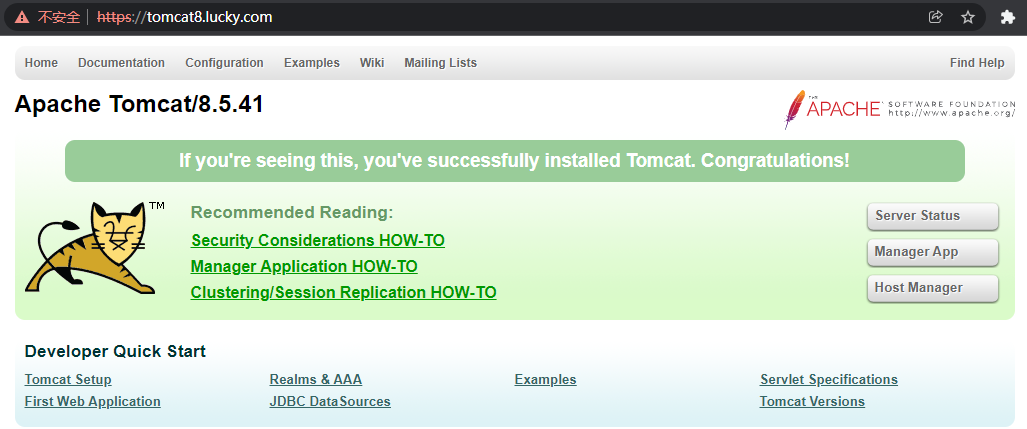

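Open tomcat.lucky.com in a browser; the page shown above appears (in the author's own test only the domain name worked; access by IP plus port did not):

Summary:

Deployment + nodeSelector + Pod anti-affinity schedules the ingress controller onto node1 and node2.

keepalived + nginx makes the ingress controller highly available.

Finally, test that the layer-7 Ingress proxy works.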

18.5.3 Testing Ingress HTTPS proxying for an in-cluster site

1. Prepare the certificate

[root@master1 ~]# cd /root

[root@master1 ~]# openssl genrsa -out tls.key 2048

[root@master1 ~]# openssl req -new -x509 -key tls.key -out tls.crt -subj /C=CN/ST=Beijing/L=Beijing/O=DevOps/CN=tomcat8.lucky.com

2. Create the Secret

[root@master1 ~]# kubectl create secret tls tomcat-ingress-secret --cert=tls.crt --key=tls.key

[root@master1 ~]# kubectl get secret

tomcat-ingress-secret kubernetes.io/tls 2 8s

3. Create the Ingress

[root@master1 ~]# vim ingress-tomcat-tls.yaml   # write the Ingress manifest

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress-tomcat-tls
  namespace: default
spec:
  ingressClassName: nginx   # on Kubernetes 1.25, use ingressClassName
  tls:
  - hosts:
    - tomcat8.lucky.com
    secretName: tomcat-ingress-secret
  rules:
  - host: tomcat8.lucky.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: tomcat
            port:
              number: 8080

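[root@master1 ~]# kubectl apply -f ingress-tomcat-tls.yaml

Edit the hosts file on your local computer and add the following line:

192.168.40.199 tomcat8.lucky.com

Open tomcat8.lucky.com in a browser; the following page appears:

18.5.4 Running multiple Ingress controllers in one k8s cluster

An Ingress can be thought of as a Service for Services: a separate Ingress object defines the request-forwarding rules and routes requests to one or more Services. This decouples services from request rules, so exposure can be planned per business domain instead of per Service.

In the same k8s cluster, deploy two ingress-nginx instances: one as a Deployment for project A's API gateway, the other as a DaemonSet that serves domain-based access for other projects. The two are updated at different rates and used differently, so they are not merged for now.

To support multi-tenant scenarios, several ingress controllers need to be deployed in the k8s cluster, for different users and different environments.

Main parameter settings: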

containers:
- name: nginx-ingress-controller
  image: registry.cn-hangzhou.aliyuncs.com/google_containers/nginx-ingress-controller:v1.1.0
  args:
  - /nginx-ingress-controller
  - --ingress-class=ngx-ds

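Note: --ingress-class sets the Ingress Class identifier that this Ingress Controller watches. Within one cluster, each Ingress Controller must watch a unique Ingress Class identifier, and it must not be nginx, which is the identifier watched by the cluster's default Ingress Controller.

Create the Ingress rule: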

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress-myapp
  namespace: default
  annotations:
    kubernetes.io/ingress.class: "ngx-ds"
spec:
  rules:
  - host: tomcat.lucky.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: tomcat
            port:
              number: 8080

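18.6 Canary releases with Ingress-nginx

Scenario 1: release the new version to a subset of users

Suppose Service A provides a layer-7 service in production. A new version, Service A', is ready, but you do not want to replace Service A outright. You want to expose it to a small group of users first, roll it out fully once it has run long enough to be considered stable, and finally retire the old version smoothly. Nginx Ingress can do this by splitting traffic on a Header or Cookie. The application uses a Header or Cookie to mark different kinds of users, and the Ingress is configured so that requests carrying the specified Header or Cookie go to the new version while all others still go to the old version.

Scenario 2: shift a fixed share of traffic to the new version

Suppose Service B provides a layer-7 service in production. Some issues have been fixed and a new version, Service B', needs a canary release, but you do not want to replace Service B outright. Instead, 10% of traffic is shifted to the new version first. After a period of observation the share is increased step by step until the new version has fully replaced the old one, which is then retired smoothly.

Ingress-Nginx is a K8S ingress tool that supports canary releases and testing in different scenarios through Ingress annotations. The Nginx annotations support the following canary rules:

Assume two versions of the service are deployed: the old version and the canary version.

nginx.ingress.kubernetes.io/canary-by-header: traffic splitting based on a request header, suitable for canary releases and A/B testing. When the request header is set to always, the request is always sent to the canary version; when it is set to never, the request is never sent to the canary.

nginx.ingress.kubernetes.io/canary-by-header-value: the request header value to match, which tells the Ingress to route the request to the service specified in the Canary Ingress. When the request header is set to this value, the request is routed to the canary.

nginx.ingress.kubernetes.io/canary-weight: traffic splitting based on service weight, suitable for blue-green deployments. The weight ranges from 0 to 100 and is the percentage of requests routed to the service specified in the Canary Ingress. A weight of 0 means this canary rule sends no requests to the canary service. A weight of 60 means 60% of traffic goes to the canary. A weight of 100 means all requests are sent to the canary.

nginx.ingress.kubernetes.io/canary-by-cookie: traffic splitting based on a cookie, suitable for canary releases and A/B testing. It names the cookie that tells the Ingress to route the request to the service specified in the Canary Ingress. When the cookie value is always, the request is routed to the canary; when the cookie value is never, the request is not sent to the canary.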
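How these rules combine can be sketched in Python. The sketch assumes the precedence given in the ingress-nginx documentation (canary-by-header, then canary-by-cookie, then canary-weight) and that a header or cookie value other than the recognised ones is ignored so the next rule is tried. The function `route_to_canary` is hypothetical, annotation keys are written without the `nginx.ingress.kubernetes.io/` prefix for brevity, and the unanchored `re.search` is an assumption about how canary-by-header-pattern matches. It is a model of the behaviour, not the controller's code.

```python
import random
import re


def route_to_canary(headers, cookies, ann, rng=random.random):
    """Decide whether one request goes to the canary backend."""
    # 1. Header rule.
    header = ann.get("canary-by-header")
    if header is not None and header in headers:
        value = headers[header]
        expected = ann.get("canary-by-header-value")
        pattern = ann.get("canary-by-header-pattern")
        if expected is not None:
            if value == expected:
                return True
        elif pattern is not None:
            if re.search(pattern, value):
                return True
        elif value == "always":
            return True
        elif value == "never":
            return False

    # 2. Cookie rule: only "always" and "never" are meaningful values.
    cookie = ann.get("canary-by-cookie")
    if cookie is not None:
        value = cookies.get(cookie)
        if value == "always":
            return True
        if value == "never":
            return False

    # 3. Weight rule: percentage of the remaining requests.
    weight = int(ann.get("canary-weight", 0))
    return rng() * 100 < weight


if __name__ == "__main__":
    ann = {"canary-by-header": "Region", "canary-by-header-pattern": "cd|sz"}
    print(route_to_canary({"Region": "cd"}, {}, ann))  # True
    print(route_to_canary({"Region": "bj"}, {}, ann))  # False
```

The `rng` parameter exists only so the weight branch can be exercised deterministically.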

Deploy two versions of the service, using a simple nginx as the example. First deploy version v1:

[root@node1 ~]# ctr -n=k8s.io images import openresty.tar.gz

[root@node2 ~]# ctr -n=k8s.io images import openresty.tar.gz

[root@master1 ~]# vim v1.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-v1
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx
      version: v1
  template:
    metadata:
      labels:
        app: nginx
        version: v1
    spec:
      containers:
      - name: nginx
        image: "openresty/openresty:centos"
        imagePullPolicy: IfNotPresent
        ports:
        - name: http
          protocol: TCP
          containerPort: 80
        volumeMounts:
        - mountPath: /usr/local/openresty/nginx/conf/nginx.conf
          name: config
          subPath: nginx.conf
      volumes:
      - name: config
        configMap:
          name: nginx-v1
---
apiVersion: v1
kind: ConfigMap
metadata:
  labels:
    app: nginx
    version: v1
  name: nginx-v1
data:
  nginx.conf: |
    worker_processes 1;
    events {
        accept_mutex on;
        multi_accept on;
        use epoll;
        worker_connections 1024;
    }
    http {
        ignore_invalid_headers off;
        server {
            listen 80;
            location / {
                access_by_lua '
                    local header_str = ngx.say("nginx-v1")
                ';
            }
        }
    }
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-v1
spec:
  type: ClusterIP
  ports:
  - port: 80
    protocol: TCP
    name: http
  selector:
    app: nginx
    version: v1

[root@master1 ~]# kubectl apply -f v1.yaml

Then deploy version v2:

[root@master1 ~]# vim v2.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-v2
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx
      version: v2
  template:
    metadata:
      labels:
        app: nginx
        version: v2
    spec:
      containers:
      - name: nginx
        image: "openresty/openresty:centos"
        imagePullPolicy: IfNotPresent
        ports:
        - name: http
          protocol: TCP
          containerPort: 80
        volumeMounts:
        - mountPath: /usr/local/openresty/nginx/conf/nginx.conf
          name: config
          subPath: nginx.conf
      volumes:
      - name: config
        configMap:
          name: nginx-v2
---
apiVersion: v1
kind: ConfigMap
metadata:
  labels:
    app: nginx
    version: v2
  name: nginx-v2
data:
  nginx.conf: |
    worker_processes 1;
    events {
        accept_mutex on;
        multi_accept on;
        use epoll;
        worker_connections 1024;
    }
    http {
        ignore_invalid_headers off;
        server {
            listen 80;
            location / {
                access_by_lua '
                    local header_str = ngx.say("nginx-v2")
                ';
            }
        }
    }
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-v2
spec:
  type: ClusterIP
  ports:
  - port: 80
    protocol: TCP
    name: http
  selector:
    app: nginx
    version: v2

[root@master1 ~]# kubectl apply -f v2.yaml

Next, create an Ingress that exposes the service externally and points to the v1 service:

[root@master1 ~]# vim v1-ingress.yaml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: nginx
spec:
  ingressClassName: nginx
  rules:
  - host: canary.example.com
    http:
      paths:
      - path: /             # request path; change it when routing by URL path
        pathType: Prefix
        backend:            # backend service
          service:
            name: nginx-v1
            port:
              number: 80

[root@master1 ~]# kubectl apply -f v1-ingress.yaml

[root@master1 ~]# curl -H "Host: canary.example.com" http://192.168.40.199

nginx-v1

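Header-based traffic splitting:

Create a Canary Ingress that points to the v2 backend service and add annotations so that only requests carrying a header named Region with the value cd or sz are forwarded to this Canary Ingress. This simulates releasing the new version to users in the Chengdu and Shenzhen regions: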

[root@master1 ~]# vim v2-ingress.yaml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-by-header: "Region"
    nginx.ingress.kubernetes.io/canary-by-header-pattern: "cd|sz"
  name: nginx-canary
spec:
  ingressClassName: nginx
  rules:
  - host: canary.example.com
    http:
      paths:
      - path: /             # request path; change it when routing by URL path
        pathType: Prefix
        backend:            # backend service
          service:
            name: nginx-v2
            port:
              number: 80

[root@master1 ~]# kubectl apply -f v2-ingress.yaml

[root@master1 ~]# curl -H "Host: canary.example.com" -H "Region: cd" http://192.168.40.199

nginx-v2

[root@master1 ~]# curl -H "Host: canary.example.com" -H "Region: bj" http://192.168.40.199

nginx-v1

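As shown, only requests whose Region header is cd or sz are answered by the v2 service.

Cookie-based traffic splitting:

This is similar to the header case, except that a cookie rule cannot use a custom value. The example simulates a canary release to users in the Chengdu region: only requests carrying a cookie named user_from_cd are forwarded to the Canary Ingress. First delete the header-based Canary Ingress created above, then create the new Canary Ingress below: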

[root@master1 ~]# kubectl delete -f v2-ingress.yaml

[root@master1 ~]# vim v2-cookie.yaml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-by-cookie: "user_from_cd"
  name: nginx-canary
spec:
  ingressClassName: nginx
  rules:
  - host: canary.example.com
    http:
      paths:
      - path: /             # request path; change it when routing by URL path
        pathType: Prefix
        backend:            # backend service
          service:
            name: nginx-v2
            port:
              number: 80

[root@master1 ~]# kubectl apply -f v2-cookie.yaml

[root@master1 ~]# curl -s -H "Host: canary.example.com" --cookie "user_from_cd=always" http://192.168.40.199

nginx-v2

[root@master1 ~]# curl -s -H "Host: canary.example.com" --cookie "user_from_bj=always" http://192.168.40.199

nginx-v1

[root@master1 ~]# curl -s -H "Host: canary.example.com" http://192.168.40.199

nginx-v1

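As shown, only requests whose cookie user_from_cd is set to always are answered by the v2 service.

Weight-based traffic splitting:

A weight-based Canary Ingress is simpler: it just states the share of traffic to shift. This example sends 10% of traffic to v2 (delete any previous Canary Ingress first):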

[root@master1 ~]# kubectl delete -f v2-cookie.yaml

[root@master1 ~]# vim v2-weight.yaml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-weight: "10"
  name: nginx-canary
spec:
  ingressClassName: nginx
  rules:
  - host: canary.example.com
    http:
      paths:
      - path: /             # request path; change it when routing by URL path
        pathType: Prefix
        backend:            # backend service
          service:
            name: nginx-v2
            port:
              number: 80

[root@master1 ~]# kubectl apply -f v2-weight.yaml

[root@master1 ~]# for i in {1..10}; do curl -H "Host: canary.example.com" http://192.168.40.181; done;

The output looks like this:

nginx-v1

nginx-v1

nginx-v1

nginx-v1

nginx-v1

nginx-v1

nginx-v2

nginx-v1

nginx-v1

nginx-v1

As shown, roughly one request in ten is answered by the v2 service, which is consistent with the 10% weight.
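Because the split is probabilistic, ten requests will not always produce exactly one v2 response. Assuming each request is routed independently with probability equal to the weight (a simplifying assumption about the controller, not something the source states), the chance of seeing exactly k canary responses in n requests follows a binomial distribution. The helper name `prob_canary_hits` is hypothetical.

```python
from math import comb


def prob_canary_hits(n, k, weight_percent):
    """Probability of exactly k canary responses in n independent requests."""
    p = weight_percent / 100
    return comb(n, k) * p**k * (1 - p) ** (n - k)


if __name__ == "__main__":
    # 10 requests at canary-weight 10, as in the curl loop above.
    print(round(prob_canary_hits(10, 1, 10), 3))  # 0.387: exactly one v2 response
    print(round(prob_canary_hits(10, 0, 10), 3))  # 0.349: no v2 response at all
```

Under this model a run of ten requests shows no v2 response about a third of the time, so a larger sample is needed before judging whether the weight is being honoured.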
