Python Pandas Merge, join and concatenate

pandas provides concatenation and merge operations for collections of Series, DataFrame, and Panel objects.

Concatenating objects

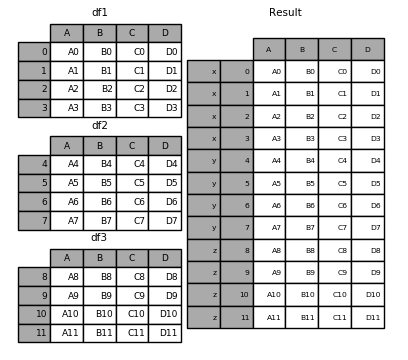

Let's start with an example:

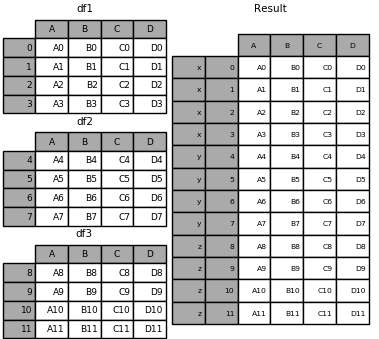

from pandas import Series, DataFrame
import pandas as pd
import numpy as np

df1 = pd.DataFrame({'A': ['A0', 'A1', 'A2', 'A3'],
                    'B': ['B0', 'B1', 'B2', 'B3'],
                    'C': ['C0', 'C1', 'C2', 'C3'],
                    'D': ['D0', 'D1', 'D2', 'D3']},
                   index=[0, 1, 2, 3])
df2 = pd.DataFrame({'A': ['A4', 'A5', 'A6', 'A7'],
                    'B': ['B4', 'B5', 'B6', 'B7'],
                    'C': ['C4', 'C5', 'C6', 'C7'],
                    'D': ['D4', 'D5', 'D6', 'D7']},
                   index=[4, 5, 6, 7])
df3 = pd.DataFrame({'A': ['A8', 'A9', 'A10', 'A11'],
                    'B': ['B8', 'B9', 'B10', 'B11'],
                    'C': ['C8', 'C9', 'C10', 'C11'],
                    'D': ['D8', 'D9', 'D10', 'D11']},
                   index=[8, 9, 10, 11])

frames = [df1, df2, df3]
# Stack the three frames on top of each other (axis=0 by default).
result = pd.concat(frames)
print(result)

The result is similar to a UNION ALL in SQL: the rows are stacked and duplicates are kept.

pd.concat(objs, axis=0, join='outer', join_axes=None, ignore_index=False,keys=None, levels=None, names=None, verify_integrity=False,copy=True)

- objs: a sequence or mapping of Series, DataFrame, or Panel objects. If a dict is passed, the sorted keys will be used as the keys argument, unless it is passed, in which case the values will be selected (see below). Any None objects will be dropped silently unless they are all None, in which case a ValueError will be raised.

- axis: {0, 1, ...}, default 0. The axis to concatenate along.

- join: {'inner', 'outer'}, default 'outer'. How to handle indexes on other axis(es). Outer for union and inner for intersection.

- ignore_index: boolean, default False. If True, do not use the index values on the concatenation axis. The resulting axis will be labeled 0, ..., n - 1. This is useful if you are concatenating objects where the concatenation axis does not have meaningful indexing information. Note the index values on the other axes are still respected in the join.

- join_axes: list of Index objects. Specific indexes to use for the other n - 1 axes instead of performing inner/outer set logic.

- keys: sequence, default None. Construct hierarchical index using the passed keys as the outermost level. If multiple levels passed, should contain tuples.

- levels: list of sequences, default None. Specific levels (unique values) to use for constructing a MultiIndex. Otherwise they will be inferred from the keys.

- names: list, default None. Names for the levels in the resulting hierarchical index.

- verify_integrity: boolean, default False. Check whether the new concatenated axis contains duplicates. This can be very expensive relative to the actual data concatenation.

- copy: boolean, default True. If False, do not copy data unnecessarily.
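As a small illustration of two of these parameters, the sketch below contrasts the default behaviour with ignore_index=True and verify_integrity=True. The frames a and b and the variable names are made up for this example; they are not part of the original walkthrough.

```python
import pandas as pd

# Two small frames whose row labels overlap on label 1 (hypothetical data).
a = pd.DataFrame({'v': [1, 2]}, index=[0, 1])
b = pd.DataFrame({'v': [3, 4]}, index=[1, 2])

# Default: the original row labels are kept, so label 1 appears twice.
stacked = pd.concat([a, b])
print(stacked.index.tolist())

# ignore_index=True discards the labels and renumbers 0..n-1.
renumbered = pd.concat([a, b], ignore_index=True)
print(renumbered.index.tolist())

# verify_integrity=True raises ValueError if the new axis has duplicates.
duplicate_error = None
try:
    pd.concat([a, b], verify_integrity=True)
except ValueError as exc:
    duplicate_error = str(exc)
print(duplicate_error)
```

verify_integrity is a cheap safeguard on small inputs, but as the parameter description notes, the check can be expensive on large ones.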

result = pd.concat(frames, keys=['x', 'y', 'z'])
print(result)

The result above has a hierarchical index, so each original chunk can be selected by its key:

print(result.loc['y'])
#     A   B   C   D
# 4  A4  B4  C4  D4
# 5  A5  B5  C5  D5
# 6  A6  B6  C6  D6
# 7  A7  B7  C7  D7

Set logic on the other axes

When gluing together multiple DataFrames (or Panels or...), for example, you have a choice of how to handle the other axes (other than the one being concatenated). This can be done in three ways:

- Take the (sorted) union of them all, join='outer'. This is the default option as it results in zero information loss.

- Take the intersection, join='inner'.

- Use a specific index (in the case of DataFrame) or indexes (in the case of Panel or future higher dimensional objects), i.e. the join_axes argument.

Here is an example of each of these methods. First, the default join='outer' behavior:

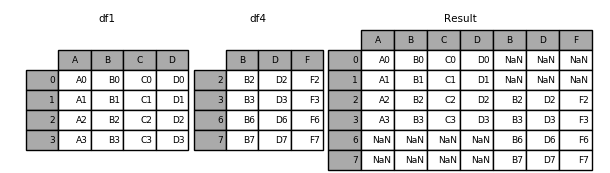

With axis=1, the result is somewhat similar to a full outer join on the index:

df1 = pd.DataFrame({'A': ['A0', 'A1', 'A2', 'A3'],

'B': ['B0', 'B1', 'B2', 'B3'],

'C': ['C0', 'C1', 'C2', 'C3'],

'D': ['D0', 'D1', 'D2', 'D3']},

index=[0, 1, 2, 3])

df4 = pd.DataFrame({'B': ['B2', 'B3', 'B6', 'B7'],

'D': ['D2', 'D3', 'D6', 'D7'],

'F': ['F2', 'F3', 'F6', 'F7']},

index=[2, 3, 6, 7])

result = pd.concat([df1, df4], axis=1)

print(result)

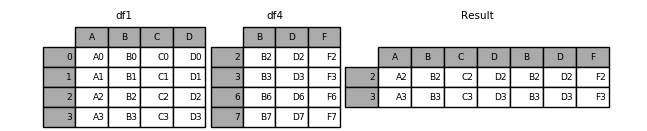

Now the same concatenation with join='inner':

df1 = pd.DataFrame({'A': ['A0', 'A1', 'A2', 'A3'],

'B': ['B0', 'B1', 'B2', 'B3'],

'C': ['C0', 'C1', 'C2', 'C3'],

'D': ['D0', 'D1', 'D2', 'D3']},

index=[0, 1, 2, 3])

df4 = pd.DataFrame({'B': ['B2', 'B3', 'B6', 'B7'],

'D': ['D2', 'D3', 'D6', 'D7'],

'F': ['F2', 'F3', 'F6', 'F7']},

index=[2, 3, 6, 7])

result = pd.concat([df1, df4], axis=1,join='inner')

print(result)

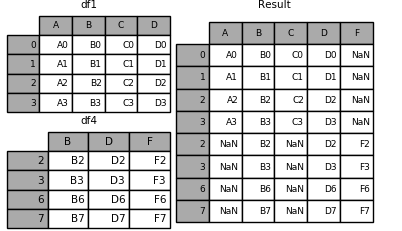

Left join

df1 = pd.DataFrame({'A': ['A0', 'A1', 'A2', 'A3'],

'B': ['B0', 'B1', 'B2', 'B3'],

'C': ['C0', 'C1', 'C2', 'C3'],

'D': ['D0', 'D1', 'D2', 'D3']},

index=[0, 1, 2, 3])

df4 = pd.DataFrame({'B': ['B2', 'B3', 'B6', 'B7'],

'D': ['D2', 'D3', 'D6', 'D7'],

'F': ['F2', 'F3', 'F6', 'F7']},

index=[2, 3, 6, 7])

result = pd.concat([df1, df4], axis=1, join_axes=[df1.index])

print(result)
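Note that the join_axes argument was removed from pd.concat in pandas 1.0. A minimal sketch of the same left-join-style result on a recent pandas version, assuming the same df1 and df4 as above, is to concatenate with the default outer join and then reindex to df1's row labels:

```python
import pandas as pd

df1 = pd.DataFrame({'A': ['A0', 'A1', 'A2', 'A3'],
                    'B': ['B0', 'B1', 'B2', 'B3'],
                    'C': ['C0', 'C1', 'C2', 'C3'],
                    'D': ['D0', 'D1', 'D2', 'D3']},
                   index=[0, 1, 2, 3])
df4 = pd.DataFrame({'B': ['B2', 'B3', 'B6', 'B7'],
                    'D': ['D2', 'D3', 'D6', 'D7'],
                    'F': ['F2', 'F3', 'F6', 'F7']},
                   index=[2, 3, 6, 7])

# Outer-concatenate along the columns, then keep only df1's row labels.
result = pd.concat([df1, df4], axis=1).reindex(df1.index)
print(result)
```

Rows 0 and 1 have no counterpart in df4, so their df4 columns are filled with NaN, just as in a left join.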

Concatenating using append

A useful shortcut to concat is the append instance method on Series and DataFrame. These methods actually predated concat. They concatenate along axis=0, namely the index, much like a SQL UNION ALL:

df1 = pd.DataFrame({'A': ['A0', 'A1', 'A2', 'A3'],

'B': ['B0', 'B1', 'B2', 'B3'],

'C': ['C0', 'C1', 'C2', 'C3'],

'D': ['D0', 'D1', 'D2', 'D3']},

index=[0, 1, 2, 3])

df2 = pd.DataFrame({'A': ['A4', 'A5', 'A6', 'A7'],

'B': ['B4', 'B5', 'B6', 'B7'],

'C': ['C4', 'C5', 'C6', 'C7'],

'D': ['D4', 'D5', 'D6', 'D7']},

index=[4, 5, 6, 7])

result = df1.append(df2)

print(result)
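DataFrame.append was deprecated in pandas 1.4 and removed in pandas 2.0, so the call above only runs on older versions. A sketch of the equivalent with pd.concat, using the same df1 and df2:

```python
import pandas as pd

df1 = pd.DataFrame({'A': ['A0', 'A1', 'A2', 'A3'],
                    'B': ['B0', 'B1', 'B2', 'B3'],
                    'C': ['C0', 'C1', 'C2', 'C3'],
                    'D': ['D0', 'D1', 'D2', 'D3']},
                   index=[0, 1, 2, 3])
df2 = pd.DataFrame({'A': ['A4', 'A5', 'A6', 'A7'],
                    'B': ['B4', 'B5', 'B6', 'B7'],
                    'C': ['C4', 'C5', 'C6', 'C7'],
                    'D': ['D4', 'D5', 'D6', 'D7']},
                   index=[4, 5, 6, 7])

# Same row-wise stacking that df1.append(df2) performed.
result = pd.concat([df1, df2])
print(result)
```

The other append calls in this section translate the same way: df1.append([df2, df3]) becomes pd.concat([df1, df2, df3]), and ignore_index=True can be passed to pd.concat unchanged.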

df1 = pd.DataFrame({'A': ['A0', 'A1', 'A2', 'A3'],

'B': ['B0', 'B1', 'B2', 'B3'],

'C': ['C0', 'C1', 'C2', 'C3'],

'D': ['D0', 'D1', 'D2', 'D3']},

index=[0, 1, 2, 3])

df4 = pd.DataFrame({'B': ['B2', 'B3', 'B6', 'B7'],

'D': ['D2', 'D3', 'D6', 'D7'],

'F': ['F2', 'F3', 'F6', 'F7']},

index=[2, 3, 6, 7])

result = df1.append(df4)

print(result)

df1 = pd.DataFrame({'A': ['A0', 'A1', 'A2', 'A3'],

'B': ['B0', 'B1', 'B2', 'B3'],

'C': ['C0', 'C1', 'C2', 'C3'],

'D': ['D0', 'D1', 'D2', 'D3']},

index=[0, 1, 2, 3])

df2 = pd.DataFrame({'A': ['A4', 'A5', 'A6', 'A7'],

'B': ['B4', 'B5', 'B6', 'B7'],

'C': ['C4', 'C5', 'C6', 'C7'],

'D': ['D4', 'D5', 'D6', 'D7']},

index=[4, 5, 6, 7])

df3 = pd.DataFrame({'A': ['A8', 'A9', 'A10', 'A11'],

'B': ['B8', 'B9', 'B10', 'B11'],

'C': ['C8', 'C9', 'C10', 'C11'],

'D': ['D8', 'D9', 'D10', 'D11']},

index=[8, 9, 10, 11])

df4 = pd.DataFrame({'B': ['B2', 'B3', 'B6', 'B7'],

'D': ['D2', 'D3', 'D6', 'D7'],

'F': ['F2', 'F3', 'F6', 'F7']},

index=[2, 3, 6, 7])

result = df1.append([df2,df3])

print(result)

Ignoring indexes on the concatenation axis

With ignore_index=True, the result gets a fresh 0..n-1 index, so no index labels are repeated:

result = pd.concat([df1, df4], ignore_index=True)
print(result)

DataFrame.append gives the same result:

result = df1.append(df4, ignore_index=True)
print(result)

Concatenating with mixed ndims

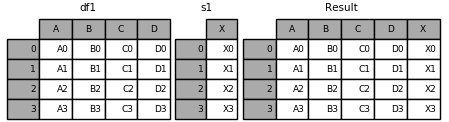

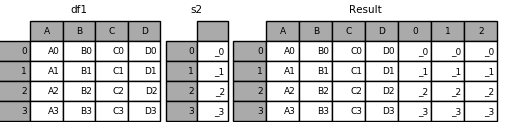

You can concatenate a mix of Series and DataFrames:

s1 = pd.Series(['X0', 'X1', 'X2', 'X3'], name='X')
result = pd.concat([df1, s1], axis=1)
print(result)

If the Series has no name, the new columns are numbered consecutively:

s2 = pd.Series(['_0', '_1', '_2', '_3'])
result = pd.concat([df1, s2, s2, s2], axis=1)
print(result)

With ignore_index=True, all column names are dropped:

result = pd.concat([df1, s1], axis=1, ignore_index=True)
print(result)

More concatenating with group keys

A fairly common use of the keys argument is to override the column names when creating a new DataFrame based on existing Series. Notice that, by default, the resulting DataFrame inherits the parent Series' name, when one exists.

s3 = pd.Series([0, 1, 2, 3], name='foo')
s4 = pd.Series([0, 1, 2, 3])
s5 = pd.Series([0, 1, 4, 5])
result = pd.concat([s3, s4, s5], axis=1)
print(result)
#    foo  0  1
# 0    0  0  0
# 1    1  1  1
# 2    2  2  4
# 3    3  3  5

The column names can be set explicitly through keys when concatenating:

result = pd.concat([s3, s4, s5], axis=1, keys=['red', 'blue', 'yellow'])
print(result)
#    red  blue  yellow
# 0    0     0       0
# 1    1     1       1
# 2    2     2       4
# 3    3     3       5

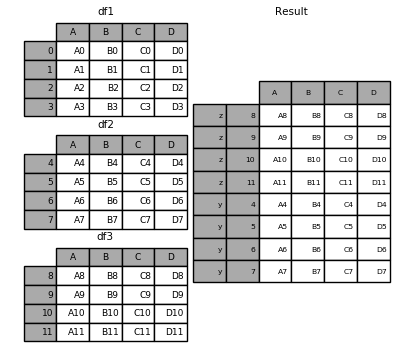

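frames = [df1, df2, df3]
result = pd.concat(frames, keys=['x', 'y', 'z'])
print(result)

The same result can be obtained by passing a dict, whose keys are then used as the keys argument:

pieces = {'x': df1, 'y': df2, 'z': df3}
result = pd.concat(pieces)
print(result)

If keys is passed as well, only the selected dict entries are concatenated, in the given order:

pieces = {'x': df1, 'y': df2, 'z': df3}
result = pd.concat(pieces, keys=['z', 'y'])
print(result)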

Database-style DataFrame joining/merging

pandas has full-featured, high performance in-memory join operations idiomatically very similar to relational databases like SQL. These methods perform significantly better (in some cases well over an order of magnitude better) than other open source implementations (like base::merge.data.frame in R). The reason for this is careful algorithmic design and internal layout of the data in DataFrame.

See the cookbook for some advanced strategies.

Users who are familiar with SQL but new to pandas might be interested in a comparison with SQL.

pandas provides a single function, merge, as the entry point for all standard database join operations between DataFrame objects:

pd.merge(left, right, how='inner', on=None, left_on=None, right_on=None, left_index=False, right_index=False, sort=True, suffixes=('_x', '_y'), copy=True, indicator=False, validate=None)

- left: A DataFrame object

- right: Another DataFrame object

- on: Columns (names) to join on. Must be found in both the left and right DataFrame objects. If not passed and left_index and right_index are False, the intersection of the columns in the DataFrames will be inferred to be the join keys

- left_on: Columns from the left DataFrame to use as keys. Can either be column names or arrays with length equal to the length of the DataFrame

- right_on: Columns from the right DataFrame to use as keys. Can either be column names or arrays with length equal to the length of the DataFrame

- left_index: If True, use the index (row labels) from the left DataFrame as its join key(s). In the case of a DataFrame with a MultiIndex (hierarchical), the number of levels must match the number of join keys from the right DataFrame

- right_index: Same usage as left_index for the right DataFrame

- how: One of 'left', 'right', 'outer', 'inner'. Defaults to inner. See below for more detailed description of each method

- sort: Sort the result DataFrame by the join keys in lexicographical order. Defaults to True, setting to False will improve performance substantially in many cases

- suffixes: A tuple of string suffixes to apply to overlapping columns. Defaults to ('_x', '_y').

- copy: Always copy data (default True) from the passed DataFrame objects, even when reindexing is not necessary. Cannot be avoided in many cases but may improve performance / memory usage. The cases where copying can be avoided are somewhat pathological but this option is provided nonetheless.

- indicator: Add a column to the output DataFrame called _merge with information on the source of each row. _merge is Categorical-type and takes on a value of left_only for observations whose merge key only appears in 'left' DataFrame, right_only for observations whose merge key only appears in 'right' DataFrame, and both if the observation's merge key is found in both. New in version 0.17.0.

- validate: string, default None. If specified, checks if merge is of specified type. New in version 0.21.0.

  - "one_to_one" or "1:1": checks if merge keys are unique in both left and right datasets.

  - "one_to_many" or "1:m": checks if merge keys are unique in left dataset.

  - "many_to_one" or "m:1": checks if merge keys are unique in right dataset.

  - "many_to_many" or "m:m": allowed, but does not result in checks.

The return type will be the same as left. If left is a DataFrame and right is a subclass of DataFrame, the return type will still be DataFrame.

merge is a function in the pandas namespace, and it is also available as a DataFrame instance method, with the calling DataFrame being implicitly considered the left object in the join.

The related DataFrame.join method uses merge internally for the index-on-index (by default) and column(s)-on-index join. If you are joining on index only, you may wish to use DataFrame.join to save yourself some typing.

Brief primer on merge methods (relational algebra)

Experienced users of relational databases like SQL will be familiar with the terminology used to describe join operations between two SQL-table like structures (DataFrame objects). There are several cases to consider which are very important to understand:

- one-to-one joins: for example when joining two DataFrame objects on their indexes (which must contain unique values)

- many-to-one joins: for example when joining an index (unique) to one or more columns in a DataFrame

- many-to-many joins: joining columns on columns.

Note

When joining columns on columns (potentially a many-to-many join), any indexes on the passed DataFrame objects will be discarded.

It is worth spending some time understanding the result of the many-to-many join case. In SQL / standard relational algebra, if a key combination appears more than once in both tables, the resulting table will have the Cartesian product of the associated data. Here is a very basic example with one unique key combination:

left = pd.DataFrame({'key': ['K0', 'K1', 'K2', 'K3'],

'A': ['A0', 'A1', 'A2', 'A3'],

'B': ['B0', 'B1', 'B2', 'B3']})

right = pd.DataFrame({'key': ['K0', 'K1', 'K2', 'K3'],

'C': ['C0', 'C1', 'C2', 'C3'],

'D': ['D0', 'D1', 'D2', 'D3']})

result = pd.merge(left, right, on='key')

print(result)
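The example above has unique keys on both sides, so every key matches exactly one row. To see the Cartesian-product behaviour of a many-to-many join, here is a small sketch with made-up frames (many_left and many_right are names chosen for this illustration) in which K0 appears twice on the left and three times on the right:

```python
import pandas as pd

many_left = pd.DataFrame({'key': ['K0', 'K0', 'K1'],
                          'A': ['A0', 'A1', 'A2']})
many_right = pd.DataFrame({'key': ['K0', 'K0', 'K0', 'K2'],
                           'C': ['C0', 'C1', 'C2', 'C3']})

# Inner join: K0 matches 2 x 3 = 6 row pairs; K1 and K2 have no partner.
product = pd.merge(many_left, many_right, on='key')
print(product)
print(len(product))
```

Each of the two left K0 rows is paired with each of the three right K0 rows, which is why result sizes can grow quickly when keys repeat on both sides.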

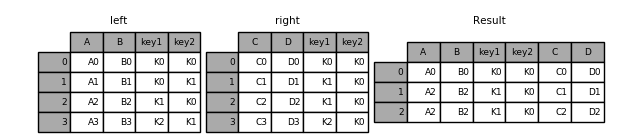

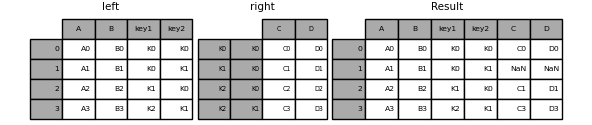

Here on is given two key columns:

left = pd.DataFrame({'key1': ['K0', 'K0', 'K1', 'K2'],

'key2': ['K0', 'K1', 'K0', 'K1'],

'A': ['A0', 'A1', 'A2', 'A3'],

'B': ['B0', 'B1', 'B2', 'B3']})

right = pd.DataFrame({'key1': ['K0', 'K1', 'K1', 'K2'],

'key2': ['K0', 'K0', 'K0', 'K0'],

'C': ['C0', 'C1', 'C2', 'C3'],

'D': ['D0', 'D1', 'D2', 'D3']})

result = pd.merge(left, right, on=['key1','key2'])

print(result)

The how argument to merge specifies how to determine which keys are to be included in the resulting table. If a key combination does not appear in either the left or right tables, the values in the joined table will be NA. Here is a summary of the how options and their SQL equivalent names:

| Merge method | SQL Join Name | Description |
|---|---|---|
| left | LEFT OUTER JOIN | Use keys from left frame only |
| right | RIGHT OUTER JOIN | Use keys from right frame only |
| outer | FULL OUTER JOIN | Use union of keys from both frames |
| inner | INNER JOIN | Use intersection of keys from both frames |

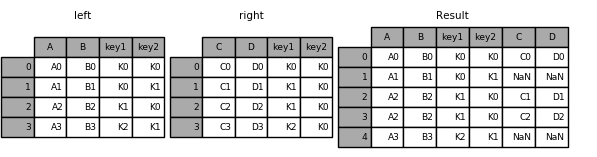

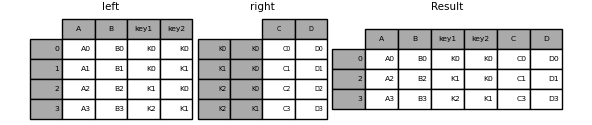

left = pd.DataFrame({'key1': ['K0', 'K0', 'K1', 'K2'],

'key2': ['K0', 'K1', 'K0', 'K1'],

'A': ['A0', 'A1', 'A2', 'A3'],

'B': ['B0', 'B1', 'B2', 'B3']})

right = pd.DataFrame({'key1': ['K0', 'K1', 'K1', 'K2'],

'key2': ['K0', 'K0', 'K0', 'K0'],

'C': ['C0', 'C1', 'C2', 'C3'],

'D': ['D0', 'D1', 'D2', 'D3']})

result = pd.merge(left, right, how='left', on=['key1', 'key2'])

print(result)

left = pd.DataFrame({'key1': ['K0', 'K0', 'K1', 'K2'],

'key2': ['K0', 'K1', 'K0', 'K1'],

'A': ['A0', 'A1', 'A2', 'A3'],

'B': ['B0', 'B1', 'B2', 'B3']})

right = pd.DataFrame({'key1': ['K0', 'K1', 'K1', 'K2'],

'key2': ['K0', 'K0', 'K0', 'K0'],

'C': ['C0', 'C1', 'C2', 'C3'],

'D': ['D0', 'D1', 'D2', 'D3']})

result = pd.merge(left, right, how='right', on=['key1', 'key2'])

print(result)

left = pd.DataFrame({'key1': ['K0', 'K0', 'K1', 'K2'],

'key2': ['K0', 'K1', 'K0', 'K1'],

'A': ['A0', 'A1', 'A2', 'A3'],

'B': ['B0', 'B1', 'B2', 'B3']})

right = pd.DataFrame({'key1': ['K0', 'K1', 'K1', 'K2'],

'key2': ['K0', 'K0', 'K0', 'K0'],

'C': ['C0', 'C1', 'C2', 'C3'],

'D': ['D0', 'D1', 'D2', 'D3']})

result = pd.merge(left, right, how='outer', on=['key1', 'key2'])

print(result)

left = pd.DataFrame({'key1': ['K0', 'K0', 'K1', 'K2'],

'key2': ['K0', 'K1', 'K0', 'K1'],

'A': ['A0', 'A1', 'A2', 'A3'],

'B': ['B0', 'B1', 'B2', 'B3']})

right = pd.DataFrame({'key1': ['K0', 'K1', 'K1', 'K2'],

'key2': ['K0', 'K0', 'K0', 'K0'],

'C': ['C0', 'C1', 'C2', 'C3'],

'D': ['D0', 'D1', 'D2', 'D3']})

result = pd.merge(left, right, how='inner', on=['key1', 'key2'])

print(result)

left = pd.DataFrame({'A' : [1,2], 'B' : [2, 2]})

right = pd.DataFrame({'A' : [4,5,6], 'B': [2,2,2]})

result = pd.merge(left, right, on='B', how='outer')

print(result)

Checking for duplicate keys

Users can use the validate argument to automatically check whether there are unexpected duplicates in their merge keys. Key uniqueness is checked before merge operations and so should protect against memory overflows. Checking key uniqueness is also a good way to ensure user data structures are as expected.

In the following example, there are duplicate values of B in the right DataFrame. As this is not a one-to-one merge – as specified in the validate argument – an exception will be raised.

left = pd.DataFrame({'A' : [1,2], 'B' : [1, 2]})

right = pd.DataFrame({'A' : [4,5,6], 'B': [2, 2, 2]})

result = pd.merge(left, right, on='B', how='outer', validate="one_to_one")

in _validate

raise MergeError("Merge keys are not unique in right dataset;"

pandas.errors.MergeError: Merge keys are not unique in right dataset; not a one-to-one merge

The relationship here is clearly one-to-many, so the same merge passes with validate="one_to_many":

left = pd.DataFrame({'A' : [1,2], 'B' : [1, 2]})

right = pd.DataFrame({'A' : [4,5,6], 'B': [2, 2, 2]})

result = pd.merge(left, right, on='B', how='outer', validate="one_to_many")

print(result)

A_x B A_y

0 1 1 NaN

1 2 2 4.0

2 2 2 5.0

3 2 2 6.0
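In a script, the validation failure can be handled rather than left to abort the run. The following is only a sketch: checked_merge is a hypothetical helper written for this illustration, while MergeError is the exception class shown in the traceback above.

```python
import pandas as pd
from pandas.errors import MergeError

left = pd.DataFrame({'A': [1, 2], 'B': [1, 2]})
right = pd.DataFrame({'A': [4, 5, 6], 'B': [2, 2, 2]})


def checked_merge(left, right, relationship):
    """Merge on 'B'; return None if the key relationship is violated."""
    try:
        return pd.merge(left, right, on='B', how='outer',
                        validate=relationship)
    except MergeError as exc:
        print(f"validation failed for {relationship!r}: {exc}")
        return None


one_to_one = checked_merge(left, right, 'one_to_one')    # fails: B repeats in right
one_to_many = checked_merge(left, right, 'one_to_many')  # passes: B is unique in left
print(one_to_many)
```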

The merge indicator

merge now accepts the argument indicator. If True, a Categorical-type column called _merge will be added to the output object that takes on values:

| Observation Origin | _merge value |
|---|---|
| Merge key only in 'left' frame | left_only |
| Merge key only in 'right' frame | right_only |
| Merge key in both frames | both |

SQL has no direct equivalent of this feature, which makes it quite handy.

df1 = pd.DataFrame({'col1': [0, 1], 'col_left':['a', 'b']})

df2 = pd.DataFrame({'col1': [1, 2, 2],'col_right':[2, 2, 2]})

result = pd.merge(df1, df2, on='col1', how='outer', indicator=True)

print(result)

col1 col_left col_right _merge

0 0 a NaN left_only

1 1 b 2.0 both

2 2 NaN 2.0 right_only

3 2 NaN 2.0 right_only

indicator also accepts a string, which is then used as the name of the indicator column:

df1 = pd.DataFrame({'col1': [0, 1], 'col_left':['a', 'b']})

df2 = pd.DataFrame({'col1': [1, 2, 2],'col_right':[2, 2, 2]})

result = pd.merge(df1, df2, on='col1', how='outer', indicator='indicator_column')

print(result)

col1 col_left col_right indicator_column

0 0 a NaN left_only

1 1 b 2.0 both

2 2 NaN 2.0 right_only

3 2 NaN 2.0 right_only
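One practical use of the indicator column is an "anti-join": keeping only the left rows that have no match on the right. A minimal sketch with the same df1 and df2 (the names merged and left_only are chosen for this example):

```python
import pandas as pd

df1 = pd.DataFrame({'col1': [0, 1], 'col_left': ['a', 'b']})
df2 = pd.DataFrame({'col1': [1, 2, 2], 'col_right': [2, 2, 2]})

# Left join with the indicator, then keep rows found only in df1.
merged = pd.merge(df1, df2, on='col1', how='left', indicator=True)
left_only = merged.loc[merged['_merge'] == 'left_only', df1.columns]
print(left_only)
```

Only the row with col1 == 0 survives, because 1 also appears in df2.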

Merge Dtypes

left = pd.DataFrame({'key': [1], 'v1': [10]})

right = pd.DataFrame({'key': [1, 2], 'v1': [20, 30]})

result = pd.merge(left, right, how='outer')

print(result)

print(pd.merge(left, right, how='outer').dtypes)

key v1

0 1 10

1 1 20

2 2 30

key int64

v1 int64

dtype: object
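The dtypes above are preserved because the merge introduces no missing values. When a merge does introduce NaN into an integer column, that column is upcast to float64. The sketch below uses slightly different, made-up frames (the right value column is renamed to v2 so that only key is a join key):

```python
import pandas as pd

left = pd.DataFrame({'key': [1], 'v1': [10]})
right = pd.DataFrame({'key': [1, 2], 'v2': [20, 30]})

# key == 2 has no row in left, so v1 gets NaN and becomes float64.
upcast = pd.merge(left, right, on='key', how='outer')
print(upcast)
print(upcast.dtypes)
```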

Joining on index

DataFrame.join is a convenient method for combining the columns of two potentially differently-indexed DataFrames into a single result DataFrame. Here is a very basic example:

left = pd.DataFrame({'A': ['A0', 'A1', 'A2'],

'B': ['B0', 'B1', 'B2']},

index=['K0', 'K1', 'K2'])

right = pd.DataFrame({'C': ['C0', 'C2', 'C3'],

'D': ['D0', 'D2', 'D3']},

index=['K0', 'K2', 'K3'])

result = left.join(right)

print(result)

A B C D

K0 A0 B0 C0 D0

K1 A1 B1 NaN NaN

K2 A2 B2 C2 D2

left = pd.DataFrame({'A': ['A0', 'A1', 'A2'],

'B': ['B0', 'B1', 'B2']},

index=['K0', 'K1', 'K2'])

right = pd.DataFrame({'C': ['C0', 'C2', 'C3'],

'D': ['D0', 'D2', 'D3']},

index=['K0', 'K2', 'K3'])

result = left.join(right, how='outer')

print(result)

A B C D

K0 A0 B0 C0 D0

K1 A1 B1 NaN NaN

K2 A2 B2 C2 D2

K3 NaN NaN C3 D3

left = pd.DataFrame({'A': ['A0', 'A1', 'A2'],

'B': ['B0', 'B1', 'B2']},

index=['K0', 'K1', 'K2'])

right = pd.DataFrame({'C': ['C0', 'C2', 'C3'],

'D': ['D0', 'D2', 'D3']},

index=['K0', 'K2', 'K3'])

result = left.join(right, how='inner')

print(result)

A B C D

K0 A0 B0 C0 D0

K2 A2 B2 C2 D2

The data alignment here is on the indexes (row labels). This same behavior can be achieved using merge plus additional arguments instructing it to use the indexes:

result = pd.merge(left, right, left_index=True, right_index=True, how='outer')

result = pd.merge(left, right, left_index=True, right_index=True, how='inner')
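The claimed equivalence between join and an index-on-index merge can be checked directly. This sketch rebuilds the left and right frames from this section and compares the two results with DataFrame.equals (the variable names are chosen for this example):

```python
import pandas as pd

left = pd.DataFrame({'A': ['A0', 'A1', 'A2'],
                     'B': ['B0', 'B1', 'B2']},
                    index=['K0', 'K1', 'K2'])
right = pd.DataFrame({'C': ['C0', 'C2', 'C3'],
                      'D': ['D0', 'D2', 'D3']},
                     index=['K0', 'K2', 'K3'])

# join defaults to a left join on the index.
via_join = left.join(right)
via_merge = pd.merge(left, right, left_index=True, right_index=True,
                     how='left')
same_left = via_join.equals(via_merge)

# The same comparison for an inner join.
same_inner = left.join(right, how='inner').equals(
    pd.merge(left, right, left_index=True, right_index=True, how='inner'))

print(same_left, same_inner)
```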

Joining key columns on an index

join takes an optional on argument which may be a column or multiple column names, which specifies that the passed DataFrame is to be aligned on that column in the DataFrame. These two function calls are completely equivalent:

left.join(right, on=key_or_keys)
pd.merge(left, right, left_on=key_or_keys, right_index=True, how='left', sort=False)

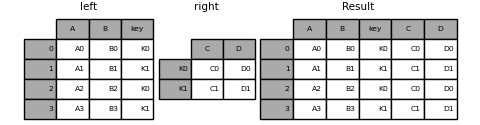

Obviously you can choose whichever form you find more convenient. For many-to-one joins (where one of the DataFrame’s is already indexed by the join key), using join may be more convenient. Here is a simple example:

left = pd.DataFrame({'A': ['A0', 'A1', 'A2', 'A3'],

'B': ['B0', 'B1', 'B2', 'B3'],

'key': ['K0', 'K1', 'K0', 'K1']})

right = pd.DataFrame({'C': ['C0', 'C1'],

'D': ['D0', 'D1']},

index=['K0', 'K1'])

result = left.join(right, on='key')

result = pd.merge(left, right, left_on='key', right_index=True, how='left', sort=False)

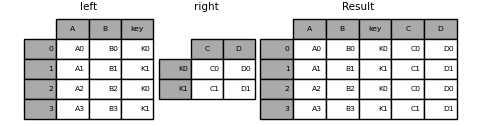

To join on multiple keys, the passed DataFrame must have a MultiIndex:

left = pd.DataFrame({'A': ['A0', 'A1', 'A2', 'A3'],

'B': ['B0', 'B1', 'B2', 'B3'],

'key1': ['K0', 'K0', 'K1', 'K2'],

'key2': ['K0', 'K1', 'K0', 'K1']})

index = pd.MultiIndex.from_tuples([('K0', 'K0'), ('K1', 'K0'),

('K2', 'K0'), ('K2', 'K1')])

right = pd.DataFrame({'C': ['C0', 'C1', 'C2', 'C3'],

'D': ['D0', 'D1', 'D2', 'D3']},

index=index)

Now this can be joined by passing the two key column names:

result = left.join(right, on=['key1', 'key2'])

The default for DataFrame.join is to perform a left join (essentially a “VLOOKUP” operation, for Excel users), which uses only the keys found in the calling DataFrame. Other join types, for example inner join, can be just as easily performed:

result = left.join(right, on=['key1', 'key2'], how='inner')

As you can see, this drops any rows where there was no match.

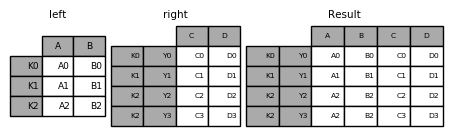

Joining a single Index to a Multi-index

A frame with a single index can be joined to a frame with a multi-level index:

left = pd.DataFrame({'A': ['A0', 'A1', 'A2'],

'B': ['B0', 'B1', 'B2']},

index=pd.Index(['K0', 'K1', 'K2'], name='key'))

index = pd.MultiIndex.from_tuples([('K0', 'Y0'), ('K1', 'Y1'),

('K2', 'Y2'), ('K2', 'Y3')],

names=['key', 'Y'])

right = pd.DataFrame({'C': ['C0', 'C1', 'C2', 'C3'],

'D': ['D0', 'D1', 'D2', 'D3']},

index=index)

result = left.join(right, how='inner')

This is equivalent but less verbose and more memory efficient / faster than this.

result = pd.merge(left.reset_index(), right.reset_index(), on=['key'], how='inner').set_index(['key','Y'])

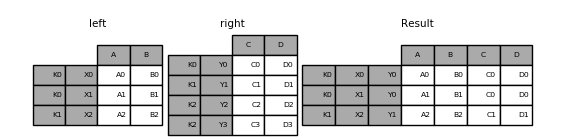

Joining with two multi-indexes

This is not currently possible with join, but it can be done with merge (right is the MultiIndex frame from the previous section):

index = pd.MultiIndex.from_tuples([('K0', 'X0'), ('K0', 'X1'), ('K1', 'X2')],
                                  names=['key', 'X'])
left = pd.DataFrame({'A': ['A0', 'A1', 'A2'],
                     'B': ['B0', 'B1', 'B2']},
                    index=index)
result = pd.merge(left.reset_index(), right.reset_index(),
                  on=['key'], how='inner').set_index(['key', 'X', 'Y'])

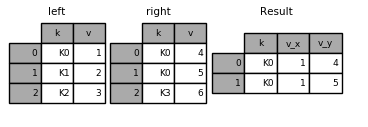

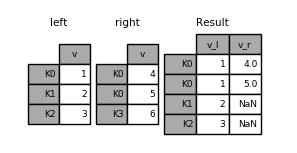

Overlapping value columns

The merge suffixes argument takes a tuple or list of strings to append to overlapping column names in the input DataFrames to disambiguate the result columns:

left = pd.DataFrame({'k': ['K0', 'K1', 'K2'], 'v': [1, 2, 3]})

right = pd.DataFrame({'k': ['K0', 'K0', 'K3'], 'v': [4, 5, 6]})

result = pd.merge(left, right, on='k')

print(result)

result = pd.merge(left, right, on='k', suffixes=['_l', '_r'])

left = pd.DataFrame({'k': ['K0', 'K1', 'K2'], 'v': [1, 2, 3]})

right = pd.DataFrame({'k': ['K0', 'K0', 'K3'], 'v': [4, 5, 6]})

left = left.set_index('k')

right = right.set_index('k')

result = left.join(right, lsuffix='_l', rsuffix='_r')

print(result)
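To make the effect of suffixes concrete, the sketch below rebuilds the two frames and prints only the resulting column names, first with the default suffixes and then with custom ones (default_cols and custom_cols are names chosen for this example):

```python
import pandas as pd

left = pd.DataFrame({'k': ['K0', 'K1', 'K2'], 'v': [1, 2, 3]})
right = pd.DataFrame({'k': ['K0', 'K0', 'K3'], 'v': [4, 5, 6]})

# Both frames have a non-key column named 'v', so it must be disambiguated.
default_cols = pd.merge(left, right, on='k').columns.tolist()
custom_cols = pd.merge(left, right, on='k',
                       suffixes=('_l', '_r')).columns.tolist()

print(default_cols)  # ['k', 'v_x', 'v_y']
print(custom_cols)   # ['k', 'v_l', 'v_r']
```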

Timeseries friendly merging

Merging Ordered Data

A merge_ordered() function allows combining time series and other ordered data. In particular it has an optional fill_method keyword to fill/interpolate missing data:

left = pd.DataFrame({'k': ['K0', 'K1', 'K1', 'K2'],

'lv': [1, 2, 3, 4],

's': ['a', 'b', 'c', 'd']})

right = pd.DataFrame({'k': ['K1', 'K2', 'K4'],

'rv': [1, 2, 3]})

result = pd.merge_ordered(left, right, fill_method='ffill', left_by='s')

print(left)

print(right)

print(result)

k lv s

0 K0 1 a

1 K1 2 b

2 K1 3 c

3 K2 4 d

k rv

0 K1 1

1 K2 2

2 K4 3

k lv s rv

0 K0 1.0 a NaN

1 K1 1.0 a 1.0

2 K2 1.0 a 2.0

3 K4 1.0 a 3.0

4 K1 2.0 b 1.0

5 K2 2.0 b 2.0

6 K4 2.0 b 3.0

7 K1 3.0 c 1.0

8 K2 3.0 c 2.0

9 K4 3.0 c 3.0

10 K1 NaN d 1.0

11 K2 4.0 d 2.0

12 K4 4.0 d 3.0
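The left_by grouping makes the output above fairly dense. A simpler sketch, with made-up frames and without left_by, isolates what fill_method='ffill' does: the ordered outer merge leaves gaps where a key exists on only one side, and the forward fill carries the last seen value into them.

```python
import pandas as pd

left = pd.DataFrame({'k': ['K0', 'K1', 'K2'], 'lv': [1, 2, 3]})
right = pd.DataFrame({'k': ['K1', 'K3'], 'rv': [10, 20]})

# Ordered outer merge without filling: gaps stay as NaN.
plain = pd.merge_ordered(left, right, on='k')
print(plain)

# Same merge, forward-filling the gaps down each column.
filled = pd.merge_ordered(left, right, on='k', fill_method='ffill')
print(filled)
```

The first rv value stays NaN even after filling, because there is no earlier value to carry forward.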

Merging AsOf

merge_asof() is similar to an ordered left join, except that it matches on the nearest key rather than on equal keys. For each row in the left DataFrame, it selects the last row in the right DataFrame whose on key is less than or equal to the left's key (exact matches can be excluded with allow_exact_matches=False). Both DataFrames must be sorted by the key.

Optionally an asof merge can perform a group-wise merge. This matches the by key equally, in addition to the nearest match on the on key.

For example, we might have trades and quotes and want to asof merge them.

trades = pd.DataFrame({
    'time': pd.to_datetime(['20160525 13:30:00.023',
                            '20160525 13:30:00.038',
                            '20160525 13:30:00.048',
                            '20160525 13:30:00.048',
                            '20160525 13:30:00.048']),
    'ticker': ['MSFT', 'MSFT', 'GOOG', 'GOOG', 'AAPL'],
    'price': [51.95, 51.95, 720.77, 720.92, 98.00],
    'quantity': [75, 155, 100, 100, 100]},
    columns=['time', 'ticker', 'price', 'quantity'])

quotes = pd.DataFrame({
    'time': pd.to_datetime(['20160525 13:30:00.023',
                            '20160525 13:30:00.023',
                            '20160525 13:30:00.030',
                            '20160525 13:30:00.041',
                            '20160525 13:30:00.048',
                            '20160525 13:30:00.049',
                            '20160525 13:30:00.072',
                            '20160525 13:30:00.075']),
    'ticker': ['GOOG', 'MSFT', 'MSFT', 'MSFT', 'GOOG', 'AAPL', 'GOOG', 'MSFT'],
    'bid': [720.50, 51.95, 51.97, 51.99, 720.50, 97.99, 720.50, 52.01],
    'ask': [720.93, 51.96, 51.98, 52.00, 720.93, 98.01, 720.88, 52.03]},
    columns=['time', 'ticker', 'bid', 'ask'])

print(trades)
print(quotes)

result = pd.merge_asof(trades, quotes, on='time', by='ticker')
print(result)

                     time ticker   price  quantity
0 2016-05-25 13:30:00.023   MSFT   51.95        75
1 2016-05-25 13:30:00.038   MSFT   51.95       155
2 2016-05-25 13:30:00.048   GOOG  720.77       100
3 2016-05-25 13:30:00.048   GOOG  720.92       100
4 2016-05-25 13:30:00.048   AAPL   98.00       100

                     time ticker     bid     ask
0 2016-05-25 13:30:00.023   GOOG  720.50  720.93
1 2016-05-25 13:30:00.023   MSFT   51.95   51.96
2 2016-05-25 13:30:00.030   MSFT   51.97   51.98
3 2016-05-25 13:30:00.041   MSFT   51.99   52.00
4 2016-05-25 13:30:00.048   GOOG  720.50  720.93
5 2016-05-25 13:30:00.049   AAPL   97.99   98.01
6 2016-05-25 13:30:00.072   GOOG  720.50  720.88
7 2016-05-25 13:30:00.075   MSFT   52.01   52.03

                     time ticker   price  quantity     bid     ask
0 2016-05-25 13:30:00.023   MSFT   51.95        75   51.95   51.96
1 2016-05-25 13:30:00.038   MSFT   51.95       155   51.97   51.98
2 2016-05-25 13:30:00.048   GOOG  720.77       100  720.50  720.93
3 2016-05-25 13:30:00.048   GOOG  720.92       100  720.50  720.93
4 2016-05-25 13:30:00.048   AAPL   98.00       100     NaN     NaN

result = pd.merge_asof(trades, quotes, on='time', by='ticker',
                       tolerance=pd.Timedelta('2ms'))
print(result)

                     time ticker   price  quantity     bid     ask
0 2016-05-25 13:30:00.023   MSFT   51.95        75   51.95   51.96
1 2016-05-25 13:30:00.038   MSFT   51.95       155     NaN     NaN
2 2016-05-25 13:30:00.048   GOOG  720.77       100  720.50  720.93
3 2016-05-25 13:30:00.048   GOOG  720.92       100  720.50  720.93
4 2016-05-25 13:30:00.048   AAPL   98.00       100     NaN     NaN

result = pd.merge_asof(trades, quotes, on='time', by='ticker',
                       tolerance=pd.Timedelta('10ms'),
                       allow_exact_matches=False)
print(result)

                     time ticker   price  quantity    bid    ask
0 2016-05-25 13:30:00.023   MSFT   51.95        75    NaN    NaN
1 2016-05-25 13:30:00.038   MSFT   51.95       155  51.97  51.98
2 2016-05-25 13:30:00.048   GOOG  720.77       100    NaN    NaN
3 2016-05-25 13:30:00.048   GOOG  720.92       100    NaN    NaN
4 2016-05-25 13:30:00.048   AAPL   98.00       100    NaN    NaN
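All of the examples above search backward in time. merge_asof also takes a direction argument ('backward', 'forward' or 'nearest'). The sketch below uses small integer keys instead of timestamps so the matching is easy to follow; the frames and variable names are made up for this illustration.

```python
import pandas as pd

left = pd.DataFrame({'a': [1, 5, 10], 'left_val': ['a', 'b', 'c']})
right = pd.DataFrame({'a': [1, 2, 3, 6, 7], 'right_val': [1, 2, 3, 6, 7]})

# Default: last right key <= left key.
backward = pd.merge_asof(left, right, on='a')

# First right key >= left key; nothing lies at or after 10, so NaN.
forward = pd.merge_asof(left, right, on='a', direction='forward')

# Closest right key in either direction.
nearest = pd.merge_asof(left, right, on='a', direction='nearest')

print(backward)
print(forward)
print(nearest)
```

For the left key 5, the backward search picks 3, the forward search picks 6, and the nearest search also picks 6 because it is one step away rather than two.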
