Logstash + Kibana Deployment and Configuration

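Logstash is a tool for receiving, processing, and forwarding logs. It supports system logs, web server logs, error logs, and application logs; in short, any type of log that can be emitted.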

A typical use case (the ELK stack):

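Elasticsearch serves as the backend data store and Kibana handles front-end reporting and visualization. Logstash acts as the mover in between: it builds a powerful pipeline that connects log parsing, data storage, and report querying. Logstash provides a wide range of input, filter, codec, and output plugins, which make it easy to assemble powerful processing flows.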
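As a rough mental model, an event flows through three stages: input, then filter, then output. This is not how Logstash is implemented internally. The Python sketch below chains those stages as generators. All function names are hypothetical. `split_filter` merely stands in for a real filter such as grok, and `list_output` stands in for an output such as elasticsearch or stdout.

```python
from datetime import datetime, timezone

def stdin_input(lines):
    """Input stage: wrap each raw line in an event dict, roughly like the stdin input."""
    for line in lines:
        yield {
            "@timestamp": datetime.now(timezone.utc).isoformat(),
            "@version": "1",
            "message": line,
        }

def split_filter(events):
    """Filter stage (hypothetical): split the message on whitespace into a list of fields."""
    for event in events:
        event["fields"] = event["message"].split()
        yield event

def list_output(events):
    """Output stage: just collect the events; Logstash would ship them to Elasticsearch, stdout, etc."""
    return list(events)

events = list_output(split_filter(stdin_input(["2017.12.26 admin 172.16.81.82 200"])))
print(events[0]["fields"])  # ['2017.12.26', 'admin', '172.16.81.82', '200']
```

Because each stage only consumes and yields events, any stage can be swapped without touching the others. This is the property that lets Logstash mix and match plugins.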

The official website is the best place to learn Logstash. Here are some useful resources:

1. Official ELK documentation

https://www.elastic.co/guide/en/logstash/5.1/plugins-outputs-stdout.html

2. Grok pattern reference

http://grokdebug.herokuapp.com/patterns#

3. Online Grok debugger

http://grokdebug.herokuapp.com/

4. Online Grok debugger hosted in China

http://grok.qiexun.net/

Now, on to the main topic.

Logstash deployment and configuration

1. Base environment (Java)

yum -y install java-1.8*

java -version

2. Download and extract Logstash

logstash-5.0.1.tar.gz

tar -zxvf logstash-5.0.1.tar.gz

cd logstash-5.0.1

mkdir conf  # create a conf directory to hold the configuration files

cd conf

3. Configuration files

Create a test configuration (tested against the ES cluster built in the previous posts):

    [root@logstash1 conf]# cat test.conf
    input {
      stdin {
      }
    }
    output {
      elasticsearch {
        hosts => ["172.16.81.133:9200","172.16.81.134:9200"]
        index => "test-%{+YYYY.MM.dd}"
      }
      stdout {
        codec => rubydebug
      }
    }

Check the configuration file syntax:

[root@logstash1 conf]# /opt/logstash-5.0.1/bin/logstash -f /opt/logstash-5.0.1/conf/test.conf --config.test_and_exit

Sending Logstash's logs to /opt/logstash-5.0.1/logs which is now configured via log4j2.properties

Configuration OK

[2017-12-26T11:42:12,816][INFO ][logstash.runner ] Using config.test_and_exit mode. Config Validation Result: OK. Exiting Logstash

Run Logstash with this configuration:

/opt/logstash-5.0.1/bin/logstash -f /opt/logstash-5.0.1/conf/test.conf

Type a line of input manually:

2017.12.26 admin 172.16.81.82 200

Result:

    {
        "@timestamp" => 2017-12-26T03:45:48.926Z,
        "@version" => "1",
        "host" => "0.0.0.0",
        "message" => "2017.12.26 admin 172.16.81.82 200",
        "tags" => []
    }
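The index name `test-%{+YYYY.MM.dd}` takes its date from the event's `@timestamp`, which Logstash stores in UTC. On a server in UTC+8, events logged before 08:00 local time therefore land in the previous day's index. The Python sketch below approximates that substitution. `daily_index` is a hypothetical helper, not a Logstash API.

```python
from datetime import datetime, timedelta, timezone

def daily_index(prefix, timestamp):
    """Approximate what "prefix-%{+YYYY.MM.dd}" resolves to for a given @timestamp."""
    # Logstash formats the date from @timestamp, which is kept in UTC
    utc = timestamp.astimezone(timezone.utc)
    return "{}-{:%Y.%m.%d}".format(prefix, utc)

# @timestamp from the rubydebug output above
ts = datetime(2017, 12, 26, 3, 45, 48, 926000, tzinfo=timezone.utc)
print(daily_index("test", ts))  # test-2017.12.26

# 07:30 local time in UTC+8 is still 23:30 on the previous day in UTC
cst = timezone(timedelta(hours=8))
print(daily_index("test", datetime(2017, 12, 26, 7, 30, tzinfo=cst)))  # test-2017.12.25
```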

Logstash configuration for the Kafka cluster

Client side: Logstash ships the logs to Kafka

Client-side Logstash configuration file:

    [root@www conf]# cat nginx_kafka.conf
    input {
      file {
        type => "access.log"
        path => "/var/log/nginx/imlogin.log"
        start_position => "beginning"
      }
    }
    output {
      kafka {
        bootstrap_servers => "172.16.81.131:9092,172.16.81.132:9092"
        topic_id => 'summer'
      }
    }
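`start_position => "beginning"` only takes effect the first time the file input sees a file. After that, Logstash resumes from the read position recorded in its sincedb, so restarting it does not re-send old lines. Below is a simplified Python model of that behaviour using a hypothetical helper, `read_new_lines`. For simplicity it keys positions by path, whereas the real sincedb identifies files by inode and device. A temporary file stands in for `/var/log/nginx/imlogin.log`.

```python
import os
import tempfile

def read_new_lines(path, sincedb, start_position="end"):
    """Hypothetical model of the file input: return complete lines not read yet.

    sincedb maps path -> byte offset already consumed.
    """
    if path not in sincedb:
        # start_position only matters for a file with no recorded position yet
        sincedb[path] = 0 if start_position == "beginning" else os.path.getsize(path)
    with open(path, "rb") as f:
        f.seek(sincedb[path])
        data = f.read()
    # Consume only complete lines; a partial last line is left for the next call
    complete, newline, _rest = data.rpartition(b"\n")
    if not newline:
        return []
    sincedb[path] += len(complete) + 1
    return complete.decode("utf-8").split("\n")

with tempfile.NamedTemporaryFile("w", suffix=".log", delete=False) as tmp:
    tmp.write("line 1\nline 2\n")
    log_path = tmp.name

db = {}
first = read_new_lines(log_path, db, start_position="beginning")
with open(log_path, "a") as f:
    f.write("line 3\npartial")
second = read_new_lines(log_path, db, start_position="beginning")
skipped = read_new_lines(log_path, {})  # default "end": a fresh reader skips existing content
os.remove(log_path)
print(first, second, skipped)  # ['line 1', 'line 2'] ['line 3'] []
```

On the second call, `start_position` is ignored because the file already has an entry in `db`. This mirrors why changing `start_position` has no effect on files Logstash has already read.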

Server side: Logstash consumes from Kafka, then filters and splits the logs

    [root@logstash1 conf]# cat kafka.conf
    input {
      kafka {
        bootstrap_servers => "172.16.81.131:9092,172.16.81.132:9092"
        group_id => "logstash"
        topics => ["summer"]
        consumer_threads => 50
        decorate_events => true
      }
    }

    filter {
      grok {
        match => {
          "message" => "%{NOTSPACE:accessip} \- \- \[%{HTTPDATE:time}\] %{NOTSPACE:auth} %{NOTSPACE:uri_stem} %{NOTSPACE:agent} %{WORD:status} %{NUMBER:bytes} %{NOTSPACE:request_url} %{NOTSPACE:browser} %{NOTSPACE:system} %{NOTSPACE:system_type} %{NOTSPACE:tag} %{NOTSPACE:system}"
        }
      }
      date {
        # parse the HTTPDATE value captured into "time" and use it as @timestamp
        match => [ "time", "dd/MMM/yyyy:HH:mm:ss Z" ]
      }
    }

    output {
      elasticsearch {
        hosts => ["172.16.81.133:9200","172.16.81.134:9200"]
        index => "logstash-%{+YYYY.MM.dd}"
      }
    }
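The date filter has to be pointed at the field that actually holds the timestamp. Here that is `time`, captured by `%{HTTPDATE:time}` in the form `dd/MMM/yyyy:HH:mm:ss Z`. To see what grok and date do to a single line, here is a rough Python approximation. Only the first four fields of the grok pattern are translated, and HTTPDATE is approximated as "everything up to `]`". The sample line is made up, because the real `imlogin.log` format is not shown. The tags mirror the `_grokparsefailure` and `_dateparsefailure` tags that Logstash adds on failure.

```python
import re
from datetime import datetime, timezone

# Simplified equivalent of the start of the grok pattern: NOTSPACE -> \S+,
# HTTPDATE approximated as "anything up to the closing bracket"
LINE_RE = re.compile(
    r"(?P<accessip>\S+) - - \[(?P<time>[^\]]+)\] (?P<auth>\S+) (?P<uri_stem>\S+)"
)

def parse_line(line):
    m = LINE_RE.match(line)
    if m is None:
        return {"message": line, "tags": ["_grokparsefailure"]}
    event = {"message": line, **m.groupdict()}
    # Equivalent of date { match => [ "time", "dd/MMM/yyyy:HH:mm:ss Z" ] }
    try:
        ts = datetime.strptime(event["time"], "%d/%b/%Y:%H:%M:%S %z")
    except ValueError:
        event["tags"] = ["_dateparsefailure"]
        return event
    # @timestamp is stored in UTC
    event["@timestamp"] = ts.astimezone(timezone.utc).isoformat()
    return event

# Hypothetical sample line
event = parse_line("172.16.81.82 - - [29/Dec/2017:11:02:03 +0800] GET /imlogin.html")
print(event["@timestamp"])  # 2017-12-29T03:02:03+00:00
```

Feeding an IP address such as `accessip` into the date step would always take the `_dateparsefailure` branch.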

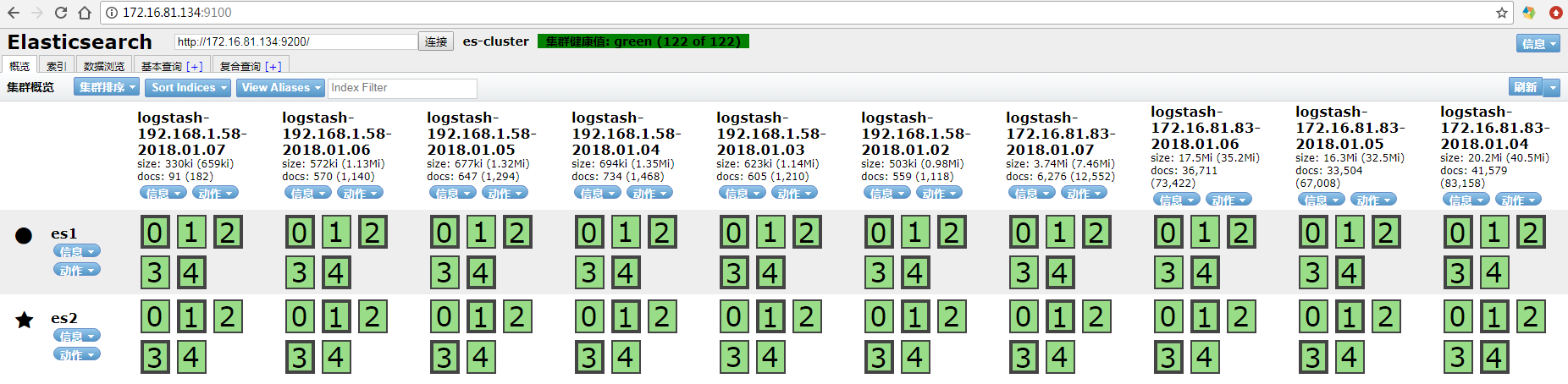

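Then check on the ES cluster that the messages are being consumed and indexed. The indices below carry an hour suffix (for example `.03`), so this output appears to come from a run that used an hourly index pattern rather than the daily `logstash-%{+YYYY.MM.dd}` shown above.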

    [root@es1 ~]# curl -XGET '172.16.81.134:9200/_cat/indices?v&pretty'
    health status index                  uuid                   pri rep docs.count docs.deleted store.size pri.store.size
    green  open   logstash-2017.12.29.03 waZfJChvSY2vcREQgyW7zA   5   1    1080175            0    622.2mb        311.1mb
    green  open   logstash-2017.12.29.06 Zm5Jcb3DSK2Ws3D2rYdp2g   5   1        183            0    744.2kb        372.1kb
    green  open   logstash-2017.12.29.04 NFimjo_sSnekHVoISp2DQg   5   1       1530            0      2.7mb          1.3mb
    green  open   .kibana                YN93vVWQTESA-cZycYHI6g   1   1          2            0     22.9kb         11.4kb
    green  open   logstash-2017.12.29.05 kPQAlVkGQL-izw8tt2FRaQ   5   1       1289            0        2mb            1mb
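If you check this from a script, note that the `_cat` APIs also accept `format=json`, which is usually easier to consume. The Python sketch below parses the plain `?v` text instead, using the output shown above. It works here because no column contains spaces. Rows with empty cells, such as closed indices, would break a whitespace split. `parse_cat_indices` is a hypothetical helper.

```python
CAT_INDICES = """\
health status index                  uuid                   pri rep docs.count docs.deleted store.size pri.store.size
green  open   logstash-2017.12.29.03 waZfJChvSY2vcREQgyW7zA   5   1    1080175            0    622.2mb        311.1mb
green  open   logstash-2017.12.29.06 Zm5Jcb3DSK2Ws3D2rYdp2g   5   1        183            0    744.2kb        372.1kb
green  open   logstash-2017.12.29.04 NFimjo_sSnekHVoISp2DQg   5   1       1530            0      2.7mb          1.3mb
green  open   .kibana                YN93vVWQTESA-cZycYHI6g   1   1          2            0     22.9kb         11.4kb
green  open   logstash-2017.12.29.05 kPQAlVkGQL-izw8tt2FRaQ   5   1       1289            0        2mb            1mb
"""

def parse_cat_indices(text):
    """Turn `_cat/indices?v` text into one dict per index, keyed by the header columns."""
    rows = [line.split() for line in text.splitlines() if line.strip()]
    header, body = rows[0], rows[1:]
    return [dict(zip(header, row)) for row in body]

indices = parse_cat_indices(CAT_INDICES)
logstash = [i for i in indices if i["index"].startswith("logstash-")]
total_docs = sum(int(i["docs.count"]) for i in logstash)
all_green = all(i["health"] == "green" for i in indices)
print(len(logstash), total_docs, all_green)  # 4 1083177 True
```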

Use the ES cluster's head plugin alongside this to watch the logs being indexed!

4. Installing and deploying Kibana

Download the RPM package:

kibana-5.0.1-x86_64.rpm

Install Kibana:

rpm -ivh kibana-5.0.1-x86_64.rpm

Configuration file:

[root@es1 opt]# cat /etc/kibana/kibana.yml | grep -v "^#" | grep -v "^$"

server.port: 5601

server.host: "172.16.81.133"

elasticsearch.url: "http://172.16.81.133:9200"
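`server.host` is the address the Kibana server binds to, and the browser URL later in this post comes from `server.host` and `server.port`. The Python sketch below mimics the `grep -v "^#" | grep -v "^$"` pipeline above and derives that URL. It is only a flat `key: value` reader, not a YAML parser; nested keys or lists would need a real YAML library. The comment line in `KIBANA_YML` is a hypothetical placeholder for the commented-out defaults in the shipped file.

```python
KIBANA_YML = """\
# hypothetical comment line, dropped by grep -v "^#"
server.port: 5601

server.host: "172.16.81.133"
elasticsearch.url: "http://172.16.81.133:9200"
"""

def effective_settings(text):
    """Mimic grep -v "^#" | grep -v "^$" and split each remaining line at its first colon."""
    settings = {}
    for line in text.splitlines():
        if line.startswith("#") or line == "":
            continue  # the two grep -v filters drop these lines
        key, _, value = line.partition(":")
        settings[key.strip()] = value.strip().strip('"')
    return settings

conf = effective_settings(KIBANA_YML)
url = "http://{}:{}/".format(conf["server.host"], conf["server.port"])
print(url)  # http://172.16.81.133:5601/
```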

Start Kibana and enable it at boot:

systemctl start kibana

systemctl enable kibana

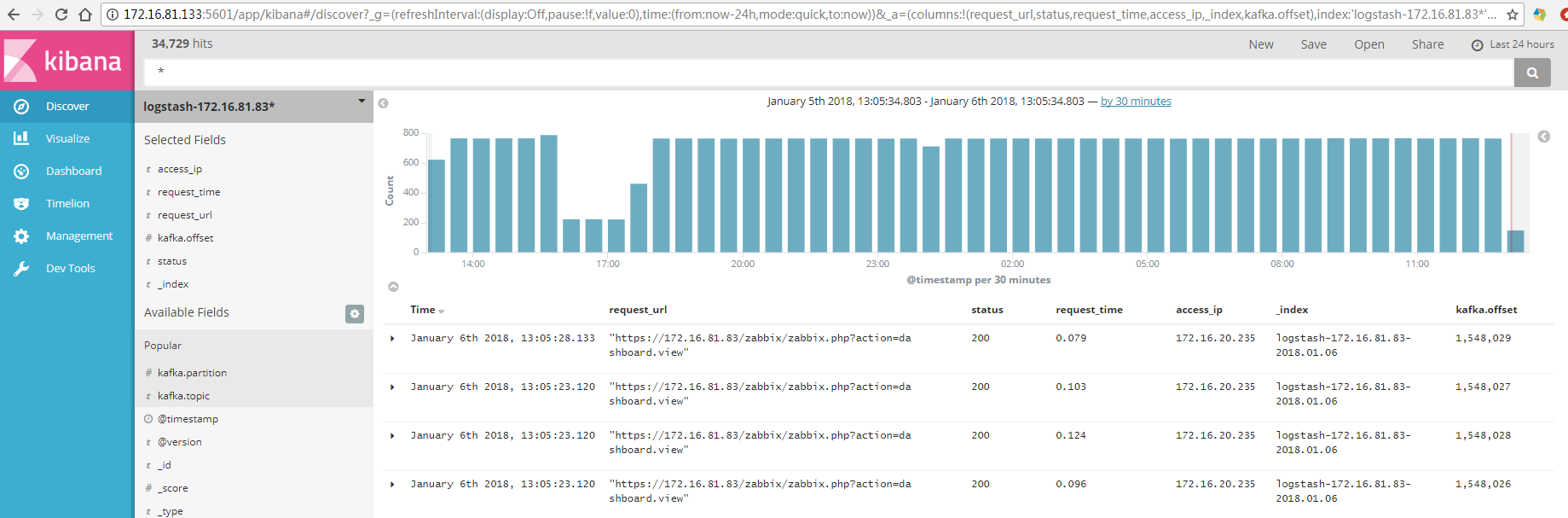

Open it in a browser:

http://172.16.81.133:5601/

The data is displayed as expected!

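If you find any problems, please point them out! Many of the IP addresses here come from the earlier posts in this series; check those if you are unsure what they refer to.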

Zhejiang Public Security Bureau network filing No. 33010602011771
