Setting Up a Hadoop Source Build Environment

Tools to prepare:

Maven 3.0.0 or later (with the central repository configured)

zlib: http://www.zlib.net/

git bash (on Windows, this tool can be used to run the build commands)
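Before starting, it can help to confirm that the tools are actually on the PATH. The following is a minimal sketch, not part of the original setup: `missing_tools` is a hypothetical helper name, and checking for `java` is an assumption based on Maven needing a JDK.

```shell
# Print the names of the given commands that are not found on PATH.
missing_tools() {
  missing=""
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      missing="$missing $tool"
    fi
  done
  # Drop the leading space before printing.
  echo "${missing# }"
}

# mvn and git come from the tool list; java is an assumption (Maven needs a JDK).
result=$(missing_tools mvn git java)
if [ -n "$result" ]; then
  echo "missing: $result"
else
  echo "all tools found"
fi
```

Running this inside git bash on Windows checks the same PATH that the later `mvn` commands will use.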

Download the source:

http://hadoop.apache.org/releases.html

http://mirror.bit.edu.cn/apache/hadoop/common/

The current stable release is hadoop 2.9.2
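As a rough sketch of the download step, the tarball URL can be assembled from the mirror address and the version. The directory layout (`hadoop-<version>/hadoop-<version>-src.tar.gz`) is an assumption based on the usual Apache mirror layout, `src_tarball_url` is a hypothetical helper, and a mirror may no longer carry older releases.

```shell
# Base URL taken from the mirror listed above.
MIRROR="http://mirror.bit.edu.cn/apache/hadoop/common"

# Print the source tarball URL for a version.
# The path layout below is an assumption, not confirmed by the post.
src_tarball_url() {
  version="$1"
  echo "${MIRROR}/hadoop-${version}/hadoop-${version}-src.tar.gz"
}

src_tarball_url 2.9.2

# To fetch and unpack (not executed here):
#   curl -O "$(src_tarball_url 2.9.2)"
#   tar -xzf hadoop-2.9.2-src.tar.gz
```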

Build the source:

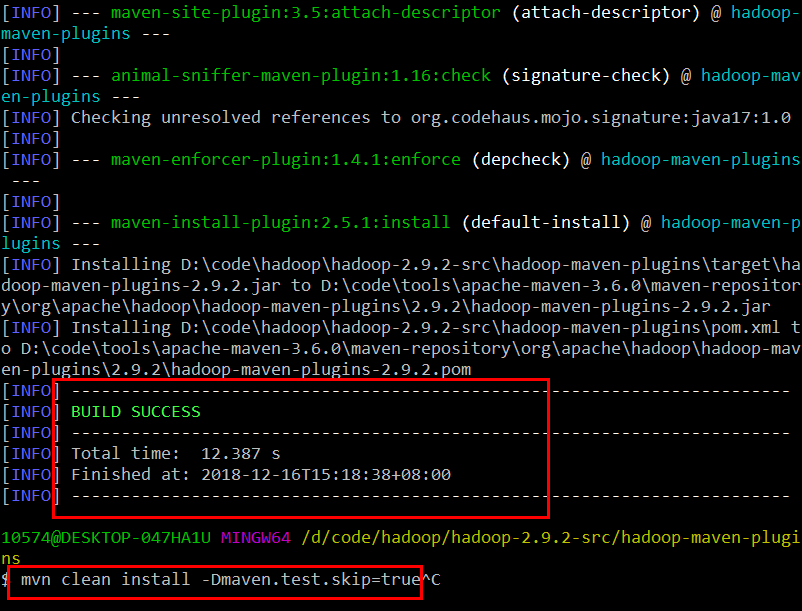

Run the Maven commands in the hadoop-maven-plugins directory

mvn clean package -Pdist -DskipTests

mvn clean install -DskipTests

mvn clean install -Dmaven.test.skip=true

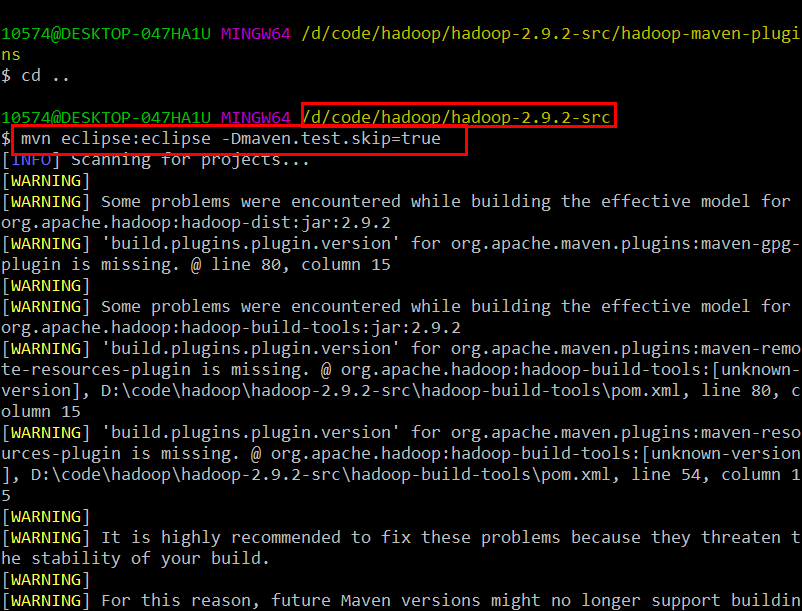

Run the following in the hadoop-2.9.2-src directory

mvn eclipse:eclipse -DskipTests

or

mvn eclipse:eclipse -Dmaven.test.skip=true
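The two stages above (install hadoop-maven-plugins, then generate the Eclipse project files from the source root) can be sketched as one script. This is only an illustration: `run` and `build_steps` are hypothetical helper names, the script picks the `install -DskipTests` variant from the commands listed, and it uses Maven's `-f` option to point at each `pom.xml` instead of changing directories. It defaults to a dry run that only prints the commands.

```shell
# Print each command; execute it only when DRY_RUN is set to something other than 1.
run() {
  echo "+ $*"
  if [ "${DRY_RUN:-1}" != "1" ]; then
    "$@"
  fi
}

# $1: path to the unpacked source tree (for example hadoop-2.9.2-src)
build_steps() {
  src_root="$1"
  # Stage 1: install the Hadoop Maven plugins into the local repository.
  run mvn -f "$src_root/hadoop-maven-plugins/pom.xml" clean install -DskipTests
  # Stage 2: generate Eclipse project files for the whole source tree.
  run mvn -f "$src_root/pom.xml" eclipse:eclipse -DskipTests
}

build_steps hadoop-2.9.2-src
```

Setting `DRY_RUN=0` before calling `build_steps` runs the commands for real. Note that `-DskipTests` still compiles the test classes, while `-Dmaven.test.skip=true` skips compiling them as well.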

However, near the end of the build, it failed with errors in red:

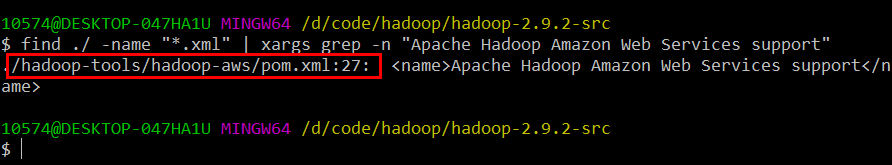

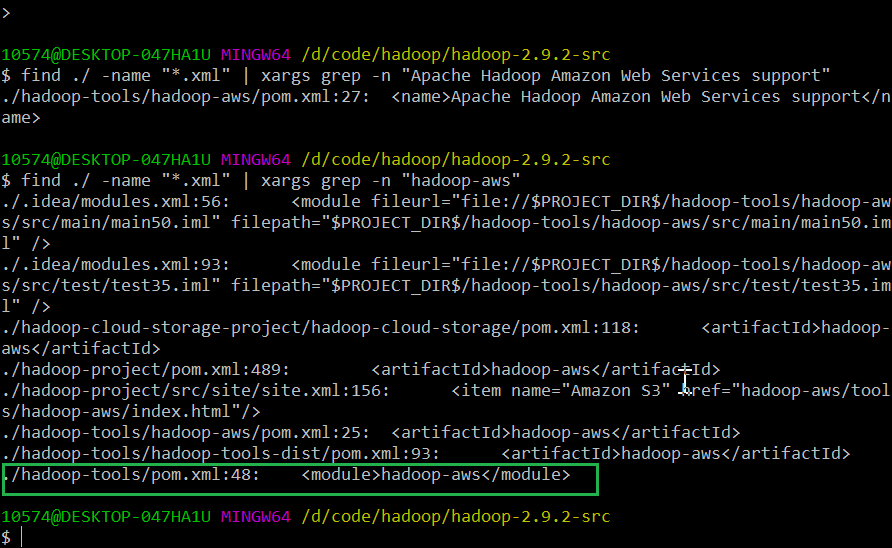

The failure was traced as follows:

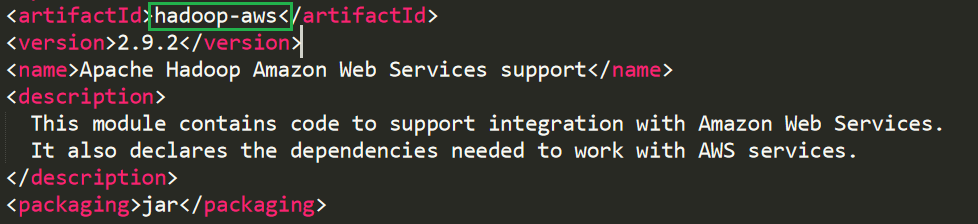

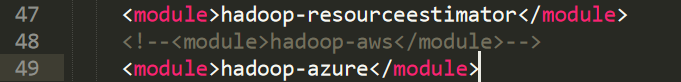

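It turns out the hadoop-aws project is the one reporting errors. Try commenting out that project (doing so does not affect reading the Hadoop source):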

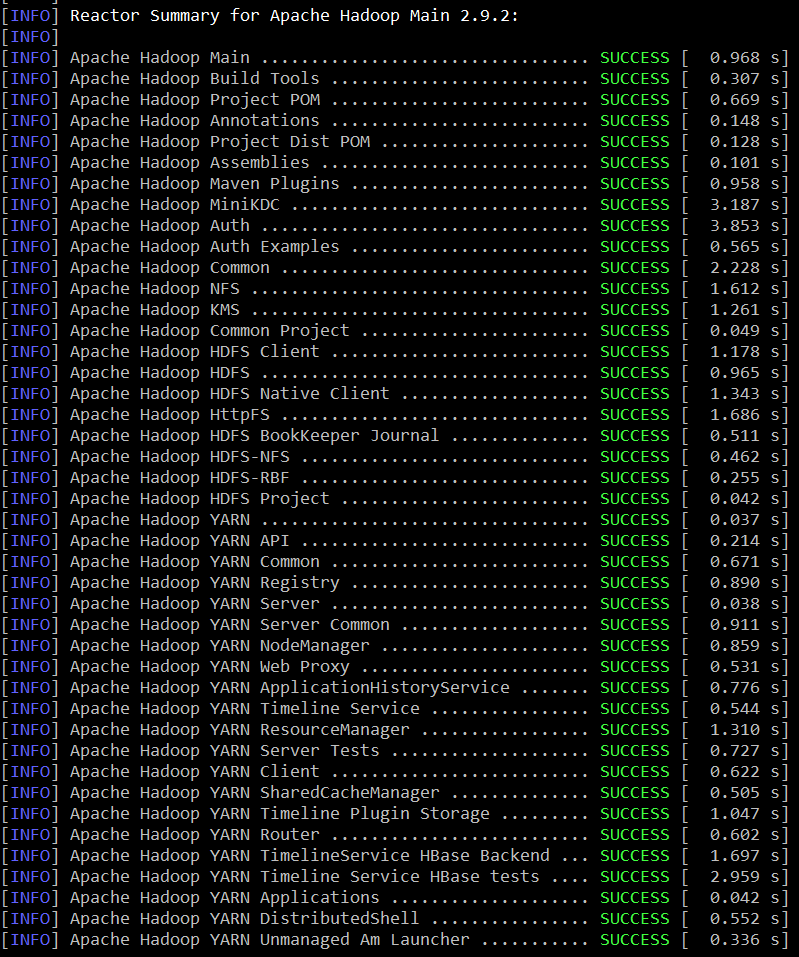
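The edit in the screenshot can also be scripted. The sketch below wraps a `<module>` entry in an XML comment; `comment_out_module` is a hypothetical helper, and the assumption that hadoop-aws is declared in `hadoop-tools/pom.xml` is not confirmed by the post, so check the file before applying it.

```shell
# Read a pom.xml on stdin and write it to stdout with the given
# <module> entry wrapped in an XML comment.
comment_out_module() {
  sed "s|<module>$1</module>|<!-- <module>$1</module> -->|"
}

# Demonstration on a single sample line.
printf '    <module>hadoop-aws</module>\n' | comment_out_module hadoop-aws

# Against a real file (the path is an assumption), write to a copy and
# review it before replacing the original:
#   comment_out_module hadoop-aws < hadoop-tools/pom.xml > pom.xml.new
```

Writing to a separate file keeps the original `pom.xml` intact until the change has been reviewed.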

Rebuild:

80 projects in total (hadoop-aws being the one that failed)

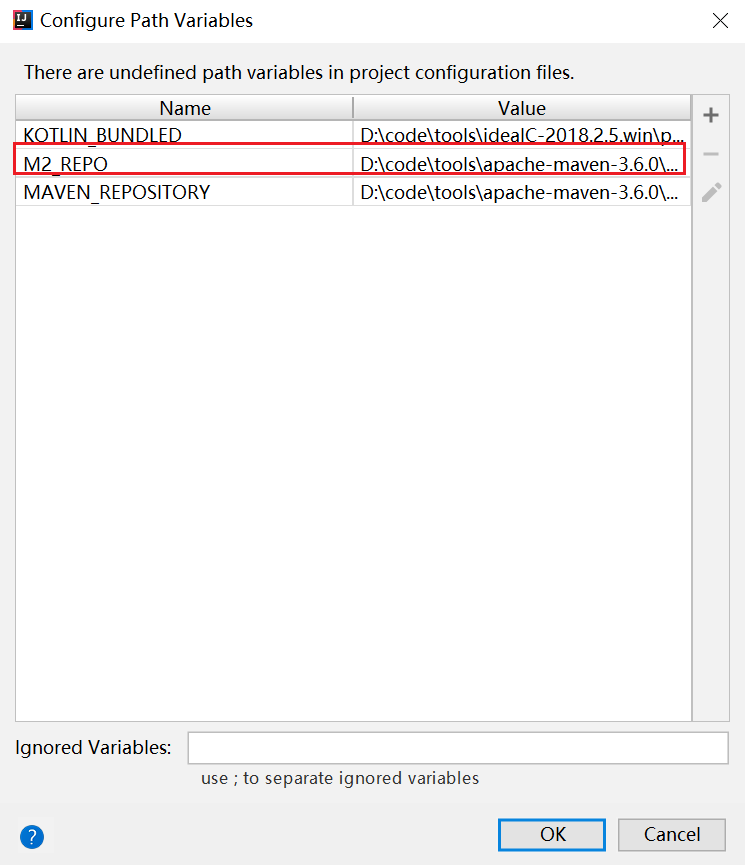

When importing the Eclipse projects into IDEA, you need to set or change M2_REPO and the JDK version (Hadoop 2.9.2 uses JDK 1.7)
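To see why M2_REPO matters, it can help to count how many entries in a generated `.classpath` file depend on it. The sketch below assumes the files produced by `mvn eclipse:eclipse` reference jars through `M2_REPO/...` paths, and that M2_REPO normally points at the local Maven repository (by default `~/.m2/repository`). `count_m2_repo_entries` is a hypothetical helper and the sample file content is made up for illustration.

```shell
# Count the lines in a .classpath file that reference the M2_REPO variable.
count_m2_repo_entries() {
  grep -c 'M2_REPO' "$1"
}

# Made-up sample resembling a generated .classpath file.
sample=$(mktemp)
cat > "$sample" <<'EOF'
<classpath>
  <classpathentry kind="src" path="src/main/java"/>
  <classpathentry kind="var" path="M2_REPO/junit/junit/4.11/junit-4.11.jar"/>
  <classpathentry kind="var" path="M2_REPO/log4j/log4j/1.2.17/log4j-1.2.17.jar"/>
</classpath>
EOF

count_m2_repo_entries "$sample"   # prints 2
rm -f "$sample"
```

If the count is non-zero for a module, IDEA needs the M2_REPO path variable defined before those libraries resolve.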

----------------------------------------------------------------------------------

Building on Windows

----------------------------------------------------------------------------------

Requirements:

* Windows System
* JDK 1.8
* Maven 3.0 or later
* ProtocolBuffer 2.5.0
* CMake 3.1 or newer
* Visual Studio 2010 Professional or Higher
* Windows SDK 8.1 (if building CPU rate control for the container executor)
* zlib headers (if building native code bindings for zlib)
* Internet connection for first build (to fetch all Maven and Hadoop dependencies)
* Unix command-line tools from GnuWin32: sh, mkdir, rm, cp, tar, gzip. These tools must be present on your PATH.
* Python ( for generation of docs using 'mvn site')

Unix command-line tools are also included with the Windows Git package which can be downloaded from http://git-scm.com/downloads

If using Visual Studio, it must be Professional level or higher. Do not use Visual Studio Express. It does not support compiling for 64-bit, which is problematic if running a 64-bit system.

The Windows SDK 8.1 is available to download at:

http://msdn.microsoft.com/en-us/windows/bg162891.aspx

Cygwin is not required.

----------------------------------------------------------------------------------

Building:

Keep the source code tree in a short path to avoid running into problems related to Windows maximum path length limitation (for example, C:\hdc).

There is one support command file located in dev-support called win-paths-eg.cmd. It should be copied somewhere convenient and modified to fit your needs.

win-paths-eg.cmd sets up the environment for use. You will need to modify this file. It will put all of the required components in the command path, configure the bit-ness of the build, and set several optional components.

Several tests require that the user must have the Create Symbolic Links privilege.

All Maven goals are the same as described above with the exception that native code is built by enabling the 'native-win' Maven profile. -Pnative-win is enabled by default when building on Windows since the native components are required (not optional) on Windows.

If native code bindings for zlib are required, then the zlib headers must be deployed on the build machine. Set the ZLIB_HOME environment variable to the directory containing the headers.

  set ZLIB_HOME=C:\zlib-1.2.7

At runtime, zlib1.dll must be accessible on the PATH. Hadoop has been tested with zlib 1.2.7, built using Visual Studio 2010 out of contrib\vstudio\vc10 in the zlib 1.2.7 source tree.

http://www.zlib.net/

----------------------------------------------------------------------------------

Building distributions:

* Build distribution with native code : mvn package [-Pdist][-Pdocs][-Psrc][-Dtar][-Dmaven.javadoc.skip=true]

----------------------------------------------------------------------------------

Running compatibility checks with checkcompatibility.py

Invoke `./dev-support/bin/checkcompatibility.py` to run Java API Compliance Checker to compare the public Java APIs of two git objects. This can be used by release managers to compare the compatibility of a previous and current release.

As an example, this invocation will check the compatibility of interfaces annotated as Public or LimitedPrivate:

  ./dev-support/bin/checkcompatibility.py --annotation org.apache.hadoop.classification.InterfaceAudience.Public --annotation org.apache.hadoop.classification.InterfaceAudience.LimitedPrivate --include "hadoop.*" branch-2.7.2 trunk

----------------------------------------------------------------------------------

Changing the Hadoop version declared returned by VersionInfo

If for compatibility reasons the version of Hadoop has to be declared as a 2.x release in the information returned by org.apache.hadoop.util.VersionInfo, set the property declared.hadoop.version to the desired version.

For example:

  mvn package -Pdist -Ddeclared.hadoop.version=2.11

If unset, the project version declared in the POM file is used.

----------------------------------------------------------------------------------

Building distributions:

Create binary distribution without native code and without documentation:

  $ mvn package -Pdist -DskipTests -Dtar -Dmaven.javadoc.skip=true

Create binary distribution with native code and with documentation:

  $ mvn package -Pdist,native,docs -DskipTests -Dtar

Create source distribution:

  $ mvn package -Psrc -DskipTests

Create source and binary distributions with native code and documentation:

  $ mvn package -Pdist,native,docs,src -DskipTests -Dtar

Create a local staging version of the website (in /tmp/hadoop-site)

  $ mvn clean site -Preleasedocs; mvn site:stage -DstagingDirectory=/tmp/hadoop-site

----------------------------------------------------------------------------------

Installing Hadoop

Look for these HTML files after you build the document by the above commands.

* Single Node Setup: hadoop-project-dist/hadoop-common/SingleCluster.html
* Cluster Setup: hadoop-project-dist/hadoop-common/ClusterSetup.html
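The distribution commands quoted above differ only in their profile list and in whether `-Dtar` is passed. As a small sketch (`dist_cmd` is a hypothetical helper, not something shipped with Hadoop), the command line can be assembled from those two choices:

```shell
# Print the "mvn package" command line for a distribution build.
# $1: comma-separated Maven profiles (for example dist,native,docs)
# $2: pass "tar" to append -Dtar
dist_cmd() {
  cmd="mvn package -P$1 -DskipTests"
  if [ "${2:-}" = "tar" ]; then
    cmd="$cmd -Dtar"
  fi
  echo "$cmd"
}

dist_cmd dist,native,docs tar   # binary distribution with native code and docs
dist_cmd src                    # source distribution
```

The helper only prints the command, so the output can be inspected first and then pasted into the shell or passed to `sh -c`.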

浙公网安备 33010602011771号