Hadoop2.7.7 centos7 完全分布式 配置与问题随记

Hadoop2.7.7 centos7 完全分布式 配置与问题随记

这里是当初在三个ECS节点上搭建hadoop+zookeeper+hbase+solr的主要步骤,文章内容未经过润色,请参考的同学搭配其他博客一同使用,并记得根据实际情况调整相关参数。

0.prepare

jdk,推荐1.8

关闭防火墙

开放ECS安全组

三台机器之间的免密登陆ssh

ip映射:【question1】hadoop启动时出现报错java.net.BindException: Cannot assign requested address

说明ip映射没有配置正确,正确的方式是在每一个节点上,都执行"内外外"的配置方式,即将本机与本机的内网ip对应,其他机器设置为外网ip

下面的文件要在每个节点上都修改

1. vi /etc/profile

1. vi /etc/profile

/opt/hadoop/hadoop-2.7.7

export HADOOP_HOME=/opt/hadoop/hadoop-2.7.7

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export HADOOP_OPTS="-Djava.library.path=$HADOOP_HOME/lib"

export PATH=.:${JAVA_HOME}/bin:${HADOOP_HOME}/bin:$PATH

#使环境变量生效

souce /etc/profile

#检验

hadoop version

2. vi /.../hadoop-2.7.7/etc/hadoop/core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://Gwj:8020</value>

<description>定义默认的文件系统主机和端口</description>

</property>

<property>

<name>io.file.buffer.size</name>

<value>4096</value>

<description>流文件的缓冲区为4K</description>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>file:/opt/hadoop/hadoop-2.7.7/tempdata</value>

<description>A base for other temporary directories.</description>

</property>

</configuration>

3. vi /.../hadoop-2.7.7/etc/hadoop/hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>2</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>/opt/hadoop/hadoop-2.7.7/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/opt/hadoop/hadoop-2.7.7/dfs/data</value>

</property>

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

<!--后增,如果想让solr索引存放到hdfs中,则还须添加下面两个属性-->

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

<property>

<name>dfs.permissions.enabled</name>

<value>false</value>

</property>

<!--【question2】SecondayNameNode默认与NameNode在同一台节点上,在实际生产过程中有安全隐患。解决方法:加入如下配置信息,指定NameNode和SecondaryNameNode节点位置-->

<property>

<name>dfs.http.address</name>

<value>Gwj:50070</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>Ssj:50090</value>

</property>

</configuration>

4. vi /.../hadoop-2.7.7/etc/hadoop/mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>local</value>

</property>

<!-- 指定mapreduce jobhistory地址 -->

<property>

<name>mapreduce.jobhistory.address</name>

<value>0.0.0.0:10020</value>

</property>

<!-- 任务历史服务器的web地址 -->

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>0.0.0.0:19888</value>

</property>

</configuration>

5. vi /.../hadoop-2.7.7/etc/hadoop/yarn-site.xml

<configuration>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>Gwj</value>

<description>指定resourcemanager所在的hostname</description>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

<description>NodeManager上运行的附属服务。需配置成mapreduce_shuffle,才可运行MapReduce程序 </description>

</property>

</configuration>

6.vi /.../hadoop-2.7.7/etc/hadoop/slaves

老版本是slaves文件,3.0.3 用 workers 文件代替 slaves 文件

将localhost删掉,加入dataNode节点的主机名

[root@Gwj ~]# cat /opt/hadoop/hadoop-2.7.7/etc/hadoop/slaves

Ssj

Pyf

7.首次使用进行格式化

hdfs namenode -format

8.启动

/.../hadoop-2.7.7/sbin/start/start-all.sh

hdfs

/.../hadoop-2.7.7/sbin/start/start-dfs.sh

Yarn

/.../hadoop-2.7.7/sbin/start/start-yarn.sh

#start可替换为stop、status

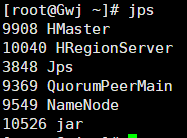

9.检验

使用jps检验

hadoop

hdfs

Master---NameNode (SecondaryNameNode)

Slave---DataNode

Yarn

Master---ResourceManager

Slave---NodeManager

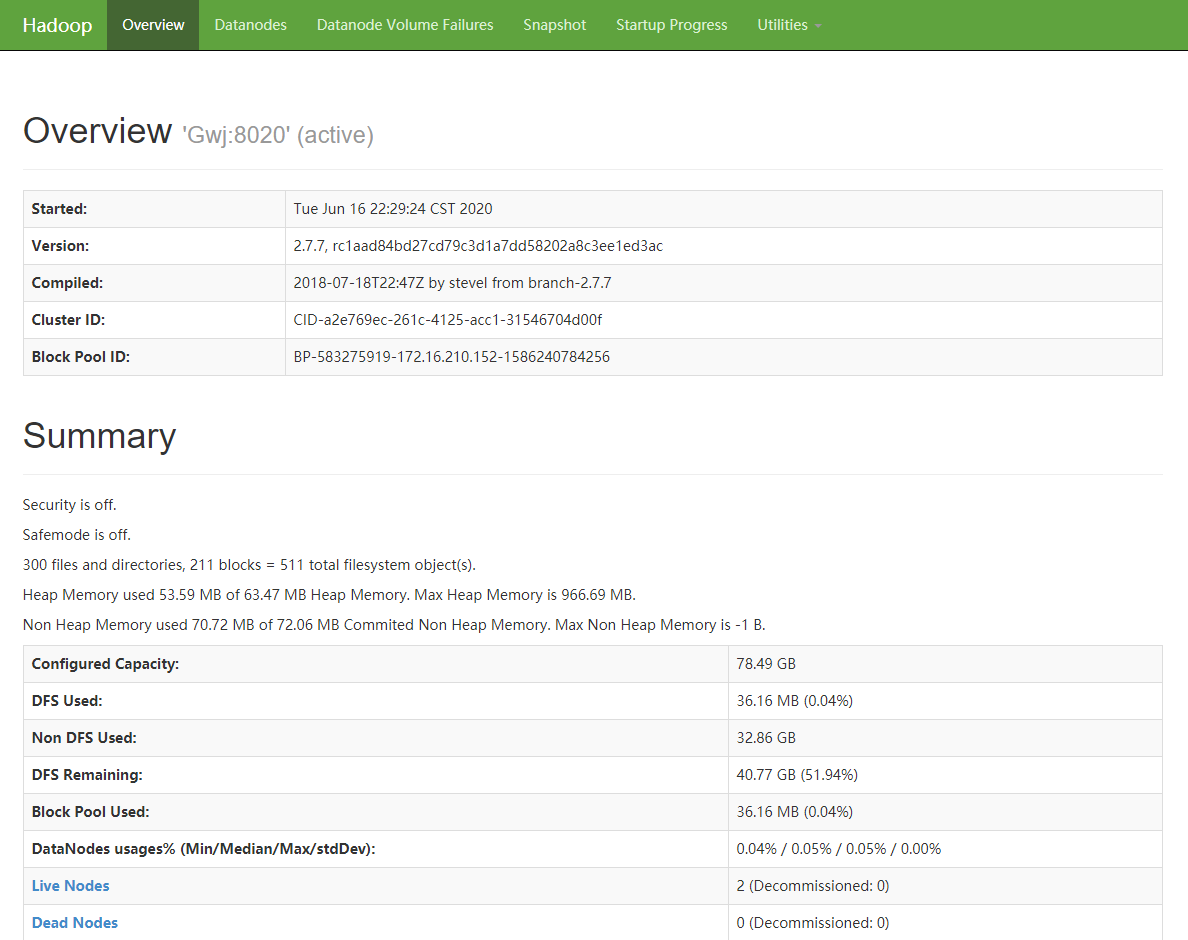

或者使用 “Master ip+50070”

---以下的yarn未设置,注意<configuration>!!!

<property>

<name>yarn.resourcemanager.address</name>

<value>${yarn.resourcemanager.hostname}:8032</value>

</property>

<property>

<description>The address of the scheduler interface.</description>

<name>yarn.resourcemanager.scheduler.address</name>

<value>${yarn.resourcemanager.hostname}:8030</value>

</property>

<property>

<description>The http address of the RM web application.</description>

<name>yarn.resourcemanager.webapp.address</name>

<value>${yarn.resourcemanager.hostname}:8088</value>

</property>

<property>

<description>The https adddress of the RM web application.</description>

<name>yarn.resourcemanager.webapp.https.address</name>

<value>${yarn.resourcemanager.hostname}:8090</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>${yarn.resourcemanager.hostname}:8031</value>

</property>

<property>

<description>The address of the RM admin interface.</description>

<name>yarn.resourcemanager.admin.address</name>

<value>${yarn.resourcemanager.hostname}:8033</value>

</property>

<property>

<name>yarn.scheduler.maximum-allocation-mb</name>

<value>2048</value>

<discription>每个节点可用内存,单位MB,默认8182MB,根据阿里云ECS性能配置为2048MB</discription>

</property>

<property>

<name>yarn.nodemanager.vmem-pmem-ratio</name>

<value>2.1</value>

</property>

<property>

<name>yarn.nodemanager.resource.memory-mb</name>

<value>2048</value>

</property>

<property>

<name>yarn.nodemanager.vmem-check-enabled</name>

<value>false</value>

</property>

</configuration>