References:

Deep Residual Learning for Image Recognition

Paper analysis

Network structure analysis

I. ResNet paper analysis

1. Motivation

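It was previously believed that stacking more layers to deepen a network would improve its learning capacity. However, once the depth grows beyond a certain point, the model's performance degrades instead.

The authors ask: Is learning better networks as easy as stacking more layers?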

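An excessively deep neural network is known to risk the following problems:

1. Vanishing and exploding gradients

2. Overfitting

Vanishing and exploding gradients can largely be addressed with normalized initialization and batch normalization.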

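Figure 1 of the paper also shows that the 56-layer network has both a higher training error and a higher test error than the 20-layer network, so overfitting is not the cause either.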

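Now suppose a shallow network already achieves good results. If we make it deeper but let the added layers do nothing, that is, act as identity mappings, then in theory the deeper network should perform at least as well as the shallow one. In practice, however, performance drops once the depth is increased. The authors attribute this to the deeper network being harder to optimize.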

2. Solution

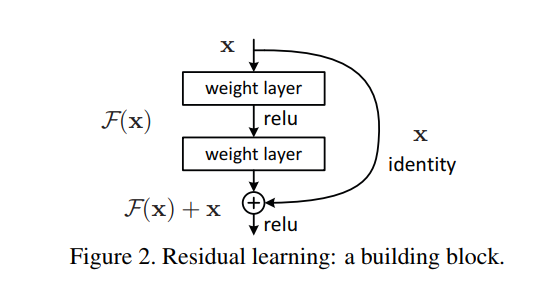

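The authors therefore propose residual learning. Treat a few stacked layers as a single unit with input X and output H(X). Originally these layers fit the mapping from X to H(X) directly. The authors instead define F(X)=H(X)-X, let the layers fit F(X), and add X back through a shortcut connection, so the unit outputs F(X)+X, which is the same mapping as H(X).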
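The relation H(X)=F(X)+X can be illustrated with a minimal PyTorch sketch. The names `ResidualWrapper` and `branch` are hypothetical and not from the paper, and the residual branch here is a single linear layer chosen only for brevity. When the branch outputs zero, the unit reduces to the identity mapping, which is the case the motivation section argues a deeper network should be able to reach.

```python
import torch
import torch.nn as nn


class ResidualWrapper(nn.Module):
    """Computes H(x) = F(x) + x, where F is the wrapped residual branch."""

    def __init__(self, residual_branch):
        super().__init__()
        self.residual_branch = residual_branch

    def forward(self, x):
        # The shortcut connection adds the input back onto F(x).
        return self.residual_branch(x) + x


# Hypothetical residual branch: one linear layer initialised to output zeros.
branch = nn.Linear(4, 4)
nn.init.zeros_(branch.weight)
nn.init.zeros_(branch.bias)

block = ResidualWrapper(branch)
x = torch.randn(2, 4)
y = block(x)

# With F(x) = 0 the unit is exactly the identity mapping H(x) = x.
identity_recovered = torch.equal(y, x)
print(identity_recovered)
```

Driving the residual branch toward zero is presumably easier for an optimizer than fitting an identity mapping through a stack of nonlinear layers, which is the intuition behind the design.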

The authors hypothesize that residual learning makes deep networks easier to optimize, and the experimental results confirm this.

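3. Network structure

Detailed ResNet structure:

(1). Operations before the blocks

The input is 3*224*224. A convolution with kernel_size=7, stride=2, padding=3 and 64 output channels gives 64*112*112, and a max pooling with kernel_size=3, stride=2, padding=1 then gives an output of 64*56*56.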
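The sizes above follow from the standard output-size formula for convolution and pooling layers, floor((size + 2*padding - kernel_size) / stride) + 1. A small sketch, with `conv_output_size` as a hypothetical helper name:

```python
def conv_output_size(size, kernel_size, stride, padding):
    """Spatial output size of a convolution or pooling layer (floor mode)."""
    return (size + 2 * padding - kernel_size) // stride + 1


# 7x7 convolution, stride 2, padding 3, applied to a 224x224 input.
after_conv = conv_output_size(224, kernel_size=7, stride=2, padding=3)

# 3x3 pooling, stride 2, padding 1, applied to the convolution output.
after_pool = conv_output_size(after_conv, kernel_size=3, stride=2, padding=1)

print(after_conv, after_pool)  # 112 56
```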

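(2). Two kinds of blocks

The block on the left is called the building block and the one on the right the bottleneck. The building block is used to build resnet18/34, and the bottleneck is used to build resnet50/101/152.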

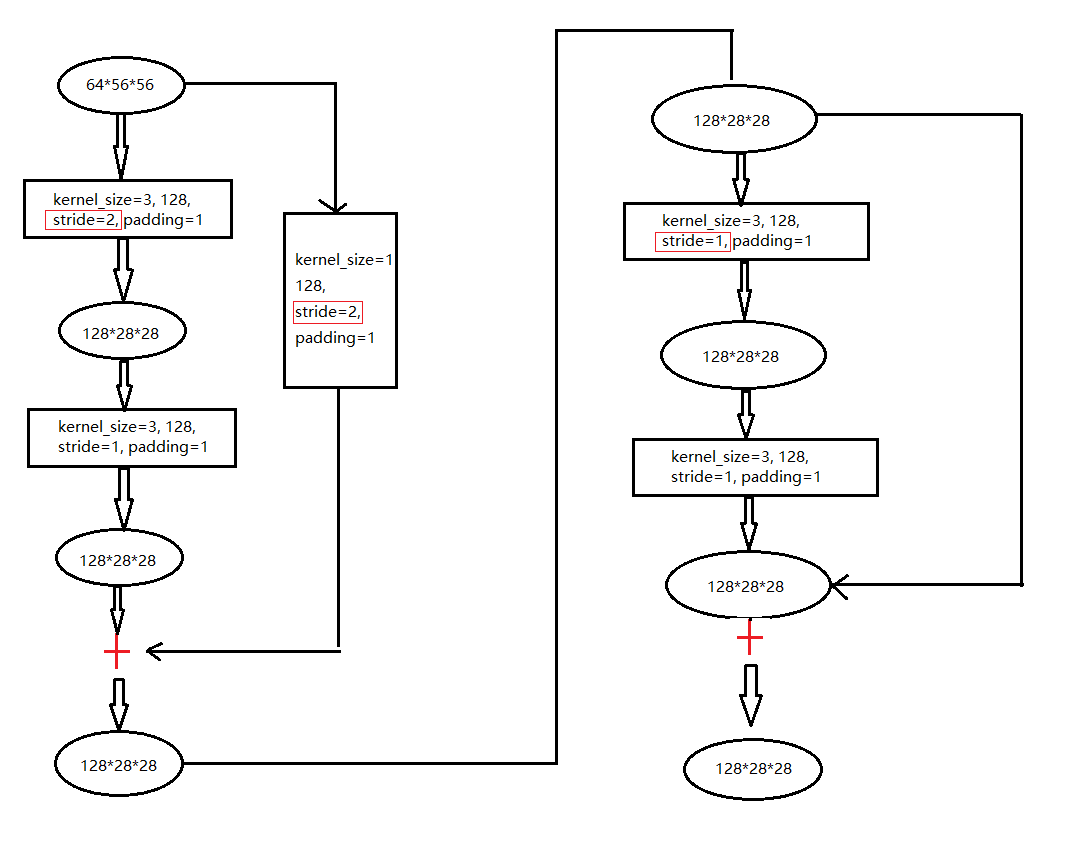
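The model names follow from counting weighted layers: one stem convolution, the convolutions inside all blocks (2 per building block, 3 per bottleneck), and one fully connected layer. The sketch below checks this arithmetic using the per-stage block counts from the paper. `resnet_depth` is a hypothetical helper, and the 1x1 convolutions on projection shortcuts are not counted.

```python
def resnet_depth(blocks_per_stage, convs_per_block):
    """Stem convolution + convolutions in all blocks + final fully connected layer."""
    return 1 + convs_per_block * sum(blocks_per_stage) + 1


# (blocks per stage, convolutions per block)
configs = {
    "resnet18": ([2, 2, 2, 2], 2),    # building block
    "resnet34": ([3, 4, 6, 3], 2),    # building block
    "resnet50": ([3, 4, 6, 3], 3),    # bottleneck
    "resnet101": ([3, 4, 23, 3], 3),  # bottleneck
    "resnet152": ([3, 8, 36, 3], 3),  # bottleneck
}

depths = {name: resnet_depth(blocks, convs) for name, (blocks, convs) in configs.items()}
print(depths)
```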

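Take resnet18 as an example:

Layer 1:

A building block of two 3*3 convolutions with 64 channels. This block is applied twice.

Layer 2:

Again two building blocks, now with 128 channels. Note that the first convolution of the first block uses stride=2, which halves the spatial size.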
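Because the stride-2 convolution changes both the spatial size and the channel count, the shortcut can no longer add the input directly. A common approach, and the one the code at the end of this post takes for its bottleneck blocks, is a 1x1 convolution with the same stride on the shortcut. The sketch below assumes the resnet18 sizes (64*56*56 in, 128 channels out); `main_path` and `shortcut` are illustrative names.

```python
import torch
import torch.nn as nn

x = torch.randn(1, 64, 56, 56)  # output of the 64-channel stage

# Main path of the first block in the next stage: the first 3x3 conv uses stride=2.
main_path = nn.Sequential(
    nn.Conv2d(64, 128, kernel_size=3, stride=2, padding=1, bias=False),
    nn.BatchNorm2d(128),
    nn.ReLU(inplace=True),
    nn.Conv2d(128, 128, kernel_size=3, stride=1, padding=1, bias=False),
    nn.BatchNorm2d(128),
)

# Projection shortcut: a 1x1 conv with the same stride matches channels and size.
shortcut = nn.Sequential(
    nn.Conv2d(64, 128, kernel_size=1, stride=2, bias=False),
    nn.BatchNorm2d(128),
)

out = torch.relu(main_path(x) + shortcut(x))
print(out.shape)  # torch.Size([1, 128, 28, 28])
```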

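The later layers are largely the same as layer 2 and are not repeated here.

The bottleneck used to build resnet50/101/152 works in a similar way to the building block and is not repeated here either.

When implementing the network yourself, pay attention to the padding and stride at every step so that the tensor sizes stay consistent.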

Finally, the PyTorch implementation:

    # import packages
    import os
    # Restrict the visible GPUs; adjust to the devices on your machine.
    os.environ["CUDA_VISIBLE_DEVICES"] = "0,1,2,3,4,5,6"
    import torch
    import torchvision
    import torch.nn as nn
    from torchvision import transforms
    import torch.nn.functional as F

    # device configuration
    device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")


    class building_block(nn.Module):
        """Basic block for resnet18/34: two 3x3 convolutions plus a shortcut."""

        def __init__(self, in_channels, out_channels, stride=1, downsample=None):
            super(building_block, self).__init__()
            # Only the first convolution may downsample (stride=2).
            self.conv1 = nn.Conv2d(in_channels, out_channels, 3, stride, padding=1, bias=False)
            self.bn1 = nn.BatchNorm2d(out_channels)
            self.relu = nn.ReLU(inplace=True)
            # The second convolution always keeps the spatial size (stride=1).
            self.conv2 = nn.Conv2d(out_channels, out_channels, 3, 1, padding=1, bias=False)
            self.bn2 = nn.BatchNorm2d(out_channels)
            self.downsample = downsample

        def forward(self, x):
            residual = x
            out = self.conv1(x)
            out = self.bn1(out)
            out = self.relu(out)
            out = self.conv2(out)
            out = self.bn2(out)
            # Project the shortcut when the output shape differs from the input shape.
            if self.downsample is not None:
                residual = self.downsample(x)
            out = out + residual
            return self.relu(out)


    class bottle_neck(nn.Module):
        """Bottleneck block for resnet50/101/152: 1x1 -> 3x3 -> 1x1 convolutions."""

        def __init__(self, in_channels, out_channels, stride=1, downsampling=False, expansion=4):
            super(bottle_neck, self).__init__()
            self.expansion = expansion
            self.downsampling = downsampling

            self.bottleneck = nn.Sequential(
                nn.Conv2d(in_channels=in_channels, out_channels=out_channels, kernel_size=1, stride=1, bias=False),
                nn.BatchNorm2d(out_channels),
                nn.ReLU(inplace=True),
                nn.Conv2d(in_channels=out_channels, out_channels=out_channels, kernel_size=3, stride=stride, padding=1, bias=False),
                nn.BatchNorm2d(out_channels),
                nn.ReLU(inplace=True),
                nn.Conv2d(in_channels=out_channels, out_channels=out_channels*self.expansion, kernel_size=1, stride=1, bias=False),
                nn.BatchNorm2d(out_channels*self.expansion),
            )

            # 1x1 projection so the shortcut matches the main path's channels and size.
            if self.downsampling:
                self.downsample = nn.Sequential(
                    nn.Conv2d(in_channels=in_channels, out_channels=out_channels*self.expansion, kernel_size=1, stride=stride, bias=False),
                    nn.BatchNorm2d(out_channels*self.expansion)
                )
            self.relu = nn.ReLU(inplace=True)

        def forward(self, x):
            residual = x
            out = self.bottleneck(x)
            if self.downsampling:
                residual = self.downsample(x)
            out += residual
            out = self.relu(out)
            return out


    def Conv1(in_channels, out_channels, stride=2):
        """Stem before the blocks: 7x7 convolution followed by 3x3 max pooling."""
        return nn.Sequential(
            nn.Conv2d(in_channels=in_channels, out_channels=out_channels, kernel_size=7, stride=stride, padding=3, bias=False),
            nn.BatchNorm2d(out_channels),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(kernel_size=3, stride=2, padding=1),
        )


    class ResNet(nn.Module):
        """ResNet built from bottleneck blocks only (resnet50/101/152)."""

        def __init__(self, blocks, num_classes, expansion=4):
            super(ResNet, self).__init__()
            self.expansion = expansion
            self.conv1 = Conv1(in_channels=3, out_channels=64)

            self.layer1 = self.make_layer(in_channels=64, out_channels=64, block=blocks[0], stride=1)
            self.layer2 = self.make_layer(in_channels=256, out_channels=128, block=blocks[1], stride=2)
            self.layer3 = self.make_layer(in_channels=512, out_channels=256, block=blocks[2], stride=2)
            self.layer4 = self.make_layer(in_channels=1024, out_channels=512, block=blocks[3], stride=2)

            self.avgpool = nn.AvgPool2d(7, stride=1)
            self.fc = nn.Linear(2048, num_classes)  # 2048 = 512 * expansion

        def make_layer(self, in_channels, out_channels, block, stride):
            layers = []
            # The first block of each stage changes the channel count, so it needs a projection shortcut.
            layers.append(bottle_neck(in_channels, out_channels, stride, downsampling=True))
            for i in range(1, block):
                layers.append(bottle_neck(out_channels*self.expansion, out_channels))
            return nn.Sequential(*layers)

        def forward(self, x):
            x = self.conv1(x)
            x = self.layer1(x)
            x = self.layer2(x)
            x = self.layer3(x)
            x = self.layer4(x)
            x = self.avgpool(x)
            x = x.view(x.size(0), -1)  # flatten to (batch, 2048)
            x = self.fc(x)
            return x


    def ResNet50():
        return ResNet([3, 4, 6, 3], num_classes=7)


    def ResNet101():
        return ResNet([3, 4, 23, 3], num_classes=7)


    def ResNet152():
        return ResNet([3, 8, 36, 3], num_classes=7)


    # model = torchvision.models.resnet50()
    model = ResNet101()
    print(model)

    inputs = torch.randn(1, 3, 224, 224)
    out = model(inputs)
    print(out.shape)