迁移学习: 利用VGG16进行猫狗大战分类

下载数据集和导入包

import sys

import os

print(os.getcwd())

! wget https://static.leiphone.com/cat_dog.rar

! unrar x cat_dog.rar

import numpy as np

import matplotlib.pyplot as plt

import os

import torch

import torch.nn.functional as F

import torch.nn as nn

import torchvision

from torchvision import models,transforms,datasets

from skimage import io

import time

import json

# 判断是否存在GPU设备

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

print('Using gpu: %s ' % torch.cuda.is_available())

由于数据集不是标准的ImageFolder格式的需要自己定义一个DataSet类,继承torch.utils.data.DataSet

主要实现以下几个函数

__init__

__len__

__getitem__

normalize = transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

vgg_format = transforms.Compose([

transforms.ToPILImage(),

transforms.CenterCrop(224),

transforms.ToTensor(),

normalize

])

print(type(vgg_format))

class Cat_Dog_Data(torch.utils.data.Dataset):

def __init__(self, root_dir, transform=None):

self.img_list = os.listdir(root_dir)

self.root_dir = root_dir

self.transform = transform

def __len__(self):

return len(self.img_list)

def __getitem__(self, idx):

img_name = os.path.join(self.root_dir,

self.img_list[idx])

image = io.imread(img_name)

image = np.array(image)

label = 0 if self.img_list[idx].split('_')[0]=="cat" else 1

if self.transform:

img = self.transform(image)

return img, label

指定图片的存放路径,并创建DataLoader

DataLoader是可以多线程批量加载图片的类

root_dir = './cat_dog'

data_dir = ['train', 'test', 'val']

img_dir = {x : os.path.join(root_dir,x) for x in data_dir }

train_dataset = Cat_Dog_Data(

root_dir=img_dir['train'],

transform = vgg_format)

val_dataset = Cat_Dog_Data(

root_dir=img_dir['val'],

transform = vgg_format)

loader_train = torch.utils.data.DataLoader(train_dataset, batch_size=64, shuffle=True, num_workers=6)

loader_valid = torch.utils.data.DataLoader(val_dataset, batch_size=5, shuffle=False, num_workers=6)

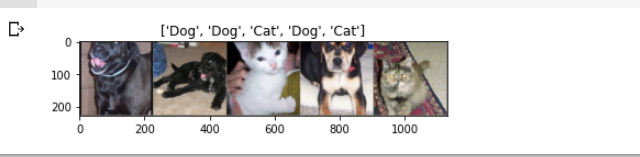

展示图片

使用torchvision.utils.make_grid函数

同时因为Tensor是按照CHW排列的,需要转换成HWC排列才能显示

inputs_try,labels_try = iter(loader_valid).next()

def imshow(inp, title=None):

# Imshow for Tensor.

inp = inp.numpy().transpose((1, 2, 0))

mean = np.array([0.485, 0.456, 0.406])

std = np.array([0.229, 0.224, 0.225])

inp = np.clip(std * inp + mean, 0,1)

plt.imshow(inp)

if title is not None:

plt.title(title)

plt.pause(0.001) # pause a bit so that plots are updated

label = ["Cat" if x.item()==0 else "Dog" for x in labels_try]

imshow(torchvision.utils.make_grid(inputs_try), label)

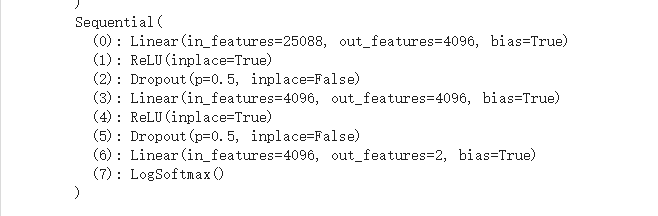

加载VGG16并修改最后一层的网络结构

当然是直接用的老师的代码了

model_vgg = models.vgg16(pretrained=True)

print(model_vgg)

model_vgg_new = model_vgg;

for param in model_vgg_new.parameters():

param.requires_grad = False

model_vgg_new.classifier._modules['6'] = nn.Linear(4096, 2)

model_vgg_new.classifier._modules['7'] = torch.nn.LogSoftmax(dim = 1)

model_vgg_new = model_vgg_new.to(device)

print(model_vgg_new.classifier)

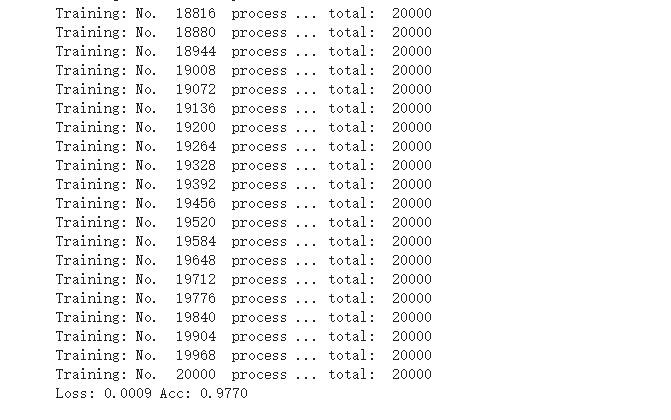

训练模型

这部分也是直接用就好了

尝试了一下Adam和SGD优化器

SGD大约十轮迭代以后和Adam的准确率差不多,貌似Adam的自适应收敛会更快

'''

第一步:创建损失函数和优化器

损失函数 NLLLoss() 的 输入 是一个对数概率向量和一个目标标签.

它不会为我们计算对数概率,适合最后一层是log_softmax()的网络.

'''

criterion = nn.NLLLoss()

# 学习率

lr = 0.001

# 随机梯度下降

optimizer_vgg = torch.optim.Adam(model_vgg_new.classifier[6].parameters(),lr = lr)

'''

第二步:训练模型

'''

def train_model(model,dataloader,size,epochs=1,optimizer=None):

model.train()

for epoch in range(epochs):

running_loss = 0.0

running_corrects = 0

count = 0

for inputs,classes in dataloader:

inputs = inputs.to(device)

classes = classes.to(device)

outputs = model(inputs)

loss = criterion(outputs,classes)

optimizer = optimizer

optimizer.zero_grad()

loss.backward()

optimizer.step()

_,preds = torch.max(outputs.data,1)

# statistics

running_loss += loss.data.item()

running_corrects += torch.sum(preds == classes.data)

count += len(inputs)

print('Training: No. ', count, ' process ... total: ', size)

epoch_loss = running_loss / size

epoch_acc = running_corrects.data.item() / size

print('Loss: {:.4f} Acc: {:.4f}'.format(

epoch_loss, epoch_acc))

# 模型训练

train_model(model_vgg_new,loader_train,size=train_dataset.__len__(), epochs=2,

optimizer=optimizer_vgg)

查看验证集上的准确率

def test_model(model,dataloader,size):

model.eval()

predictions = np.zeros(size)

all_classes = np.zeros(size)

all_proba = np.zeros((size,2))

i = 0

running_loss = 0.0

running_corrects = 0

for inputs,classes in dataloader:

inputs = inputs.to(device)

classes = classes.to(device)

outputs = model(inputs)

loss = criterion(outputs,classes)

_,preds = torch.max(outputs.data,1)

# statistics

running_loss += loss.data.item()

running_corrects += torch.sum(preds == classes.data)

predictions[i:i+len(classes)] = preds.to('cpu').numpy()

all_classes[i:i+len(classes)] = classes.to('cpu').numpy()

all_proba[i:i+len(classes),:] = outputs.data.to('cpu').numpy()

i += len(classes)

print('Testing: No. ', i, ' process ... total: ', size)

epoch_loss = running_loss / size

epoch_acc = running_corrects.data.item() / size

print('Loss: {:.4f} Acc: {:.4f}'.format(

epoch_loss, epoch_acc))

return predictions, all_proba, all_classes

predictions, all_proba, all_classes = test_model(model_vgg_new,loader_valid,size=val_dataset.__len__

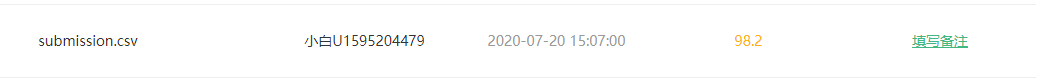

生成一个提交文件

import pandas as pd

pred = []

model_vgg_new.eval()

# print(model_vgg_new)

test_img = os.listdir(img_dir['test'])

ans = [0]*len(test_img)

ansf = open('submission.txt','w')

for i,img in enumerate(test_img):

image = vgg_format(io.imread(os.path.join(img_dir['test'],img)))

image = image.unsqueeze(0)

image = image.to(device)

index = int(os.path.splitext(img)[0])

print(index)

output = model_vgg_new(image)

_,preds = torch.max(output.data,1)

ans[index]=preds.item()

pred.append(preds.item())

for i, pred in enumerate(ans):

print(i, pred, file=ansf, sep=',')

ansf.close()

results = pd.Series(pred)

submission = pd.concat([pd.Series(range(0,2000)),results],axis=1)

print(submission)

submission.to_csv(os.path.join('./','submission.csv'),index=False)

上AI研习社交一发

WOW 起飞

晚餐加可乐了

结果分析:

提升方案

- 更换主干网络

VGG是一个多年前的网络了,可以考虑使用ResNet做主干网络

- 采用数据增强技术 可参考 数据增强(Data Augmentation)

对现有的训练样本进行平移旋转等,生成规模更大的样本

- 分析vali样本中的分类错误的样本,看是否有提升空间

毕竟神经网络理论上可以拟合任意函数,主要还是找一个适合的网络以及充足的合适的训练样本

Crossea_一条有梦想的咸鱼

一条有梦想的咸鱼