Mapreduce实例——去重

现有一个某电商网站的数据文件,名为buyer_favorite1,记录了用户收藏的商品以及收藏的日期,文件buyer_favorite1中包含(用户id,商品id,收藏日期)三个字段,数据内容以“,”分割,内容如下:

用户id,商品id,收藏日期

10181,1000481,2010-04-04 16:54:31

20001,1001597,2010-04-07 15:07:52

20001,1001560,2010-04-07 15:08:27

20042,1001368,2010-04-08 08:20:30

20067,1002061,2010-04-08 16:45:33

20056,1003289,2010-04-12 10:50:55

20056,1003290,2010-04-12 11:57:35

20056,1003292,2010-04-12 12:05:29

20054,1002420,2010-04-14 15:24:12

20055,1001679,2010-04-14 19:46:04

20054,1010675,2010-04-14 15:23:53

20054,1002429,2010-04-14 17:52:45

20076,1002427,2010-04-14 19:35:39

20054,1003326,2010-04-20 12:54:44

20056,1002420,2010-04-15 11:24:49

20064,1002422,2010-04-15 11:35:54

20056,1003066,2010-04-15 11:43:01

20056,1003055,2010-04-15 11:43:06

20056,1010183,2010-04-15 11:45:24

20056,1002422,2010-04-15 11:45:49

20056,1003100,2010-04-15 11:45:54

20056,1003094,2010-04-15 11:45:57

20056,1003064,2010-04-15 11:46:04

20056,1010178,2010-04-15 16:15:20

20076,1003101,2010-04-15 16:37:27

20076,1003103,2010-04-15 16:37:05

20076,1003100,2010-04-15 16:37:18

20076,1003066,2010-04-15 16:37:31

20054,1003100,2010-04-15 16:40:16

编写MapReduce程序,根据商品id进行去重,统计用户收藏商品中都有哪些商品被收藏

package mapreduce2; import java.io.IOException; import org.apache.hadoop.conf.Configuration; import org.apache.hadoop.fs.Path; import org.apache.hadoop.io.NullWritable; import org.apache.hadoop.io.Text; import org.apache.hadoop.mapreduce.Job; import org.apache.hadoop.mapreduce.Mapper; import org.apache.hadoop.mapreduce.Reducer; import org.apache.hadoop.mapreduce.lib.input.FileInputFormat; import org.apache.hadoop.mapreduce.lib.input.TextInputFormat; import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat; import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat; //01.Mapreduce实例——去重 public class Filter { public static class Map extends Mapper<Object , Text , Text , NullWritable>{ private static Text newKey=new Text(); public void map(Object key,Text value,Context context) throws IOException, InterruptedException{ String line=value.toString(); System.out.println(line); String arr[]=line.split(","); newKey.set(arr[1]); context.write(newKey, NullWritable.get()); System.out.println(newKey); } } public static class Reduce extends Reducer<Text, NullWritable, Text, NullWritable>{ public void reduce(Text key,Iterable<NullWritable> values,Context context) throws IOException, InterruptedException{ context.write(key,NullWritable.get()); } } public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException{ Configuration conf=new Configuration(); System.out.println("start"); Job job =new Job(conf,"filter"); job.setJarByClass(Filter.class); job.setMapperClass(Map.class); job.setReducerClass(Reduce.class); job.setOutputKeyClass(Text.class); job.setOutputValueClass(NullWritable.class); job.setInputFormatClass(TextInputFormat.class); job.setOutputFormatClass(TextOutputFormat.class); Path in=new Path("hdfs://192.168.51.100:8020/mymapreduce2/in/buyer_favorite1"); Path out=new Path("hdfs://192.168.51.100:8020/mymapreduce2/out"); FileInputFormat.addInputPath(job,in); FileOutputFormat.setOutputPath(job,out); System.exit(job.waitForCompletion(true) ? 0 : 1); } }

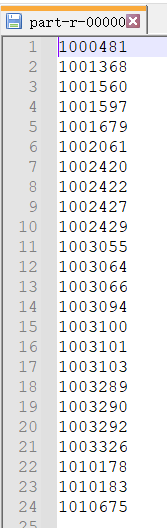

统计结果:

原理:

“数据去重”主要是为了掌握和利用并行化思想来对数据进行有意义的筛选。统计大数据集上的数据种类个数、从网站日志中计算访问地等这些看似庞杂的任务都会涉及数据去重。

数据去重的最终目标是让原始数据中出现次数超过一次的数据在输出文件中只出现一次。在MapReduce流程中,map的输出<key,value>经过shuffle过程聚集成<key,value-list>后交给reduce。我们自然而然会想到将同一个数据的所有记录都交给一台reduce机器,无论这个数据出现多少次,只要在最终结果中输出一次就可以了。具体就是reduce的输入应该以数据作为key,而对value-list则没有要求(可以设置为空)。当reduce接收到一个<key,value-list>时就直接将输入的key复制到输出的key中,并将value设置成空值,然后输出<key,value>。

浙公网安备 33010602011771号

浙公网安备 33010602011771号