Spark RDD Programming

1

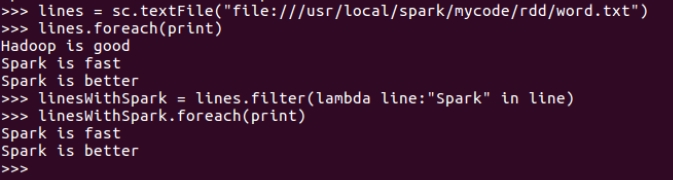

Prepare a text file

vim /usr/local/spark/mycode/rdd/word.txt

Hadoop is good

Spark is fast

Spark is better

Create an RDD from the file: lines = sc.textFile()

lines = sc.textFile("file:///usr/local/spark/mycode/rdd/word.txt")

lines.foreach(print)
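As a quick check that needs no Spark installation, the sketch below writes the same three lines with plain Python and reads them back. It only mirrors what `sc.textFile()` yields (one element per line, without the trailing newline). The temporary directory and the names `sample_dir`, `sample_path`, and `local_lines` are assumptions made for illustration, not part of the original steps.

```python
import os
import tempfile

# Hypothetical location: a temporary directory stands in for
# /usr/local/spark/mycode/rdd so the sketch runs without Spark.
sample_dir = tempfile.mkdtemp()
sample_path = os.path.join(sample_dir, "word.txt")

# Write the same content that was typed into word.txt with vim.
with open(sample_path, "w", encoding="utf-8") as f:
    f.write("Hadoop is good\nSpark is fast\nSpark is better\n")

# sc.textFile() produces one RDD element per line, newline stripped;
# splitlines() gives the same elements as a plain Python list.
with open(sample_path, encoding="utf-8") as f:
    local_lines = f.read().splitlines()

for line in local_lines:
    print(line)
```

In the pyspark shell running in local mode, `lines.foreach(print)` prints to the same console. On a cluster the function runs on the executors, so `lines.collect()` is the usual way to bring the elements back to the driver before printing.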

Filter the lines that contain a given word: lines.filter()

The argument is a lambda of the form lambda parameter: condition expression

linesWithSpark = lines.filter(lambda line: "Spark" in line)

linesWithSpark.foreach(print)
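The predicate passed to `filter()` is an ordinary Python function, so its behavior can be checked on a plain list first. This is a minimal local sketch, not Spark code; `sample_lines` and `contains_spark` are hypothetical names introduced here.

```python
sample_lines = ["Hadoop is good", "Spark is fast", "Spark is better"]

def contains_spark(line):
    # Same condition as the lambda passed to lines.filter().
    return "Spark" in line

# Keep only the lines for which the predicate is true.
lines_with_spark = [line for line in sample_lines if contains_spark(line)]
print(lines_with_spark)

# The `in` test is a case-sensitive substring match, not a whole-word match.
print(contains_spark("spark is fast"))    # lowercase "spark" does not match
print(contains_spark("Sparkling water"))  # matches, because "Spark" is a substring
```

If whole-word or case-insensitive matching is wanted, the condition inside the lambda is the only part that has to change.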

2

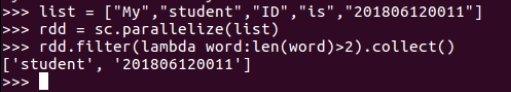

Build a list of words

word_list = ["My", "student", "ID", "is", "201806120011"]

Create an RDD from the list: rdd = sc.parallelize()

rdd = sc.parallelize(word_list)

Keep only the words longer than 2 characters: rdd.filter()

rdd.filter(lambda word: len(word) > 2).collect()

3

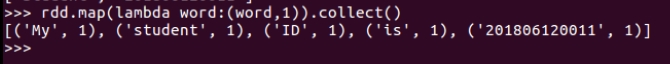

Map the filtered word RDD to (word, 1) key-value pairs: map()

rdd.filter(lambda word: len(word) > 2).map(lambda word: (word, 1)).collect()
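RDD transformations return a new RDD and leave the one they are called on unchanged, so the filtered result has to be chained or assigned before `map()` is applied to it. The sketch below imitates that pipeline with Python's built-in `filter()` and `map()` on a plain list. It is a local analogy under that assumption, not Spark code, and `words`, `filtered`, and `pairs` are names introduced for illustration.

```python
words = ["My", "student", "ID", "is", "201806120011"]

# Built-in filter() and map() are lazy in Python 3, loosely like RDD
# transformations; list() plays the role of collect() here.
filtered = filter(lambda word: len(word) > 2, words)
pairs = list(map(lambda word: (word, 1), filtered))
print(pairs)

# The source list is unchanged, just as filtering an RDD does not
# modify the original RDD.
print(words)
```

Only the two words longer than 2 characters end up as pairs; the three 2-character words are dropped by the filter step.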

