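ZooKeeper

1. Concepts

1.1 ZooKeeper is essentially a file system plus a notification mechanism

The file system stores data.

The notification mechanism lets clients register watches on that data; the servers notify them when it changes.

ZooKeeper is an open-source distributed service-management framework built on the observer pattern.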

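1.2 Characteristics

A cluster consists of one leader and multiple followers.

The cluster keeps serving as long as more than half of its servers are alive (exactly half is not enough), which is why an odd number of servers is usually installed.

Globally consistent data: every server holds a copy of the same data, so a client sees the same view no matter which server it connects to.

Update requests from the same client are applied in the order they were sent.

Updates are atomic: an update either succeeds or fails as a whole.

Timeliness: within a bounded time window, a client reads the latest data.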
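The majority rule above can be checked with a few lines of arithmetic. The following is a minimal sketch; the class and method names (QuorumCheck, quorumSize, canServe, toleratedFailures) are made up for illustration and are not part of ZooKeeper.

```java
// Minimal sketch: strict-majority (quorum) arithmetic for an ensemble.
// All names here are hypothetical helpers, not ZooKeeper APIs.
final class QuorumCheck {

    // Smallest number of live servers that is strictly more than half.
    static int quorumSize(int ensembleSize) {
        return ensembleSize / 2 + 1;
    }

    // True when the live servers still form a strict majority.
    static boolean canServe(int ensembleSize, int liveServers) {
        return liveServers >= quorumSize(ensembleSize);
    }

    // Number of servers that may fail while the ensemble keeps serving.
    static int toleratedFailures(int ensembleSize) {
        return ensembleSize - quorumSize(ensembleSize);
    }

    public static void main(String[] args) {
        for (int n = 3; n <= 6; n++) {
            System.out.println(n + " servers: quorum=" + quorumSize(n)
                    + ", tolerated failures=" + toleratedFailures(n));
        }
    }
}
```

Four servers tolerate one failure, the same as three, and six tolerate two, the same as five. The extra even-numbered server adds no fault tolerance, which is the usual argument for odd ensemble sizes.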

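The typical flow for tracking cluster membership is:

Each server registers its information on startup (the nodes it creates are all ephemeral).

The client fetches the current list of online servers and registers a watch.

A server node goes offline.

ZooKeeper notifies the client of the server online/offline event.

process(){fetch the server list again and re-register the watch}

Data structure

The data model closely resembles a Unix file system: it is a tree, and each node is called a ZNode. By default a ZNode can store up to 1 MB of data, so it is only suitable for small data.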

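1.3 Use cases

Unified naming service (similar to a domain name service)

Unified configuration management (every node in a cluster should see the same configuration, and changes should propagate quickly)

The configuration is written to a ZNode in ZooKeeper, and every client server watches that ZNode. As soon as the data in the ZNode changes, ZooKeeper notifies each client server.

Unified cluster management (tracking node status in real time)

Node information is written to a ZNode in ZooKeeper; watching that ZNode reveals status changes as they happen.

Dynamic online/offline tracking of server nodes

Soft load balancing (record the number of requests each node has handled and route new requests to the server with the fewest)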

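2. Installation

bin: scripts that start and stop the framework, for both the client and the server

conf: configuration files

docs: documentation

lib: the JAR dependencies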

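2.1 Reading the configuration file

The configuration file has five key parameters:

tickTime = 2000: the heartbeat interval in milliseconds

initLimit = 10: the maximum number of ticks a follower may take for its initial connection to the leader; beyond initLimit * tickTime the connection is treated as failed

syncLimit = 5: the maximum number of ticks allowed for an exchange once the connection is established; beyond syncLimit * tickTime the communication is treated as failed

dataDir: where ZooKeeper stores its data; the sample configuration points at a directory under /tmp, which the system may clean up periodically, so it should be changed

clientPort = 2181: the port clients connect to; it usually does not need to change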
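Because initLimit and syncLimit are counted in ticks, the real time limits only appear after multiplying by tickTime. The sketch below does that arithmetic on zoo.cfg-style text using only the JDK; the class name ZooCfgTimeouts and the method limitMillis are hypothetical names chosen for this example.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

// Minimal sketch: convert tick-based limits from zoo.cfg-style text to milliseconds.
// ZooCfgTimeouts and limitMillis are illustrative names, not ZooKeeper APIs.
final class ZooCfgTimeouts {

    // Returns tickTime * <limitKey> for the given "key=value" configuration text.
    static long limitMillis(String cfgText, String limitKey) {
        Properties cfg = new Properties();
        try {
            cfg.load(new StringReader(cfgText));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        long tickTime = Long.parseLong(cfg.getProperty("tickTime").trim());
        long ticks = Long.parseLong(cfg.getProperty(limitKey).trim());
        return tickTime * ticks;
    }

    public static void main(String[] args) {
        String cfg = String.join("\n",
                "tickTime=2000",
                "initLimit=10",
                "syncLimit=5",
                "clientPort=2181");
        System.out.println("initLimit = " + limitMillis(cfg, "initLimit") + " ms");
        System.out.println("syncLimit = " + limitMillis(cfg, "syncLimit") + " ms");
    }
}
```

With the values listed above, a follower has 20 seconds for its initial connection and 10 seconds per exchange afterwards.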

2.2 Docker installation

docker pull zookeeper

docker run -d -p 2181:2181 --name some-zookeeper --restart always 3487af26dee9

d5c6f857cd88c342acf63dd58e838a4cdf912daa6c8c0115091147136e819307

Visual client

Download address:

https://issues.apache.org/jira/

After extracting the archive, go into the build directory and run:

java -jar zookeeper-dev-ZooInspector.jar

3. ZooKeeper cluster operations

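3.1 Cluster installation

3.1.1 Cluster installation

Suppose ZooKeeper is deployed on three nodes.

That requires three servers.

On each server, extract the ZooKeeper archive and edit its configuration file.

The differences from a single-node setup are as follows.

In the directory created to hold ZooKeeper's data,

create a file named myid (the name must match what the source code expects). It holds the server's unique number.

The number written in the file identifies which server in the cluster this machine is.

Next, run xsync /opt/apache-zookeeper-3.5.7-bin in a terminal; xsync here is a custom distribution script that copies the directory to the other servers.

Append the following to the end of zoo.cfg in the conf directory.

These lines identify each server by number:

server.2=hadoop102:2888:3888

server.3=hadoop103:2888:3888

server.4=hadoop104:2888:3888

Each of these entries has the form server.A=B:C:D

A is the server number

B is the server's address

C is the port a follower uses to exchange information with the leader

D is the election port: if the leader goes down, the servers talk to each other over this port to elect a new leader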
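To make the A/B/C/D fields concrete, here is a small parser for the basic server.A=B:C:D form. It is only a sketch: ServerSpec is a made-up class, and it deliberately ignores the optional suffixes that newer versions accept (for example the ;2181 client port that appears later in ZOO_SERVERS).

```java
// Minimal sketch: split a "server.A=B:C:D" entry into its four fields.
// ServerSpec is a hypothetical helper, not a ZooKeeper class; it handles only
// the basic form and rejects anything else.
final class ServerSpec {
    final int id;            // A: server number (matches the myid file)
    final String host;       // B: server address
    final int quorumPort;    // C: follower <-> leader data exchange
    final int electionPort;  // D: leader election

    ServerSpec(int id, String host, int quorumPort, int electionPort) {
        this.id = id;
        this.host = host;
        this.quorumPort = quorumPort;
        this.electionPort = electionPort;
    }

    static ServerSpec parse(String line) {
        String[] kv = line.trim().split("=", 2);
        if (kv.length != 2 || !kv[0].startsWith("server.")) {
            throw new IllegalArgumentException("not a server entry: " + line);
        }
        int id = Integer.parseInt(kv[0].substring("server.".length()));
        String[] parts = kv[1].split(":");
        if (parts.length != 3) {
            throw new IllegalArgumentException("expected host:port:port in: " + line);
        }
        return new ServerSpec(id, parts[0],
                Integer.parseInt(parts[1]), Integer.parseInt(parts[2]));
    }

    public static void main(String[] args) {
        ServerSpec s = parse("server.2=hadoop102:2888:3888");
        System.out.println("id=" + s.id + " host=" + s.host
                + " quorumPort=" + s.quorumPort + " electionPort=" + s.electionPort);
    }
}
```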

Once the configuration file and the server numbers are in place, the cluster can be started.

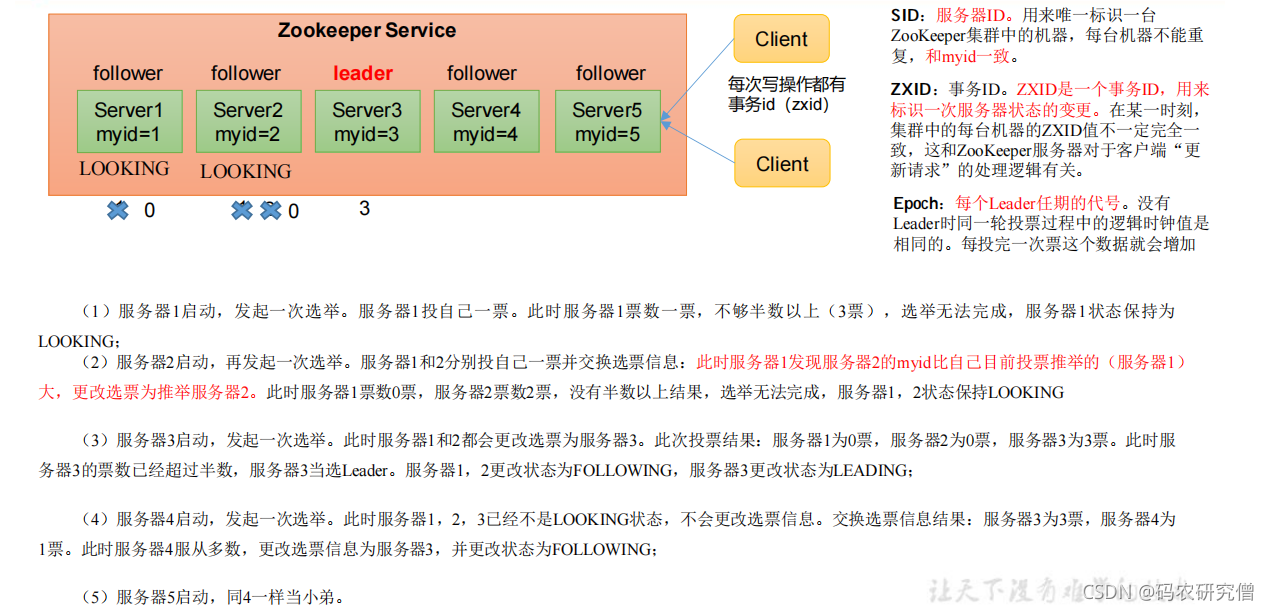

3.1.2 选举机制

以下主要是面试的重点

区分好第一次启动与非第一次启动的步骤

epoch在没有leader的时候,主要是逻辑时钟(与数字电路中的逻辑时钟相同)

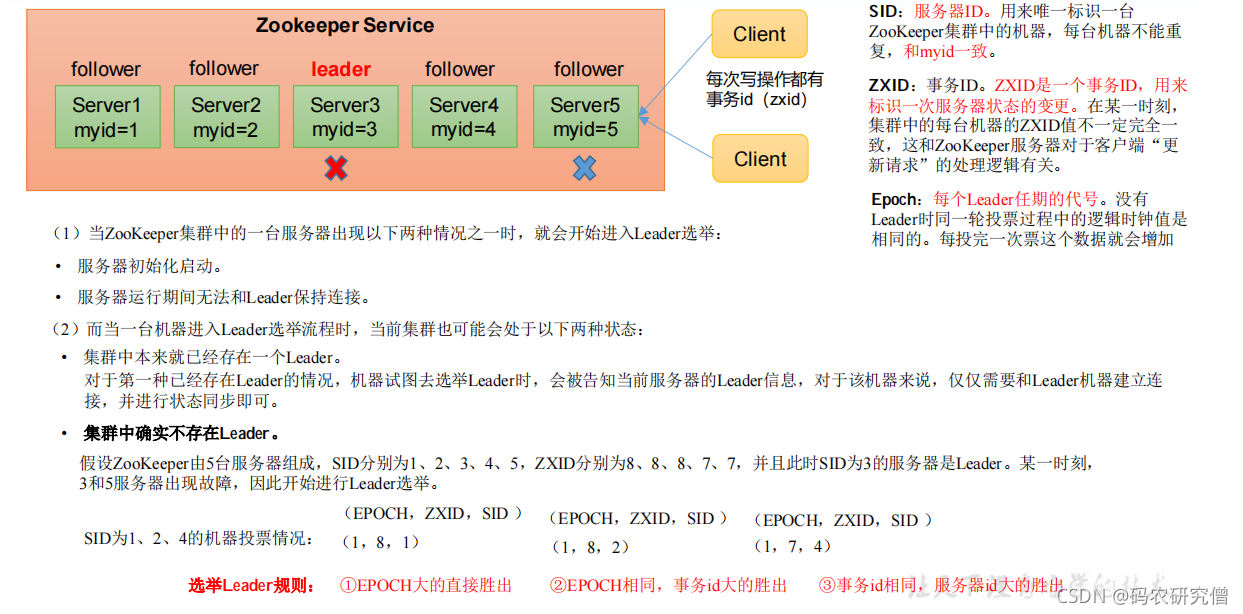

非第一次启动,在没有leader的时候

其判断依据是:epoch任职期>事务id>服务器sid
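The comparison order epoch > zxid > sid maps directly onto a chained comparator. The sketch below is a simplification under that single rule; the Vote class is hypothetical, and the real election also tracks election rounds and server states, which are left out here.

```java
import java.util.Arrays;
import java.util.Collections;
import java.util.Comparator;
import java.util.List;

// Minimal sketch of the vote ordering only: epoch first, then zxid, then sid.
// Vote is an illustrative class, not ZooKeeper's own vote type.
final class Vote {
    final long epoch;
    final long zxid;
    final long sid;

    Vote(long epoch, long zxid, long sid) {
        this.epoch = epoch;
        this.zxid = zxid;
        this.sid = sid;
    }

    // A "greater" vote under this ordering is the preferred candidate.
    static final Comparator<Vote> ORDER = Comparator
            .comparingLong((Vote v) -> v.epoch)
            .thenComparingLong(v -> v.zxid)
            .thenComparingLong(v -> v.sid);

    // Picks the preferred candidate from a non-empty list of votes.
    static Vote best(List<Vote> votes) {
        return Collections.max(votes, ORDER);
    }

    public static void main(String[] args) {
        List<Vote> votes = Arrays.asList(
                new Vote(1, 8, 1),
                new Vote(1, 8, 3),
                new Vote(1, 7, 5));
        Vote winner = best(votes);
        System.out.println("preferred sid = " + winner.sid);
    }
}
```

In the example, the server with sid 5 loses despite the largest sid because its zxid is smaller; sid only breaks ties between votes with equal epoch and zxid.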

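3.1.3 Cluster start/stop script

With many servers, starting and stopping them one at a time is tedious.

The script looks roughly like this.

Create a file with the .sh extension (zk.sh here):

#!/bin/bash

# Usage: zk.sh start|stop|status
case $1 in
"start"){
    for i in hadoop102 hadoop103 hadoop104
    do
        echo ---------- zookeeper $i start ------------
        ssh $i "/opt/module/zookeeper-3.5.7/bin/zkServer.sh start"
    done
};;
"stop"){
    for i in hadoop102 hadoop103 hadoop104
    do
        echo ---------- zookeeper $i stop ------------
        ssh $i "/opt/module/zookeeper-3.5.7/bin/zkServer.sh stop"
    done
};;
"status"){
    for i in hadoop102 hadoop103 hadoop104
    do
        echo ---------- zookeeper $i status ------------
        ssh $i "/opt/module/zookeeper-3.5.7/bin/zkServer.sh status"
    done
};;
esac

Change the file's permissions so it can be executed:

chmod 777 zk.sh

Then run ./zk.sh start on one server to start the whole cluster; the other subcommands work the same way.

To check the process IDs,

jpsall shows the processes on all servers (jpsall here is a custom helper script).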

3.1.4 docker安装

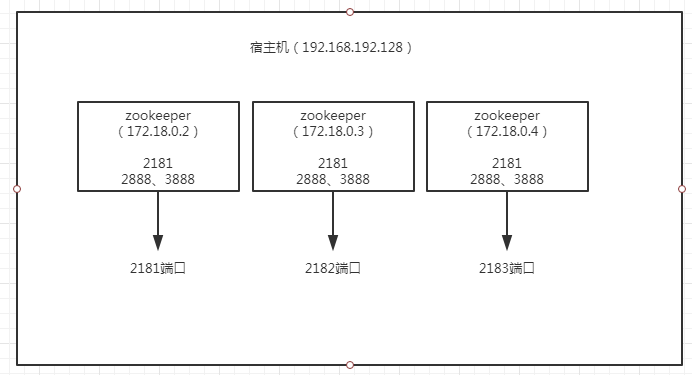

环境:单台宿主机(192.168.192.128),启动三个zookeeper容器。

这里涉及一个问题,就是Docker容器之间通信的问题,这个很重要!

Docker有三种网络模式,bridge、host、none,在你创建容器的时候,不指定--network默认是bridge。

bridge:为每一个容器分配IP,并将容器连接到一个docker0虚拟网桥,通过docker0网桥与宿主机通信。也就是说,此模式下,你不能用宿主机的IP+容器映射端口来进行Docker容器之间的通信。

host:容器不会虚拟自己的网卡,配置自己的IP,而是使用宿主机的IP和端口。这样一来,Docker容器之间的通信就可以用宿主机的IP+容器映射端口

none:无网络。

先在本地创建目录:

[root@localhost admin]# mkdir /usr/local/zookeeper-cluster

[root@localhost admin]# mkdir /usr/local/zookeeper-cluster/node1

[root@localhost admin]# mkdir /usr/local/zookeeper-cluster/node2

[root@localhost admin]# mkdir /usr/local/zookeeper-cluster/node3

[root@localhost admin]# ll /usr/local/zookeeper-cluster/

total 0

drwxr-xr-x. 2 root root 6 Aug 28 23:02 node1

drwxr-xr-x. 2 root root 6 Aug 28 23:02 node2

drwxr-xr-x. 2 root root 6 Aug 28 23:02 node3

Then run the following commands to start the containers:

docker run -d -p 2181:2181 -p 2888:2888 -p 3888:3888 --name zookeeper_node1 --privileged --restart always \

-v /usr/local/zookeeper-cluster/node1/volumes/data:/data \

-v /usr/local/zookeeper-cluster/node1/volumes/datalog:/datalog \

-v /usr/local/zookeeper-cluster/node1/volumes/logs:/logs \

-e ZOO_MY_ID=1 \

-e "ZOO_SERVERS=server.1=192.168.192.128:2888:3888;2181 server.2=192.168.192.128:2889:3889;2182 server.3=192.168.192.128:2890:3890;2183" 3487af26dee9

docker run -d -p 2182:2181 -p 2889:2888 -p 3889:3888 --name zookeeper_node2 --privileged --restart always \

-v /usr/local/zookeeper-cluster/node2/volumes/data:/data \

-v /usr/local/zookeeper-cluster/node2/volumes/datalog:/datalog \

-v /usr/local/zookeeper-cluster/node2/volumes/logs:/logs \

-e ZOO_MY_ID=2 \

-e "ZOO_SERVERS=server.1=192.168.192.128:2888:3888;2181 server.2=192.168.192.128:2889:3889;2182 server.3=192.168.192.128:2890:3890;2183" 3487af26dee9

docker run -d -p 2183:2181 -p 2890:2888 -p 3890:3888 --name zookeeper_node3 --privileged --restart always \

-v /usr/local/zookeeper-cluster/node3/volumes/data:/data \

-v /usr/local/zookeeper-cluster/node3/volumes/datalog:/datalog \

-v /usr/local/zookeeper-cluster/node3/volumes/logs:/logs \

-e ZOO_MY_ID=3 \

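-e "ZOO_SERVERS=server.1=192.168.192.128:2888:3888;2181 server.2=192.168.192.128:2889:3889;2182 server.3=192.168.192.128:2890:3890;2183" 3487af26dee9

[Pitfall]

At first glance this looks fine: the ports are mapped to the host, and the three ZooKeeper instances address each other as host IP:mapped port.

The network modes described above show the problem: the IPs in ZOO_SERVERS are wrong. This mistake comes from not understanding Docker's network modes; the details follow.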

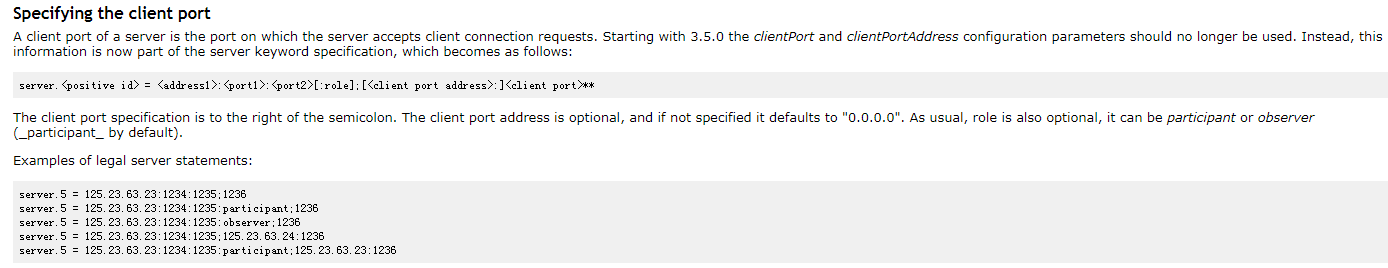

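About ZOO_SERVERS

Starting with 3.5.0, the clientPort and clientPortAddress configuration parameters should no longer be used. This information is now part of the server keyword specification.

The three containers map different host ports (2181/2182/2183) because they share one host and the ports must not collide. If the servers run on different machines, the ports do not need to change.

The last argument is the image ID; an image name:TAG works as well.

--privileged=true works around "chown: changing ownership of '/data': Permission denied"; the "=true" can be omitted.

Result: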

[root@localhost admin]# docker run -d -p 2181:2181 -p 2888:2888 -p 3888:3888 --name zookeeper_node1 --privileged --restart always \

> -v /usr/local/zookeeper-cluster/node1/volumes/data:/data \

> -v /usr/local/zookeeper-cluster/node1/volumes/datalog:/datalog \

> -v /usr/local/zookeeper-cluster/node1/volumes/logs:/logs \

> -e ZOO_MY_ID=1 \

> -e "ZOO_SERVERS=server.1=192.168.192.128:2888:3888;2181 server.2=192.168.192.128:2889:3889;2182 server.3=192.168.192.128:2890:3890;2183" 3487af26dee9

4bfa6bbeb936037e178a577e5efbd06d4a963e91d67274413b933fd189917776

[root@localhost admin]# docker run -d -p 2182:2181 -p 2889:2888 -p 3889:3888 --name zookeeper_node2 --privileged --restart always \

> -v /usr/local/zookeeper-cluster/node2/volumes/data:/data \

> -v /usr/local/zookeeper-cluster/node2/volumes/datalog:/datalog \

> -v /usr/local/zookeeper-cluster/node2/volumes/logs:/logs \

> -e ZOO_MY_ID=2 \

> -e "ZOO_SERVERS=server.1=192.168.192.128:2888:3888;2181 server.2=192.168.192.128:2889:3889;2182 server.3=192.168.192.128:2890:3890;2183" 3487af26dee9

dbb7f1f323a09869d043152a4995e73bad5f615fd81bf11143fd1c28180f9869

[root@localhost admin]# docker run -d -p 2183:2181 -p 2890:2888 -p 3890:3888 --name zookeeper_node3 --privileged --restart always \

> -v /usr/local/zookeeper-cluster/node3/volumes/data:/data \

> -v /usr/local/zookeeper-cluster/node3/volumes/datalog:/datalog \

> -v /usr/local/zookeeper-cluster/node3/volumes/logs:/logs \

> -e ZOO_MY_ID=3 \

> -e "ZOO_SERVERS=server.1=192.168.192.128:2888:3888;2181 server.2=192.168.192.128:2889:3889;2182 server.3=192.168.192.128:2890:3890;2183" 3487af26dee9

6dabae1d92f0e861cc7515c014c293f80075c2762b254fc56312a6d3b450a919

[root@localhost admin]#

List the running containers:

[root@localhost admin]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

6dabae1d92f0 3487af26dee9 "/docker-entrypoin..." 31 seconds ago Up 29 seconds 8080/tcp, 0.0.0.0:2183->2181/tcp, 0.0.0.0:2890->2888/tcp, 0.0.0.0:3890->3888/tcp zookeeper_node3

dbb7f1f323a0 3487af26dee9 "/docker-entrypoin..." 36 seconds ago Up 35 seconds 8080/tcp, 0.0.0.0:2182->2181/tcp, 0.0.0.0:2889->2888/tcp, 0.0.0.0:3889->3888/tcp zookeeper_node2

4bfa6bbeb936 3487af26dee9 "/docker-entrypoin..." 46 seconds ago Up 45 seconds 0.0.0.0:2181->2181/tcp, 0.0.0.0:2888->2888/tcp, 0.0.0.0:3888->3888/tcp, 8080/tcp zookeeper_node1

[root@localhost admin]#

An error was promised, yet every container started. Look at the startup log of node 1:

[root@localhost admin]# docker logs -f 4bfa6bbeb936

ZooKeeper JMX enabled by default

...

2019-08-29 09:20:22,665 [myid:1] - WARN [WorkerSender[myid=1]:QuorumCnxManager@677] - Cannot open channel to 2 at election address /192.168.192.128:3889

java.net.ConnectException: Connection refused (Connection refused)

at java.net.PlainSocketImpl.socketConnect(Native Method)

at java.net.AbstractPlainSocketImpl.doConnect(AbstractPlainSocketImpl.java:350)

at java.net.AbstractPlainSocketImpl.connectToAddress(AbstractPlainSocketImpl.java:206)

at java.net.AbstractPlainSocketImpl.connect(AbstractPlainSocketImpl.java:188)

at java.net.SocksSocketImpl.connect(SocksSocketImpl.java:392)

at java.net.Socket.connect(Socket.java:589)

at org.apache.zookeeper.server.quorum.QuorumCnxManager.connectOne(QuorumCnxManager.java:648)

at org.apache.zookeeper.server.quorum.QuorumCnxManager.connectOne(QuorumCnxManager.java:705)

at org.apache.zookeeper.server.quorum.QuorumCnxManager.toSend(QuorumCnxManager.java:618)

at org.apache.zookeeper.server.quorum.FastLeaderElection$Messenger$WorkerSender.process(FastLeaderElection.java:477)

at org.apache.zookeeper.server.quorum.FastLeaderElection$Messenger$WorkerSender.run(FastLeaderElection.java:456)

at java.lang.Thread.run(Thread.java:748)

2019-08-29 09:20:22,666 [myid:1] - WARN [WorkerSender[myid=1]:QuorumCnxManager@677] - Cannot open channel to 3 at election address /192.168.192.128:3890

java.net.ConnectException: Connection refused (Connection refused)

at java.net.PlainSocketImpl.socketConnect(Native Method)

at java.net.AbstractPlainSocketImpl.doConnect(AbstractPlainSocketImpl.java:350)

at java.net.AbstractPlainSocketImpl.connectToAddress(AbstractPlainSocketImpl.java:206)

at java.net.AbstractPlainSocketImpl.connect(AbstractPlainSocketImpl.java:188)

at java.net.SocksSocketImpl.connect(SocksSocketImpl.java:392)

at java.net.Socket.connect(Socket.java:589)

at org.apache.zookeeper.server.quorum.QuorumCnxManager.connectOne(QuorumCnxManager.java:648)

at org.apache.zookeeper.server.quorum.QuorumCnxManager.connectOne(QuorumCnxManager.java:705)

at org.apache.zookeeper.server.quorum.QuorumCnxManager.toSend(QuorumCnxManager.java:618)

at org.apache.zookeeper.server.quorum.FastLeaderElection$Messenger$WorkerSender.process(FastLeaderElection.java:477)

at org.apache.zookeeper.server.quorum.FastLeaderElection$Messenger$WorkerSender.run(FastLeaderElection.java:456)

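at java.lang.Thread.run(Thread.java:748)

Node 1 cannot connect to nodes 2 and 3. In Docker's default network mode, the peers cannot be found at host IP + mapped port, because each container has its own IP, as shown below: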

[root@localhost admin]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

6dabae1d92f0 3487af26dee9 "/docker-entrypoin..." 5 minutes ago Up 5 minutes 8080/tcp, 0.0.0.0:2183->2181/tcp, 0.0.0.0:2890->2888/tcp, 0.0.0.0:3890->3888/tcp zookeeper_node3

dbb7f1f323a0 3487af26dee9 "/docker-entrypoin..." 6 minutes ago Up 6 minutes 8080/tcp, 0.0.0.0:2182->2181/tcp, 0.0.0.0:2889->2888/tcp, 0.0.0.0:3889->3888/tcp zookeeper_node2

4bfa6bbeb936 3487af26dee9 "/docker-entrypoin..." 6 minutes ago Up 6 minutes 0.0.0.0:2181->2181/tcp, 0.0.0.0:2888->2888/tcp, 0.0.0.0:3888->3888/tcp, 8080/tcp zookeeper_node1

[root@localhost admin]# docker inspect 4bfa6bbeb936

"Networks": {

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"NetworkID": "5fc1ce4362afe3d34fdf260ab0174c36fe4b7daf2189702eae48101a755079f3",

"EndpointID": "368237e4c903cc663111f1fe33ac4626a9100fb5a22aec85f5eccbc6968a1631",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.2",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"MacAddress": "02:42:ac:11:00:02"

}

}

}

}

]

[root@localhost admin]# docker inspect dbb7f1f323a0

"Networks": {

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"NetworkID": "5fc1ce4362afe3d34fdf260ab0174c36fe4b7daf2189702eae48101a755079f3",

"EndpointID": "8a9734044a566d5ddcd7cbbf6661abb2730742f7c73bd8733ede9ed8ef106659",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.3",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"MacAddress": "02:42:ac:11:00:03"

}

}

}

}

]

[root@localhost admin]# docker inspect 6dabae1d92f0

"Networks": {

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"NetworkID": "5fc1ce4362afe3d34fdf260ab0174c36fe4b7daf2189702eae48101a755079f3",

"EndpointID": "b10329b9940a07aacb016d8d136511ec388de02bf3bd0e0b50f7f4cbb7f138ec",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.4",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"MacAddress": "02:42:ac:11:00:04"

}

}

}

}

]node2---172.17.0.3

node3---172.17.0.4

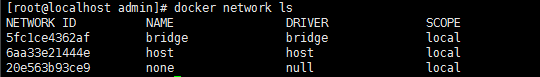

既然我们知道了它有自己的IP,那又出现另一个问题了,就是它的ip是动态的,启动之前我们无法得知。有个解决办法就是创建自己的bridge网络,然后创建容器的时候指定ip。

【正确方式开始】

[root@localhost admin]# docker network create --driver bridge --subnet=172.18.0.0/16 --gateway=172.18.0.1 zoonet

8257c501652a214d27efdf5ef71ff38bfe222c3a2a7898be24b8df9db1fb3b13

[root@localhost admin]# docker network ls

NETWORK ID NAME DRIVER SCOPE

5fc1ce4362af bridge bridge local

6aa33e21444e host host local

20e563b93ce9 none null local

8257c501652a zoonet bridge local

[root@localhost admin]# docker network inspect 8257c501652a

[

{

"Name": "zoonet",

"Id": "8257c501652a214d27efdf5ef71ff38bfe222c3a2a7898be24b8df9db1fb3b13",

"Created": "2019-08-29T06:08:01.442601483-04:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": {},

"Config": [

{

"Subnet": "172.18.0.0/16",

"Gateway": "172.18.0.1"

}

]

},

"Internal": false,

"Attachable": false,

"Containers": {},

"Options": {},

"Labels": {}

}

]

Next, change the commands that create the ZooKeeper containers.

docker run -d -p 2181:2181 --name zookeeper_node1 --privileged --restart always --network zoonet --ip 172.18.0.2 \
-v /usr/local/zookeeper-cluster/node1/volumes/data:/data \
-v /usr/local/zookeeper-cluster/node1/volumes/datalog:/datalog \
-v /usr/local/zookeeper-cluster/node1/volumes/logs:/logs \
-e ZOO_MY_ID=1 \
-e "ZOO_SERVERS=server.1=172.18.0.2:2888:3888;2181 server.2=172.18.0.3:2888:3888;2181 server.3=172.18.0.4:2888:3888;2181" 3487af26dee9

docker run -d -p 2182:2181 --name zookeeper_node2 --privileged --restart always --network zoonet --ip 172.18.0.3 \
-v /usr/local/zookeeper-cluster/node2/volumes/data:/data \
-v /usr/local/zookeeper-cluster/node2/volumes/datalog:/datalog \
-v /usr/local/zookeeper-cluster/node2/volumes/logs:/logs \
-e ZOO_MY_ID=2 \
-e "ZOO_SERVERS=server.1=172.18.0.2:2888:3888;2181 server.2=172.18.0.3:2888:3888;2181 server.3=172.18.0.4:2888:3888;2181" 3487af26dee9

docker run -d -p 2183:2181 --name zookeeper_node3 --privileged --restart always --network zoonet --ip 172.18.0.4 \
-v /usr/local/zookeeper-cluster/node3/volumes/data:/data \
-v /usr/local/zookeeper-cluster/node3/volumes/datalog:/datalog \
-v /usr/local/zookeeper-cluster/node3/volumes/logs:/logs \
-e ZOO_MY_ID=3 \
-e "ZOO_SERVERS=server.1=172.18.0.2:2888:3888;2181 server.2=172.18.0.3:2888:3888;2181 server.3=172.18.0.4:2888:3888;2181" 3487af26dee9

1. Ports 2888 and 3888 do not need to be exposed, so they are not mapped.

2. The containers join the custom network, each with a fixed IP.

3. Each container's environment is isolated, so all of them use the same ports internally: 2181/2888/3888.

Result:

[root@localhost admin]# docker run -d -p 2181:2181 --name zookeeper_node1 --privileged --restart always --network zoonet --ip 172.18.0.2 \

> -v /usr/local/zookeeper-cluster/node1/volumes/data:/data \

> -v /usr/local/zookeeper-cluster/node1/volumes/datalog:/datalog \

> -v /usr/local/zookeeper-cluster/node1/volumes/logs:/logs \

> -e ZOO_MY_ID=1 \

> -e "ZOO_SERVERS=server.1=172.18.0.2:2888:3888;2181 server.2=172.18.0.3:2888:3888;2181 server.3=172.18.0.4:2888:3888;2181" 3487af26dee9

50c07cf11fab2d3b4da6d8ce48d8ed4a7beaab7d51dd542b8309f781e9920c36

[root@localhost admin]# docker run -d -p 2182:2181 --name zookeeper_node2 --privileged --restart always --network zoonet --ip 172.18.0.3 \

> -v /usr/local/zookeeper-cluster/node2/volumes/data:/data \

> -v /usr/local/zookeeper-cluster/node2/volumes/datalog:/datalog \

> -v /usr/local/zookeeper-cluster/node2/volumes/logs:/logs \

> -e ZOO_MY_ID=2 \

> -e "ZOO_SERVERS=server.1=172.18.0.2:2888:3888;2181 server.2=172.18.0.3:2888:3888;2181 server.3=172.18.0.4:2888:3888;2181" 3487af26dee9

649a4dbfb694504acfe4b8e11b990877964477bb41f8a230bd191cba7d20996f

[root@localhost admin]# docker run -d -p 2183:2181 --name zookeeper_node3 --privileged --restart always --network zoonet --ip 172.18.0.4 \

> -v /usr/local/zookeeper-cluster/node3/volumes/data:/data \

> -v /usr/local/zookeeper-cluster/node3/volumes/datalog:/datalog \

> -v /usr/local/zookeeper-cluster/node3/volumes/logs:/logs \

> -e ZOO_MY_ID=3 \

> -e "ZOO_SERVERS=server.1=172.18.0.2:2888:3888;2181 server.2=172.18.0.3:2888:3888;2181 server.3=172.18.0.4:2888:3888;2181" 3487af26dee9

c8bc1b9ae9adf86e9c7f6a3264f883206c6d0e4f6093db3200de80ef39f57160

[root@localhost admin]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

c8bc1b9ae9ad 3487af26dee9 "/docker-entrypoin..." 17 seconds ago Up 16 seconds 2888/tcp, 3888/tcp, 8080/tcp, 0.0.0.0:2183->2181/tcp zookeeper_node3

649a4dbfb694 3487af26dee9 "/docker-entrypoin..." 22 seconds ago Up 21 seconds 2888/tcp, 3888/tcp, 8080/tcp, 0.0.0.0:2182->2181/tcp zookeeper_node2

50c07cf11fab 3487af26dee9 "/docker-entrypoin..." 33 seconds ago Up 32 seconds 2888/tcp, 3888/tcp, 0.0.0.0:2181->2181/tcp, 8080/tcp zookeeper_node1

[root@localhost admin]#

Check from inside the containers:

[root@localhost admin]# docker exec -it 50c07cf11fab bash

root@50c07cf11fab:/apache-zookeeper-3.5.5-bin# ./bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /conf/zoo.cfg

Client port found: 2181. Client address: localhost.

Mode: follower

root@50c07cf11fab:/apache-zookeeper-3.5.5-bin# exit

exit

[root@localhost admin]# docker exec -it 649a4dbfb694 bash

root@649a4dbfb694:/apache-zookeeper-3.5.5-bin# ./bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /conf/zoo.cfg

Client port found: 2181. Client address: localhost.

Mode: leader

root@649a4dbfb694:/apache-zookeeper-3.5.5-bin# exit

exit

[root@localhost admin]# docker exec -it c8bc1b9ae9ad bash

root@c8bc1b9ae9ad:/apache-zookeeper-3.5.5-bin# ./bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /conf/zoo.cfg

Client port found: 2181. Client address: localhost.

Mode: follower

root@c8bc1b9ae9ad:/apache-zookeeper-3.5.5-bin# exit

exit

[root@localhost admin]#

Next, verify that nodes can be created.

Open the ports in the firewall so the cluster can be reached from outside:

firewall-cmd --zone=public --add-port=2181/tcp --permanent

firewall-cmd --zone=public --add-port=2182/tcp --permanent

firewall-cmd --zone=public --add-port=2183/tcp --permanent

systemctl restart firewalld

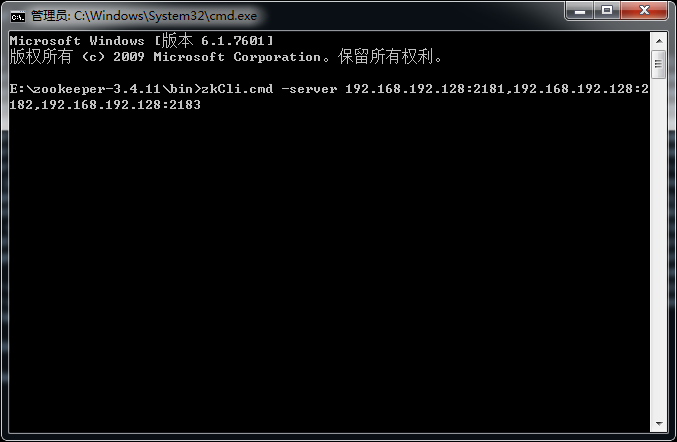

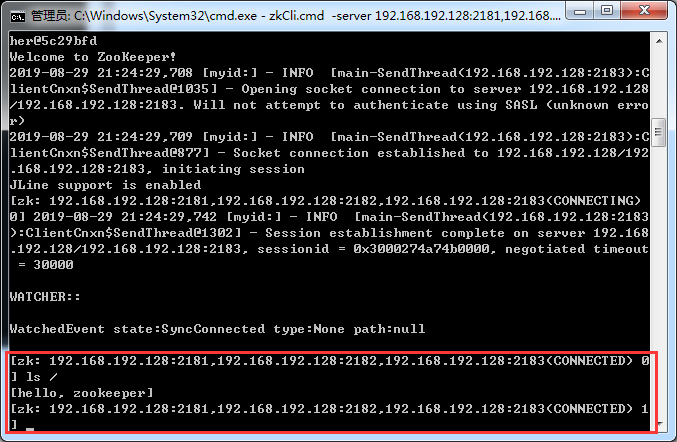

firewall-cmd --list-all在本地,我用zookeeper的客户端连接虚拟机上的集群:

可以看到连接成功!

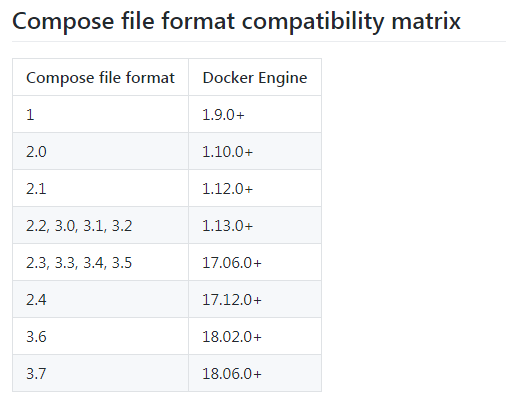

集群安装方式二:通过docker stack deploy或docker-compose安装

这里用docker-compose。先安装docker-compose

[root@localhost admin]# curl -L "https://github.com/docker/compose/releases/download/1.24.1/docker-compose-$(uname -s)-$(uname -m)" -o /usr/local/bin/docker-compose

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 617 0 617 0 0 145 0 --:--:-- 0:00:04 --:--:-- 145

100 15.4M 100 15.4M 0 0 131k 0 0:02:00 0:02:00 --:--:-- 136k

[root@localhost admin]# chmod +x /usr/local/bin/docker-compose

Check the version (to verify the installation):

[root@localhost admin]# docker-compose --version

docker-compose version 1.24.1, build 4667896b

To uninstall:

rm /usr/local/bin/docker-compose

To start configuring, create three mount directories:

[root@localhost admin]# mkdir /usr/local/zookeeper-cluster/node4

[root@localhost admin]# mkdir /usr/local/zookeeper-cluster/node5

[root@localhost admin]# mkdir /usr/local/zookeeper-cluster/node6

Create a directory of your choice and a new file inside it:

[root@localhost admin]# mkdir DockerComposeFolder

[root@localhost admin]# cd DockerComposeFolder/

[root@localhost DockerComposeFolder]# vim docker-compose.yml

The file content is as follows (the custom network is the one created above):

version: '3.1'
services:
  zoo1:
    image: zookeeper
    restart: always
    privileged: true
    hostname: zoo1
    ports:
      - 2181:2181
    volumes: # mount the data directories
      - /usr/local/zookeeper-cluster/node4/data:/data
      - /usr/local/zookeeper-cluster/node4/datalog:/datalog
    environment:
      ZOO_MY_ID: 4
      ZOO_SERVERS: server.4=0.0.0.0:2888:3888;2181 server.5=zoo2:2888:3888;2181 server.6=zoo3:2888:3888;2181
    networks:
      default:
        ipv4_address: 172.18.0.14
  zoo2:
    image: zookeeper
    restart: always
    privileged: true
    hostname: zoo2
    ports:
      - 2182:2181
    volumes: # mount the data directories
      - /usr/local/zookeeper-cluster/node5/data:/data
      - /usr/local/zookeeper-cluster/node5/datalog:/datalog
    environment:
      ZOO_MY_ID: 5
      ZOO_SERVERS: server.4=zoo1:2888:3888;2181 server.5=0.0.0.0:2888:3888;2181 server.6=zoo3:2888:3888;2181
    networks:
      default:
        ipv4_address: 172.18.0.15
  zoo3:
    image: zookeeper
    restart: always
    privileged: true
    hostname: zoo3
    ports:
      - 2183:2181
    volumes: # mount the data directories
      - /usr/local/zookeeper-cluster/node6/data:/data
      - /usr/local/zookeeper-cluster/node6/datalog:/datalog
    environment:
      ZOO_MY_ID: 6
      ZOO_SERVERS: server.4=zoo1:2888:3888;2181 server.5=zoo2:2888:3888;2181 server.6=0.0.0.0:2888:3888;2181
    networks:
      default:
        ipv4_address: 172.18.0.16
networks: # custom network
  default:
    external:
      name: zoonet

Note that a YAML file must not contain tabs, only spaces.

The relationship between the Compose file version and the Docker version is as follows:

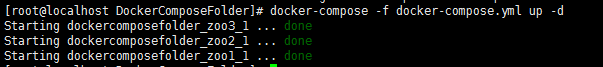

Then run it (-d starts in the background):

docker-compose -f docker-compose.yml up -d

List the started containers:

[root@localhost DockerComposeFolder]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

a2c14814037d zookeeper "/docker-entrypoin..." 6 minutes ago Up About a minute 2888/tcp, 3888/tcp, 8080/tcp, 0.0.0.0:2183->2181/tcp dockercomposefolder_zoo3_1

50310229b216 zookeeper "/docker-entrypoin..." 6 minutes ago Up About a minute 2888/tcp, 3888/tcp, 0.0.0.0:2181->2181/tcp, 8080/tcp dockercomposefolder_zoo1_1

475d8a9e2d08 zookeeper "/docker-entrypoin..." 6 minutes ago Up About a minute 2888/tcp, 3888/tcp, 8080/tcp, 0.0.0.0:2182->2181/tcp dockercomposefolder_zoo2_1

Enter one of the containers:

[root@localhost DockerComposeFolder]# docker exec -it a2c14814037d bash

root@zoo3:/apache-zookeeper-3.5.5-bin# ./bin/zkCli.sh

Connecting to localhost:2181

....

WatchedEvent state:SyncConnected type:None path:null

[zk: localhost:2181(CONNECTED) 0]

[zk: localhost:2181(CONNECTED) 1] ls /

[zookeeper]

[zk: localhost:2181(CONNECTED) 2] create /hi

Created /hi

[zk: localhost:2181(CONNECTED) 3] ls /

[hi, zookeeper]

Enter another container:

[root@localhost DockerComposeFolder]# docker exec -it 50310229b216 bash

root@zoo1:/apache-zookeeper-3.5.5-bin# ./bin/zkCli.sh

Connecting to localhost:2181

...

WatchedEvent state:SyncConnected type:None path:null

[zk: localhost:2181(CONNECTED) 0] ls /

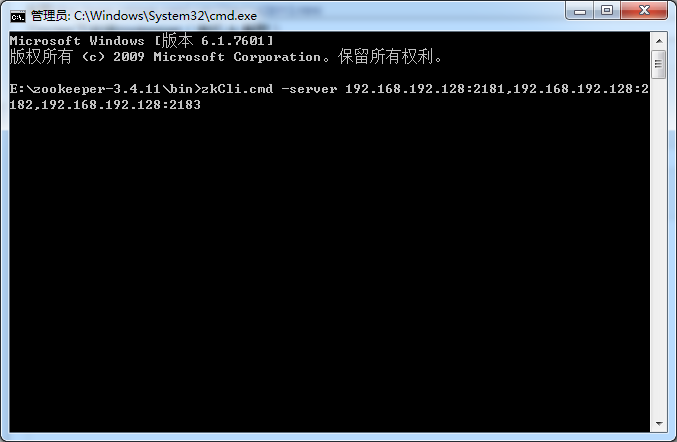

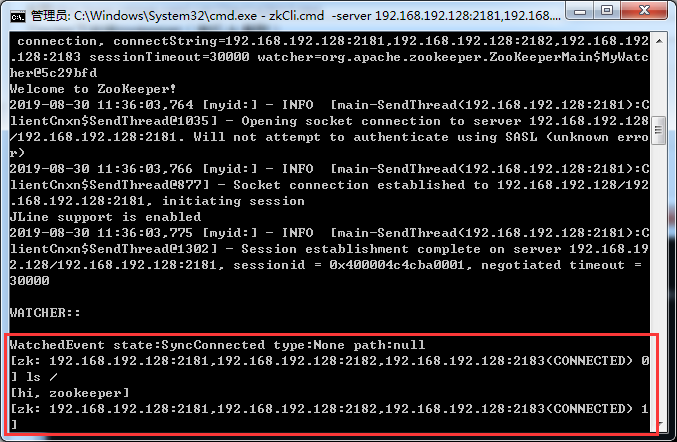

[hi, zookeeper]本地客户端连接集群:

zkCli.cmd -server 192.168.192.128:2181,192.168.192.128:2182,192.168.192.128:2183

查看

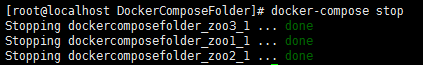

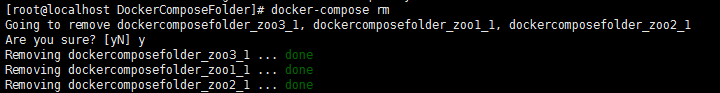

停止所有活动容器

删除所有已停止的容器

更多docker-compose的命令:

[root@localhost DockerComposeFolder]# docker-compose --help

Define and run multi-container applications with Docker.

Usage:

docker-compose [-f <arg>...] [options] [COMMAND] [ARGS...]

docker-compose -h|--help

Options:

-f, --file FILE Specify an alternate compose file

(default: docker-compose.yml)

-p, --project-name NAME Specify an alternate project name

(default: directory name)

--verbose Show more output

--log-level LEVEL Set log level (DEBUG, INFO, WARNING, ERROR, CRITICAL)

--no-ansi Do not print ANSI control characters

-v, --version Print version and exit

-H, --host HOST Daemon socket to connect to

--tls Use TLS; implied by --tlsverify

--tlscacert CA_PATH Trust certs signed only by this CA

--tlscert CLIENT_CERT_PATH Path to TLS certificate file

--tlskey TLS_KEY_PATH Path to TLS key file

--tlsverify Use TLS and verify the remote

--skip-hostname-check Don't check the daemon's hostname against the

name specified in the client certificate

--project-directory PATH Specify an alternate working directory

(default: the path of the Compose file)

--compatibility If set, Compose will attempt to convert keys

in v3 files to their non-Swarm equivalent

Commands:

build Build or rebuild services

bundle Generate a Docker bundle from the Compose file

config Validate and view the Compose file

create Create services

down Stop and remove containers, networks, images, and volumes

events Receive real time events from containers

exec Execute a command in a running container

help Get help on a command

images List images

kill Kill containers

logs View output from containers

pause Pause services

port Print the public port for a port binding

ps List containers

pull Pull service images

push Push service images

restart Restart services

rm Remove stopped containers

run Run a one-off command

scale Set number of containers for a service

start Start services

stop Stop services

top Display the running processes

unpause Unpause services

up Create and start containers

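version Show the Docker-Compose version information

3.2 Client commands

3.2.1 Common commands

| Command | Function |
| --- | --- |
| help | Show all available commands |
| ls path | List the children of a znode (watchable); -w watches for changes to the children, -s adds the node's status information |
| create | Create a regular node; -s adds a sequence number, -e makes the node ephemeral (it disappears on restart or session timeout) |
| get path | Get the value of a node (watchable); -w watches for changes to the node's data, -s adds the node's status information |
| set | Set the value of a node |
| stat | View the status of a node |
| delete | Delete a node |
| deleteall | Delete a node recursively |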

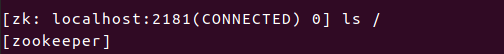

Use ls / to see what the root znode contains:

[zk: localhost:2181(CONNECTED) 0] ls /

[zookeeper]

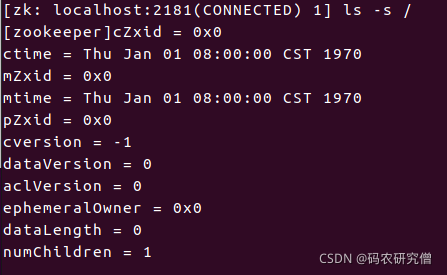

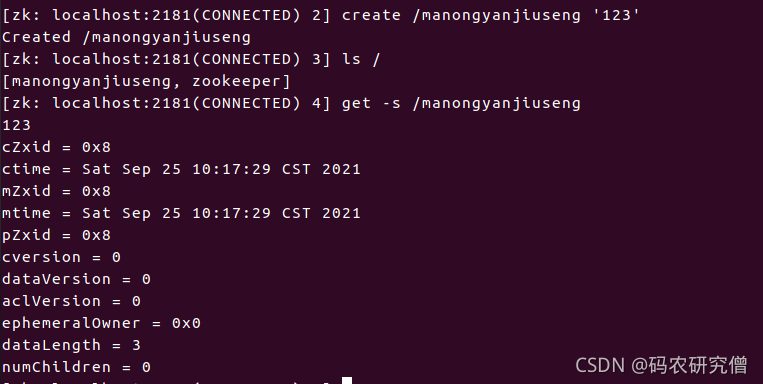

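To view the detailed information of a node, use ls -s /

| Name | Description |
| --- | --- |
| cZxid | The zxid of the transaction that created the node. Every change to ZooKeeper's state produces a ZooKeeper transaction ID (zxid), which gives a total order over all changes. Each change has a unique zxid; if zxid1 is smaller than zxid2, zxid1 happened before zxid2. |
| ctime | Time the znode was created, in milliseconds since 1970 |
| mZxid | The zxid of the last update to the znode |
| mtime | Time the znode was last modified, in milliseconds since 1970 |
| pZxid | The zxid of the last change to the znode's children |
| cversion | Child version: the number of changes to the znode's children |
| dataVersion | Data version: the number of changes to the znode's data |
| aclVersion | ACL version: the number of changes to the znode's access control list |
| ephemeralOwner | For an ephemeral node, the session ID of the znode's owner; 0 otherwise |
| dataLength | Length of the znode's data |
| numChildren | Number of children of the znode |

To connect to a specific server,

start the client with the -server option: ./zkCli.sh -server hostname:2181

The author starts it the same way: ./zkCli.sh -server hadoop102:2181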

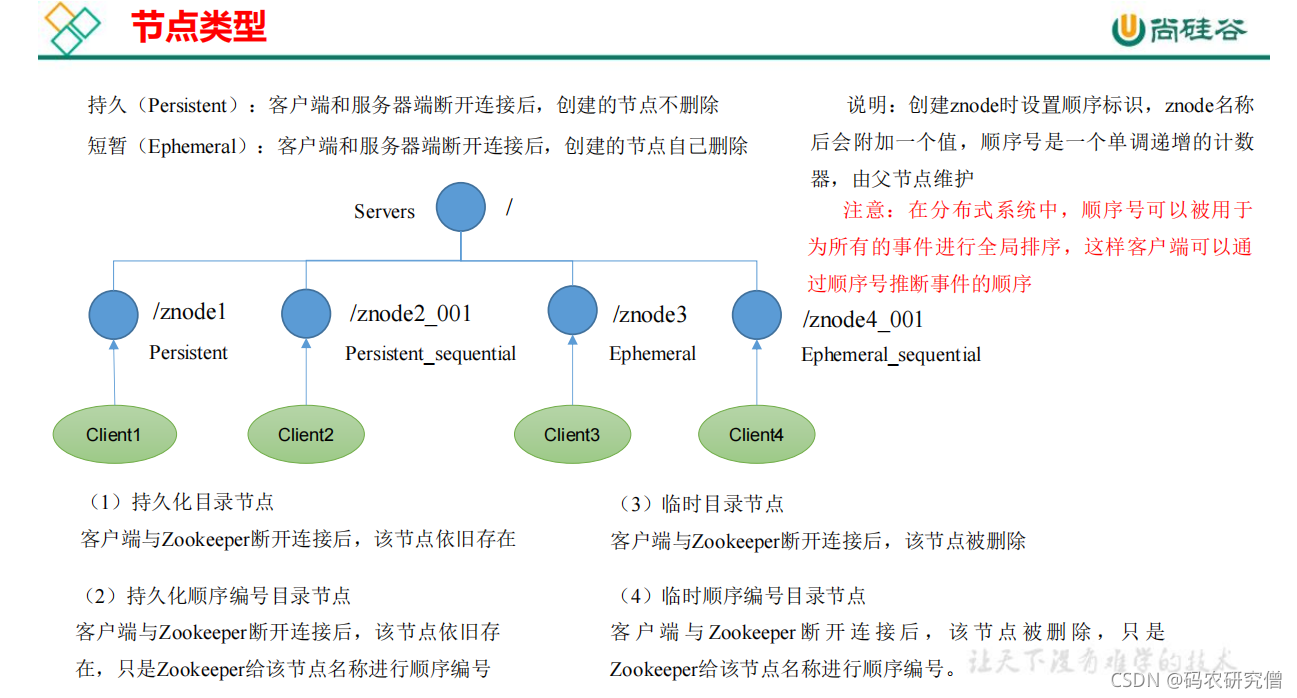

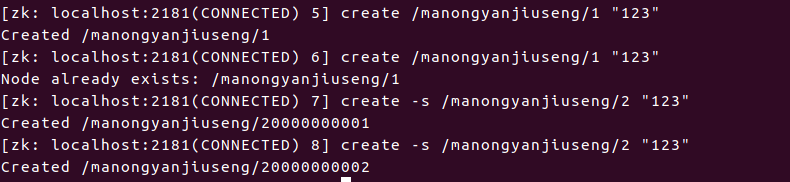

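3.2.2 Node types

Node types combine two independent properties:

- persistent / ephemeral

- sequential / non-sequential

To create a node without a sequence number, use create.

To create a node with a sequence number, add the -s flag.

Even if the same path is created twice, -s appends a sequence number automatically so the two nodes can be told apart.

After the client exits, these nodes are not removed.

To create an ephemeral node, add the -e flag.

Ephemeral node without a sequence number: -e

Ephemeral node with a sequence number: -e -s

When the client exits, these ephemeral nodes are removed.

To change a node's value, use set path value.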

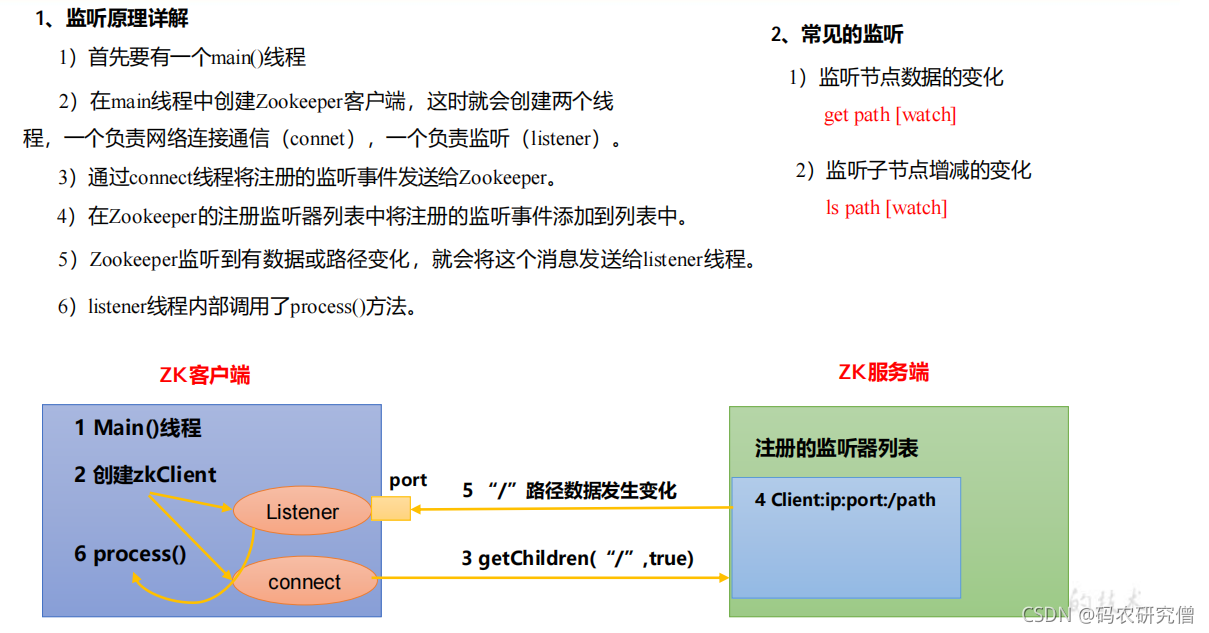

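3.2.3 How watchers work

A watch is one-shot: registering once yields one notification.

Two kinds of change can be watched: changes to a node's value and changes to the paths under a node (its children).

Change the value or the children from one client,

and watch from another client: get -w path watches for changes to the node's value.

For changes to the children, run ls -w path on the other client.

If a node has child nodes,

delete cannot remove it directly;

use deleteall instead.

Use stat to view a node's status information.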

3.3 Working with the client in code

- Create a Maven project

- Add the key dependencies to pom.xml

<dependencies>

<!-- Testing -->

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>RELEASE</version>

</dependency>

<!-- Logging -->

<dependency>

<groupId>org.apache.logging.log4j</groupId>

<artifactId>log4j-core</artifactId>

<version>2.8.2</version>

</dependency>

<!-- zookeeper -->

<dependency>

<groupId>org.apache.zookeeper</groupId>

<artifactId>zookeeper</artifactId>

<version>3.4.10</version>

</dependency>

</dependencies>

- Under the project's src/main/resources directory, create a file named log4j.properties with the following content:

log4j.rootLogger=INFO, stdout

log4j.appender.stdout=org.apache.log4j.ConsoleAppender

log4j.appender.stdout.layout=org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n

log4j.appender.logfile=org.apache.log4j.FileAppender

log4j.appender.logfile.File=target/spring.log

log4j.appender.logfile.layout=org.apache.log4j.PatternLayout

log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%n

import org.apache.zookeeper.*;
import org.junit.Test;

import java.io.IOException;

public class TestZookeeper {

    // Addresses of the ZooKeeper cluster to connect to
    private String connectString = "192.168.199.123:2181,192.168.199.124:2181";

    // Session timeout in milliseconds
    private int sessionTimeout = 2000;

    private ZooKeeper zkClient;

    @Test
    public void init() throws IOException {
        zkClient = new ZooKeeper(connectString, sessionTimeout, new Watcher() {
            // process() runs once when the connection is established, and again
            // whenever a watch registered with watch=true fires
            public void process(WatchedEvent watchedEvent) {
            }
        });
    }
}

Creating a node, getting child nodes while watching for changes, and checking whether a node exists:

import org.apache.zookeeper.*;

import org.apache.zookeeper.data.Stat;

import org.junit.Before;

import org.junit.Test;

import java.io.IOException;

import java.util.List;

public class TestZookeeper {

//连接的zk集群

private String connectString = "192.168.199.123:2181,192.168.199.124:2181";

//设置连接超时时间

private int sessionTimeout = 2000;

private ZooKeeper zkClient;

//在Test之前执行,不然会报错

@Before

public void init() throws IOException {

zkClient = new ZooKeeper(connectString, sessionTimeout, new Watcher() {

//process会在init中先运行一遍,之后有其他将监听设为true时,会调用process方法

public void process(WatchedEvent watchedEvent) {

List<String> children = null;

try {

children = zkClient.getChildren("/", true);

for(String i :children){

System.out.println(i);

}

System.out.println("------------");

} catch (KeeperException e) {

e.printStackTrace();

} catch (InterruptedException e) {

e.printStackTrace();

}

}

});

}

//创建节点

@Test

public void createNode() throws KeeperException, InterruptedException {

//参数:节点名,节点中的值,访问权限(只读什么的),节点类型(持久的,短暂的,带序号的)

String path = zkClient.create("/sichuan", "chengdu".getBytes(), ZooDefs.Ids.OPEN_ACL_UNSAFE, CreateMode.PERSISTENT);

System.out.println(path);

}

//获取子节点并监控节点的变化

@Test

public void getDataAndWatch() throws KeeperException, InterruptedException {

//参数:需要获取子节点的路径,是否监听

//监听的过程需要在init方法中的process中修改

List<String> children = zkClient.getChildren("/", true);

for(String i :children){

System.out.println(i);

}

Thread.sleep(Long.MAX_VALUE);

}

// 判断节点是否存在

@Test

public void exist() throws Exception {

Stat stat = zkClient.exists("/sichuan", false);

System.out.println(stat == null ? "not exist" : "exist");

}

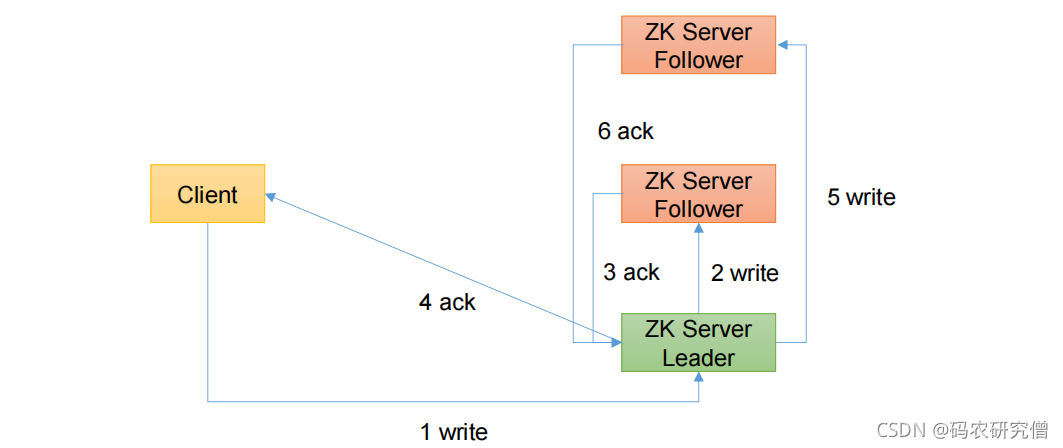

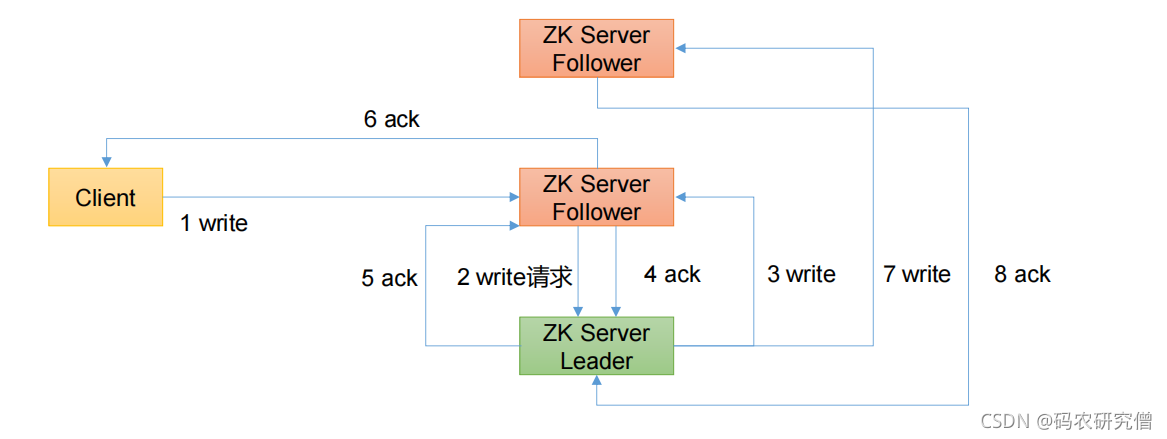

}发送给leader的时候

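In plain terms: the client sends a write request to the leader. The leader writes the data and then sends the write to its followers; each follower writes it and acknowledges to the leader. Once more than half of the servers have written it, the leader replies to the client. The leader then has the remaining followers write the data, and they acknowledge to the leader when done.

When the write request is sent to a follower

In plain terms: the client sends the write request to a follower, which forwards it to the leader. The leader writes the data and sends the write to the followers; each follower writes it and acknowledges to the leader. Once more than half have acknowledged, the leader confirms to the follower that received the request, and that follower replies to the client. The leader then sends the write to the remaining followers.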

3.4 Dynamic online/offline tracking of server nodes

import org.apache.zookeeper.*;

import java.io.IOException;
import java.util.ArrayList;
import java.util.List;

// Client that connects to the ZooKeeper cluster and tracks the online servers
public class DistributeClient {

    // Addresses of the ZooKeeper cluster to connect to
    private String connectString = "192.168.199.123:2181,192.168.199.124:2181";

    // Session timeout in milliseconds
    private int sessionTimeout = 2000;

    private ZooKeeper zkClient;

    public static void main(String[] args) throws IOException, KeeperException, InterruptedException {
        DistributeClient client = new DistributeClient();
        // Connect to the ZooKeeper cluster
        client.getConnet();
        // Fetch the server list and register the watch
        client.getChildren();
        // Business logic
        client.business();
    }

    private void business() throws InterruptedException {
        Thread.sleep(Long.MAX_VALUE);
    }

    private void getChildren() throws KeeperException, InterruptedException {
        // Get the child node names and watch for changes
        List<String> children = zkClient.getChildren("/servers", true);
        // Collects the host names stored in the server nodes
        ArrayList<String> hosts = new ArrayList<String>();
        for (String child : children) {
            byte[] data = zkClient.getData("/servers/" + child, false, null);
            hosts.add(new String(data));
        }
        // Print all online host names to the console
        System.out.println(hosts);
    }

    private void getConnet() throws IOException {
        zkClient = new ZooKeeper(connectString, sessionTimeout, new Watcher() {
            // Called on every notification: fetch the list again and re-register the watch
            public void process(WatchedEvent watchedEvent) {
                try {
                    getChildren();
                } catch (KeeperException | InterruptedException e) {
                    e.printStackTrace();
                }
            }
        });
    }
}

4. Distributed locks

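4.1 Distributed lock with the native ZooKeeper API

Create a node and check whether it is the smallest one.

If it is not the smallest, watch the node immediately before it.

CountDownLatch can be used to make the implementation more robust.

The specific parameters are set inside the code.

Watcher callback

If the connection state is SyncConnected, release connectLatch.

If the event type is NodeDeleted and the event path equals the path of the preceding node being waited on, release waitLatch.

Checking whether a node exists does not need a watch.

Getting the preceding node's data (getData) does register a watch.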
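The core decision in this scheme (hold the lock, or watch the predecessor) can be isolated from the ZooKeeper calls. The sketch below shows only that decision on plain strings; LockOrder and nodeToWatch are hypothetical names, and the node names assume the zero-padded sequence suffix that sequential nodes receive, so that sorting the names as strings matches the creation order.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

// Minimal sketch of the "smallest node wins, others watch their predecessor" rule.
// LockOrder is an illustrative helper; it makes no ZooKeeper calls.
final class LockOrder {

    // Returns null when `self` is the smallest child (it holds the lock),
    // otherwise the name of the child immediately before it (the one to watch).
    static String nodeToWatch(List<String> children, String self) {
        List<String> sorted = new ArrayList<>(children);
        Collections.sort(sorted);
        int index = sorted.indexOf(self);
        if (index < 0) {
            throw new IllegalStateException("own node missing from children: " + self);
        }
        return index == 0 ? null : sorted.get(index - 1);
    }

    public static void main(String[] args) {
        List<String> children = Arrays.asList(
                "seq-0000000002", "seq-0000000000", "seq-0000000001");
        System.out.println(nodeToWatch(children, "seq-0000000000")); // holds the lock
        System.out.println(nodeToWatch(children, "seq-0000000002")); // waits on its predecessor
    }
}
```

Watching only the predecessor, rather than the whole /locks directory, means that deleting one node wakes up a single waiter instead of all of them.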

public class DistributedLock {

private final String connectString = "hadoop102:2181,hadoop103:2181,hadoop104:2181";

private final int sessionTimeout = 2000;

private final ZooKeeper zk;

private CountDownLatch connectLatch = new CountDownLatch(1);

private CountDownLatch waitLatch = new CountDownLatch(1);

private String waitPath;

private String currentMode;

public DistributedLock() throws IOException, InterruptedException, KeeperException {

// 获取连接

zk = new ZooKeeper(connectString, sessionTimeout, new Watcher() {

@Override

public void process(WatchedEvent watchedEvent) {

// connectLatch 如果连接上zk 可以释放

if (watchedEvent.getState() == Event.KeeperState.SyncConnected){

connectLatch.countDown();

}

// Release waitLatch once the preceding node has been deleted

if (watchedEvent.getType()== Event.EventType.NodeDeleted && watchedEvent.getPath().equals(waitPath)){

waitLatch.countDown();

}

}

});

// Wait until the session is connected before continuing

connectLatch.await();

// Check whether the root node /locks exists

Stat stat = zk.exists("/locks", false);

if (stat == null) {

// Create the root node

zk.create("/locks", "locks".getBytes(), ZooDefs.Ids.OPEN_ACL_UNSAFE, CreateMode.PERSISTENT);

}

}

// Acquire the lock

public void zklock() {

// Create an ephemeral sequential node

try {

currentMode = zk.create("/locks/" + "seq-", null, ZooDefs.Ids.OPEN_ACL_UNSAFE, CreateMode.EPHEMERAL_SEQUENTIAL);

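// Sleep briefly so the demo output is easier to follow

Thread.sleep(10);

// If this node has the smallest sequence number, it holds the lock; otherwise watch the node just before it

List<String> children = zk.getChildren("/locks", false);

// With a single child the lock is acquired immediately; with several, find the smallest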

if (children.size() == 1) {

return;

} else {

Collections.sort(children);

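// Node name without the parent path, e.g. seq-00000000

String thisNode = currentMode.substring("/locks/".length());

// Position of this node in the sorted children list

int index = children.indexOf(thisNode);

// Decide based on the position

if (index == -1) {

System.out.println("Data error");

} else if (index == 0) {

// This node is first in the list, so it holds the lock

return;

} else {

// Watch the node immediately before this one

waitPath = "/locks/" + children.get(index - 1);

zk.getData(waitPath,true,new Stat());

// Block until the watcher reports that the preceding node was deleted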

waitLatch.await();

return;

}

}

} catch (KeeperException e) {

e.printStackTrace();

} catch (InterruptedException e) {

e.printStackTrace();

}

}

// Release the lock

public void unZkLock() {

// Delete this client's node

try {

zk.delete(this.currentMode,-1);

} catch (InterruptedException e) {

e.printStackTrace();

} catch (KeeperException e) {

e.printStackTrace();

}

}

}

public class DistributedLockTest {

public static void main(String[] args) throws InterruptedException, IOException, KeeperException {

final DistributedLock lock1 = new DistributedLock();

final DistributedLock lock2 = new DistributedLock();

new Thread(new Runnable() {

@Override

public void run() {

try {

lock1.zklock();

System.out.println("Thread 1 started and acquired the lock");

Thread.sleep(5 * 1000);

lock1.unZkLock();

System.out.println("Thread 1 released the lock");

} catch (InterruptedException e) {

e.printStackTrace();

}

}

}).start();

new Thread(new Runnable() {

@Override

public void run() {

try {

lock2.zklock();

System.out.println("Thread 2 started and acquired the lock");

Thread.sleep(5 * 1000);

lock2.unZkLock();

System.out.println("Thread 2 released the lock");

} catch (InterruptedException e) {

e.printStackTrace();

}

}

}).start();

}

}

4.2 Distributed lock with Curator

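Problems with developing against the native Java API:

(1) The session connection is asynchronous and must be handled manually, for example with CountDownLatch.

(2) A watch fires only once and must be registered again after each event, otherwise it stops working.

(3) Development complexity is relatively high.

(4) Creating or deleting multi-level nodes is not supported; you have to recurse yourself.

Curator solves the problems above.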
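To illustrate problem (4): with the native API, creating a nested node means walking the path from the top and creating each missing level in turn. The following is a minimal sketch of only the path-walking part, with no ZooKeeper calls; `ParentPathsSketch` and `pathsToCreate` are hypothetical names. In Curator, the create builder offers `creatingParentsIfNeeded()` for the same purpose.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper for illustration; not part of ZooKeeper or Curator.
final class ParentPathsSketch {

    /** Lists every path from the top-level node down to the target, in creation order. */
    static List<String> pathsToCreate(String path) {
        if (path == null || path.length() < 2 || !path.startsWith("/") || path.endsWith("/")) {
            throw new IllegalArgumentException("expected an absolute znode path such as /a/b: " + path);
        }
        List<String> result = new ArrayList<>();
        // Each '/' after the first character ends one ancestor path.
        int slash = path.indexOf('/', 1);
        while (slash != -1) {
            result.add(path.substring(0, slash));
            slash = path.indexOf('/', slash + 1);
        }
        result.add(path);
        return result;
    }

    public static void main(String[] args) {
        // With the native client, each entry would need an exists check followed by a create.
        System.out.println(pathsToCreate("/app/config/db"));
    }
}
```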

Add the following dependencies:

<dependency>

<groupId>org.apache.curator</groupId>

<artifactId>curator-framework</artifactId>

<version>4.3.0</version>

</dependency>

<dependency>

<groupId>org.apache.curator</groupId>

<artifactId>curator-recipes</artifactId>

<version>4.3.0</version>

</dependency>

<dependency>

<groupId>org.apache.curator</groupId>

<artifactId>curator-client</artifactId>

<version>4.3.0</version>

</dependency>

The client is obtained mainly through a factory class (CuratorFrameworkFactory).

The implementation is as follows:

public class CuratorLockTest {

public static void main(String[] args) {

// Create distributed lock 1

InterProcessMutex lock1 = new InterProcessMutex(getCuratorFramework(), "/locks");

// Create distributed lock 2

InterProcessMutex lock2 = new InterProcessMutex(getCuratorFramework(), "/locks");

new Thread(new Runnable() {

@Override

public void run() {

try {

lock1.acquire();

System.out.println("Thread 1 acquired the lock");

lock1.acquire();

System.out.println("Thread 1 acquired the lock again");

Thread.sleep(5 * 1000);

lock1.release();

System.out.println("Thread 1 released the lock");

lock1.release();

System.out.println("Thread 1 released the lock again");

} catch (Exception e) {

e.printStackTrace();

}

}

}).start();

new Thread(new Runnable() {

@Override

public void run() {

try {

lock2.acquire();

System.out.println("Thread 2 acquired the lock");

lock2.acquire();

System.out.println("Thread 2 acquired the lock again");

Thread.sleep(5 * 1000);

lock2.release();

System.out.println("Thread 2 released the lock");

lock2.release();

System.out.println("Thread 2 released the lock again");

} catch (Exception e) {

e.printStackTrace();

}

}

}).start();

}

private static CuratorFramework getCuratorFramework() {

ExponentialBackoffRetry policy = new ExponentialBackoffRetry(3000, 3);

CuratorFramework client = CuratorFrameworkFactory.builder().connectString("hadoop102:2181,hadoop103:2181,hadoop104:2181")

.connectionTimeoutMs(2000)

.sessionTimeoutMs(2000)

.retryPolicy(policy).build();

// Start the client

client.start();

System.out.println("ZooKeeper client started");

return client;

}

}

5. Interview summary

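Common interview topics are the election mechanism, cluster installation, and frequently used commands.

5.1 Election mechanism

Majority rule: a candidate wins once it receives votes from more than half of the servers.

(1) Election rule on first startup:

Once the votes exceed half, the server with the larger server id wins.

(2) Election rules when it is not the first startup:

① The larger EPOCH wins outright.

② If the EPOCHs are equal, the larger transaction id wins.

③ If the transaction ids are equal, the larger server id wins.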
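The three comparison rules can be written as a single ordered check. The following is a minimal sketch of that ordering only, not ZooKeeper's actual election code; `VoteSketch` and `beats` are hypothetical names.

```java
// Hypothetical value class for illustration; not ZooKeeper's own vote type.
final class VoteSketch {
    final long epoch; // term of office
    final long zxid;  // latest transaction id seen by the server
    final long sid;   // server id from the myid file

    VoteSketch(long epoch, long zxid, long sid) {
        this.epoch = epoch;
        this.zxid = zxid;
        this.sid = sid;
    }

    /** True when candidate should replace current as the preferred vote. */
    static boolean beats(VoteSketch candidate, VoteSketch current) {
        if (candidate.epoch != current.epoch) {
            return candidate.epoch > current.epoch; // rule 1: larger EPOCH wins
        }
        if (candidate.zxid != current.zxid) {
            return candidate.zxid > current.zxid;   // rule 2: larger transaction id wins
        }
        return candidate.sid > current.sid;         // rule 3: larger server id wins
    }

    public static void main(String[] args) {
        VoteSketch a = new VoteSketch(1, 100, 3);
        VoteSketch b = new VoteSketch(2, 5, 1);
        // b wins despite its smaller zxid and sid, because its epoch is larger.
        System.out.println(beats(b, a));
    }
}
```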

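5.2 Cluster installation

Install an odd number of servers.

More servers: the benefit is higher reliability; the drawback is higher communication latency.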
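The preference for an odd number of servers follows from the majority rule: adding one server to an odd-sized cluster raises the quorum size without raising the number of failures the cluster survives. A minimal sketch of the arithmetic, with hypothetical names `QuorumSketch`, `quorum` and `tolerated`:

```java
// Hypothetical helper for illustration.
final class QuorumSketch {

    /** Smallest number of servers that is strictly more than half of n. */
    static int quorum(int n) {
        return n / 2 + 1;
    }

    /** Number of servers that may fail while a quorum can still be formed. */
    static int tolerated(int n) {
        return n - quorum(n);
    }

    public static void main(String[] args) {
        for (int n = 3; n <= 6; n++) {
            System.out.println(n + " servers: quorum " + quorum(n)
                    + ", tolerated failures " + tolerated(n));
        }
    }
}
```

Under this arithmetic, 5 and 6 servers both tolerate 2 failures, so the sixth server adds communication cost without adding fault tolerance.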

5.3 Common commands

ls, get, create, delete

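6. Algorithm basics

The algorithms below address how data is kept consistent,

that is, how multiple servers reach a common decision.

A well-known example is the Byzantine Generals Problem.

6.1 Paxos algorithm

A consensus algorithm based on message passing that is highly fault tolerant.

The problem Paxos solves: how to agree on a value quickly and correctly in a distributed system, and guarantee that no failure can break the consistency of the whole system.

Every proposal carries a unique id.

An Acceptor does not accept a proposal whose id is smaller than one it has already seen.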
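The two rules above can be sketched from the Acceptor's point of view. This is a simplified illustration with hypothetical names (`AcceptorSketch`, `prepare`, `accept`); it omits networking and persistence, and a full Paxos Acceptor would also return any previously accepted value in its reply to a prepare request.

```java
// Hypothetical single-Acceptor sketch; simplified, not a complete Paxos implementation.
final class AcceptorSketch {
    private long promisedId = -1;       // highest proposal id seen so far
    private String acceptedValue = null;

    /** Phase 1: promise only if id is greater than every id seen so far. */
    boolean prepare(long id) {
        if (id <= promisedId) {
            return false; // stale proposal, rejected
        }
        promisedId = id;
        return true;
    }

    /** Phase 2: accept unless a higher id has been promised in the meantime. */
    boolean accept(long id, String value) {
        if (id < promisedId) {
            return false;
        }
        promisedId = id;
        acceptedValue = value;
        return true;
    }

    String acceptedValue() {
        return acceptedValue;
    }

    public static void main(String[] args) {
        AcceptorSketch acceptor = new AcceptorSketch();
        System.out.println(acceptor.prepare(1));         // first proposal is promised
        System.out.println(acceptor.prepare(2));         // higher id replaces the promise
        System.out.println(acceptor.accept(1, "old"));   // id 1 is now stale
        System.out.println(acceptor.accept(2, "new"));   // id 2 matches the promise
    }
}
```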

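Case 1:

Case 2: A5's proposal overrides the earlier one, and A5 becomes the leader

Case 3:

The situation above arises because multiple Proposers compete for the Acceptors, so agreement is delayed indefinitely. An improved Paxos algorithm was proposed for this case: elect one node in the system as the Leader, and allow only the Leader to issue proposals. A Paxos round then has a single Proposer, so livelock cannot occur, and only the first case in the example remains.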

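6.2 ZAB protocol

Only one server (the Leader) issues proposals, so no servers compete.

Message broadcast works as follows:

The Leader sends a proposal, and the Followers acknowledge receiving it.

Once more than half of the servers have acknowledged the transaction,

the Leader sends the commit message to all of them.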
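The broadcast steps can be sketched as an acknowledgement counter on the Leader side. This is a simplified illustration with hypothetical names (`ProposalSketch`, `ack`), not ZooKeeper's implementation; it assumes the Leader counts its own acknowledgement toward the majority.

```java
import java.util.HashSet;
import java.util.Set;

// Hypothetical Leader-side bookkeeping for one proposal; illustration only.
final class ProposalSketch {
    private final int clusterSize;
    private final Set<Long> acks = new HashSet<>();
    private boolean committed = false;

    ProposalSketch(int clusterSize, long leaderSid) {
        this.clusterSize = clusterSize;
        acks.add(leaderSid); // assumption: the Leader acknowledges its own proposal
    }

    /** Records an ACK; returns true exactly once, when a majority is first reached. */
    boolean ack(long sid) {
        acks.add(sid); // a set ignores duplicate ACKs from the same server
        if (!committed && acks.size() > clusterSize / 2) {
            committed = true;
            return true; // this is the point where the commit would be broadcast
        }
        return false;
    }

    boolean isCommitted() {
        return committed;
    }

    public static void main(String[] args) {
        ProposalSketch proposal = new ProposalSketch(5, 1L);
        System.out.println(proposal.ack(2L)); // 2 of 5: not yet a majority
        System.out.println(proposal.ack(3L)); // 3 of 5: majority reached
    }
}
```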

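Crash recovery is needed if a server goes down.

Assume two kinds of server failure:

(1) The Leader crashes after proposing a transaction.

(2) A transaction is committed on the Leader and more than half of the Followers have responded with Ack, but the Leader crashes before sending the Commit message.

ZAB crash recovery must satisfy the following two requirements:

(1) A Proposal that has been committed by the Leader must eventually be committed by all Follower servers. (Followers must execute proposals that were already committed.)

(2) A Proposal that was issued by the Leader but never committed must be discarded. (Discard stillborn proposals.)

A new Leader election then begins.

Leader election: given the requirements above, ZAB must ensure that the elected Leader meets these conditions:

(1) The new Leader must not contain uncommitted Proposals. That is, the new Leader must be a Follower node that has committed its Proposals.

(2) The new Leader holds the largest zxid. This spares the Leader from having to check which Proposals to commit and which to discard.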
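Comparing by largest zxid also respects the epoch ordering, because a zxid is a 64-bit number whose high 32 bits hold the epoch and whose low 32 bits hold a counter within that epoch. A minimal sketch of that layout, with hypothetical names `ZxidSketch`, `make`, `epochOf` and `counterOf`:

```java
// Hypothetical helper illustrating the zxid bit layout.
final class ZxidSketch {

    /** Packs an epoch (high 32 bits) and a counter (low 32 bits) into one zxid. */
    static long make(long epoch, long counter) {
        return (epoch << 32) | (counter & 0xffffffffL);
    }

    static long epochOf(long zxid) {
        return zxid >> 32;
    }

    static long counterOf(long zxid) {
        return zxid & 0xffffffffL;
    }

    public static void main(String[] args) {
        long older = make(1, 4000);
        long newer = make(2, 5);
        // Any zxid from a later epoch is larger than every zxid from an earlier epoch.
        System.out.println(newer > older);
        System.out.println(epochOf(newer) + " / " + counterOf(newer));
    }
}
```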

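6.3 CAP theorem

A distributed system cannot satisfy all three of the following at the same time:

Consistency (whether multiple replicas keep the same data)

Availability (the service provided by the system must always be usable)

Partition tolerance (when any network partition occurs, the distributed system must still be able to provide a service that satisfies consistency and availability)

At most two of these three requirements can be met at once. Because P is mandatory, the choice is usually between CP and AP.

ZooKeeper guarantees consistency and partition tolerance (CP).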
