5. PyTorch模型定义

5.1 PyTorch模型定义的方式

5.1.1 必要的知识回顾

- Module类是torch.nn模块里提供的一个模型构造类(nn.Moduel),是所有神经网络模块的基类,通过继承nn.Moduel定义所需的模型。

- Pytorch模型定义包括两部分:各个部分的初始化(__init__);数据流向定义(forward)。

- 基于nn.Module,可通过Sequential,ModuleList和ModuleDict三种方式定义模型。

5.1.2 nn.Sequential()

适用于:模型的前向计算为简单串联各个层的计算。

nn.Sequential()接收一个子模块的有序字典(OrderedDict) 或者一系列子模块作为参数来逐一添加 Module 的实例

Sequential定义代码:

class MySequential(nn.Module): from collections import OrderedDict def __init__(self, *args): super(MySequential, self).__init__() if len(args) == 1 and isinstance(args[0], OrderedDict): # 如果传入的是一个OrderedDict for key, module in args[0].items(): self.add_module(key, module) # add_module方法会将module添加进self._modules(一个OrderedDict) else: # 传入的是一些Module for idx, module in enumerate(args): self.add_module(str(idx), module) def forward(self, input): # self._modules返回一个 OrderedDict,保证会按照成员添加时的顺序遍历成 for module in self._modules.values(): input = module(input) return input

- 直接排列

import torch.nn as nn net = nn.Sequential( nn.Linear(784, 256), nn.ReLU(), nn.Linear(256, 10)) print(net) 输出: Sequential( (0): Linear(in_features=784, out_features=256, bias=True) (1): ReLU() (2): Linear(in_features=256, out_features=10, bias=True) )

- 使用有序字典(OrderedDict)

import collections import torch.nn as nn net2 = nn.Sequential(collections.OrderedDict([ ('fc1':, nn.Linear(784, 256)), ('relu1':, nn.ReLU()), ('fc2':, nn.Linear(256, 20))])) print(net2)

输出:

Sequential(

(fc1): Linear(in_features=784, out_features=256, bias=True)

(relu1): ReLU()

(fc2): Linear(in_features=256, out_features=10, bias=True)

)

优点: 简单、易读,不需要再写forward

缺点:使得模型定义丧失灵活性;如需要在模型中间加入一个外部输入时就不适合用Sequential的方式实现。

5.1.3 nn.ModuleList()

ModuleList 接收一个子模块(或层,需属于nn.Module类)的列表作为输入,然后也可以类似List那样进行append和extend操作。同时,子模块或层的权重也会自动添加到网络中来。

net.nn.ModuleList([nn.Linear(784, 256), nn.ReLU()]) net.append(nn.Linear(256, 10)) # 类似list的append操作 print(net[-1])# 类似List的索引访问 print(net())

输出:

Linear(in_features=256, out_features=10, bias=True)

ModuleList(

(0): Linear(in_features=784, out_features=256, bias=True)

(1): ReLU()

(2): Linear(in_features=256, out_features=10, bias=True)

)

注:nn.ModuleList 并没有定义一个网络,它只是将不同的模块储存在一起。ModuleList中元素的先后顺序并不代表其在网络中的真实位置顺序,需要经过forward函数指定各个层的先后顺序后才算完成了模型的定义。

具体实现时用for循环即可完成:

class model(nn.Module): def __init__(self, ...): super().__init__() self.modulelist = ... ... def forward(self, x): for layer in self.modulelist: x = layer(x) return x

5.1.4 nn.ModuleDict()

ModuleDict和ModuleList的作用类似,只是ModuleDict能够更方便地为神经网络的层添加名称。

net = nn.ModuleDict({ 'linear':nn.Linear(256, 10), 'act':nn.ReLU()}) net['output'] = nn.Linear(256, 10) print(net['linear']) # 访问 print(net.output) print(net)

输出:

Linear(in_features=784, out_features=256, bias=True)

Linear(in_features=256, out_features=10, bias=True)

ModuleDict(

(act): ReLU()

(linear): Linear(in_features=784, out_features=256, bias=True)

(output): Linear(in_features=256, out_features=10, bias=True)

)

5.1.5 三种方法的比较与适用场景

Sequential适用于快速验证结果,因为已经明确了要用哪些层,直接写一下就好了,不需要同时写__init__和forward;

ModuleList和ModuleDict在某个完全相同的层需要重复出现多次时,非常方便实现,可以”一行顶多行“;

当我们需要之前层的信息的时候,比如 ResNets 中的残差计算,当前层的结果需要和之前层中的结果进行融合,一般使用 ModuleList/ModuleDict 比较方便。

5.2 利用模型块快速搭建复杂网络

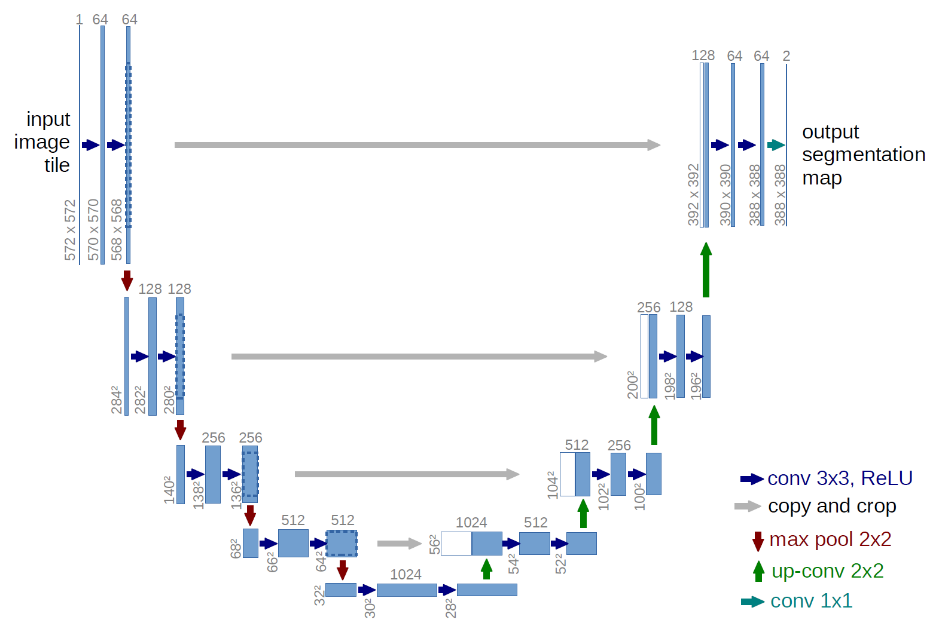

5.2.1 U-Net简介

U-Net是分割 (Segmentation) 模型的杰作,通过残差连接结构解决了模型学习中的退化问题,使得神经网络的深度能够不断扩展。

5.2.2 U-Net模型块分析

同一层左右两侧的模型块之间也有连接,称为“Skip-connection”

组成U-Net的模型块主要有如下几个部分:

1)每个子块内部的两次卷积(Double Convolution)

2)左侧模型块之间的下采样连接,即最大池化(Max pooling)

3)右侧模型块之间的上采样连接(Up sampling)

4)输出层的处理

5.2.3 U-Net模型块实现

import torch import torch.nn as nn import torch.nn.functional as F class DoubleConv(nn.Module): """(convolution => [BN] => ReLU) * 2""" def __init__(self, in_channels, out_channels, mid_channels=None): super().__init__() if not mid_channels: mid_channels = out_channels self.double_conv = nn.Sequential( nn.Conv2d(in_channels, mid_channels, kernel_size=3, padding=1, bias=False), nn.BatchNorm2d(mid_channels), nn.ReLU(inplace=True), nn.Conv2d(mid_channels, out_channels, kernel_size=3, padding=1, bias=False), nn.BatchNorm2d(out_channels), nn.ReLU(inplace=True) ) def forward(self, x): return self.double_conv(x)

class Down(nn.Module): """Downscaling with maxpool then double conv""" def __init__(self, in_channels, out_channels): super().__init__() self.maxpool_conv = nn.Sequential( nn.MaxPool2d(2), DoubleConv(in_channels, out_channels) ) def forward(self, x): return self.maxpool_conv(x)

class Up(nn.Module): """Upscaling then double conv""" def __init__(self, in_channels, out_channels, bilinear=False): super().__init__() #如果是双线性(bilinear)的,则使用普通卷积来减少通道数 if bilinear: self.up = nn.Upsample(scale_factor=2, mode='bilinear', align_corners=True) self.conv = DoubleConv(in_channels, out_channels, in_channels // 2) else: self.up = nn.ConvTranspose2d(in_channels, in_channels // 2, kernel_size=2, stride=2) self.conv = DoubleConv(in_channels, out_channels) def forward(self, x1, x2): x1 = self.up(x1) # input is CHW diffY = x2.size()[2] - x1.size()[2] diffX = x2.size()[3] - x1.size()[3] x1 = F.pad(x1, [diffX // 2, diffX - diffX // 2, diffY // 2, diffY - diffY // 2]) # if you have padding issues, see # https://github.com/HaiyongJiang/U-Net-Pytorch-Unstructured-Buggy/commit/0e854509c2cea854e247a9c615f175f76fbb2e3a # https://github.com/xiaopeng-liao/Pytorch-UNet/commit/8ebac70e633bac59fc22bb5195e513d5832fb3bd x = torch.cat([x2, x1], dim=1) return self.conv(x)

class OutConv(nn.Module): def __init__(self, in_channels, out_channels): super(OutConv, self).__init__() self.conv = nn.Conv2d(in_channels, out_channels, kernel_size=1) def forward(self, x): return self.conv(x)

5.2.4 利用模型块组装U-Net

class UNet(nn.Module): def __init__(self, n_channels, n_classes, bilinear=False): super(UNet, self).__init__() self.n_channels = n_channels self.n_classes = n_classes self.bilinear = bilinear self.inc = DoubleConv(n_channels, 64) self.down1 = Down(64, 128) self.down2 = Down(128, 256) self.down3 = Down(256, 512) factor = 2 if bilinear else 1 self.down4 = Down(512, 1024 // factor) self.up1 = Up(1024, 512 // factor, bilinear) self.up2 = Up(512, 256 // factor, bilinear) self.up3 = Up(256, 128 // factor, bilinear) self.up4 = Up(128, 64, bilinear) self.outc = OutConv(64, n_classes) def forward(self, x): x1 = self.inc(x) x2 = self.down1(x1) x3 = self.down2(x2) x4 = self.down3(x3) x5 = self.down4(x4) x = self.up1(x5, x4) x = self.up2(x, x3) x = self.up3(x, x2) x = self.up4(x, x1) logits = self.outc(x) return logits

5.3 PyTorch修改模型

5.3.1 修改模型层

import torchvision.models as models net = models.resnet50() print(net)

输出:

ResNet(

(conv1): Conv2d(3, 64, kernel_size=(7, 7), stride=(2, 2), padding=(3, 3), bias=False)

(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace=True)

(maxpool): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)

(layer1): Sequential(

(0): Bottleneck(

(conv1): Conv2d(64, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv3): Conv2d(64, 256, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn3): BatchNorm2d(256