K8s深入了解

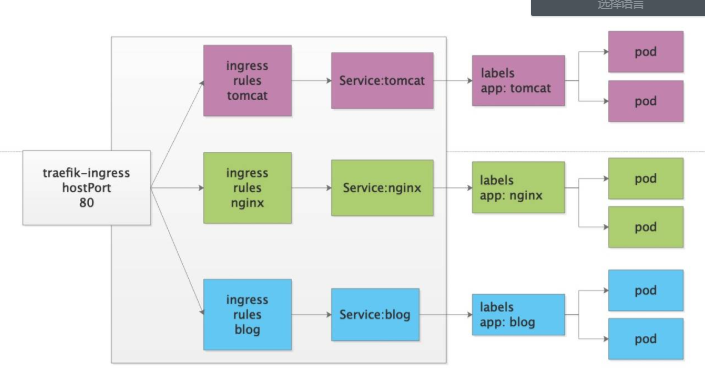

Ingress控制器介绍

1.没有ingress之前,pod对外提供服务只能通过NodeIP:NodePort的形式,但是这种形式有缺点,一个节点上的PORT不能重复利用。比如某个服务占用了80,那么其他服务就不能在用这个端口了。

2.NodePort是4层代理,不能解析7层的http,不能通过域名区分流量

3.为了解决这个问题,我们需要用到资源控制器叫Ingress,作用就是提供一个统一的访问入口。工作在7层

4.虽然我们可以使用nginx/haproxy来实现类似的效果,但是传统部署不能动态的发现我们新创建的资源,必须手动修改配置文件并重启。

5.适用于k8s的ingress控制器主流的有ingress-nginx和traefik

6.ingress-nginx == nginx + go --> deployment部署

7.traefik有一个UI界面

安装部署traefik

1.traefik_dp.yaml

kind: Deployment

apiVersion: apps/v1

metadata:

name: traefik-ingress-controller

namespace: kube-system

labels:

k8s-app: traefik-ingress-lb

spec:

replicas: 1

selector:

matchLabels:

k8s-app: traefik-ingress-lb

template:

metadata:

labels:

k8s-app: traefik-ingress-lb

name: traefik-ingress-lb

spec:

serviceAccountName: traefik-ingress-controller

terminationGracePeriodSeconds: 60

tolerations:

- operator: "Exists"

nodeSelector:

kubernetes.io/hostname: node1

containers:

- image: traefik:v1.7.17

name: traefik-ingress-lb

ports:

- name: http

containerPort: 80

hostPort: 80

- name: admin

containerPort: 8080

args:

- --api

- --kubernetes

- --logLevel=INFO

2.traefik_rbac.yaml

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: traefik-ingress-controller

namespace: kube-system

---

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1beta1

metadata:

name: traefik-ingress-controller

rules:

- apiGroups:

- ""

resources:

- services

- endpoints

- secrets

verbs:

- get

- list

- watch

- apiGroups:

- extensions

resources:

- ingresses

verbs:

- get

- list

- watch

---

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1beta1

metadata:

name: traefik-ingress-controller

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: traefik-ingress-controller

subjects:

- kind: ServiceAccount

name: traefik-ingress-controller

namespace: kube-system

3.traefik_svc.yaml

kind: Service

apiVersion: v1

metadata:

name: traefik-ingress-service

namespace: kube-system

spec:

selector:

k8s-app: traefik-ingress-lb

ports:

- protocol: TCP

port: 80

name: web

- protocol: TCP

port: 8080

name: admin

type: NodePort

4.应用资源配置

kubectl create -f ./

5.查看并访问

kubectl -n kube-system get svc

创建traefik的web-ui的ingress规则

1.类比nginx:

upstream traefik-ui {

server traefik-ingress-service:8080;

}

server {

location / {

proxy_pass http://traefik-ui;

include proxy_params;

}

}

2.ingress写法:

apiVersion: extensions/v1beta1

kind: Ingress

metadata:

name: traefik-ui

namespace: kube-system

spec:

rules:

- host: traefik.ui.com

http:

paths:

- path: /

backend:

serviceName: traefik-ingress-service

servicePort: 8080

3.访问测试:

traefik.ui.com

ingress实验

1.实验目标

未使用ingress之前只能通过IP+端口访问:

tomcat 8080

nginx 8090

使用ingress之后直接可以使用域名访问:

traefik.nginx.com:80 --> nginx 8090

traefik.tomcat.com:80 --> tomcat 8080

2.创建2个pod和svc

mysql-dp.yaml

mysql-svc.yaml

tomcat-dp.yaml

tomcat-svc.yaml

nginx-dp.yaml

nginx-svc-clusterip.yaml

3.创建ingress控制器资源配置清单并应用

cat >nginx-ingress.yaml <<EOF

apiVersion: extensions/v1beta1

kind: Ingress

metadata:

name: traefik-nginx

namespace: default

spec:

rules:

- host: traefik.nginx.com

http:

paths:

- path: /

backend:

serviceName: nginx-service

servicePort: 80

EOF

cat >tomcat-ingress.yaml<<EOF

apiVersion: extensions/v1beta1

kind: Ingress

metadata:

name: traefik-tomcat

namespace: default

spec:

rules:

- host: traefik.tomcat.com

http:

paths:

- path: /

backend:

serviceName: myweb

servicePort: 8080

EOF

kubectl apply -f nginx-ingress.yaml

kubectl apply -f tomcat-ingress.yaml

4.查看创建的资源

kubectl get svc

kubectl get ingresses

kubectl describe ingresses traefik-nginx

kubectl describe ingresses traefik-tomcat

5.访问测试

traefik.nginx.com

traefik.tomcat.com

数据持久化

Volume介绍

Volume是Pad中能够被多个容器访问的共享目录

Kubernetes中的Volume不Pad生命周期相同,但不容器的生命周期丌相关

Kubernetes支持多种类型的Volume,并且一个Pod可以同时使用任意多个Volume

Volume类型包括:

- EmptyDir:Pod分配时创建, K8S自动分配,当Pod被移除数据被清空。用于临时空间等。

- hostPath:为Pod上挂载宿主机目录。用于持久化数据。

- nfs:挂载相应磁盘资源。

EmptyDir实验

cat >emptyDir.yaml <<EOF

apiVersion: v1

kind: Pod

metadata:

name: busybox-empty

spec:

containers:

- name: busybox-pod

image: busybox

volumeMounts:

- mountPath: /data/busybox/

name: cache-volume

command: ["/bin/sh","-c","while true;do echo $(date) >> /data/busybox/index.html;sleep 3;done"]

volumes:

- name: cache-volume

emptyDir: {}

EOF

hostPath实验

1.发现的问题:

- 目录必须存在才能创建

- POD不固定会创建在哪个Node上,数据不统一

2.type类型说明

https://kubernetes.io/docs/concepts/storage/volumes/#hostpath

DirectoryOrCreate 目录不存在就自动创建

Directory 目录必须存在

FileOrCreate 文件不存在则创建

File 文件必须存在

3.根据Node标签选择POD创建在指定的Node上

方法1: 直接选择Node节点名称

apiVersion: v1

kind: Pod

metadata:

name: busybox-nodename

spec:

nodeName: node2

containers:

- name: busybox-pod

image: busybox

volumeMounts:

- mountPath: /data/pod/

name: hostpath-volume

command: ["/bin/sh","-c","while true;do echo $(date) >> /data/pod/index.html;sleep 3;done"]

volumes:

- name: hostpath-volume

hostPath:

path: /data/node/

type: DirectoryOrCreate

方法2: 根据Node标签选择Node节点

kubectl label nodes node3 disktype=SSD

apiVersion: v1

kind: Pod

metadata:

name: busybox-nodename

spec:

nodeSelector:

disktype: SSD

containers:

- name: busybox-pod

image: busybox

volumeMounts:

- mountPath: /data/pod/

name: hostpath-volume

command: ["/bin/sh","-c","while true;do echo $(date) >> /data/pod/index.html;sleep 3;done"]

volumes:

- name: hostpath-volume

hostPath:

path: /data/node/

type: DirectoryOrCreate

4.实验-编写mysql的持久化deployment

apiVersion: apps/v1

kind: Deployment

metadata:

name: mysql-dp

namespace: default

spec:

selector:

matchLabels:

app: mysql

replicas: 1

template:

metadata:

name: mysql-pod

namespace: default

labels:

app: mysql

spec:

containers:

- name: mysql-pod

image: mysql:5.7

ports:

- name: mysql-port

containerPort: 3306

env:

- name: MYSQL_ROOT_PASSWORD

value: "123456"

volumeMounts:

- mountPath: /var/lib/mysql

name: mysql-volume

volumes:

- name: mysql-volume

hostPath:

path: /data/mysql

type: DirectoryOrCreate

nodeSelector:

disktype: SSD

PV和PVC

1.master节点安装nfs

yum install nfs-utils -y

mkdir /data/nfs-volume -p

vim /etc/exports

/data/nfs-volume 10.0.0.0/24(rw,async,no_root_squash,no_all_squash)

systemctl start rpcbind

systemctl start nfs

showmount -e 127.0.0.1

2.所有node节点安装nfs

yum install nfs-utils.x86_64 -y

showmount -e 10.0.0.11

3.编写并创建nfs-pv资源

cat >nfs-pv.yaml <<EOF

apiVersion: v1

kind: PersistentVolume

metadata:

name: pv01

spec:

capacity:

storage: 5Gi

accessModes:

- ReadWriteOnce

persistentVolumeReclaimPolicy: Recycle

storageClassName: nfs

nfs:

path: /data/nfs-volume/mysql

server: 10.0.0.11

EOF

kubectl create -f nfs-pv.yaml

kubectl get persistentvolume

3.创建mysql-pvc

cat >mysql-pvc.yaml <<EOF

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: mysql-pvc

spec:

accessModes:

- ReadWriteOnce

resources:

requests:

storage: 1Gi

storageClassName: nfs

EOF

kubectl create -f mysql-pvc.yaml

kubectl get pvc

4.创建mysql-deployment

cat >mysql-dp.yaml <<EOF

apiVersion: apps/v1

kind: Deployment

metadata:

name: mysql

spec:

replicas: 1

selector:

matchLabels:

app: mysql

template:

metadata:

labels:

app: mysql

spec:

containers:

- name: mysql

image: mysql:5.7

ports:

- containerPort: 3306

env:

- name: MYSQL_ROOT_PASSWORD

value: "123456"

volumeMounts:

- name: mysql-pvc

mountPath: /var/lib/mysql

- name: mysql-log

mountPath: /var/log/mysql

volumes:

- name: mysql-pvc

persistentVolumeClaim:

claimName: mysql-pvc

- name: mysql-log

hostPath:

path: /var/log/mysql

nodeSelector:

disktype: SSD

EOF

kubectl create -f mysql-dp.yaml

kubectl get pod -o wide

5.测试方法

1.创建nfs-pv

2.创建mysql-pvc

3.创建mysql-deployment并挂载mysq-pvc

4.登陆到mysql的pod里创建一个数据库

5.将这个pod删掉,因为deployment设置了副本数,所以会自动再创建一个新的pod

6.登录这个新的pod,查看刚才创建的数据库是否依然能看到

7.如果仍然能看到,则说明数据是持久化保存的

6.accessModes字段说明

ReadWriteOnce 单路读写

ReadOnlyMany 多路只读

ReadWriteMany 多路读写

resources 资源的限制,比如至少5G

7.volumeName精确匹配

#capacity 限制存储空间大小

#reclaim policy pv的回收策略

#retain pv被解绑后上面的数据仍保留

#recycle pv上的数据被释放

#delete pvc和pv解绑后pv就被删除

备注:用户在创建pod所需要的存储空间时,前提是必须要有pv存在

才可以,这样就不符合自动满足用户的需求,而且之前在k8s 9.0

版本还可删除pv,这样造成数据不安全性

configMap资源

1.为什么要用configMap?

将配置文件和POD解耦

2.congiMap里的配置文件是如何存储的?

键值对

key:value

文件名:配置文件的内容

3.configMap支持的配置类型

直接定义的键值对

基于文件创建的键值对

4.configMap创建方式

命令行

资源配置清单

5.configMap的配置文件如何传递到POD里

变量传递

数据卷挂载

6.命令行创建configMap

kubectl create configmap --help

kubectl create configmap nginx-config --from-literal=nginx_port=80 --from-literal=server_name=nginx.cookzhang.com

kubectl get cm

kubectl describe cm nginx-config

7.POD环境变量形式引用configMap

kubectl explain pod.spec.containers.env.valueFrom.configMapKeyRef

cat >nginx-cm.yaml <<EOF

apiVersion: v1

kind: Pod

metadata:

name: nginx-cm

spec:

containers:

- name: nginx-pod

image: nginx:1.14.0

ports:

- name: http

containerPort: 80

env:

- name: NGINX_PORT

valueFrom:

configMapKeyRef:

name: nginx-config

key: nginx_port

- name: SERVER_NAME

valueFrom:

configMapKeyRef:

name: nginx-config

key: server_name

EOF

kubectl create -f nginx-cm.yaml

8.查看pod是否引入了变量

[root@node1 ~/confimap]# kubectl exec -it nginx-cm /bin/bash

root@nginx-cm:~# echo ${NGINX_PORT}

80

root@nginx-cm:~# echo ${SERVER_NAME}

nginx.cookzhang.com

root@nginx-cm:~# printenv |egrep "NGINX_PORT|SERVER_NAME"

NGINX_PORT=80

SERVER_NAME=nginx.cookzhang.com

注意:

变量传递的形式,修改confMap的配置,POD内并不会生效

因为变量只有在创建POD的时候才会引用生效,POD一旦创建好,环境变量就不变了

8.文件形式创建configMap

创建配置文件:

cat >www.conf <<EOF

server {

listen 80;

server_name www.cookzy.com;

location / {

root /usr/share/nginx/html/www;

index index.html index.htm;

}

}

EOF

创建configMap资源:

kubectl create configmap nginx-www --from-file=www.conf=./www.conf

查看cm资源

kubectl get cm

kubectl describe cm nginx-www

编写pod并以存储卷挂载模式引用configMap的配置

cat >nginx-cm-volume.yaml <<EOF

apiVersion: v1

kind: Pod

metadata:

name: nginx-cm

spec:

containers:

- name: nginx-pod

image: nginx:1.14.0

ports:

- name: http

containerPort: 80

volumeMounts:

- name: nginx-www

mountPath: /etc/nginx/conf.d/

volumes:

- name: nginx-www

configMap:

name: nginx-www

items:

- key: www.conf

path: www.conf

EOF

测试:

1.进到容器内查看文件

kubectl exec -it nginx-cm /bin/bash

cat /etc/nginx/conf.d/www.conf

2.动态修改configMap

kubectl edit cm nginx-www

3.再次进入容器内观察配置会不会自动更新

cat /etc/nginx/conf.d/www.conf

nginx -T

安全认证和RBAC

API Server是访问控制的唯一入口

在k8s平台上的操作对象都要经历三种安全相关的操作

1.认证操作

http协议 token 认证令牌

ssl认证 kubectl需要证书双向认证

2.授权检查

RBAC 基于角色的访问控制

3.准入控制

进一步补充授权机制,一般在创建,删除,代理操作时作补充

k8s的api账户分为2类

1.实实在在的用户 人类用户 userAccount

2.POD客户端 serviceAccount 默认每个POD都有认真信息

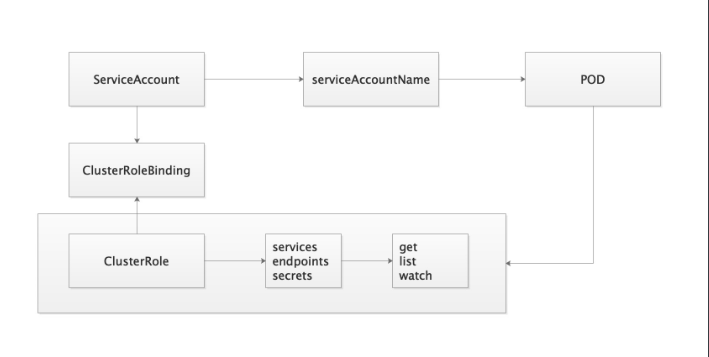

RBAC就要角色的访问控制

你这个账号可以拥有什么权限

以traefik举例:

1.创建了账号 ServiceAccount:traefik-ingress-controller

2.创建角色 ClusterRole: traefik-ingress-controller

Role POD相关的权限

ClusterRole namespace级别操作

3.将账户和权限角色进行绑定 traefik-ingress-controller

RoleBinding

ClusterRoleBinding

4.创建POD时引用ServiceAccount

serviceAccountName: traefik-ingress-controller

注意!!!

kubeadm安装的k8s集群,证书默认只有1年

k8s dashboard

1.官方项目地址

https://github.com/kubernetes/dashboard

2.下载配置文件

wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-rc5/aio/deploy/recommended.yaml

3.修改配置文件

39 spec:

40 type: NodePort

41 ports:

42 - port: 443

43 targetPort: 8443

44 nodePort: 30000

4.应用资源配置

kubectl create -f recommended.yaml

5.创建管理员账户并应用

cat > dashboard-admin.yaml<<EOF

apiVersion: v1

kind: ServiceAccount

metadata:

name: admin-user

namespace: kubernetes-dashboard

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: admin-user

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: cluster-admin

subjects:

- kind: ServiceAccount

name: admin-user

namespace: kubernetes-dashboard

EOF

kubectl create -f dashboard-admin.yaml

6.查看资源并获取token

kubectl get pod -n kubernetes-dashboard -o wide

kubectl get svc -n kubernetes-dashboard

kubectl get secret -n kubernetes-dashboard

kubectl -n kubernetes-dashboard describe secret $(kubectl -n kubernetes-dashboard get secret | grep admin-user | awk '{print $1}')

7.浏览器访问

https://10.0.0.11:30000

google浏览器打不开就换火狐浏览器

黑科技

this is unsafe

研究的方向

0.namespace

1.ServiceAccount

2.Service

3.Secret

4.configMap

5.RBAC

6.Deployment

重启k8s二进制安装(kubeadm)需要重启组件

1.kube-apiserver

2.kube-proxy

3.kube-sechduler

4.kube-controller

5.etcd

6.coredns

7.flannel

8.traefik

9.docker

10.kubelet

掌握—》熟悉—》了解

- 掌握:倒背如流。

- 熟悉:正背如流。

- 了解:看到能够想起。

如果喜欢本篇博文,博文左边可以点个赞,谢谢您啦!

如果您喜欢厚颜无耻的博主我,麻烦点个

关注