Deploying an HBase HA Distributed Cluster (for 3 or 5 Nodes)

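This post covers:

- Installing HBase in distributed mode (3 or 5 nodes)

- Starting HBase in distributed mode (3 or 5 nodes)

- Installing the HBase HA distributed cluster

- Starting the HBase HA distributed cluster

- Switching over between HBase HA masters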

HBase HA distributed cluster setup: cluster architecture

HBase HA distributed cluster setup: installation steps

Installing HBase in distributed mode (3 or 5 nodes)

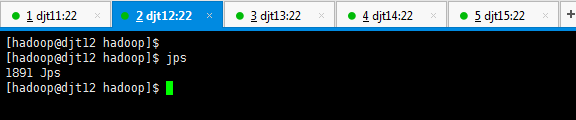

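1. On djt11, djt12, djt13, djt14, and djt15, stop all running cluster processes so that each node is back to a clean state (jps should then list only Jps itself).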

[hadoop@djt11 hadoop]$ pwd

[hadoop@djt11 hadoop]$ jps

[hadoop@djt12 hadoop]$ jps

[hadoop@djt13 hadoop]$ jps

[hadoop@djt14 hadoop]$ jps

[hadoop@djt15 hadoop]$ jps

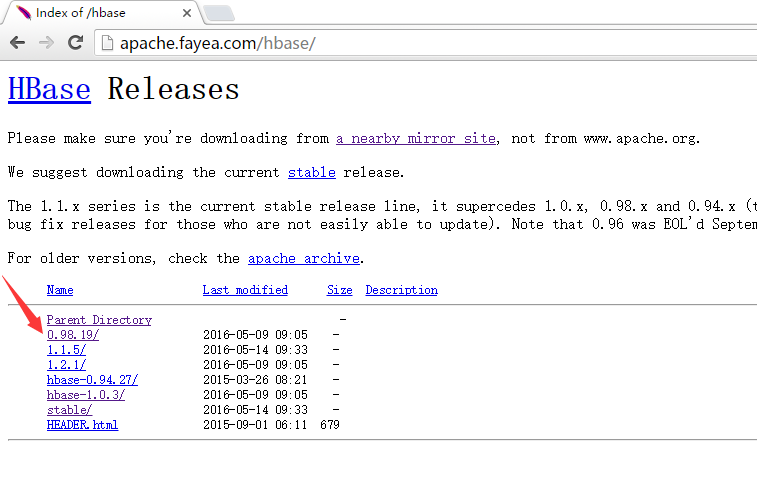
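The check in step 1 can also be scripted. The following is a minimal sketch and not part of the original setup: the function name `only_jps_running` is hypothetical, and the loop shown in the trailing comment assumes passwordless SSH between the nodes and that `jps` is on the PATH of a non-interactive SSH session.

```shell
#!/bin/sh
# Hypothetical helper: reads `jps` output on stdin and succeeds only if
# no JVM other than Jps itself is listed.
only_jps_running() {
    # jps prints "<pid> <main class>"; count lines whose class is not Jps.
    others=$(awk 'NF > 0 && $2 != "Jps" { n++ } END { print n + 0 }')
    [ "$others" -eq 0 ]
}

# Example use on the five nodes (assumption: passwordless SSH as hadoop):
# for host in djt11 djt12 djt13 djt14 djt15; do
#     ssh "$host" jps | only_jps_running || echo "$host still has running JVMs"
# done
```

The function only inspects text, so it can be tried locally with `jps | only_jps_running` before it is used across the cluster.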

2、切换到app安装目录

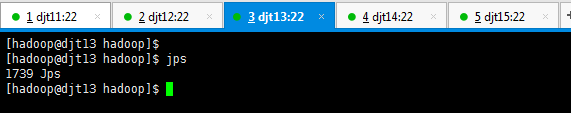

下载HBase压缩包

[hadoop@djt11 hadoop]$ pwd

[hadoop@djt11 hadoop]$ cd ..

[hadoop@djt11 app]$ pwd

[hadoop@djt11 app]$ ls

[hadoop@djt11 app]$ rz

[hadoop@djt11 app]$ ls

[hadoop@djt11 app]$ tar -zxvf hbase-0.98.19-hadoop2-bin.tar.gz

[hadoop@djt11 app]$ pwd

[hadoop@djt11 app]$ ls

[hadoop@djt11 app]$ mv hbase-0.98.19-hadoop2 hbase

[hadoop@djt11 app]$ rm -rf hbase-0.98.19-hadoop2-bin.tar.gz

[hadoop@djt11 app]$ ls

[hadoop@djt11 app]$ pwd

[hadoop@djt11 app]$

[hadoop@djt11 app]$ ls

[hadoop@djt11 app]$ cd hbase/

[hadoop@djt11 hbase]$ ls

[hadoop@djt11 hbase]$ cd conf/

[hadoop@djt11 conf]$ pwd

[hadoop@djt11 conf]$ ls

[hadoop@djt11 conf]$ vi regionservers

djt13

djt14

djt15

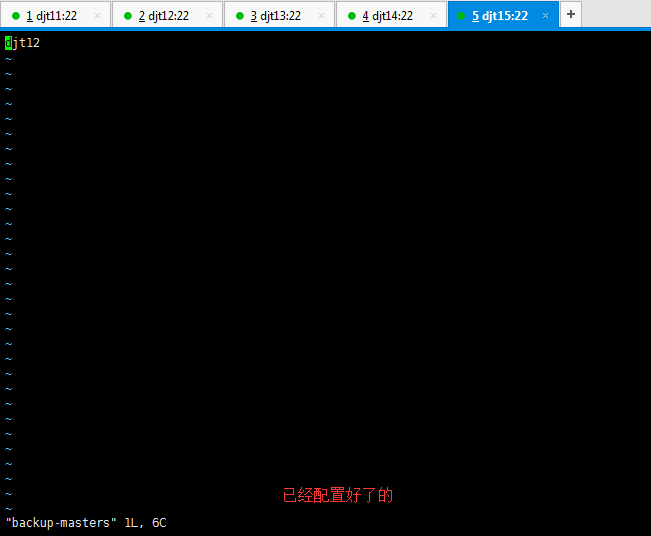

[hadoop@djt11 conf]$ vi backup-masters

djt12
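The two role files are plain host lists, one host per line, so they can also be written without an editor. This is a sketch under the host assignment used in this post; the function name `write_role_files` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: write the role files into the given HBase conf directory.
write_role_files() {
    conf_dir=$1
    # Hosts that run an HRegionServer, one per line.
    printf '%s\n' djt13 djt14 djt15 > "$conf_dir/regionservers"
    # Hosts that start a standby HMaster.
    printf '%s\n' djt12 > "$conf_dir/backup-masters"
}

# Example: write_role_files /home/hadoop/app/hbase/conf
```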

[hadoop@djt11 conf]$ cp /home/hadoop/app/hadoop/etc/hadoop/core-site.xml ./

[hadoop@djt11 conf]$ cp /home/hadoop/app/hadoop/etc/hadoop/hdfs-site.xml ./

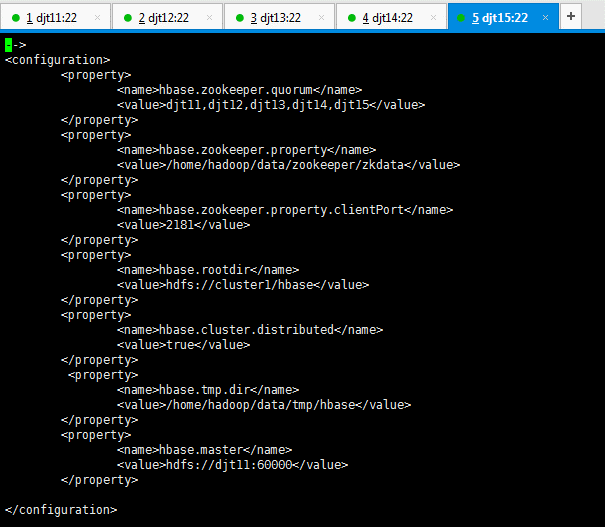

[hadoop@djt11 conf]$ vi hbase-site.xml

<configuration>

<property>

<name>hbase.zookeeper.quorum</name>

<value>djt11,djt12,djt13,djt14,djt15</value>

</property>

<property>

<name>hbase.zookeeper.property.dataDir</name>

<value>/home/hadoop/data/zookeeper/zkdata</value>

</property>

<property>

<name>hbase.zookeeper.property.clientPort</name>

<value>2181</value>

</property>

<property>

<name>hbase.rootdir</name>

<value>hdfs://cluster1/hbase</value>

</property>

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

<property>

<name>hbase.tmp.dir</name>

<value>/home/hadoop/data/tmp/hbase</value>

</property>

<property>

<name>hbase.master</name>

<value>hdfs://djt11:60000</value>

</property>

</configuration>
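A property whose name is misspelled has no effect, so it can be worth checking the names after editing hbase-site.xml. The sketch below is one possible check: the function name `missing_site_props` is hypothetical, and the list of names is simply the set used in this post, not an official list of required properties.

```shell
#!/bin/sh
# Hypothetical helper: print each expected property name that does not appear
# in the given hbase-site.xml. Matching is literal (grep -F), so a name with a
# stray or wrong character does not count as present.
missing_site_props() {
    site_file=$1
    for name in \
        hbase.zookeeper.quorum \
        hbase.zookeeper.property.dataDir \
        hbase.zookeeper.property.clientPort \
        hbase.rootdir \
        hbase.cluster.distributed
    do
        grep -qF "<name>$name</name>" "$site_file" || echo "$name"
    done
}

# Example: missing_site_props /home/hadoop/app/hbase/conf/hbase-site.xml
```

An empty result means every name in the list was found exactly as written.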

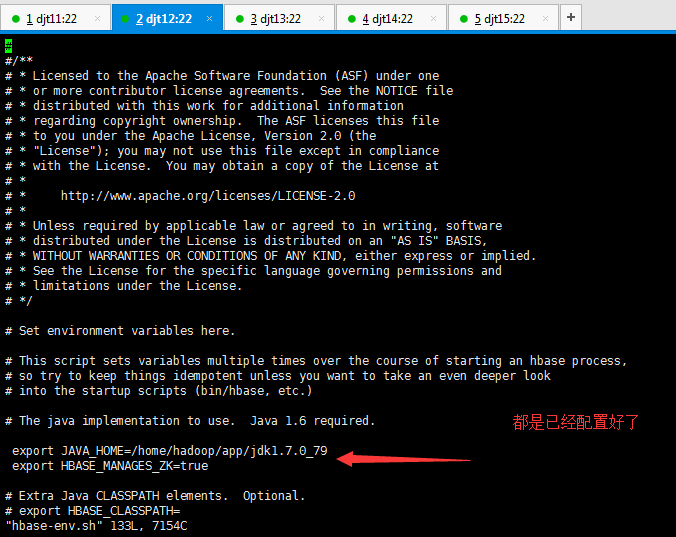

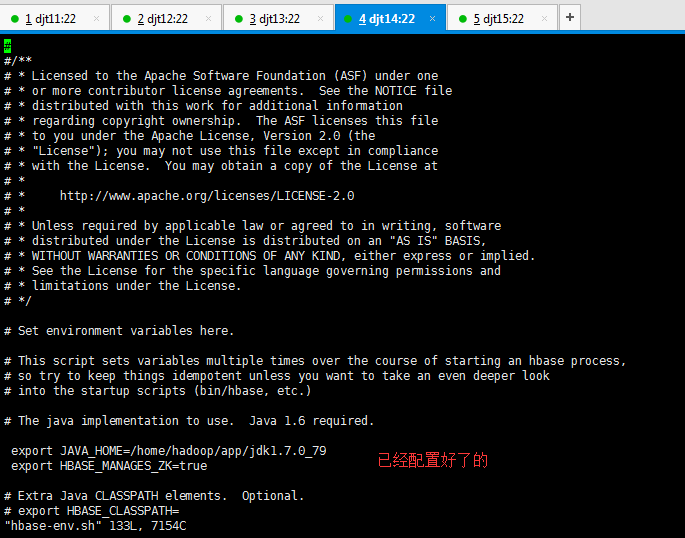

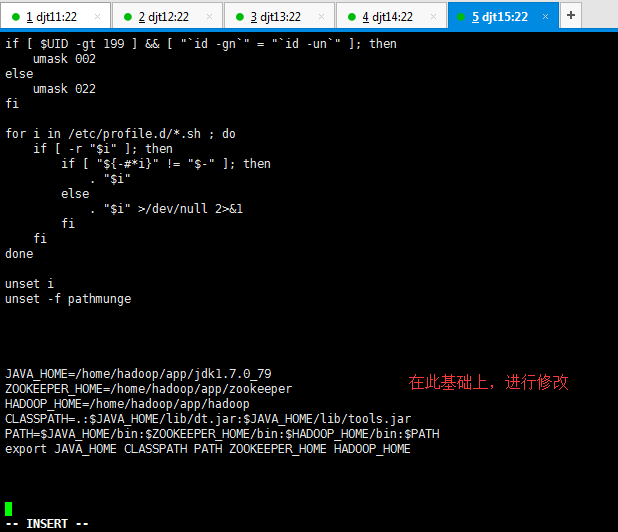

[hadoop@djt11 conf]$ vi hbase-env.sh

#export JAVA_HOME=/usr/java/jdk1.6.0/

Change it to:

export JAVA_HOME=/home/hadoop/app/jdk1.7.0_79

export HBASE_MANAGES_ZK=true

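One point worth knowing here.

With HBASE_MANAGES_ZK=true, HBase runs ZooKeeper as part of itself when it starts, and the ZooKeeper process shows up in jps as HQuorumPeer.

With HBASE_MANAGES_ZK=false, you start ZooKeeper manually first and then start HBase; the ZooKeeper process shows up in jps as QuorumPeerMain.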
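The two process names make it easy to tell from `jps` which mode a node is running in. A minimal sketch (the function name `zk_mode` and the wording of its output are hypothetical):

```shell
#!/bin/sh
# Hypothetical helper: reads `jps` output on stdin and reports how ZooKeeper
# is being run on this node, based on the process name.
zk_mode() {
    awk '
        $2 == "HQuorumPeer"    { mode = "managed by HBase" }
        $2 == "QuorumPeerMain" { mode = "standalone ZooKeeper" }
        END { print (mode == "" ? "no ZooKeeper process" : mode) }
    '
}

# Example: jps | zk_mode
```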

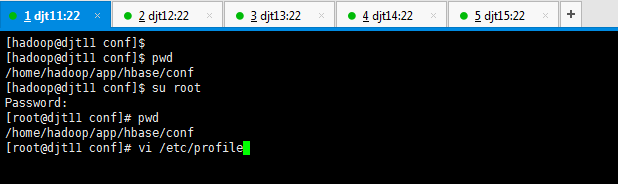

[hadoop@djt11 conf]$ pwd

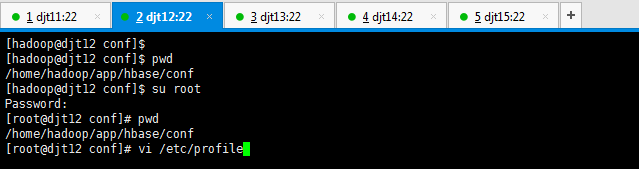

[hadoop@djt11 conf]$ su root

[root@djt11 conf]# pwd

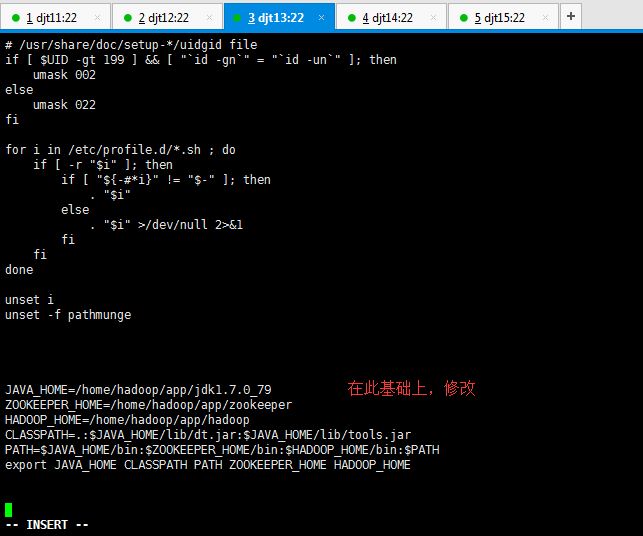

[root@djt11 conf]# vi /etc/profile

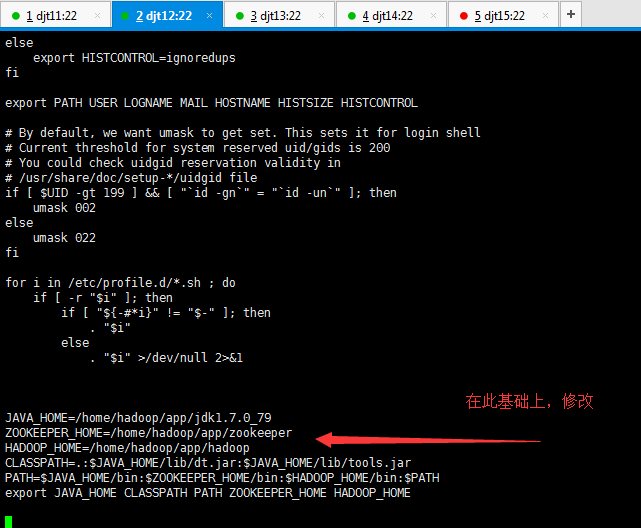

Before the change:

JAVA_HOME=/home/hadoop/app/jdk1.7.0_79

ZOOKEEPER_HOME=/home/hadoop/app/zookeeper

HADOOP_HOME=/home/hadoop/app/hadoop

HIVE_HOME=/home/hadoop/app/hive

CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

PATH=$JAVA_HOME/bin:$ZOOKEEPER_HOME/bin:$HADOOP_HOME/bin:$HIVE_HOME/bin:/home/hadoop/tools:$PATH

export JAVA_HOME CLASSPATH PATH ZOOKEEPER_HOME HADOOP_HOME HIVE_HOME

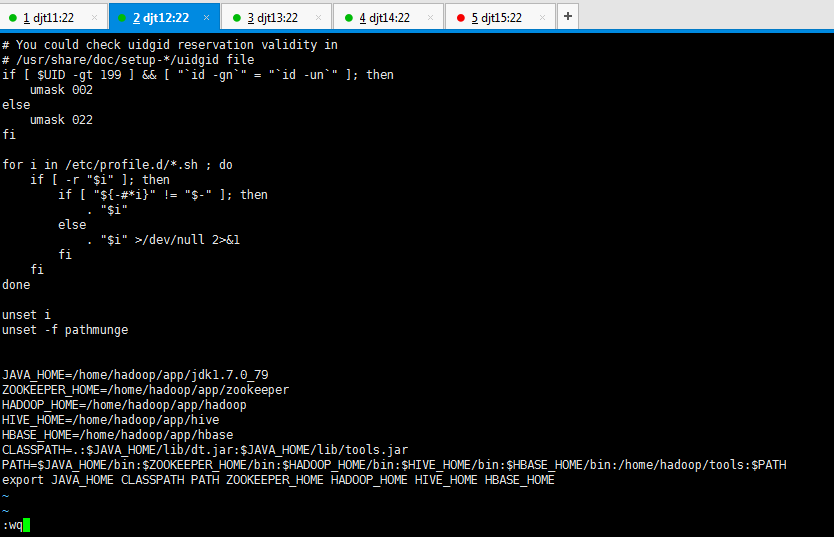

After adding HBASE_HOME:

JAVA_HOME=/home/hadoop/app/jdk1.7.0_79

ZOOKEEPER_HOME=/home/hadoop/app/zookeeper

HADOOP_HOME=/home/hadoop/app/hadoop

HIVE_HOME=/home/hadoop/app/hive

HBASE_HOME=/home/hadoop/app/hbase

CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

PATH=$JAVA_HOME/bin:$ZOOKEEPER_HOME/bin:$HADOOP_HOME/bin:$HIVE_HOME/bin:$HBASE_HOME/bin:/home/hadoop/tools:$PATH

export JAVA_HOME CLASSPATH PATH ZOOKEEPER_HOME HADOOP_HOME HIVE_HOME HBASE_HOME

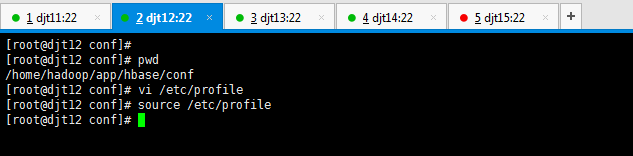

[root@djt11 conf]# source /etc/profile

[root@djt11 conf]# su hadoop
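After reloading /etc/profile, a quick check that the HBase bin directory really is on the PATH can save confusion later. This is a sketch; `path_contains` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: succeed if the given directory is an entry in $PATH.
path_contains() {
    case ":$PATH:" in
        *":$1:"*) return 0 ;;
        *)        return 1 ;;
    esac
}

# Example, in a shell that has sourced /etc/profile:
# path_contains /home/hadoop/app/hbase/bin && hbase version
```

Wrapping PATH in colons before matching avoids false hits on directories that merely share a prefix.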

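The distribution script (deploy.sh, used below) was written and tested earlier, when we built the 5-node Hadoop cluster. Here we only look at it again as a refresher; no changes are needed.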

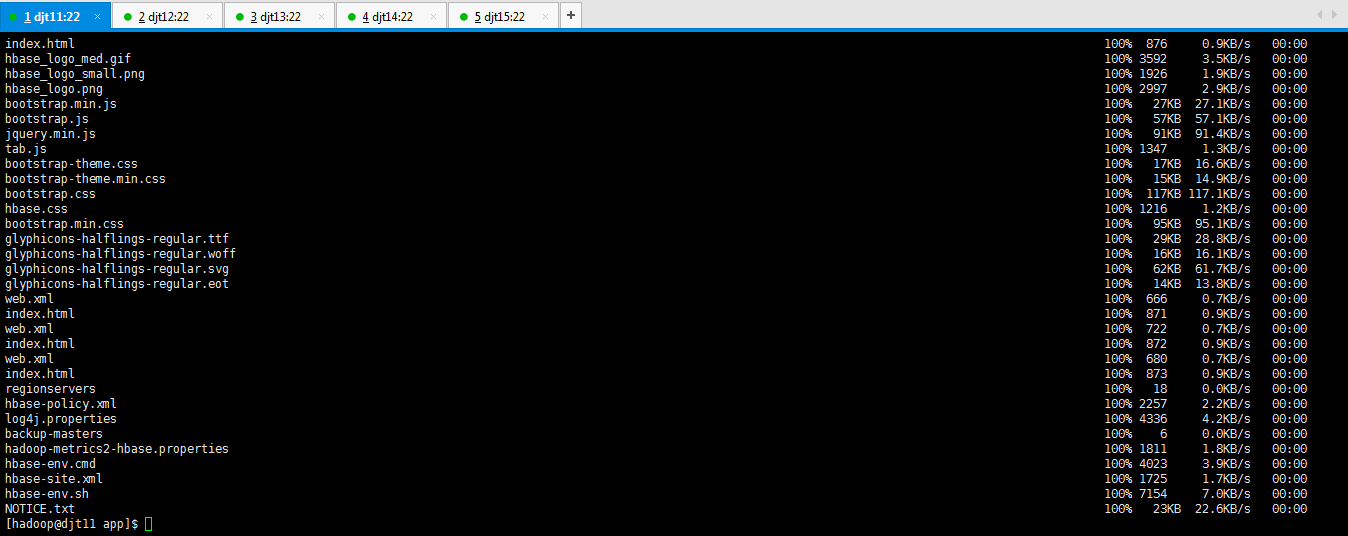

Distribute the hbase directory on djt11 to the slave group, that is, djt12, djt13, djt14, and djt15.

[hadoop@djt11 tools]$ pwd

[hadoop@djt11 tools]$ cd /home/hadoop/app/

[hadoop@djt11 app]$ ls

[hadoop@djt11 app]$ deploy.sh hbase /home/hadoop/app/ slave

Check the result of the distribution.

The distribution succeeded!
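The contents of deploy.sh are not reproduced in this post. For readers without that script, the following is a hypothetical stand-in that copies a directory to a list of hosts with `scp`. It is not the author's script, and it takes explicit host names instead of a group name such as `slave`. By default it only prints the commands.

```shell
#!/bin/sh
# Hypothetical stand-in for a distribution script; NOT the author's deploy.sh.
# Prints one scp command per target host. Set RUN=1 to execute them instead.
distribute() {
    src=$1
    dest=$2
    shift 2
    for host in "$@"; do
        if [ "${RUN:-0}" = "1" ]; then
            scp -r "$src" "$host:$dest"
        else
            echo "scp -r $src $host:$dest"
        fi
    done
}

# Example (dry run), from /home/hadoop/app:
# distribute hbase /home/hadoop/app/ djt12 djt13 djt14 djt15
```

Running it as a dry run first makes it easy to confirm the target paths before anything is copied.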

Next, configure djt12, djt13, djt14, and djt15 the same way as djt11.

Configuring djt12:

[hadoop@djt12 hbase]$ pwd

[hadoop@djt12 hbase]$ ls

[hadoop@djt12 hbase]$ cd conf/

[hadoop@djt12 conf]$ pwd

[hadoop@djt12 conf]$ ls

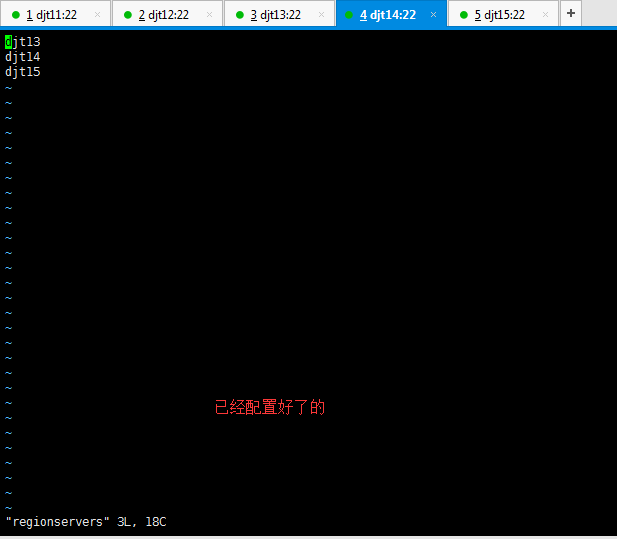

[hadoop@djt12 conf]$ vi regionservers

This is already configured.

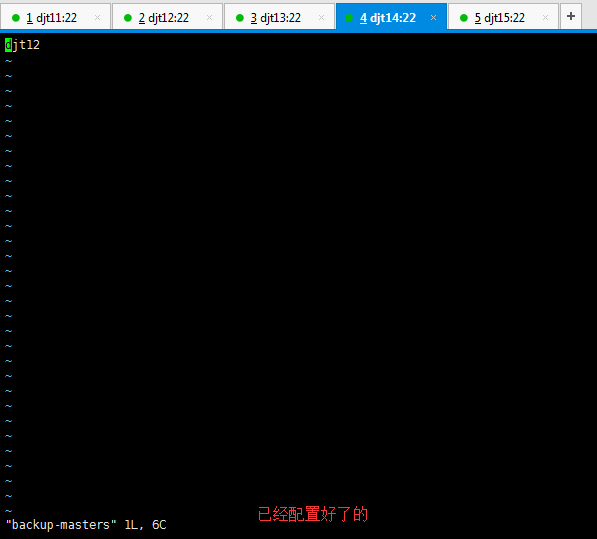

[hadoop@djt12 conf]$ vi backup-masters

This was also configured earlier.

[hadoop@djt12 conf]$ cp /home/hadoop/app/hadoop/etc/hadoop/core-site.xml ./

[hadoop@djt12 conf]$ cp /home/hadoop/app/hadoop/etc/hadoop/hdfs-site.xml ./

[hadoop@djt12 conf]$ vi hbase-site.xml

<configuration>

<property>

<name>hbase.zookeeper.quorum</name>

<value>djt11,djt12,djt13,djt14,djt15</value>

</property>

<property>

<name>hbase.zookeeper.property.dataDir</name>

<value>/home/hadoop/data/zookeeper/zkdata</value>

</property>

<property>

<name>hbase.zookeeper.property.clientPort</name>

<value>2181</value>

</property>

<property>

<name>hbase.rootdir</name>

<value>hdfs://cluster1/hbase</value>

</property>

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

<property>

<name>hbase.master</name>

<value>hdfs://djt11:60000</value>

</property>

</configuration>

[hadoop@djt12 conf]$ vi hbase-env.sh

[hadoop@djt12 conf]$ pwd

[hadoop@djt12 conf]$ su root

[root@djt12 conf]# pwd

[root@djt12 conf]# vi /etc/profile

[root@djt12 conf]# source /etc/profile

[root@djt12 conf]# cd ..

[root@djt12 hbase]# pwd

[root@djt12 hbase]# su hadoop

[hadoop@djt12 hbase]$ pwd

[hadoop@djt12 hbase]$ ls

[hadoop@djt12 hbase]$

Configuring djt13:

[hadoop@djt13 app]$ pwd

[hadoop@djt13 app]$ ls

[hadoop@djt13 app]$ cd hbase/

[hadoop@djt13 hbase]$ ls

[hadoop@djt13 hbase]$ cd conf/

[hadoop@djt13 conf]$ ls

[hadoop@djt13 conf]$ vi regionservers

[hadoop@djt13 conf]$ vi backup-masters

[hadoop@djt13 conf]$ cp /home/hadoop/app/hadoop/etc/hadoop/core-site.xml ./

[hadoop@djt13 conf]$ cp /home/hadoop/app/hadoop/etc/hadoop/hdfs-site.xml ./

[hadoop@djt13 conf]$ vi hbase-site.xml

<configuration>

<property>

<name>hbase.zookeeper.quorum</name>

<value>djt11,djt12,djt13,djt14,djt15</value>

</property>

<property>

<name>hbase.zookeeper.property.dataDir</name>

<value>/home/hadoop/data/zookeeper/zkdata</value>

</property>

<property>

<name>hbase.zookeeper.property.clientPort</name>

<value>2181</value>

</property>

<property>

<name>hbase.rootdir</name>

<value>hdfs://cluster1/hbase</value>

</property>

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

<property>

<name>hbase.master</name>

<value>hdfs://cluster1:60000</value>

</property>

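<!-- Note: my screenshot is wrong here. Because high availability is configured, the value uses cluster1 rather than the single host djt11; cluster1 covers both djt11 and djt12. -->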

</configuration>

[hadoop@djt13 conf]$ pwd

[hadoop@djt13 conf]$ su root

[root@djt13 conf]# pwd

[root@djt13 conf]# vi /etc/profile

JAVA_HOME=/home/hadoop/app/jdk1.7.0_79

ZOOKEEPER_HOME=/home/hadoop/app/zookeeper

HADOOP_HOME=/home/hadoop/app/hadoop

HIVE_HOME=/home/hadoop/app/hive

HBASE_HOME=/home/hadoop/app/hbase

CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

PATH=$JAVA_HOME/bin:$ZOOKEEPER_HOME/bin:$HADOOP_HOME/bin:$HIVE_HOME/bin:$HBASE_HOME/bin:/home/hadoop/tools:$PATH

export JAVA_HOME CLASSPATH PATH ZOOKEEPER_HOME HADOOP_HOME HIVE_HOME HBASE_HOME

[root@djt13 conf]# source /etc/profile

[root@djt13 conf]# cd ..

[root@djt13 hbase]# pwd

[root@djt13 hbase]# su hadoop

[hadoop@djt13 hbase]$ pwd

[hadoop@djt13 hbase]$ ls

[hadoop@djt13 hbase]$

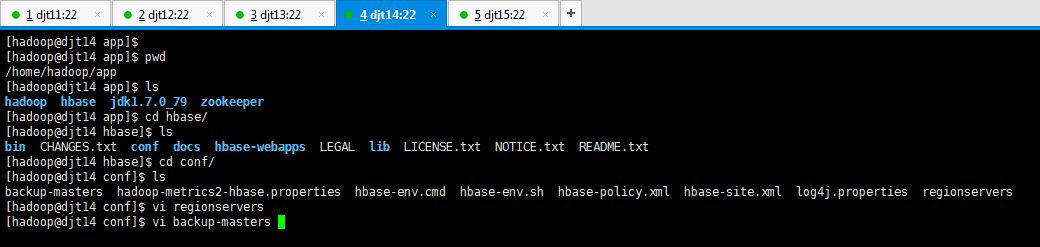

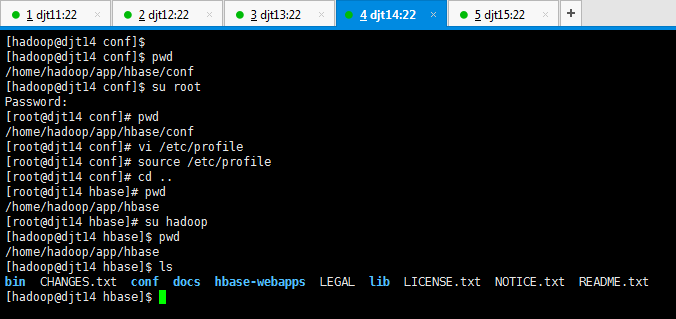

Configuring djt14:

[hadoop@djt14 app]$ pwd

[hadoop@djt14 app]$ ls

[hadoop@djt14 app]$ cd hbase/

[hadoop@djt14 hbase]$ ls

[hadoop@djt14 hbase]$ cd conf/

[hadoop@djt14 conf]$ ls

[hadoop@djt14 conf]$ vi regionservers

[hadoop@djt14 conf]$ vi backup-masters

[hadoop@djt14 conf]$ cp /home/hadoop/app/hadoop/etc/hadoop/core-site.xml ./

[hadoop@djt14 conf]$ cp /home/hadoop/app/hadoop/etc/hadoop/hdfs-site.xml ./

[hadoop@djt14 conf]$ vi hbase-site.xml

<configuration>

<property>

<name>hbase.zookeeper.quorum</name>

<value>djt11,djt12,djt13,djt14,djt15</value>

</property>

<property>

<name>hbase.zookeeper.property.dataDir</name>

<value>/home/hadoop/data/zookeeper/zkdata</value>

</property>

<property>

<name>hbase.zookeeper.property.clientPort</name>

<value>2181</value>

</property>

<property>

<name>hbase.rootdir</name>

<value>hdfs://cluster1/hbase</value>

</property>

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

<property>

<name>hbase.master</name>

<value>hdfs://djt11:60000</value>

</property>

</configuration>

[hadoop@djt14 conf]$ vi hbase-env.sh

[hadoop@djt14 conf]$ pwd

[hadoop@djt14 conf]$ su root

[root@djt14 conf]# pwd

[root@djt14 conf]# vi /etc/profile

[root@djt14 conf]# source /etc/profile

[root@djt14 conf]# cd ..

[root@djt14 hbase]# pwd

[root@djt14 hbase]# su hadoop

[hadoop@djt14 hbase]$ pwd

[hadoop@djt14 hbase]$ ls

[hadoop@djt14 hbase]$

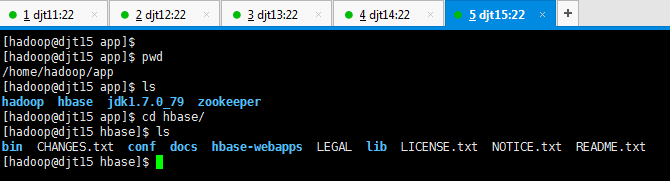

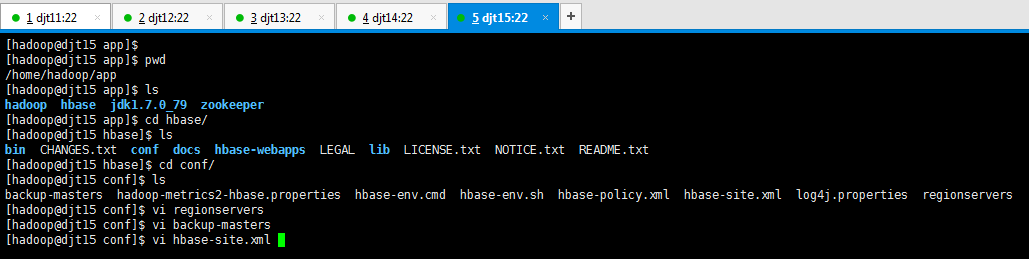

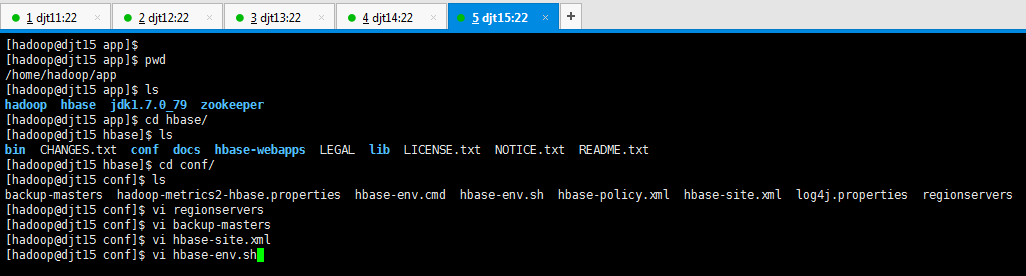

Configuring djt15:

[hadoop@djt15 app]$ pwd

[hadoop@djt15 app]$ ls

[hadoop@djt15 app]$ cd hbase/

[hadoop@djt15 hbase]$ ls

[hadoop@djt15 hbase]$ cd conf/

[hadoop@djt15 conf]$ ls

[hadoop@djt15 conf]$ vi regionservers

[hadoop@djt15 conf]$ vi backup-masters

[hadoop@djt15 conf]$ cp /home/hadoop/app/hadoop/etc/hadoop/core-site.xml ./

[hadoop@djt15 conf]$ cp /home/hadoop/app/hadoop/etc/hadoop/hdfs-site.xml ./

[hadoop@djt15 conf]$ vi hbase-site.xml

<configuration>

<property>

<name>hbase.zookeeper.quorum</name>

<value>djt11,djt12,djt13,djt14,djt15</value>

</property>

<property>

<name>hbase.zookeeper.property.dataDir</name>

<value>/home/hadoop/data/zookeeper/zkdata</value>

</property>

<property>

<name>hbase.zookeeper.property.clientPort</name>

<value>2181</value>

</property>

<property>

<name>hbase.rootdir</name>

<value>hdfs://cluster1/hbase</value>

</property>

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

<property>

<name>hbase.master</name>

<value>hdfs://djt11:60000</value>

</property>

</configuration>

[hadoop@djt15 conf]$ vi hbase-env.sh

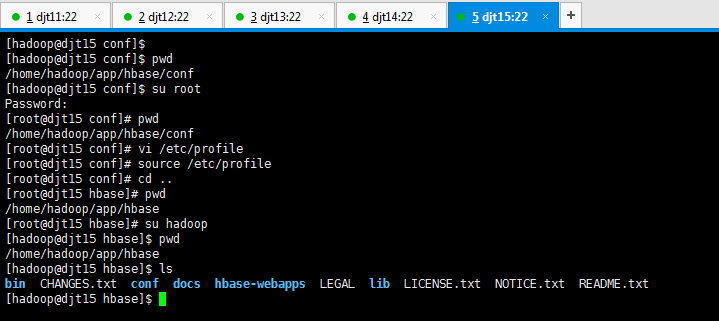

[hadoop@djt15 conf]$ pwd

[hadoop@djt15 conf]$ su root

[root@djt15 conf]# pwd

[root@djt15 conf]# vi /etc/profile

[root@djt15 conf]# source /etc/profile

[root@djt15 conf]# cd ..

[root@djt15 hbase]# pwd

[root@djt15 hbase]# su hadoop

[hadoop@djt15 hbase]$ pwd

[hadoop@djt15 hbase]$ ls

[hadoop@djt15 hbase]$

Starting HBase in distributed mode (3 or 5 nodes)

Here it is enough to run sbin/start-dfs.sh.

sbin/start-all.sh is not needed (it runs both sbin/start-dfs.sh and sbin/start-yarn.sh).

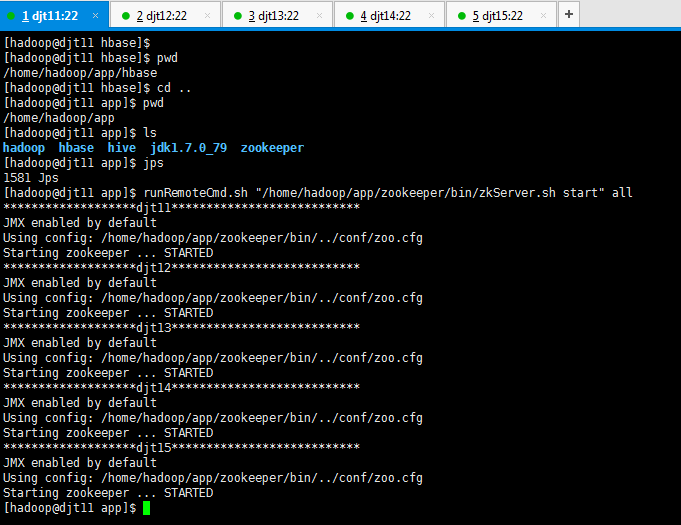

ZooKeeper is started because HBase is built on top of ZooKeeper and relies on it for coordination. The data itself is stored in HDFS.
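Put together, the start-up order is ZooKeeper, then HDFS, then HBase. The sketch below only prints the commands in that order and executes nothing. It assumes ZooKeeper is started separately with `zkServer.sh` on each host in the quorum, as the text implies, and uses the install paths from the /etc/profile entries in this post. The function name `print_start_order` is hypothetical.

```shell
#!/bin/sh
# Dry run: prints the start-up commands in order, nothing is executed.
# Assumption: ZooKeeper runs on all five quorum hosts and is started by hand.
print_start_order() {
    for host in djt11 djt12 djt13 djt14 djt15; do
        echo "ssh $host /home/hadoop/app/zookeeper/bin/zkServer.sh start"
    done
    # HDFS only; YARN is not needed for HBase itself.
    echo "/home/hadoop/app/hadoop/sbin/start-dfs.sh"
    # Starts the HMaster, the backup masters, and the RegionServers.
    echo "/home/hadoop/app/hbase/bin/start-hbase.sh"
}

# Example: print_start_order
```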

[hadoop@djt11 app]$ jps

[hadoop@djt11 app]$ ls

[hadoop@djt11 app]$ cd hadoop/

[hadoop@djt11 hadoop]$ ls

[hadoop@djt11 hadoop]$ sbin/start-dfs.sh

[hadoop@djt11 hadoop]$ jps

[hadoop@djt12 hadoop]$ jps

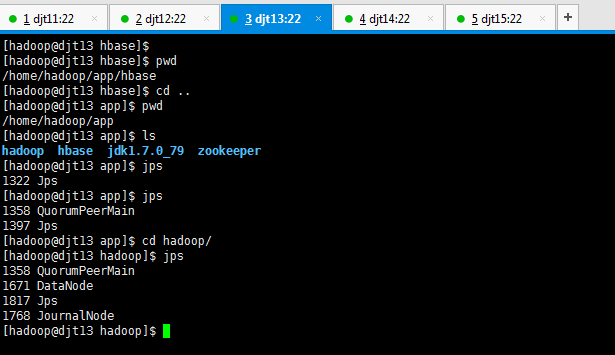

[hadoop@djt13 app]$ cd hadoop/

[hadoop@djt13 hadoop]$ jps

[hadoop@djt14 app]$ cd hadoop/

[hadoop@djt14 hadoop]$ pwd

[hadoop@djt14 hadoop]$ jps

[hadoop@djt15 app]$ cd hadoop/

[hadoop@djt15 hadoop]$ pwd

[hadoop@djt15 hadoop]$ jps

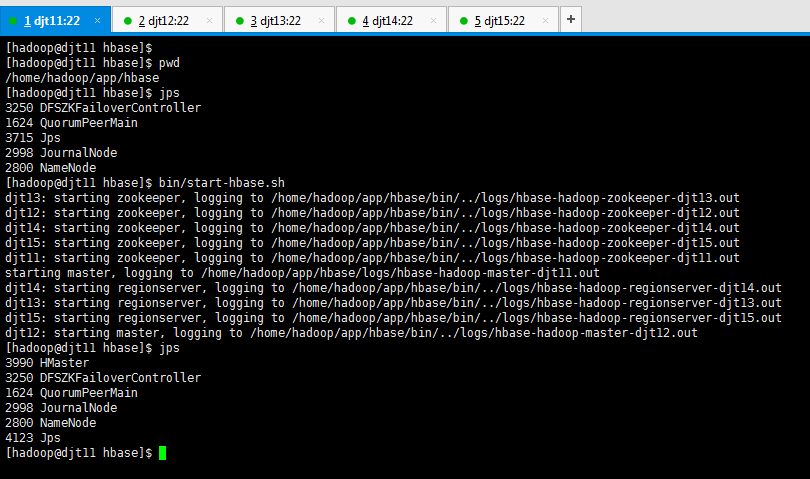

[hadoop@djt11 hbase]$ bin/start-hbase.sh

[hadoop@djt11 hbase]$ jps

[hadoop@djt12 hbase]$ cd ..

[hadoop@djt12 app]$ ls

[hadoop@djt12 app]$ cd hbase/

[hadoop@djt12 hbase]$ pwd

[hadoop@djt12 hbase]$ jps

[hadoop@djt13 hbase]$ cd ..

[hadoop@djt13 app]$ ls

[hadoop@djt13 app]$ cd hbase/

[hadoop@djt13 hbase]$ pwd

[hadoop@djt13 hbase]$ jps

[hadoop@djt14 hbase]$ cd ..

[hadoop@djt14 app]$ ls

[hadoop@djt14 app]$ cd hbase/

[hadoop@djt14 hbase]$ pwd

[hadoop@djt14 hbase]$ jps

[hadoop@djt15 hbase]$ cd ..

[hadoop@djt15 app]$ ls

[hadoop@djt15 app]$ cd hbase/

[hadoop@djt15 hbase]$ pwd

[hadoop@djt15 hbase]$ jps

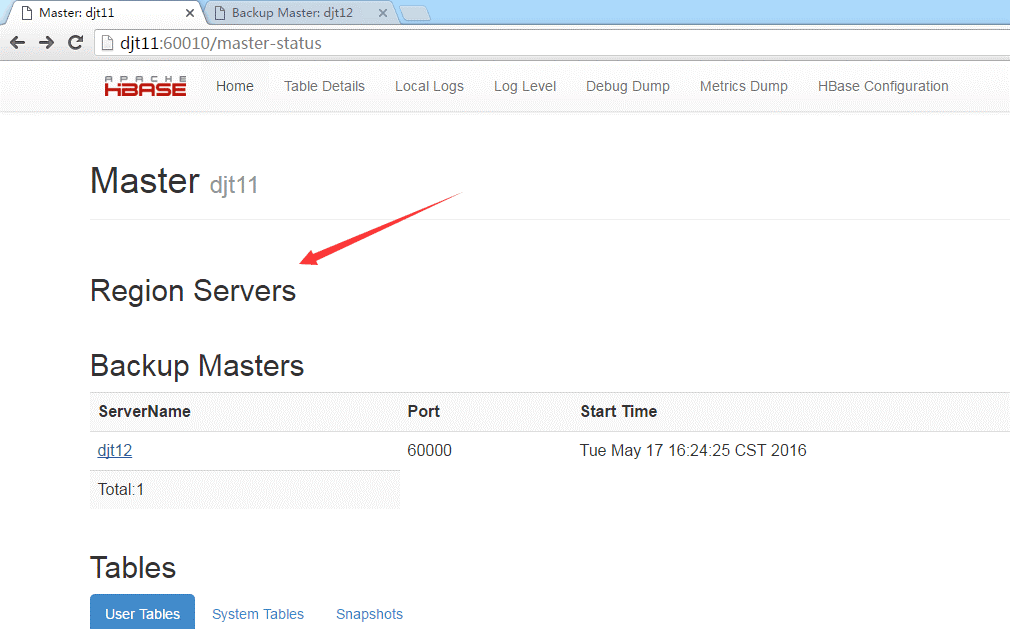

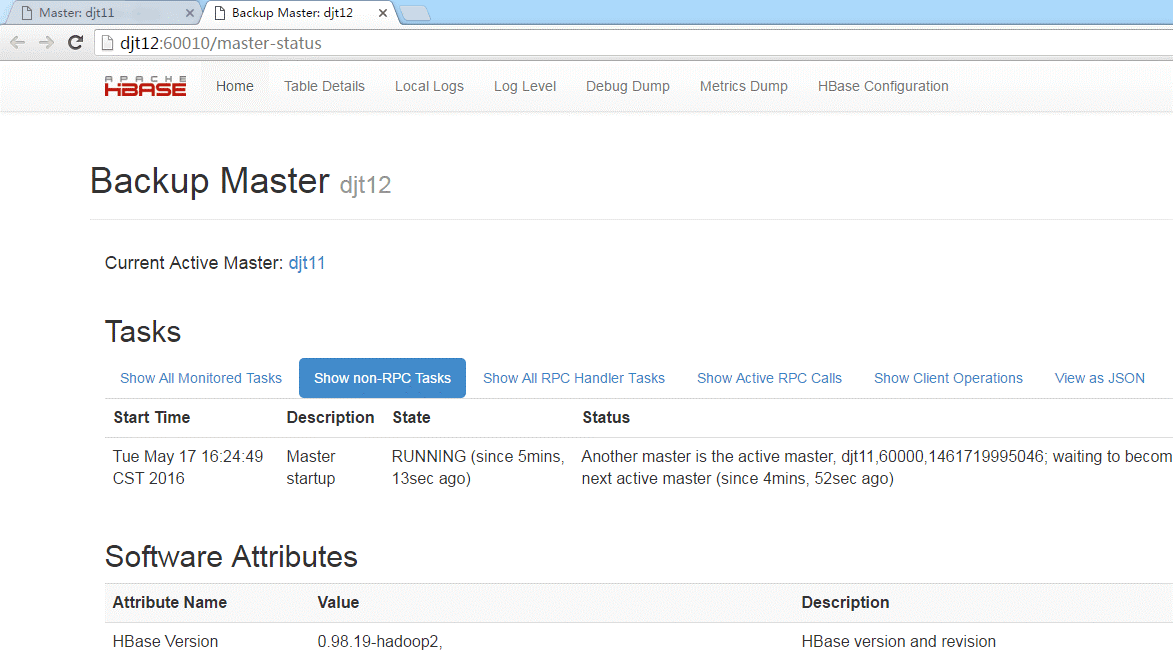

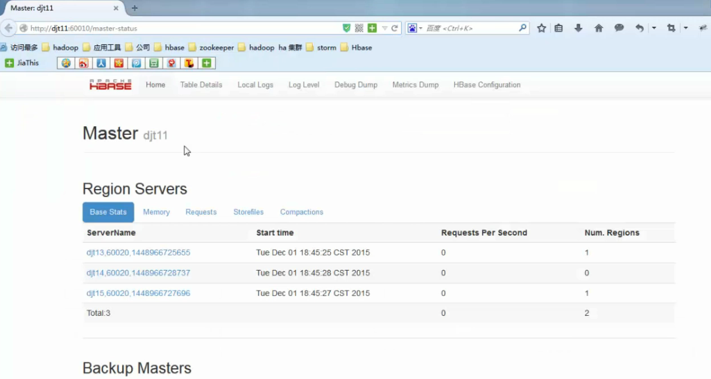

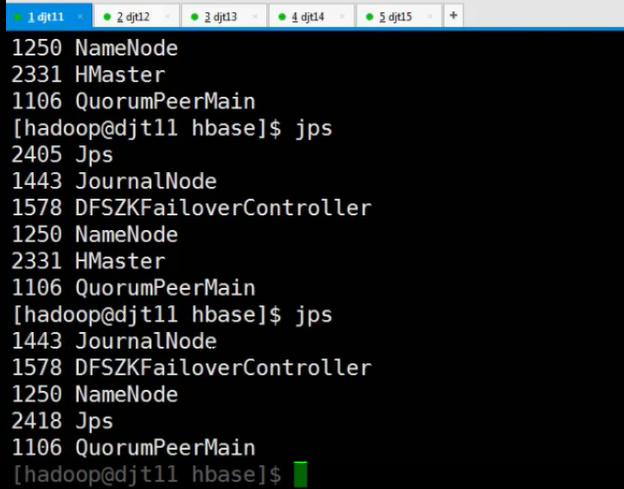

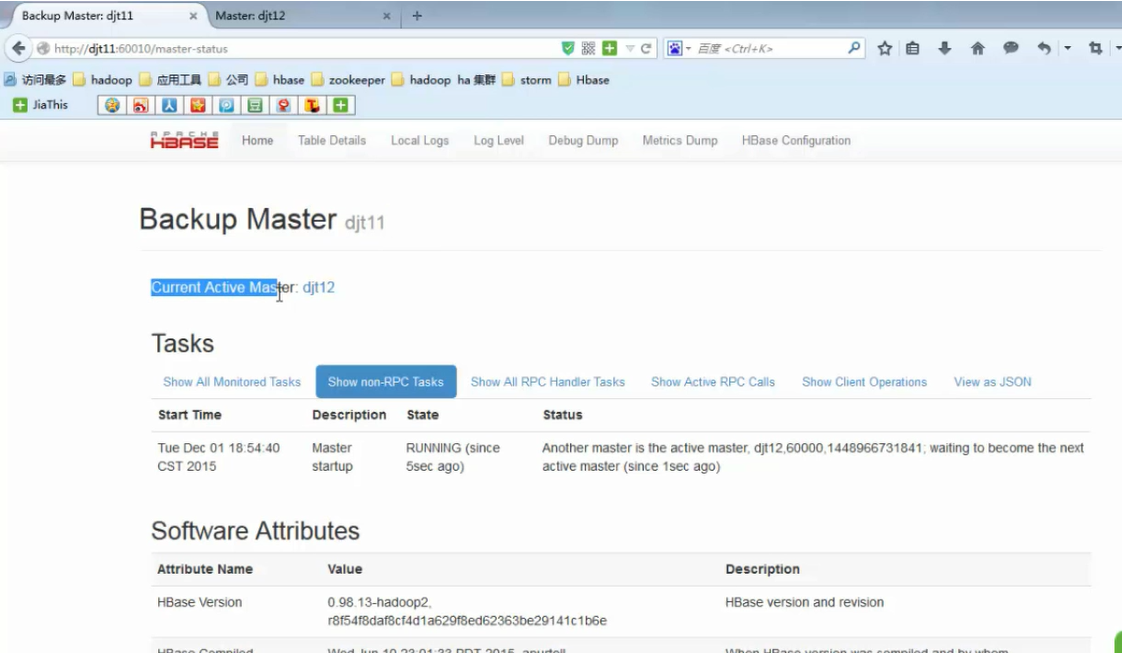
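When checking the `jps` output on each node after start-up, it helps to know which HBase process to expect where. A small sketch based on the role files configured earlier (djt11 as master, djt12 as backup master, djt13 to djt15 as RegionServers); the function name `expected_role` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print the HBase process name expected in `jps`
# on the given host, following the role assignment used in this post.
expected_role() {
    case $1 in
        djt11|djt12)       echo HMaster ;;
        djt13|djt14|djt15) echo HRegionServer ;;
        *)                 return 1 ;;
    esac
}

# Example, run on a node:
# jps | grep -qw "$(expected_role "$(hostname)")" || echo "HBase role missing"
```

The example in the comment assumes `hostname` returns the short names djt11 to djt15.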

If the master on djt11 is killed, djt11 can no longer be accessed.

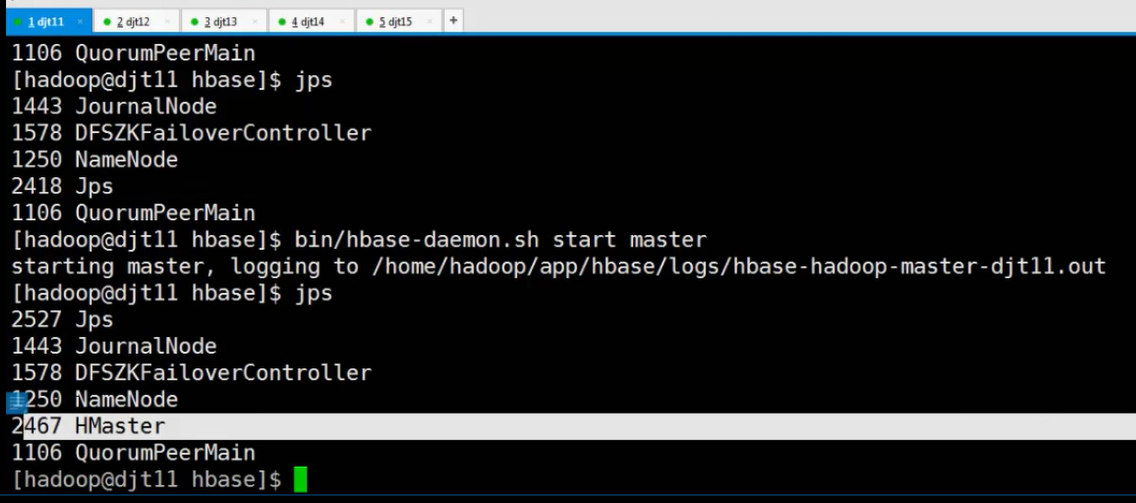

Then we start the master on djt11 again.

djt11 goes from unreachable to being the backup master, while djt12 remains the active master.

Success!
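The exact commands for the switchover test are only visible in the screenshots. One way to reproduce it is sketched below: find the HMaster pid from `jps`, kill it to simulate a crash, and later start a master on the same node again with `hbase-daemon.sh`. The helper name `hmaster_pid` is hypothetical, and the commented commands are an assumption about how the test was run, not a transcript.

```shell
#!/bin/sh
# Hypothetical helper: reads `jps` output on stdin and prints the pid of the
# HMaster process, if there is one.
hmaster_pid() {
    awk '$2 == "HMaster" { print $1 }'
}

# On djt11 (the active master), as the hadoop user:
# kill -9 "$(jps | hmaster_pid)"      # simulate a master crash
# ...confirm that djt12 has taken over as the active master...
# /home/hadoop/app/hbase/bin/hbase-daemon.sh start master   # rejoin as backup
```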

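Author: 大数据和人工智能躺过的坑

Source: http://www.cnblogs.com/zlslch/

Copyright of this article is shared by the author and cnblogs (博客园). Reposting is welcome, but unless the author agrees otherwise, this notice must be kept and a link to the original must be shown prominently on the page; otherwise the author reserves the right to pursue legal liability.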
