Scrapy in practice: scraping a weather forecast + a scheduled task

Target site:

http://www.weather.com.cn/

Weather page for a specific city:

http://www.weather.com.cn/weather1d/101280601.shtm (Shenzhen)

Getting started:

scrapy startproject weather

Write items.py

import scrapy


class WeatherItem(scrapy.Item):
    # One field per value scraped for each forecast day
    date = scrapy.Field()
    temperature = scrapy.Field()
    weather = scrapy.Field()
    wind = scrapy.Field()

Write the spider:

# -*- coding: utf-8 -*-
# @Time : 2019/8/1 15:40
# @Author : wujf
# @Email : 1028540310@qq.com
# @File : weather.py
# @Software: PyCharm

import scrapy

from weather.items import WeatherItem


class weather(scrapy.Spider):
    name = 'weather'
    # allowed_domains takes bare domain names, not full URLs
    allowed_domains = ['www.weather.com.cn']
    start_urls = [
        'http://www.weather.com.cn/weather/101280601.shtml'
    ]

    def parse(self, response):
        '''
        Extract the forecast fields from the response.

        date        = the date
        temperature = that day's temperature
        weather     = that day's weather
        wind        = that day's wind

        :param response: the downloaded forecast page
        :return: a list of WeatherItem objects
        '''
        items = []
        day = response.xpath('//ul[@class="t clearfix"]')
        # The list holds one <li> per day; XPath positions start at 1
        for i in range(1, 8):
            li = './li[%d]' % i
            item = WeatherItem()
            item['date'] = day.xpath(li + '/h1//text()').extract_first()
            item['temperature'] = day.xpath(li + '/p[@class="tem"]/i//text()').extract_first()
            item['weather'] = day.xpath(li + '/p[@class="wea"]//text()').extract_first()
            item['wind'] = day.xpath(li + '/p[@class="win"]/i//text()').extract_first()
            items.append(item)
        return items
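The loop above hard-codes seven days. Here is a rough sketch of the same extraction that walks whatever li nodes are actually present instead. To keep it runnable without Scrapy it uses the standard library's ElementTree in place of Scrapy selectors, and the markup and values in SAMPLE_HTML are made up, modelled only on the XPath expressions used in the spider; the real page is bigger and may differ. The function name extract_forecast is also just for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical, simplified markup with made-up values.
SAMPLE_HTML = """
<div>
  <ul class="t clearfix">
    <li>
      <h1>Day 1</h1>
      <p class="wea">Cloudy</p>
      <p class="tem"><span>33</span>/<i>27C</i></p>
      <p class="win"><i>Force 3-4</i></p>
    </li>
    <li>
      <h1>Day 2</h1>
      <p class="wea">Showers</p>
      <p class="tem"><span>32</span>/<i>26C</i></p>
      <p class="win"><i>Force 3</i></p>
    </li>
    <li>
      <h1>Day 3</h1>
      <p class="wea">Sunny</p>
      <p class="tem"><i>25C</i></p>
    </li>
  </ul>
</div>
"""


def extract_forecast(html):
    """Return one dict per <li> found in the forecast list."""
    root = ET.fromstring(html)
    day_list = root.find(".//ul[@class='t clearfix']")
    if day_list is None:
        return []
    rows = []
    for li in day_list.findall("li"):
        rows.append({
            # findtext() gives None when the element is missing
            "date": li.findtext("h1"),
            "temperature": li.findtext("p[@class='tem']/i"),
            "weather": li.findtext("p[@class='wea']"),
            "wind": li.findtext("p[@class='win']/i"),
        })
    return rows


for row in extract_forecast(SAMPLE_HTML):
    print(row)
```

In the spider itself the equivalent would be to iterate over day.xpath('./li') and run the relative XPaths on each node, so a shorter or longer list never produces empty items.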

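Write the pipelines:

pipelines.py does the final processing of the data the spider has scraped. Usually we save the data locally, in one of three ways:

1. Plain text: the most basic storage format

2. JSON: easy for other programs to consume

3. Database: the choice when there is a lot of data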

import os
import json
import codecs

import pymysql


# Plain-text output
class WeatherPipeline(object):
    def process_item(self, item, spider):
        # Get the current directory
        base_dir = os.getcwd()
        #filename = base_dir+'\\data\\test.txt'
        filename = r'E:\Python\weather\weather\data\test.txt'
        # Append one field per line, with a blank line after each item;
        # utf-8 keeps the Chinese text readable regardless of the system locale
        with open(filename, 'a', encoding='utf-8') as f:
            f.write(item['date'] + '\n')
            f.write(item['temperature'] + '\n')
            f.write(item['weather'] + '\n')
            f.write(item['wind'] + '\n\n')
        return item
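The pipeline above reopens the file for every item and depends on a hard-coded path. A minimal sketch of an alternative, assuming you are happy with a path relative to wherever scrapy crawl is run: open the file once in open_spider, close it in close_spider, and write an empty line for any field that came back as None. The class name TextFilePipeline and the default path are my own placeholders, not part of the project above.

```python
from pathlib import Path


class TextFilePipeline:
    """Hypothetical variant of WeatherPipeline that opens the file once."""

    def __init__(self, path="data/test.txt"):
        # Assumption: a relative default path; adjust to your own layout.
        self.path = Path(path)
        self.file = None

    def open_spider(self, spider):
        # Scrapy calls this once when the spider starts.
        self.path.parent.mkdir(parents=True, exist_ok=True)
        self.file = self.path.open("a", encoding="utf-8")

    def close_spider(self, spider):
        # Scrapy calls this once when the spider finishes.
        if self.file is not None:
            self.file.close()
            self.file = None

    def process_item(self, item, spider):
        for field in ("date", "temperature", "weather", "wind"):
            # extract_first() yields None when nothing matched
            self.file.write((item.get(field) or "") + "\n")
        self.file.write("\n")  # blank line between items
        return item
```

It would be registered in ITEM_PIPELINES the same way as the other pipeline classes.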

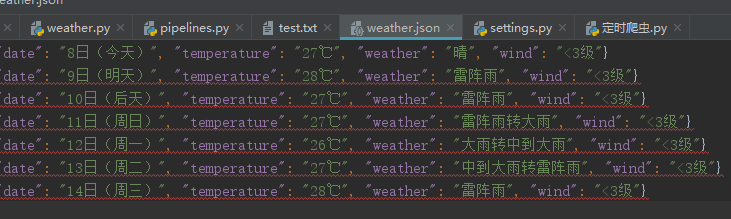

# JSON output
class W2json(object):
    def process_item(self, item, spider):
        '''
        Save the scraped data as JSON so that other programs can use it easily.
        '''
        base_dir = os.getcwd()
        #filename = base_dir + '/data/weather.json'
        filename = r'E:\Python\weather\weather\data\weather.json'
        # Open the JSON file and append one json.dumps() line per item.
        # ensure_ascii=False is needed here; without it, non-ASCII characters
        # are written as \uXXXX escape sequences instead of readable text.
        with codecs.open(filename, 'a', encoding='utf-8') as f:
            line = json.dumps(dict(item), ensure_ascii=False) + '\n'
            f.write(line)
        return item
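To see what ensure_ascii actually changes, here is a tiny standalone comparison. The record is a made-up sample value, not real scraped data.

```python
import json

record = {"weather": "多云"}  # made-up sample value

escaped = json.dumps(record)                       # default: ensure_ascii=True
readable = json.dumps(record, ensure_ascii=False)  # keep characters as-is

print(escaped)   # non-ASCII characters come out as \uXXXX escapes
print(readable)  # the original characters are preserved

# Both strings decode back to the same data; only readability differs.
assert json.loads(escaped) == json.loads(readable) == record
```

Either form is valid JSON, so the flag matters mainly when people (or tools like grep) need to read the file directly.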

# MySQL output
class W2mysql(object):
    def process_item(self, item, spider):
        '''
        Save the scraped data to MySQL.
        '''
        date = item['date']
        temperature = item['temperature']
        weather = item['weather']
        wind = item['wind']

        connection = pymysql.connect(
            host='127.0.0.1',
            user='root',
            password='root',
            database='scrapy',
            # MySQL charset names have no hyphen ('utf-8' is not valid)
            charset='utf8mb4',
            cursorclass=pymysql.cursors.DictCursor
        )
        try:
            with connection.cursor() as cursor:
                # Parameterized INSERT: the driver fills in the %s placeholders
                sql = """INSERT INTO `weather` (date, temperature, weather, wind) VALUES (%s, %s, %s, %s) """
                cursor.execute(
                    sql, (date, temperature, weather, wind)
                )
            connection.commit()
        finally:
            connection.close()
        return item
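W2mysql opens and closes a connection for every item. A sketch of the usual alternative is below: connect once in open_spider and reuse the connection. So that it runs without a MySQL server, it swaps in the standard library's sqlite3 for pymysql; the class name SqlitePipeline and the table definition are assumptions (the table schema is not shown anywhere above), and sqlite3 uses ? placeholders where pymysql uses %s.

```python
import sqlite3


class SqlitePipeline:
    """Stand-in for W2mysql that keeps one connection per crawl."""

    def __init__(self, database=":memory:"):
        self.database = database
        self.connection = None

    def open_spider(self, spider):
        self.connection = sqlite3.connect(self.database)
        # Hypothetical schema: four text columns matching the item fields.
        self.connection.execute(
            "CREATE TABLE IF NOT EXISTS weather ("
            "date TEXT, temperature TEXT, weather TEXT, wind TEXT)"
        )

    def close_spider(self, spider):
        if self.connection is not None:
            self.connection.close()
            self.connection = None

    def process_item(self, item, spider):
        # Placeholders keep the values out of the SQL text itself.
        self.connection.execute(
            "INSERT INTO weather (date, temperature, weather, wind) "
            "VALUES (?, ?, ?, ?)",
            (item.get("date"), item.get("temperature"),
             item.get("weather"), item.get("wind")),
        )
        self.connection.commit()
        return item
```

With pymysql the shape would be the same: call pymysql.connect(...) in open_spider and connection.close() in close_spider.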

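Then configure settings.py:

'''
Set the log level

ERROR   : ordinary errors
WARNING : warnings
INFO    : general information
DEBUG   : debugging information

The default level is DEBUG
'''
LOG_LEVEL = 'INFO'

# Enable the three pipelines; lower values run first
ITEM_PIPELINES = {
    'weather.pipelines.WeatherPipeline': 300,
    'weather.pipelines.W2json': 400,
    'weather.pipelines.W2mysql': 300,
}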
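The numbers in ITEM_PIPELINES are priorities: Scrapy passes each item through the pipelines from the lowest value to the highest. The sketch below only illustrates that ordering with a plain sort; pipeline_order is a made-up helper, not a Scrapy function, and I have given W2mysql the value 500 here (an assumption, it is 300 above) so that no two pipelines share a priority.

```python
ITEM_PIPELINES = {
    "weather.pipelines.WeatherPipeline": 300,
    "weather.pipelines.W2json": 400,
    "weather.pipelines.W2mysql": 500,  # assumption: changed to make the order explicit
}


def pipeline_order(pipelines):
    """Return the pipeline paths sorted by priority, lowest value first."""
    return [path for path, _ in sorted(pipelines.items(), key=lambda kv: kv[1])]


for path in pipeline_order(ITEM_PIPELINES):
    print(path)
```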

The three classes above demonstrate the three ways of storing the data.

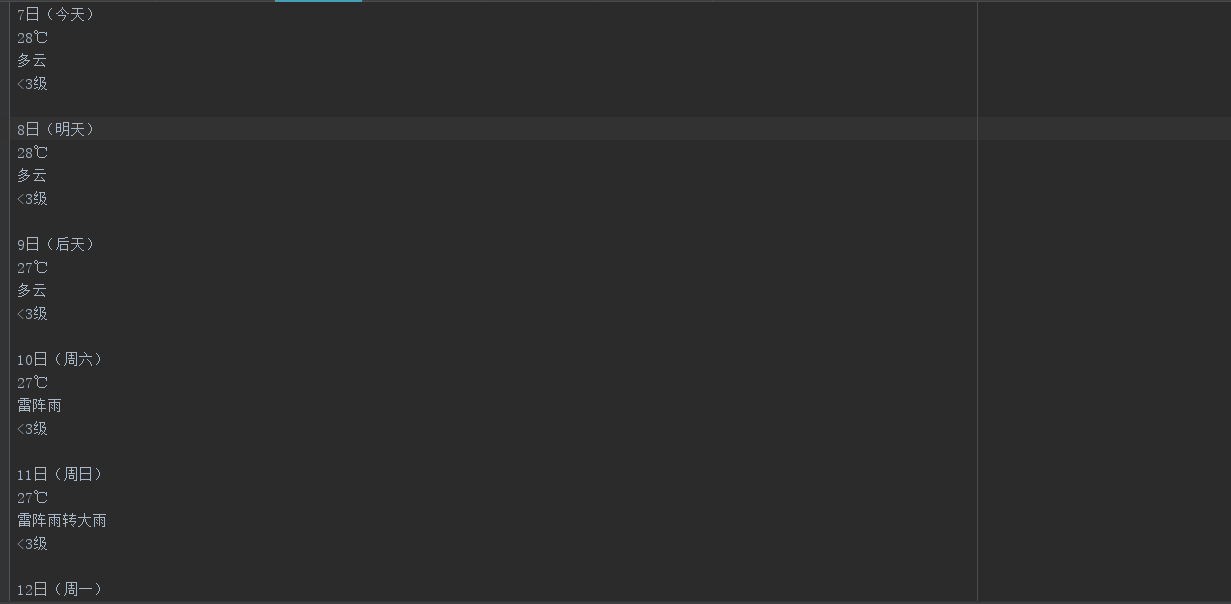

Finally, run scrapy crawl weather to produce all three outputs.

Last of all, write a scheduled crawl task:

# -*- coding: utf-8 -*-
# @Time : 2019/8/3 15:38
# @Author : wujf
# @Email : 1028540310@qq.com
# @File : 定时爬虫.py
# @Software: PyCharm

'''
Option 1: loop with sleep
'''
# import time
# import os
# while True:
#     os.system('scrapy crawl weather')
#     time.sleep(3)

# Option 2: start the crawl from Python through scrapy.cmdline
from scrapy import cmdline
import os

#retal = os.getcwd()  # get the current directory
#print(retal)
# Change directory first: scrapy crawl weather only works from inside the Scrapy project
os.chdir(r'E:\Python\weather\weather')
# Note: cmdline.execute runs a single crawl and then exits the process
cmdline.execute(['scrapy', 'crawl', 'weather'])
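Since cmdline.execute exits after one crawl, repeating the crawl on a timer needs a loop around a fresh process each time, much like option 1. Below is a minimal sketch using only the standard library. The function name run_periodically and its parameters are my own; runner and sleep are injectable purely so the loop can be tried without launching a real crawl.

```python
import subprocess
import time


def run_periodically(command, interval_seconds, max_runs=None, cwd=None,
                     runner=subprocess.run, sleep=time.sleep):
    """Run `command` in a new process every `interval_seconds` seconds.

    max_runs=None loops forever. Returns the list of exit codes when a
    finite max_runs is given.
    """
    exit_codes = []
    runs = 0
    while max_runs is None or runs < max_runs:
        result = runner(command, cwd=cwd)
        exit_codes.append(result.returncode)
        runs += 1
        # Only wait if another run is still to come
        if max_runs is None or runs < max_runs:
            sleep(interval_seconds)
    return exit_codes
```

A call such as run_periodically(['scrapy', 'crawl', 'weather'], 3600, cwd=r'E:\Python\weather\weather') would then crawl once an hour; the one-hour interval is just an example value. For anything long-running, the operating system's own scheduler (cron, Windows Task Scheduler) is worth considering instead.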

There is also middleware, but I don't have any proxy IPs on hand, so I can't try that out for now.
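For reference, a proxy middleware usually comes down to setting request.meta['proxy'], which Scrapy's built-in HttpProxyMiddleware then picks up. The following is an untested sketch only: the class name RandomProxyMiddleware is made up, and the addresses are documentation-range placeholders, not working proxies.

```python
import random


class RandomProxyMiddleware:
    """Hypothetical downloader middleware that picks a proxy per request."""

    # Placeholder addresses from a reserved documentation range.
    PROXIES = [
        "http://192.0.2.10:8080",
        "http://192.0.2.11:8080",
    ]

    def process_request(self, request, spider):
        # The built-in HttpProxyMiddleware reads this meta key.
        request.meta["proxy"] = random.choice(self.PROXIES)
        return None  # let Scrapy continue handling the request normally
```

To take effect it would have to be registered under DOWNLOADER_MIDDLEWARES in settings.py.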

OK, that's it!

龙卷风之殇