OpenStack (5): Installing and Configuring Nova on the Controller and Compute Nodes

Introduction to Nova

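Nova currently consists mainly of four components: API, Compute, Conductor, and Scheduler.

Compute: interacts with virtual machines and manages their life cycle.

Scheduler: selects the most suitable compute node from the pool of available nodes, according to various policies, to create a new virtual machine.

Conductor: provides a unified interface layer for database access.

The Compute service, Nova, is the core service of OpenStack. It maintains and manages the compute resources of the cloud environment.

OpenStack is an IaaS cloud operating system, and virtual machine life cycle management is implemented through Nova.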

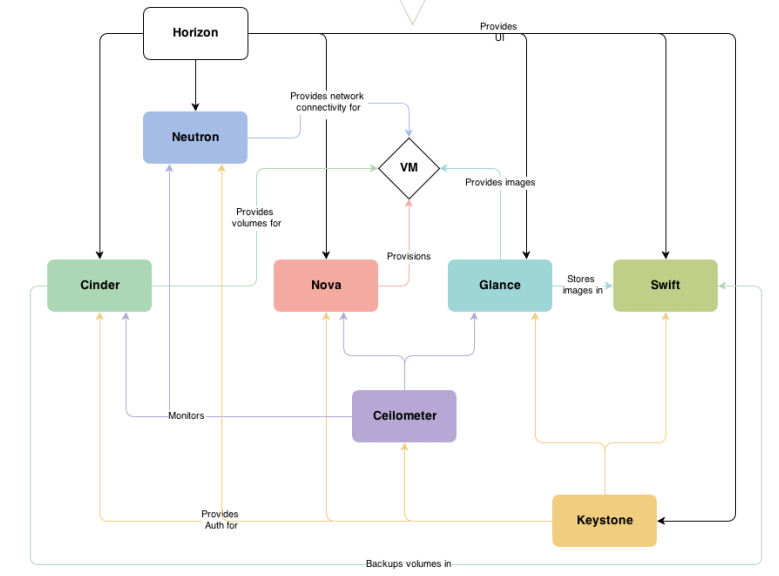

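As the diagram above shows, Nova sits at the center of the OpenStack architecture, and the other components all support it:

Glance provides images for VMs.

Cinder and Swift provide block storage and object storage for VMs, respectively.

Neutron provides network connectivity for VMs.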

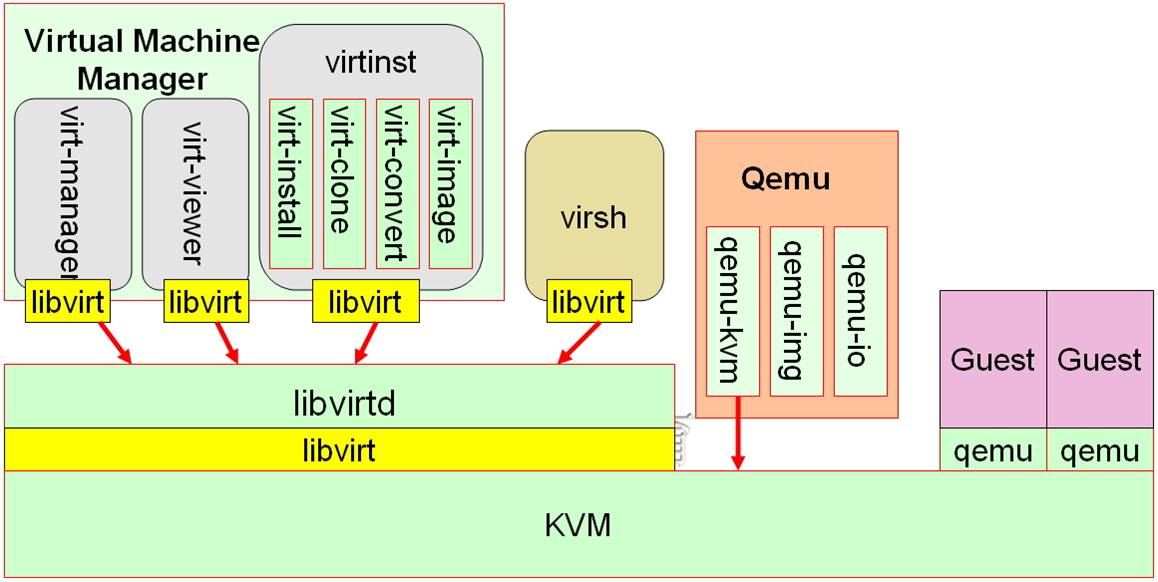

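Nova architecture

The Nova architecture is fairly complex and contains many components.

These components run as sub-services (background daemon processes) and fall into the following categories:

API

nova-api

The entry point through which other modules reach Nova over HTTP. The API is the only way to access Nova from outside.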

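Compute Core

nova-scheduler

The VM scheduling service. It decides which compute node a VM runs on.

To decide which physical node a VM should be scheduled to, the nova-scheduler module performs two steps:

1. Filter: first select the hosts that meet the requirements, for example those with enough free resources.

2. Weigh: compute a weight for each host that passed the filter.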
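The two steps above can be sketched as a small shell function. This is only an illustration under stated assumptions, not Nova's actual scheduler code: the input format (host name, free RAM in MB, compute service state), the host names, and the use of free RAM as the weight are all hypothetical.

```shell
# pick_host REQUIRED_MB
# Reads lines of "name free_ram_mb state" on stdin (hypothetical format).
# Step 1 (filter): keep hosts whose compute service is up and whose free
#                  RAM covers the request.
# Step 2 (weigh):  among the remaining hosts, prefer the one with the most
#                  free RAM.
pick_host() {
    awk -v need="$1" '
        $3 == "up" && $2 >= need {            # filter
            if (best == "" || $2 > max) {     # weigh by free RAM
                best = $1
                max = $2
            }
        }
        END {
            if (best == "") exit 1            # no valid host found
            print best
        }
    '
}

# Example with made-up hosts; node-b has the most RAM but its service is down.
printf 'node-a 2048 up\nnode-b 8192 down\nnode-c 4096 up\n' | pick_host 1024
```

A host with plenty of hardware but a failed compute service (node-b in the example) never reaches the weighing step, which is why free resources alone do not make a host eligible.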

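nova-compute

The core service for managing VMs. It implements VM life cycle management by calling the Hypervisor API.

Hypervisor

The virtualization manager running on the compute node, the lowest-level program involved in VM management. Each virtualization technology provides its own hypervisor. Commonly used hypervisors include KVM, Xen, and VMware.

nova-conductor

nova-compute frequently needs to update the database, for example to update a VM's state. For security and scalability reasons, nova-compute does not access the database directly. (In early versions, compute nodes could access the database directly, so once a compute node was compromised the entire database was at risk.)

Instead, it delegates this task to nova-conductor, which is discussed in detail later.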

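Console Interface

nova-console

Users can access a VM's console in several ways:

nova-novncproxy: VNC access through a web browser

nova-spicehtml5proxy: SPICE access through an HTML5 browser

nova-xvpvncproxy: VNC access through a Java client

nova-consoleauth

Provides token authentication for requests to access a VM's console.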

nova-cert

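Database

Nova needs to store some of its data in a database, usually MySQL. Nova uses two databases, named "nova" and "nova_api".

A host having enough hardware resources does not by itself mean that it satisfies nova-scheduler.

Scenarios in which a host may be treated as having no resources:

1. A network failure: the host's network has a problem.

2. nova-compute on the host has failed.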

Installing and configuring Nova on the controller node

1. Install the packages on the controller node

    [root@linux-node1 ~]# yum install openstack-nova-api openstack-nova-conductor openstack-nova-console openstack-nova-novncproxy openstack-nova-scheduler -y
    Loaded plugins: fastestmirror
    Loading mirror speeds from cached hostfile
     * base: mirrors.163.com
     * epel: mirror.premi.st
     * extras: mirrors.163.com
     * updates: mirrors.163.com
    Package 1:openstack-nova-api-13.1.2-1.el7.noarch already installed and latest version
    Package 1:openstack-nova-conductor-13.1.2-1.el7.noarch already installed and latest version
    Package 1:openstack-nova-console-13.1.2-1.el7.noarch already installed and latest version
    Package 1:openstack-nova-novncproxy-13.1.2-1.el7.noarch already installed and latest version
    Package 1:openstack-nova-scheduler-13.1.2-1.el7.noarch already installed and latest version
    Nothing to do
    [root@linux-node1 ~]#

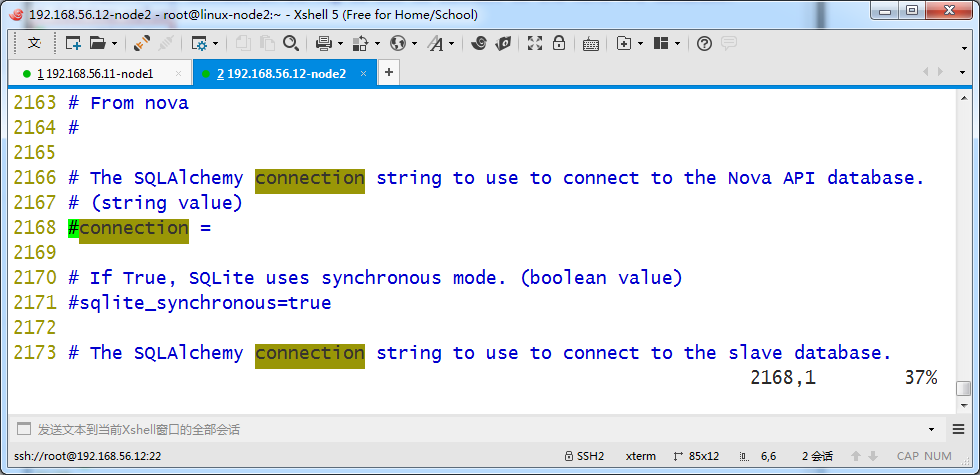

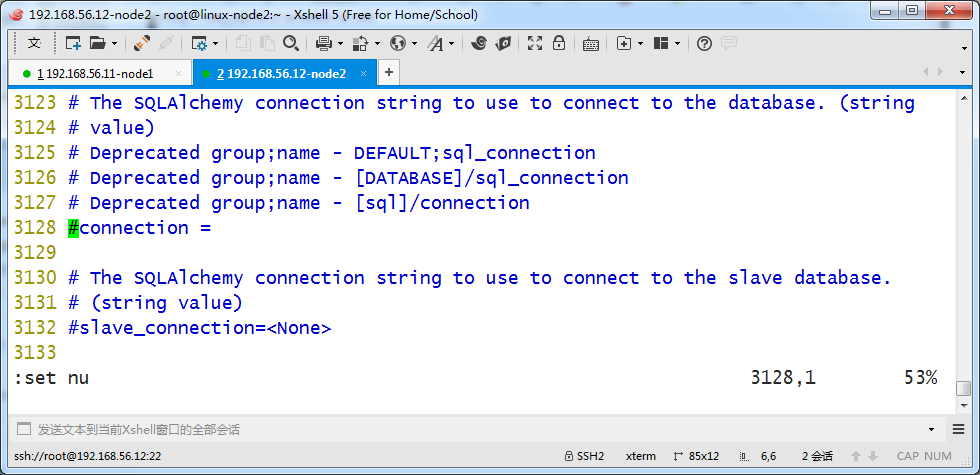

2. Configuration: database connections

Edit /etc/nova/nova.conf.

In the [api_database] and [database] sections, configure the database connections:

    [api_database]
    ...
    connection = mysql+pymysql://nova:NOVA_DBPASS@controller/nova_api

    [database]
    ...
    connection = mysql+pymysql://nova:NOVA_DBPASS@controller/nova

The result after the change:

    [root@linux-node1 ~]# grep -n '^[a-Z]' /etc/nova/nova.conf
    2168:connection = mysql+pymysql://nova:nova@192.168.56.11/nova_api
    3128:connection = mysql+pymysql://nova:nova@192.168.56.11/nova
    [root@linux-node1 ~]#
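Line numbers alone do not show which section an option belongs to, and the `[a-Z]` range used in the grep command depends on the locale's collation rules (in some locales grep rejects it as an invalid range). A minimal alternative sketch, assuming a plain INI-style file; the function name `active_options` is made up for this example:

```shell
# active_options FILE
# Prints every uncommented option line, prefixed with its section header,
# so that two "connection" lines can be told apart without line numbers.
active_options() {
    awk '
        /^\[/        { section = $0; next }       # remember the current section
        /^[A-Za-z]/  { print section " " $0 }     # active (uncommented) options
    ' "$1"
}

# Usage (not run here):
#   active_options /etc/nova/nova.conf
```

With the configuration above, this would be expected to print one line starting with `[api_database]` and one starting with `[database]`.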

Sync the schema into the MySQL databases. Warnings printed during this step can be ignored.

    [root@linux-node1 ~]# su -s /bin/sh -c "nova-manage api_db sync" nova
    [root@linux-node1 ~]# su -s /bin/sh -c "nova-manage db sync" nova
    [root@linux-node1 ~]#

    [root@linux-node1 ~]# mysql -h192.168.56.11 -unova -pnova -e "use nova;show tables;"
    +--------------------------------------------+
    | Tables_in_nova                             |
    +--------------------------------------------+
    | agent_builds                               |
    | aggregate_hosts                            |
    | aggregate_metadata                         |
    | aggregates                                 |
    | allocations                                |
    | block_device_mapping                       |
    | bw_usage_cache                             |
    | cells                                      |
    | certificates                               |
    | compute_nodes                              |
    | console_pools                              |
    | consoles                                   |
    | dns_domains                                |
    | fixed_ips                                  |
    | floating_ips                               |
    | instance_actions                           |
    | instance_actions_events                    |
    | instance_extra                             |
    | instance_faults                            |
    | instance_group_member                      |
    | instance_group_policy                      |
    | instance_groups                            |
    | instance_id_mappings                       |
    | instance_info_caches                       |
    | instance_metadata                          |
    | instance_system_metadata                   |
    | instance_type_extra_specs                  |
    | instance_type_projects                     |
    | instance_types                             |
    | instances                                  |
    | inventories                                |
    | key_pairs                                  |
    | migrate_version                            |
    | migrations                                 |
    | networks                                   |
    | pci_devices                                |
    | project_user_quotas                        |
    | provider_fw_rules                          |
    | quota_classes                              |
    | quota_usages                               |
    | quotas                                     |
    | reservations                               |
    | resource_provider_aggregates               |
    | resource_providers                         |
    | s3_images                                  |
    | security_group_default_rules               |
    | security_group_instance_association        |
    | security_group_rules                       |
    | security_groups                            |
    | services                                   |
    | shadow_agent_builds                        |
    | shadow_aggregate_hosts                     |
    | shadow_aggregate_metadata                  |
    | shadow_aggregates                          |
    | shadow_block_device_mapping                |
    | shadow_bw_usage_cache                      |
    | shadow_cells                               |
    | shadow_certificates                        |
    | shadow_compute_nodes                       |
    | shadow_console_pools                       |
    | shadow_consoles                            |
    | shadow_dns_domains                         |
    | shadow_fixed_ips                           |
    | shadow_floating_ips                        |
    | shadow_instance_actions                    |
    | shadow_instance_actions_events             |
    | shadow_instance_extra                      |
    | shadow_instance_faults                     |
    | shadow_instance_group_member               |
    | shadow_instance_group_policy               |
    | shadow_instance_groups                     |
    | shadow_instance_id_mappings                |
    | shadow_instance_info_caches                |
    | shadow_instance_metadata                   |
    | shadow_instance_system_metadata            |
    | shadow_instance_type_extra_specs           |
    | shadow_instance_type_projects              |
    | shadow_instance_types                      |
    | shadow_instances                           |
    | shadow_key_pairs                           |
    | shadow_migrate_version                     |
    | shadow_migrations                          |
    | shadow_networks                            |
    | shadow_pci_devices                         |
    | shadow_project_user_quotas                 |
    | shadow_provider_fw_rules                   |
    | shadow_quota_classes                       |
    | shadow_quota_usages                        |
    | shadow_quotas                              |
    | shadow_reservations                        |
    | shadow_s3_images                           |
    | shadow_security_group_default_rules        |
    | shadow_security_group_instance_association |
    | shadow_security_group_rules                |
    | shadow_security_groups                     |
    | shadow_services                            |
    | shadow_snapshot_id_mappings                |
    | shadow_snapshots                           |
    | shadow_task_log                            |
    | shadow_virtual_interfaces                  |
    | shadow_volume_id_mappings                  |
    | shadow_volume_usage_cache                  |
    | snapshot_id_mappings                       |
    | snapshots                                  |
    | tags                                       |
    | task_log                                   |
    | virtual_interfaces                         |
    | volume_id_mappings                         |
    | volume_usage_cache                         |
    +--------------------------------------------+
    [root@linux-node1 ~]# mysql -h192.168.56.11 -unova -pnova -e "use nova_api;show tables;"
    +--------------------+
    | Tables_in_nova_api |
    +--------------------+
    | build_requests     |
    | cell_mappings      |
    | flavor_extra_specs |
    | flavor_projects    |
    | flavors            |
    | host_mappings      |
    | instance_mappings  |
    | migrate_version    |
    | request_specs      |
    +--------------------+
    [root@linux-node1 ~]#

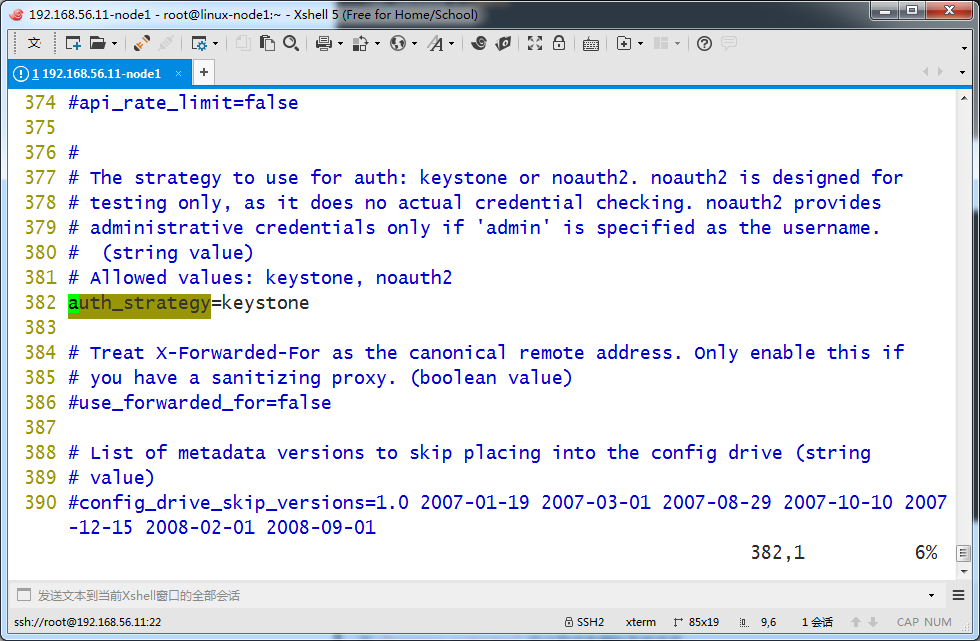

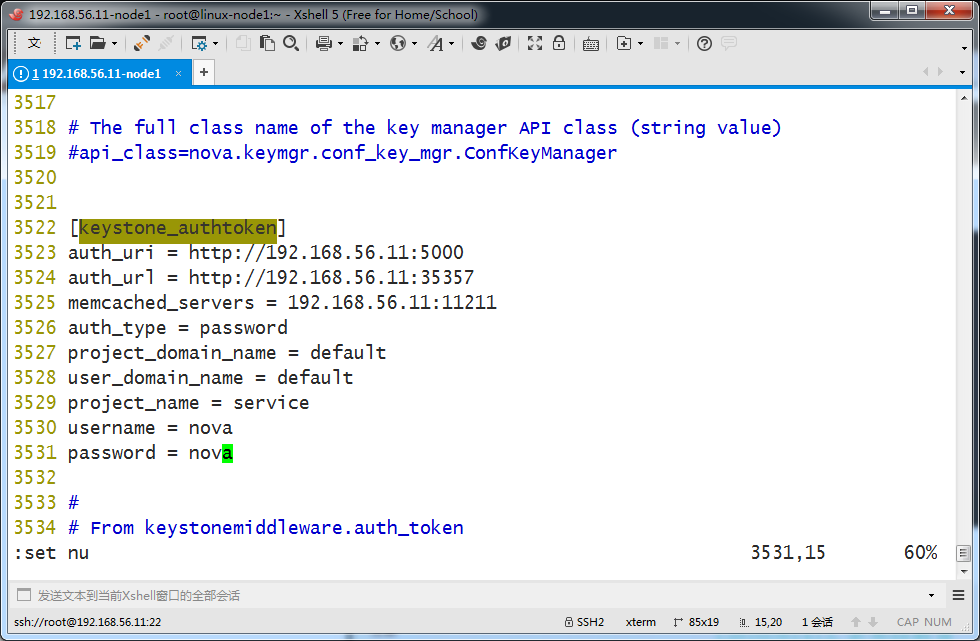

3. Configuration: Keystone

In the [DEFAULT] and [keystone_authtoken] sections, configure access to the Identity service:

    [DEFAULT]
    ...
    auth_strategy = keystone

    [keystone_authtoken]
    ...
    auth_uri = http://192.168.56.11:5000
    auth_url = http://192.168.56.11:35357
    memcached_servers = 192.168.56.11:11211
    auth_type = password
    project_domain_name = default
    user_domain_name = default
    project_name = service
    username = nova
    password = nova
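Because nova.conf is thousands of lines long, it can help to read a single option back from a specific section after editing. The following is a minimal sketch, assuming a simple `key = value` INI layout; the helper name `ini_get` is hypothetical and not part of any OpenStack tool:

```shell
# ini_get FILE SECTION KEY
# Prints the value of KEY inside [SECTION]; returns non-zero if it is
# missing or commented out.
ini_get() {
    awk -v want="[$2]" -v key="$3" '
        /^\[/ { in_section = ($0 == want); next }   # track the current section
        in_section {
            eq = index($0, "=")
            if (eq == 0) next
            k = substr($0, 1, eq - 1)
            v = substr($0, eq + 1)
            gsub(/^[ \t]+|[ \t]+$/, "", k)           # trim spaces around the key
            gsub(/^[ \t]+|[ \t]+$/, "", v)           # trim spaces around the value
            if (k == key) { print v; found = 1; exit }
        }
        END { exit !found }
    ' "$1"
}

# Usage (not run here):
#   ini_get /etc/nova/nova.conf keystone_authtoken auth_uri
#   ini_get /etc/nova/nova.conf DEFAULT auth_strategy
```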

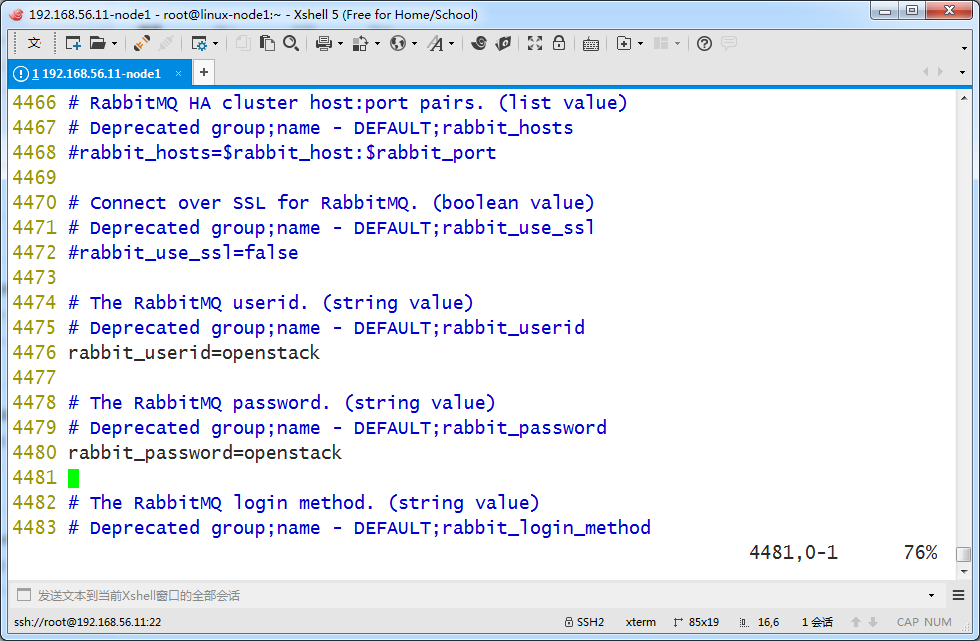

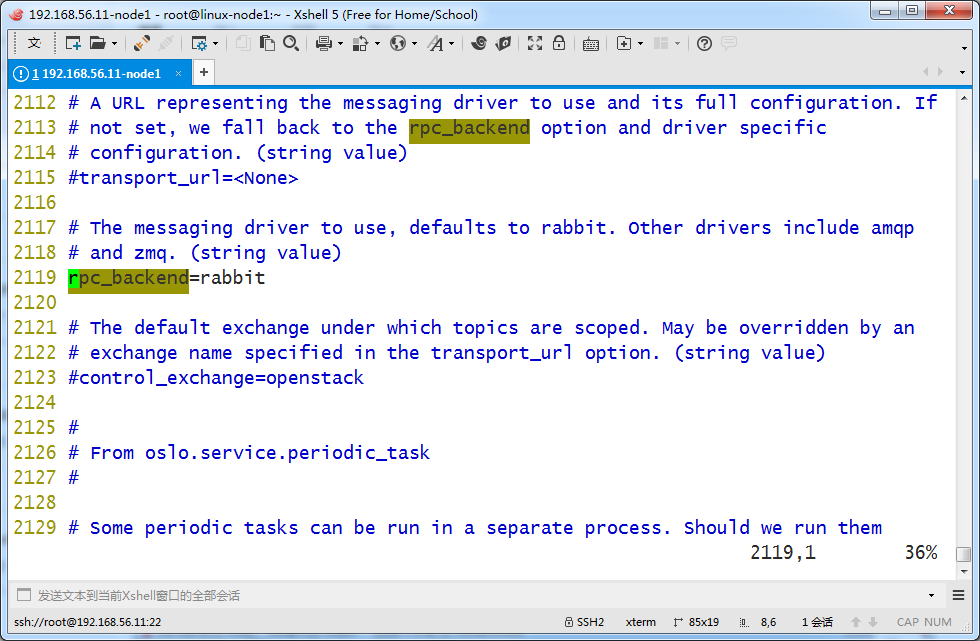

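4. Configuration: RabbitMQ

Configure the RabbitMQ settings. Nova's components use the message queue to communicate with each other.

In the [DEFAULT] and [oslo_messaging_rabbit] sections, configure access to the RabbitMQ message queue:

    [DEFAULT]
    ...
    rpc_backend = rabbit

    [oslo_messaging_rabbit]
    ...
    rabbit_host = 192.168.56.11
    rabbit_userid = openstack
    rabbit_password = openstack

You can also uncomment the port option (rabbit_port) just below these settings.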

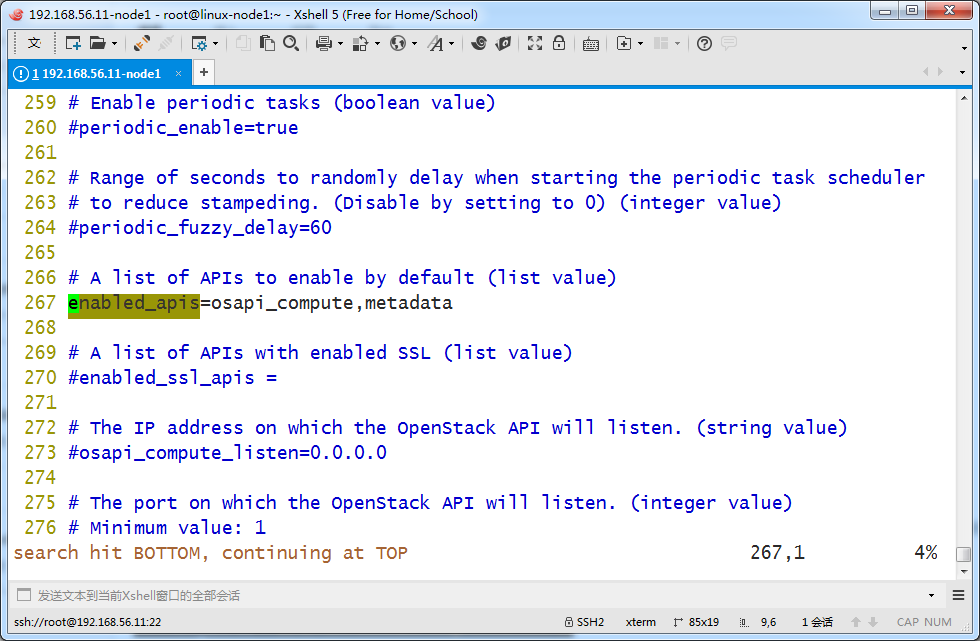

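5. Configuration: Nova's own functional modules

In the [DEFAULT] section, enable only the compute and metadata APIs:

    [DEFAULT]
    ...
    enabled_apis = osapi_compute,metadata

The following [DEFAULT] setting is not configured in this setup (the original post marks it with strikethrough). It is listed only for reference:

    [DEFAULT]
    ...
    my_ip = 10.0.0.11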

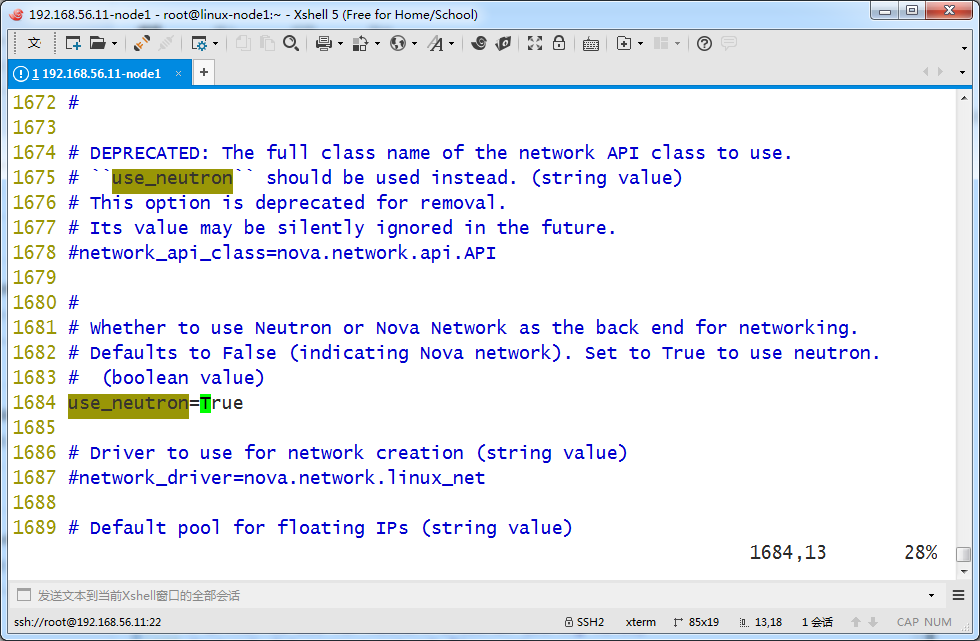

In the [DEFAULT] section, enable support for the Networking service:

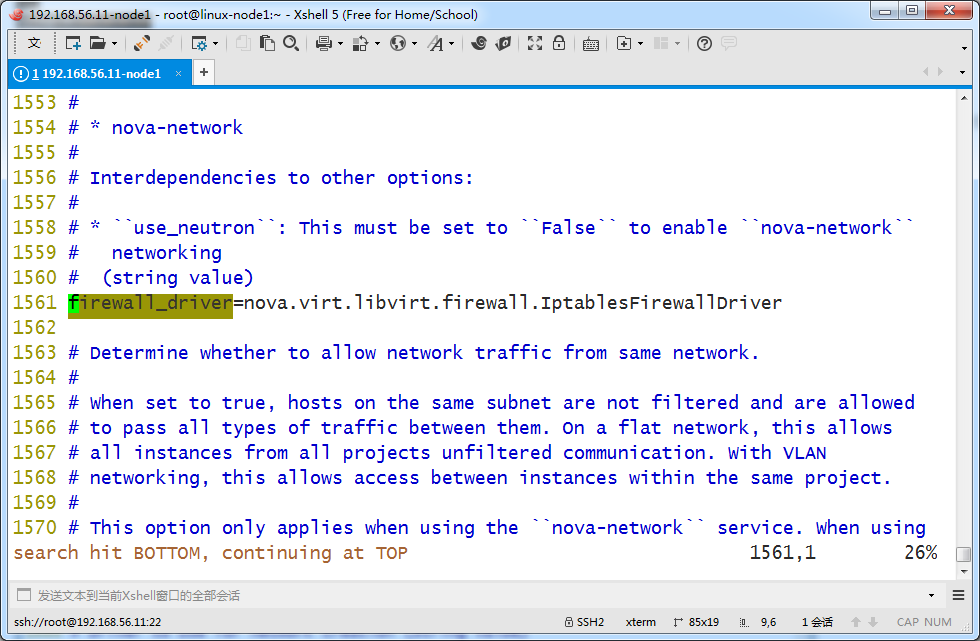

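By default, the Compute service uses its own built-in firewall service. Because the Networking service includes a firewall service, you must disable the Compute firewall by using the nova.virt.firewall.NoopFirewallDriver driver:

    [DEFAULT]
    ...
    use_neutron = True
    firewall_driver = nova.virt.firewall.NoopFirewallDriver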

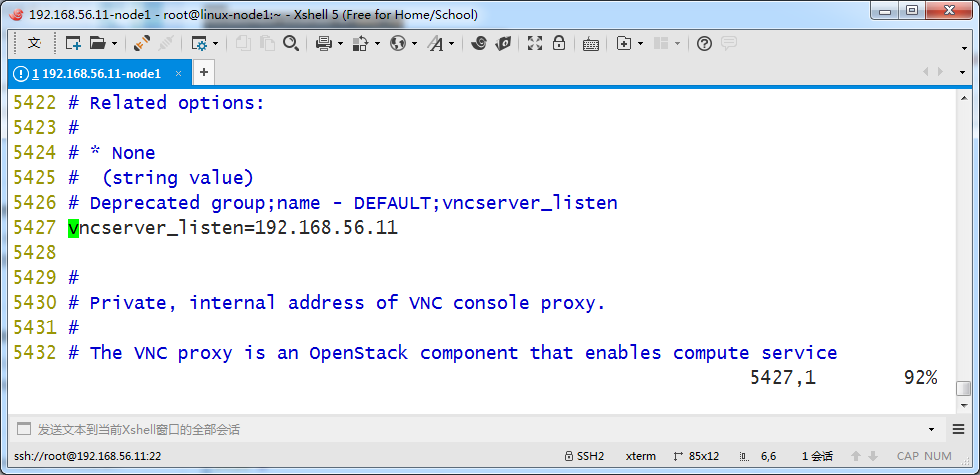

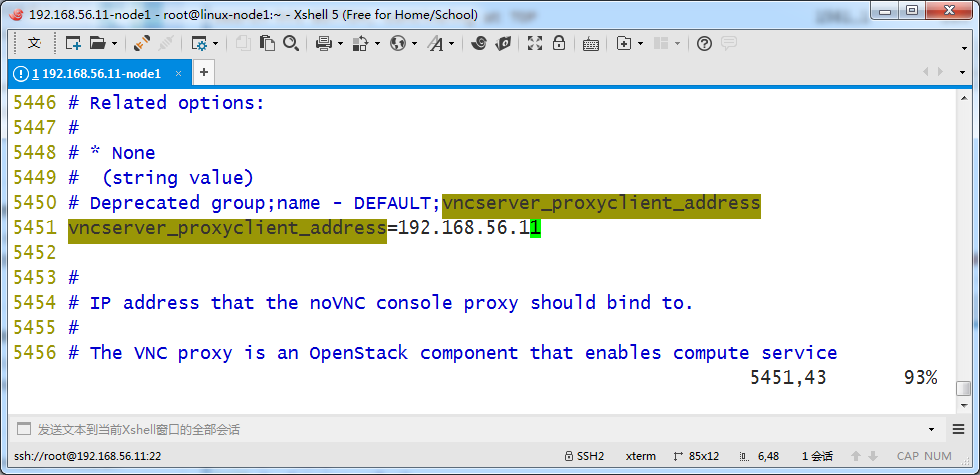

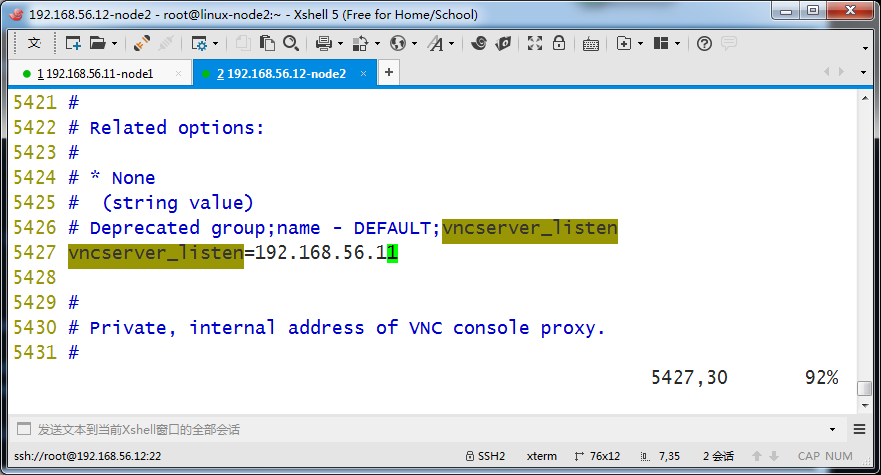

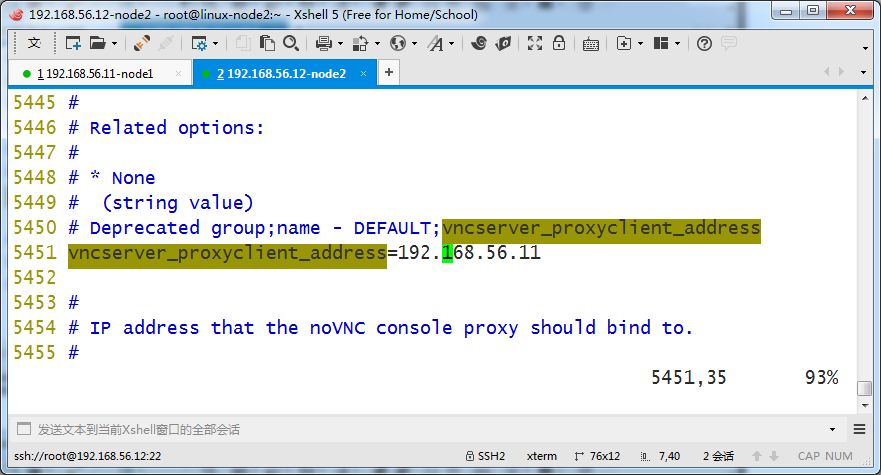

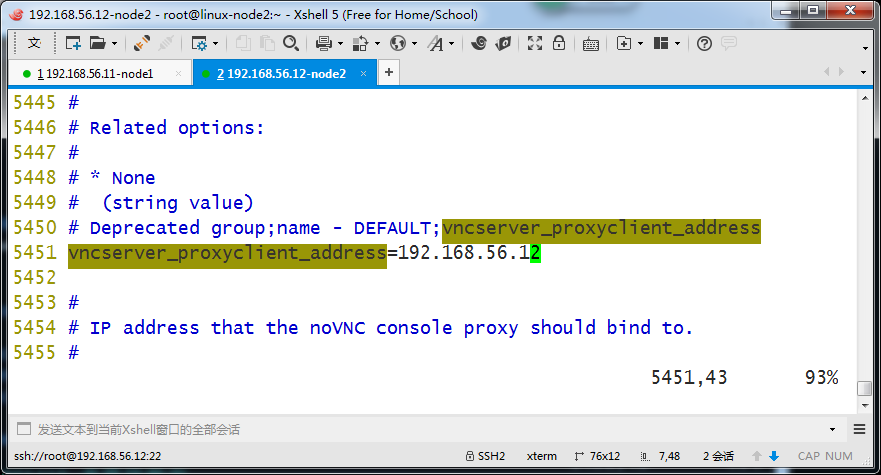

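In the [vnc] section, configure the VNC proxy to use the management interface IP address of the controller node (the value was originally $my_ip):

    [vnc]
    ...
    vncserver_listen = 192.168.56.11
    vncserver_proxyclient_address = 192.168.56.11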

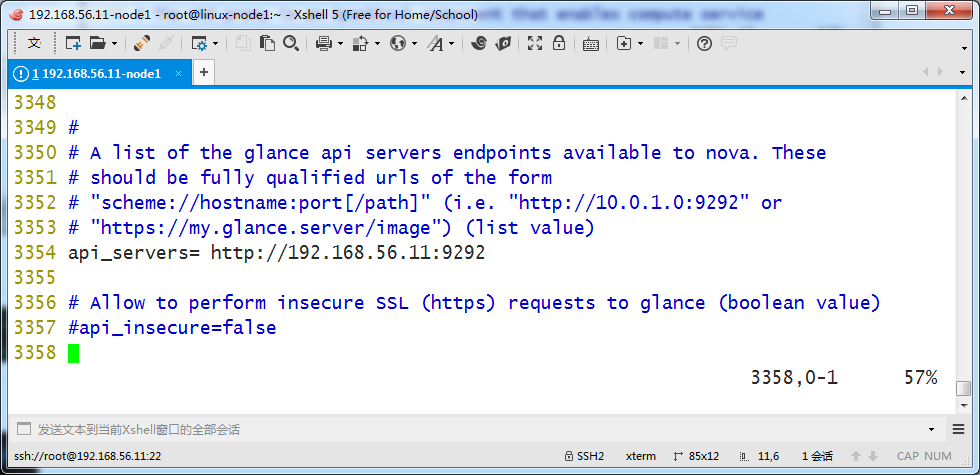

In the [glance] section, configure the location of the Image service API:

    [glance]
    ...
    api_servers = http://192.168.56.11:9292

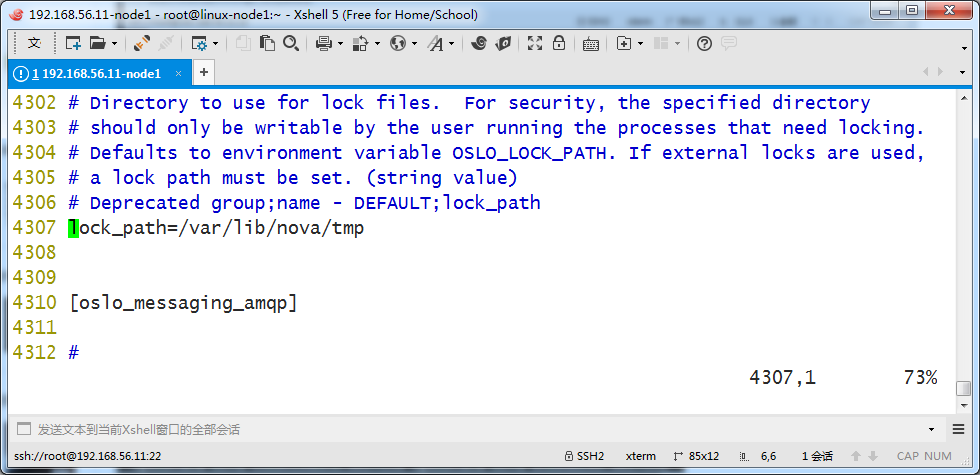

In the [oslo_concurrency] section, configure the lock path:

    [oslo_concurrency]
    ...
    lock_path = /var/lib/nova/tmp

    [root@linux-node1 ~]# grep -n '^[a-Z]' /etc/nova/nova.conf
    267:enabled_apis=osapi_compute,metadata
    382:auth_strategy=keystone
    1561:firewall_driver=nova.virt.libvirt.firewall.IptablesFirewallDriver
    1684:use_neutron=True
    2119:rpc_backend=rabbit
    2168:connection = mysql+pymysql://nova:nova@192.168.56.11/nova_api
    3128:connection = mysql+pymysql://nova:nova@192.168.56.11/nova
    3354:api_servers= http://192.168.56.11:9292
    3523:auth_uri = http://192.168.56.11:5000
    3524:auth_url = http://192.168.56.11:35357
    3525:memcached_servers = 192.168.56.11:11211
    3526:auth_type = password
    3527:project_domain_name = default
    3528:user_domain_name = default
    3529:project_name = service
    3530:username = nova
    3531:password = nova
    4307:lock_path=/var/lib/nova/tmp
    4458:rabbit_host=192.168.56.11
    4464:rabbit_port=5672
    4476:rabbit_userid=openstack
    4480:rabbit_password=openstack
    5427:vncserver_listen=192.168.56.11
    5451:vncserver_proxyclient_address=192.168.56.11
    [root@linux-node1 ~]#

7. Start the Nova services

Start the Nova services and configure them to start when the system boots.

The commands are as follows:

    systemctl enable openstack-nova-api.service \
      openstack-nova-consoleauth.service openstack-nova-scheduler.service \
      openstack-nova-conductor.service openstack-nova-novncproxy.service
    systemctl start openstack-nova-api.service \
      openstack-nova-consoleauth.service openstack-nova-scheduler.service \
      openstack-nova-conductor.service openstack-nova-novncproxy.service
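The same five unit names appear in both commands, so the list can be generated once and reused. A small sketch, assuming the `openstack-nova-<name>.service` naming shown above; the helper name `nova_units` is made up for this example:

```shell
# nova_units NAME...
# Expands short component names into the systemd unit names used above.
nova_units() {
    for name in "$@"; do
        printf 'openstack-nova-%s.service\n' "$name"
    done
}

# Usage on the controller node (not run here):
#   units=$(nova_units api consoleauth scheduler conductor novncproxy)
#   systemctl enable $units
#   systemctl start $units
#   systemctl is-active $units
```

Leaving `$units` unquoted is deliberate here so that the shell splits it into separate arguments.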

The execution looks like this:

    [root@linux-node1 ~]# systemctl enable openstack-nova-api.service \
    > openstack-nova-consoleauth.service openstack-nova-scheduler.service \
    > openstack-nova-conductor.service openstack-nova-novncproxy.service
    Created symlink from /etc/systemd/system/multi-user.target.wants/openstack-nova-api.service to /usr/lib/systemd/system/openstack-nova-api.service.
    Created symlink from /etc/systemd/system/multi-user.target.wants/openstack-nova-consoleauth.service to /usr/lib/systemd/system/openstack-nova-consoleauth.service.
    Created symlink from /etc/systemd/system/multi-user.target.wants/openstack-nova-scheduler.service to /usr/lib/systemd/system/openstack-nova-scheduler.service.
    Created symlink from /etc/systemd/system/multi-user.target.wants/openstack-nova-conductor.service to /usr/lib/systemd/system/openstack-nova-conductor.service.
    Created symlink from /etc/systemd/system/multi-user.target.wants/openstack-nova-novncproxy.service to /usr/lib/systemd/system/openstack-nova-novncproxy.service.
    [root@linux-node1 ~]# systemctl start openstack-nova-api.service \
    > openstack-nova-consoleauth.service openstack-nova-scheduler.service \
    > openstack-nova-conductor.service openstack-nova-novncproxy.service
    [root@linux-node1 ~]#

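The following steps were already completed earlier, so they are not repeated here (the original post marks them with strikethrough).

1. Source the admin credentials to gain access to admin-only commands:

    source admin-openrc

2. Create the service credentials. First create the nova user:

    openstack user create --domain default --password-prompt nova

3. Add the admin role to the nova user:

    openstack role add --project service --user nova admin

Create the nova service entity:

    openstack service create --name nova --description "OpenStack Compute" compute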

The execution looks like this:

    [root@linux-node1 ~]# source admin-openstack.sh
    [root@linux-node1 ~]# openstack service create --name nova --description "OpenStack Compute" compute
    +-------------+----------------------------------+
    | Field       | Value                            |
    +-------------+----------------------------------+
    | description | OpenStack Compute                |
    | enabled     | True                             |
    | id          | e1e90d1948fb4384a8d2b09edb2c0cf6 |
    | name        | nova                             |
    | type        | compute                          |
    +-------------+----------------------------------+
    [root@linux-node1 ~]#

Create the public endpoint:

    openstack endpoint create --region RegionOne compute public http://192.168.56.11:8774/v2.1/%\(tenant_id\)s

The execution looks like this:

    [root@linux-node1 ~]# openstack endpoint create --region RegionOne compute public http://192.168.56.11:8774/v2.1/%\(tenant_id\)s
    +--------------+----------------------------------------------+
    | Field        | Value                                        |
    +--------------+----------------------------------------------+
    | enabled      | True                                         |
    | id           | 7017bf5b4990451296c6b51aff13e6f4             |
    | interface    | public                                       |
    | region       | RegionOne                                    |
    | region_id    | RegionOne                                    |
    | service_id   | e1e90d1948fb4384a8d2b09edb2c0cf6             |
    | service_name | nova                                         |
    | service_type | compute                                      |
    | url          | http://192.168.56.11:8774/v2.1/%(tenant_id)s |
    +--------------+----------------------------------------------+
    [root@linux-node1 ~]#

Create the internal endpoint

The command is as follows:

    openstack endpoint create --region RegionOne compute internal http://192.168.56.11:8774/v2.1/%\(tenant_id\)s

The execution looks like this:

    [root@linux-node1 ~]# openstack endpoint create --region RegionOne compute internal http://192.168.56.11:8774/v2.1/%\(tenant_id\)s
    +--------------+----------------------------------------------+
    | Field        | Value                                        |
    +--------------+----------------------------------------------+
    | enabled      | True                                         |
    | id           | b3325481dd704aeb94c02c48b97e0991             |
    | interface    | internal                                     |
    | region       | RegionOne                                    |
    | region_id    | RegionOne                                    |
    | service_id   | e1e90d1948fb4384a8d2b09edb2c0cf6             |
    | service_name | nova                                         |
    | service_type | compute                                      |
    | url          | http://192.168.56.11:8774/v2.1/%(tenant_id)s |
    +--------------+----------------------------------------------+
    [root@linux-node1 ~]#

Create the admin endpoint

The command is as follows:

    openstack endpoint create --region RegionOne compute admin http://192.168.56.11:8774/v2.1/%\(tenant_id\)s

The execution looks like this:

    [root@linux-node1 ~]# openstack endpoint create --region RegionOne compute admin http://192.168.56.11:8774/v2.1/%\(tenant_id\)s
    +--------------+----------------------------------------------+
    | Field        | Value                                        |
    +--------------+----------------------------------------------+
    | enabled      | True                                         |
    | id           | 8ce0f94f658141ce94aa339b43c48eea             |
    | interface    | admin                                        |
    | region       | RegionOne                                    |
    | region_id    | RegionOne                                    |
    | service_id   | e1e90d1948fb4384a8d2b09edb2c0cf6             |
    | service_name | nova                                         |
    | service_type | compute                                      |
    | url          | http://192.168.56.11:8774/v2.1/%(tenant_id)s |
    +--------------+----------------------------------------------+
    [root@linux-node1 ~]#
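The three endpoint commands differ only in the interface name, so they can be generated in a loop. The sketch below only prints the commands (a dry run) so they can be reviewed first; the function name `endpoint_commands` is hypothetical, while the region, port, and URL pattern are taken from the commands above:

```shell
# endpoint_commands HOST
# Prints one "openstack endpoint create" command per interface.
# The URL is single-quoted so the parentheses need no backslash escaping.
endpoint_commands() {
    for iface in public internal admin; do
        printf "openstack endpoint create --region RegionOne compute %s '%s'\n" \
            "$iface" "http://$1:8774/v2.1/%(tenant_id)s"
    done
}

# Review the generated commands:
endpoint_commands 192.168.56.11

# To actually run them after sourcing the admin credentials (not run here):
#   endpoint_commands 192.168.56.11 | sh
```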

Check the result:

    [root@linux-node1 ~]# openstack host list
    +----------------------+-------------+----------+
    | Host Name            | Service     | Zone     |
    +----------------------+-------------+----------+
    | linux-node1.nmap.com | scheduler   | internal |
    | linux-node1.nmap.com | consoleauth | internal |
    | linux-node1.nmap.com | conductor   | internal |
    +----------------------+-------------+----------+
    [root@linux-node1 ~]#

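Installing and configuring Nova on the compute node

nova-compute manages KVM through libvirt. The compute node is where the virtual machines actually run.

VT-x must be enabled on the compute node machine.

Before working on the compute node, synchronize its clock:

    [root@linux-node2 ~]# ntpdate time1.aliyun.com
    18 Feb 12:53:43 ntpdate[3184]: adjust time server 115.28.122.198 offset -0.005554 sec
    [root@linux-node2 ~]# date
    Sat Feb 18 12:53:45 CST 2017
    [root@linux-node2 ~]#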

1. Install the package

    [root@linux-node2 ~]# yum install openstack-nova-compute -y
    Loaded plugins: fastestmirror
    Loading mirror speeds from cached hostfile
     * base: mirrors.aliyun.com
     * epel: mirrors.tuna.tsinghua.edu.cn
     * extras: mirrors.163.com
     * updates: mirrors.aliyun.com
    Package 1:openstack-nova-compute-13.1.2-1.el7.noarch already installed and latest version
    Nothing to do
    [root@linux-node2 ~]#

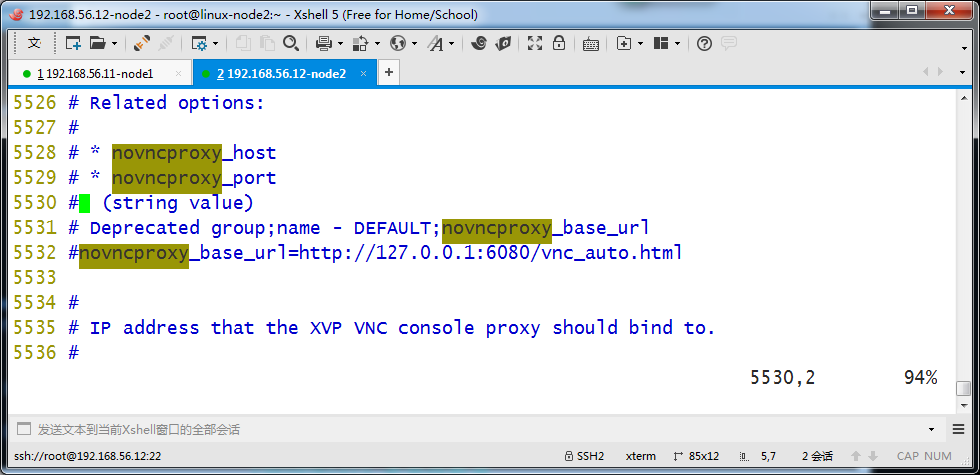

About novncproxy

novncproxy listens on port 6080. Log in to the controller node to check:

    [root@linux-node1 ~]# netstat -lntp | grep 6080
    tcp        0      0 0.0.0.0:6080            0.0.0.0:*               LISTEN      13967/python2
    [root@linux-node1 ~]#
    [root@linux-node1 ~]# ps aux | grep 13967
    nova      13967  0.0  1.6 379496 66716 ?        Ss   11:24   0:06 /usr/bin/python2 /usr/bin/nova-novncproxy --web /usr/share/novnc/
    root      16309  0.0  0.0 112644   968 pts/0    S+   13:07   0:00 grep --colour=auto 13967
    [root@linux-node1 ~]#

2. Modify the compute node configuration file

The compute node's configuration is almost identical to the controller node's, so you can copy the controller's configuration file over and then change a few places:

1. The compute node does not configure a database connection. (Leaving the database settings in the copied file still works, but it is not good practice.)

2. The compute node has one extra line in its VNC configuration.

    [root@linux-node1 ~]# ls /etc/nova/ -l
    total 224
    -rw-r----- 1 root nova   3673 Oct 10 21:20 api-paste.ini
    -rw-r----- 1 root nova 184346 Feb 18 11:20 nova.conf
    -rw-r----- 1 root nova  27914 Oct 10 21:20 policy.json
    -rw-r--r-- 1 root root     72 Oct 13 20:01 release
    -rw-r----- 1 root nova    966 Oct 10 21:20 rootwrap.conf
    [root@linux-node1 ~]#

    [root@linux-node1 ~]# rsync -avz /etc/nova/nova.conf root@192.168.56.12:/etc/nova/
    root@192.168.56.12's password:
    sending incremental file list

    sent 41 bytes  received 12 bytes  15.14 bytes/sec
    total size is 184346  speedup is 3478.23
    [root@linux-node1 ~]#

    [root@linux-node2 ~]# ll /etc/nova/
    total 224
    -rw-r----- 1 root nova   3673 Oct 10 21:20 api-paste.ini
    -rw-r----- 1 root nova 184346 Feb 18 11:20 nova.conf
    -rw-r----- 1 root nova  27914 Oct 10 21:20 policy.json
    -rw-r--r-- 1 root root     72 Oct 13 20:01 release
    -rw-r----- 1 root nova    966 Oct 10 21:20 rootwrap.conf
    [root@linux-node2 ~]#
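Removing the database settings from the copied file (point 1 above) can be done by hand in an editor. As an alternative, here is a minimal awk-based sketch; the function name `strip_db_connections` is hypothetical, and it assumes the only database options set are the `connection` lines in the [database] and [api_database] sections:

```shell
# strip_db_connections FILE
# Prints FILE with the active "connection" options removed from the
# [database] and [api_database] sections; everything else is unchanged.
strip_db_connections() {
    awk '
        /^\[/ { in_db = ($0 == "[database]" || $0 == "[api_database]") }
        in_db && /^connection[ \t]*=/ { next }    # drop the connection option
        { print }
    ' "$1"
}

# Usage on the compute node (not run here); review the result before
# replacing the original file:
#   strip_db_connections /etc/nova/nova.conf > /tmp/nova.conf.new
#   diff /etc/nova/nova.conf /tmp/nova.conf.new
```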

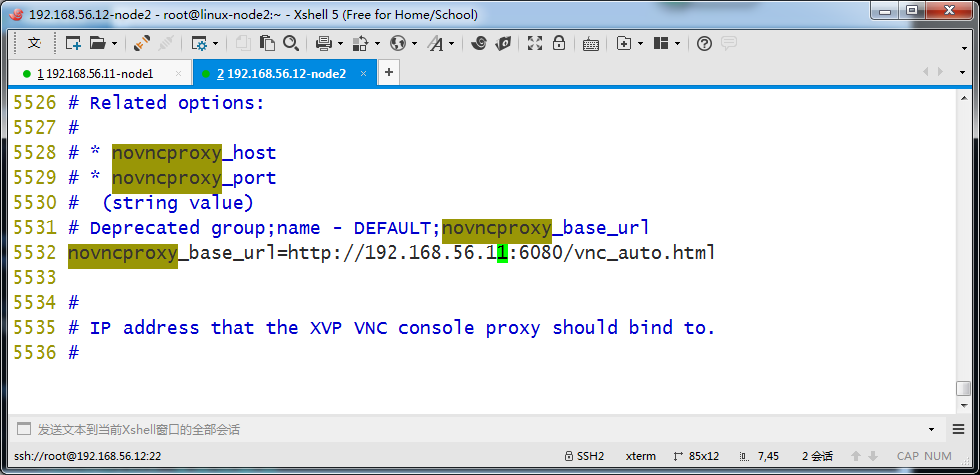

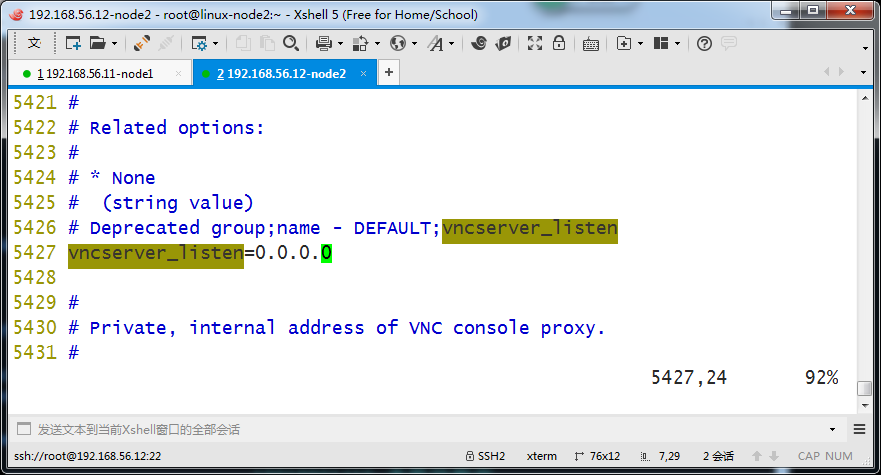

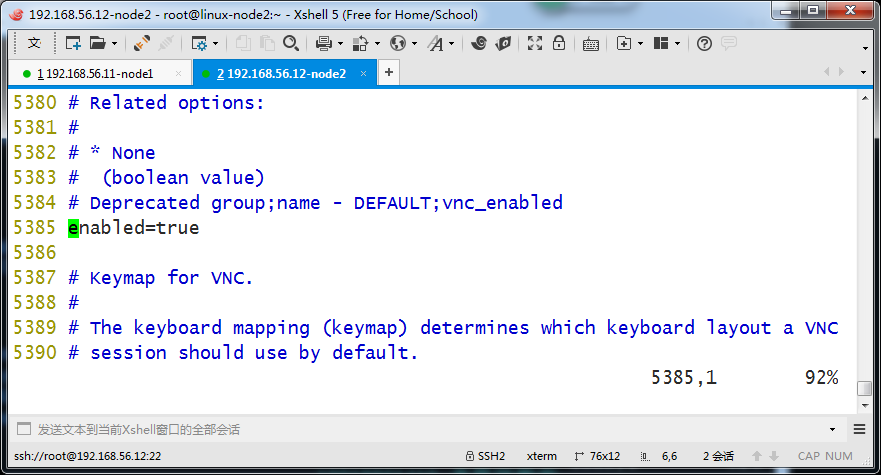

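In the [vnc] section, enable and configure remote console access:

    [vnc]
    ...
    enabled = True
    vncserver_listen = 0.0.0.0
    vncserver_proxyclient_address = $my_ip
    novncproxy_base_url = http://controller:6080/vnc_auto.html

The server component listens on all IP addresses, while the proxy component listens only on the IP address of the compute node's management network interface.

The base URL is the location where you can use a web browser to access the remote consoles of instances on this compute node.

In this setup, $my_ip and controller are replaced with the actual IP addresses.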

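Check whether the compute node supports hardware acceleration for virtual machines:

    [root@linux-node2 ~]# egrep -c '(vmx|svm)' /proc/cpuinfo
    4
    [root@linux-node2 ~]#

If this command returns a value of one or greater, the compute node supports hardware acceleration and needs no additional configuration.

If this command returns zero, the compute node does not support hardware acceleration, and you must configure libvirt to use QEMU instead of KVM.

In that case, edit the [libvirt] section of /etc/nova/nova.conf as follows:

    [libvirt]
    ...
    virt_type = qemu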
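The decision described above can be expressed as a small function that counts the vmx/svm CPU flags and prints the matching virt_type value. This is a sketch only; the function name `virt_type_for` and its optional file argument (which makes it easy to try on a sample file) are assumptions of this example:

```shell
# virt_type_for [CPUINFO_FILE]
# Prints "kvm" if at least one line mentions the vmx or svm CPU flag,
# otherwise "qemu". Defaults to /proc/cpuinfo.
virt_type_for() {
    # grep -c exits non-zero when the count is 0, hence the "|| true".
    count=$(grep -Ec '(vmx|svm)' "${1:-/proc/cpuinfo}" || true)
    if [ "${count:-0}" -ge 1 ]; then
        echo kvm
    else
        echo qemu
    fi
}

# Usage on the compute node (not run here):
#   echo "virt_type = $(virt_type_for)"
```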

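Because the command returned 4 on this node, the virt_type option is simply uncommented and left as kvm.

This completes the changes to the [libvirt] section.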

    [root@linux-node2 ~]# grep -n '^[a-Z]' /etc/nova/nova.conf
    267:enabled_apis=osapi_compute,metadata
    382:auth_strategy=keystone
    1561:firewall_driver=nova.virt.libvirt.firewall.IptablesFirewallDriver
    1684:use_neutron=True
    2119:rpc_backend=rabbit
    3354:api_servers= http://192.168.56.11:9292
    3523:auth_uri = http://192.168.56.11:5000
    3524:auth_url = http://192.168.56.11:35357
    3525:memcached_servers = 192.168.56.11:11211
    3526:auth_type = password
    3527:project_domain_name = default
    3528:user_domain_name = default
    3529:project_name = service
    3530:username = nova
    3531:password = nova
    3683:virt_type=kvm
    4307:lock_path=/var/lib/nova/tmp
    4458:rabbit_host=192.168.56.11
    4464:rabbit_port=5672
    4476:rabbit_userid=openstack
    4480:rabbit_password=openstack
    5385:enabled=true
    5427:vncserver_listen=0.0.0.0
    5451:vncserver_proxyclient_address=192.168.56.12
    5532:novncproxy_base_url=http://192.168.56.11:6080/vnc_auto.html
    [root@linux-node2 ~]#

3. Start the services and check their status

Start the Compute service and its dependencies, and configure them to start automatically when the system boots:

    [root@linux-node2 ~]# systemctl enable libvirtd.service openstack-nova-compute.service
    Created symlink from /etc/systemd/system/multi-user.target.wants/openstack-nova-compute.service to /usr/lib/systemd/system/openstack-nova-compute.service.
    [root@linux-node2 ~]# systemctl start libvirtd.service openstack-nova-compute.service
    [root@linux-node2 ~]#

    [root@linux-node1 ~]# source admin-openstack.sh
    [root@linux-node1 ~]# openstack host list
    +----------------------+-------------+----------+
    | Host Name            | Service     | Zone     |
    +----------------------+-------------+----------+
    | linux-node1.nmap.com | scheduler   | internal |
    | linux-node1.nmap.com | consoleauth | internal |
    | linux-node1.nmap.com | conductor   | internal |
    | linux-node2.nmap.com | compute     | nova     |
    +----------------------+-------------+----------+
    [root@linux-node1 ~]#

List the Nova services on the controller node. The Updated_at times should be nearly identical; if they differ too much, creating virtual machines may fail.

    [root@linux-node1 ~]# nova service-list
    +----+------------------+----------------------+----------+---------+-------+----------------------------+-----------------+
    | Id | Binary           | Host                 | Zone     | Status  | State | Updated_at                 | Disabled Reason |
    +----+------------------+----------------------+----------+---------+-------+----------------------------+-----------------+
    | 1  | nova-scheduler   | linux-node1.nmap.com | internal | enabled | up    | 2017-02-18T05:41:14.000000 | -               |
    | 2  | nova-consoleauth | linux-node1.nmap.com | internal | enabled | up    | 2017-02-18T05:41:14.000000 | -               |
    | 3  | nova-conductor   | linux-node1.nmap.com | internal | enabled | up    | 2017-02-18T05:41:13.000000 | -               |
    | 7  | nova-compute     | linux-node2.nmap.com | nova     | enabled | up    | 2017-02-18T05:41:13.000000 | -               |
    +----+------------------+----------------------+----------+---------+-------+----------------------------+-----------------+
    [root@linux-node1 ~]#
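To compare two Updated_at values numerically instead of by eye, they can be converted to epoch seconds and subtracted. The following is a sketch assuming GNU `date` (its `-d` option is not available in every `date` implementation); the function names `to_epoch` and `skew_seconds` are made up for this example:

```shell
# to_epoch TIMESTAMP
# Converts a value such as 2017-02-18T05:41:14.000000 to epoch seconds.
# The fractional part is dropped and "T" is replaced by a space first.
to_epoch() {
    ts=$(printf '%s' "$1" | sed -e 's/\..*$//' -e 's/T/ /')
    date -u -d "$ts" +%s
}

# skew_seconds TIMESTAMP_A TIMESTAMP_B
# Prints the absolute difference between the two timestamps in seconds.
skew_seconds() {
    a=$(to_epoch "$1")
    b=$(to_epoch "$2")
    d=$((a - b))
    if [ "$d" -lt 0 ]; then
        d=$((0 - d))
    fi
    echo "$d"
}

# Values taken from the nova-scheduler and nova-compute rows above.
skew_seconds 2017-02-18T05:41:14.000000 2017-02-18T05:41:13.000000
```

For the two rows used here the difference is one second, which matches the "nearly identical" condition described above.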

The following command tests whether Nova can connect to Glance:

    [root@linux-node1 ~]# nova image-list
    +--------------------------------------+--------+--------+--------+
    | ID                                   | Name   | Status | Server |
    +--------------------------------------+--------+--------+--------+
    | 9969eaa3-0296-48cc-a42e-a02251b778a6 | cirros | ACTIVE |        |
    +--------------------------------------+--------+--------+--------+
    [root@linux-node1 ~]#