Map Reduce Application (Partitioning/Binning)

Map Reduce Application (Partitioning/Group data by a defined key)

Assume we want to group data by the year (2008 to 2016) of their [last access date time]. For each year, one reducer collects the records and outputs the data of that group/partition (the year of the last access datetime). So we want MapReduce to partition our keys by year. We will learn what the default partitioner does and see how to set a custom partitioner.
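Before partitioning, the mapper has to turn each record's last access date into a year key. The following is only a sketch of that step: the helper name `yearKey` is hypothetical, and it assumes the timestamps are ISO-8601 local date-times (for example `2014-07-30T07:05:27.253`); the real input format may differ.

```java
import java.time.LocalDateTime;

class YearKeyDemo {
    // Hypothetical helper: returns the year of a timestamp as the grouping key.
    // Assumption: the input is an ISO-8601 local date-time string.
    static String yearKey(String lastAccessDate) {
        return String.valueOf(LocalDateTime.parse(lastAccessDate).getYear());
    }

    public static void main(String[] args) {
        System.out.println(yearKey("2008-09-15T10:12:00"));
        System.out.println(yearKey("2016-01-02T23:59:59.123"));
    }
}
```

In a real mapper the returned string would be wrapped in a Text and emitted as the output key, so that the partitioner sees the year.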

The default partitioner:

    public int getPartition(K key, V value,
                            int numReduceTasks) {
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }
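To see why the default partitioner does not give one partition per year, the same arithmetic can be tried outside Hadoop. This is a sketch under one loud assumption: it uses `String.hashCode()` as a stand-in for the hash of a Hadoop Text key, so the concrete partition numbers will differ from what a real job computes. The class and method names are made up for the illustration.

```java
class DefaultPartitionDemo {
    // Same arithmetic as the default partitioner shown above.
    // The bit mask clears the sign bit so the result is never negative.
    static int partition(Object key, int numReduceTasks) {
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    public static void main(String[] args) {
        int numReduceTasks = 9;
        for (int year = 2008; year <= 2016; year++) {
            // Assumption: String.hashCode() stands in for the Text key hash.
            String key = String.valueOf(year);
            System.out.println(key + " -> partition " + partition(key, numReduceTasks));
        }
    }
}
```

With this stand-in hash, "2008" and "2014" fall into the same partition while some partitions receive no year at all, which is the reason a custom partitioner is needed for a strict one-year-per-reducer layout.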

Custom Partitioner:

job.setPartitionerClass(CustomPartitioner.class);

With the partitioner below, the data for each year of [last access date time] is assigned to its own partition. The number of reduce tasks is 9 (one per year from 2008 to 2016).

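    public static class CustomPartitioner extends Partitioner<Text, Text> {
        @Override
        public int getPartition(Text key, Text value, int numReduceTasks) {
            if (numReduceTasks == 0) {
                return 0;
            }
            // The key is expected to hold the year as text, e.g. "2008";
            // 2008 maps to partition 0, 2016 to partition 8.
            return Integer.parseInt(key.toString()) - 2008;
        }
    }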

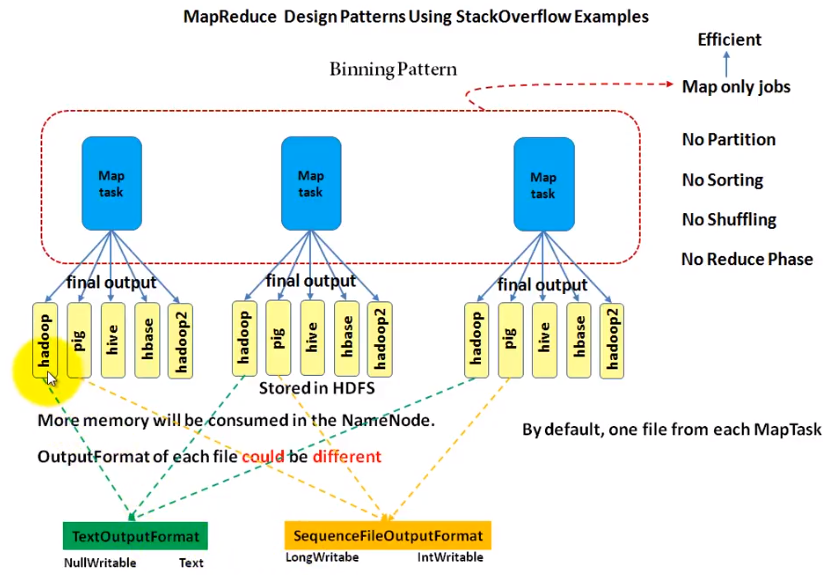
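The custom partitioner above relies on every key being a year between 2008 and 2016; a partition number outside 0..numReduceTasks-1 makes the job fail. A minimal sketch of the same mapping with an explicit range check, written as plain Java so it can be run without a cluster, could look like this. The names `YearPartitionDemo`, `FIRST_YEAR`, and `partitionForYear` are hypothetical, and throwing on a bad year is just one possible policy (routing such records to a fallback partition is another).

```java
class YearPartitionDemo {
    static final int FIRST_YEAR = 2008; // first year in the data set, per the text

    // Maps a year key such as "2012" to a partition index (year - 2008).
    static int partitionForYear(String yearKey, int numReduceTasks) {
        if (numReduceTasks == 0) {
            return 0; // map-only job: the partition number is not used
        }
        int index = Integer.parseInt(yearKey.trim()) - FIRST_YEAR;
        if (index < 0 || index >= numReduceTasks) {
            throw new IllegalArgumentException(
                "year " + yearKey + " does not fit into " + numReduceTasks + " partitions");
        }
        return index;
    }

    public static void main(String[] args) {
        System.out.println(partitionForYear("2008", 9));
        System.out.println(partitionForYear("2016", 9));
    }
}
```

The same check can be placed inside getPartition after converting the Text key with toString().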

Binning pattern

The text/comments/answers/questions.... that contain specific words are written into the corresponding files directly from the mapper.

See the picture below to understand the binning pattern. It is simpler than partitioning because it has no partition/sort/shuffle phase and no reducer (job.setNumReduceTasks(0)). The outputs of the mappers make up the final output.

MultipleOutputs.addNamedOutput(job,"namedoutput",TextOutputFormat.class, NullWritable.class, Text.class)

In the mapper's setup function, create the MultipleOutputs instance by calling its constructor:

MultipleOutputs(TaskInputOutputContext<?,?,KEYOUT,VALUEOUT> context)

Creates and initializes multiple outputs support; it should be instantiated in the Mapper/Reducer setup method.

    @Override
    protected void setup(Context context) {
        multipleOutputs = new MultipleOutputs<>(context);
    }

Write your logic in the map function and output the result. "$tag/$tag-tag" means that the folder $tag will be created and $tag-tag is the prefix of the files in it (a suffix distinguishes the files written by different mappers).
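To make the naming concrete, here is a small sketch of how a base path such as "pig/pig-tag" turns into a file name. It assumes the usual Hadoop convention of appending "-m-" plus a five-digit map task number for map-side output; the helper `mapOutputFile` is hypothetical and only imitates that convention, it is not part of the Hadoop API, and an output format may add a further extension (for example when compression is enabled).

```java
class OutputNameDemo {
    // Hypothetical helper: builds "<tag>/<tag>-tag" and adds the assumed
    // "-m-<5-digit task number>" suffix used for map outputs.
    static String mapOutputFile(String tag, int mapTaskNumber) {
        String basePath = tag + "/" + tag + "-tag";
        return String.format("%s-m-%05d", basePath, mapTaskNumber);
    }

    public static void main(String[] args) {
        System.out.println(mapOutputFile("pig", 0));
        System.out.println(mapOutputFile("hive", 3));
    }
}
```

Under this assumption, two mappers that both see "pig" records write to separate files in the same pig folder, so they never overwrite each other.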

See the doc for MultipleOutputs: https://hadoop.apache.org/docs/r3.0.1/api/org/apache/hadoop/mapreduce/lib/output/MultipleOutputs.html

    if (tag.equalsIgnoreCase("pig")) {
        multipleOutputs.write("namedoutput", key, value, "pig/pig-tag");
    }

    if (tag.equalsIgnoreCase("hive")) {
        multipleOutputs.write("namedoutput", key, value, "hive/hive-tag");
    }
    .....
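The chain of if statements above can also be driven by a list of bin names. The sketch below shows only the routing decision, in plain Java without Hadoop: `BinRouterDemo` and `basePathFor` are hypothetical names, and the list contains just the two tags from the snippet above. A record whose tag matches no bin gets an empty result and would simply not be written.

```java
import java.util.List;
import java.util.Optional;

class BinRouterDemo {
    // Bins taken from the snippet above; extend the list for more tags.
    static final List<String> BINS = List.of("pig", "hive");

    // Returns the base output path "<bin>/<bin>-tag" for a matching tag,
    // or an empty Optional when the tag belongs to no bin.
    static Optional<String> basePathFor(String tag) {
        for (String bin : BINS) {
            if (bin.equalsIgnoreCase(tag)) {
                return Optional.of(bin + "/" + bin + "-tag");
            }
        }
        return Optional.empty();
    }

    public static void main(String[] args) {
        System.out.println(basePathFor("Pig").orElse("(no bin)"));
        System.out.println(basePathFor("hbase").orElse("(no bin)"));
    }
}
```

Inside the map function, a present result would be passed as the last argument of multipleOutputs.write("namedoutput", key, value, basePath).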