Python crawler: scraping job listings from Lagou

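I am teaching myself Python. As a first attempt, I scraped Lagou's job listings for the Guangzhou area. This is for learning purposes only.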

Reference article: https://blog.csdn.net/SvJr6gGCzUJ96OyUo/article/details/80544872

Development environment:

Python 3.7

Windows 10

1. Requirements

Get an overview of the data-analysis job postings on Lagou.

2. Analyzing the target page

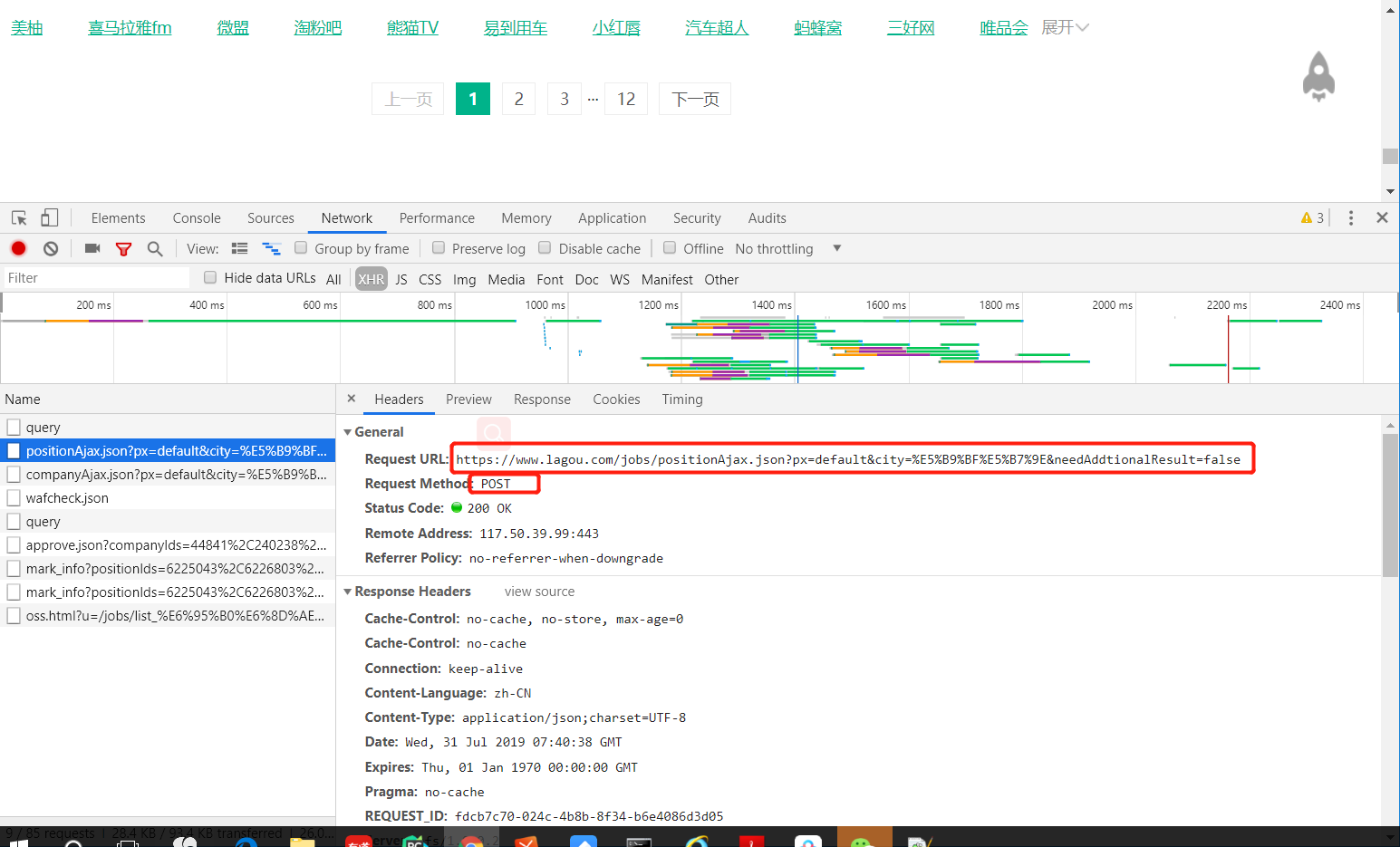

(1) In the browser's developer tools, use Network → XHR → Headers to identify the request. Check the Request URL and the Request Method.

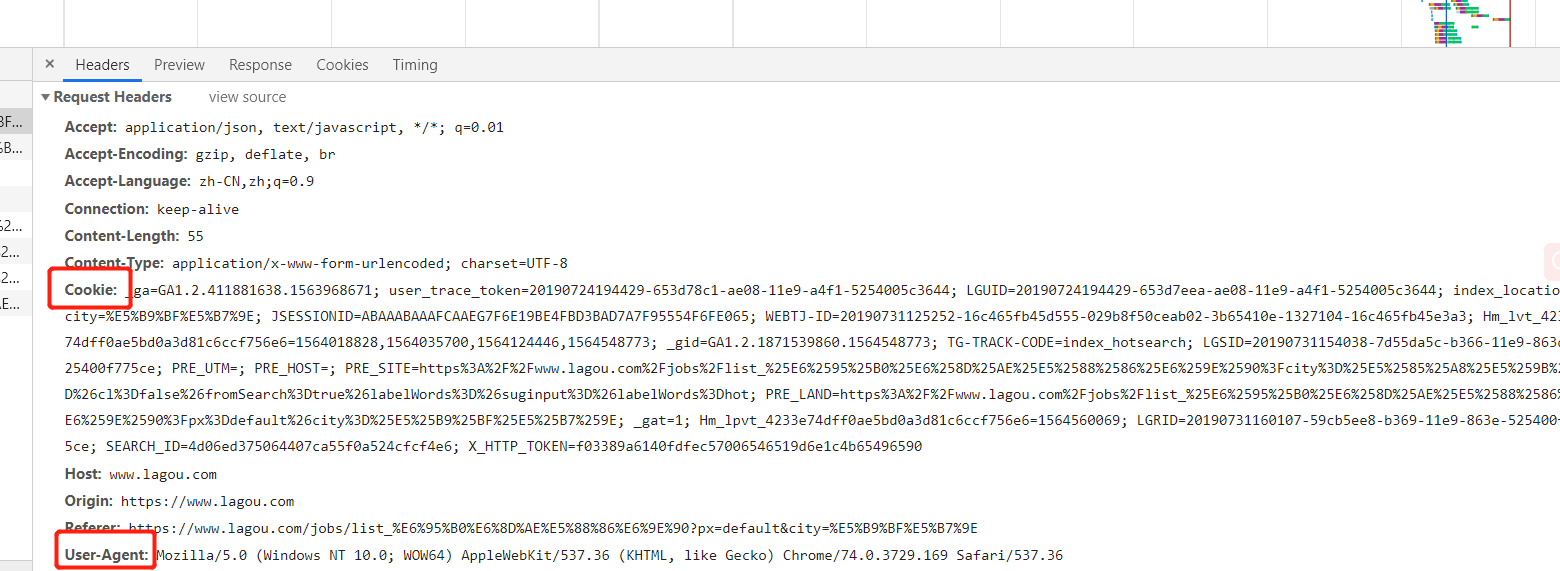

(2) Inspect the request headers

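Lagou validates the cookie, so requests without a valid cookie are refused. There are two ways to handle this. Method (1): log in to Lagou manually before inspecting the request URL, then pass the complete cookie to requests.

Method (2): use requests.Session(), which stores cookies. First request the following page through the session so that it picks up a cookie: https://www.lagou.com/jobs/list_%E6%95%B0%E6%8D%AE%E5%88%86%E6%9E%90?city=%E5%85%A8%E5%9B%BD&cl=false&fromSearch=true&labelWords=&suginput=&labelWords=hot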

The explanation of this usage comes from https://blog.csdn.net/wumxiaozhu/article/details/81542480

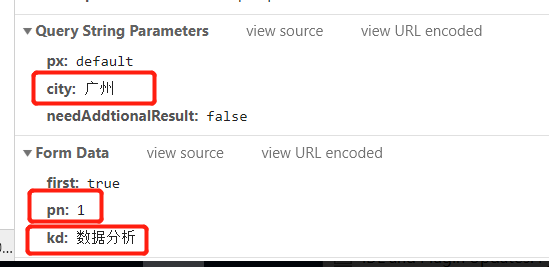
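For Method (1), the Cookie header copied from the browser is one `name=value; name=value` string. Below is a minimal sketch that turns it into a dict, which requests accepts through the `cookies=` argument. Pasting the raw string into the headers as `"Cookie"` would also work. The helper name `cookie_header_to_dict` is hypothetical, and the cookie names in the example are placeholders, not Lagou's real cookies.

```python
def cookie_header_to_dict(raw_cookie):
    """Turn a 'name=value; name2=value2' string copied from the browser into a dict."""
    cookies = {}
    for part in raw_cookie.split(";"):
        # split on the first '=' only, since cookie values may themselves contain '='
        name, sep, value = part.strip().partition("=")
        if sep:  # skip empty fragments, e.g. after a trailing ';'
            cookies[name] = value
    return cookies


# Placeholder cookie names, for illustration only
print(cookie_header_to_dict("cookie_a=abc; cookie_b=x=y; "))
```

The result could then be passed as `requests.post(url, headers=headers, data=data, cookies=...)`. Keep in mind that a copied cookie expires, so it has to be refreshed by hand after a while. Method (2) avoids that.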

(3) Inspect the key parameters. The region (city), the page number (pn) and the search keyword (kd) can all be changed.

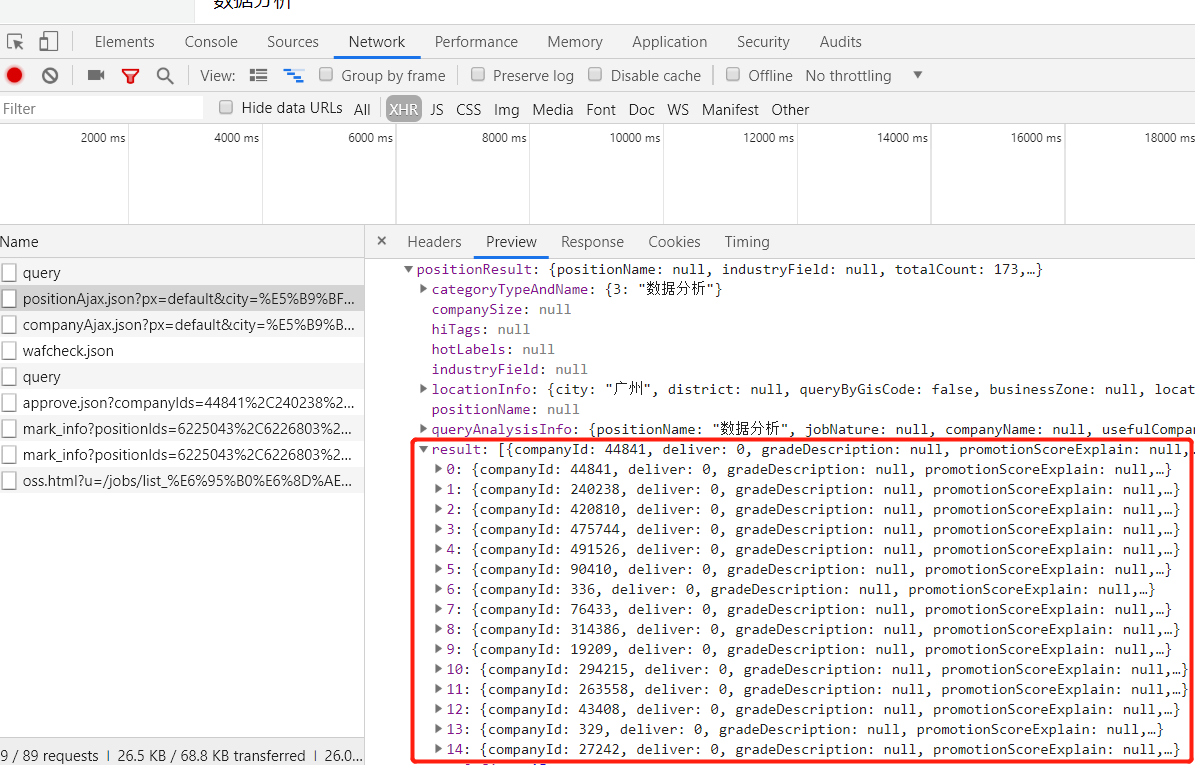
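Step (3) comes down to three parameters. The city goes in the query string of the Ajax URL, and the page number `pn` and keyword `kd` go in the POST form data. Below is a minimal sketch that builds both with the standard library and reproduces the percent-encoded URL used in the code. `build_search_request` is a hypothetical helper, and it includes only the parameters seen in this article. `needAddtionalResult` is spelled as in Lagou's URL.

```python
from urllib.parse import urlencode

AJAX_ENDPOINT = "https://www.lagou.com/jobs/positionAjax.json"


def build_search_request(city, keyword, pn):
    """Return (url, form_data) for one page of results."""
    # urlencode percent-encodes the UTF-8 bytes, e.g. 广州 -> %E5%B9%BF%E5%B7%9E
    query = urlencode({"px": "default", "city": city, "needAddtionalResult": "false"})
    form_data = {"first": "true", "pn": str(pn), "kd": keyword}
    return AJAX_ENDPOINT + "?" + query, form_data


url, form_data = build_search_request("广州", "数据分析", 2)
print(url)
print(form_data)
```

Building the URL this way makes it easy to switch city without copying a new encoded string from the browser.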

(4) Network → XHR → Preview shows the data to be scraped.
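The Preview tab shows the JSON structure the code relies on: `content` → `positionResult` → `totalCount` and `result`. Below is a minimal sketch that assumes that structure. It computes the page count at 15 jobs per page and reads a few fields from each job. `parse_page` and the sample payload are made up for illustration. Missing fields become `None` instead of raising `KeyError`.

```python
import math

# A subset of the fields extracted by the full script
FIELDS = ["companyFullName", "positionName", "salary"]


def parse_page(payload, fields=FIELDS, page_size=15):
    """Return (page_count, rows) from a positionAjax.json-style payload."""
    result = payload["content"]["positionResult"]
    # round up: 31 jobs -> 3 pages, 30 jobs -> 2 pages
    page_count = math.ceil(int(result["totalCount"]) / page_size)
    rows = [[job.get(f) for f in fields] for job in result["result"]]
    return page_count, rows


# Made-up sample payload with the same nesting as the real response
sample = {"content": {"positionResult": {"totalCount": 31, "result": [
    {"companyFullName": "Example Co.", "positionName": "Data Analyst", "salary": "10k-15k"},
]}}}
print(parse_page(sample))
```

Rounding up matters here. The expression `total // 15 + 1` overcounts by one page whenever the total is an exact multiple of 15.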

3. Code

```python
import time

import pandas as pd
import requests

# Search-results page for "数据分析" (data analysis). It does not need a cookie,
# and visiting it first gives the session the cookies the Ajax endpoint checks
LIST_URL = ("https://www.lagou.com/jobs/list_%E6%95%B0%E6%8D%AE%E5%88%86%E6%9E%90"
            "?labelWords=&fromSearch=true&suginput=")
# Ajax endpoint returning the job data; city=%E5%B9%BF%E5%B7%9E is Guangzhou (广州)
AJAX_URL = ("https://www.lagou.com/jobs/positionAjax.json"
            "?px=default&city=%E5%B9%BF%E5%B7%9E&needAddtionalResult=false")
HEADERS = {
    "Accept": "application/json, text/javascript, */*; q=0.01",
    "Host": "www.lagou.com",
    "Referer": LIST_URL,
    "User-Agent": ("Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 "
                   "(KHTML, like Gecko) Chrome/74.0.3729.169 Safari/537.36"),
}
# JSON fields to extract, and the matching CSV column names
FIELDS = ["companyFullName", "companyShortName", "companySize", "financeStage",
          "district", "positionName", "workYear", "education", "salary",
          "positionAdvantage", "industryField"]
COLUMNS = ["Company full name", "Company short name", "Company size", "Funding stage",
           "District", "Position", "Work experience", "Education", "Salary",
           "Perks", "Industry"]


# Fetch one page of search results
def get_url(keyword, pn):
    data = {"first": "true", "pn": str(pn), "kd": keyword}
    session = requests.Session()                # keeps cookies between requests
    session.get(url=LIST_URL, headers=HEADERS)  # obtain cookies from the list page
    response = session.post(url=AJAX_URL, headers=HEADERS, data=data)
    response.encoding = "utf-8"
    return response


# Read the total number of jobs and work out the number of pages
def total_count(response):
    page = response.json()
    total = int(page["content"]["positionResult"]["totalCount"])
    pn_count = (total + 14) // 15               # 15 jobs per page, rounded up
    print("Total jobs: {}, {} pages".format(total, pn_count))
    return pn_count


# Extract the wanted fields from one page of results
def parse_url(response):
    page = response.json()
    jobs_list = page["content"]["positionResult"]["result"]
    page_info_list = [[job[field] for field in FIELDS] for job in jobs_list]
    print(page_info_list)
    return page_info_list


def save_data(page_info_list):
    df = pd.DataFrame(data=page_info_list, columns=COLUMNS)
    # utf-8-sig adds a BOM so Excel displays the Chinese values correctly
    df.to_csv("lagou1_jobs.csv", index=False, encoding="utf-8-sig")
    print("Saved")


# Entry point
def main():
    keyword = input("Search keyword: ")
    pages = total_count(get_url(keyword, pn=1))   # total jobs and number of pages
    total_info = []                               # rows collected from every page
    for i in range(1, pages + 1):                 # page through the results
        total_info += parse_url(get_url(keyword, pn=i))
        time.sleep(20)                            # pause between pages to avoid the rate limit
        print("Fetched page {}".format(i))
    save_data(total_info)


if __name__ == "__main__":
    main()
```

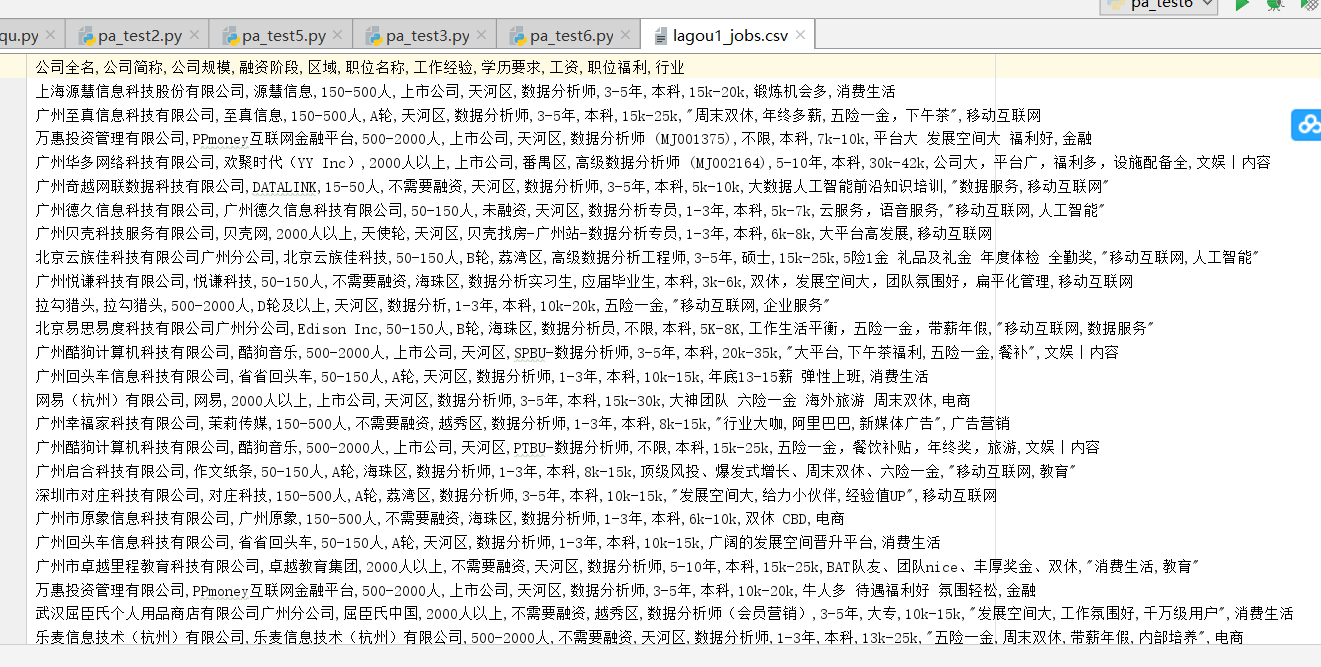

Execution result:

Problems:

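1. The main problem I ran into was the response itself. It returned {'status': False, 'msg': '您操作太频繁,请稍后再访问', 'clientIp': '120.230.127.181', 'state': 2402}; the message means "You are making requests too frequently, please try again later." On analysis, the Ajax URL being scraped requires a cookie. While the Python script ran, however, the cookie was not carried over between requests, and every request got a fresh cookie. The fix was to use requests.Session(), which keeps the cookies obtained by the first GET and sends them with the following POST.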
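Reusing the cookie through a Session addresses the cause. It can also help to detect the rate-limit reply and retry after a longer pause. Below is a sketch that assumes the refusal always carries `'status': False`, as in the reply quoted above. `fetch_with_retry` and `is_throttled` are hypothetical helpers. `fetch_page` is any function that returns the decoded JSON, ideally opening a fresh Session each time.

```python
import random
import time


def is_throttled(payload):
    # Assumption: the rate-limit reply has "status": False, as in the reply quoted above
    return payload.get("status") is False


def fetch_with_retry(fetch_page, max_tries=3, base_delay=20.0, sleep=time.sleep):
    """Call fetch_page() until it returns a payload that is not a rate-limit reply."""
    for attempt in range(1, max_tries + 1):
        payload = fetch_page()
        if not is_throttled(payload):
            return payload
        if attempt < max_tries:
            # wait longer after each refusal, plus random jitter
            sleep(base_delay * attempt + random.uniform(0, 5))
    raise RuntimeError("still throttled after {} attempts".format(max_tries))
```

With the article's `get_url`, which opens a new Session on each call, this could be used as `fetch_with_retry(lambda: get_url(keyword, pn=i).json())`. The `sleep` parameter exists only so the waiting can be replaced in tests.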