Crawling all answers to a Zhihu question with Java

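I suddenly wanted to crawl the answers to a Zhihu question, so I started studying the Zhihu page. At first I crawled the page as rendered by the browser, parsing the DOM and looking for specific tags.

I then found that this only yields the few answers that are present when the page first loads. To get more you have to scroll down, and the browser loads a few more each time, so parsing the DOM cannot retrieve all the answers.

After searching around, I found that you can simulate the browser instead: send the request to the server yourself, receive the response, and parse the returned data.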

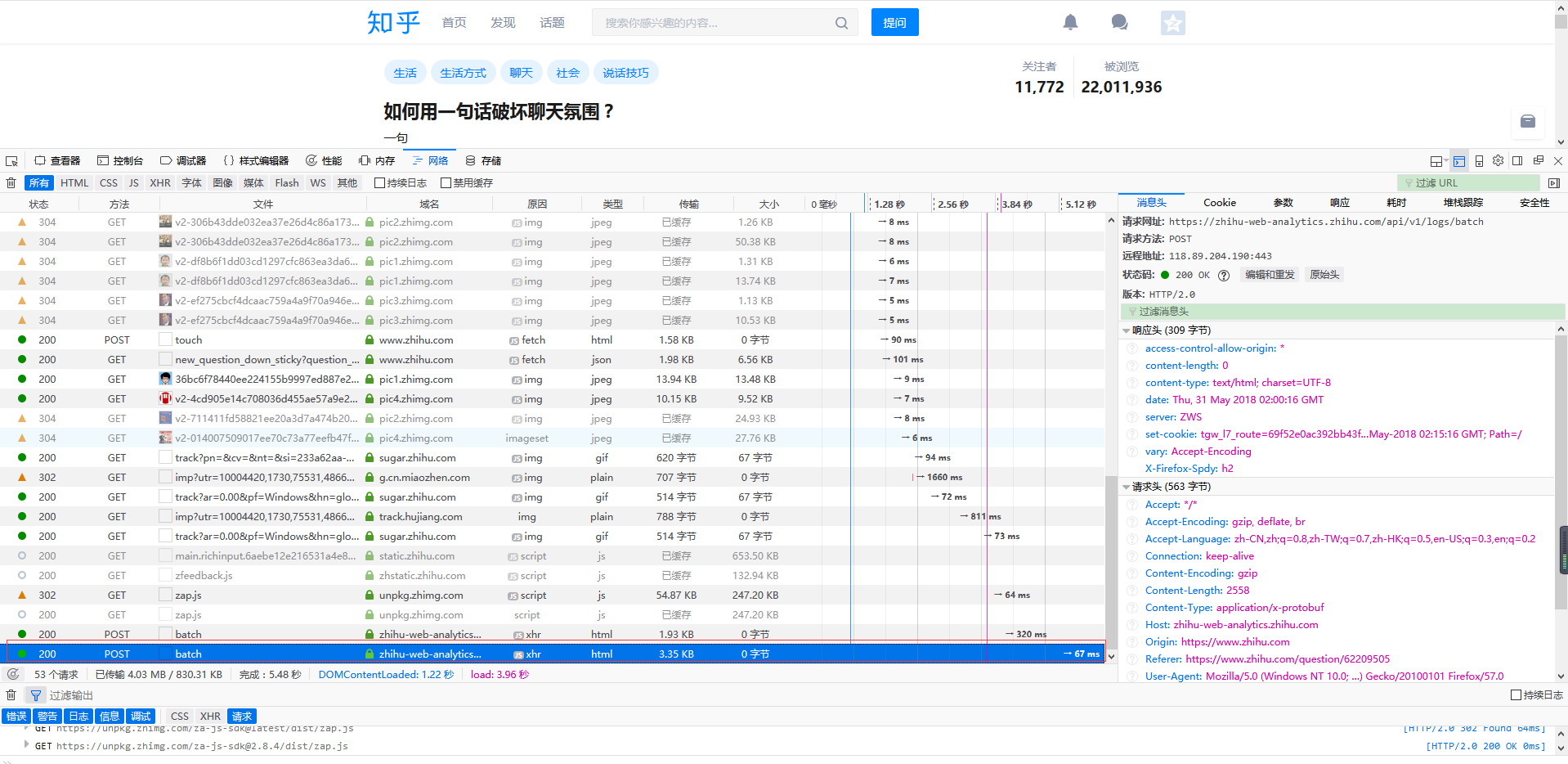

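With the approach settled, the next step is to analyze the network requests. I use Firefox: press F12 and click the Network tab.

Move the mouse to the very bottom of what is currently shown.

Then drag the scrollbar down. Once new content appears to have loaded you can stop; by then the request for the new content must already be in the list, and what remains is to find its URL.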

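One way is to read the URLs and guess from their meaning, but that is hard to judge by eye. The other is to copy each URL into the browser and look at what comes back, which is how I found the request URL (it is the `url` string in the code below).

Pasting that link into the browser's address bar shows the data the server returns.

Next comes the code.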
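The paging part of that request can be sketched on its own. This is a minimal illustration, assuming the base URL already carries a query string (as the answers URL does) and that the two parameters are named `limit` and `offset`, as in the code in this post. The class name `AnswerPageUrl` is made up for the example, and the `include=...` parameter is left out of the demo URL only to keep it readable.

```java
/** Hypothetical helper: builds the URL for one page of answers. */
class AnswerPageUrl {

    /**
     * Appends the paging parameters to a base URL that already has a query string.
     * limit is the number of answers per request, offset is the starting position.
     */
    static String build(String baseUrl, int limit, int offset) {
        if (limit <= 0 || offset < 0) {
            throw new IllegalArgumentException("limit must be > 0 and offset >= 0");
        }
        return baseUrl + "&limit=" + limit + "&offset=" + offset;
    }

    public static void main(String[] args) {
        // Shortened base URL; the real request also carries the long include=... parameter
        String base = "https://www.zhihu.com/api/v4/questions/62209505/answers?sort_by=default";
        System.out.println(build(base, 20, 0));   // first page
        System.out.println(build(base, 20, 20));  // second page starts at the previous limit + offset
    }
}
```

Each following page starts where the previous one ended, so the next offset is always the previous `limit + offset`.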

import com.google.gson.Gson;
import com.google.gson.internal.LinkedTreeMap;

import java.io.*;
import java.net.*;
import java.nio.charset.StandardCharsets;
import java.util.*;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class ZhiHu {

    public static void main(String[] args) {
        // Request URL
        String url = "https://www.zhihu.com/api/v4/questions/62209505/answers?"
                + "include=data[*].is_normal,admin_closed_comment,reward_info,"
                + "is_collapsed,annotation_action,annotation_detail,collapse_reason,"
                + "is_sticky,collapsed_by,suggest_edit,comment_count,can_comment,content,"
                + "editable_content,voteup_count,reshipment_settings,comment_permission,"
                + "created_time,updated_time,review_info,relevant_info,question,excerpt,"
                + "relationship.is_authorized,is_author,voting,is_thanked,is_nothelp;data[*].mark_infos[*].url;"
                + "data[*].author.follower_count,badge[?(type=best_answerer)].topics&sort_by=default";

        // Fetch the answers, 20 per request, starting at offset 0
        StringBuffer stringBuffer = sendGet(url, 20, 0);

        // Print the result with the HTML tags removed
        System.out.println(delHTMLTag(stringBuffer.toString()));
    }

    @SuppressWarnings("unchecked")
    public static StringBuffer sendGet(String baseUrl, int limit, int offset) {
        // Reader for the server's response stream
        BufferedReader bufferedReader = null;
        // Holds the content of all answers
        StringBuffer stringBuffer = new StringBuffer();
        // Number of answers returned by this request
        int num = 0;
        try {
            // limit sets how many answers one request returns; offset sets where the query starts.
            // The previous limit + offset is the next starting position.
            // In my tests a single request returned at most 20 answers.
            String urlToConnect = baseUrl + "&limit=" + limit + "&offset=" + offset;
            URL url = new URL(urlToConnect);
            // Open a connection to the URL
            URLConnection connection = url.openConnection();
            // Set the common request properties; they can be found in the request headers shown above
            connection.setRequestProperty("Referer", "https://www.zhihu.com/question/276275499");
            connection.setRequestProperty("origin", "https://www.zhihu.com");
            connection.setRequestProperty("x-udid", "replace with your own udid");
            connection.setRequestProperty("Cookie", "replace with your own cookie value");
            connection.setRequestProperty("accept", "application/json, text/plain, */*");
            connection.setRequestProperty("connection", "Keep-Alive");
            connection.setRequestProperty("Host", "www.zhihu.com");
            connection.setRequestProperty("user-agent", "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:57.0) Gecko/20100101 Firefox/57.0");
            // Establish the actual connection
            connection.connect();
            // Read the response through a BufferedReader, decoding it as UTF-8
            bufferedReader = new BufferedReader(
                    new InputStreamReader(connection.getInputStream(), StandardCharsets.UTF_8));
            // Raw response body of this request
            StringBuilder responseResult = new StringBuilder();
            String line;
            while ((line = bufferedReader.readLine()) != null) {
                responseResult.append(line);
            }
            // Convert the response into a map
            Gson gson = new Gson();
            Map<String, Object> map = new HashMap<String, Object>();
            map = gson.fromJson(responseResult.toString(), map.getClass());
            // Paging information
            LinkedTreeMap<String, Object> pageList = (LinkedTreeMap<String, Object>) map.get("paging");
            // Total number of answers
            double totals = (Double) pageList.get("totals");
            // Answers still left after this page; 0 or less means this was the last page
            int remaining = (int) (totals - (limit + offset));
            if (remaining > 0) {
                // Recursively fetch the next page: 20 answers, or fewer for the final page
                stringBuffer.append(sendGet(baseUrl, Math.min(20, remaining), limit + offset));
                stringBuffer.append("\r\n"); // line break, for formatting
            }
            // The array that holds the answers
            ArrayList<LinkedTreeMap<String, String>> dataList =
                    (ArrayList<LinkedTreeMap<String, String>>) map.get("data");
            // Append every answer to the return value
            for (LinkedTreeMap<String, String> contentLink : dataList) {
                stringBuffer.append(contentLink.get("content") + "\r\n\r\n");
                num++; // count the answers found by this request
            }
            System.out.println("Answers: " + num);
        } catch (Exception e) {
            System.out.println("Exception while sending the GET request! " + e);
            e.printStackTrace();
        } finally {
            // Close the input stream in the finally block
            try {
                if (bufferedReader != null) {
                    bufferedReader.close();
                }
            } catch (Exception e2) {
                e2.printStackTrace();
            }
        }
        // Return all answers found so far
        return stringBuffer;
    }

    private static final String regEx_script = "<script[^>]*?>[\\s\\S]*?<\\/script>"; // regex for script blocks
    private static final String regEx_style = "<style[^>]*?>[\\s\\S]*?<\\/style>"; // regex for style blocks
    private static final String regEx_html = "<[^>]+>"; // regex for HTML tags
    private static final String regEx_space = "\\s*|\t|\r|\n"; // regex for spaces and line breaks (currently unused)

    /**
     * Removes HTML tags.
     *
     * @param htmlStr the HTML string
     * @return the text without tags
     */
    public static String delHTMLTag(String htmlStr) {
        Pattern p_script = Pattern.compile(regEx_script, Pattern.CASE_INSENSITIVE);
        Matcher m_script = p_script.matcher(htmlStr);
        htmlStr = m_script.replaceAll(""); // strip script blocks

        Pattern p_style = Pattern.compile(regEx_style, Pattern.CASE_INSENSITIVE);
        Matcher m_style = p_style.matcher(htmlStr);
        htmlStr = m_style.replaceAll(""); // strip style blocks

        Pattern p_html = Pattern.compile(regEx_html, Pattern.CASE_INSENSITIVE);
        Matcher m_html = p_html.matcher(htmlStr);
        htmlStr = m_html.replaceAll(""); // strip HTML tags

        return htmlStr.replaceAll(" ", ""); // remove spaces and return the plain text
    }
}

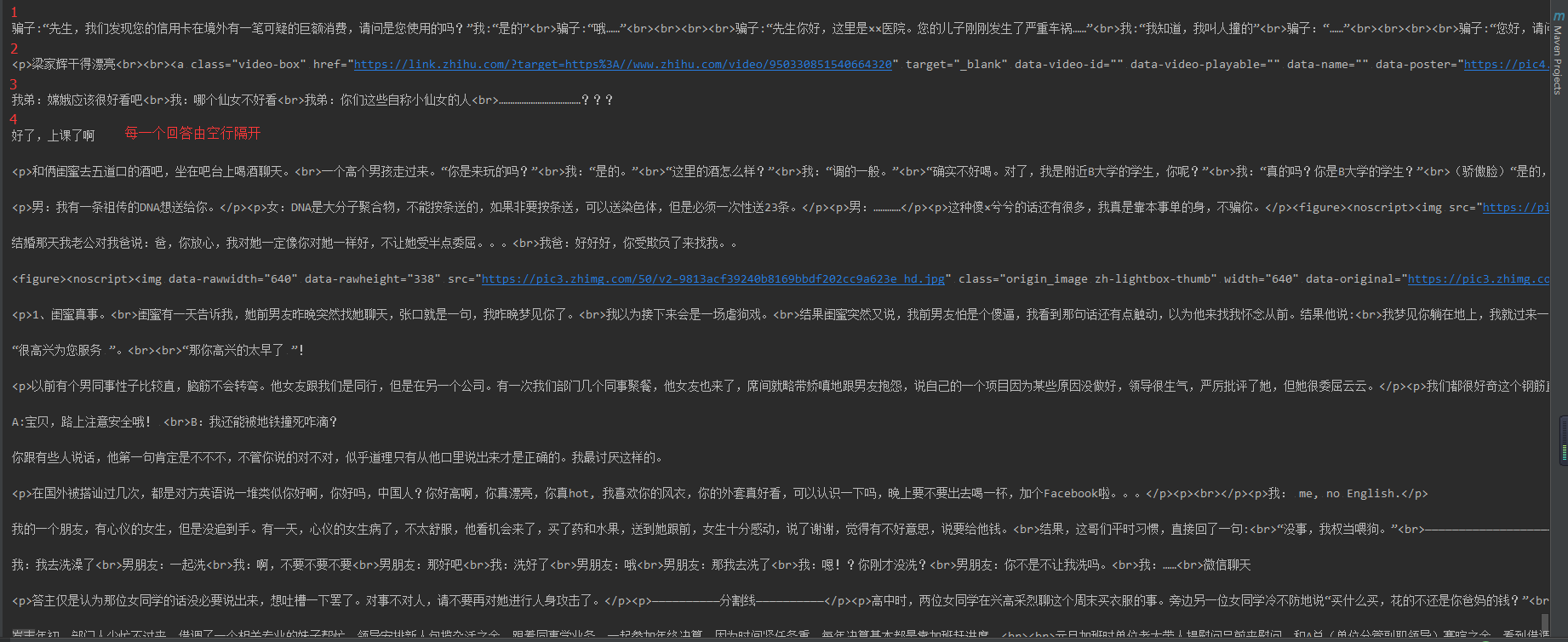

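Output

The more answers there are, the longer it takes before anything is displayed. The order also differs from the page: the output runs from bottom to top, in groups of 20.

To shorten the wait, use multiple threads.

ZhiHuSpider.java first gets the total count, then creates threads in a loop, increasing offset by 20 each time.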
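The order is reversed because the recursive call appends the later pages before the current one. One way around that is to work out every (offset, limit) pair up front and request the pages in a plain loop. The sketch below covers only that calculation; `PagePlan` and `plan` are names invented for the example, and the page size of 20 comes from the limit observed in this post.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper: computes the offset and limit of every request, in page order. */
class PagePlan {

    /** Returns {offset, limit} pairs that together cover totals answers. */
    static List<int[]> plan(int totals, int pageSize) {
        if (pageSize <= 0) {
            throw new IllegalArgumentException("pageSize must be positive");
        }
        List<int[]> pages = new ArrayList<>();
        for (int offset = 0; offset < totals; offset += pageSize) {
            // The last page may hold fewer than pageSize answers
            int limit = Math.min(pageSize, totals - offset);
            pages.add(new int[] {offset, limit});
        }
        return pages;
    }

    public static void main(String[] args) {
        // 45 answers in pages of 20 give three requests: (0, 20), (20, 20), (40, 5)
        for (int[] page : plan(45, 20)) {
            System.out.println("offset=" + page[0] + " limit=" + page[1]);
        }
    }
}
```

Looping over this list and appending each page as it arrives keeps the answers in the same order as on the page.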

import com.google.gson.Gson;
import com.google.gson.internal.LinkedTreeMap;

import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.net.URL;
import java.net.URLConnection;
import java.nio.charset.StandardCharsets;
import java.util.HashMap;
import java.util.Map;

public class ZhiHuSpider {

    @SuppressWarnings("unchecked")
    public static void main(String[] args) {
        String baseUrl = "https://www.zhihu.com/api/v4/questions/62209505/answers?"
                + "include=data[*].is_normal,admin_closed_comment,reward_info,"
                + "is_collapsed,annotation_action,annotation_detail,collapse_reason,"
                + "is_sticky,collapsed_by,suggest_edit,comment_count,can_comment,content,"
                + "editable_content,voteup_count,reshipment_settings,comment_permission,"
                + "created_time,updated_time,review_info,relevant_info,question,excerpt,"
                + "relationship.is_authorized,is_author,voting,is_thanked,is_nothelp;data[*].mark_infos[*].url;"
                + "data[*].author.follower_count,badge[?(type=best_answerer)].topics&sort_by=default";
        // Reader for the server's response stream
        BufferedReader bufferedReader = null;
        try {
            // The first request is only needed to read the total count
            String urlToConnect = baseUrl + "&limit=" + 20 + "&offset=" + 0;
            URL url = new URL(urlToConnect);
            // Open a connection to the URL
            URLConnection connection = url.openConnection();
            // Set the common request properties
            connection.setRequestProperty("Referer", "https://www.zhihu.com/question/276275499");
            connection.setRequestProperty("origin", "https://www.zhihu.com");
            connection.setRequestProperty("x-udid", "replace with your own udid value");
            connection.setRequestProperty("Cookie", "replace with your own cookie value");
            connection.setRequestProperty("accept", "application/json, text/plain, */*");
            connection.setRequestProperty("connection", "Keep-Alive");
            connection.setRequestProperty("Host", "www.zhihu.com");
            connection.setRequestProperty("user-agent", "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:57.0) Gecko/20100101 Firefox/57.0");
            // Establish the actual connection
            connection.connect();
            // Read the response through a BufferedReader, decoding it as UTF-8
            bufferedReader = new BufferedReader(
                    new InputStreamReader(connection.getInputStream(), StandardCharsets.UTF_8));
            // Raw response body of this request
            StringBuilder responseResult = new StringBuilder();
            String line;
            while ((line = bufferedReader.readLine()) != null) {
                responseResult.append(line);
            }
            // Convert the response into a map
            Gson gson = new Gson();
            Map<String, Object> map = new HashMap<String, Object>();
            map = gson.fromJson(responseResult.toString(), map.getClass());
            // Paging information
            LinkedTreeMap<String, Object> pageList = (LinkedTreeMap<String, Object>) map.get("paging");
            // Total number of answers
            double totals = (Double) pageList.get("totals");
            // Start one thread per page of 20 answers
            for (int offset = 0; offset < totals; offset += 20) {
                SpiderThread spiderThread = new SpiderThread(baseUrl, 20, offset);
                new Thread(spiderThread).start();
            }
        } catch (IOException e) {
            e.printStackTrace();
        } finally {
            try {
                if (bufferedReader != null) {
                    bufferedReader.close();
                }
            } catch (IOException e) {
                e.printStackTrace();
            }
        }
    }
}

SpiderThread.java

import com.google.gson.Gson;
import com.google.gson.internal.LinkedTreeMap;

import java.io.BufferedReader;
import java.io.InputStreamReader;
import java.net.URL;
import java.net.URLConnection;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class SpiderThread implements Runnable {

    private String baseUrl;
    private int limit;
    private int offset;

    SpiderThread(String baseUrl, int limit, int offset) {
        this.baseUrl = baseUrl;
        this.limit = limit;
        this.offset = offset;
    }

    public void run() {
        System.out.println(delHTMLTag(sendGet(baseUrl, limit, offset).toString()));
    }

    @SuppressWarnings("unchecked")
    public StringBuffer sendGet(String baseUrl, int limit, int offset) {
        // Reader for the server's response stream
        BufferedReader bufferedReader = null;
        // Holds the content of all answers on this page
        StringBuffer stringBuffer = new StringBuffer();
        // Number of answers returned by this request
        int num = 0;
        try {
            // limit sets how many answers one request returns; offset sets where the query starts.
            // The previous limit + offset is the next starting position.
            // In my tests a single request returned at most 20 answers.
            String urlToConnect = baseUrl + "&limit=" + limit + "&offset=" + offset;
            URL url = new URL(urlToConnect);
            // Open a connection to the URL
            URLConnection connection = url.openConnection();
            // Set the common request properties
            connection.setRequestProperty("Referer", "https://www.zhihu.com/question/276275499");
            connection.setRequestProperty("origin", "https://www.zhihu.com");
            connection.setRequestProperty("x-udid", "replace with your own udid value");
            connection.setRequestProperty("Cookie", "replace with your own cookie value");
            connection.setRequestProperty("accept", "application/json, text/plain, */*");
            connection.setRequestProperty("connection", "Keep-Alive");
            connection.setRequestProperty("Host", "www.zhihu.com");
            connection.setRequestProperty("user-agent", "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:57.0) Gecko/20100101 Firefox/57.0");
            // Establish the actual connection
            connection.connect();
            // Read the response through a BufferedReader, decoding it as UTF-8
            bufferedReader = new BufferedReader(
                    new InputStreamReader(connection.getInputStream(), StandardCharsets.UTF_8));
            // Raw response body of this request
            StringBuilder responseResult = new StringBuilder();
            String line;
            while ((line = bufferedReader.readLine()) != null) {
                responseResult.append(line);
            }
            // Convert the response into a map
            Gson gson = new Gson();
            Map<String, Object> map = new HashMap<String, Object>();
            map = gson.fromJson(responseResult.toString(), map.getClass());
            // The array that holds the answers
            ArrayList<LinkedTreeMap<String, String>> dataList =
                    (ArrayList<LinkedTreeMap<String, String>>) map.get("data");
            // Append every answer to the return value
            for (LinkedTreeMap<String, String> contentLink : dataList) {
                stringBuffer.append(contentLink.get("content") + "\r\n\r\n");
                num++; // count the answers found by this request
            }
            System.out.println("Answer count=====================================" + num);
        } catch (Exception e) {
            System.out.println("Exception while sending the GET request! " + e);
            e.printStackTrace();
        } finally {
            // Close the input stream in the finally block
            try {
                if (bufferedReader != null) {
                    bufferedReader.close();
                }
            } catch (Exception e2) {
                e2.printStackTrace();
            }
        }
        // Return all answers found by this request
        return stringBuffer;
    }

    private static final String regEx_script = "<script[^>]*?>[\\s\\S]*?<\\/script>"; // regex for script blocks
    private static final String regEx_style = "<style[^>]*?>[\\s\\S]*?<\\/style>"; // regex for style blocks
    private static final String regEx_html = "<[^>]+>"; // regex for HTML tags
    private static final String regEx_space = "\\s*|\t|\r|\n"; // regex for spaces and line breaks (currently unused)

    /**
     * Removes HTML tags.
     *
     * @param htmlStr the HTML string
     * @return the text without tags
     */
    public static String delHTMLTag(String htmlStr) {
        Pattern p_script = Pattern.compile(regEx_script, Pattern.CASE_INSENSITIVE);
        Matcher m_script = p_script.matcher(htmlStr);
        htmlStr = m_script.replaceAll(""); // strip script blocks

        Pattern p_style = Pattern.compile(regEx_style, Pattern.CASE_INSENSITIVE);
        Matcher m_style = p_style.matcher(htmlStr);
        htmlStr = m_style.replaceAll(""); // strip style blocks

        Pattern p_html = Pattern.compile(regEx_html, Pattern.CASE_INSENSITIVE);
        Matcher m_html = p_html.matcher(htmlStr);
        htmlStr = m_html.replaceAll(""); // strip HTML tags

        return htmlStr.replaceAll(" ", ""); // remove spaces and return the plain text
    }
}
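Starting one raw thread per page means the pages print in whatever order the threads happen to finish, and the number of threads grows with the number of answers. A possible alternative, sketched below, is a fixed-size thread pool whose results are collected in submission order. `OrderedPageFetcher` and its `fetchPage` parameter are assumptions made for this illustration; in the real crawler `fetchPage` would wrap something like `sendGet(baseUrl, 20, offset)`.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.function.IntFunction;

/** Hypothetical helper: fetches pages concurrently but returns them in page order. */
class OrderedPageFetcher {

    static List<String> fetchAll(int totals, int pageSize, int threads, IntFunction<String> fetchPage) {
        ExecutorService pool = Executors.newFixedThreadPool(threads);
        try {
            // Submit one task per page; the futures list keeps the page order
            List<Future<String>> futures = new ArrayList<>();
            for (int offset = 0; offset < totals; offset += pageSize) {
                final int pageOffset = offset;
                Callable<String> task = () -> fetchPage.apply(pageOffset);
                futures.add(pool.submit(task));
            }
            // Wait for each page in submission order, not completion order
            List<String> pages = new ArrayList<>();
            for (Future<String> future : futures) {
                try {
                    pages.add(future.get());
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                    throw new IllegalStateException("interrupted while waiting for a page", e);
                } catch (ExecutionException e) {
                    throw new IllegalStateException("a page request failed", e.getCause());
                }
            }
            return pages;
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        // Stand-in fetcher that only labels the page; a real one would send the HTTP request
        List<String> pages = fetchAll(45, 20, 4, offset -> "page starting at " + offset);
        for (String page : pages) {
            System.out.println(page);
        }
    }
}
```

The requests still run in parallel, but the caller sees the pages in the same order as a sequential crawl would produce.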

I ran into one problem.

I tried other questions and they were all fine: totals is the total number of answers. Only this one has a problem.

The link is: https://www.zhihu.com/api/v4/questions/62209505/answers?include=data[*].is_normal,admin_closed_comment,reward_info,is_collapsed,annotation_action,annotation_detail,collapse_reason,is_sticky,collapsed_by,suggest_edit,comment_count,can_comment,content,editable_content,voteup_count,reshipment_settings,comment_permission,created_time,updated_time,review_info,relevant_info,question,excerpt,relationship.is_authorized,is_author,voting,is_thanked,is_nothelp;data[*].mark_infos[*].url;data[*].author.follower_count,badge[?(type=best_answerer)].topics&limit=20&offset=2020&sort_by=default
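If `totals` cannot be trusted for a particular question, one option is to stop relying on it and keep requesting pages until one comes back empty. The following is only a sketch of that idea, not something verified against this question: `FetchUntilEmpty` and `fetchPage` are hypothetical names, and `maxPages` is a safety cap added for the example so that a misbehaving server cannot cause an endless loop.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.BiFunction;

/** Hypothetical helper: pages through a source without relying on a total count. */
class FetchUntilEmpty {

    /**
     * Calls fetchPage(offset, limit) with a growing offset until a page is empty
     * or maxPages requests have been made.
     */
    static <T> List<T> fetchAll(int pageSize, int maxPages,
                                BiFunction<Integer, Integer, List<T>> fetchPage) {
        List<T> all = new ArrayList<>();
        int offset = 0;
        for (int page = 0; page < maxPages; page++) {
            List<T> items = fetchPage.apply(offset, pageSize);
            if (items == null || items.isEmpty()) {
                break; // nothing left, whatever totals claimed
            }
            all.addAll(items);
            offset += pageSize;
        }
        return all;
    }

    public static void main(String[] args) {
        // Stand-in data source with 45 items; a real fetchPage would request one page of answers
        List<Integer> source = new ArrayList<>();
        for (int i = 0; i < 45; i++) {
            source.add(i);
        }
        List<Integer> result = fetchAll(20, 1000, (offset, limit) ->
                source.subList(Math.min(offset, source.size()),
                               Math.min(offset + limit, source.size())));
        System.out.println("fetched " + result.size() + " items");
    }
}
```

This costs one extra request at the end, in exchange for not depending on the reported total.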

2018.6.1

Added a method that strips HTML tags, so the output looks a bit nicer.
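For reference, here is a compact variant of that tag-stripping step that can be tried without the rest of the crawler. It uses the same three regular expressions but compiles them once as constants instead of on every call. The class name `HtmlText` is made up for the example, and unlike `delHTMLTag` this sketch keeps the spaces inside the text and only trims the ends.

```java
import java.util.regex.Pattern;

/** Hypothetical helper: regex-based tag stripping with precompiled patterns. */
class HtmlText {

    private static final Pattern SCRIPT =
            Pattern.compile("<script[^>]*?>[\\s\\S]*?</script>", Pattern.CASE_INSENSITIVE);
    private static final Pattern STYLE =
            Pattern.compile("<style[^>]*?>[\\s\\S]*?</style>", Pattern.CASE_INSENSITIVE);
    private static final Pattern TAG = Pattern.compile("<[^>]+>");

    /** Removes script blocks, style blocks and then any remaining tags. */
    static String strip(String html) {
        String text = SCRIPT.matcher(html).replaceAll("");
        text = STYLE.matcher(text).replaceAll("");
        text = TAG.matcher(text).replaceAll("");
        return text.trim();
    }

    public static void main(String[] args) {
        String html = "<p>Hello <b>world</b></p><script>var a = 1;</script>";
        System.out.println(strip(html)); // prints "Hello world"
    }
}
```

Regular expressions are enough for flattening answer text like this, but they are not a general HTML parser, so unusual markup may leave fragments behind.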