Rendering JavaScript with Splash in the Scrapy framework: environment setup

Environment setup:

http://splash.readthedocs.io/en/stable/install.html

pip install scrapy-splash

service docker start

docker pull scrapinghub/splash

docker run -p 8050:8050 scrapinghub/splash
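Once the container is running, Splash serves its HTTP API on port 8050. As a minimal sketch, the snippet below builds a `render.html` request URL using only the standard library. The helper names `build_render_url` and `fetch_rendered_html` and the target URL are illustrative assumptions, and the fetch only works while the container started above is listening.

```python
from urllib.parse import urlencode
from urllib.request import urlopen


def build_render_url(splash_url, target_url, wait=0.5):
    """Return the Splash render.html URL for target_url (hypothetical helper)."""
    query = urlencode({"url": target_url, "wait": wait})
    return f"{splash_url.rstrip('/')}/render.html?{query}"


def fetch_rendered_html(splash_url, target_url, wait=0.5, timeout=30):
    """Ask Splash to render the page and return the resulting HTML as text.

    Needs a Splash instance reachable at splash_url.
    """
    with urlopen(build_render_url(splash_url, target_url, wait), timeout=timeout) as resp:
        return resp.read().decode("utf-8", errors="replace")


# Building the URL needs no network access, so it is safe to run anywhere.
print(build_render_url("http://localhost:8050", "http://example.com"))
```

Calling `fetch_rendered_html("http://localhost:8050", "http://example.com")` should return the rendered page if Splash is up; a connection error usually means the container is not running or the port mapping is wrong.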

----

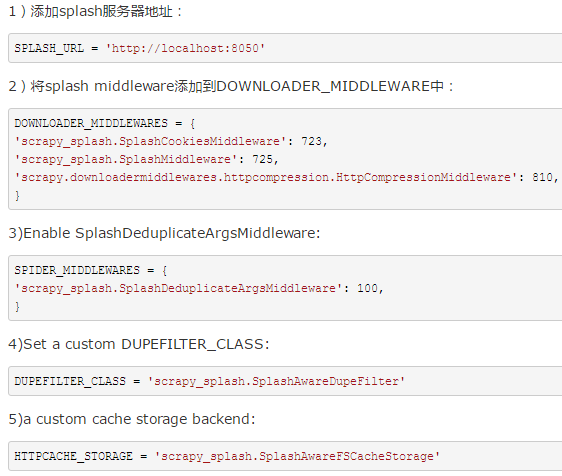

settings.py

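# Address of the running Splash instance (the Docker container started above)
SPLASH_URL = 'http://localhost:8050'

# Enable the Splash downloader middlewares and move HttpCompressionMiddleware to priority 810
DOWNLOADER_MIDDLEWARES = {
    'scrapy_splash.SplashCookiesMiddleware': 723,
    'scrapy_splash.SplashMiddleware': 725,
    'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware': 810,
}

# Deduplicate repeated Splash arguments
SPIDER_MIDDLEWARES = {
    'scrapy_splash.SplashDeduplicateArgsMiddleware': 100,
}

# Splash-aware duplicate filter
DUPEFILTER_CLASS = 'scrapy_splash.SplashAwareDupeFilter'

# Splash-aware cache storage, only relevant when Scrapy's HTTP cache is enabled
HTTPCACHE_STORAGE = 'scrapy_splash.SplashAwareFSCacheStorage'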
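The numbers in `DOWNLOADER_MIDDLEWARES` are ordering priorities. The scrapy-splash README notes that these values place the Splash middlewares just before Scrapy's default `HttpProxyMiddleware` (750) and that `HttpCompressionMiddleware` is moved from its default position. The sketch below is a hypothetical sanity check written for this post, not part of scrapy-splash. The rule that compression must come after `SplashMiddleware` is an assumption that simply mirrors the values shown in these settings.

```python
SPLASH_COOKIES = "scrapy_splash.SplashCookiesMiddleware"
SPLASH = "scrapy_splash.SplashMiddleware"
COMPRESSION = "scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware"

# Scrapy's default priority for HttpProxyMiddleware (DOWNLOADER_MIDDLEWARES_BASE).
HTTP_PROXY_PRIORITY = 750

# Priorities copied from the settings.py shown in this post.
DOWNLOADER_MIDDLEWARES = {
    SPLASH_COOKIES: 723,
    SPLASH: 725,
    COMPRESSION: 810,
}


def check_splash_order(middlewares, proxy_priority=HTTP_PROXY_PRIORITY):
    """Return a list of problems; an empty list means the order looks fine."""
    problems = []
    # All three entries must be present and not disabled (None disables a middleware).
    for path in (SPLASH_COOKIES, SPLASH, COMPRESSION):
        if middlewares.get(path) is None:
            problems.append(f"{path} is missing or disabled")
    if problems:
        return problems
    if middlewares[SPLASH] >= proxy_priority:
        problems.append("SplashMiddleware should be ordered before HttpProxyMiddleware")
    # Assumption: mirror the post's values, where compression (810) follows Splash (725).
    if middlewares[COMPRESSION] <= middlewares[SPLASH]:
        problems.append("HttpCompressionMiddleware should be ordered after SplashMiddleware")
    return problems


print(check_splash_order(DOWNLOADER_MIDDLEWARES))
```

A check like this could run in a project's test suite so that a later edit to `settings.py` does not silently reorder the middlewares.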

Spider example:

import scrapy
from scrapy_splash import SplashRequest


class MySpider(scrapy.Spider):
    name = "myspider"  # Scrapy requires a spider name; this one is a placeholder
    start_urls = ["http://example.com", "http://example.com/foo"]

    def start_requests(self):
        # Send every start URL through Splash and wait 0.5 s for JavaScript to run
        for url in self.start_urls:
            yield SplashRequest(url, self.parse, args={'wait': 0.5})

    def parse(self, response):
        # response.body is a result of render.html call; it
        # contains HTML processed by a browser.
        # ...
        pass
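`SplashRequest` is a convenience wrapper. The scrapy-splash README also describes passing the same options through the `splash` key of `request.meta` on a plain `scrapy.Request`. The sketch below builds that dictionary in plain Python so the structure is visible. The helper name `splash_meta` is hypothetical, and only the `endpoint` and `args` keys are shown.

```python
def splash_meta(wait=0.5, endpoint="render.html", **extra_args):
    """Build a meta dict with a 'splash' key for scrapy-splash (hypothetical helper).

    wait and extra_args are forwarded to Splash as rendering arguments;
    endpoint selects the Splash HTTP API endpoint to call.
    """
    args = {"wait": wait}
    args.update(extra_args)
    return {"splash": {"endpoint": endpoint, "args": args}}


# Inside a spider this could replace SplashRequest, for example:
#     yield scrapy.Request(url, self.parse, meta=splash_meta(wait=0.5))
print(splash_meta(wait=0.5))
```

The dictionary form is handy when only some requests of a spider need rendering, since ordinary requests can simply omit the `splash` key.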

Reference links: https://germey.gitbooks.io/python3webspider/content/7.2-Splash%E7%9A%84%E4%BD%BF%E7%94%A8.html

http://blog.csdn.net/qq_23849183/article/details/51287935

http://ae.yyuap.com/pages/viewpage.action?pageId=919763