fesh个人实践,欢迎经验交流!本文Blog地址:http://www.cnblogs.com/fesh/p/3900253.html

Apache ZooKeeper是一个为分布式应用所设计的开源协调服务,其设计目的是为了减轻分布式应用程序所承担的协调任务。它可以为用户提供同步、配置管理、分组和命名等服务。在这里,对ZooKeeper的完全分布式集群安装部署进行介绍。

一、基本环境

JDK :1.8.0_11 (要求1.6+)

ZooKeeper:3.4.6

主机数:3(要求3+,且必须是奇数,因为ZooKeeper的选举算法)

| 主机名 | IP地址 | JDK | ZooKeeper | myid |

| master | 192.168.145.129 | 1.8.0_11 | server.1 | 1 |

| slave1 | 192.168.145.130 | 1.8.0_11 | server.2 | 2 |

| slave2 | 192.168.145.131 | 1.8.0_11 | server.3 | 3 |

二、master节点上安装配置

1、下载并解压ZooKeeper-3.4.6.tar.gz

tar -zxvf zookeeper-3.4.6.tar.gz

这里路径为 /home/fesh/zookeeper-3.4.6

2、设置the Java heap size (个人感觉一般不需要配置)

保守地use a maximum heap size of 3GB for a 4GB machine

3、$ZOOKEEPER_HOME/conf/zoo.cfg

cp zoo_sample.cfg zoo.cfg

新建此配置文件,并设置内容

# The number of milliseconds of each tick tickTime=2000 # The number of ticks that the initial # synchronization phase can take initLimit=10 # The number of ticks that can pass between # sending a request and getting an acknowledgement syncLimit=5 # the directory where the snapshot is stored. # do not use /tmp for storage, /tmp here is just # example sakes. dataDir=/home/fesh/data/zookeeper # the port at which the clients will connect clientPort=2181

server.1=master:2888:3888

server.2=slave1:2888:3888

server.3=slave2:2888:3888

4、/home/fesh/data/zookeeper/myid

在节点配置的dataDir指定的目录下面,创建一个myid文件,里面内容为一个数字,用来标识当前主机,$ZOOKEEPER_HOME/conf/zoo.cfg文件中配置的server.X,则myid文件中就输入这个数字X。(即在每个节点上新建并设置文件myid,其内容与zoo.cfg中的id相对应)这里master节点为 1

mkdir -p /home/fesh/data/zookeeper cd /home/fesh/data/zookeeper touch myid echo "1" > myid

5、设置日志

conf/log4j.properties

# Define some default values that can be overridden by system properties

zookeeper.root.logger=INFO, CONSOLE

改为

# Define some default values that can be overridden by system properties

zookeeper.root.logger=INFO, ROLLINGFILE

将

#

# Add ROLLINGFILE to rootLogger to get log file output

# Log DEBUG level and above messages to a log file

log4j.appender.ROLLINGFILE=org.apache.log4j.RollingFileAppender

改为---每天一个log日志文件,而不是在同一个log文件中递增日志

#

# Add ROLLINGFILE to rootLogger to get log file output

# Log DEBUG level and above messages to a log file

log4j.appender.ROLLINGFILE=org.apache.log4j.DailyRollingFileAppender

bin/zkEvn.sh

if [ "x${ZOO_LOG_DIR}" = "x" ]

then

ZOO_LOG_DIR="."

fi

if [ "x${ZOO_LOG4J_PROP}" = "x" ]

then

ZOO_LOG4J_PROP="INFO,CONSOLE"

fi

改为

if [ "x${ZOO_LOG_DIR}" = "x" ]

then

ZOO_LOG_DIR="$ZOOBINDIR/../logs"

fi

if [ "x${ZOO_LOG4J_PROP}" = "x" ]

then

ZOO_LOG4J_PROP="INFO,ROLLINGFILE"

fi

参考:Zookeeper运维的一些经验

http://mp.weixin.qq.com/s?__biz=MzAxMjQ5NDM1Mg==&mid=2651024176&idx=1&sn=7659ea6a7bf5c37b083e30060c3e55ca&chksm=8047384fb730b1591ff1ce7081822577112087fc7ec3976f020a263b503f6a8ef0856b3a3057&scene=0#wechat_redirect&utm_source=tuicool&utm_medium=referral

三、从master节点分发文件到其他节点

1、在master节点的/home/fesh/目录下

scp -r zookeeper-3.4.6 slave1:~/

scp -r zookeeper-3.4.6 slave2:~/

scp -r data slave1:~/

scp -r data slave2:~/

2、在slave1节点的/home/fesh/目录下

vi ./data/zookeeper/myid

修改为 2

3、在slave2节点的/home/fesh/目录下

vi ./data/zookeeper/myid

修改为 3

四、其他配置

1、在每个节点配置/etc/hosts (并保证每个节点/etc/hostname中分别为master、slave1、slave2) 主机 -IP地址映射

192.168.145.129 master 192.168.145.130 slave1 192.168.145.131 slave2

2、在每个节点配置环境变量/etc/profile

#Set ZOOKEEPER_HOME ENVIRONMENT

export ZOOKEEPER_HOME=/home/fesh/zookeeper-3.4.6

export PATH=$PATH:$ZOOKEEPER_HOME/bin

五、启动

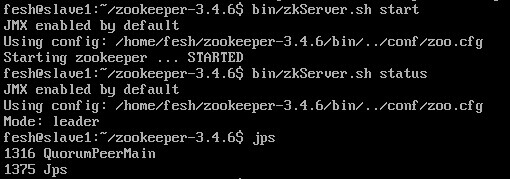

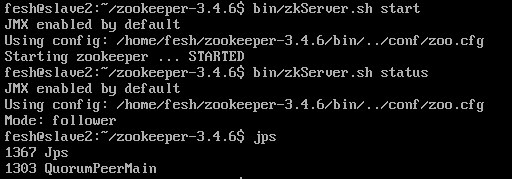

在每个节点上$ZOOKEEPER_HOME目录下,运行 (这里的启动顺序为 master > slave1 > slave2 )

bin/zkServer.sh start

并用命令查看启动状态

bin/zkServer.sh status

master节点

slave1节点

slave2节点

(注:之前我配置正确的,但是一直都是,每个节点上都启动了,但就是互相连接不上,最后发现好像是防火墙的原因,啊啊啊!一定要先把防火墙关了! sudo ufw disable )

查看$ZOOKEEPER_HOME/zookeeper.out 日志,会发现开始会报错,但当leader选出来之后 就没有问题了。

参考:

1、http://zookeeper.apache.org/doc/r3.4.6/zookeeperAdmin.html

2、一个很好的博客http://www.blogjava.net/hello-yun/archive/2012/05/03/377250.html