[sqoop1.99.7] sqoop实例——数据ETL

一、创建一个mysql的link

MySQL链接使用的是JDBC,必须有对应的驱动文件jar,还得有对应的访问权限,请确保能在server端访问MySQL。确保mysql的jar包已经导入到${SQOOP_HOME}/server/lib/目录下。

create link -c generic-jdbc-connector

这时候就会出现交互会话,提示你输入各项参数:

【Link configuration】

Name:标示这个link的字符串。比如:mysql-link-1 Driver Class:指定jdbc启动时所需要加载的driver类:com.mysql.jdbc.Driver。 Connection String:jdbc:mysql://localhost/test_db,test_db是本例的数据库名称。 Username:链接数据库的用户名,也就是mysql客户端传入的-u参数。本例是test。 Password:链接数据库的用户密码。 FetchSize:这个属性并没有在官方文档上描述,我也不知道说的是什么,直接回车了,使用的默认值。 填写完上面几项,将提供一个可以输入JDBC属性的hash,提示符是entry#,这时候可以手动指定很多JDBC属性的值。

本例只覆盖了一个protocol值为tcp:protocol=tcp 再按回车,之后会再定义一下SQL方言。各个数据库系统提供商们对SQL语言标准的理解和实现各有不同,

于是各有各的一些细微差别。以下属性就是用于指定这些区别的。

至此,就可以完成这个link的创建。命令行提示符也会还原为sqoop:000>

例子:

sqoop:000> create link -connector generic-jdbc-connector

Creating link for connector with name generic-jdbc-connector

Please fill following values to create new link object

Name: mysql-link

Database connection

Driver class: com.mysql.jdbc.Driver

Connection String: jdbc:mysql://192.168.200.250:3306/testdb

Username: root

Password: *******

Fetch Size:

Connection Properties:

There are currently 0 values in the map:

entry# protocol=tcp

There are currently 1 values in the map:

protocol = tcp

entry#

SQL Dialect

Identifier enclose: 注意 这里不能直接回车!要打一个空格符号!因为如果不打,查询mysql表的时候会在表上加上“”,导致查询出错!

New link was successfully created with validation status OK and name mysql-link

sqoop:000> show link

+------------+------------------------+---------+

| Name | Connector Name | Enabled |

+------------+------------------------+---------+

| mysql-link | generic-jdbc-connector | true |

+------------+------------------------+---------+

二、创建一个hdfs的link

create link -connector hdfs-connector

hdfs的参数只有一个Name和一个HDFS URI,Name同上。URI是hadoop中配置hdfs-site.xml中的属性fs.defaultFS的值。本例为hdfs://localhost:9000,回车后没有什么错误就会显示successful信息。

例子:

sqoop:000> create link -connector hdfs-connector

Creating link for connector with name hdfs-connector

Please fill following values to create new link object

Name: hdfs-link

HDFS cluster

URI: hdfs://hadoop-allinone-200-123.wdcloud.locl:9000

Conf directory:

Additional configs::

There are currently 0 values in the map:

entry#

New link was successfully created with validation status OK and name hdfs-link

查看创建的link

sqoop:000> show link +--------------+------------------------+---------+ | Name | Connector Name | Enabled | +--------------+------------------------+---------+ | hdfs-link-1 | hdfs-connector | true | | mysql-link-1 | generic-jdbc-connector | true | +--------------+------------------------+---------+

三、创建传输任务JOB

create job -f "mysql-link" -t "hdfs-link"

-f指定from,即是数据源位置,-t指定to,即是目的地位置。本例是从MySQL传递数据到HDFS,所以就是from mysql to HDFS。参数值就是在创建链接(link)时指定的Name。

以下是各个属性

Name:一个标示符,自己指定即可。

Schema Name:指定Database或Schema的名字,在MySQL中,Schema同Database类似,具体什么区别没有深究过,但官网描述在创建时差不多。这里指定数据库名字为db_ez即可,本例的数据库。

Table Name:本例使用的数据库表为tb_forhadoop,自己指定导出的表。多表的情况请自行查看官方文档。

SQL Statement:就是sql查询语句,文档上说需要指定一个$condition,但我一直没有创建成功,貌似是一个条件子句。

配置完以上几项,又回出现element#提示符,提示输入一些hash值,这里我没有再指定什么。直接回车过。而以下几个配置我也是直接回车,使用默认值,大概是一些与数据库相关的参数。

Partition column:

Partition column nullable:

Boundary query

Last value

后面需要配置数据目的地各项值:

Null alue:大概说的是如果有空值用什么覆盖

File format:指定在HDFS中的数据文件是什么文件格式,这里使用TEXT_FILE,即最简单的文本文件。

Compression codec:用于指定使用什么压缩算法进行导出数据文件压缩,我指定NONE,这个也可以使用自定义的压缩算法CUSTOM,用Java实现相应的接口。

Custom codec:这个就是指定的custom压缩算法,本例选择NONE,所以直接回车过去。

Output directory:指定存储在HDFS文件系统中的路径,这里最好指定一个存在的路径,或者存在但路劲下是空的,貌似这样才能成功。

Append mode:用于指定是否是在已存在导出文件的情况下将新数据追加到数据文件中。

Extractors:不清楚是什么,我取了一个1

Loaders:同上

最后再次出现element#提示符,用于输入extra mapper jars的属性,可以什么都不写。直接回车。

至此若出现successful则证明已经成功创建。

例子:

先在mysql数据库创建一个名为testdb的db,并在该db下创建表table001

开始创建任务

sqoop:000> create job -f "mysql-link" -t "hdfs-link" Creating job for links with from name mysql-link-200.250.sqoop and to name hdfs-link Please fill following values to create new job object Name: job1 Database source Schema name: testdb Table name: table001 SQL statement: Column names: There are currently 0 values in the list: element# Partition column: Partition column nullable: Boundary query: Incremental read Check column: Last value: Target configuration Override null value: Null value: File format: 0 : TEXT_FILE 1 : SEQUENCE_FILE 2 : PARQUET_FILE Choose: 0 Compression codec: 0 : NONE 1 : DEFAULT 2 : DEFLATE 3 : GZIP 4 : BZIP2 5 : LZO 6 : LZ4 7 : SNAPPY 8 : CUSTOM Choose: 0 Custom codec: Output directory: /wdcloud/app/sqoop-1.99.7/import_data Append mode: Throttling resources Extractors: 2 Loaders: 2 Classpath configuration Extra mapper jars: There are currently 0 values in the list: element# New job was successfully created with validation status OK and name mysql-import-user-table

查看任务:

sqoop:000> show job

+----+------+-------------------------------------+----------------------------+---------+

| Id | Name | From Connector | To Connector | Enabled |

+----+------+-------------------------------------+----------------------------+---------+

| 2 | job1 | mysql-link (generic-jdbc-connector) | hdfs-link (hdfs-connector) | true |

+----+------+-------------------------------------+----------------------------+---------+

四、执行和查看Job状态

start job -n jobname

status job -n jobname

启动任务报错

sqoop:000> start job -n job1

Exception has occurred during processing command

Exception: org.apache.sqoop.common.SqoopException Message: GENERIC_JDBC_CONNECTOR_0001:Unable to get a connection -

设置可查看具体出错信息

sqoop:000> set option --name verbose --value true Verbose option was changed to true

报错信息:好像是找不到这台mysql主机

sqoop:000> start job -n job1 Exception has occurred during processing command Exception: org.apache.sqoop.common.SqoopException Message: GENERIC_JDBC_CONNECTOR_0001:Unable to get a connection - Stack trace: at org.apache.sqoop.client.request.ResourceRequest (ResourceRequest.java:137) at org.apache.sqoop.client.request.ResourceRequest (ResourceRequest.java:187) at org.apache.sqoop.client.request.JobResourceRequest (JobResourceRequest.java:113) 。。。。。。。

关键信息: Caused by: Exception: java.lang.Throwable Message: Communications link failure Caused by: Exception: java.net.UnknownHostException Message: mysql.server: Name or service not known

原来是创建mysq的link的时候 url写错了,修改url为:jdbc:mysql://192.168.200.250:3306/testdb

继续执行报错

执行一直报这个错 搞不下去了 不知道为什么给shema和table加了双引号 Exception has occurred during processing command Exception: org.apache.sqoop.common.SqoopException Message: GENERIC_JDBC_CONNECTOR_0016:Can't fetch schema - Caused by: Exception: java.lang.Throwable Message: You have an error in your SQL syntax; check the manual that corresponds to your MySQL server version for the right syntax to use near '"testdb"."table001"' at line 1

执行一直报这个错 搞不下去了 不知道为什么给shema和table加了双引号 Exception has occurred during processing command Exception: org.apache.sqoop.common.SqoopException Message: GENERIC_JDBC_CONNECTOR_0016:Can't fetch schema - Caused by: Exception: java.lang.Throwable Message: You have an error in your SQL syntax; check the manual that corresponds to your MySQL server version for the right syntax to use near '"testdb"."table001"' at line 1

解决: 在创建mysql链接时,Identifier enclose:指定SQL中标识符的定界符,也就是说,有的SQL标示符是一个引号:select * from "table_name",这种定界符在MySQL中是会报错的。这个属性默认值就是双引号,所以不能使用回车,必须将之覆盖,我使用空格覆盖了这个值。吐槽一下,这个错误我整了一整天才搞明白,官方文档也是坑啊! 所以修改下mysql的link吧。

继续报错

2016-12-19 03:16:43 EST: FAILURE_ON_SUBMIT

Exception: org.apache.hadoop.security.AccessControlException: Permission denied: user=root, access=WRITE, inode="/tmp/hadoop-yarn/staging/root/.staging":hadoop:supergroup:drwxr-xr-x

提示往HDFS写文件是不容许的,这个文件夹没有权限,进入hdfs目录为hadoop用户的/tmp文件夹授权

[root@hadoop-allinone-200-123 bin]# su hadoop

[hadoop@hadoop-allinone-200-123 bin]$ ./hadoop fs -ls / Found 1 items drwxr-xr-x - hadoop supergroup 0 2016-11-24 21:50 /hbase

#创建/tmp文件夹 [hadoop@hadoop-allinone-200-123 bin]$ ./hadoop fs -mkdir /tmp [hadoop@hadoop-allinone-200-123 bin]$ ./hadoop fs -ls / Found 2 items drwxr-xr-x - hadoop supergroup 0 2016-11-24 21:50 /hbase drwxr-xr-x - hadoop supergroup 0 2016-12-19 03:34 /tmp

#为tmp文件夹授权777 [hadoop@hadoop-allinone-200-123 bin]$ ./hadoop fs -chmod 777 /tmp [hadoop@hadoop-allinone-200-123 bin]$ ./hadoop fs -ls / Found 2 items drwxr-xr-x - hadoop supergroup 0 2016-11-24 21:50 /hbase drwxrwxrwx - hadoop supergroup 0 2016-12-19 03:34 /tmp

终于不报错了:

sqoop:000> start job -n job1 Submission details Job Name: job1 Server URL: http://localhost:12000/sqoop/ Created by: root Creation date: 2016-12-19 03:35:15 EST Lastly updated by: root External ID: job_1479957438728_0001 http://hadoop-allinone-200-123.wdcloud.locl:8088/proxy/application_1479957438728_0001/ Source Connector schema: Schema{name= testdb . table001 ,columns=[ FixedPoint{name=id,nullable=true,type=FIXED_POINT,byteSize=4,signed=true}, Text{name=name,nullable=true,type=TEXT,charSize=null}, Text{name=address,nullable=true,type=TEXT,charSize=null}]} 2016-12-19 03:35:15 EST: BOOTING - Progress is not available

查看任务执行状态,继续报错

sqoop:000> status job -n job1 Exception has occurred during processing command Exception: org.apache.sqoop.common.SqoopException Message: MAPREDUCE_0003:Can't get RunningJob instance - Caused by: Exception: java.io.IOException Message: java.net.ConnectException: Call From hadoop-allinone-200-123.wdcloud.locl/192.168.200.123 to 0.0.0.0:10020 failed on connection exception: java.net.ConnectException: Connection refused; For more details see: http://wiki.apache.org/hadoop/ConnectionRefused Caused by: Exception: java.net.ConnectException Message: Connection refused

表示: hadoop运行mapreduce作业无法连接0.0.0.0/0.0.0.0:10020

<property>

<name>mapreduce.jobhistory.address</name>

<value>hadoop-allinone-200-123.wdcloud.locl:10020</value>

</property>

[hadoop@hadoop-allinone-200-123 sbin]$ pwd /wdcloud/app/hadoop-2.7.3/sbin [hadoop@hadoop-allinone-200-123 sbin]$ ll | grep jobhistory -rwxr-xr-x 1 hadoop hadoop 4080 Aug 17 21:49 mr-jobhistory-daemon.sh [hadoop@hadoop-allinone-200-123 sbin]$ ./mr-jobhistory-daemon.sh start historyserver starting historyserver, logging to /wdcloud/app/hadoop-2.7.3/logs/mapred-hadoop-historyserver-hadoop-allinone-200-123.wdcloud.locl.out [hadoop@hadoop-allinone-200-123 sbin]$ jps | grep JobHistoryServer 16818 JobHistoryServer

终于可以执行了,但是任务执行失败

sqoop:000> status job -n job1 Submission details Job Name: job1 Server URL: http://localhost:12000/sqoop/ Created by: root Creation date: 2016-12-19 04:01:25 EST Lastly updated by: root External ID: job_1479957438728_0004 http://hadoop-allinone-200-123.wdcloud.locl:8088/proxy/application_1479957438728_0004/ 2016-12-19 04:02:51 EST: RUNNING - 0.00 %

报错信息:(据说是内存不足?)

sqoop:000> status job -n job1 Submission details Job Name: job1 Server URL: http://localhost:12000/sqoop/ Created by: root Creation date: 2016-12-19 04:01:25 EST Lastly updated by: root External ID: job_1479957438728_0004 http://hadoop-allinone-200-123.wdcloud.locl:8088/proxy/application_1479957438728_0004/ 2016-12-19 04:04:48 EST: FAILED Exception: Job Failed with status:3

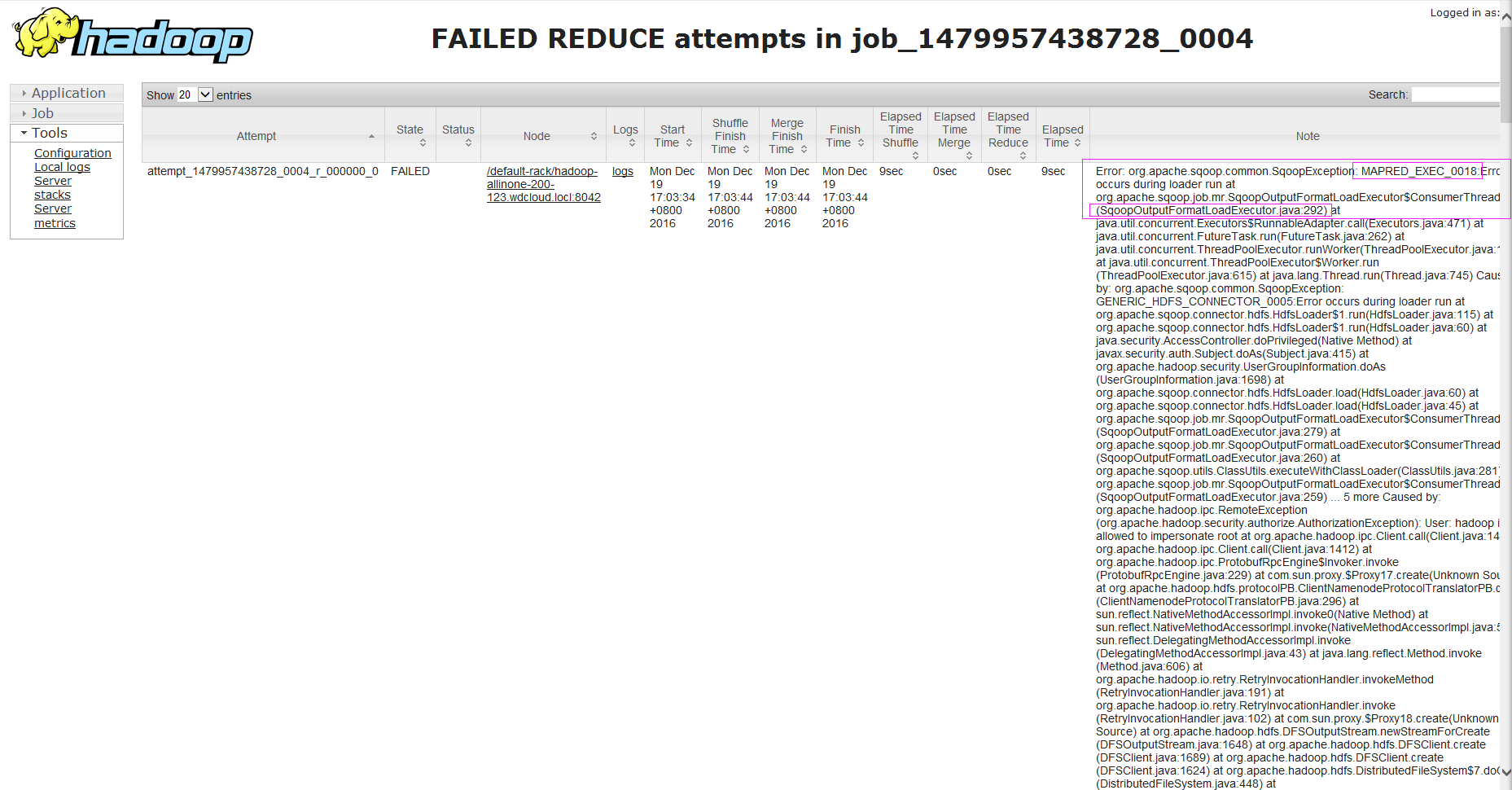

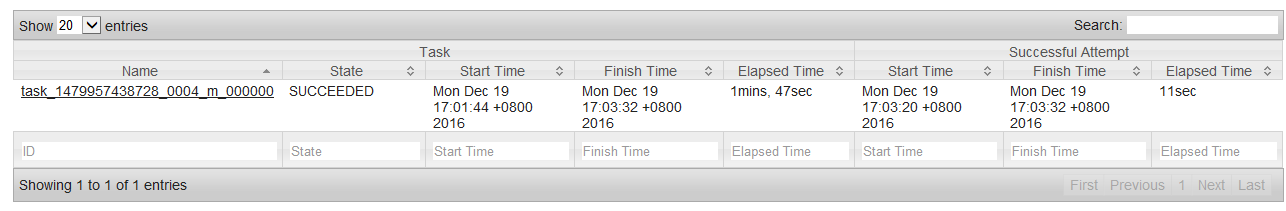

能map不能reduce,呵呵哒!

reduce

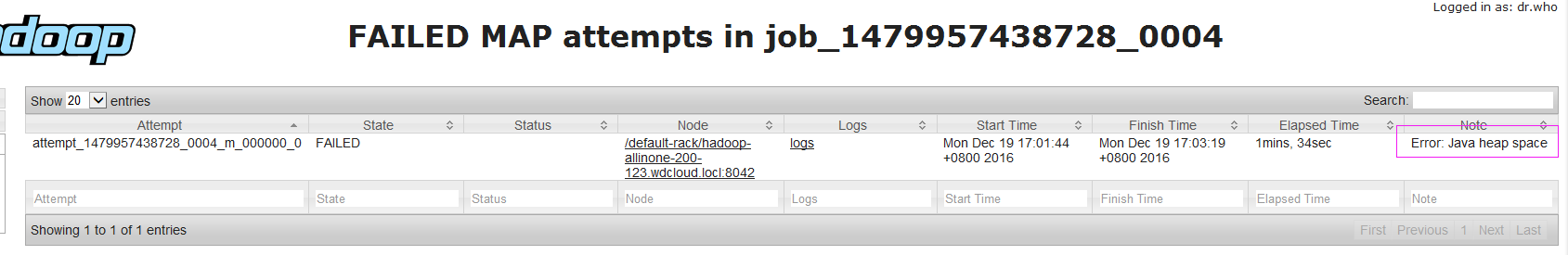

map任务内存溢出

在mapred-site.xml中设置:(默认200)

<property>

<name>mapred.child.java.opts</name>

<value>-Xmx2048m</value>

</property>

reduce出错信息